Hypothesis Testing for a Single Mean and Proportion

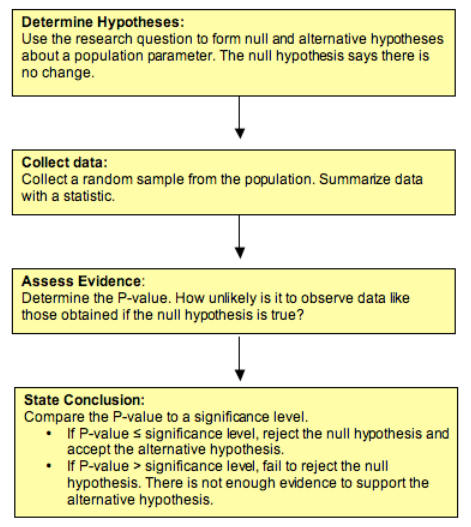

Hypothesis testing gives you a structured way to answer the question: does my sample data provide enough evidence to challenge a claim about a population? That "claim" is your null hypothesis, and the entire process revolves around deciding whether to reject it or not.

This section covers how to set up hypotheses, calculate the right test statistic, and interpret your results using p-values and significance levels.

Formulation of Hypotheses

Every hypothesis test starts with two competing statements about a population parameter (either a mean or a proportion ).

Null hypothesis (): This is the "nothing special is happening" claim. It states that the population parameter equals a specific value. You assume it's true unless the data gives you strong evidence against it.

- Single mean:

- Single proportion:

Alternative hypothesis (): This is what you're trying to find evidence for. It contradicts the null and can take three forms:

- Left-tailed: or (you suspect the true value is less than the claimed value)

- Right-tailed: or (you suspect the true value is greater than the claimed value)

- Two-tailed: or (you suspect the true value is different in either direction)

The direction of your alternative hypothesis depends on the research question. For example, if a company claims its light bulbs last 1,000 hours and you think they last less, that's a left-tailed test.

Calculation of Test Statistics

The test statistic measures how far your sample result is from the hypothesized value, in standardized units. A larger test statistic means your sample is further from what the null hypothesis predicts.

Which formula do you use?

- Z-test for a single mean (use when population standard deviation is known):

where is the sample mean, is the hypothesized mean, is the population standard deviation, and is the sample size.

- T-test for a single mean (use when is unknown and you estimate it with the sample standard deviation ):

This uses the t-distribution with degrees of freedom instead of the standard normal.

- Z-test for a single proportion:

where is the sample proportion and is the hypothesized proportion. Notice the denominator uses (the hypothesized value), not .

Finding the p-value:

The p-value is the probability of getting a test statistic as extreme as (or more extreme than) what you observed, assuming the null hypothesis is true. A small p-value means your data would be unlikely if were true.

How you calculate it depends on the tail direction:

- Left-tailed: p-value = or

- Right-tailed: p-value = or

- Two-tailed: p-value = or

For z-tests, you use the standard normal distribution table. For t-tests, you use the t-distribution table with degrees of freedom.

Critical value approach (alternative to p-values):

Instead of computing a p-value, you can find the critical value that marks the boundary of the rejection region. If your test statistic falls in the rejection region (beyond the critical value), you reject . Both approaches always give the same conclusion.

Interpretation of Hypothesis Tests

Significance level (): This is the threshold you set before collecting data. It represents the probability of making a Type I error, which means rejecting when it's actually true. Common choices are 0.01, 0.05, and 0.10.

The decision rule is straightforward:

- If p-value : Reject . There is sufficient evidence to support the alternative hypothesis.

- If p-value : Fail to reject . There is not enough evidence to support the alternative hypothesis.

Be careful with wording here. "Fail to reject" is not the same as "accept." You're not proving is true; you just don't have enough evidence against it.

Connection to confidence intervals:

Confidence intervals offer another way to reach the same conclusion. If the hypothesized value falls inside the confidence interval, you fail to reject . If it falls outside, you reject .

- Single mean (z-interval):

- Single mean (t-interval):

- Single proportion:

Note that the proportion confidence interval uses in the standard error, while the hypothesis test uses . This is a common source of confusion.

Degrees of freedom: For a single-mean t-test, degrees of freedom = . This value affects the shape of the t-distribution. With smaller samples, the t-distribution has heavier tails (meaning you need a more extreme test statistic to reject ). As grows, the t-distribution approaches the standard normal.

Additional Considerations in Hypothesis Testing

- Statistical power is the probability of correctly rejecting a false null hypothesis. Higher power means you're less likely to miss a real effect. Power increases with larger sample sizes, larger effect sizes, and higher levels.

- Effect size measures the magnitude of the difference between your sample result and the hypothesized value. A result can be statistically significant (small p-value) but have a tiny effect size that doesn't matter in practice. Always consider both.

- Central Limit Theorem (CLT): This is why the z-test and t-test work. The CLT states that the sampling distribution of the sample mean approaches a normal distribution as sample size increases, regardless of the population's shape. For proportions, the normal approximation works well when and .