Central Limit Theorem

Applying the Central Limit Theorem

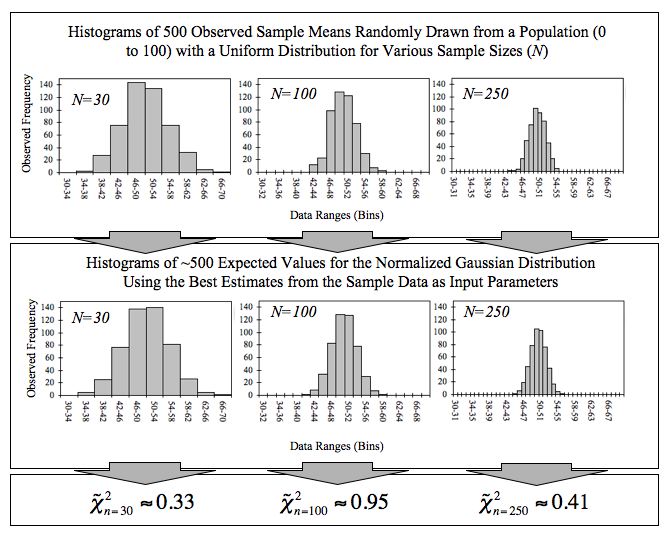

The central limit theorem (CLT) says that the sampling distribution of the sample mean will be approximately normal as long as certain conditions are met. This holds true regardless of the shape of the original population distribution, which is what makes it so useful.

Conditions for the CLT to apply:

- Sufficiently large sample size: Typically $n \geq 30$. If the population is already normal, smaller samples work fine. If the population is heavily skewed, you may need a larger $n$.

- Independence: Each observation in the sample must be independent of the others. In practice, this means using random sampling and, when sampling without replacement, keeping the sample smaller than 10% of the population.

- Population not extremely skewed: With very skewed distributions, you need a larger sample size before the CLT kicks in.

Properties of the sampling distribution (when CLT applies):

- Mean of the sampling distribution equals the population mean: $\mu_{\bar{x}} = \mu$

- Standard deviation of the sampling distribution (called the standard error) is: $\sigma_{\bar{x}} = \dfrac{\sigma}{\sqrt{n}}$

Notice that the standard error shrinks as $n$ increases. Larger samples produce less variability in sample means.
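Both properties can be checked with a small simulation. The sketch below assumes an exponential population with rate 1 (so the population mean and standard deviation are both 1) as an example of a skewed distribution; the function name `simulate_sample_means` and its parameters are hypothetical, chosen only for illustration.

```python
import random
import statistics

def simulate_sample_means(n, num_samples=2000, rate=1.0, seed=0):
    """Draw num_samples samples of size n from an exponential population
    and return the list of their sample means."""
    rng = random.Random(seed)
    means = []
    for _ in range(num_samples):
        sample = [rng.expovariate(rate) for _ in range(n)]
        means.append(statistics.fmean(sample))
    return means

# Assumed population: Exponential(rate=1), which has mean 1 and SD 1.
mu, sigma = 1.0, 1.0

for n in (10, 40, 160):
    means = simulate_sample_means(n)
    observed_se = statistics.stdev(means)   # spread of the sample means
    predicted_se = sigma / n ** 0.5         # sigma / sqrt(n)
    print(f"n={n:4d}  mean of means={statistics.fmean(means):.3f}  "
          f"observed SE={observed_se:.3f}  predicted SE={predicted_se:.3f}")
```

The mean of the simulated sample means should sit close to the population mean, and the observed spread should track $\sigma / \sqrt{n}$, roughly halving each time $n$ is quadrupled.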

To calculate probabilities for sample means, follow these steps:

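1. Verify the CLT conditions are met.

2. Identify $\mu$, $\sigma$, and $n$.

3. Calculate the standard error: $\sigma_{\bar{x}} = \dfrac{\sigma}{\sqrt{n}}$

4. Convert the sample mean to a z-score: $z = \dfrac{\bar{x} - \mu}{\sigma / \sqrt{n}}$

5. Use a standard normal table or calculator to find the probability associated with that z-score.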
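The calculation steps above can be sketched in a few lines of Python, assuming the CLT conditions have already been checked. The function name `prob_sample_mean_below` and the numbers (population mean 70, population standard deviation 12, sample size 36) are hypothetical; `statistics.NormalDist` from the standard library stands in for the normal table.

```python
from math import sqrt
from statistics import NormalDist

def prob_sample_mean_below(x_bar, mu, sigma, n):
    """Approximate P(sample mean < x_bar) using the CLT."""
    standard_error = sigma / sqrt(n)      # standard error of the mean
    z = (x_bar - mu) / standard_error     # z-score of the sample mean
    return NormalDist().cdf(z)            # area to the left of z

# Hypothetical inputs: mu = 70, sigma = 12, n = 36.
# Standard error = 12 / 6 = 2, so z = (73 - 70) / 2 = 1.5.
p = prob_sample_mean_below(x_bar=73, mu=70, sigma=12, n=36)
print(f"P(sample mean < 73) is about {p:.4f}")   # about 0.9332
```

For an upper-tail probability such as P(sample mean > 73), subtract the result from 1.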

The CLT also applies to sums of independent random variables. When working with sums instead of means:

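- Mean of the sum: $\mu_{\Sigma x} = n\mu$

- Standard deviation of the sum: $\sigma_{\Sigma x} = \sqrt{n}\,\sigma$

The sum's standard deviation grows as $n$ increases (in proportion to $\sqrt{n}$), which is the opposite direction from the standard error of the mean, which shrinks in proportion to $1/\sqrt{n}$.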
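A minimal sketch of the sum version follows, using a mean of $n\mu$ and a standard deviation of $\sqrt{n}\,\sigma$ for the sum. The function name `prob_sum_above` and the scenario (100 packages, each with mean weight 2.0 kg and standard deviation 0.5 kg) are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def prob_sum_above(total, mu, sigma, n):
    """Approximate P(sum of n observations > total) using the CLT for sums."""
    mean_of_sum = n * mu             # mean of the sum: n * mu
    sd_of_sum = sqrt(n) * sigma      # SD of the sum: sqrt(n) * sigma
    z = (total - mean_of_sum) / sd_of_sum
    return 1 - NormalDist().cdf(z)   # upper-tail area

# Hypothetical inputs: n = 100, mu = 2.0 kg, sigma = 0.5 kg.
# Mean of sum = 200, SD of sum = 10 * 0.5 = 5, so z = (210 - 200) / 5 = 2.
p = prob_sum_above(total=210, mu=2.0, sigma=0.5, n=100)
print(f"P(total weight > 210 kg) is about {p:.4f}")   # about 0.0228
```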

Law of Large Numbers Connection

The law of large numbers says that as sample size increases, the sample mean converges to the population mean. In notation: as $n \to \infty$, $\bar{x} \to \mu$.

The CLT builds on this idea but goes further. The law of large numbers tells you where the sample mean is heading (toward $\mu$). The CLT tells you the shape of the sampling distribution along the way (approximately normal) and how spread out it is (via the standard error).

Both concepts share the same core insight: larger samples give you more accurate and predictable estimates of population parameters, because sampling variability decreases as $n$ grows.
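The convergence can be observed directly. The sketch below assumes a fair six-sided die as the population (mean 3.5, standard deviation $\sqrt{35/12} \approx 1.708$); the function name `sample_mean_error` is hypothetical. It prints how far the sample mean lands from the population mean next to the standard error that the CLT predicts for that sample size.

```python
import random
import statistics

DIE_MEAN = 3.5                 # population mean of a fair six-sided die
DIE_SD = (35 / 12) ** 0.5      # population standard deviation

def sample_mean_error(n, seed=0):
    """Return |sample mean - population mean| for n rolls of a fair die."""
    rng = random.Random(seed)
    rolls = [rng.randint(1, 6) for _ in range(n)]
    return abs(statistics.fmean(rolls) - DIE_MEAN)

for n in (10, 1_000, 100_000):
    predicted_se = DIE_SD / n ** 0.5
    print(f"n={n:7d}  |x_bar - mu|={sample_mean_error(n):.4f}  "
          f"predicted SE={predicted_se:.4f}")
```

Any single run can be lucky or unlucky, so the error need not shrink at every step, but its typical size follows the predicted standard error downward as the sample grows.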

Central Limit Theorem for Means vs. Sums

The CLT works for both sample means and sample sums, but you need to recognize which one a problem is asking about.

Use the CLT for means when:

- The question asks about a sample mean or average (e.g., mean height, average income)

- You need a probability like $P(\bar{x} < a)$ or $P(\bar{x} > a)$

- You standardize using $z = \dfrac{\bar{x} - \mu}{\sigma / \sqrt{n}}$

Use the CLT for sums when:

- The question asks about a total (e.g., total sales, combined weight)

- You need a probability like $P(\sum x < a)$ or $P(\sum x > a)$

- You standardize using $z = \dfrac{\sum x - n\mu}{\sqrt{n}\,\sigma}$

A quick way to tell: if the problem says "total" or "sum," use the sum version. If it says "mean" or "average," use the mean version. In both cases, check that the CLT conditions (large $n$, independence, not extremely skewed) are satisfied before proceeding.
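The two versions describe the same event on different scales, which a short derivation makes explicit. Writing the sum as $\sum x = n\bar{x}$ and standardizing it with the mean $n\mu$ and standard deviation $\sqrt{n}\,\sigma$ of the sum (the subscripted labels $z_{\text{sum}}$ and $z_{\text{mean}}$ are introduced here only for this comparison):

```latex
\begin{aligned}
z_{\text{sum}}
  &= \frac{\sum x - n\mu}{\sqrt{n}\,\sigma}
   = \frac{n\bar{x} - n\mu}{\sqrt{n}\,\sigma}
   = \frac{\sqrt{n}\,(\bar{x} - \mu)}{\sigma}
   = \frac{\bar{x} - \mu}{\sigma / \sqrt{n}}
   = z_{\text{mean}}
\end{aligned}
```

So a question about a total exceeding $a$ gives the same z-score as the equivalent question about the average exceeding $a/n$; choosing the version that matches the problem's wording simply avoids a conversion step.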

Statistical Inference and the Central Limit Theorem

The CLT is what makes most of statistical inference possible. Because the sampling distribution is approximately normal, you can use it to:

- Construct confidence intervals: Estimate a population parameter within a range of plausible values, using the standard error to determine the margin of error.

- Perform hypothesis tests: Assess whether observed sample data are consistent with a claimed population parameter by calculating how likely the result would be under the null hypothesis.

- Quantify sampling error: Sampling error is the difference between a sample statistic and the true population parameter. The CLT tells you that the typical size of this error decreases as sample size increases, in proportion to $1/\sqrt{n}$.

These techniques all rely on knowing the shape and spread of the sampling distribution, which is exactly what the CLT provides.
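As one illustration, a confidence interval for a population mean can be sketched as follows. This assumes the population standard deviation $\sigma$ is known (a z-interval) and that the CLT conditions hold; the function name `z_confidence_interval` and the numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def z_confidence_interval(x_bar, sigma, n, confidence=0.95):
    """Return (low, high) for a z-interval around the sample mean.

    Assumes sigma (the population SD) is known and the sampling
    distribution of the mean is approximately normal.
    """
    # Critical value: leaves (1 - confidence) / 2 in each tail.
    z_star = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    margin_of_error = z_star * sigma / sqrt(n)   # z* times the standard error
    return x_bar - margin_of_error, x_bar + margin_of_error

# Hypothetical inputs: sample mean 73, sigma = 12, n = 36 (standard error 2).
low, high = z_confidence_interval(x_bar=73, sigma=12, n=36)
print(f"95% CI: ({low:.2f}, {high:.2f})")   # roughly (69.08, 76.92)
```

Because the margin of error is a multiple of the standard error, quadrupling the sample size halves the width of the interval.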