Hypothesis Testing for Two Population Means with Known Standard Deviations

When you want to know whether two groups truly differ on some measurement, you need more than just eyeballing the sample averages. The two-sample z-test gives you a formal way to decide whether an observed difference between two group means is statistically significant or likely just sampling variability. This test applies specifically when the population standard deviations are already known, which is uncommon in practice but forms the foundation for understanding more general two-sample procedures.

Test Statistic for Two Population Means

The z-test statistic measures how far the observed difference between sample means falls from the hypothesized difference, scaled by the standard error. Here's the formula:

$$z = \frac{(\bar{x}_1 - \bar{x}_2) - D_0}{\sqrt{\dfrac{\sigma_1^2}{n_1} + \dfrac{\sigma_2^2}{n_2}}}$$

Each component:

- $\bar{x}_1$ and $\bar{x}_2$: the sample means from group 1 and group 2

- $D_0$: the hypothesized difference between the population means, $\mu_1 - \mu_2$ under $H_0$ (often 0, meaning "no difference")

- $\sigma_1$ and $\sigma_2$: the known population standard deviations for each group

- $n_1$ and $n_2$: the sample sizes drawn from each population

The numerator captures how far the observed difference is from what you'd expect under $H_0$. The denominator is the standard error of the difference, which accounts for variability in both groups and both sample sizes. A larger $|z|$ means the observed difference is harder to explain by chance alone.
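The calculation can be sketched in a few lines of Python. This is a minimal illustration, not a library routine: the function name `two_sample_z`, its parameter names, and the input numbers are all made up for the example.

```python
import math

def two_sample_z(xbar1, xbar2, sigma1, sigma2, n1, n2, d0=0.0):
    """z statistic for H0: mu1 - mu2 = d0 when both population SDs are known."""
    # Standard error of the difference between the two sample means.
    standard_error = math.sqrt(sigma1**2 / n1 + sigma2**2 / n2)
    # Observed difference minus hypothesized difference, in standard-error units.
    return ((xbar1 - xbar2) - d0) / standard_error

# Hypothetical inputs, chosen only to exercise the formula.
z = two_sample_z(xbar1=105.0, xbar2=100.0, sigma1=15.0, sigma2=20.0, n1=36, n2=64)
print(round(z, 3))  # 1.414
```

Here the standard error is $\sqrt{225/36 + 400/64} = \sqrt{12.5}$, so the 5-point difference sits about 1.41 standard errors from zero.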

Sampling Distribution of the Difference in Means

The quantity $\bar{X}_1 - \bar{X}_2$ has its own sampling distribution. If both populations are normal, this distribution is exactly normal (not just approximately normal). If the populations aren't normal, the Central Limit Theorem still makes the distribution approximately normal as long as both sample sizes are large ($n_1 \geq 30$ and $n_2 \geq 30$ is the usual rule of thumb).

Two key properties of this distribution:

- Mean: $\mu_{\bar{X}_1 - \bar{X}_2} = \mu_1 - \mu_2$

- Standard error: $\sigma_{\bar{X}_1 - \bar{X}_2} = \sqrt{\dfrac{\sigma_1^2}{n_1} + \dfrac{\sigma_2^2}{n_2}}$

These hold as long as the two samples are independent, meaning the selection of individuals in one sample doesn't influence the other.
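A quick simulation is one way to check the mean and standard error of the difference in sample means. The sketch below assumes two normal populations with made-up parameters and draws independent samples repeatedly; the seed, population values, and repetition count are arbitrary choices for illustration.

```python
import math
import random
import statistics

rng = random.Random(0)  # fixed seed so the run is repeatable

# Hypothetical population parameters and sample sizes.
mu1, sigma1, n1 = 50.0, 10.0, 25
mu2, sigma2, n2 = 45.0, 8.0, 16

def sample_mean(mu, sigma, n):
    """Mean of one random sample of size n from a normal population."""
    return statistics.fmean(rng.gauss(mu, sigma) for _ in range(n))

# Each repetition draws two independent samples and records the difference in means.
diffs = [
    sample_mean(mu1, sigma1, n1) - sample_mean(mu2, sigma2, n2)
    for _ in range(20000)
]

theoretical_se = math.sqrt(sigma1**2 / n1 + sigma2**2 / n2)
print("simulated mean:", statistics.fmean(diffs), "theory:", mu1 - mu2)
print("simulated SE:  ", statistics.stdev(diffs), "theory:", theoretical_se)
```

With these inputs the theoretical values are a mean of 5 and a standard error of $\sqrt{8} \approx 2.83$, and the simulated values should land close to both.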

Conducting the Hypothesis Test

Step 1: State the hypotheses.

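- Null hypothesis: $H_0: \mu_1 - \mu_2 = D_0$ (where $D_0$ is the hypothesized difference, usually 0)

- Alternative hypothesis (pick one based on the research question):

  - Two-tailed: $H_a: \mu_1 - \mu_2 \neq D_0$

  - Left-tailed: $H_a: \mu_1 - \mu_2 < D_0$

  - Right-tailed: $H_a: \mu_1 - \mu_2 > D_0$

Step 2: Choose a significance level $\alpha$ (commonly 0.05 or 0.01).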

Step 3: Calculate the test statistic using the z-formula above.

Step 4: Find the critical value(s) or p-value.

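| Test type | Critical value(s) | P-value calculation |
|---|---|---|
| Two-tailed | $\pm z_{\alpha/2}$ | $2\,P(Z > \lvert z \rvert)$ |
| Left-tailed | $-z_{\alpha}$ | $P(Z < z)$ |
| Right-tailed | $z_{\alpha}$ | $P(Z > z)$ |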
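The critical values and p-values for the three test types can be computed with the standard normal distribution. The sketch below uses `statistics.NormalDist` from the Python standard library; the helper names `critical_values` and `p_value` and the `tail` labels are invented for this example.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # mean 0, standard deviation 1

def critical_values(alpha, tail):
    """Critical value(s) for a z-test; tail is 'two', 'left', or 'right'."""
    if tail == "two":
        z_crit = STD_NORMAL.inv_cdf(1 - alpha / 2)
        return (-z_crit, z_crit)
    if tail == "left":
        return (STD_NORMAL.inv_cdf(alpha),)
    if tail == "right":
        return (STD_NORMAL.inv_cdf(1 - alpha),)
    raise ValueError("tail must be 'two', 'left', or 'right'")

def p_value(z, tail):
    """P-value for an observed z statistic; tail is 'two', 'left', or 'right'."""
    if tail == "two":
        return 2 * (1 - STD_NORMAL.cdf(abs(z)))
    if tail == "left":
        return STD_NORMAL.cdf(z)
    if tail == "right":
        return 1 - STD_NORMAL.cdf(z)
    raise ValueError("tail must be 'two', 'left', or 'right'")

print(critical_values(0.05, "two"))   # roughly (-1.96, 1.96)
print(critical_values(0.05, "left"))  # roughly (-1.645,)
print(p_value(1.96, "two"))           # roughly 0.05
```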

Step 5: Make your decision and interpret.

- Critical value approach: Reject $H_0$ if the test statistic falls in the rejection region (beyond the critical value). Otherwise, fail to reject $H_0$.

- P-value approach: Reject $H_0$ if the p-value is less than $\alpha$. Otherwise, fail to reject $H_0$.

Always state your conclusion in context. For example: "At the 0.05 significance level, there is sufficient evidence to conclude that the mean test score for School A differs from that of School B."
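The two decision rules always agree, which a short sketch can confirm for the two-tailed case. The function name `decide_two_tailed` and the z values passed to it are hypothetical, picked only to show one rejection and one non-rejection.

```python
from statistics import NormalDist

def decide_two_tailed(z, alpha=0.05):
    """Return (reject by critical value, reject by p-value) for a two-tailed z-test."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)   # upper critical value
    p = 2 * (1 - std_normal.cdf(abs(z)))         # two-tailed p-value
    reject_by_critical = abs(z) > z_crit
    reject_by_p = p < alpha
    return reject_by_critical, reject_by_p

# Hypothetical test statistics.
print(decide_two_tailed(2.5))  # (True, True): reject H0
print(decide_two_tailed(1.0))  # (False, False): fail to reject H0
```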

Worked Example

Suppose you're comparing average SAT math scores between two school districts. You know $\sigma_1$ and $\sigma_2$. You collect samples of $n_1$ and $n_2$ students, finding $\bar{x}_1$ and $\bar{x}_2$. Test whether the means differ at $\alpha = 0.05$.

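- $H_0: \mu_1 - \mu_2 = 0$ vs. $H_a: \mu_1 - \mu_2 \neq 0$

- $\alpha = 0.05$

- Calculate the test statistic: $z = \dfrac{(\bar{x}_1 - \bar{x}_2) - 0}{\sqrt{\dfrac{\sigma_1^2}{n_1} + \dfrac{\sigma_2^2}{n_2}}}$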

- For a two-tailed test at $\alpha = 0.05$, the critical values are $\pm 1.96$. The p-value is $2\,P(Z > |z|)$.

- Since $|z| < 1.96$ (or equivalently, the p-value is greater than $0.05$), you fail to reject $H_0$. There is not sufficient evidence at the 0.05 level to conclude the mean SAT math scores differ between the two districts.

Notice how close this was to the boundary. A slightly larger sample or a slightly bigger difference would have tipped the result the other way.

Additional Considerations

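Confidence intervals offer a complementary perspective. A $(1 - \alpha) \cdot 100\%$ confidence interval for $\mu_1 - \mu_2$ is:

$$(\bar{x}_1 - \bar{x}_2) \pm z_{\alpha/2}\sqrt{\dfrac{\sigma_1^2}{n_1} + \dfrac{\sigma_2^2}{n_2}}$$

If this interval contains 0 (when testing $H_0: \mu_1 - \mu_2 = 0$), you'd fail to reject $H_0$ in a two-tailed test at the same $\alpha$, consistent with the hypothesis test.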
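As a rough sketch, the interval can be computed with the standard library alone. The function name `z_confidence_interval` and the input values are hypothetical and are not taken from the worked example.

```python
import math
from statistics import NormalDist

def z_confidence_interval(xbar1, xbar2, sigma1, sigma2, n1, n2, confidence=0.95):
    """Confidence interval for mu1 - mu2 when both population SDs are known."""
    alpha = 1 - confidence
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    standard_error = math.sqrt(sigma1**2 / n1 + sigma2**2 / n2)
    diff = xbar1 - xbar2
    margin = z_crit * standard_error
    return diff - margin, diff + margin

# Hypothetical inputs, chosen only to exercise the formula.
low, high = z_confidence_interval(105.0, 100.0, 15.0, 20.0, 36, 64)
print(round(low, 2), round(high, 2))  # -1.93 11.93
```

The interval contains 0, which matches a two-tailed test on the same inputs: the z statistic is about 1.41, inside $\pm 1.96$.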

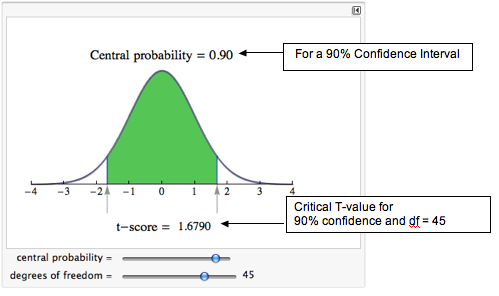

Why z and not t? This procedure uses the z-distribution because the population standard deviations are known. When $\sigma_1$ and $\sigma_2$ are unknown and estimated from the samples, you switch to a t-test, which accounts for the extra uncertainty in estimating those parameters. Degrees of freedom become relevant in that t-test setting, not here.