Continuous Distributions

Continuous distributions model random variables that can take any value within a range, like heights, weights, or waiting times. Unlike discrete distributions where you sum probabilities for individual outcomes, continuous distributions use areas under curves (integrals) to find probabilities. This section covers the two main continuous distributions you need to know (uniform and exponential), how to read and interpret density functions, and how to calculate means and variances for continuous random variables.


Continuous Probability Calculations

Continuous Uniform Distribution

A uniform distribution on $[a, b]$ applies when every value in that range is equally likely. The PDF is flat, meaning no value is more probable than any other.

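- PDF: $f(x) = \frac{1}{b-a}$ for $a \le x \le b$

- CDF: $F(x) = \frac{x-a}{b-a}$ for $a \le x \le b$

- Probability over an interval: $P(c \le X \le d) = \frac{d-c}{b-a}$ for $a \le c \le d \le b$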

Because the PDF is constant, probability calculations reduce to a simple ratio: the length of the interval you care about divided by the total length of the distribution's range. For example, if a random number is chosen uniformly between 0 and 1, the probability it falls between 0.2 and 0.5 is $\frac{0.5 - 0.2}{1 - 0} = 0.3$.
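The three uniform formulas translate directly into code. The sketch below is a minimal illustration. The function names `uniform_pdf`, `uniform_cdf`, and `uniform_interval_prob` are hypothetical helpers, not part of any library. It reproduces the 0.2 to 0.5 example.

```python
def uniform_pdf(x: float, a: float, b: float) -> float:
    """Density of Uniform(a, b): constant 1/(b - a) inside [a, b], zero outside."""
    return 1.0 / (b - a) if a <= x <= b else 0.0


def uniform_cdf(x: float, a: float, b: float) -> float:
    """P(X <= x) for Uniform(a, b), clamped to 0 below a and 1 above b."""
    if x < a:
        return 0.0
    if x > b:
        return 1.0
    return (x - a) / (b - a)


def uniform_interval_prob(c: float, d: float, a: float, b: float) -> float:
    """P(c <= X <= d): length of [c, d] over length of [a, b], assuming a <= c <= d <= b."""
    return (d - c) / (b - a)


# Example from the text: X ~ Uniform(0, 1), P(0.2 <= X <= 0.5)
p = uniform_interval_prob(0.2, 0.5, 0, 1)
# The same answer via a difference of CDF values
p_from_cdf = uniform_cdf(0.5, 0, 1) - uniform_cdf(0.2, 0, 1)
print(round(p, 10), round(p_from_cdf, 10))  # 0.3 0.3
```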

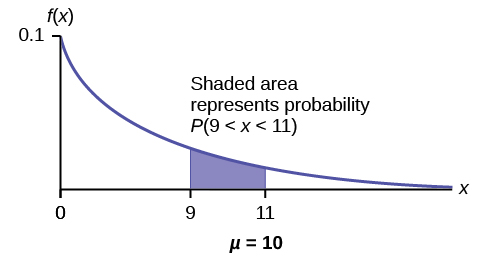

Exponential Distribution

The exponential distribution models the time between events in a Poisson process, where events occur continuously and independently at a constant average rate $\lambda$.

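- PDF: $f(x) = \lambda e^{-\lambda x}$ for $x \ge 0$

- CDF: $F(x) = 1 - e^{-\lambda x}$ for $x \ge 0$

- Survival probability (event hasn't occurred by time $x$): $P(X > x) = 1 - F(x) = e^{-\lambda x}$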

The memoryless property is unique to the exponential distribution among continuous distributions. It states:

$$P(X > s + t \mid X > s) = P(X > t) \quad \text{for all } s, t \ge 0$$

This means the probability of waiting an additional $t$ units doesn't depend on how long ($s$) you've already waited. For instance, if a light bulb's lifespan is exponentially distributed, a bulb that's been running for 1,000 hours is exactly as likely to fail in the next hour as a brand-new bulb.
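You can see why the property holds by applying the definition of conditional probability to the survival function $P(X > x) = e^{-\lambda x}$, for waiting times $s, t \ge 0$. Here $X > s + t$ already implies $X > s$:

$$P(X > s + t \mid X > s) = \frac{P(X > s + t)}{P(X > s)} = \frac{e^{-\lambda (s + t)}}{e^{-\lambda s}} = e^{-\lambda t} = P(X > t)$$

The $e^{-\lambda s}$ factor cancels, so the time already spent waiting drops out entirely.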

Interpretation of Density Functions

Probability Density Function (PDF)

A PDF describes the relative likelihood of a continuous random variable taking on a given value. Two critical rules govern every PDF:

- $f(x) \ge 0$ for all $x$ (the function is never negative)

- The total area under the curve equals 1: $\int_{-\infty}^{\infty} f(x)\,dx = 1$

The value of $f(x)$ at a single point is not a probability. Instead, the area under $f(x)$ over an interval gives you the probability that $X$ falls in that interval. So for a height distribution, $P(160 \le X \le 180) = \int_{160}^{180} f(x)\,dx$, the area under the PDF curve between 160 cm and 180 cm.

This also means $P(X = a) = 0$ for any single value $a$. There's no area under a curve at a single point, so the probability of hitting any exact value is zero.
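To see that probability is area, you can approximate the integral numerically and compare it with a closed form. The sketch below uses the midpoint rule on an exponential density with a hypothetical rate $\lambda = 0.5$, chosen only for illustration. The helper names `exp_pdf` and `area` are also illustrative. An interval of zero width gets zero area, even where the density is positive.

```python
import math


def exp_pdf(x: float, lam: float) -> float:
    """Exponential density lam * exp(-lam * x) for x >= 0, zero otherwise."""
    return lam * math.exp(-lam * x) if x >= 0 else 0.0


def area(f, lo: float, hi: float, n: int = 100_000) -> float:
    """Approximate the integral of f over [lo, hi] with the midpoint rule."""
    width = (hi - lo) / n
    return sum(f(lo + (i + 0.5) * width) for i in range(n)) * width


lam = 0.5  # hypothetical rate, chosen only for illustration


def pdf(x: float) -> float:
    return exp_pdf(x, lam)


# Probability is area: P(1 <= X <= 3) should match e^{-0.5} - e^{-1.5}
numeric = area(pdf, 1.0, 3.0)
exact = math.exp(-lam * 1.0) - math.exp(-lam * 3.0)
print(numeric, exact)

# A single point has zero width, so zero area, even though pdf(2) > 0
print(pdf(2.0), area(pdf, 2.0, 2.0))
```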

Cumulative Distribution Function (CDF)

The CDF gives the probability that $X$ is less than or equal to $x$:

$$F(x) = P(X \le x) = \int_{-\infty}^{x} f(t)\,dt$$

The CDF is the running total of area under the PDF from the left. Its key properties:

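- Non-decreasing: as $x$ increases, $F(x)$ can only stay the same or go up

- Bounded: $\lim_{x \to -\infty} F(x) = 0$ and $\lim_{x \to \infty} F(x) = 1$

- Continuous: for a continuous random variable, $F(x)$ has no jumps (every CDF is at least right-continuous)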

The quantile function $Q(p) = F^{-1}(p)$ is the inverse of the CDF. Given a probability $p$, the quantile function returns the value $x$ such that $F(x) = p$. This is how you find percentiles: the 90th percentile is the value $x$ where $F(x) = 0.9$.

To find the probability that $X$ falls between two values $a$ and $b$ using the CDF:

$$P(a \le X \le b) = F(b) - F(a)$$
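For the exponential distribution, both operations, subtracting CDF values and inverting the CDF, have closed forms. Solving $1 - e^{-\lambda x} = p$ gives $x = -\ln(1 - p)/\lambda$. The sketch below assumes a hypothetical rate $\lambda = 2$, and the helper names are illustrative.

```python
import math


def exp_cdf(x: float, lam: float) -> float:
    """F(x) = 1 - e^{-lam x} for x >= 0, and 0 for x < 0."""
    return 1.0 - math.exp(-lam * x) if x >= 0 else 0.0


def exp_quantile(p: float, lam: float) -> float:
    """Inverse CDF: solve 1 - e^{-lam x} = p for x, valid for 0 <= p < 1."""
    return -math.log(1.0 - p) / lam


lam = 2.0  # hypothetical rate for illustration

# P(0.5 <= X <= 1) as a difference of CDF values
p_between = exp_cdf(1.0, lam) - exp_cdf(0.5, lam)
print(p_between)

# 90th percentile, then check that F maps it back to 0.9
q90 = exp_quantile(0.9, lam)
print(q90, exp_cdf(q90, lam))
```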

Analysis of Continuous Distributions

Expected Value (Mean)

The expected value is the "balance point" of the distribution, calculated as a weighted average across all possible values:

$$E[X] = \int_{-\infty}^{\infty} x\,f(x)\,dx$$

For the specific distributions covered here:

- Uniform on $[a, b]$: $E[X] = \frac{a+b}{2}$

- Exponential with rate $\lambda$: $E[X] = \frac{1}{\lambda}$

Linearity of expectation holds for continuous variables just as it does for discrete ones:

$$E[aX + b] = a\,E[X] + b$$
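The integral definition of $E[X]$ can be checked against the closed forms with a numerical integral. The sketch below uses the midpoint rule with hypothetical parameters: uniform on $[2, 8]$ and exponential with $\lambda = 0.5$. The exponential's infinite support is truncated at $60/\lambda$, where the remaining tail is negligible. It also computes $E[3X + 1]$ directly to illustrate linearity.

```python
import math


def midpoint_integral(g, lo, hi, n=200_000):
    """Midpoint-rule approximation of the integral of g over [lo, hi]."""
    h = (hi - lo) / n
    return h * sum(g(lo + (i + 0.5) * h) for i in range(n))


def expected_value(pdf, lo, hi):
    """E[X]: integral of x * f(x) over the support [lo, hi]."""
    return midpoint_integral(lambda x: x * pdf(x), lo, hi)


a, b = 2.0, 8.0  # hypothetical uniform endpoints
lam = 0.5        # hypothetical exponential rate


def uniform_pdf(x):
    return 1 / (b - a)


def exp_pdf(x):
    return lam * math.exp(-lam * x)


mean_u = expected_value(uniform_pdf, a, b)
# The exponential support is [0, inf); cutting it off at 60/lam drops a negligible tail.
mean_e = expected_value(exp_pdf, 0.0, 60 / lam)
# Linearity: E[3X + 1] integrated directly should equal 3 E[X] + 1
mean_lin = midpoint_integral(lambda x: (3 * x + 1) * uniform_pdf(x), a, b)

print(mean_u, (a + b) / 2)        # both about 5.0
print(mean_e, 1 / lam)            # both about 2.0
print(mean_lin, 3 * mean_u + 1)   # both about 16.0
```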

Variance and Standard Deviation

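Variance measures how spread out the distribution is around the mean $\mu = E[X]$:

$$\operatorname{Var}(X) = E[(X - \mu)^2] = \int_{-\infty}^{\infty} (x - \mu)^2 f(x)\,dx$$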

The computational formula is often easier to work with:

$$\operatorname{Var}(X) = E[X^2] - (E[X])^2$$

To use this, you calculate $E[X^2] = \int_{-\infty}^{\infty} x^2 f(x)\,dx$ separately, then subtract the square of the mean.

For the specific distributions:

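- Uniform on $[a, b]$: $\operatorname{Var}(X) = \frac{(b-a)^2}{12}$

- Exponential with rate $\lambda$: $\operatorname{Var}(X) = \frac{1}{\lambda^2}$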

Variance scales quadratically under linear transformations:

$$\operatorname{Var}(aX + b) = a^2 \operatorname{Var}(X)$$

Note that adding a constant shifts the distribution but doesn't change its spread, which is why $b$ drops out.

Standard deviation is the square root of variance: $\sigma = \sqrt{\operatorname{Var}(X)}$. It's in the same units as $X$, making it more interpretable than variance.
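The computational formula $E[X^2] - (E[X])^2$ is easy to check numerically. The sketch below uses the same hypothetical parameters as a simple test case: uniform on $[2, 8]$ and exponential with $\lambda = 0.5$. It also uses the fact that $Y = 3X + 1$ is uniform on $[7, 25]$ when $X$ is uniform on $[2, 8]$, which illustrates $\operatorname{Var}(aX + b) = a^2 \operatorname{Var}(X)$.

```python
import math


def midpoint_integral(g, lo, hi, n=200_000):
    """Midpoint-rule approximation of the integral of g over [lo, hi]."""
    h = (hi - lo) / n
    return h * sum(g(lo + (i + 0.5) * h) for i in range(n))


def variance(pdf, lo, hi):
    """Var(X) = E[X^2] - (E[X])^2, with both moments integrated numerically."""
    ex = midpoint_integral(lambda x: x * pdf(x), lo, hi)
    ex2 = midpoint_integral(lambda x: x * x * pdf(x), lo, hi)
    return ex2 - ex ** 2


a, b = 2.0, 8.0  # hypothetical uniform endpoints
lam = 0.5        # hypothetical exponential rate

var_u = variance(lambda x: 1 / (b - a), a, b)
# Truncate the exponential's infinite support where the tail is negligible
var_e = variance(lambda x: lam * math.exp(-lam * x), 0.0, 60 / lam)
# Y = 3X + 1 with X ~ Uniform(2, 8) is Uniform(7, 25)
var_y = variance(lambda y: 1 / 18, 7.0, 25.0)

print(var_u, (b - a) ** 2 / 12)   # both about 3.0
print(var_e, 1 / lam ** 2)        # both about 4.0
print(var_y, 9 * var_u)           # both about 27.0: the +1 shift drops out
print(math.sqrt(var_e))           # standard deviation, about 2.0
```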

Advanced Concepts in Continuous Distributions

- The normal distribution (Gaussian distribution) is a symmetric, bell-shaped distribution fully characterized by its mean $\mu$ and standard deviation $\sigma$. It shows up constantly in statistics because of the Central Limit Theorem.

- A moment-generating function, $M_X(t) = E[e^{tX}]$, provides a compact way to compute moments (mean, variance, etc.). When it exists on an open interval around $t = 0$, it uniquely identifies a distribution.

- Kurtosis measures how heavy or light the tails of a distribution are compared to a normal distribution. High kurtosis means more extreme outliers are likely.

- The likelihood function is used in parameter estimation. Given observed data, it measures how probable those observations are for different parameter values, forming the basis of maximum likelihood estimation.
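Maximum likelihood can be made concrete for the exponential distribution. For independent observations $x_1, \dots, x_n$, the log-likelihood is $n \ln \lambda - \lambda \sum x_i$. Setting its derivative $n/\lambda - \sum x_i$ to zero gives $\hat\lambda = 1/\bar{x}$. The sketch below uses made-up waiting times, purely for illustration, and confirms the closed form against a grid search.

```python
import math


def exp_log_likelihood(lam: float, data: list[float]) -> float:
    """Log-likelihood of rate lam for i.i.d. exponential data: n*log(lam) - lam*sum(x)."""
    return len(data) * math.log(lam) - lam * sum(data)


# Hypothetical waiting times in minutes, made up purely for illustration
data = [1.2, 0.4, 2.9, 0.8, 1.7]

# Closed-form MLE: lam_hat = n / sum(x) = 1 / sample mean
lam_hat = len(data) / sum(data)

# Crude check: no rate on a grid around lam_hat has a higher log-likelihood
grid = [lam_hat * k / 100 for k in range(50, 151)]
best = max(grid, key=lambda lam: exp_log_likelihood(lam, data))
print(lam_hat, best)  # both about 0.714
```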

Applying Continuous Distributions

Continuous Probability Calculations

Uniform Distribution Applications

A bus arrives at a stop at a uniformly random time between 7:00 AM and 7:15 AM. What's the probability it arrives within the first 5 minutes?

- Identify the parameters: $a = 0$, $b = 15$ (minutes after 7:00)

- Apply the formula: $P(0 \le X \le 5) = \frac{5 - 0}{15 - 0} = \frac{1}{3} \approx 0.333$

A bolt's length is uniformly distributed between 9.9 cm and 10.1 cm. The probability its length falls between 9.95 cm and 10.05 cm is $\frac{10.05 - 9.95}{10.1 - 9.9} = \frac{0.1}{0.2} = 0.5$.
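Both uniform examples use the same length-ratio formula. Here is a minimal sketch with a hypothetical helper name:

```python
def uniform_interval_prob(c, d, a, b):
    """P(c <= X <= d) for X ~ Uniform(a, b), assuming a <= c <= d <= b."""
    return (d - c) / (b - a)


# Bus: arrival time in minutes after 7:00, uniform on [0, 15]
p_bus = uniform_interval_prob(0, 5, 0, 15)

# Bolt: length in cm, uniform on [9.9, 10.1]
p_bolt = uniform_interval_prob(9.95, 10.05, 9.9, 10.1)

print(round(p_bus, 4), round(p_bolt, 4))  # 0.3333 0.5
```

The bolt result is only approximately 0.5 in floating point, because decimals like 9.95 have no exact binary representation. Rounding hides that noise.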

Exponential Distribution Applications

Customers arrive at a store at an average rate of 6 per hour, so $\lambda = 6$ per hour (or equivalently, $\lambda = 0.1$ per minute). The probability the next customer arrives within 10 minutes:

- Use the CDF: $P(X \le 10) = 1 - e^{-0.1 \cdot 10} = 1 - e^{-1} \approx 0.632$

The probability of waiting more than 10 minutes: $P(X > 10) = e^{-1} \approx 0.368$.
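The same calculation in code shows why units matter. The rate and the time must be in the same unit, so either use 0.1 per minute with 10 minutes, or 6 per hour with 1/6 hour. Both give the same answer:

```python
import math

rate_per_hour = 6
lam = rate_per_hour / 60  # convert to a per-minute rate: 0.1

p_within_10 = 1 - math.exp(-lam * 10)   # CDF at 10 minutes
p_over_10 = math.exp(-lam * 10)         # survival function at 10 minutes

# Same probability computed in hours: 10 minutes = 10/60 hour
p_within_10_hours = 1 - math.exp(-rate_per_hour * (10 / 60))

print(round(p_within_10, 3), round(p_over_10, 3))  # 0.632 0.368
print(math.isclose(p_within_10, p_within_10_hours))  # True
```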

Interpretation of Density Functions

Reading a PDF

When you look at a PDF, pay attention to:

- Domain: the range of possible values (where $f(x) > 0$)

- Peaks (modes): where the distribution is most concentrated. A distribution can be unimodal (one peak), bimodal (two peaks), or multimodal.

- Symmetry and skewness: a symmetric PDF is a mirror image around its mean. If the right tail stretches further, it's right-skewed; if the left tail stretches further, it's left-skewed.

Remember that the height of the PDF at a point doesn't give you a probability directly. A PDF can even have values greater than 1 (for example, the uniform distribution on $[0, 0.5]$ has $f(x) = 2$ on that interval). What matters is the area under the curve.

Using the CDF in Practice

The CDF converts questions about ranges into simple subtraction:

- $P(X \le a) = F(a)$: read directly

- $P(X > a) = 1 - F(a)$

- $P(a < X \le b) = F(b) - F(a)$

To find a percentile, you go in reverse: set $F(x) = p$ and solve for $x$.
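When $F(x) = p$ has no convenient algebraic solution, you can solve it numerically. Because a CDF is non-decreasing, bisection works. The sketch below is a minimal version of that technique. The density $f(x) = 2x$ on $[0, 1]$ is a hypothetical example chosen so that $F(x) = x^2$ and the exact answer, $\sqrt{p}$, is available for comparison.

```python
def percentile(cdf, p, lo, hi, tol=1e-12):
    """Find x with cdf(x) = p by bisection on [lo, hi].

    Assumes cdf is continuous and non-decreasing with cdf(lo) <= p <= cdf(hi).
    """
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if cdf(mid) < p:
            lo = mid  # the target lies to the right of mid
        else:
            hi = mid  # the target is at or to the left of mid
    return (lo + hi) / 2


def cdf(x):
    """CDF of the hypothetical density f(x) = 2x on [0, 1]: F(x) = x^2."""
    return x * x


x90 = percentile(cdf, 0.9, 0.0, 1.0)
print(x90)  # about 0.948683, i.e. sqrt(0.9)
```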

Analysis of Continuous Distributions

Calculating Mean and Variance

For distributions beyond uniform and exponential, you'll need to integrate directly. The steps:

- Verify the function is a valid PDF (non-negative and integrates to 1)

- Compute $E[X] = \int x\,f(x)\,dx$ over the support

- Compute $E[X^2] = \int x^2 f(x)\,dx$ over the support

- Find variance using $\operatorname{Var}(X) = E[X^2] - (E[X])^2$
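The four steps above can be sketched numerically for a density that is neither uniform nor exponential. The example uses a hypothetical density $f(x) = 3x^2$ on $[0, 1]$. Its exact values are $E[X] = 3/4$, $E[X^2] = 3/5$, and $\operatorname{Var}(X) = 3/5 - 9/16 = 3/80$. Integrals are approximated with the midpoint rule rather than done symbolically.

```python
def integrate(g, lo, hi, n=100_000):
    """Midpoint-rule approximation of the integral of g over [lo, hi]."""
    h = (hi - lo) / n
    return h * sum(g(lo + (i + 0.5) * h) for i in range(n))


def pdf(x):
    """Hypothetical density f(x) = 3x^2 on the support [0, 1]."""
    return 3 * x * x


lo, hi = 0.0, 1.0

# Step 1: valid PDF? Check non-negativity on a grid and that the total area is 1.
non_negative = all(pdf(lo + i * (hi - lo) / 1000) >= 0 for i in range(1001))
total = integrate(pdf, lo, hi)
# Step 2: E[X] = integral of x f(x)  (exact: 3/4)
ex = integrate(lambda x: x * pdf(x), lo, hi)
# Step 3: E[X^2] = integral of x^2 f(x)  (exact: 3/5)
ex2 = integrate(lambda x: x * x * pdf(x), lo, hi)
# Step 4: Var(X) = E[X^2] - (E[X])^2  (exact: 3/80 = 0.0375)
var = ex2 - ex ** 2

print(non_negative, total, ex, ex2, var)
```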

Comparing Distributions

The mean and variance together tell you a lot about how two distributions differ. For example, suppose two queuing systems both have exponential service times but different rates, $\lambda_1 < \lambda_2$ customers per minute. The second system has a shorter average service time ($1/\lambda_2$ min vs. $1/\lambda_1$ min) and less variability ($1/\lambda_2^2$ vs. $1/\lambda_1^2$).

Changing distribution parameters affects both the center and spread. In the exponential case, increasing $\lambda$ decreases both the mean and the variance simultaneously, since the mean is $1/\lambda$ and the variance is $1/\lambda^2$.
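A quick comparison makes the effect of the rate concrete. The rates 2 and 4 customers per minute below are hypothetical, chosen only for illustration.

```python
def exp_summary(lam):
    """Mean 1/lam and variance 1/lam^2 of an exponential with rate lam."""
    return 1 / lam, 1 / lam ** 2


# Hypothetical service rates (customers per minute), chosen for illustration
for lam in (2.0, 4.0):
    mean, var = exp_summary(lam)
    print(f"rate={lam}: mean={mean} min, variance={var} min^2")
```

Doubling $\lambda$ halves the mean (0.5 to 0.25 min) but cuts the variance to a quarter (0.25 to 0.0625 min²). The spread shrinks faster than the center because variance depends on $1/\lambda^2$.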