Constrained Optimization

Overview of Constrained Optimization

Constrained optimization is the problem of finding the maximum or minimum of a function when the variables aren't free to take any value they want. Instead, they must satisfy one or more conditions (constraints) that restrict where you can look for solutions.

- The objective function is the quantity you're trying to optimize, expressed as a function of your decision variables (e.g., $f(x, y)$).

- A constraint is a condition the variables must satisfy for a solution to count, such as a budget limit or a surface equation.

- The feasible region is the set of all points that satisfy every constraint simultaneously. Your optimal point must live somewhere in this region.

Key Components and Concepts

Decision variables are the independent variables you can adjust to optimize the objective function.

Constraints come in two flavors:

- Equality constraints require the variables to satisfy a specific equation, like $g(x, y) = c$. These are the type Lagrange multipliers handle directly.

- Inequality constraints specify a range of acceptable values using $\le$, $\ge$, etc. These require additional techniques beyond standard Lagrange multipliers, such as the Karush-Kuhn-Tucker (KKT) conditions.

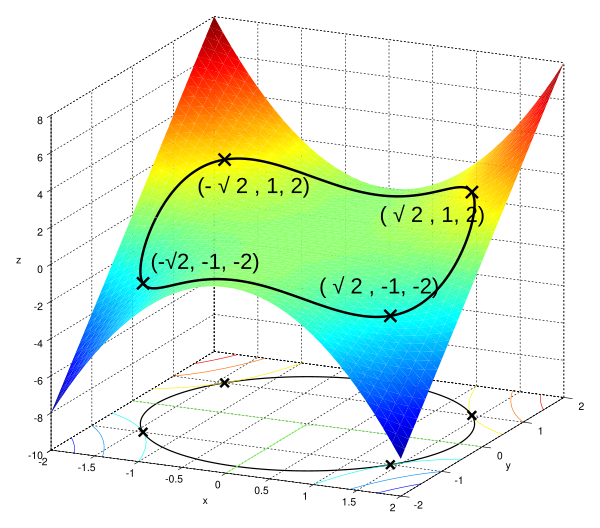

Graphing the feasible region (when possible in 2D or 3D) helps you visualize where the constraint boundaries intersect and where the optimum might occur.
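When a graph isn't practical, the same idea can be checked point by point. The following is a minimal Python sketch; the objective $f(x, y) = xy$, the constraints $x + y = 10$, $x \ge 0$, $y \ge 0$, and the candidate points are all illustrative assumptions, not part of the original material.

```python
# Illustrative assumption: maximize f(x, y) = x * y subject to
# x + y = 10 (equality) and x >= 0, y >= 0 (inequalities).

def objective(x, y):
    """Quantity to optimize, as a function of the decision variables."""
    return x * y

def is_feasible(x, y, tol=1e-9):
    """True when (x, y) satisfies every constraint simultaneously."""
    on_line = abs(x + y - 10.0) <= tol      # equality constraint
    nonnegative = x >= 0.0 and y >= 0.0     # inequality constraints
    return on_line and nonnegative

# Hypothetical candidate points; only some lie in the feasible region.
candidates = [(5.0, 5.0), (12.0, -2.0), (3.0, 3.0), (2.0, 8.0)]
feasible = [p for p in candidates if is_feasible(*p)]
best = max(feasible, key=lambda p: objective(*p))

print(feasible)  # [(5.0, 5.0), (2.0, 8.0)]
print(best)      # (5.0, 5.0)
```

Note that $(12, -2)$ lies on the line $x + y = 10$ but violates $y \ge 0$, while $(3, 3)$ satisfies the inequalities but not the equality. A point has to pass every test to be feasible.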

Lagrange Multipliers

Introduction to Lagrange Multipliers

The core idea behind Lagrange multipliers is geometric: at a constrained extremum, the gradient of the objective function, $\nabla f$, must be parallel to the gradient of the constraint, $\nabla g$ (assuming $\nabla g \neq 0$ there). If they weren't parallel, $\nabla f$ would have a nonzero component along the constraint surface, so you could move along the constraint in a direction that still improves the objective function, meaning you haven't found the extremum yet.

The Lagrange multiplier $\lambda$ is the scalar that relates these two gradients:

$$\nabla f(x, y) = \lambda \, \nabla g(x, y)$$

This condition, combined with the constraint itself, gives you a system of equations to solve.
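The parallel-gradient condition can be sanity-checked numerically. The sketch below assumes a hypothetical problem, maximizing $f(x, y) = xy$ subject to $x + y = 10$, whose constrained maximum is at $(5, 5)$. The helper names `grad` and `cross` are made up for this illustration. Two vectors in the plane are parallel exactly when their 2D cross product is zero.

```python
def grad(func, x, y, h=1e-6):
    """Central-difference approximation of the gradient of func at (x, y)."""
    dfdx = (func(x + h, y) - func(x - h, y)) / (2 * h)
    dfdy = (func(x, y + h) - func(x, y - h)) / (2 * h)
    return dfdx, dfdy

def f(x, y):
    return x * y          # assumed objective

def g(x, y):
    return x + y          # assumed constraint function, with g(x, y) = 10

def cross(u, v):
    """2D cross product; zero when u and v are parallel."""
    return u[0] * v[1] - u[1] * v[0]

grad_f = grad(f, 5.0, 5.0)
grad_g = grad(g, 5.0, 5.0)
lam = grad_f[0] / grad_g[0]                 # scalar with grad f = lam * grad g

at_optimum = cross(grad_f, grad_g)          # close to 0: gradients are parallel
elsewhere = cross(grad(f, 2.0, 8.0), grad(g, 2.0, 8.0))  # clearly nonzero

print(lam, at_optimum, elsewhere)
```

At the feasible point $(2, 8)$ the gradients are not parallel, which matches the fact that sliding along the line toward $(5, 5)$ still increases $xy$.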

Solving Constrained Optimization Problems

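To optimize $f(x, y)$ subject to the constraint $g(x, y) = c$:

1. Form the Lagrangian function:

   $$\mathcal{L}(x, y, \lambda) = f(x, y) - \lambda \, \bigl(g(x, y) - c\bigr)$$

2. Take partial derivatives of $\mathcal{L}$ with respect to each variable and set them equal to zero:

   - $\dfrac{\partial \mathcal{L}}{\partial x} = 0$

   - $\dfrac{\partial \mathcal{L}}{\partial y} = 0$

   - $\dfrac{\partial \mathcal{L}}{\partial \lambda} = 0$ (this just recovers the constraint $g(x, y) = c$)

3. Solve the resulting system of equations simultaneously for $x$, $y$, and $\lambda$.

4. Evaluate $f$ at each critical point to determine which gives the maximum and which gives the minimum.

5. Check boundary behavior or use second-order conditions if you need to classify the critical points rigorously. The bordered Hessian matrix is the standard tool here. For two variables and one constraint, the sign of its determinant classifies the critical point: positive indicates a constrained local maximum, negative a constrained local minimum, and zero means the test is inconclusive.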
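As a worked illustration of this procedure, take the hypothetical problem of maximizing $f(x, y) = xy$ subject to $x + y = 10$. The example is chosen here for simplicity and uses the convention $\mathcal{L} = f - \lambda (g - c)$:

$$
\begin{aligned}
\mathcal{L}(x, y, \lambda) &= xy - \lambda \, (x + y - 10) \\
\frac{\partial \mathcal{L}}{\partial x} &= y - \lambda = 0 \\
\frac{\partial \mathcal{L}}{\partial y} &= x - \lambda = 0 \\
\frac{\partial \mathcal{L}}{\partial \lambda} &= -(x + y - 10) = 0
\end{aligned}
$$

The first two equations give $x = y = \lambda$, and the constraint then forces $x = y = 5$, so $\lambda = 5$ and $f(5, 5) = 25$. With $g_x = g_y = 1$, $\mathcal{L}_{xx} = \mathcal{L}_{yy} = 0$, and $\mathcal{L}_{xy} = 1$, the bordered Hessian determinant is

$$
\det \begin{pmatrix} 0 & g_x & g_y \\ g_x & \mathcal{L}_{xx} & \mathcal{L}_{xy} \\ g_y & \mathcal{L}_{xy} & \mathcal{L}_{yy} \end{pmatrix}
= \det \begin{pmatrix} 0 & 1 & 1 \\ 1 & 0 & 1 \\ 1 & 1 & 0 \end{pmatrix} = 2 > 0,
$$

which, for two variables and one constraint, indicates a constrained local maximum.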

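Note on sign convention: Some textbooks write $\mathcal{L} = f - \lambda \, (g - c)$ and others write $\mathcal{L} = f + \lambda \, (g - c)$. Both work because $\lambda$ can be positive or negative: the critical points $(x, y)$ are the same, and only the sign of $\lambda$ changes. Just be consistent with whichever your course uses.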

For problems with multiple constraints $g(x, y, z) = c_1$ and $h(x, y, z) = c_2$, you introduce a separate multiplier for each:

$$\nabla f = \lambda \, \nabla g + \mu \, \nabla h$$

The same procedure applies, but the system of equations is larger.
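Written out as a Lagrangian, and assuming the sign convention $\mathcal{L} = f - \lambda (g - c)$ is applied to each constraint, the two-constraint problem looks like this. The constants $c_1$, $c_2$, the second multiplier $\mu$, and the third variable $z$ are notation chosen here for illustration:

$$
\mathcal{L}(x, y, z, \lambda, \mu) = f(x, y, z) - \lambda \, \bigl(g(x, y, z) - c_1\bigr) - \mu \, \bigl(h(x, y, z) - c_2\bigr)
$$

Setting the partial derivatives with respect to $x$, $y$, and $z$ to zero gives $\nabla f = \lambda \nabla g + \mu \nabla h$, and the partial derivatives with respect to $\lambda$ and $\mu$ recover the two constraints. That makes five equations in the five unknowns $x$, $y$, $z$, $\lambda$, $\mu$.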

Interpreting $\lambda$

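The multiplier $\lambda$ has a concrete meaning: it measures the rate of change of the optimal value of $f$ with respect to relaxing the constraint. If your constraint is a budget $g(x, y) = c$ and $f^*(c)$ denotes the optimal value for that budget, then $\lambda = \dfrac{df^*}{dc}$ (under the convention $\nabla f = \lambda \nabla g$; the sign flips under the opposite convention). In other words, $\lambda$ tells you approximately how much the optimum improves per unit increase in budget. This interpretation shows up constantly in economics and engineering, where $\lambda$ is often called a shadow price.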
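This sensitivity reading can be checked on a small case. The sketch below assumes the hypothetical problem of maximizing $f(x, y) = xy$ subject to $x + y = c$, whose solution is $x = y = \lambda = c/2$. The function name `optimal_value` is made up for this illustration.

```python
def optimal_value(c):
    """Optimal value of f(x, y) = x * y subject to x + y = c (at x = y = c / 2)."""
    x = y = c / 2.0
    return x * y

c = 10.0
lam = c / 2.0   # multiplier from solving y = lam, x = lam, x + y = c

# Numerical derivative of the optimal value with respect to the budget c.
h = 1e-4
sensitivity = (optimal_value(c + h) - optimal_value(c - h)) / (2 * h)

# First-order prediction versus the actual gain from one extra unit of budget.
predicted_gain = lam * 1.0
actual_gain = optimal_value(c + 1.0) - optimal_value(c)

print(sensitivity)      # approximately 5, matching lam
print(predicted_gain)   # 5.0
print(actual_gain)      # 5.25
```

The prediction of 5 is close to the actual gain of 5.25 but not exact, which is why $\lambda$ is described as an approximate per-unit improvement: it is a derivative, so it is most accurate for small changes in the budget.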

Applications

Economic Applications

- Profit maximization: Maximize profit subject to production constraints like limited labor or raw materials.

- Cost minimization: Minimize a cost function subject to a required output level, e.g., producing at least a specified number of units.

- Utility maximization: A consumer maximizes $U(x, y)$ subject to a budget constraint $p_x x + p_y y = I$. This is one of the most classic Lagrange multiplier setups, and here $\lambda$ represents the marginal utility of income.

- Resource allocation: Distribute limited resources across activities to maximize total output or minimize total cost.
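To make the utility-maximization setup concrete, here is a minimal Python sketch. It assumes a Cobb-Douglas utility $U(x, y) = x^a y^{1-a}$, a functional form chosen here for illustration because the Lagrange conditions have a closed-form solution: $x^* = aI/p_x$ and $y^* = (1 - a)I/p_y$. The function name and the numbers are hypothetical.

```python
def cobb_douglas_demand(a, px, py, income):
    """Solve max x**a * y**(1 - a) subject to px * x + py * y = income.

    Returns the optimal bundle, the utility achieved, and the multiplier
    lam obtained from the first-order condition dU/dx = lam * px.
    """
    x = a * income / px
    y = (1.0 - a) * income / py
    utility = x**a * y**(1.0 - a)
    lam = a * utility / (px * x)   # marginal utility of income
    return x, y, utility, lam

# Hypothetical numbers: equal preference weights, prices 2 and 1, income 100.
x, y, utility, lam = cobb_douglas_demand(a=0.5, px=2.0, py=1.0, income=100.0)
print(x, y)                # 25.0 50.0
print(2.0 * x + 1.0 * y)   # 100.0, so the budget is spent exactly

# lam should match the change in optimal utility per extra unit of income.
h = 0.01
u_hi = cobb_douglas_demand(0.5, 2.0, 1.0, 100.0 + h)[2]
u_lo = cobb_douglas_demand(0.5, 2.0, 1.0, 100.0 - h)[2]
marginal_utility_of_income = (u_hi - u_lo) / (2 * h)
print(lam, marginal_utility_of_income)
```

The last line should print two nearly identical numbers, which is the "marginal utility of income" reading of $\lambda$ in this example.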

Other Applications

- Engineering design: Optimize parameters like weight or efficiency subject to physical constraints (strength requirements, material limits).

- Portfolio optimization: Maximize expected return for a given level of risk, or minimize risk for a target return.

- Environmental management: Minimize pollution subject to economic or regulatory constraints.

- Transportation and logistics: Minimize shipping cost or delivery time subject to capacity and demand constraints.
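As one small instance of the portfolio case, the sketch below minimizes the variance of a two-asset portfolio subject only to the weights summing to one. This is a deliberately simplified assumption: there is no target-return constraint and short positions are allowed. Setting the gradient of the variance equal to $\lambda$ times the gradient of $w_1 + w_2$ gives a linear system with a closed-form solution. The function name and the input numbers are hypothetical.

```python
def min_variance_weights(var1, var2, cov12):
    """Minimize w1^2*var1 + w2^2*var2 + 2*w1*w2*cov12 subject to w1 + w2 = 1.

    The Lagrange conditions are
        2*(w1*var1 + w2*cov12) = lam
        2*(w2*var2 + w1*cov12) = lam
    together with the constraint w1 + w2 = 1.
    """
    denom = var1 + var2 - 2.0 * cov12
    w1 = (var2 - cov12) / denom
    w2 = 1.0 - w1
    variance = w1**2 * var1 + w2**2 * var2 + 2.0 * w1 * w2 * cov12
    lam = 2.0 * (w1 * var1 + w2 * cov12)
    return w1, w2, variance, lam

# Hypothetical inputs: variances 0.04 and 0.09, covariance 0.01.
w1, w2, variance, lam = min_variance_weights(0.04, 0.09, 0.01)
print(w1, w2, variance, lam)
```

In this example most of the weight goes to the lower-variance asset, and both first-order conditions evaluate to the same $\lambda$, which is the check that the gradients are parallel.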