Gradient and Directional Derivatives

Relationship between Gradient and Directional Derivatives

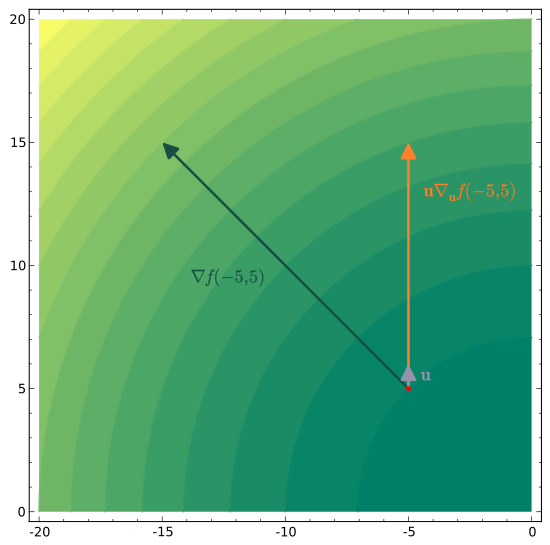

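The gradient vector $\nabla f$ points in the direction of the maximum rate of increase of a function $f$ at a given point. Its components are the partial derivatives with respect to each variable:

$$\nabla f = \left( \frac{\partial f}{\partial x}, \frac{\partial f}{\partial y}, \frac{\partial f}{\partial z} \right)$$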

One geometric fact worth remembering: the gradient is always perpendicular to the level curves (in 2D) or level surfaces (in 3D) of the function at the point where it's calculated. This makes sense if you think about it: along a level surface, $f$ doesn't change at all, so the direction of greatest change must point away from that surface, i.e., normal to it.
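The perpendicularity claim can be checked numerically. The sketch below assumes an illustrative field $f(x, y) = x^2 + 4y^2$ (not from the text) whose level curve $f = 4$ is an ellipse with a known parametrization, and takes the dot product of the gradient with the curve's tangent at several points.

```python
import math

def grad_f(x, y):
    # Exact gradient of the illustrative field f(x, y) = x**2 + 4*y**2.
    return 2 * x, 8 * y

def level_curve(t):
    # Parametrizes the level curve f = 4, an ellipse.
    return 2 * math.cos(t), math.sin(t)

def tangent(t):
    # Derivative of level_curve with respect to t.
    return -2 * math.sin(t), math.cos(t)

# Dot product of gradient and tangent at eight points on the curve.
dots = []
for k in range(8):
    t = k * math.pi / 4
    gx, gy = grad_f(*level_curve(t))
    tx, ty = tangent(t)
    dots.append(gx * tx + gy * ty)

print(dots)  # every entry should be zero up to rounding
```

Every dot product vanishes, which is the algebraic form of "the gradient is normal to the level curve".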

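The directional derivative $D_{\mathbf{u}} f$ measures the rate of change of $f$ in the direction of a unit vector $\mathbf{u}$. The core formula connecting it to the gradient is:

$$D_{\mathbf{u}} f = \nabla f \cdot \mathbf{u}$$

Because this is a dot product and $\|\mathbf{u}\| = 1$, you can also write it as:

$$D_{\mathbf{u}} f = \|\nabla f\| \cos\theta$$

where $\theta$ is the angle between $\nabla f$ and $\mathbf{u}$. This form makes the sign behavior obvious: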

- Positive when $\mathbf{u}$ has a component along the gradient direction ($0 \le \theta < \pi/2$), meaning $f$ is increasing.

- Negative when $\mathbf{u}$ points partly against the gradient ($\pi/2 < \theta \le \pi$), meaning $f$ is decreasing.

- Zero when $\mathbf{u}$ is perpendicular to the gradient ($\theta = \pi/2$), meaning you're moving along a level surface.
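The three sign cases can be seen in a short numerical sketch. The field `f`, the point $(1, 2)$, and the helper names `grad` and `directional_derivative` are assumptions made for illustration; the gradient is estimated with central differences rather than taken from any particular library.

```python
import math

def f(x, y):
    # Illustrative scalar field (an assumption, not from the text).
    return x**2 + 3 * x * y

def grad(func, x, y, h=1e-6):
    # Central-difference estimate of (df/dx, df/dy).
    fx = (func(x + h, y) - func(x - h, y)) / (2 * h)
    fy = (func(x, y + h) - func(x, y - h)) / (2 * h)
    return fx, fy

def directional_derivative(func, x, y, direction):
    # Normalize the direction so the result is a rate per unit distance.
    dx, dy = direction
    norm = math.hypot(dx, dy)
    gx, gy = grad(func, x, y)
    return gx * dx / norm + gy * dy / norm

g = grad(f, 1.0, 2.0)  # exact gradient at (1, 2) is (8, 3)
along = directional_derivative(f, 1.0, 2.0, g)                  # with the gradient
against = directional_derivative(f, 1.0, 2.0, (-g[0], -g[1]))   # against it
tangent = directional_derivative(f, 1.0, 2.0, (-g[1], g[0]))    # perpendicular

print(along, against, tangent)
```

Here `along` is positive, `against` is negative, and `tangent` is zero up to rounding, matching the three bullets above.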

Properties of Gradient and Directional Derivatives

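Since $D_{\mathbf{u}} f = \|\nabla f\| \cos\theta$, the extreme values follow directly from the range of cosine:

- Maximum directional derivative: $\|\nabla f\|$, occurring when $\mathbf{u}$ is parallel to $\nabla f$ ($\theta = 0$, so $\cos\theta = 1$).

- Minimum directional derivative: $-\|\nabla f\|$, occurring when $\mathbf{u}$ is antiparallel to $\nabla f$ ($\theta = \pi$, so $\cos\theta = -1$).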

In other words, the gradient's magnitude tells you the steepest possible rate of increase, and the gradient's direction tells you which way to go to achieve it. Moving opposite to the gradient gives the steepest decrease.
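A brute-force check of these extremes is to sweep over many unit vectors and compare the largest and smallest directional derivatives with $\pm\|\nabla f\|$. The field $f(x, y) = x^2 + 3xy$, the point $(1, 2)$, and the sampling resolution are all assumptions chosen for illustration.

```python
import math

def grad_f(x, y):
    # Exact gradient of the illustrative field f(x, y) = x**2 + 3*x*y.
    return 2 * x + 3 * y, 3 * x

gx, gy = grad_f(1.0, 2.0)
magnitude = math.hypot(gx, gy)

# Evaluate D_u f = grad f . u over 3600 evenly spaced unit vectors.
angles = [2 * math.pi * k / 3600 for k in range(3600)]
values = [gx * math.cos(a) + gy * math.sin(a) for a in angles]

best = max(range(len(angles)), key=lambda k: values[k])
largest, smallest = values[best], min(values)
best_angle = angles[best]
gradient_angle = math.atan2(gy, gx)

print(largest, smallest, magnitude)
print(best_angle, gradient_angle)
```

Up to the sampling resolution, `largest` matches `magnitude`, `smallest` matches its negative, and `best_angle` agrees with the angle of the gradient itself.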

If $f$ is differentiable at a point, then the directional derivative exists in every direction at that point and equals $\nabla f \cdot \mathbf{u}$. This is sometimes called the gradient theorem for directional derivatives, and it's what justifies using the dot product formula rather than the limit definition every time.

Differentiability and Chain Rule

Differentiability in Multivariable Functions

A function $f$ is differentiable at a point $\mathbf{a}$ if it is well approximated by a linear function near that point, that is, if $f(\mathbf{a} + \mathbf{h}) = f(\mathbf{a}) + \nabla f(\mathbf{a}) \cdot \mathbf{h} + o(\|\mathbf{h}\|)$ as $\mathbf{h} \to \mathbf{0}$. (A sufficient condition: if all partial derivatives exist and are continuous near the point, then $f$ is differentiable there.)

Differentiability is a stronger condition than just having partial derivatives. For example, a function such as $f(x, y) = \sqrt{|xy|}$ has partial derivatives at $(0, 0)$ (both equal zero), but it's not differentiable there because the function doesn't behave linearly in all directions near that point.
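One function with exactly this behavior is $f(x, y) = \sqrt{|xy|}$ at the origin; it is chosen here purely for illustration. The sketch compares what the dot product formula would predict along the diagonal with the actual one-sided difference quotient.

```python
import math

def f(x, y):
    # Illustrative function: both partials exist at the origin,
    # yet f is not differentiable there.
    return math.sqrt(abs(x * y))

h = 1e-6
# f vanishes on both axes, so both partial derivatives at (0, 0) are zero.
fx = (f(h, 0.0) - f(-h, 0.0)) / (2 * h)
fy = (f(0.0, h) - f(0.0, -h)) / (2 * h)

# If f were differentiable, the rate along u would be fx*ux + fy*uy.
ux = uy = 1 / math.sqrt(2)
predicted = fx * ux + fy * uy

# Actual one-sided rate of change when stepping along the diagonal.
observed = (f(h * ux, h * uy) - f(0.0, 0.0)) / h

print(predicted, observed)
```

The dot product predicts a rate of zero, but the observed rate along the diagonal is about $1/\sqrt{2}$, so no single linear map describes $f$ near the origin.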

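When $f$ is differentiable at a point $(x_0, y_0)$, two things follow:

- The tangent plane to the graph $z = f(x, y)$ exists at that point. Its normal vector is $(f_x, f_y, -1)$, built from the gradient's components.

- The linear approximation is valid:

$$f(x, y) \approx f(x_0, y_0) + f_x(x_0, y_0)(x - x_0) + f_y(x_0, y_0)(y - y_0)$$

This approximation estimates $f$ near the point, and setting $z$ equal to its right-hand side gives the equation of the tangent plane to the graph. For a level surface $F(x, y, z) = c$, the tangent plane at a point $\mathbf{p}_0$ is instead $\nabla F(\mathbf{p}_0) \cdot (\mathbf{p} - \mathbf{p}_0) = 0$, with the gradient itself as the normal.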
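As a small worked case, the sketch below linearizes an illustrative function, $f(x, y) = \sqrt{x^2 + y^2}$, at the point $(3, 4)$ and uses the result to estimate $f(3.02, 3.97)$. The function, the base point, and the helper name `linearization` are assumptions for illustration.

```python
import math

def f(x, y):
    # Illustrative function: distance from the origin.
    return math.sqrt(x**2 + y**2)

x0, y0 = 3.0, 4.0
f0 = f(x0, y0)              # 5.0
fx, fy = x0 / f0, y0 / f0   # exact partials at (3, 4): 0.6 and 0.8

def linearization(x, y):
    # Tangent-plane estimate of f near (x0, y0).
    return f0 + fx * (x - x0) + fy * (y - y0)

approx = linearization(3.02, 3.97)  # 5 + 0.6*0.02 - 0.8*0.03 = 4.988
exact = f(3.02, 3.97)
error = abs(exact - approx)

print(approx, exact, error)
```

The error is second order in the displacement, so it shrinks much faster than the step itself as the evaluation point approaches $(3, 4)$.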

Multivariable Chain Rule

The chain rule for multivariable functions handles composite functions where the inputs are themselves functions of other variables.

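If $z = f(x, y)$ is differentiable and $x = x(t)$, $y = y(t)$ are differentiable functions of $t$, then:

$$\frac{dz}{dt} = \frac{\partial f}{\partial x} \frac{dx}{dt} + \frac{\partial f}{\partial y} \frac{dy}{dt}$$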

This extends naturally: if $f$ depends on $n$ variables and each variable depends on $m$ parameters, you sum over all intermediate variables for each parameter. Tree diagrams can help you keep track of which paths contribute to each derivative.
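A two-parameter case makes the "sum over intermediate variables" rule concrete. The sketch assumes illustrative choices $f(x, y) = x^2 y$, $x = st$, and $y = s + t$, computes both partial derivatives with the chain rule, and compares them with finite differences of the composite function.

```python
def f(x, y):
    # Illustrative outer function.
    return x**2 * y

def x_of(s, t):
    return s * t

def y_of(s, t):
    return s + t

def chain_rule(s, t):
    # Sum over both intermediate variables for each parameter.
    x, y = x_of(s, t), y_of(s, t)
    df_dx, df_dy = 2 * x * y, x**2
    dz_ds = df_dx * t + df_dy * 1.0  # dx/ds = t, dy/ds = 1
    dz_dt = df_dx * s + df_dy * 1.0  # dx/dt = s, dy/dt = 1
    return dz_ds, dz_dt

def z(s, t):
    # Composite function z(s, t) = f(x(s, t), y(s, t)).
    return f(x_of(s, t), y_of(s, t))

s0, t0, h = 2.0, 3.0, 1e-6
dz_ds, dz_dt = chain_rule(s0, t0)
numeric_ds = (z(s0 + h, t0) - z(s0 - h, t0)) / (2 * h)
numeric_dt = (z(s0, t0 + h) - z(s0, t0 - h)) / (2 * h)

print(dz_ds, numeric_ds)
print(dz_dt, numeric_dt)
```

Each partial derivative has one term per path in the tree diagram: through $x$ and through $y$.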

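A common application is finding the rate of change of a quantity along a parametric curve. If a particle moves along $\mathbf{r}(t)$ through a scalar field $f$, then the rate of change of $f$ along the particle's path is:

$$\frac{d}{dt} f(\mathbf{r}(t)) = \nabla f(\mathbf{r}(t)) \cdot \mathbf{r}'(t)$$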

Notice this is just the directional derivative in the direction of motion, scaled by the particle's speed $\|\mathbf{r}'(t)\|$. If $\mathbf{r}'(t)$ is a unit vector, you recover the directional derivative exactly. This is one of the cleanest ways to see how the chain rule and the gradient-directional derivative relationship are really the same idea from two different angles.
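The "directional derivative times speed" reading can be verified directly. The sketch assumes an illustrative field $f(x, y) = x^2 + y^2$ and an elliptical path $\mathbf{r}(t) = (\cos t, 2 \sin t)$, neither of which comes from the text.

```python
import math

def grad_f(x, y):
    # Exact gradient of the illustrative field f(x, y) = x**2 + y**2.
    return 2 * x, 2 * y

def r(t):
    # Illustrative particle path: an ellipse.
    return math.cos(t), 2 * math.sin(t)

def r_prime(t):
    # Velocity of the particle.
    return -math.sin(t), 2 * math.cos(t)

t0 = 0.5
gx, gy = grad_f(*r(t0))
vx, vy = r_prime(t0)

rate = gx * vx + gy * vy    # d/dt f(r(t)) by the chain rule
speed = math.hypot(vx, vy)
# Directional derivative along the unit vector in the direction of motion.
directional = gx * (vx / speed) + gy * (vy / speed)

print(rate, speed * directional)
```

For this path the rate works out in closed form to $3 \sin 2t$, and the two printed numbers agree: the chain-rule rate equals the directional derivative along the direction of motion multiplied by the speed.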