The chain rule for functions of several variables extends the single-variable chain rule to composite functions where intermediate variables depend on one or more independent variables. Mastering this rule is essential for the rest of Calculus IV, since it underpins coordinate transformations, implicit differentiation in higher dimensions, and optimization with constrained variables.

Derivatives of Composite Functions

The Chain Rule and Composite Functions

In single-variable calculus, the chain rule says that if $y = f(g(x))$, then $\frac{dy}{dx} = f'(g(x))\,g'(x)$. The multivariable chain rule does the same job, but now the "inner function" can output several variables, and the "outer function" can accept several inputs. Instead of multiplying two ordinary derivatives, you sum products of partial derivatives.

A composite function arises whenever one function feeds into another. For example, suppose $z = f(x, y)$ and a path through the $xy$-plane is given by $x = x(t)$, $y = y(t)$. Then the composition $z = f(x(t), y(t))$ is a single-variable function of $t$ built from a two-variable function.

To set up the chain rule, identify two pieces:

- The outer function $f$, which takes the intermediate variables (here $x$ and $y$) as inputs.

- The inner functions (here $x(t)$ and $y(t)$), which express the intermediate variables in terms of the independent variable(s).
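In code, a composite is just one function called on the outputs of others. The following is a minimal Python sketch, assuming the hypothetical choices $f(x, y) = x^2 y$, $x(t) = \cos t$, and $y(t) = \sin t$; none of these functions come from the text, and the names `f`, `x_of_t`, `y_of_t`, and `z` are illustrative only.

```python
import math

# Hypothetical outer function f(x, y) = x**2 * y (chosen for illustration only).
def f(x: float, y: float) -> float:
    return x**2 * y

# Hypothetical inner functions: a path (x(t), y(t)) through the xy-plane.
def x_of_t(t: float) -> float:
    return math.cos(t)

def y_of_t(t: float) -> float:
    return math.sin(t)

# The composite z(t) = f(x(t), y(t)) is an ordinary single-variable function of t.
def z(t: float) -> float:
    return f(x_of_t(t), y_of_t(t))

print(z(0.0))          # cos(0)**2 * sin(0) = 0
print(z(math.pi / 4))  # about 0.3536, i.e. sqrt(2) / 4
```

Calling `z(t)` hides the intermediate variables entirely; the chain rule is what recovers $dz/dt$ from the derivatives of the separate pieces.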

Partial Derivatives and the Total Derivative

Partial derivatives measure how $z$ changes when you vary one intermediate variable while freezing the others. For $z = f(x, y)$:

- $\frac{\partial z}{\partial x}$ is the rate of change of $z$ with respect to $x$, holding $y$ constant.

- $\frac{\partial z}{\partial y}$ is the rate of change of $z$ with respect to $y$, holding $x$ constant.
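"Holding the other variable constant" translates directly into a numerical estimate: nudge one input, leave the other alone. This is a minimal sketch using central differences, assuming the hypothetical $f(x, y) = x^2 y$ (exact partials $2xy$ and $x^2$); the helper names `partial_x` and `partial_y` and the step size `h` are illustrative choices, not from the text.

```python
def partial_x(f, x, y, h=1e-6):
    """Central-difference estimate of df/dx at (x, y); y is held fixed."""
    return (f(x + h, y) - f(x - h, y)) / (2 * h)

def partial_y(f, x, y, h=1e-6):
    """Central-difference estimate of df/dy at (x, y); x is held fixed."""
    return (f(x, y + h) - f(x, y - h)) / (2 * h)

def f(x, y):
    return x**2 * y  # hypothetical example; exact partials are 2xy and x**2

print(partial_x(f, 1.0, 2.0))  # close to 2 * 1 * 2 = 4
print(partial_y(f, 1.0, 2.0))  # close to 1**2 = 1
```

Central differences are used here because their truncation error shrinks like $h^2$, which is more accurate than a one-sided difference for the same step.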

The total differential combines these into a single expression that captures how $z$ changes when all variables move at once:

$$dz = \frac{\partial z}{\partial x}\,dx + \frac{\partial z}{\partial y}\,dy$$

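The multivariable chain rule follows directly. If $x$ and $y$ are both functions of a single variable $t$, divide the total differential by $dt$:

$$\frac{dz}{dt} = \frac{\partial z}{\partial x}\frac{dx}{dt} + \frac{\partial z}{\partial y}\frac{dy}{dt}$$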

Each term pairs a partial derivative of the outer function with the ordinary derivative of the corresponding inner function. You then sum over every intermediate variable.
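A quick numerical check of the total differential, assuming the hypothetical $f(x, y) = x^2 y$ at the point $(1, 2)$: the prediction $f_x\,dx + f_y\,dy$ misses the true change by an amount that shrinks faster than the step itself. That is what justifies dividing by $dt$ and passing to the limit. The function names below are illustrative.

```python
# Hypothetical example: f(x, y) = x**2 * y with its exact partial derivatives.
def f(x, y):
    return x**2 * y

def f_x(x, y):
    return 2 * x * y      # partial derivative with respect to x

def f_y(x, y):
    return x**2           # partial derivative with respect to y

def differential_error(x, y, dx, dy):
    """Actual change in f minus the change predicted by dz = f_x dx + f_y dy."""
    actual = f(x + dx, y + dy) - f(x, y)
    predicted = f_x(x, y) * dx + f_y(x, y) * dy
    return actual - predicted

# Move from (1, 2) by (s, -s) for shrinking s; the error shrinks faster than s.
for s in (1e-1, 1e-2, 1e-3):
    err = differential_error(1.0, 2.0, s, -s)
    print(s, err, err / s)
```

For this particular $f$ and direction, the error works out to exactly $-s^3$ in exact arithmetic, so `err / s` behaves like $-s^2$ and tends to zero.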

Worked example. Let with and .

- Compute the partial derivatives of : , .

- Compute the derivatives of the inner functions: , .

- Apply the chain rule: .

- Substitute , : .

You can verify this by differentiating directly.
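As a further illustration, take the hypothetical choice $z = x^2 y$ with $x = \cos t$ and $y = \sin t$ (these functions are assumptions for this sketch, not the example above). The sketch evaluates the chain-rule formula term by term and compares it with differentiating $z(t) = \cos^2 t \,\sin t$ directly by the product rule; the two must agree for every $t$.

```python
import math

# Hypothetical example: z = x**2 * y, x = cos t, y = sin t.
def dz_dt_chain_rule(t):
    x, y = math.cos(t), math.sin(t)
    dz_dx = 2 * x * y          # partial of x**2 * y with respect to x
    dz_dy = x**2               # partial of x**2 * y with respect to y
    dx_dt = -math.sin(t)       # derivative of the inner function x(t)
    dy_dt = math.cos(t)        # derivative of the inner function y(t)
    return dz_dx * dx_dt + dz_dy * dy_dt

def dz_dt_direct(t):
    # Substitute first: z(t) = cos(t)**2 * sin(t); the product rule gives
    # -2 cos(t) sin(t)**2 + cos(t)**3.
    return -2 * math.cos(t) * math.sin(t)**2 + math.cos(t)**3

for t in (0.0, math.pi / 4, 1.0):
    print(t, dz_dt_chain_rule(t), dz_dt_direct(t))
```

Both routes give $-2\cos t\,\sin^2 t + \cos^3 t$; at $t = 0$ the derivative is $1$.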

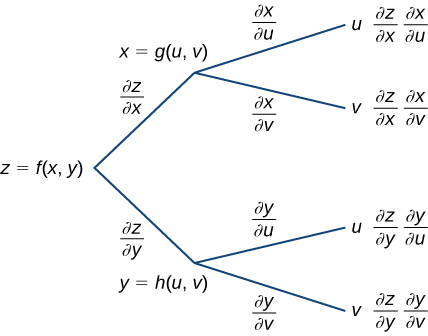

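When the intermediate variables depend on two (or more) independent variables, you get partial derivatives on both sides. If $z = f(x, y)$ with $x = x(s, t)$ and $y = y(s, t)$, then:

$$\frac{\partial z}{\partial s} = \frac{\partial z}{\partial x}\frac{\partial x}{\partial s} + \frac{\partial z}{\partial y}\frac{\partial y}{\partial s}, \qquad \frac{\partial z}{\partial t} = \frac{\partial z}{\partial x}\frac{\partial x}{\partial t} + \frac{\partial z}{\partial y}\frac{\partial y}{\partial t}$$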

The pattern is the same: for each independent variable, sum over all intermediate variables.
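Polar coordinates make a convenient test of this pattern, because the answer is known in advance. This is a minimal sketch assuming the hypothetical outer function $z = x^2 + y^2$ with inner functions $x = r\cos\theta$, $y = r\sin\theta$; substituting gives $z = r^2$, so the chain rule should return $\partial z/\partial r = 2r$ and $\partial z/\partial \theta = 0$. The function name is illustrative.

```python
import math

# Hypothetical example: z = x**2 + y**2 with x = r cos(theta), y = r sin(theta).
def chain_rule_polar(r, theta):
    x, y = r * math.cos(theta), r * math.sin(theta)
    dz_dx, dz_dy = 2 * x, 2 * y                # partials of the outer function
    # Partial derivatives of the inner functions.
    dx_dr, dy_dr = math.cos(theta), math.sin(theta)
    dx_dtheta, dy_dtheta = -r * math.sin(theta), r * math.cos(theta)
    # For each independent variable, sum over the intermediate variables x and y.
    dz_dr = dz_dx * dx_dr + dz_dy * dy_dr
    dz_dtheta = dz_dx * dx_dtheta + dz_dy * dy_dtheta
    return dz_dr, dz_dtheta

print(chain_rule_polar(3.0, 0.7))  # approximately (6.0, 0.0), since z = r**2
```

The $\theta$-derivative vanishes only after the two terms cancel, which is a useful reminder that every intermediate variable must appear in each sum.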

Multivariable Calculus Fundamentals

Multivariable Functions and Differentiability

The chain rule formula above requires that $f$ be differentiable at the point in question (and that the inner functions be differentiable as well). A convenient sufficient condition: if the partial derivatives of $f$ exist and are continuous in a neighborhood of a point, then $f$ is differentiable at that point. (Having partial derivatives exist at a single point is not enough on its own.)

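Differentiability means that $f$ can be well-approximated by a linear function near a point $(a, b)$:

$$f(x, y) \approx f(a, b) + \frac{\partial f}{\partial x}(a, b)\,(x - a) + \frac{\partial f}{\partial y}(a, b)\,(y - b),$$

in the sense that the error, divided by the distance $\sqrt{(x - a)^2 + (y - b)^2}$, tends to $0$ as $(x, y) \to (a, b)$.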

This linear approximation is exactly the total differential, and it's what makes the chain rule valid. If $f$ is not differentiable, the chain rule formula can fail even when the partial derivatives exist.
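A standard counterexample shows the failure concretely. The sketch below uses $f(x, y) = \dfrac{x^2 y}{x^2 + y^2}$ with $f(0, 0) = 0$ (a textbook choice, not taken from the text). Both partial derivatives exist at the origin and equal $0$, yet along the path $x = t$, $y = t$ we get $f(t, t) = t/2$, whose derivative is $\tfrac12$, not the $0$ the chain rule formula would predict. The step `h` is an illustrative choice.

```python
def f(x, y):
    # Continuous at the origin, with both partials existing there,
    # but not differentiable at the origin.
    if x == 0 and y == 0:
        return 0.0
    return x**2 * y / (x**2 + y**2)

h = 1e-6
# Partial derivatives at the origin from the definition: f(h, 0) = f(0, h) = 0.
f_x0 = (f(h, 0.0) - f(0.0, 0.0)) / h
f_y0 = (f(0.0, h) - f(0.0, 0.0)) / h

# Path x = t, y = t passes through the origin with x'(0) = y'(0) = 1.
chain_rule_prediction = f_x0 * 1 + f_y0 * 1
actual_rate = (f(h, h) - f(0.0, 0.0)) / h   # f(t, t) = t / 2, so the rate is 1/2

print(chain_rule_prediction, actual_rate)
```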

Gradient Vector and Jacobian Matrix

The gradient vector packages all partial derivatives of a scalar-valued function into one object:

$$\nabla f(x, y) = \left( \frac{\partial f}{\partial x}, \frac{\partial f}{\partial y} \right)$$

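At any point where $\nabla f \neq \mathbf{0}$, it points in the direction of greatest increase of $f$, and its magnitude $\lVert \nabla f \rVert$ is the rate of increase in that direction. The chain rule for a scalar function composed with a path $\mathbf{r}(t) = (x(t), y(t))$ can be written compactly as $\frac{d}{dt} f(\mathbf{r}(t)) = \nabla f(\mathbf{r}(t)) \cdot \mathbf{r}'(t)$.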
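The dot-product form is easy to check by hand. This minimal sketch assumes the hypothetical $f(x, y) = xy$ and path $\mathbf{r}(t) = (t, t^2)$, so that $f(\mathbf{r}(t)) = t^3$ and the rate along the path should be $3t^2$. The helper names are illustrative.

```python
# Hypothetical example: f(x, y) = x * y along the path r(t) = (t, t**2).
def grad_f(x, y):
    return (y, x)                 # (df/dx, df/dy) for f = x * y

def r(t):
    return (t, t**2)

def r_prime(t):
    return (1.0, 2 * t)           # velocity vector of the path

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def rate_along_path(t):
    """d/dt f(r(t)) computed as grad f(r(t)) . r'(t)."""
    return dot(grad_f(*r(t)), r_prime(t))

# Direct check: f(r(t)) = t**3, whose derivative is 3 t**2.
print(rate_along_path(2.0), 3 * 2.0**2)
```

Note that the gradient is evaluated at the point $\mathbf{r}(t)$ on the path, not at $t$ itself.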

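When the outer function is vector-valued, the gradient generalizes to the Jacobian matrix. For $\mathbf{F} = (F_1, \dots, F_m) : \mathbb{R}^n \to \mathbb{R}^m$, the Jacobian is the $m \times n$ matrix whose $(i, j)$-entry is $\partial F_i / \partial x_j$:

$$J_{\mathbf{F}} = \begin{pmatrix} \dfrac{\partial F_1}{\partial x_1} & \cdots & \dfrac{\partial F_1}{\partial x_n} \\ \vdots & \ddots & \vdots \\ \dfrac{\partial F_m}{\partial x_1} & \cdots & \dfrac{\partial F_m}{\partial x_n} \end{pmatrix}$$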
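Each column of the Jacobian records how all outputs respond to one input, which suggests a column-by-column numerical estimate. This is a minimal sketch using central differences; the helper `jacobian`, the step `h`, and the test map (the hypothetical polar-coordinate map $(r, \theta) \mapsto (r\cos\theta, r\sin\theta)$) are illustrative assumptions.

```python
import math

def jacobian(F, u, h=1e-6):
    """Central-difference Jacobian of F: R^n -> R^m at the point u.
    Entry [i][j] approximates dF_i / du_j."""
    n = len(u)
    m = len(F(u))
    J = [[0.0] * n for _ in range(m)]
    for j in range(n):
        up = list(u)
        up[j] += h                   # nudge only input j upward
        down = list(u)
        down[j] -= h                 # and downward
        F_up, F_down = F(up), F(down)
        for i in range(m):
            J[i][j] = (F_up[i] - F_down[i]) / (2 * h)
    return J

# Hypothetical example: the polar-coordinate map (r, theta) -> (x, y).
def polar(u):
    r, theta = u
    return [r * math.cos(theta), r * math.sin(theta)]

print(jacobian(polar, [2.0, 0.5]))
# Exact Jacobian: [[cos(theta), -r sin(theta)], [sin(theta), r cos(theta)]]
```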

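The most general form of the multivariable chain rule is then matrix multiplication of Jacobians. If $\mathbf{G}$ maps $\mathbb{R}^k \to \mathbb{R}^n$ and $\mathbf{F}$ maps $\mathbb{R}^n \to \mathbb{R}^m$, both differentiable, then (writing $J_{\mathbf{H}}$ for the Jacobian of a map $\mathbf{H}$):

$$J_{\mathbf{F} \circ \mathbf{G}}(\mathbf{u}) = J_{\mathbf{F}}(\mathbf{G}(\mathbf{u}))\, J_{\mathbf{G}}(\mathbf{u}),$$

the product of an $m \times n$ matrix and an $n \times k$ matrix, which is an $m \times k$ matrix as it should be.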

Every version of the chain rule you'll encounter in this course (scalar with one parameter, scalar with several parameters, vector-valued compositions) is a special case of this single matrix equation. When you're unsure how to set up a chain rule problem, write out the Jacobians and multiply them, with the outer Jacobian on the left and evaluated at the output of the inner function; as long as both functions are differentiable, this gives the correct answer.
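The matrix form can be checked against a composite differentiated by hand. This minimal sketch assumes a hypothetical inner map $\mathbf{G}(r, \theta) = (r\cos\theta, r\sin\theta)$ and outer map $\mathbf{F}(x, y) = (x^2, xy)$, so that $\mathbf{F}(\mathbf{G}(r, \theta)) = (r^2\cos^2\theta,\ r^2\cos\theta\sin\theta)$. The helper names (`matmul`, `J_F`, `J_G`, and so on) are illustrative, and matrices are plain lists of rows.

```python
import math

def matmul(A, B):
    """Multiply matrices stored as lists of rows (A is m x n, B is n x k)."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

# Hypothetical inner map G: (r, theta) -> (x, y), polar coordinates.
def G(r, theta):
    return r * math.cos(theta), r * math.sin(theta)

def J_G(r, theta):
    return [[math.cos(theta), -r * math.sin(theta)],
            [math.sin(theta),  r * math.cos(theta)]]

# Hypothetical outer map F: (x, y) -> (x**2, x*y) and its Jacobian.
def J_F(x, y):
    return [[2 * x, 0.0],
            [y,     x]]

def J_composite_chain_rule(r, theta):
    # Evaluate J_F at G(r, theta), not at (r, theta), and keep J_F on the left.
    return matmul(J_F(*G(r, theta)), J_G(r, theta))

def J_composite_direct(r, theta):
    # F(G(r, theta)) = (r**2 cos^2 theta, r**2 cos theta sin theta),
    # differentiated by hand with respect to r and theta.
    c, s = math.cos(theta), math.sin(theta)
    return [[2 * r * c**2, -2 * r**2 * c * s],
            [2 * r * c * s, r**2 * (c**2 - s**2)]]

print(J_composite_chain_rule(1.5, 0.3))
print(J_composite_direct(1.5, 0.3))
```

Swapping the order of the factors, or evaluating $J_{\mathbf{F}}$ at $(r, \theta)$ instead of at $\mathbf{G}(r, \theta)$, generally gives a wrong answer, which is why the sketch keeps both details explicit.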