Optimization Problem Components

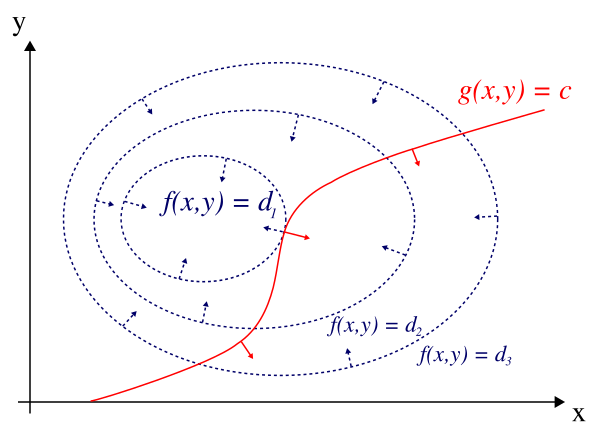

Constrained optimization is about finding the maximum or minimum of a function when your variables aren't free to take on any values they want. In Calculus IV, this means optimizing a multivariable function subject to one or more constraint equations that restrict which points you're allowed to consider.

Every constrained optimization problem has three parts:

- Objective function: the quantity you're trying to maximize or minimize (cost, distance, temperature, etc.)

- Constraint equation(s): the restrictions your variables must satisfy (a fixed budget, a surface the point must lie on, a relationship between variables)

- Feasible set: the collection of all points that satisfy every constraint simultaneously

The feasible set is where you're allowed to search. Your optimal solution must live there.
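The three parts can be sketched in a few lines of Python. This is only an illustration: the objective $f(x, y) = x + y$, the unit-circle constraint, and the helper names `objective`, `constraint`, and `is_feasible` are all assumptions chosen for the example.

```python
import math

def objective(x, y):
    """Quantity to optimize; f(x, y) = x + y is an illustrative choice."""
    return x + y

def constraint(x, y):
    """Left side of g(x, y) = 0; here the unit circle x^2 + y^2 - 1 = 0 (assumed)."""
    return x**2 + y**2 - 1.0

def is_feasible(x, y, tol=1e-9):
    """A point is in the feasible set when the constraint holds, up to rounding."""
    return abs(constraint(x, y)) <= tol

on_circle = (math.cos(0.3), math.sin(0.3))
off_circle = (0.5, 0.5)
print(is_feasible(*on_circle))   # True
print(is_feasible(*off_circle))  # False
```

Only points that pass `is_feasible` are worth plugging into `objective`.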

Constraint Classifications

Equality constraints force the solution to lie exactly on some surface or curve. For example, $x^2 + y^2 = 1$ restricts you to the unit circle. These are the constraints Lagrange multipliers are designed to handle.

Inequality constraints (like $x^2 + y^2 \le 1$) allow a range of values. Lagrange multipliers apply at the boundary of an inequality constraint, but if the optimum happens to fall in the interior, the constraint doesn't come into play. In this unit, you'll mostly work with equality constraints.
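To see the interior-versus-boundary distinction in action, here is a minimal sketch under assumed specifics: minimize the squared distance $(x - a)^2 + (y - b)^2$ to a target point $(a, b)$ subject to the disk constraint $x^2 + y^2 \le 1$. The problem and the function name `closest_point_in_unit_disk` are illustrative choices, not part of the unit.

```python
import math

def closest_point_in_unit_disk(a, b):
    """Minimize (x - a)^2 + (y - b)^2 subject to x^2 + y^2 <= 1.

    Returns the minimizer and where it sits relative to the constraint.
    """
    r = math.hypot(a, b)
    if r <= 1.0:
        # The unconstrained minimizer (a, b) is already feasible,
        # so the constraint does not affect the answer.
        return (a, b), "interior or on the circle"
    # Otherwise the minimizer is pushed onto the boundary circle.
    return (a / r, b / r), "boundary"

print(closest_point_in_unit_disk(0.3, 0.4))  # target inside the disk
print(closest_point_in_unit_disk(3.0, 4.0))  # target outside the disk
```

When the target lies outside the disk, the optimum lands on the circle, which is exactly the situation where the equality-constraint machinery applies.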

Binding and Non-Binding Constraints

When a problem has multiple constraints, not all of them necessarily affect the answer.

- A binding constraint is one where the optimal solution sits exactly on the constraint boundary. Remove it, and the solution would change. For instance, if you're maximizing $f(x, y)$ subject to $g_1(x, y) \le c_1$ and $g_2(x, y) \le c_2$, and the optimum satisfies both with equality, both constraints are binding.

- A non-binding constraint is automatically satisfied at the optimum without restricting it. The solution would be the same even if you dropped that constraint entirely.

To check whether a constraint is binding, evaluate it at the optimal point. If it holds with exact equality, it's binding. If there's slack (strict inequality), it's non-binding.

For Lagrange multiplier problems with only equality constraints, every constraint is binding by definition, since the solution must satisfy each $g(x, y) = c$ exactly.
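The slack test can be sketched as follows. The problem here is an assumption made up for illustration: maximize $f(x, y) = x + y$ subject to $x^2 + y^2 \le 1$ and $x \le 5$, whose maximizer is $(1/\sqrt{2},\, 1/\sqrt{2})$. The helper names `slack` and `classify` are hypothetical.

```python
import math

def slack(lhs_value, bound):
    """Slack of a constraint written as lhs <= bound; zero means no room left."""
    return bound - lhs_value

def classify(lhs_value, bound, tol=1e-9):
    """Label a '<=' constraint at a feasible point using the equality test."""
    return "binding" if abs(slack(lhs_value, bound)) <= tol else "non-binding"

# Assumed optimum of the illustrative problem described above.
x_opt = y_opt = 1 / math.sqrt(2)

report = {
    "x^2 + y^2 <= 1": classify(x_opt**2 + y_opt**2, 1.0),
    "x <= 5": classify(x_opt, 5.0),
}
print(report)
```

The disk constraint holds with equality at the optimum, so it is binding; the constraint $x \le 5$ has slack of roughly 4.29 and could be dropped without changing the answer.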

Local and Global Extrema

Just like in unconstrained optimization, constrained problems can have multiple local extrema, and you need to distinguish them from the global extremum.

- Local extrema: points where $f$ is the largest or smallest compared to nearby feasible points. A local max on a constraint curve means $f$ is larger there than at neighboring points along the curve.

- Global extrema: the absolute largest or smallest value of $f$ over the entire feasible set.

A constrained problem can have several local maxima along a constraint curve, but only one (or possibly a tie) will be the global maximum.
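A quick numerical experiment makes the distinction concrete. The sketch below assumes an illustrative objective $f(x, y) = x^2 y$ on the unit circle, parametrized as $(\cos t, \sin t)$, and samples it on a grid; the grid size and variable names are arbitrary choices, and a grid search only approximates the true extrema.

```python
import math

def f_on_circle(t):
    """f(x, y) = x^2 * y evaluated at the point (cos t, sin t) of the unit circle."""
    x, y = math.cos(t), math.sin(t)
    return x * x * y

N = 720  # number of sample points around the circle
ts = [2 * math.pi * i / N for i in range(N)]
vals = [f_on_circle(t) for t in ts]

# A sample is a grid local maximum if it beats both neighbors along the curve.
# vals[i - 1] wraps around at i = 0 because the circle is a closed curve.
local_max_idx = [
    i for i in range(N)
    if vals[i] > vals[i - 1] and vals[i] > vals[(i + 1) % N]
]
local_maxima = [(ts[i], vals[i]) for i in local_max_idx]
global_max = max(v for _, v in local_maxima)

for t, v in local_maxima:
    print(f"t = {t:.4f}, f = {v:.4f}")
print(f"global max is about {global_max:.4f}")
```

For this objective the search finds three local maxima: two that tie at roughly $2/(3\sqrt{3}) \approx 0.385$, and one at the bottom of the circle where $f \approx 0$. Only the tied pair gives the global maximum.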

Finding Optimal Solutions

Here's the general process before introducing Lagrange multipliers formally:

- Write down the objective function $f(x, y)$ and all constraint equations $g(x, y) = c$.

- Determine the feasible set by understanding what the constraints look like geometrically (a curve in $\mathbb{R}^2$, a surface in $\mathbb{R}^3$, etc.).

- Find candidate points. In earlier courses, you might substitute the constraint into $f$ to reduce variables, then take derivatives and set them to zero. Lagrange multipliers (covered next in 8.2) give you a more powerful and systematic approach: solve $\nabla f = \lambda \nabla g$ together with $g = c$.

- Evaluate $f$ at every candidate point, and also check any boundary points or endpoints if the feasible set has them (for example, when the constraint curve is a segment rather than a closed loop).

- Compare values to identify which candidate gives the global max or min.
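As a preview of how these steps fit together, here is a short worked example. The problem is an assumption chosen for illustration: find the extrema of $f(x, y) = xy$ on the unit circle.

```latex
\begin{aligned}
&\text{Objective: } f(x, y) = xy, \qquad \text{constraint: } g(x, y) = x^2 + y^2 = 1. \\[4pt]
&\nabla f = \lambda \nabla g \;\Longrightarrow\; y = 2\lambda x, \quad x = 2\lambda y
  \;\Longrightarrow\; y = 4\lambda^2 y. \\[4pt]
&\text{If } y = 0 \text{ then } x = 2\lambda y = 0, \text{ which violates } x^2 + y^2 = 1,
  \text{ so } y \neq 0 \text{ and } \lambda = \pm\tfrac{1}{2}. \\[4pt]
&\lambda = \tfrac{1}{2}: \; y = x, \; 2x^2 = 1
  \;\Longrightarrow\; \left(\tfrac{1}{\sqrt{2}}, \tfrac{1}{\sqrt{2}}\right),\;
  \left(-\tfrac{1}{\sqrt{2}}, -\tfrac{1}{\sqrt{2}}\right), \quad f = \tfrac{1}{2}. \\[4pt]
&\lambda = -\tfrac{1}{2}: \; y = -x, \; 2x^2 = 1
  \;\Longrightarrow\; \left(\tfrac{1}{\sqrt{2}}, -\tfrac{1}{\sqrt{2}}\right),\;
  \left(-\tfrac{1}{\sqrt{2}}, \tfrac{1}{\sqrt{2}}\right), \quad f = -\tfrac{1}{2}. \\[4pt]
&\text{Comparing values: global max } \tfrac{1}{2}, \text{ global min } -\tfrac{1}{2}.
\end{aligned}
```

The circle is a closed curve with no endpoints, so there is no separate boundary check in this case; the four candidates are the whole list.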

A common mistake is stopping after finding one critical point. Always check all candidates. When the objective is continuous and the feasible set is closed and bounded, the Extreme Value Theorem guarantees that a global max and min exist, so keep looking until you've found them both.
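The sketch below shows why stopping early is risky, using an assumed example: $f(x, y) = x^2 + 2y^2$ on the unit circle, written as $g(x, y) = x^2 + y^2 - 1 = 0$. The candidate list and the helper `is_candidate` are illustrative; the check confirms each point is feasible and that $\nabla f$ is parallel to $\nabla g$ there.

```python
def f(x, y):
    return x**2 + 2 * y**2

def grad_f(x, y):
    return (2 * x, 4 * y)

def g(x, y):
    return x**2 + y**2 - 1.0

def grad_g(x, y):
    return (2 * x, 2 * y)

def is_candidate(x, y, tol=1e-9):
    """Feasible, and grad f parallel to grad g (their 2D cross product is zero)."""
    fx, fy = grad_f(x, y)
    gx, gy = grad_g(x, y)
    return abs(g(x, y)) <= tol and abs(fx * gy - fy * gx) <= tol

candidates = [(1.0, 0.0), (-1.0, 0.0), (0.0, 1.0), (0.0, -1.0)]
values = {p: f(*p) for p in candidates if is_candidate(*p)}

global_min = min(values.values())
global_max = max(values.values())
minimizers = [p for p, v in values.items() if v == global_min]
maximizers = [p for p, v in values.items() if v == global_max]

print(global_min, minimizers)  # 1.0 [(1.0, 0.0), (-1.0, 0.0)]
print(global_max, maximizers)  # 2.0 [(0.0, 1.0), (0.0, -1.0)]
```

Someone who stopped at the first candidate, $(1, 0)$, would report $f = 1$ and never see that the maximum value is $2$.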