Search Engine Fundamentals

Search engines are how most people find information online. They use automated systems to discover, organize, and rank billions of web pages so you can get useful results in a fraction of a second. Understanding how they work helps you search more effectively and think critically about the results you see.

Principles of Search Engines

Search engines operate through four main stages: crawling, indexing, ranking, and query processing.

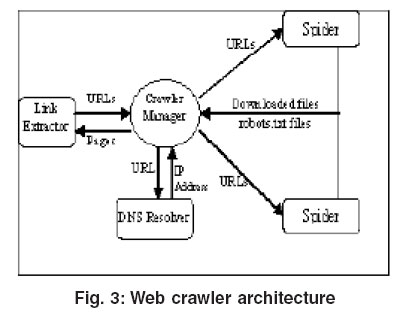

Web crawling is the discovery phase. Automated programs called web crawlers (or spiders) follow hyperlinks from page to page across the web, collecting data as they go. They regularly revisit pages to pick up changes, new content, or broken links.
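The link-following idea can be sketched as a breadth-first traversal. This is only an illustration under simplifying assumptions: the "web" here is a hypothetical in-memory dictionary (`TOY_WEB`), and `fetch_links` stands in for downloading a page and extracting its hyperlinks. Real crawlers also handle politeness rules, revisit scheduling, and errors, none of which is shown.

```python
from collections import deque

# Hypothetical in-memory "web": each URL maps to the links found on that page.
TOY_WEB = {
    "a.example/": ["a.example/about", "b.example/"],
    "a.example/about": ["a.example/"],
    "b.example/": ["c.example/", "a.example/about"],
    "c.example/": [],
}

def crawl(seed, fetch_links, max_pages=100):
    """Breadth-first crawl: visit each reachable page once, in discovery order."""
    visited = []             # pages in the order they were crawled
    seen = {seed}            # pages already queued, so none is queued twice
    frontier = deque([seed])
    while frontier and len(visited) < max_pages:
        url = frontier.popleft()
        visited.append(url)
        for link in fetch_links(url):
            if link not in seen:
                seen.add(link)
                frontier.append(link)
    return visited

# fetch_links is an assumed callback; here it simply reads the toy dictionary.
order = crawl("a.example/", lambda url: TOY_WEB.get(url, []))
print(order)
```

The `seen` set is what keeps the crawler from looping forever when pages link back to each other.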

Indexing is where that collected data gets organized. The search engine extracts key information from each page, including keywords, titles, and metadata, then stores it all in a massive searchable database. A core structure here is the inverted index, which maps every word to the list of pages containing it. This is what makes retrieval so fast: the engine looks up each query word directly in the index instead of scanning every page at search time.
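A minimal sketch of an inverted index, assuming a tiny hypothetical collection (`PAGES`) and a deliberately simple tokenizer that lowercases text and keeps only letters and digits. Production indexes also store positions, frequencies, and metadata.

```python
import re
from collections import defaultdict

# Hypothetical sample pages, used only for illustration.
PAGES = {
    "page1": "Search engines crawl the web",
    "page2": "Engines rank pages by relevance",
    "page3": "Crawlers follow links across the web",
}

def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def build_inverted_index(pages):
    """Map each word to the set of page IDs that contain it."""
    index = defaultdict(set)
    for page_id, text in pages.items():
        for word in tokenize(text):
            index[word].add(page_id)
    return index

index = build_inverted_index(PAGES)
print(sorted(index["web"]))      # ['page1', 'page3']
print(sorted(index["engines"]))  # ['page1', 'page2']
```

Looking up a word is a single dictionary access, regardless of how many pages were indexed.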

Ranking algorithms determine which pages appear first in your results. Several factors go into this:

- PageRank evaluates a page's importance based on the quality and quantity of other pages linking to it. A link from a well-respected site counts more than a link from an obscure one.

- Keyword relevance measures how closely the page's content matches your search terms.

- Content quality assesses whether the page is well-written, original, and authoritative.

- User engagement tracks signals like click-through rates and how long people stay on the page.
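To make the link-based factor concrete, here is a sketch of the classic PageRank power iteration on a hypothetical four-page link graph (`LINKS`). The damping factor of 0.85 and the fixed iteration count are assumptions chosen for illustration, and the sketch assumes every link target also appears as a key in the graph. Modern engines combine many signals, so this should not be read as how any particular engine ranks pages.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Approximate PageRank scores by repeated redistribution of rank."""
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}  # start with equal rank
    for _ in range(iterations):
        # Every page receives a small baseline share each round.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outlinks in links.items():
            # A page with no outgoing links spreads its rank over all pages.
            targets = outlinks if outlinks else pages
            share = damping * rank[page] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank

# Hypothetical link graph: page -> pages it links to.
LINKS = {
    "A": ["B", "C"],
    "B": ["C"],
    "C": ["A"],
    "D": ["C"],
}

ranks = pagerank(LINKS)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(page, round(score, 3))
```

In this toy graph, page C collects links from three other pages and ends up with the highest score, while D, which nothing links to, ends up with the lowest.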

Query processing is what happens the moment you hit "search." The engine interprets your query using natural language processing techniques, matches your terms against the index, and returns results ranked by relevance and importance.
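As a rough illustration of matching and ordering, the sketch below normalizes a query and scores documents with TF-IDF, a classic textbook weighting scheme substituted here for the much richer language processing real engines apply. The documents (`DOCS`), the `search` function, and the smoothing term in the IDF formula are all assumptions made for this example.

```python
import math
import re
from collections import Counter

# Hypothetical documents, used only for illustration.
DOCS = {
    "d1": "climate policy and climate science",
    "d2": "energy policy debate",
    "d3": "science of baking bread",
}

def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def search(query, docs):
    """Return IDs of documents matching any query term, best score first."""
    counts = {doc_id: Counter(tokenize(text)) for doc_id, text in docs.items()}
    scores = {}
    for term in tokenize(query):
        doc_freq = sum(1 for c in counts.values() if term in c)
        if doc_freq == 0:
            continue  # term appears nowhere, so it cannot affect the ranking
        # Rarer terms get a higher weight; the +1.0 keeps the weight positive.
        idf = math.log(len(docs) / doc_freq) + 1.0
        for doc_id, c in counts.items():
            if term in c:
                scores[doc_id] = scores.get(doc_id, 0.0) + c[term] * idf
    # Sort by descending score, breaking ties by document ID.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))

results = search("Climate policy", DOCS)
print(results)  # ['d1', 'd2']
```

Document d1 ranks first because it contains both query terms and mentions the rarer term, "climate", twice.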

Evaluation of Search Results

How do you measure whether a search engine is doing a good job? Researchers use several metrics:

- Precision is the proportion of your results that are actually relevant. If you get 10 results and 8 are useful, precision is 8/10, or 0.8.

- Recall is the proportion of all relevant pages in existence that the engine actually retrieved. High recall means it's not missing important results.

- F1 score is the harmonic mean of precision and recall: F1 = 2 × precision × recall / (precision + recall). It balances the two, since optimizing for one often hurts the other. A search engine that returns every page on the internet would have perfect recall but terrible precision.

- User satisfaction looks at whether the top-ranked results actually meet the searcher's needs. Click-through rates and time spent on pages are common proxies for this, since satisfaction itself can't be observed directly.

- Freshness refers to how up-to-date the results are. For breaking news or rapidly changing topics, this depends on how frequently the engine re-crawls and re-indexes pages.
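The first three metrics in this list can be computed directly once you know which results were retrieved and which pages are relevant. The sketch below uses made-up document IDs and assumes, purely for illustration, that 16 relevant pages exist in total and that 8 of them appear among the 10 retrieved results.

```python
def precision_recall_f1(retrieved, relevant):
    """Compute precision, recall, and F1 from two collections of page IDs."""
    retrieved, relevant = set(retrieved), set(relevant)
    hits = len(retrieved & relevant)  # relevant pages that were retrieved
    precision = hits / len(retrieved) if retrieved else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# Hypothetical data: results doc1..doc10 were retrieved.
retrieved = [f"doc{i}" for i in range(1, 11)]
# Relevant pages: doc1..doc8 (retrieved) plus doc11..doc18 (missed).
relevant = [f"doc{i}" for i in range(1, 9)] + [f"doc{i}" for i in range(11, 19)]

precision, recall, f1 = precision_recall_f1(retrieved, relevant)
print(round(precision, 3), round(recall, 3), round(f1, 3))  # 0.8 0.5 0.615
```

Here precision is high because most results are useful, but recall is only 0.5 because half of the relevant pages were never returned, and F1 lands between the two.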

Advanced Search and Ethical Considerations

Advanced Search Techniques

Most people type a few words into a search bar and hope for the best. These techniques give you much more control over your results.

Boolean operators let you combine terms logically:

- AND requires all terms to be present (e.g., climate AND policy returns only pages with both words)

- OR requires at least one term (e.g., college OR university broadens your results)

- NOT excludes pages containing a specific term (e.g., jaguar NOT car filters out automotive results). Many web search engines express this with a leading minus sign instead (jaguar -car).
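Conceptually, these three operators correspond to set intersection, union, and difference over the pages that contain each term. A small sketch, assuming a hypothetical hand-built index (`INDEX`) with invented page IDs:

```python
# Hypothetical index: term -> set of page IDs containing that term.
INDEX = {
    "climate": {"p5", "p6"},
    "policy": {"p6", "p7"},
    "college": {"p8"},
    "university": {"p9"},
    "jaguar": {"p1", "p2", "p3"},
    "car": {"p2", "p4"},
}

def pages(term):
    """Return the pages containing a term (empty set if the term is unknown)."""
    return INDEX.get(term, set())

and_result = pages("climate") & pages("policy")      # AND: intersection
or_result = pages("college") | pages("university")   # OR: union
not_result = pages("jaguar") - pages("car")          # NOT: difference

print(sorted(and_result))  # ['p6']
print(sorted(or_result))   # ['p8', 'p9']
print(sorted(not_result))  # ['p1', 'p3']
```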

Phrase search uses quotation marks to find an exact sequence of words. Searching "To be or not to be" returns only pages with that exact phrase, not pages that happen to contain those words scattered around.
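The difference between matching a phrase and matching scattered words can be shown with a short sketch. It assumes a simple tokenizer that ignores case and punctuation. Real engines typically answer phrase queries from word positions stored in the index rather than by rescanning text, so treat `contains_phrase` as a hypothetical helper for illustration.

```python
import re

def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def contains_phrase(text, phrase):
    """True if the words of `phrase` appear consecutively, in order, in `text`."""
    words, target = tokenize(text), tokenize(phrase)
    n = len(target)
    if n == 0:
        return False
    return any(words[i:i + n] == target for i in range(len(words) - n + 1))

quote = "To be, or not to be, that is the question"
scattered = "Whether or not to go is up to you; be ready"

print(contains_phrase(quote, "To be or not to be"))      # True
print(contains_phrase(scattered, "To be or not to be"))  # False
```

The second text contains every word of the phrase, but not as one consecutive sequence, so it does not match.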

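Wildcards and truncation help when you're unsure of exact wording. An asterisk (*) can stand in for unknown words, so "the * of liberty" might return "the Statue of Liberty" or "the price of liberty." Truncation applies the same idea to word endings: in search tools that support it, a pattern like librar* matches library, libraries, and librarian. Support for both features varies between engines.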
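One way to picture whole-word wildcard matching is as a translation into a regular expression. The sketch below is an assumption about the semantics (a standalone * matches one or more words) and not a description of any engine's actual behavior. `wildcard_to_regex` is a hypothetical helper.

```python
import re

def wildcard_to_regex(pattern):
    """Compile a phrase pattern in which a standalone * matches one or more words."""
    pieces = []
    for token in pattern.split():
        if token == "*":
            # One word, optionally followed by more words (as few as possible).
            pieces.append(r"\w+(?:\s+\w+)*?")
        else:
            pieces.append(re.escape(token))
    return re.compile(r"\b" + r"\s+".join(pieces) + r"\b", re.IGNORECASE)

matcher = wildcard_to_regex("the * of liberty")

print(bool(matcher.search("the Statue of Liberty")))         # True
print(bool(matcher.search("He paid the price of liberty")))  # True
print(bool(matcher.search("the of liberty")))                # False
```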

Site-specific search limits results to one website or domain. The syntax is site:example.com search terms. This is useful when a site's own search function is poor.

File type search restricts results to a specific format. For example, filetype:pdf climate report returns only PDF documents matching those terms.
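If you build searches programmatically, the site: and filetype: operators are just text placed in front of your terms. A small sketch follows, with `build_query` as a hypothetical helper and the `q` parameter name as an assumption, since the actual parameter depends on the search engine you are targeting.

```python
from urllib.parse import urlencode

def build_query(terms, site=None, filetype=None):
    """Prefix the search terms with optional site: and filetype: operators."""
    parts = []
    if site:
        parts.append(f"site:{site}")
    if filetype:
        parts.append(f"filetype:{filetype}")
    parts.append(terms)
    return " ".join(parts)

query = build_query("climate report", site="example.com", filetype="pdf")
print(query)  # site:example.com filetype:pdf climate report

# URL-encode the query for use in a query string ("q" is an assumed name).
encoded = urlencode({"q": query})
print(encoded)
```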

Ethics of Search Personalization

Search engines don't just retrieve information neutrally. The way they rank, filter, and personalize results raises real ethical questions.

Algorithmic bias is a significant concern. Ranking algorithms can reflect and reinforce societal biases and stereotypes, and because these algorithms are proprietary, there's very little transparency about how they make decisions. A search about a profession, for instance, might surface images that skew heavily toward one gender or race.

Personalization tailors your results based on your search history, location, and profile data. While this can be convenient, it creates what author and activist Eli Pariser called a filter bubble: you increasingly see results that match your existing views and interests, which limits your exposure to diverse perspectives. It also raises privacy concerns, since personalization depends on extensive data collection and user profiling.

Manipulation through SEO is another issue. Search engine optimization techniques can be used legitimately to help good content surface, but they can also be exploited to push misleading or deceptive information higher in rankings. Such manipulative tactics are often called "black hat SEO."

Responsibility and accountability remain open questions. Search engines play an enormous role in shaping what information people access, yet they operate with limited oversight. Balancing effective personalization with exposure to diverse viewpoints, and giving users meaningful control over how their data is used, are ongoing challenges that don't have easy answers.