Model Uncertainties

Earth system models are powerful tools for understanding how our planet works, but they come with real limitations. Every model simplifies complex processes and relies on estimates, which introduces uncertainties into predictions. Knowing where those uncertainties come from helps you interpret model results and judge how much confidence to place in them.

Parameter Estimation and Simplification

Parameterization is the process of representing complex physical processes with simplified mathematical equations that use adjustable parameters. This is necessary because many processes happen at scales too small for models to resolve directly.

- Sub-grid scale processes like convection and turbulence occur at scales smaller than a model's grid cells, so they must be parameterized rather than simulated directly.

- Parameters are often estimated from limited observations or expert judgment, which introduces uncertainty right from the start.

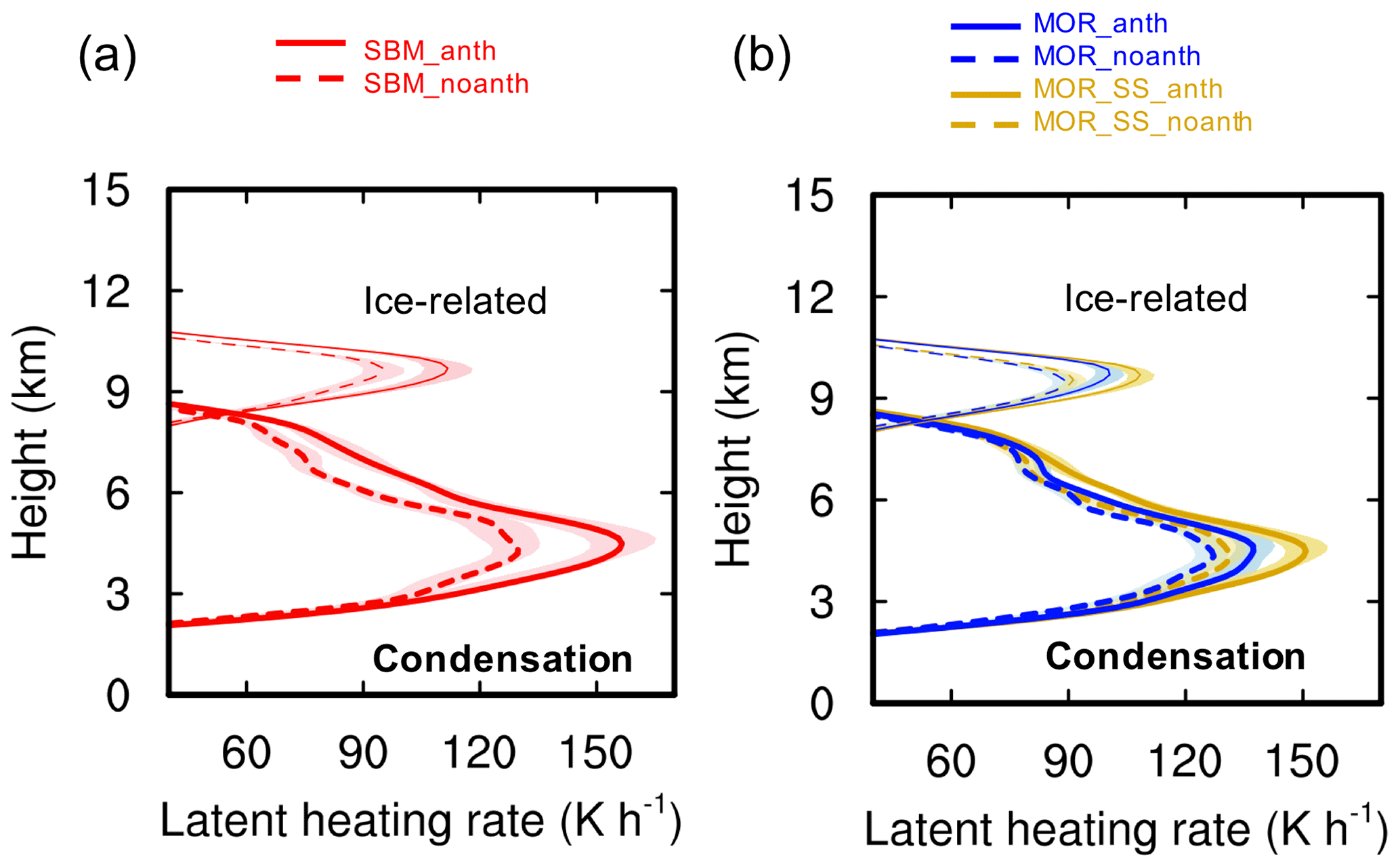

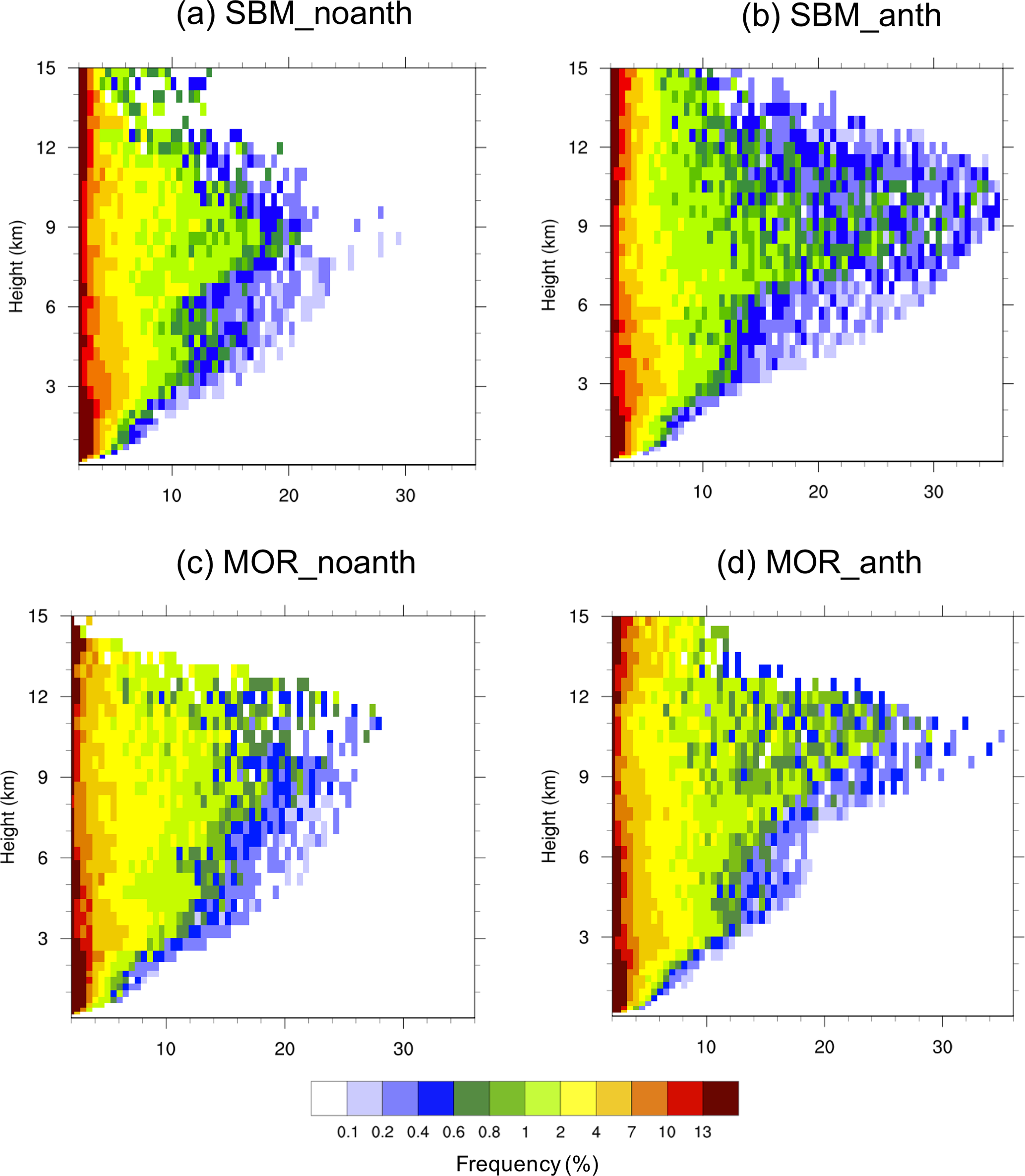

- Different parameterization schemes for the same process can produce different results. For example, two models might use different approaches to cloud microphysics and end up with noticeably different precipitation patterns.
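As a rough illustration of both points, the Python sketch below uses diagnostic cloud fraction, a simpler cloud-related quantity than microphysics. It assumes two schemes: a Sundqvist-style formula and a hypothetical linear ramp (`cloud_fraction_linear` is made up for illustration). Both take the same grid-box relative humidity and an adjustable critical relative humidity, `rh_crit`, whose values here are assumed. Varying `rh_crit` shows parameter uncertainty; swapping schemes shows how the choice of parameterization alone changes the answer.

```python
import math

def cloud_fraction_sundqvist(rh: float, rh_crit: float) -> float:
    """Sundqvist-style diagnostic cloud fraction from grid-box relative humidity (0-1)."""
    if rh <= rh_crit:
        return 0.0          # too dry: no cloud diagnosed in the grid box
    if rh >= 1.0:
        return 1.0          # saturated: grid box fully cloudy
    return 1.0 - math.sqrt((1.0 - rh) / (1.0 - rh_crit))

def cloud_fraction_linear(rh: float, rh_crit: float) -> float:
    """Hypothetical alternative scheme: fraction ramps linearly from rh_crit to saturation."""
    if rh <= rh_crit:
        return 0.0
    if rh >= 1.0:
        return 1.0
    return (rh - rh_crit) / (1.0 - rh_crit)

if __name__ == "__main__":
    rh = 0.90  # the same grid-box relative humidity is fed to every case
    for rh_crit in (0.75, 0.80, 0.85):  # assumed values of the tunable parameter
        print(
            f"rh_crit = {rh_crit:.2f}: "
            f"Sundqvist-style = {cloud_fraction_sundqvist(rh, rh_crit):.2f}, "
            f"linear = {cloud_fraction_linear(rh, rh_crit):.2f}"
        )
```

For a grid-box relative humidity of 0.90 and `rh_crit` = 0.80, the Sundqvist-style scheme diagnoses a cloud fraction of about 0.29 and the linear ramp 0.50. Shifting `rh_crit` by 0.05 in either direction moves both answers substantially.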

Initial and Boundary Condition Uncertainties

Initial condition uncertainty comes from incomplete knowledge of the system's starting state (temperature, humidity, wind, and other variables at every point in the model).

Because the Earth system behaves chaotically, even small errors in initial conditions can amplify over time. This is sometimes called the butterfly effect: a tiny difference early on can lead to dramatically different outcomes later. It is a major reason why day-to-day weather forecasts lose most of their skill beyond about 10 days.
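The sketch below shows this sensitivity with the Lorenz (1963) system, a three-variable toy model of convection rather than an Earth system model. It runs two copies whose starting states differ by 1e-8 in one variable and reports how far apart they drift. The parameter values (sigma = 10, rho = 28, beta = 8/3) are the classic chaotic settings; the time step, run length, and starting point are arbitrary choices for illustration.

```python
def lorenz63(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Time derivatives (dx/dt, dy/dt, dz/dt) of the Lorenz (1963) system."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)

def rk4_step(state, dt):
    """Advance the state by one time step with classical fourth-order Runge-Kutta."""
    def shifted(s, k, h):
        return tuple(si + h * ki for si, ki in zip(s, k))
    k1 = lorenz63(state)
    k2 = lorenz63(shifted(state, k1, dt / 2))
    k3 = lorenz63(shifted(state, k2, dt / 2))
    k4 = lorenz63(shifted(state, k3, dt))
    return tuple(
        s + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )

def distance(a, b):
    """Euclidean distance between two states."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b)) ** 0.5

def run_twin_experiment(perturbation=1e-8, dt=0.01, n_steps=4000, report_every=100):
    """Run a control and a slightly perturbed copy; return (time, separation) pairs."""
    control = (1.0, 1.0, 1.0)
    perturbed = (1.0 + perturbation, 1.0, 1.0)  # tiny "observation error" in x
    history = []
    for step in range(1, n_steps + 1):
        control = rk4_step(control, dt)
        perturbed = rk4_step(perturbed, dt)
        if step % report_every == 0:
            history.append((step * dt, distance(control, perturbed)))
    return history

if __name__ == "__main__":
    for t, sep in run_twin_experiment()[4::5]:  # print every 5 time units
        print(f"t = {t:5.1f}  separation = {sep:.3e}")
```

The separation grows roughly exponentially until it is as large as the attractor itself, after which the two runs are effectively unrelated even though their starting states were nearly identical.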

Boundary condition uncertainty involves the values specified at the edges of the model domain or as external inputs, such as sea surface temperatures or greenhouse gas concentrations. Uncertainties here propagate through the entire simulation and are especially significant for long-term climate projections, where future greenhouse gas levels depend on human choices that haven't been made yet.
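A small worked example of how a boundary error carries into the simulation: sea surface temperature sets the saturation vapor pressure at the ocean surface, which influences how much moisture the overlying air can take up. The sketch below assumes the Bolton (1980) approximation for saturation vapor pressure over liquid water and asks how much a given SST error changes it. The 25 °C base temperature and the error sizes are illustrative choices.

```python
import math

def saturation_vapor_pressure_hpa(t_celsius: float) -> float:
    """Saturation vapor pressure over liquid water (hPa), Bolton (1980) approximation."""
    return 6.112 * math.exp(17.67 * t_celsius / (t_celsius + 243.5))

def relative_change(t_celsius: float, sst_error_k: float) -> float:
    """Fractional change in saturation vapor pressure caused by an SST error."""
    base = saturation_vapor_pressure_hpa(t_celsius)
    perturbed = saturation_vapor_pressure_hpa(t_celsius + sst_error_k)
    return (perturbed - base) / base

if __name__ == "__main__":
    sst = 25.0  # assumed base sea surface temperature (deg C)
    print(f"e_s({sst:.0f} C) = {saturation_vapor_pressure_hpa(sst):.1f} hPa")
    for error in (0.5, 1.0, 2.0):  # hypothetical SST boundary errors (K)
        print(f"SST error +{error} K -> e_s changes by {relative_change(sst, error):+.1%}")
```

At 25 °C, a 1 K error in the prescribed SST shifts saturation vapor pressure by about 6 percent, consistent with the familiar Clausius-Clapeyron rate of roughly 6 to 7 percent per kelvin.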

Model Structure and Design Choices

Even when two models use the same input data, they can produce different results because of structural uncertainty, which arises from different design decisions:

- How physical processes are represented (e.g., how ocean circulation couples to the atmosphere)

- Which processes are included or omitted (some models leave out ice sheet dynamics or permafrost carbon feedbacks entirely)

- The choice of spatial and temporal resolution, which determines what features the model can capture

On top of structural uncertainty, there's scenario uncertainty. Long-term projections require assumptions about future conditions like population growth, energy use, and technological development. Different scenarios (such as the Shared Socioeconomic Pathways, or SSPs, used in recent IPCC assessments) can lead to very different projections, not because the models disagree, but because the assumed futures differ.
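Scenario uncertainty can be illustrated with a deliberately simple calculation. The sketch below assumes the widely used logarithmic approximation for CO2 radiative forcing, ΔF = 5.35 ln(C/C0) W/m², a preindustrial concentration C0 = 280 ppm, and an assumed equilibrium climate sensitivity of 3 K per doubling of CO2. The end-of-century concentrations are hypothetical round numbers, not values taken from the SSPs, and the result is equilibrium warming from CO2 alone, not a transient projection. The model and its parameters are identical in every case; only the assumed future changes.

```python
import math

PREINDUSTRIAL_CO2_PPM = 280.0   # assumed reference concentration C0
FORCING_COEFF_W_M2 = 5.35       # logarithmic CO2 forcing coefficient
CLIMATE_SENSITIVITY_K = 3.0     # assumed equilibrium warming per CO2 doubling

def co2_forcing(c_ppm: float) -> float:
    """Radiative forcing (W/m^2) relative to preindustrial, logarithmic approximation."""
    return FORCING_COEFF_W_M2 * math.log(c_ppm / PREINDUSTRIAL_CO2_PPM)

def equilibrium_warming(c_ppm: float) -> float:
    """Equilibrium warming (K): forcing scaled by the forcing from one CO2 doubling."""
    forcing_2x = FORCING_COEFF_W_M2 * math.log(2.0)
    return CLIMATE_SENSITIVITY_K * co2_forcing(c_ppm) / forcing_2x

if __name__ == "__main__":
    # Hypothetical 2100 CO2 concentrations (ppm) standing in for different futures.
    for label, c in [("low", 450.0), ("middle", 600.0), ("high", 900.0)]:
        print(f"{label:>6}: {c:5.0f} ppm -> forcing {co2_forcing(c):4.2f} W/m^2, "
              f"warming {equilibrium_warming(c):4.2f} K")
```

In this toy setup the high case gives more than twice the warming of the low case, without any change to the model itself.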

Model Evaluation and Comparison

Model Resolution and Complexity

Model resolution refers to the size of the grid cells (spatial) and time steps (temporal) a model uses. Resolution involves a direct trade-off:

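- Higher resolution models capture finer-scale features like regional climate patterns and extreme weather events, but they demand far more computing power. Refining a global climate model from 100 km to 25 km grid spacing increases the number of horizontal grid cells 16-fold, and the shorter time step needed for numerical stability adds roughly another factor of 4, so the run needs on the order of 100 times more computation (more still if vertical levels are added).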

- Lower resolution models are computationally efficient but can miss important local processes. For instance, a coarse model might fail to capture orographic precipitation (rain caused by air being forced over mountains) because the mountains themselves aren't well represented on the grid.
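The smoothing effect can be sketched by block-averaging a hypothetical mountain ridge onto coarser grids. The sketch below assumes a Gaussian ridge with a 3000 m peak sampled on a 1 km transect, then averages it into 25 km and 100 km cells. Real models build their topography with their own filtering procedures, so this is only a rough indication of how much height a coarse grid can lose.

```python
import math

def ridge_profile(n_points=1000, dx_km=1.0, peak_m=3000.0, width_km=20.0, center_km=450.0):
    """Hypothetical Gaussian ridge: elevation (m) at the midpoint of each fine cell."""
    profile = []
    for i in range(n_points):
        x_km = (i + 0.5) * dx_km  # midpoint of fine cell i
        profile.append(peak_m * math.exp(-(((x_km - center_km) / width_km) ** 2)))
    return profile

def block_average(values, cells_per_block):
    """Average consecutive fine cells into coarse grid cells (one value per coarse cell)."""
    return [
        sum(values[i:i + cells_per_block]) / cells_per_block
        for i in range(0, len(values), cells_per_block)
    ]

if __name__ == "__main__":
    fine = ridge_profile()
    for label, cells in [("1 km", 1), ("25 km", 25), ("100 km", 100)]:
        print(f"{label:>6} grid: highest cell {max(block_average(fine, cells)):6.0f} m")
```

In this setup the 1 km grid keeps nearly the full 3000 m peak, the highest 25 km cell is just under 2000 m, and the highest 100 km cell is only about 1060 m, so far less air is forced upward on the coarse grid.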

Choosing resolution always means balancing what you can afford to compute against what you need the model to resolve.
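The scaling behind such cost estimates can be sketched with a common back-of-the-envelope rule, stated here as an assumption: cost scales with the number of horizontal grid cells (two dimensions) times the number of time steps, and the time step must shrink in proportion to the grid spacing to keep the numerics stable (the CFL condition). Refining the vertical grid is optional in the sketch, and the function name `relative_cost` is hypothetical. Real model costs also depend on physics, I/O, and parallel efficiency, which this ignores.

```python
def relative_cost(fine_dx_km: float, coarse_dx_km: float, refine_vertical: bool = False) -> float:
    """Cost of a run at fine_dx_km relative to one at coarse_dx_km (rule of thumb)."""
    ratio = coarse_dx_km / fine_dx_km
    horizontal_cells = ratio ** 2        # grid cells in two horizontal dimensions
    time_steps = ratio                   # CFL: time step shrinks with grid spacing
    vertical_levels = ratio if refine_vertical else 1.0
    return horizontal_cells * time_steps * vertical_levels

if __name__ == "__main__":
    print(relative_cost(25.0, 100.0))                        # 64.0
    print(relative_cost(25.0, 100.0, refine_vertical=True))  # 256.0
```

For 100 km to 25 km this gives a factor of 64 from the horizontal grid and time step alone, and 256 if the number of vertical levels is increased by the same ratio, bracketing the rough factor of 100 quoted above.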

Intercomparison and Ensemble Approaches

Because no single model is "correct," scientists use two key strategies to quantify uncertainty:

- Model intercomparison projects like CMIP (Coupled Model Intercomparison Project) bring together dozens of independently developed models and run them under the same conditions. Comparing outputs reveals which results are robust across models and where models disagree, pointing researchers toward processes that need better understanding.

- Ensemble approaches run multiple simulations, varying the model used, the initial conditions, or the parameter values. The spread of results across the ensemble gives a measure of uncertainty. The IPCC's climate projections, for example, rely on multi-model ensembles to present a range of possible outcomes rather than a single prediction.

Where most models in an ensemble agree, you can have higher confidence. Where they diverge, that flags genuine scientific uncertainty.
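A minimal sketch of how ensemble output might be summarized: the ensemble mean as a central estimate, the standard deviation and range as measures of spread, and the fraction of members agreeing on the sign of the change as a simple measure of agreement. The member values below are made up for illustration, and the 80 percent agreement threshold is an assumed cut-off, not an official criterion.

```python
import statistics

def summarize_ensemble(values, agreement_threshold=0.8):
    """Summarize one projected change across ensemble members."""
    mean = statistics.fmean(values)
    sign = 1.0 if mean >= 0 else -1.0
    # Fraction of members whose change has the same sign as the ensemble mean.
    agreement = sum(1 for v in values if v * sign > 0) / len(values)
    return {
        "mean": mean,
        "stdev": statistics.stdev(values),
        "range": (min(values), max(values)),
        "sign_agreement": agreement,
        "robust": agreement >= agreement_threshold,
    }

# Hypothetical precipitation changes (%) from ten ensemble members in two regions.
REGION_A = [4.1, 5.3, 3.8, 6.0, 2.9, 4.7, 5.5, 3.2, -0.4, 4.4]
REGION_B = [1.2, -0.8, 2.5, -1.9, 0.3, -0.6, 1.1, -2.2, 0.9, -0.1]

if __name__ == "__main__":
    for name, members in [("A", REGION_A), ("B", REGION_B)]:
        s = summarize_ensemble(members)
        print(f"region {name}: mean {s['mean']:+.2f}, stdev {s['stdev']:.2f}, "
              f"sign agreement {s['sign_agreement']:.0%}, robust={s['robust']}")
```

Region A has nine of ten members agreeing on an increase and counts as robust under the assumed threshold; region B's members split evenly, so its near-zero mean hides genuine disagreement.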

Propagation and Cascading of Uncertainties

Uncertainties don't stay contained in one part of a model. They propagate through the modeling chain, from input data to model structure to output variables.

Cascading uncertainties happen when uncertainty in one model component affects others through feedbacks. For example, uncertainty in how the atmosphere simulates cloud cover changes how much solar radiation reaches the ocean surface, which alters sea surface temperatures, which in turn affects atmospheric circulation. Each link in the chain can amplify the original uncertainty.
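One way to see how a chain of feedbacks amplifies uncertainty is a Monte Carlo sketch of the textbook feedback-gain relation ΔT = ΔT0 / (1 - f), where ΔT0 is the response with no feedbacks and f is the combined feedback factor. The values below are assumptions for illustration: ΔT0 = 1.2 K and f drawn from a normal distribution with mean 0.6 and standard deviation 0.1. The input uncertainty in f is symmetric, yet the output warming has a much longer upper tail, because the response grows rapidly as f approaches 1.

```python
import random
import statistics

NO_FEEDBACK_RESPONSE_K = 1.2   # assumed response with no feedbacks (delta T0)
FEEDBACK_MEAN = 0.6            # assumed mean combined feedback factor f
FEEDBACK_SD = 0.1              # assumed standard deviation of f

def sample_warming(n_samples=20000, seed=42):
    """Monte Carlo draws of delta T = delta T0 / (1 - f) with uncertain f."""
    rng = random.Random(seed)
    samples = []
    while len(samples) < n_samples:
        f = rng.gauss(FEEDBACK_MEAN, FEEDBACK_SD)
        if f < 0.95:  # discard rare draws near f = 1, where the toy model blows up
            samples.append(NO_FEEDBACK_RESPONSE_K / (1.0 - f))
    return samples

if __name__ == "__main__":
    warming = sample_warming()
    cuts = statistics.quantiles(warming, n=20)  # 19 cut points: 5th, 10th, ..., 95th percentile
    p5, p95 = cuts[0], cuts[-1]
    median = statistics.median(warming)
    print(f"5th percentile {p5:.2f} K, median {median:.2f} K, 95th percentile {p95:.2f} K")
    print(f"below median: {median - p5:.2f} K, above median: {p95 - median:.2f} K")
```

With these assumptions the median is close to 3 K, but the 95th percentile sits much farther above the median than the 5th percentile sits below it.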

The cumulative effect is that long-term projections tend to show a wide range of possible outcomes. This compounding, together with the widening gap between emissions scenarios, is why climate projections for the year 2100 span a broader range than those for 2050. Quantifying and clearly communicating these uncertainties is essential for decision-makers working on climate adaptation and risk assessment, so they understand not just the best estimate but the full range of what's plausible.