Assessment Tool Development

Describe the process of developing valid and reliable assessment tools aligned with curriculum objectives

The goal of developing assessment tools is to create instruments that accurately and consistently measure what students have learned. Without a deliberate development process, assessments can end up testing the wrong things or producing unreliable results.

Here's the process, broken into clear steps:

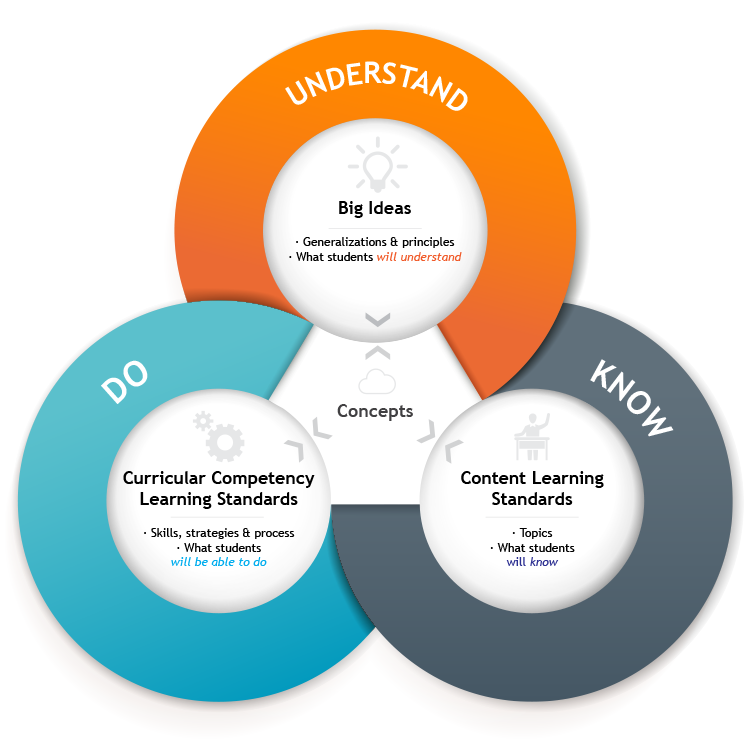

Step 1: Identify clear and measurable curriculum objectives.

- Break broad goals into specific learning outcomes. For example, "understand persuasive writing" becomes "write a persuasive essay with a clear thesis, supporting evidence, and counterargument."

- Make sure each objective is observable. If you can't see or evaluate it through student work, it's too vague to assess.

Step 2: Select appropriate assessment methods for each objective.

- Match the method to what you're actually measuring. Multiple-choice tests work well for knowledge recall, but you'd need an essay or project to assess critical thinking or synthesis.

- Consider the full range of options: traditional tests, performance tasks, portfolios, oral presentations, or projects. Different objectives call for different formats.

Step 3: Create assessment items or tasks aligned with those objectives.

- Develop questions, prompts, or activities that directly target the knowledge or skills you identified.

- Check that your items cover the key concepts proportionally. If 40% of the unit focused on a particular topic, roughly 40% of the assessment should address it.
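The proportional-coverage rule can be turned into a simple item allocation, the kind of plan often recorded in a test blueprint (table of specifications). This is a minimal sketch that assumes the share of instructional time per topic is the weighting. It uses the largest-remainder method so the rounded item counts always add up to the test length. The function name `allocate_items` and the sample topics are hypothetical.

```python
from math import floor

def allocate_items(weights: dict[str, float], total_items: int) -> dict[str, int]:
    """Split total_items across topics in proportion to their weights.

    Largest-remainder rounding keeps the counts summing to total_items.
    """
    weight_sum = sum(weights.values())
    # Exact (fractional) number of items each topic deserves
    exact = {t: total_items * w / weight_sum for t, w in weights.items()}
    # Start from the whole-number part of each share
    counts = {t: floor(x) for t, x in exact.items()}
    leftover = total_items - sum(counts.values())
    # Give the remaining items to the topics with the largest fractional parts
    by_remainder = sorted(exact, key=lambda t: exact[t] - counts[t], reverse=True)
    for t in by_remainder[:leftover]:
        counts[t] += 1
    return counts

# Hypothetical unit: percentage of instructional time spent on each topic
unit_time = {"fractions": 40, "decimals": 35, "percentages": 25}
print(allocate_items(unit_time, 25))  # {'fractions': 10, 'decimals': 9, 'percentages': 6}
```

Rounding each share independently (10, 8.75 → 9, 6.25 → 6) happens to work here, but with other weights it can produce a test that is one item too long or too short. The remainder step avoids that.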

Step 4: Establish validity.

Validity means the assessment actually measures what it claims to measure. Two types matter most here:

- Content validity: Do the items adequately cover the breadth and depth of the curriculum objectives? A math test that only covers one chapter out of three lacks content validity.

- Construct validity: Does the assessment measure the intended ability? If you want to assess problem-solving but your questions only require memorized formulas, construct validity is weak.
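One practical way to check content validity is an item-to-objective alignment map. Tag each item with the objective(s) it targets, then look for objectives that have no items or too few. The sketch below assumes the tagging has already been done by hand. The objective codes, item IDs, and the `min_items` threshold are hypothetical. It checks coverage only. It cannot tell whether an item actually requires the intended thinking, which is the construct-validity question and still needs expert review.

```python
from collections import Counter

def coverage_gaps(objectives: list[str],
                  item_tags: dict[str, list[str]],
                  min_items: int = 1) -> dict[str, int]:
    """Return objectives covered by fewer than min_items items, with their item counts."""
    counts = Counter(obj for tags in item_tags.values() for obj in tags)
    # Counter returns 0 for an objective that no item is tagged with
    return {obj: counts[obj] for obj in objectives if counts[obj] < min_items}

# Hypothetical three-chapter math test; each item tagged with the objective(s) it targets
objectives = ["ch1_linear_eq", "ch2_inequalities", "ch3_systems"]
item_tags = {
    "Q1": ["ch1_linear_eq"],
    "Q2": ["ch1_linear_eq"],
    "Q3": ["ch1_linear_eq", "ch2_inequalities"],
    "Q4": ["ch1_linear_eq"],
}
print(coverage_gaps(objectives, item_tags, min_items=2))
# {'ch2_inequalities': 1, 'ch3_systems': 0}
```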

Step 5: Ensure reliability.

Reliability means the assessment produces consistent results. Two dimensions to check:

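- Consistency across administrations: A student's score should not depend on when the test is taken or which version is used. Students with the same level of knowledge should score similarly whether they sit the test on Monday or Friday (test-retest reliability) or take Form A versus Form B (parallel-forms reliability).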

- Inter-rater reliability: When multiple evaluators score the same student work, they should arrive at similar ratings. This is especially important for subjective assessments like essays.
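Both dimensions are usually summarized with a coefficient. This minimal sketch uses two common choices. The first is a Pearson correlation between the same students' scores on two administrations or two forms. The second is Cohen's kappa, which corrects two raters' raw agreement for the agreement expected by chance. The scores are invented for illustration. What counts as an acceptable value depends on the stakes of the assessment.

```python
from collections import Counter
from math import sqrt

def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation between two score lists for the same students, in the same order."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)

def cohens_kappa(rater_a: list[int], rater_b: list[int]) -> float:
    """Cohen's kappa for two raters assigning levels to the same pieces of work.

    Undefined (division by zero) if both raters give every piece the same single level.
    """
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Agreement expected by chance if each rater assigned levels independently
    expected = sum(freq_a[k] * freq_b[k] for k in freq_a) / n ** 2
    return (observed - expected) / (1 - expected)

# Illustrative data: five students on two forms, eight essays scored by two raters
form_a = [78, 85, 62, 90, 70]
form_b = [75, 88, 65, 92, 68]
rater_a = [3, 2, 4, 3, 1, 2, 3, 4]
rater_b = [3, 2, 3, 3, 1, 2, 4, 4]

print(round(pearson(form_a, form_b), 2))         # 0.97
print(round(cohens_kappa(rater_a, rater_b), 2))  # 0.65, despite 75% raw agreement
```

Plain kappa treats a one-level disagreement the same as a three-level one. For this reason, ordinal rubric scores are often analyzed with a weighted kappa instead.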

Step 6: Pilot test and revise.

- Administer the assessment to a sample group of students representative of your target population.

- Analyze results for problems: Are instructions unclear? Do certain items confuse even strong students? Are some items so easy or so hard that they fail to distinguish stronger from weaker students?

- Revise based on findings. This step is often skipped in practice, but it's what separates a polished assessment from a rough draft.
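The pilot-data questions above are commonly answered with classical item analysis. This sketch computes two standard statistics from right/wrong (1/0) responses. The difficulty index p is the proportion of students who answered correctly. The upper-lower discrimination index D is the proportion correct in the top-scoring group minus the proportion correct in the bottom-scoring group. A negative D means weaker students outperformed stronger ones on that item. The 27% group size is a common convention. The flag thresholds, the helper names, and the eight-student data are illustrative assumptions. A real pilot would need far more students for these numbers to be stable.

```python
def item_analysis(responses: list[list[int]], group_frac: float = 0.27) -> list[tuple[float, float]]:
    """Difficulty p and upper-lower discrimination D for each item.

    responses[s][i] is 1 if student s answered item i correctly, else 0.
    """
    n_students, n_items = len(responses), len(responses[0])
    # Rank students by total score, then take the top and bottom groups
    ranked = sorted(responses, key=sum, reverse=True)
    g = max(1, round(n_students * group_frac))
    upper, lower = ranked[:g], ranked[-g:]
    stats = []
    for i in range(n_items):
        p = sum(r[i] for r in responses) / n_students
        d = (sum(r[i] for r in upper) - sum(r[i] for r in lower)) / g
        stats.append((p, d))
    return stats

def flag_items(stats, p_range=(0.2, 0.9), min_d=0.2) -> dict[str, list[str]]:
    """Attach plain-language warnings to items whose statistics fall outside the chosen ranges."""
    flags = {}
    for number, (p, d) in enumerate(stats, start=1):
        reasons = []
        if p > p_range[1]:
            reasons.append("too easy")
        elif p < p_range[0]:
            reasons.append("too hard")
        if d < 0:
            reasons.append("negative discrimination")  # weaker students outscored stronger ones
        elif d < min_d:
            reasons.append("weak discrimination")
        if reasons:
            flags[f"item {number}"] = reasons
    return flags

# Illustrative pilot: 8 students x 5 items, 1 = correct
pilot = [
    [1, 1, 0, 1, 1],
    [1, 1, 0, 1, 1],
    [1, 1, 0, 0, 1],
    [1, 0, 1, 0, 1],
    [1, 1, 0, 0, 1],
    [1, 0, 1, 0, 1],
    [1, 0, 1, 0, 0],
    [1, 0, 1, 0, 0],
]
print(flag_items(item_analysis(pilot)))
# {'item 1': ['too easy', 'weak discrimination'], 'item 3': ['negative discrimination']}
```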

Rubric Development and Application

Create effective rubrics for evaluating student performance on various assessment tasks

A rubric translates your expectations into a structured scoring tool. It defines what you're evaluating, how well students need to perform, and what each level of quality looks like. The development process follows these steps:

Step 1: Define the purpose and scope.

- Identify the specific task being evaluated, such as an oral presentation, a research paper, or a lab report.

- Clarify which learning outcomes or skills the rubric will assess. A single task might involve multiple skills, so decide which ones the rubric will capture.

Step 2: Establish performance levels.

- Create a rating scale with distinct levels. Common scales have 3 to 5 levels. A four-level scale, for example, might be labeled Beginning, Developing, Proficient, and Advanced, or simply numbered 1 through 4.

- Each level should represent a clear step up in quality. If two adjacent levels sound almost identical, they need sharper differentiation.

Step 3: Identify specific criteria.

- Break the task into its key components. For a research paper, criteria might include thesis clarity, use of evidence, organization, analysis, and writing mechanics.

- Each criterion should be something you can observe and evaluate in the student's work.

Step 4: Write detailed performance indicators for each criterion at each level.

This is the most labor-intensive step, and the most important. For each cell in the rubric (where a criterion meets a performance level), describe what student work looks like at that level. Use specific, objective language.

For example, under "Use of Evidence" at the Proficient level: "Incorporates relevant evidence from at least three credible sources; evidence directly supports the thesis." Compare that to the Developing level: "Includes evidence from one or two sources; connection to the thesis is present but inconsistent."
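A rubric is a grid of criteria by performance levels, so it can be stored in a simple structure that exposes unwritten cells before the rubric is used. This minimal sketch assumes a four-level scale and reuses the two "Use of Evidence" descriptors quoted in the example. The "Thesis Clarity" entry and the `missing_cells` helper are hypothetical.

```python
LEVELS = ["Beginning", "Developing", "Proficient", "Advanced"]

# criterion -> {level: descriptor}; empty or absent entries are cells not yet written
rubric = {
    "Use of Evidence": {
        "Beginning": "",
        "Developing": "Includes evidence from one or two sources; "
                      "connection to the thesis is present but inconsistent.",
        "Proficient": "Incorporates relevant evidence from at least three credible sources; "
                      "evidence directly supports the thesis.",
        "Advanced": "",
    },
    "Thesis Clarity": {},  # hypothetical criterion with no descriptors drafted yet
}

def missing_cells(rubric: dict[str, dict[str, str]], levels: list[str]) -> list[tuple[str, str]]:
    """List every (criterion, level) cell that still has no written descriptor."""
    return [
        (criterion, level)
        for criterion, cells in rubric.items()
        for level in levels
        if not cells.get(level, "").strip()
    ]

for criterion, level in missing_cells(rubric, LEVELS):
    print(f"Missing descriptor: {criterion} / {level}")
```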

Step 5: Test and refine.

- Apply the rubric to sample student work. Can you score consistently? Are there criteria where you struggle to distinguish between levels?

- Have colleagues use the rubric independently on the same samples, then compare scores.

- Revise any descriptors that are ambiguous or that produce inconsistent scoring.
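When colleagues score the same samples, a per-criterion agreement table shows which descriptors need revision. This sketch assumes two raters scoring on a 1-4 scale. For each criterion, it reports exact agreement and adjacent agreement (scores within one level), two figures commonly used for rubric scoring. The helper name, criteria, and scores are illustrative.

```python
def agreement_by_criterion(scores_a: dict[str, list[int]],
                           scores_b: dict[str, list[int]]) -> dict[str, tuple[float, float]]:
    """For each criterion, return (exact agreement, within-one-level agreement)."""
    report = {}
    for criterion, a in scores_a.items():
        b = scores_b[criterion]
        diffs = [abs(x - y) for x, y in zip(a, b)]
        exact = sum(d == 0 for d in diffs) / len(diffs)
        adjacent = sum(d <= 1 for d in diffs) / len(diffs)
        report[criterion] = (exact, adjacent)
    return report

# Two raters, the same five sample papers, levels 1-4
rater_1 = {"Thesis": [3, 2, 4, 3, 1], "Evidence": [3, 2, 4, 2, 1], "Organization": [2, 3, 3, 4, 2]}
rater_2 = {"Thesis": [3, 2, 4, 3, 2], "Evidence": [1, 3, 2, 2, 3], "Organization": [2, 3, 4, 4, 2]}

for criterion, (exact, adjacent) in agreement_by_criterion(rater_1, rater_2).items():
    print(f"{criterion:<13} exact {exact:.0%}  within one level {adjacent:.0%}")
```

In this made-up data, "Evidence" stands out (20% exact, 40% within one level). That is the criterion whose descriptors would be rewritten first.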

Explain the importance of establishing clear criteria and performance indicators in assessment rubrics

Clear criteria and performance indicators serve four key functions:

They create shared expectations. When criteria are explicit, both students and evaluators understand what success looks like before the work begins. There's no guessing about what the evaluator values. A student reading the rubric should be able to answer: What exactly do I need to do well?

They reduce subjectivity in scoring. Vague criteria like "good analysis" invite inconsistency because each evaluator interprets "good" differently. Specific performance descriptors anchor scoring decisions in observable features of the work. This directly improves inter-rater reliability when multiple people are grading.

They enable targeted feedback. Instead of a single grade with a general comment, a rubric lets you point to specific criteria. You can tell a student their organization was strong but their use of evidence needs work. Students can also use the rubric to self-assess before submitting, identifying their own strengths and gaps.

They clarify the path to improvement. Because performance levels show a progression from novice to expert, students can see exactly what distinguishes adequate work from excellent work. This turns the rubric into a learning tool, not just a grading tool.

Discuss strategies for ensuring fairness and consistency in the application of assessment rubrics

Even a well-designed rubric can produce unfair results if it's applied inconsistently. These strategies help prevent that:

Train evaluators on rubric use.

- Walk evaluators through each criterion and performance level before they begin scoring.

- Conduct calibration sessions: have all evaluators score the same set of sample work independently, then compare and discuss results. The goal is to reach a shared interpretation of each descriptor before scoring real student work.
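During calibration, it can help to compare each evaluator against the consensus (or anchor) score the group agrees on for each sample. This sketch assumes such agreed scores exist. It reports each evaluator's average signed difference, so a consistently harsh or lenient reader stands out. The evaluator names, scores, and the `rater_bias` helper are hypothetical.

```python
from statistics import mean

def rater_bias(agreed: list[int], raters: dict[str, list[int]]) -> dict[str, float]:
    """Mean of (evaluator score - agreed score) across calibration samples.

    Negative values suggest a harsher reading of the descriptors,
    positive values a more lenient one.
    """
    return {
        name: mean(s - a for s, a in zip(scores, agreed))
        for name, scores in raters.items()
    }

agreed_scores = [3, 2, 4, 1, 3, 2]  # consensus level for six calibration samples
raters = {
    "Evaluator A": [3, 2, 4, 1, 3, 2],
    "Evaluator B": [2, 1, 3, 1, 2, 2],
    "Evaluator C": [4, 2, 4, 2, 3, 3],
}
for name, bias in rater_bias(agreed_scores, raters).items():
    print(f"{name}: {bias:+.2f}")
```

A mean near zero can still hide errors that cancel out. The individual sample-by-sample differences are worth discussing as well.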

Use multiple evaluators when possible.

- Have two or more evaluators score student work independently, then compare scores.

- When scores diverge significantly, discuss the discrepancy and identify which rubric descriptors were interpreted differently. This process surfaces ambiguities that need to be resolved.
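Deciding when scores "diverge significantly" is easier with a rule agreed on in advance. This sketch assumes two evaluators on a 1-4 scale. It flags any paper where their scores differ by more than one level for discussion, and averages the scores for all other papers. Both the one-level threshold and the averaging are illustrative local policy choices, not a standard. The helper name and paper IDs are hypothetical.

```python
def resolve_scores(first: dict[str, int], second: dict[str, int], max_gap: int = 1):
    """Split papers into (final scores, papers needing discussion)."""
    final, to_discuss = {}, []
    for paper, s1 in first.items():
        s2 = second[paper]
        if abs(s1 - s2) > max_gap:
            to_discuss.append((paper, s1, s2))  # gap too large: talk it through
        else:
            final[paper] = (s1 + s2) / 2  # close enough: average the two scores
    return final, to_discuss

first = {"P01": 3, "P02": 4, "P03": 1, "P04": 2}
second = {"P01": 3, "P02": 3, "P03": 3, "P04": 2}
final, to_discuss = resolve_scores(first, second)
print(final)       # {'P01': 3.0, 'P02': 3.5, 'P04': 2.0}
print(to_discuss)  # [('P03', 1, 3)]
```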

Regularly review and update rubrics.

- After each use, analyze whether the rubric effectively captured differences in student performance. Were most students clustered at one level? That may signal the descriptors need recalibration.

- Collect feedback from both evaluators and students. Evaluators can flag criteria that were hard to apply; students can flag criteria that were confusing.

- Revise as needed. Rubrics should be treated as living documents, not permanent fixtures.
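Whether most students clustered at one level can be checked directly from the scores after each use. This sketch computes the share of students at each level of a criterion and flags any level that holds more than a chosen share. The 60% cutoff, helper names, and scores are illustrative assumptions. A cluster is only a prompt to reread the descriptors, since sometimes a class genuinely did perform alike.

```python
from collections import Counter

def level_distribution(scores: list[int], levels: range) -> dict[int, float]:
    """Share of students at each level, including levels nobody reached."""
    counts = Counter(scores)
    return {lvl: counts[lvl] / len(scores) for lvl in levels}

def clustered_levels(scores: list[int], levels: range, cutoff: float = 0.6) -> list[int]:
    """Levels whose share of students exceeds the cutoff."""
    return [lvl for lvl, share in level_distribution(scores, levels).items() if share > cutoff]

organization_scores = [3, 3, 3, 2, 3, 3, 3, 4, 3, 3]  # ten students, levels 1-4
print(level_distribution(organization_scores, range(1, 5)))  # {1: 0.0, 2: 0.1, 3: 0.8, 4: 0.1}
print(clustered_levels(organization_scores, range(1, 5)))    # [3]
```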

Make rubrics accessible and transparent.

- Share rubrics with students before they begin the assessment, not after. Students perform better when they know the criteria in advance.

- Provide anchor examples: samples of work at different performance levels so students can see what each level actually looks like in practice.

- Invite student questions about the rubric. If multiple students misunderstand a criterion, that's a sign the language needs revision.