Types of Curriculum Evaluation Models

Curriculum evaluation models give educators structured ways to assess whether a program is working and how to improve it. Without a clear evaluation approach, curriculum decisions often rely on guesswork rather than evidence. The models covered here range from broad frameworks like CIPP to more focused approaches like goal-based and expertise-oriented evaluation.

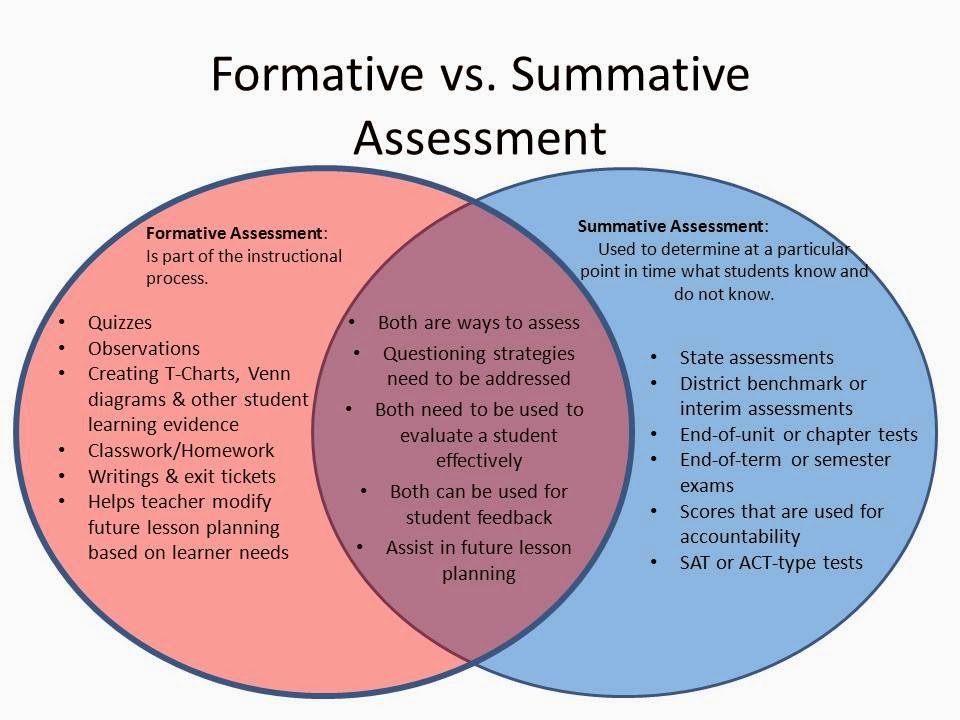

Formative vs. Summative Evaluation Models

The most fundamental distinction in curriculum evaluation is when and why you're evaluating. Formative and summative evaluation serve different purposes, and most strong evaluation plans use both.

Formative evaluation happens during curriculum development and implementation. Its purpose is ongoing improvement.

- Provides real-time feedback so teams can adjust as they go

- Focuses on the process of curriculum design and delivery

- Identifies specific weaknesses that can be fixed mid-course, such as pacing problems, unclear content sequencing, or ineffective instructional strategies

- Think of it as a check-up while the curriculum is still being built or rolled out

Summative evaluation happens at the end of a curriculum cycle or program. Its purpose is to judge overall effectiveness.

- Determines whether the curriculum achieved its intended outcomes, such as student learning gains or program-level goals

- Focuses on results rather than process

- Informs high-stakes decisions: Should this curriculum continue? Should it be expanded, revised, or discontinued?

- Often shapes resource allocation and judgments about program viability

A useful way to remember the difference: formative evaluation improves the curriculum; summative evaluation proves (or disproves) its value.

Key Components and Stages of the CIPP Evaluation Model

The CIPP model, developed by Daniel Stufflebeam, is one of the most widely used frameworks in curriculum evaluation. It's designed to be comprehensive, walking evaluators through four distinct stages that together cover the full lifecycle of a curriculum. CIPP stands for Context, Input, Process, and Product.

Context Evaluation

Context evaluation answers the question: What needs to be addressed?

- Assesses the needs, problems, assets, and opportunities within the educational setting

- Defines program goals and priorities based on contextual factors like student demographics, community needs, and existing gaps in learning

- Data collection methods typically include surveys, focus groups, and formal needs assessments

- This stage grounds the entire evaluation in the real conditions of the setting rather than assumptions

Input Evaluation

Input evaluation answers: What approach should we take?

- Examines the feasibility and potential of various curricular strategies and resources

- Compares alternative approaches to determine which is most likely to achieve program goals, considering instructional methods, materials, and available technology

- Uses tools like cost-benefit analysis and expert review to weigh options

- The goal is to select the most promising strategy before full implementation begins

Process Evaluation

Process evaluation answers: Is the curriculum being implemented as planned?

- Monitors implementation fidelity by comparing what's actually happening in classrooms to what was designed

- Uses classroom observations, teacher feedback sessions, and implementation logs to track deviations from the plan

- Provides formative feedback so adjustments can be made in real time, such as additional professional development or reallocation of resources

- This is where many curricula succeed or fail, since even a well-designed program can fall apart if implementation goes off track
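
One way to make implementation fidelity concrete is a simple coverage ratio: the share of planned lesson components that observers actually recorded. The sketch below is illustrative only. The component names, the `fidelity_report` helper, and the choice of a plain ratio are assumptions made for the example, not part of the CIPP model itself.

```python
def fidelity_report(planned, observed):
    """Compare planned curriculum components with those actually observed.

    Both arguments are collections of component names (hypothetical labels).
    Returns the share of planned components delivered, plus the components
    that were skipped and those that appeared without being planned.
    """
    planned_set = set(planned)
    observed_set = set(observed)
    if not planned_set:
        raise ValueError("planned must contain at least one component")

    delivered = planned_set & observed_set
    return {
        "fidelity": len(delivered) / len(planned_set),
        "skipped": sorted(planned_set - observed_set),
        "unplanned": sorted(observed_set - planned_set),
    }


# Hypothetical data from a unit plan and a classroom observation log.
planned = ["warm-up", "guided practice", "group task", "exit ticket"]
observed = ["warm-up", "guided practice", "lecture", "exit ticket"]

report = fidelity_report(planned, observed)
print(report)
```

A ratio like this only flags where practice departed from the plan. Deciding whether a deviation was a problem or a sensible adaptation still takes the observations and teacher feedback described above.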

Product Evaluation

Product evaluation answers: Did it work?

- Assesses the outcomes and impact of the curriculum on student learning and development

- Measures achievement of curricular goals through standardized tests, performance assessments, and other outcome data

- Also considers broader indicators like stakeholder satisfaction and cost-effectiveness

- Provides the summative judgment on whether the curriculum delivered on its promises

Evaluation Approaches and Their Characteristics

Beyond the CIPP framework, several other evaluation approaches offer distinct lenses for examining curriculum quality. Each has trade-offs, and understanding those trade-offs helps you choose the right tool for the situation.

Goal-Based Evaluation

Goal-based evaluation measures curriculum success against its own predefined objectives. It's straightforward: you set goals during planning, then check whether those goals were met.

Advantages:

- Gives the evaluation a clear focus and direction

- Directly measures whether students achieved the intended learning objectives

Limitations:

- Can miss unintended outcomes, both positive and negative. For example, a math curriculum might also develop students' collaborative skills, but a purely goal-based evaluation wouldn't capture that.

- Is only as good as the goals themselves: if the original goals were poorly written or too narrow, the evaluation inherits those flaws.
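
The logic of goal-based evaluation, and its blind spot, can be sketched in a few lines. This is a minimal illustration under stated assumptions: the goal names, the target values, and the `goal_based_evaluation` helper are all hypothetical, and real evaluations rarely reduce to a single threshold per goal.

```python
def goal_based_evaluation(goals, results):
    """Check each predefined goal against its measured result.

    goals:   dict mapping goal name -> minimum target value
    results: dict mapping measure name -> observed value
    Returns (per-goal verdicts, measures that no goal covers).
    """
    verdicts = {}
    for name, target in goals.items():
        observed = results.get(name)
        # A goal with no data cannot be judged as met.
        verdicts[name] = observed is not None and observed >= target

    # Anything measured but not named in the goals is invisible to a
    # purely goal-based evaluation.
    unexamined = sorted(set(results) - set(goals))
    return verdicts, unexamined


# Hypothetical targets and results (percent of students at proficiency).
goals = {"fractions": 80, "word problems": 75}
results = {"fractions": 84, "word problems": 70, "collaboration": 90}

verdicts, unexamined = goal_based_evaluation(goals, results)
print(verdicts)
print(unexamined)
```

Here the collaboration result never enters the verdicts because no goal names it, which mirrors the math curriculum example above: the outcome exists, but a purely goal-based evaluation does not look at it.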

Decision-Facilitation Evaluation

This approach is designed to provide timely, relevant information to the people making curriculum decisions. Rather than just producing a final report, it feeds data to stakeholders at key decision points throughout the process.

Advantages:

- Keeps evaluation connected to real decisions, such as course sequencing changes or assessment strategy revisions

- Actively engages stakeholders like teachers, administrators, and parents in the evaluation process

Limitations:

- Can be influenced by the interests and biases of whoever holds decision-making power, whether that's administrators, school boards, or funding agencies

- Requires strong collaboration and communication among all stakeholders to work well

Expertise-Oriented Evaluation

This approach relies on subject matter experts (curriculum specialists, master teachers, or content authorities) to review and judge curriculum quality.

Advantages:

- Draws on deep professional knowledge to assess things like content accuracy, pedagogical soundness, and alignment with current research

- Can surface issues that data alone might not reveal, especially in specialized or innovative programs

Limitations:

- Inherently subjective, since conclusions depend on the qualifications and perspectives of the chosen experts

- Requires careful selection of evaluators from relevant fields to ensure credibility

Choosing and Combining Evaluation Models

No single model fits every situation. Selecting the right approach (or combination of approaches) depends on several factors:

Consider the purpose and scope:

- Use formative evaluation for ongoing development and refinement

- Use summative evaluation when you need to assess overall effectiveness and make continuation decisions

Analyze the educational context:

- The level of education (elementary, secondary, post-secondary) shapes what's practical

- Subject area matters: evaluating a STEM curriculum may require different tools than evaluating an arts program

- Institutional factors like school culture, available resources, and whether the school is public or private all affect what's feasible

Match the model to the situation:

- CIPP works well for comprehensive, large-scale curriculum reforms where you need to evaluate the full picture

- Goal-based evaluation suits targeted interventions with clearly defined objectives

- Decision-facilitation fits contexts where stakeholder involvement is central, such as community-based curricula

- Expertise-oriented evaluation is a strong choice for specialized or innovative programs that require deep content knowledge to assess

Adapt as needed. In practice, evaluators often combine elements from multiple models. You might pair a goal-based framework with expert review, or embed process evaluation from the CIPP model into a primarily decision-facilitation approach. The key is to customize your evaluation tools (observation protocols, surveys, rubrics) to fit the specific context rather than forcing a single model onto every situation.