Least Squares Estimates

Solving the Normal Equations

The normal equations give you a system of linear equations whose solution produces the least squares estimates of your regression parameters. These estimates minimize the sum of squared residuals, which is the total squared difference between observed and predicted values.

In matrix notation, the normal equations are:

$$X^\top X \hat{\beta} = X^\top y$$

where:

- $X$ is the design matrix (each row is an observation, each column is a predictor, with a column of 1s for the intercept)

- $X^\top$ is the transpose of $X$

- $y$ is the vector of observed response values

- $\beta$ is the vector of regression parameters you want to estimate, and $\hat{\beta}$ is its least squares estimate

To solve for the parameter estimates, multiply both sides on the left by $(X^\top X)^{-1}$:

$$\hat{\beta} = (X^\top X)^{-1} X^\top y$$

This formula only works when $(X^\top X)^{-1}$ exists, which requires the columns of $X$ to be linearly independent. If two predictors are perfectly collinear (one is an exact linear function of the other), $X^\top X$ is singular and you can't invert it. This is called perfect multicollinearity, and it means there's no unique solution.
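As a rough illustration of the invertibility condition, the sketch below builds a small, made-up design matrix in which one predictor is exactly twice another and checks its column rank with NumPy. The data and variable names are hypothetical and chosen only for this example.

```python
import numpy as np

# Hypothetical design matrix: intercept, x1, and x2 = 2 * x1 (perfectly collinear).
x1 = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
X_bad = np.column_stack([np.ones_like(x1), x1, 2.0 * x1])

# Full column rank would be 3; the redundant column drops it to 2,
# so X'X is singular and the normal equations have no unique solution.
rank_bad = int(np.linalg.matrix_rank(X_bad))
has_full_column_rank = rank_bad == X_bad.shape[1]

print(rank_bad, has_full_column_rank)
```

Checking the rank before solving is one simple way to detect exact collinearity. Near-collinearity, where the columns are almost but not exactly dependent, needs other diagnostics.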

The matrix approach is especially efficient when you have many predictors. Instead of solving for each coefficient one at a time, you get the entire vector $\hat{\beta}$ in a single matrix operation.
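A minimal NumPy sketch of that single matrix operation is shown here. It assumes a tiny, noise-free dataset generated from y = 1 + 2x (an invented example, so the fit should recover those values), and it solves the linear system rather than forming the inverse explicitly, which is usually the more numerically stable choice.

```python
import numpy as np

# Hypothetical data: six observations generated exactly from y = 1 + 2x.
x = np.array([0.0, 1.0, 2.0, 3.0, 4.0, 5.0])
y = 1.0 + 2.0 * x

# Design matrix with a column of 1s for the intercept.
X = np.column_stack([np.ones_like(x), x])

# Solve (X'X) beta = X'y for the whole coefficient vector at once.
beta_hat = np.linalg.solve(X.T @ X, X.T @ y)

# np.linalg.lstsq minimizes the same sum of squares with an SVD-based method.
beta_lstsq, *_ = np.linalg.lstsq(X, y, rcond=None)

print(beta_hat, beta_lstsq)
```

Both routes should agree here. For poorly conditioned design matrices, a least squares routine such as `np.linalg.lstsq` is generally the safer option.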

Interpreting the Estimates

Each element in the vector $\hat{\beta}$ corresponds to a specific parameter in your model:

- The intercept estimate $\hat{\beta}_0$ is the expected value of the response when all predictors equal zero. This is only meaningful if zero is a realistic value for your predictors. For example, if a predictor is height in centimeters, an intercept at height = 0 has no practical meaning.

- Each slope coefficient $\hat{\beta}_j$ represents the expected change in the response for a one-unit increase in predictor $j$, holding all other predictors constant.

The sign of a coefficient tells you the direction of the relationship: positive means the response tends to increase as the predictor increases, and negative means the opposite. The magnitude reflects the size of the change per unit of the predictor, so always interpret it alongside the units of measurement. A coefficient of 0.001 can still represent a strong effect if the predictor is measured in single dollars and typically varies by thousands of dollars.
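The dependence of a coefficient's magnitude on units can be sketched by fitting the same made-up data twice, once with the predictor in dollars and once in thousands of dollars. The income values and the generating relationship y = 5 + 0.001 × income are assumptions for illustration only.

```python
import numpy as np

def fit_ols(X, y):
    """Least squares coefficients via NumPy's SVD-based solver."""
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef

# Hypothetical predictor in dollars; response generated as y = 5 + 0.001 * income.
income_dollars = np.array([20000.0, 35000.0, 50000.0, 65000.0, 80000.0])
y = 5.0 + 0.001 * income_dollars

X_dollars = np.column_stack([np.ones_like(income_dollars), income_dollars])
X_thousands = np.column_stack([np.ones_like(income_dollars), income_dollars / 1000.0])

# Same data, same fitted values, different units for the slope.
slope_per_dollar = fit_ols(X_dollars, y)[1]
slope_per_thousand = fit_ols(X_thousands, y)[1]

print(slope_per_dollar, slope_per_thousand)
```

The two slopes describe the same relationship. Rescaling the predictor by 1,000 rescales the coefficient by 1,000 in the opposite direction, which is why a raw magnitude says little without its units.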

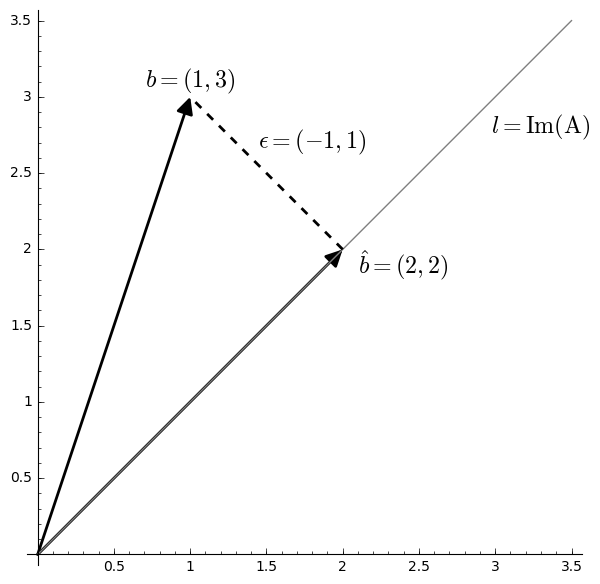

Fitted Values and Residuals

Calculating Fitted Values and Residuals

Once you have $\hat{\beta}$, you can compute two key quantities:

Fitted values are the model's predictions for each observation:

$$\hat{y} = X \hat{\beta}$$

Residuals are the differences between what you observed and what the model predicted:

$$e = y - \hat{y}$$

Each residual $e_i$ tells you how far off the model was for observation $i$. Positive residuals mean the model underpredicted; negative residuals mean it overpredicted.

The sum of squared residuals (also called the residual sum of squares, written here as $\mathrm{RSS}$) compresses all residuals into a single measure of model fit:

$$\mathrm{RSS} = \sum_{i=1}^{n} e_i^2 = e^\top e$$

This is the quantity that least squares estimation minimizes. A smaller $\mathrm{RSS}$ means the model's predictions are closer to the observed data overall.
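These quantities can be sketched in a few lines of NumPy. The four data points below are invented for the example, and the variable names are arbitrary.

```python
import numpy as np

# Hypothetical data: four observations, one predictor.
x = np.array([1.0, 2.0, 3.0, 4.0])
y = np.array([2.0, 4.0, 5.0, 8.0])
X = np.column_stack([np.ones_like(x), x])

# Least squares estimates from the normal equations.
beta_hat = np.linalg.solve(X.T @ X, X.T @ y)

# Fitted values, residuals, and the residual sum of squares e'e.
y_hat = X @ beta_hat
residuals = y - y_hat
rss = residuals @ residuals

print(y_hat, residuals, rss)
```

When the model includes an intercept, the residuals sum to zero and are orthogonal to every column of the design matrix, which makes a handy sanity check on the computation.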

Computational Efficiency

The matrix formulation makes follow-up calculations straightforward. For example, the coefficient of determination $R^2$ can be computed directly from the residuals:

$$R^2 = 1 - \frac{\mathrm{RSS}}{\mathrm{TSS}}$$

where $\mathrm{RSS} = e^\top e$ is the residual sum of squares and $\mathrm{TSS} = \sum_{i=1}^{n} (y_i - \bar{y})^2$ is the total sum of squares (the total variability in $y$). Because $\mathrm{RSS}$ is already available from $e$, you don't need to recompute anything from scratch. The same applies to diagnostic tests and other goodness-of-fit measures.
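As a small sketch of this calculation, the code below reuses a made-up four-point dataset, computes the residuals once, and derives the coefficient of determination from them. The data values are assumptions for illustration.

```python
import numpy as np

# Hypothetical data: four observations, one predictor.
x = np.array([1.0, 2.0, 3.0, 4.0])
y = np.array([2.0, 4.0, 5.0, 8.0])
X = np.column_stack([np.ones_like(x), x])

beta_hat = np.linalg.solve(X.T @ X, X.T @ y)
residuals = y - X @ beta_hat

# Residual and total sums of squares.
rss = residuals @ residuals
tss = np.sum((y - y.mean()) ** 2)

# Coefficient of determination: share of variability in y explained by the model.
r_squared = 1.0 - rss / tss

print(r_squared)
```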

Properties of Estimators

Desirable Properties

The least squares estimators from the matrix approach have three properties that make them the standard choice in linear regression:

- Unbiasedness: On average, the estimator hits the true parameter value. Formally, $E[\hat{\beta}] = \beta$. This means there's no systematic tendency to overestimate or underestimate.

- Consistency: As your sample size grows, $\hat{\beta}$ converges in probability to the true $\beta$. Larger samples give you estimates that are increasingly reliable.

- Efficiency (BLUE): Among all estimators that are both linear and unbiased, least squares has the smallest variance. This is the Gauss-Markov theorem, and it holds when the errors are uncorrelated, have constant variance (homoscedasticity), and have mean zero. "BLUE" stands for Best Linear Unbiased Estimator.
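Unbiasedness can be illustrated, though not proven, with a small simulation: generate many datasets from a known model, estimate the coefficients each time, and average the estimates. The true coefficients, sample size, error distribution, and seed below are all assumptions chosen for this sketch.

```python
import numpy as np

rng = np.random.default_rng(0)

# Assumed "true" model for the simulation: y = 1 + 2x + error.
beta_true = np.array([1.0, 2.0])
n, n_sims = 50, 2000

# Fixed design matrix reused across simulated datasets.
x = np.linspace(0.0, 1.0, n)
X = np.column_stack([np.ones(n), x])

estimates = np.empty((n_sims, 2))
for s in range(n_sims):
    # Mean-zero, constant-variance, independent errors.
    errors = rng.normal(0.0, 1.0, size=n)
    y = X @ beta_true + errors
    estimates[s] = np.linalg.solve(X.T @ X, X.T @ y)

# Averaging over many samples should land close to the true coefficients.
mean_estimate = estimates.mean(axis=0)

print(mean_estimate)
```

Individual estimates still scatter around the truth; it is only their average that settles near `beta_true`.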

Normality and Assumptions

If you additionally assume the errors are normally distributed, then $\hat{\beta}$ is also normally distributed. This is what allows you to construct confidence intervals and run hypothesis tests (t-tests, F-tests) with exact distributions rather than relying solely on large-sample approximations.
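The ingredients of a t-test can be sketched as follows, assuming the classical result that the covariance of the estimates is the error variance times $(X^\top X)^{-1}$, with the error variance estimated from the residuals divided by the residual degrees of freedom. The dataset is invented, and the final comparison against a t distribution is left out because it needs a quantile function that NumPy does not provide.

```python
import numpy as np

# Hypothetical data: four observations, one predictor.
x = np.array([1.0, 2.0, 3.0, 4.0])
y = np.array([2.0, 4.0, 5.0, 8.0])
X = np.column_stack([np.ones_like(x), x])
n, p = X.shape

# The inverse is formed explicitly here because it is needed for the covariance.
XtX_inv = np.linalg.inv(X.T @ X)
beta_hat = XtX_inv @ X.T @ y
residuals = y - X @ beta_hat

# Estimated error variance, using n - p degrees of freedom.
sigma2_hat = residuals @ residuals / (n - p)

# Standard errors are the square roots of the diagonal of the covariance matrix.
std_errors = np.sqrt(np.diag(sigma2_hat * XtX_inv))

# t statistics for the null hypothesis that each coefficient equals zero.
t_stats = beta_hat / std_errors

print(std_errors, t_stats)
```

Each t statistic would then be compared with a t distribution on n − p degrees of freedom to obtain a p-value or confidence interval.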

All of these properties depend on the assumptions of the classical linear regression model:

- Linearity: The true relationship between predictors and response is linear in the parameters

- Independence: The error terms are independent of each other

- Homoscedasticity: The error variance is constant across all observations

- Normality: The errors follow a normal distribution (needed for exact inference, not for BLUE)

When these assumptions break down, the estimators may lose their desirable properties. For instance, heteroscedasticity (non-constant error variance) doesn't bias $\hat{\beta}$, but it does invalidate the usual standard errors. Common remedies include using robust (heteroscedasticity-consistent) standard errors or applying variance-stabilizing transformations to the variables.
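One common form of robust standard error is White's HC0 "sandwich" estimator, sketched below in plain NumPy. The helper name `hc0_standard_errors` and the small dataset are hypothetical, and this is only one of several heteroscedasticity-consistent variants, not necessarily the one a given software package uses by default.

```python
import numpy as np

def hc0_standard_errors(X, residuals):
    """White (HC0) standard errors: sqrt of diag of (X'X)^-1 X' diag(e^2) X (X'X)^-1."""
    XtX_inv = np.linalg.inv(X.T @ X)
    # Middle of the sandwich, X' diag(e_i^2) X, without building the diagonal matrix.
    meat = X.T @ (X * residuals[:, None] ** 2)
    cov = XtX_inv @ meat @ XtX_inv
    return np.sqrt(np.diag(cov))

# Hypothetical data: four observations, one predictor.
x = np.array([1.0, 2.0, 3.0, 4.0])
y = np.array([2.0, 4.0, 5.0, 8.0])
X = np.column_stack([np.ones_like(x), x])

# The coefficient estimates themselves are unchanged; only their standard errors differ.
beta_hat = np.linalg.solve(X.T @ X, X.T @ y)
residuals = y - X @ beta_hat
robust_se = hc0_standard_errors(X, residuals)

print(robust_se)
```

With only four observations the numbers are purely illustrative; robust standard errors rely on large-sample arguments and are most trustworthy with many observations.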