Exponential family of distributions

Definition and properties

The exponential family is a broad class of probability distributions that share a common mathematical form. This shared structure is what makes GLMs possible: because these distributions all follow the same template, you can build a single modeling framework that handles normal, binomial, Poisson, and many other response types.

A distribution belongs to the exponential family if its density (or mass) function can be written as:

$$f(y \mid \eta) = h(y)\,\exp\{\eta^\top T(y) - A(\eta)\}$$

Each piece of this formula has a specific role:

- $\eta$ is the natural (canonical) parameter, a reparameterization of the original distribution parameter(s) that puts the density into this standard form.

- $T(y)$ is the sufficient statistic, a function of the data that captures all the information the data contain about $\eta$.

- $h(y)$ is the base measure, a term that depends only on the data, not on $\eta$, and acts as a weight on each value of $y$.

- $A(\eta)$ is the log-partition function (also called the cumulant function). It ensures the density integrates (or sums) to 1, and it turns out to be the key to deriving moments.

Two properties worth highlighting:

- Sufficiency. Because $T(y)$ is sufficient, you don't lose any information about $\eta$ by reducing an entire dataset of independent observations $y_1, \dots, y_n$ to $\sum_{i=1}^{n} T(y_i)$. This connects directly to the factorization theorem: a statistic is sufficient if and only if the joint density factors into one part that depends on the parameter and on the data only through that statistic, and another part that depends only on the data.

- Moments from derivatives. The mean and variance of $T(Y)$ can be read off from derivatives of $A(\eta)$, with no integration required. (More on this below.)

The family includes both discrete and continuous distributions. Which specific distribution you get depends on the choices of $\eta$, $T(y)$, $h(y)$, and $A(\eta)$.

Versatility and applicability

Many of the distributions you already know belong to this family:

- Normal (Gaussian)

- Binomial

- Poisson

- Gamma

- Beta, Exponential, Geometric, Negative Binomial

Because they all share the same canonical form, a single estimation and inference pipeline (maximum likelihood, score equations, Fisher information) works across all of them. That's exactly the idea behind GLMs.

Common distributions in the exponential family

For each distribution below, the components refer to the canonical form $f(y \mid \eta) = h(y)\,\exp\{\eta^\top T(y) - A(\eta)\}$. Working through these mappings is the best way to build intuition for the general formula.

Normal (Gaussian) distribution

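The normal distribution has two natural parameters because it has two unknown quantities ($\mu$ and $\sigma^2$):

- Natural parameter: $\eta = (\eta_1, \eta_2) = \left(\frac{\mu}{\sigma^2},\; -\frac{1}{2\sigma^2}\right)$

- Sufficient statistic: $T(y) = (y,\; y^2)$

- Base measure: $h(y) = \frac{1}{\sqrt{2\pi}}$

- Log-partition function: $A(\eta) = -\frac{\eta_1^2}{4\eta_2} - \frac{1}{2}\log(-2\eta_2) = \frac{\mu^2}{2\sigma^2} + \log\sigma$

Notice how the sufficient statistic is a vector here. For a sample of size $n$, you only need $\sum_i y_i$ and $\sum_i y_i^2$ to estimate both parameters.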
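To see where a mapping of this kind comes from, here is one way to rearrange the usual normal density into the canonical form $h(y)\exp\{\eta^\top T(y) - A(\eta)\}$. This is a sketch of the algebra under the standard $(\mu, \sigma^2)$ parameterization; other equivalent ways of splitting terms between $h(y)$ and $A(\eta)$ are possible.

```latex
\begin{aligned}
f(y \mid \mu, \sigma^2)
  &= \frac{1}{\sqrt{2\pi\sigma^2}}
     \exp\!\left\{-\frac{(y-\mu)^2}{2\sigma^2}\right\} \\
  &= \frac{1}{\sqrt{2\pi}}
     \exp\!\left\{\frac{\mu}{\sigma^2}\,y
       - \frac{1}{2\sigma^2}\,y^2
       - \left(\frac{\mu^2}{2\sigma^2} + \log\sigma\right)\right\}
\end{aligned}
```

Reading off the exponent, the coefficients of $y$ and $y^2$ are the two natural parameters, and the remaining term in parentheses is the log-partition function written in terms of $\mu$ and $\sigma$.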

Binomial and Poisson distributions

Binomial (with fixed $n$ and success probability $p$):

- Natural parameter: $\eta = \log\frac{p}{1-p}$ (the log-odds)

- Sufficient statistic: $T(y) = y$

- Base measure: $h(y) = \binom{n}{y}$

- Log-partition function: $A(\eta) = n\log(1 + e^{\eta}) = -n\log(1-p)$

The natural parameter here is the logit of $p$. This is why logistic regression uses a logit link: it connects the linear predictor directly to the canonical parameter.
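As a quick numerical check of the binomial mapping, the sketch below evaluates the canonical form $h(y)\exp\{\eta\,T(y) - A(\eta)\}$ and compares it with the textbook pmf. The function names and the values $n = 10$, $p = 0.3$ are illustrative choices, not part of the original text.

```python
import math

def binomial_pmf_canonical(y, n, p):
    """Binomial pmf written as h(y) * exp(eta * T(y) - A(eta))."""
    eta = math.log(p / (1.0 - p))       # natural parameter: the log-odds
    T = y                               # sufficient statistic
    h = math.comb(n, y)                 # base measure
    A = n * math.log1p(math.exp(eta))   # log-partition function
    return h * math.exp(eta * T - A)

def binomial_pmf_direct(y, n, p):
    """Textbook form: C(n, y) * p^y * (1 - p)^(n - y)."""
    return math.comb(n, y) * p**y * (1.0 - p) ** (n - y)

n, p = 10, 0.3  # illustrative values

# Largest disagreement between the two forms over the whole support.
max_gap = max(
    abs(binomial_pmf_canonical(y, n, p) - binomial_pmf_direct(y, n, p))
    for y in range(n + 1)
)
# The canonical form should still sum to 1 over y = 0, ..., n.
total = sum(binomial_pmf_canonical(y, n, p) for y in range(n + 1))
print(max_gap, total)
```

The two forms should agree up to floating-point rounding, which confirms that the log-partition term is doing the normalizing.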

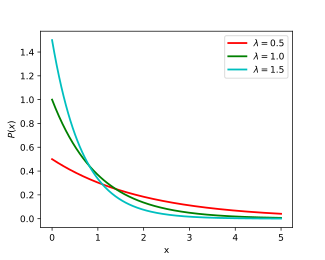

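Poisson (with rate $\lambda$):

- Natural parameter: $\eta = \log\lambda$

- Sufficient statistic: $T(y) = y$

- Base measure: $h(y) = \frac{1}{y!}$

- Log-partition function: $A(\eta) = e^{\eta} = \lambda$

Similarly, the natural parameter is $\log\lambda$, which is why Poisson regression defaults to a log link.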

Gamma and other distributions

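Gamma (with shape $\alpha$ and rate $\beta$):

- Natural parameter: $\eta = (\eta_1, \eta_2) = (\alpha - 1,\; -\beta)$

- Sufficient statistic: $T(y) = (\log y,\; y)$

- Base measure: $h(y) = 1$

- Log-partition function: $A(\eta) = \log\Gamma(\eta_1 + 1) - (\eta_1 + 1)\log(-\eta_2) = \log\Gamma(\alpha) - \alpha\log\beta$

Other exponential-family members (Beta, Exponential, Geometric, Negative Binomial) each have their own specific mappings for $\eta$, $T(y)$, $h(y)$, and $A(\eta)$. The procedure for deriving them is always the same: start from the standard density, algebraically rearrange it into the canonical form, and read off the components.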
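As an illustration of that procedure, here is a short worked example for the Exponential distribution, assuming the rate parameterization with rate $\lambda > 0$ (a different parameterization would give a different but equivalent mapping).

```latex
\begin{aligned}
f(y \mid \lambda) &= \lambda\, e^{-\lambda y}, \qquad y \ge 0 \\
                  &= 1 \cdot \exp\{(-\lambda)\, y - (-\log\lambda)\}
\end{aligned}

\eta = -\lambda, \qquad T(y) = y, \qquad h(y) = 1, \qquad
A(\eta) = -\log(-\eta) = -\log\lambda
```

A useful sanity check is that $A'(\eta) = -1/\eta = 1/\lambda$, which is the familiar mean of the Exponential distribution.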

Natural parameters and sufficient statistics

Role in exponential family distributions

Natural parameters are not just an arbitrary reparameterization. They're chosen so that the distribution takes the clean canonical form shown above. In that form, the natural parameter and the sufficient statistic appear together as a dot product in the exponent. This pairing is what gives the exponential family its analytical tractability.

Sufficient statistics compress the data without losing information about the parameter. For example, if you have $n$ observations from a Poisson distribution, the single number $\sum_{i=1}^{n} y_i$ is sufficient for $\lambda$. You could throw away the individual observations and still estimate $\lambda$ just as well.
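The Poisson claim can be checked numerically. In the sketch below, two made-up samples share the same size and the same total, so their log-likelihoods should differ only by a constant that does not involve the rate. The sample values and the helper name `poisson_loglik` are illustrative assumptions.

```python
import math

def poisson_loglik(data, lam):
    """Poisson log-likelihood: sum of y*log(lam) - lam - log(y!)."""
    return sum(y * math.log(lam) - lam - math.lgamma(y + 1) for y in data)

# Two hypothetical samples with the same size (4) and the same total (12).
sample_a = [0, 2, 4, 6]
sample_b = [3, 3, 3, 3]

rates = [0.5, 1.0, 3.0, 7.5]
gaps = [poisson_loglik(sample_a, lam) - poisson_loglik(sample_b, lam)
        for lam in rates]

# The gap comes only from the log(y!) terms, so it should not change with lam.
spread = max(gaps) - min(gaps)

# Both samples therefore lead to the same maximum likelihood estimate.
mle_a = sum(sample_a) / len(sample_a)
mle_b = sum(sample_b) / len(sample_b)
print(gaps, spread, mle_a, mle_b)
```

Because the gap is constant in the rate, the two likelihood curves have the same shape and the same maximizer, which is what sufficiency of the total means in practice.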

Relationship between natural parameters and sufficient statistics

The natural parameter and sufficient statistic are tightly coupled:

- They always appear multiplied together in the exponent of the canonical form.

- Changing the natural parameter changes which member of the exponential family you're working with; the sufficient statistic tells you what function of the data is relevant for that parameter.

For the normal distribution, the natural parameters are functions of $\mu$ and $\sigma^2$, while the sufficient statistics are $y$ and $y^2$. For the Poisson, the natural parameter is $\log\lambda$ and the sufficient statistic is simply $y$.

Importance in inference and modeling

These properties make estimation straightforward:

- Maximum likelihood estimation: The MLE for exponential family distributions reduces to matching the expected sufficient statistic to the observed sufficient statistic. That is, you solve $\mathbb{E}_{\hat\eta}[T(Y)] = \frac{1}{n}\sum_{i=1}^{n} T(y_i)$ for $\hat\eta$.

- Bayesian inference: Conjugate priors exist naturally for exponential family likelihoods, which simplifies posterior computation.
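The moment-matching view of maximum likelihood can be sketched in a few lines. The example below solves $A'(\eta) = \bar{t}$ for a scalar natural parameter with Newton's method, using the Poisson case where $A(\eta) = e^{\eta}$. Newton's method, the function name `fit_natural_parameter`, and the data are illustrative choices here, not something prescribed by the text.

```python
import math

def fit_natural_parameter(t_bar, A_prime, A_double_prime,
                          eta0=0.0, tol=1e-12, max_iter=100):
    """Solve A'(eta) = t_bar for a scalar natural parameter by Newton's method."""
    eta = eta0
    for _ in range(max_iter):
        # Newton step on g(eta) = A'(eta) - t_bar, whose derivative is A''(eta).
        step = (A_prime(eta) - t_bar) / A_double_prime(eta)
        eta -= step
        if abs(step) < tol:
            break
    return eta

counts = [2, 0, 3, 1, 4, 2]          # hypothetical Poisson counts
t_bar = sum(counts) / len(counts)    # observed mean of T(y) = y

# For the Poisson, A(eta) = exp(eta), so A' and A'' are both exp.
eta_hat = fit_natural_parameter(t_bar, A_prime=math.exp, A_double_prime=math.exp)
lam_hat = math.exp(eta_hat)
print(eta_hat, lam_hat)
```

For the Poisson the answer is available in closed form (the fitted rate is the sample mean), so the iteration is only there to show the general recipe that applies when no closed form exists.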

This is a big part of why GLMs work so well in practice. With the canonical link, the exponential family structure makes the log-likelihood concave in the regression coefficients, so the score equations are well-behaved and iterative fitting algorithms (like IRLS) typically converge reliably.

Mean and variance of exponential family distributions

Deriving the mean

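One of the most useful results: the mean of the sufficient statistic equals the first derivative of the log-partition function with respect to the natural parameter.

$$\mathbb{E}[T(Y)] = \frac{dA(\eta)}{d\eta} = A'(\eta)$$

To see this in action:

- Poisson: $A(\eta) = e^{\eta}$, so $\mathbb{E}[Y] = A'(\eta) = e^{\eta} = \lambda$. You recover the rate parameter directly.

- Normal: with $\eta_1 = \mu/\sigma^2$ and $\eta_2 = -1/(2\sigma^2)$, $\frac{\partial A}{\partial \eta_1} = -\frac{\eta_1}{2\eta_2} = \mu$. You recover the location parameter.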
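The reason this works can be sketched in two lines for a scalar natural parameter, assuming differentiation under the integral sign is allowed (it is in the interior of the natural parameter space). For discrete distributions, replace the integrals with sums.

```latex
\begin{aligned}
A(\eta)  &= \log \int h(y)\, e^{\eta T(y)}\, dy \\
A'(\eta) &= \frac{\int T(y)\, h(y)\, e^{\eta T(y)}\, dy}
                 {\int h(y)\, e^{\eta T(y)}\, dy}
          = \int T(y)\, h(y)\, e^{\eta T(y) - A(\eta)}\, dy
          = \mathbb{E}[T(Y)]
\end{aligned}
```

The first line is just the statement that the density integrates to 1, solved for $A(\eta)$. The second line differentiates it and recognizes the density inside the integral.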

Deriving the variance

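Take one more derivative and you get the variance:

$$\operatorname{Var}[T(Y)] = \frac{d^2A(\eta)}{d\eta^2} = A''(\eta)$$

- Poisson: $\operatorname{Var}[Y] = A''(\eta) = e^{\eta} = \lambda$. The variance equals the mean, which is the defining equidispersion property of the Poisson.

- Normal: with $\eta_2 = -1/(2\sigma^2)$, $\frac{\partial^2 A}{\partial \eta_1^2} = -\frac{1}{2\eta_2} = \sigma^2$.

Because the second derivative of $A(\eta)$ is a variance, and variances are non-negative, $A$ is always convex in $\eta$. That convexity is what makes the log-likelihood concave in the natural parameter.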
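Both derivative results can be checked numerically without any integration or summation. The sketch below applies central finite differences to the binomial log-partition function $A(\eta) = n\log(1 + e^{\eta})$ and compares the results with the standard binomial mean $np$ and variance $np(1-p)$. The step sizes and the values $n = 10$, $p = 0.3$ are illustrative assumptions.

```python
import math

def first_derivative(f, x, h=1e-5):
    """Central finite-difference approximation to f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)

def second_derivative(f, x, h=1e-4):
    """Central finite-difference approximation to f''(x)."""
    return (f(x + h) - 2.0 * f(x) + f(x - h)) / (h * h)

n, p = 10, 0.3                     # illustrative binomial parameters
eta = math.log(p / (1.0 - p))      # natural parameter: the log-odds

def A_binomial(e):
    """Binomial log-partition function, A(eta) = n * log(1 + exp(eta))."""
    return n * math.log1p(math.exp(e))

mean_numeric = first_derivative(A_binomial, eta)
var_numeric = second_derivative(A_binomial, eta)

print(mean_numeric, n * p)               # numeric A'(eta) next to n*p
print(var_numeric, n * p * (1.0 - p))    # numeric A''(eta) next to n*p*(1-p)
```

The finite-difference values are approximations, so expect agreement to several decimal places rather than exact equality.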

Power of the exponential family representation

The log-partition function acts as a cumulant-generating device. Differentiating it once gives the mean; differentiating it twice gives the variance. Higher cumulants follow from higher derivatives.

This eliminates the need for explicit integration or summation to compute moments. For GLM theory specifically, the relationship $V(\mu) = A''(\eta)$, with $\eta$ expressed in terms of the mean $\mu = A'(\eta)$, is what defines the variance function, which in turn determines how the variance of the response relates to its mean. That connection is central to how GLMs handle non-constant variance across different distribution families.
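Two short worked cases show how a variance function falls out of the log-partition function. The Bernoulli case is the binomial with $n = 1$; the notation $V(\mu)$ for the variance function follows common GLM usage and is an assumption about the intended symbol.

```latex
\text{Poisson:}\quad
A(\eta) = e^{\eta}, \qquad
\mu = A'(\eta) = e^{\eta}, \qquad
V(\mu) = A''(\eta) = e^{\eta} = \mu

\text{Bernoulli:}\quad
A(\eta) = \log(1 + e^{\eta}), \qquad
\mu = A'(\eta) = \frac{e^{\eta}}{1 + e^{\eta}}, \qquad
V(\mu) = A''(\eta) = \frac{e^{\eta}}{(1 + e^{\eta})^{2}} = \mu(1 - \mu)
```

In both cases the variance is a fixed function of the mean, which is why a GLM with one of these families has no freedom to model the variance separately from the mean.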