Multiple Comparisons in ANOVA

The Need for Multiple Comparison Procedures

A significant ANOVA result tells you that at least one group mean differs from the others, but it doesn't tell you which groups differ or in what direction. The natural next step is to run pairwise comparisons between groups. The problem is that each comparison carries its own chance of a Type I error (rejecting a true null hypothesis), and those chances accumulate.

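This accumulation is called the familywise error rate: the probability of making at least one Type I error across all comparisons in a family of tests. For example, with 4 groups you have 6 pairwise comparisons. If each uses $\alpha = 0.05$, the probability of at least one false positive across all six is considerably higher than 0.05. If the six tests were independent, it would be $1 - (1 - 0.05)^6 \approx 0.26$. Pairwise comparisons share groups, so this figure is only an approximation.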
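The arithmetic behind that claim takes only a few lines. This minimal sketch treats the comparisons as independent, which pairwise tests on shared groups are not, so the numbers are approximate. `familywise_error_rate` is a hypothetical helper name.

```python
from math import comb

def familywise_error_rate(k_groups, alpha=0.05):
    """Return (number of pairwise comparisons, P(at least one false positive)).

    Assumes independent comparisons, so treat the probability as an approximation.
    """
    m = comb(k_groups, 2)  # k(k-1)/2 pairwise comparisons
    return m, 1 - (1 - alpha) ** m

for k in (3, 4, 6, 10):
    m, fwer = familywise_error_rate(k)
    print(f"{k} groups: {m} comparisons, familywise error rate ~ {fwer:.3f}")
```

For 4 groups this gives 6 comparisons and a rate of about 0.265. The rate climbs quickly as groups are added, because the number of pairs grows roughly with the square of $k$.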

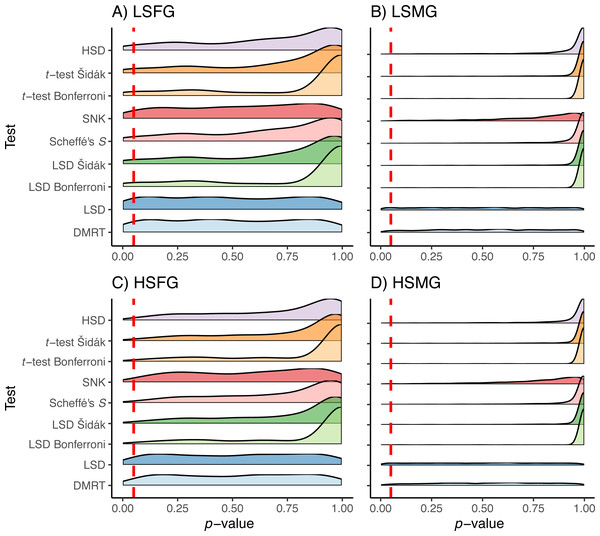

Multiple comparison procedures (also called post-hoc tests) address this by adjusting for the number of comparisons, so that the familywise error rate stays at or below your chosen $\alpha$. The trade-off is that tighter error control means less statistical power to detect real differences.

Factors Influencing the Choice of Procedure

- Number of groups. Some procedures handle many groups well (Tukey's HSD), while others become overly conservative as the number of comparisons grows (Bonferroni correction).

- Sample sizes. The original Tukey's HSD assumes equal (or approximately equal) group sizes. The Tukey-Kramer modification extends it to unequal sizes, and Scheffé's test and the Games-Howell test also handle unequal sample sizes.

- Homogeneity of variances. If group variances are substantially different, procedures that assume equal variances can give misleading results. The Games-Howell test does not require this assumption.

- Desired balance between power and error control. Conservative procedures (Bonferroni) prioritize keeping Type I errors low but may miss real differences. Less conservative procedures (Tukey's HSD) offer more power but accept slightly more Type I error risk.

Post-Hoc Tests for Pairwise Comparisons

Tukey's Honestly Significant Difference (HSD) Test

Tukey's HSD is the most commonly used post-hoc test when you want to compare every group to every other group. It controls the familywise error rate across all pairwise comparisons simultaneously.

How it works:

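- Look up the critical value $q_{\alpha}(k, df_E)$ of the studentized range distribution. It depends on the number of groups ($k$) and on the error degrees of freedom from the ANOVA ($df_E = N - k$, where $N$ is the total sample size).

- Use $q$ to compute the critical difference $HSD = q_{\alpha}(k, df_E)\sqrt{MSE / n}$, where $MSE$ is the mean square error and $n$ is the per-group sample size.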

- For each pair of groups, compute the absolute difference between their sample means.

- If the absolute mean difference exceeds the HSD critical value, that pair is statistically significant.
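The four steps above can be sketched in plain Python for equal group sizes. The data are made up for illustration, and `tukey_hsd` is a hypothetical helper. The critical value `q_crit` must be looked up separately, either from a studentized range table or with `scipy.stats.studentized_range.ppf` (SciPy 1.7 or later). The value 3.77 is the tabled value for $\alpha = 0.05$, $k = 3$, $df_E = 12$.

```python
import math
from itertools import combinations

def tukey_hsd(groups, q_crit):
    """Tukey HSD pairwise comparisons for groups of equal size.

    groups: dict of group name -> list of observations
    q_crit: critical studentized range value q_alpha(k, df_E), looked up separately
    """
    sizes = {len(obs) for obs in groups.values()}
    if len(sizes) != 1:
        raise ValueError("this sketch assumes equal group sizes")
    n = sizes.pop()
    k = len(groups)
    means = {name: sum(obs) / n for name, obs in groups.items()}

    # Pooled within-group sum of squares gives the mean square error
    ss_within = sum((x - means[name]) ** 2
                    for name, obs in groups.items() for x in obs)
    df_error = k * n - k  # N - k
    mse = ss_within / df_error

    # Critical difference: any |mean_i - mean_j| above this is significant
    hsd = q_crit * math.sqrt(mse / n)

    results = []
    for a, b in combinations(groups, 2):
        diff = abs(means[a] - means[b])
        results.append((a, b, diff, diff > hsd))
    return mse, df_error, hsd, results

# Hypothetical data: three groups, five observations each
data = {
    "A": [10, 12, 11, 13, 14],
    "B": [15, 17, 16, 18, 14],
    "C": [11, 13, 12, 10, 14],
}
mse, df_error, hsd, results = tukey_hsd(data, q_crit=3.77)
print(f"MSE = {mse}, df = {df_error}, HSD = {hsd:.3f}")
for a, b, diff, significant in results:
    print(f"{a} vs {b}: |diff| = {diff:.1f}, significant = {significant}")
```

With these numbers, $MSE = 2.5$ and $HSD = 3.77\sqrt{2.5/5} \approx 2.67$. The A vs B and B vs C differences (4 points each) exceed the critical value, so those pairs are significant. The A vs C difference is 0, so that pair is not.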

Tukey's HSD assumes equal sample sizes and homogeneity of variances. When these assumptions are violated, consider alternatives like the Games-Howell test.

Bonferroni Correction

The Bonferroni correction is the simplest approach to controlling the familywise error rate. You divide your desired $\alpha$ by the total number of comparisons ($m$):

$$\alpha_{\text{adj}} = \frac{\alpha}{m}$$

For example, with 6 pairwise comparisons and $\alpha = 0.05$, each individual comparison uses $\alpha_{\text{adj}} = 0.05 / 6 \approx 0.0083$. Only p-values below this threshold count as significant.
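Here is a minimal sketch of the Bonferroni rule applied to six made-up p-values. `bonferroni` is a hypothetical helper, not a library function.

```python
def bonferroni(p_values, alpha=0.05):
    """Return the per-comparison threshold alpha/m and which p-values fall below it."""
    m = len(p_values)
    threshold = alpha / m
    return threshold, [p < threshold for p in p_values]

# Hypothetical p-values from six pairwise comparisons
p_values = [0.030, 0.001, 0.200, 0.012, 0.004, 0.020]
threshold, significant = bonferroni(p_values)
print(f"threshold = {threshold:.4f}")
print(significant)
```

Only 0.001 and 0.004 fall below $0.05/6 \approx 0.0083$. An equivalent convention reports adjusted p-values $\min(1, m\,p)$ and compares them directly to $\alpha$.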

The strength of Bonferroni is its simplicity and flexibility: it works regardless of sample size balance or the number of groups. The weakness is that it becomes very conservative as the number of comparisons grows, making it harder to detect real differences.

Other Common Post-Hoc Tests

- Scheffé's test is the most conservative of the common procedures. It works with unequal sample sizes and is robust to violations of homogeneity of variances. It's best suited when you're testing complex contrasts (not just pairwise comparisons).

- Dunnett's test is designed for a specific situation: comparing each treatment group to a single control group, rather than comparing all groups to each other. This narrower focus gives it more power for that particular question.

- Holm-Bonferroni method is a step-down modification of the Bonferroni correction. It is never less powerful than Bonferroni and is usually more powerful. It ranks the p-values from smallest to largest, then compares each to a sequentially adjusted threshold. With $m$ comparisons, the smallest p-value is compared to $\alpha/m$, the next to $\alpha/(m-1)$, and so on. You stop at the first comparison that fails to reach significance; that comparison and all remaining ones are declared non-significant.
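The Holm-Bonferroni steps can be sketched as follows, using six hypothetical p-values. `holm` is an illustrative helper name, not a library API.

```python
def holm(p_values, alpha=0.05):
    """Holm-Bonferroni step-down procedure; returns reject flags in input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # indices, smallest p first
    reject = [False] * m
    for rank, i in enumerate(order):
        threshold = alpha / (m - rank)  # alpha/m, alpha/(m-1), ..., alpha/1
        if p_values[i] < threshold:
            reject[i] = True
        else:
            break  # first failure: this and all larger p-values stay non-significant
    return reject

p_values = [0.030, 0.001, 0.200, 0.012, 0.004, 0.020]
print(holm(p_values))
```

A plain Bonferroni threshold of $0.05/6 \approx 0.0083$ would flag only 0.001 and 0.004. Holm also flags 0.012, because as the third-smallest p-value it is compared to $0.05/4 = 0.0125$. It then stops at 0.020, which exceeds $0.05/3 \approx 0.0167$.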

Interpreting Post-Hoc Test Results

Statistical Significance and Direction of Differences

Post-hoc tests produce p-values and/or confidence intervals for each pairwise comparison. A statistically significant result means that, at your chosen $\alpha$ and after the multiple comparison adjustment, a difference this large between the two sample means would be unlikely if their population means were actually equal.

The direction of the difference is straightforward: compare the two sample means. If $\bar{x}_A > \bar{x}_B$ and the comparison is significant, you conclude that group A's population mean is likely higher than group B's.
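For Tukey's HSD with equal group sizes, the simultaneous confidence interval for a pair is $(\bar{x}_A - \bar{x}_B) \pm HSD$, so significance and direction can be read off together. An interval entirely above zero favors A, an interval entirely below zero favors B, and an interval containing zero is not significant. This is a minimal sketch; `pairwise_interval` and the numbers are hypothetical.

```python
def pairwise_interval(mean_a, mean_b, hsd):
    """Interval for mean_a - mean_b (equal-n Tukey form) and the direction it supports."""
    diff = mean_a - mean_b
    lower, upper = diff - hsd, diff + hsd
    if lower > 0:
        direction = "A higher than B"
    elif upper < 0:
        direction = "B higher than A"
    else:
        direction = "no significant difference"
    return (lower, upper), direction

print(pairwise_interval(16.0, 12.0, hsd=2.5))  # interval (1.5, 6.5)
print(pairwise_interval(12.0, 13.0, hsd=2.5))  # interval (-3.5, 1.5)
```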

Non-Significant Differences and Practical Significance

A non-significant pairwise comparison does not prove the two groups are equal. It means there's insufficient evidence to conclude they differ at the chosen level. This distinction matters: absence of evidence is not evidence of absence.

Beyond statistical significance, always consider practical significance. A difference of 0.5 points on a 100-point scale might be statistically significant with large samples, but it may not matter in any real-world sense. Think about whether the size of the difference is meaningful for your research question, not just whether the p-value cleared a threshold.
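The following rough sketch shows how a trivial difference can still clear the significance bar. It uses hypothetical numbers: a 0.5-point difference, with the within-group standard deviation assumed to be 10. The test is a large-sample two-sided z-test, which is a normal approximation and not any specific post-hoc procedure. The standardized difference `d` (difference divided by the standard deviation) is one common way to express size. The helper name is hypothetical.

```python
import math

def significance_vs_size(diff, sd, n_per_group):
    """Two-sided z-test p-value and standardized difference for two group means."""
    se = sd * math.sqrt(2 / n_per_group)  # standard error of the difference
    z = diff / se
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal p-value
    d = diff / sd                         # difference in standard-deviation units
    return z, p, d

for n in (50, 20_000):
    z, p, d = significance_vs_size(diff=0.5, sd=10, n_per_group=n)
    print(f"n = {n}: z = {z:.2f}, p = {p:.2g}, d = {d:.2f}")
```

The difference and its size in SD units ($d = 0.05$) are identical in both runs. Only the sample size changes, yet with 20,000 observations per group the p-value falls far below 0.05.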

Multiple Comparison Procedures: Trade-offs

Balancing Type I Error Control and Power

Every multiple comparison procedure makes a trade-off between two goals:

- Controlling Type I errors (not claiming differences that don't exist)

- Maintaining power (detecting differences that do exist)

More conservative procedures like Bonferroni and Scheffé keep the false positive rate very low but are more likely to miss real effects. Less conservative procedures like Tukey's HSD offer better power but accept a slightly higher risk of false positives. There's no universally "best" choice; the right procedure depends on your number of groups, sample sizes, whether assumptions are met, and how costly a false positive is relative to a missed finding.
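A small Monte Carlo sketch makes the trade-off concrete. It assumes six independent z-tests, whereas real pairwise comparisons are correlated, and it does not simulate Tukey's HSD. It compares no correction against Bonferroni, and the power run uses a hypothetical effect size of 2.5 standard errors. All names are illustrative.

```python
import math
import random

def two_sided_p(z):
    """Two-sided p-value for a standard normal test statistic."""
    return math.erfc(abs(z) / math.sqrt(2))

def simulate(shift, m=6, alpha=0.05, n_sims=20_000, seed=1):
    """Compare no correction with Bonferroni on m independent z-tests.

    shift == 0: every null is true; returns the familywise error rate
                (share of experiments with at least one rejection).
    shift > 0:  every null is false; returns average power
                (share of individual tests that reject).
    """
    rng = random.Random(seed)
    hits_unc = hits_bonf = 0
    for _ in range(n_sims):
        ps = [two_sided_p(rng.gauss(shift, 1.0)) for _ in range(m)]
        if shift == 0:
            hits_unc += any(p < alpha for p in ps)
            hits_bonf += any(p < alpha / m for p in ps)
        else:
            hits_unc += sum(p < alpha for p in ps)
            hits_bonf += sum(p < alpha / m for p in ps)
    total = n_sims if shift == 0 else n_sims * m
    return hits_unc / total, hits_bonf / total

fwer_unc, fwer_bonf = simulate(shift=0.0)
power_unc, power_bonf = simulate(shift=2.5)
print(f"FWER:  uncorrected {fwer_unc:.3f}, Bonferroni {fwer_bonf:.3f}")
print(f"Power: uncorrected {power_unc:.3f}, Bonferroni {power_bonf:.3f}")
```

Under these assumptions the uncorrected familywise error rate lands near 0.26, while Bonferroni keeps it near 0.05. The cost appears in the power run, where Bonferroni detects a noticeably smaller share of the real effects (roughly 0.45 versus 0.7).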

Assumptions and Limitations

- Tukey's HSD assumes equal group sizes and equal variances. When these don't hold, the Games-Howell test is a common alternative because it doesn't require either assumption.

- Scheffé's test is robust to assumption violations but sacrifices power for pairwise comparisons specifically.

- The Bonferroni and Holm-Bonferroni corrections make no distributional assumptions beyond those of the individual tests being corrected, which makes them broadly applicable.

Always check whether your data meet the assumptions of your chosen procedure. If they don't, either switch to a more robust alternative or note the limitation when reporting results.

Transparency and Justification

When reporting results, state which post-hoc procedure you used and why you chose it. For example: "We used the Games-Howell test because group variances were unequal (Levene's test, $p < .05$)." This kind of justification helps readers evaluate whether your conclusions are well-supported and makes your analysis reproducible.