Measuring Public Opinion

Public opinion measurement gives researchers, politicians, and journalists a way to understand what large groups of people actually think about issues. Since you can't ask every single person in a country their views, polling relies on asking a smaller group and drawing conclusions about the whole population. The accuracy of those conclusions depends heavily on how that smaller group is chosen and how the questions are asked.

Methods of Public Opinion Measurement

The most important distinction in polling is between probability sampling and nonprobability sampling. The difference comes down to whether randomness is built into the selection process.

Probability sampling draws a sample from a population using random selection. Because every member of the population has a known, nonzero chance of being chosen, researchers can generalize results to the larger population with a measurable level of confidence. There are several types:

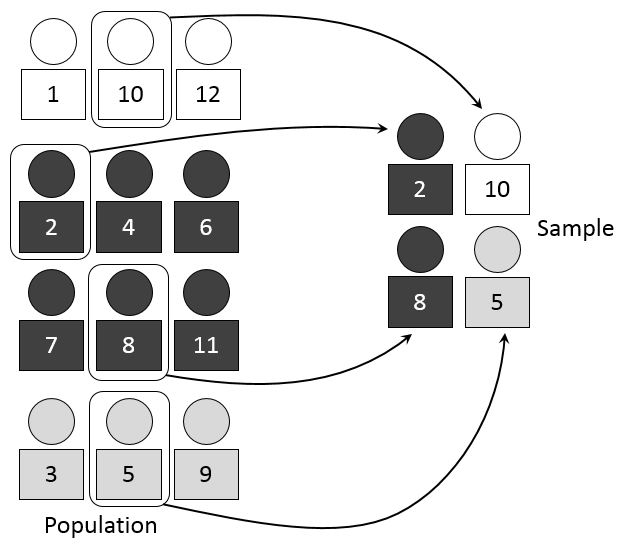

- Simple random sampling selects participants completely at random, like drawing names from a hat. Every person has an equal chance of being picked.

- Stratified random sampling divides the population into subgroups (say, by income level or age bracket) and then randomly samples within each subgroup. This ensures that key groups are represented, typically in proportion to their share of the population.

- Cluster sampling divides the population into clusters (like city blocks or school districts), randomly selects some of those clusters, and then surveys everyone (or a random sample) within the chosen clusters. This is more practical when a population is spread across a large geographic area.
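The three probability designs above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the population, its `age_group` and `district` fields, and all function names are hypothetical, and the stratified version uses proportionate allocation, which is one of several possible choices.

```python
import random
from collections import defaultdict

def simple_random_sample(population, n, rng):
    # Every person has the same chance of being drawn.
    return rng.sample(population, n)

def stratified_sample(population, stratum_of, n, rng):
    # Group people by stratum, then draw from each stratum in
    # proportion to its share of the population.
    strata = defaultdict(list)
    for person in population:
        strata[stratum_of(person)].append(person)
    sample = []
    for members in strata.values():
        # Rounding can make the total differ slightly from n in general.
        k = round(n * len(members) / len(population))
        sample.extend(rng.sample(members, k))
    return sample

def cluster_sample(population, cluster_of, n_clusters, rng):
    # Randomly pick whole clusters, then survey everyone inside them.
    clusters = defaultdict(list)
    for person in population:
        clusters[cluster_of(person)].append(person)
    chosen = rng.sample(sorted(clusters), n_clusters)
    return [person for c in chosen for person in clusters[c]]

# Hypothetical population: 1,000 people, 40% under 40, 20 districts of 50.
population = [
    {"id": i,
     "age_group": "under_40" if i % 5 < 2 else "40_plus",
     "district": i % 20}
    for i in range(1000)
]

rng = random.Random(42)  # fixed seed so the sketch is repeatable
srs = simple_random_sample(population, 100, rng)
strat = stratified_sample(population, lambda p: p["age_group"], 100, rng)
clus = cluster_sample(population, lambda p: p["district"], 4, rng)

print(len(srs), len(strat), len(clus))
```

In this sketch the stratified sample always contains exactly 40 people under 40, whereas the simple random sample only matches that share on average. The cluster sample is the easiest to field, but its respondents all come from just four districts.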

Nonprobability sampling does not involve random selection, which means results cannot be confidently generalized to the broader population. These methods are cheaper and faster, but they carry a higher risk of bias:

- Convenience sampling selects whoever is available and willing to participate (e.g., stopping people at a mall).

- Snowball sampling relies on participants to recruit others from their social networks. This is especially useful for reaching hidden or hard-to-find populations.

- Purposive sampling deliberately selects participants based on specific characteristics, such as interviewing only policy experts on a technical topic.

Nontraditional approaches have become increasingly common as technology evolves:

- Online polls and surveys administered through websites or apps (like SurveyMonkey) are inexpensive and fast, but they miss people without internet access.

- Social media analysis examines user-generated content to identify trends and sentiments, though social media users are not representative of the general public.

- Focus groups bring together small groups of people to discuss a topic in depth. They're useful for exploring why people hold certain views, but the small size means findings can't be generalized.

- In-depth interviews provide detailed insights from individual participants, offering rich qualitative data rather than broad statistical patterns.

Limitations of Polling Techniques

Every poll is an estimate, not a perfect snapshot of reality. Several types of error can distort results:

Sampling bias occurs when certain groups are overrepresented or underrepresented in the sample.

- Nonresponse bias arises when the people who respond to a survey differ in meaningful ways from those who don't. For example, busy working parents might be less likely to answer a phone poll, so their views get underrepresented.

- Volunteer bias happens when participants self-select into a study. People who choose to take a political survey, for instance, tend to be more politically engaged than the average person.

Measurement error stems from problems with the survey instrument itself or how it's administered.

- Poorly worded questions lead to confusion. A classic example is the double-barreled question, which asks about two things at once ("Do you support increased funding for schools and hospitals?"). A respondent might support one but not the other.

- Interviewer bias occurs when an interviewer's tone, phrasing, or behavior steers respondents toward certain answers.

- Social desirability bias leads people to give answers they think are more socially acceptable rather than their true opinions. This is especially common on sensitive topics like racial attitudes or drug use.

Coverage error arises when entire segments of the population are excluded from the sampling frame. If a poll is conducted only online, people without internet access (more common in rural areas and among older adults) are systematically left out.

Nonresponse error occurs when the people who decline to participate hold different opinions from those who do respond. Low response rates make this problem worse. If only 5% of people called actually complete a phone poll, there's a real risk that the 5% who responded aren't representative.
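A small calculation shows how this plays out. The sketch below assumes a hypothetical population split into two equal groups that differ in both their opinions and their willingness to respond. All figures are invented for illustration, chosen so the overall response rate is 5%.

```python
def true_support(groups):
    # Support in the full population: weight each group by its size.
    return sum(g["share"] * g["support"] for g in groups)

def observed_support(groups):
    # Support among respondents only: each group's contribution is
    # scaled by how often its members actually complete the survey.
    responders = sum(g["share"] * g["response_rate"] for g in groups)
    supporters = sum(
        g["share"] * g["response_rate"] * g["support"] for g in groups
    )
    return supporters / responders

# Hypothetical groups (not real data).
groups = [
    {"share": 0.5, "response_rate": 0.08, "support": 0.60},
    {"share": 0.5, "response_rate": 0.02, "support": 0.40},
]

overall_rate = sum(g["share"] * g["response_rate"] for g in groups)
print(f"overall response rate: {overall_rate:.0%}")          # 5%
print(f"true support:          {true_support(groups):.0%}")  # 50%
print(f"observed support:      {observed_support(groups):.0%}")  # 56%
```

Under these assumptions the poll overstates support by six points, and interviewing more people would not fix it, because the distortion comes from who responds rather than from how many.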

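Margin of error is the range around a poll's estimate within which the true population value is likely to fall, conventionally stated at a 95% confidence level. A poll reporting 52% support with a margin of error of ±3 percentage points means the true value is probably between 49% and 55%. The margin of error reflects random sampling error only; it does not capture the coverage, nonresponse, or measurement problems described above. Smaller sample sizes produce larger margins of error, making results less precise.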
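For a simple random sample, the margin of error for a proportion is commonly approximated as z·√(p(1−p)/n), where z ≈ 1.96 corresponds to 95% confidence. The sketch below applies that textbook formula to the 52% example. The sample size of 1,000 is an assumption, since the example does not state one, and the function names are illustrative.

```python
import math

def margin_of_error(p, n, z=1.96):
    # Normal-approximation margin of error for a proportion p
    # estimated from a simple random sample of size n.
    return z * math.sqrt(p * (1 - p) / n)

def confidence_interval(p, n, z=1.96):
    moe = margin_of_error(p, n, z)
    return p - moe, p + moe

p, n = 0.52, 1000  # n = 1000 is an assumed sample size
moe = margin_of_error(p, n)
low, high = confidence_interval(p, n)

print(f"margin of error: ±{moe * 100:.1f} points")   # ±3.1 points
print(f"interval: {low:.3f} to {high:.3f}")          # 0.489 to 0.551
```

Real polls that use weighting or cluster designs usually report a somewhat larger margin than this formula gives, so treat the result as a lower bound on the uncertainty.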

Factors Affecting Poll Accuracy

Sample size directly impacts precision. Larger samples reduce sampling error and produce tighter margins of error, though with diminishing returns: precision improves only with the square root of the sample size. National surveys in the U.S. typically use around 1,000 to 1,500 respondents, which yields a margin of error of roughly ±3 percentage points. Smaller local surveys may have much larger margins of error simply because fewer people are sampled.
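To see why national polls settle at around 1,000 respondents, the standard margin-of-error formula can be turned around to ask how many respondents a target margin requires. This is a minimal sketch assuming simple random sampling, 95% confidence (z = 1.96), and the worst case of a 50/50 split. The function name is illustrative.

```python
import math

def required_sample_size(target_moe, p=0.5, z=1.96):
    # Solve z * sqrt(p(1-p)/n) <= target_moe for n, rounding up.
    # p = 0.5 is the worst case (largest variance).
    return math.ceil(z**2 * p * (1 - p) / target_moe**2)

print(required_sample_size(0.06))  # 267
print(required_sample_size(0.03))  # 1068
```

Halving the margin from ±6 to ±3 points takes about four times as many respondents, which is why pollsters rarely pay for samples far beyond the low thousands.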

Question wording can dramatically shift results. Consider the difference between asking "Do you support government assistance for the poor?" versus "Do you support welfare programs?" The underlying policy might be similar, but the word "welfare" triggers different reactions than "assistance for the poor." Other wording pitfalls include:

- Leading questions that push toward a particular answer ("Do you agree with most Americans that...?")

- Ambiguous or overly complex phrasing, especially double negatives ("Do you not disagree with...?")

- Question order effects, where earlier questions prime respondents to think about a topic in a certain way before they reach later questions

Open-ended questions ("What do you think about X?") capture more nuance, while closed-ended questions ("Do you strongly agree, agree, disagree, or strongly disagree?") are easier to analyze statistically. Many surveys use a mix of both.

Interviewer characteristics can introduce subtle bias. Research shows that respondents sometimes adjust their answers based on the interviewer's perceived race, gender, or age. A respondent might express different views on racial policy depending on whether the interviewer is white or Black. Standardized training protocols help minimize these effects, but they're difficult to eliminate entirely.

Timing matters more than many people realize. Public opinion can shift rapidly after major events like political scandals, economic crises, or natural disasters. A poll taken six months before an election may look nothing like the final result. Even within a single week, a major news story can move the numbers significantly.

Survey Design and Analysis

Good polling requires careful attention at every stage, from design through interpretation.

- Survey design involves structuring the questionnaire to minimize bias and maximize data quality. This includes choosing question types, ordering questions thoughtfully, and pre-testing the survey with a small group to catch confusing wording.

- Response rate is the proportion of contacted people who actually complete the survey. Higher response rates generally produce more representative results. Response rates for phone polls have dropped sharply over the past two decades, which is one reason pollsters have increasingly turned to online and mixed-mode methods.

- Data analysis techniques allow researchers to interpret results and track how public opinion shifts over time. Weighting is a common technique where analysts adjust the data so that the sample better matches the known demographics of the population (for example, if young people are underrepresented in responses, their answers are given more weight).
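The weighting idea can be illustrated with a small post-stratification sketch. It assumes a hypothetical sample of 100 in which young people make up 20% of respondents but 40% of the population. The group labels, counts, and function names are all invented for illustration, and real pollsters typically weight on several variables at once.

```python
def poststratification_weights(sample_counts, population_shares):
    # Weight for each group = population share / sample share.
    n = sum(sample_counts.values())
    return {
        g: population_shares[g] / (sample_counts[g] / n)
        for g in sample_counts
    }

def weighted_support(responses, weights):
    # responses: list of (group, answer) pairs, answer 1 = support.
    total_weight = sum(weights[g] for g, _ in responses)
    return sum(weights[g] * a for g, a in responses) / total_weight

# Hypothetical data: 14 of 20 young and 32 of 80 older respondents support.
responses = (
    [("young", 1)] * 14 + [("young", 0)] * 6
    + [("older", 1)] * 32 + [("older", 0)] * 48
)
sample_counts = {"young": 20, "older": 80}
population_shares = {"young": 0.4, "older": 0.6}

weights = poststratification_weights(sample_counts, population_shares)
unweighted = sum(a for _, a in responses) / len(responses)
weighted = weighted_support(responses, weights)

print(f"unweighted support: {unweighted:.0%}")  # 46%
print(f"weighted support:   {weighted:.0%}")    # 52%
```

Here each young respondent counts roughly twice and each older respondent about three quarters, which moves the estimate from 46% to 52%. Weighting corrects the demographic mix but cannot help if the young people who responded differ in opinion from the young people who did not.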

The bottom line: no single poll is definitive. The best approach to understanding public opinion is to look at multiple polls using different methods over time, rather than relying on any one survey.