Filter banks and wavelet transforms

Filter banks and wavelet transforms are two frameworks that accomplish the same goal: decomposing a signal into components at different frequency bands and time scales. Understanding their relationship is essential because filter banks provide the practical, discrete-time machinery that makes wavelet transforms computable. Every time you run a discrete wavelet transform, you're really running a filter bank.

Relationship between filter banks and wavelet transforms

A two-channel filter bank splits a signal using a lowpass filter and a highpass filter, each followed by downsampling by 2. The outputs map directly onto wavelet transform coefficients:

- The lowpass filter output corresponds to the approximation coefficients, capturing coarse-scale, slowly varying content.

- The highpass filter output corresponds to the detail coefficients, capturing fine-scale information like edges and transients.

A perfect reconstruction filter bank is one where you can exactly recover the original signal from these subband outputs. This mirrors the invertibility of the wavelet transform. The analysis and synthesis filters are designed so that aliasing introduced by downsampling in the analysis stage gets canceled during synthesis.
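As a concrete illustration, here is a minimal sketch of a perfect reconstruction pair using the Haar filters, the shortest orthogonal example. The function names `haar_analysis` and `haar_synthesis` and the sample values are assumptions made for this sketch, and the sign convention for the detail channel is one of several in use.

```python
import numpy as np

def haar_analysis(x):
    """One level of a Haar two-channel filter bank (even-length input)."""
    x = np.asarray(x, dtype=float)
    even, odd = x[0::2], x[1::2]
    approx = (even + odd) / np.sqrt(2)   # lowpass output, one value per input pair
    detail = (even - odd) / np.sqrt(2)   # highpass output, one value per input pair
    return approx, detail

def haar_synthesis(approx, detail):
    """Invert haar_analysis by recovering and interleaving even and odd samples."""
    x = np.empty(2 * len(approx))
    x[0::2] = (approx + detail) / np.sqrt(2)
    x[1::2] = (approx - detail) / np.sqrt(2)
    return x

signal = np.array([4.0, 6.0, 10.0, 12.0, 8.0, 6.0, 5.0, 5.0])
approx, detail = haar_analysis(signal)
reconstructed = haar_synthesis(approx, detail)
```

Each subband holds half as many samples as the input, and `reconstructed` matches `signal` up to floating-point rounding.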

The key structural insight: if you take the lowpass output and feed it back through another two-channel filter bank, and repeat, you get a cascaded, multiscale decomposition. This iterated structure produces the dyadic (octave-band) frequency splitting that defines the discrete wavelet transform. Each level halves the bandwidth of the approximation subband and, because of the downsampling by 2, halves its time resolution while doubling its frequency resolution.

Properties of wavelet bases derived from filter banks

The filters you choose directly determine the properties of the resulting wavelet basis:

- Orthogonality: Orthogonal filter banks yield orthogonal wavelet bases, in which any two distinct basis functions have zero inner product. Daubechies wavelets are the classic example.

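- Symmetry: Symmetric or antisymmetric wavelets correspond to linear-phase filters, so they are preferred in applications like image processing because they avoid phase distortion. Apart from the Haar wavelet, compactly supported real orthogonal wavelets cannot be symmetric, so biorthogonal wavelets achieve symmetry by relaxing the orthogonality constraint.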

- Regularity: Smoother wavelet functions represent smooth signals more efficiently. Higher-order Daubechies wavelets have greater regularity but longer filter lengths.

The number of vanishing moments of a wavelet is tied to how flat the highpass filter's frequency response is near $\omega = 0$. More vanishing moments mean the wavelet is "blind" to low-order polynomials, so wavelet coefficients of smooth signals decay faster. This matters for compression and approximation quality.
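A small numerical check can make the idea of vanishing moments concrete. The sketch below assumes the 4-tap Daubechies lowpass filter (two vanishing moments) and builds the highpass filter by an alternating flip; the names `h`, `g`, `moments`, and `line` are illustrative choices, not notation from the text.

```python
import numpy as np

# 4-tap Daubechies lowpass filter, normalized so that sum(h) = sqrt(2).
s3 = np.sqrt(3.0)
h = np.array([1 + s3, 3 + s3, 3 - s3, 1 - s3]) / (4 * np.sqrt(2))

# Highpass filter by the alternating flip g[n] = (-1)^n h[L-1-n].
n = np.arange(len(h))
g = (-1.0) ** n * h[::-1]

# Discrete moments sum_n n^p g[n] for p = 0, 1, 2.
# The first two vanish for this filter; the third does not.
moments = [float(np.sum(n ** p * g)) for p in range(3)]

# A sampled straight line is annihilated wherever the filter fully overlaps it.
line = 2.0 * np.arange(16) + 5.0
detail = np.convolve(line, g, mode="valid")[::2]
```

Here `detail` is zero up to rounding, which is the sense in which the wavelet is "blind" to polynomials of degree 0 and 1.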

Wavelet bases from filter banks

Perfect reconstruction filter banks

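For a two-channel filter bank to achieve perfect reconstruction, the lowpass and highpass filters must satisfy specific algebraic conditions. One common construction uses the quadrature mirror filter (QMF) relationship between the lowpass filter $h[n]$ and the highpass filter $g[n]$, in the form often called the conjugate quadrature or alternating-flip construction:

$$g[n] = (-1)^n \, h[L - 1 - n]$$

where $L$ is the (even) filter length. This alternating-sign, time-reversed construction ensures that the highpass filter's passband aligns with the lowpass filter's stopband, and vice versa.

With this choice, aliasing from downsampling in the analysis bank is exactly canceled during synthesis, provided the synthesis filters are time-reversed versions of the corresponding analysis filters (lowpass paired with lowpass, highpass with highpass). Perfect reconstruction additionally requires the lowpass filter to be orthonormal to its own even shifts: $\sum_n h[n] \, h[n - 2k] = \delta[k]$.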
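The alternating-sign, time-reversed construction can be sketched and checked numerically. The example below assumes the 4-tap Daubechies lowpass filter as input; `alternating_flip` and `even_shift_products` are hypothetical helper names introduced only for this illustration.

```python
import numpy as np

def alternating_flip(h):
    """Highpass filter g[n] = (-1)^n h[L-1-n] from an even-length lowpass filter h."""
    h = np.asarray(h, dtype=float)
    n = np.arange(len(h))
    return (-1.0) ** n * h[::-1]

def even_shift_products(a, b):
    """Return {k: sum_n a[n] * b[n - 2k]} for every even shift with overlap.

    Assumes a and b have the same length.
    """
    L = len(a)
    products = {}
    for k in range(-(L // 2) + 1, L // 2):
        total = 0.0
        for n in range(L):
            m = n - 2 * k
            if 0 <= m < L:
                total += a[n] * b[m]
        products[k] = total
    return products

s3 = np.sqrt(3.0)
h = np.array([1 + s3, 3 + s3, 3 - s3, 1 - s3]) / (4 * np.sqrt(2))
g = alternating_flip(h)

hh = even_shift_products(h, h)   # expected: 1 at k = 0, 0 elsewhere
hg = even_shift_products(h, g)   # expected: 0 for every k
```

For an orthogonal design, `hh` is 1 at shift 0 and 0 at the other even shifts, and every entry of `hg` is 0, which is what lets time-reversed synthesis filters undo the analysis stage.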

Deriving wavelet bases from perfect reconstruction filter banks

Given a perfect reconstruction filter bank, you can derive the continuous-time scaling function $\phi(t)$ and wavelet function $\psi(t)$ that define the corresponding wavelet basis.

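Scaling function $\phi(t)$: defined by the two-scale relation (also called the refinement equation), written here with the lowpass filter $h[n]$ normalized so that $\sum_n h[n] = \sqrt{2}$:

$$\phi(t) = \sqrt{2} \sum_n h[n] \, \phi(2t - n)$$

This is a recursive definition. In the frequency domain, the solution is an infinite product:

$$\Phi(\omega) = \prod_{k=1}^{\infty} \frac{H(\omega / 2^k)}{\sqrt{2}}$$

where $H(\omega) = \sum_n h[n] \, e^{-j \omega n}$ is the frequency response of the lowpass filter.

Wavelet function $\psi(t)$: obtained by applying the highpass filter $g[n]$ to the scaling function:

$$\psi(t) = \sqrt{2} \sum_n g[n] \, \phi(2t - n)$$

In the frequency domain:

$$\Psi(\omega) = \frac{1}{\sqrt{2}} \, G\!\left(\frac{\omega}{2}\right) \Phi\!\left(\frac{\omega}{2}\right)$$

Note that the wavelet's Fourier transform is not simply an infinite product of $G$ terms. Rather, $\Psi(\omega)$ depends on $G$ at the finest scale and on $H$ (through $\Phi$) at all coarser scales.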

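Together, integer translates and dyadic dilates of $\psi(t)$, that is, the functions $\psi_{j,k}(t) = 2^{j/2} \, \psi(2^j t - k)$ for integers $j$ and $k$, form an orthonormal basis for $L^2(\mathbb{R})$, the space of square-integrable functions.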
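One way to see the recursive definition at work is the cascade algorithm, which iterates the two-scale relation starting from a unit impulse. The sketch below assumes the refinement equation has the form $\phi(t) = \sqrt{2} \sum_n h[n] \, \phi(2t - n)$ with $\sum_n h[n] = \sqrt{2}$; the function name `cascade_scaling_function` and the iteration count are illustrative choices.

```python
import numpy as np

def cascade_scaling_function(h, iterations=6):
    """Approximate phi on the grid t = k / 2**iterations via the cascade algorithm."""
    h = np.asarray(h, dtype=float)
    values = np.array([1.0])                      # start from a unit impulse
    for _ in range(iterations):
        upsampled = np.zeros(2 * len(values) - 1)
        upsampled[::2] = values                   # insert zeros between samples
        values = np.sqrt(2) * np.convolve(upsampled, h)
    t = np.arange(len(values)) / 2 ** iterations
    return t, values

# Haar lowpass filter: the scaling function is the box function on [0, 1).
t_haar, phi_haar = cascade_scaling_function([1 / np.sqrt(2), 1 / np.sqrt(2)])

# 4-tap Daubechies lowpass filter: a continuous but irregular-looking phi on [0, 3].
s3 = np.sqrt(3.0)
h_db2 = np.array([1 + s3, 3 + s3, 3 - s3, 1 - s3]) / (4 * np.sqrt(2))
t_db2, phi_db2 = cascade_scaling_function(h_db2)
```

For the Haar filter every sample equals 1, matching the box function. For the longer filter the samples integrate to approximately 1 over a support of length 3, consistent with $\Phi(0) = 1$.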

Wavelet decomposition and reconstruction

Wavelet decomposition using filter banks

Decomposition follows a cascaded structure. At each level $j$, you filter the approximation coefficients from the previous level and downsample:

1. Apply the lowpass filter $h$ and downsample by 2 to get the approximation coefficients:

   $$a_j[n] = \sum_k h[k] \, a_{j-1}[2n - k]$$

2. Apply the highpass filter $g$ and downsample by 2 to get the detail coefficients:

   $$d_j[n] = \sum_k g[k] \, a_{j-1}[2n - k]$$

3. Feed $a_j$ back into step 1 for the next level. Store $d_j$.

4. Repeat until you reach the desired number of levels.

The original signal $x[n]$ serves as $a_0$. After $J$ levels, you have one set of approximation coefficients $a_J$ and $J$ sets of detail coefficients $d_1, d_2, \ldots, d_J$. The number of levels you choose depends on the frequency resolution you need and the signal length (each level halves the number of samples).
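The decomposition steps can be sketched as follows. This is a minimal illustration, not a reference implementation: it assumes periodic (circular) extension at the signal boundaries, a signal length divisible by $2^J$, and the 4-tap Daubechies filters; `analysis_step` and `wavelet_decompose` are hypothetical names.

```python
import numpy as np

def alternating_flip(h):
    """Highpass filter g[n] = (-1)^n h[L-1-n] built from the lowpass filter h."""
    n = np.arange(len(h))
    return (-1.0) ** n * h[::-1]

def analysis_step(a_prev, h, g):
    """Filter circularly with h and g, keeping every second output sample."""
    N = len(a_prev)
    # idx[n, k] = (2n - k) mod N: the input sample that tap k sees for output n.
    idx = (2 * np.arange(N // 2)[:, None] - np.arange(len(h))[None, :]) % N
    windows = a_prev[idx]
    return windows @ h, windows @ g

def wavelet_decompose(x, h, g, levels):
    """Return (a_J, [d_1, ..., d_J]) by iterating on the approximation branch."""
    approx = np.asarray(x, dtype=float)
    details = []
    for _ in range(levels):
        approx, detail = analysis_step(approx, h, g)
        details.append(detail)   # store d_j, feed a_j into the next level
    return approx, details

s3 = np.sqrt(3.0)
h = np.array([1 + s3, 3 + s3, 3 - s3, 1 - s3]) / (4 * np.sqrt(2))
g = alternating_flip(h)

x = np.sin(2 * np.pi * np.arange(16) / 16) + 0.5
a3, details = wavelet_decompose(x, h, g, levels=3)
```

With a 16-sample input and three levels, the detail subbands have 8, 4, and 2 samples and the final approximation has 2. Because the filters are orthogonal, the total energy of the coefficients equals the energy of the input.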

Wavelet reconstruction using filter banks

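Reconstruction reverses the process, working from the coarsest level back to the finest:

1. Upsample the approximation and detail coefficients by inserting zeros between samples:

   $$a_j^{\uparrow}[n] = \begin{cases} a_j[n/2], & n \text{ even} \\ 0, & n \text{ odd} \end{cases}$$

   and likewise for $d_j^{\uparrow}[n]$.

2. Filter each upsampled sequence with the corresponding synthesis filter (lowpass $\tilde{h}$ for approximation, highpass $\tilde{g}$ for detail).

3. Sum the two filtered outputs to recover the previous level's approximation:

   $$a_{j-1}[n] = \sum_k \tilde{h}[k] \, a_j^{\uparrow}[n - k] + \sum_k \tilde{g}[k] \, d_j^{\uparrow}[n - k]$$

4. Repeat until you recover $a_0$, the original signal.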

The perfect reconstruction property guarantees exact recovery (in the absence of quantization or rounding errors).
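A round trip makes the exact-recovery claim easy to check. The sketch below assumes an orthogonal filter bank with periodic boundary handling, where the synthesis filters are the time-reversed analysis filters; all function names are illustrative, and a compact analysis step is included only so the example is self-contained.

```python
import numpy as np

def analysis_step(a_prev, h, g):
    """Circular filtering with h and g followed by downsampling by 2."""
    N = len(a_prev)
    idx = (2 * np.arange(N // 2)[:, None] - np.arange(len(h))[None, :]) % N
    return a_prev[idx] @ h, a_prev[idx] @ g

def upsample(c):
    """Insert a zero after every sample."""
    up = np.zeros(2 * len(c))
    up[::2] = c
    return up

def synthesis_step(approx, detail, h, g):
    """Upsample, filter with the time-reversed filters, and sum the two branches."""
    N = 2 * len(approx)
    # Filtering with the time-reversed filter h[-k] reads ahead: (m + k) mod N.
    idx = (np.arange(N)[:, None] + np.arange(len(h))[None, :]) % N
    return upsample(approx)[idx] @ h + upsample(detail)[idx] @ g

def wavelet_reconstruct(approx, details, h, g):
    """Work from the coarsest detail level back to the finest."""
    for detail in reversed(details):
        approx = synthesis_step(approx, detail, h, g)
    return approx

s3 = np.sqrt(3.0)
h = np.array([1 + s3, 3 + s3, 3 - s3, 1 - s3]) / (4 * np.sqrt(2))
g = (-1.0) ** np.arange(4) * h[::-1]

rng = np.random.default_rng(0)
x = rng.standard_normal(16)
approx, details = x.copy(), []
for _ in range(3):
    approx, d = analysis_step(approx, h, g)
    details.append(d)
x_rec = wavelet_reconstruct(approx, details, h, g)
```

The reconstructed signal `x_rec` agrees with `x` to within floating-point rounding, which is the only source of error in this sketch.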

Efficient implementations exist to speed this up:

- Polyphase representations separate even and odd indexed filter coefficients, reducing redundant computation by operating directly at the downsampled rate.

- Lifting schemes factorize the filter bank into a sequence of simple predict-and-update steps. This enables in-place computation (no extra memory buffer) and, for longer filters, can cut the number of arithmetic operations by up to roughly half.
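The predict-and-update idea is easiest to see for the Haar wavelet. The sketch below is an unnormalized variant chosen for simplicity: it produces pairwise means and differences, which differ from the orthonormal Haar coefficients only by scale factors. The function names are assumptions made for this illustration.

```python
import numpy as np

def haar_lifting_forward(x):
    """In-place Haar lifting on a float array of even length.

    Afterwards, even positions hold pairwise means (approximation)
    and odd positions hold pairwise differences (detail).
    """
    even, odd = x[0::2], x[1::2]   # views into x, so updates happen in place
    odd -= even                    # predict: detail = odd - even
    even += odd / 2                # update: approximation = mean of the pair
    return x

def haar_lifting_inverse(x):
    """Undo haar_lifting_forward by reversing the steps with opposite signs."""
    even, odd = x[0::2], x[1::2]
    even -= odd / 2                # undo update
    odd += even                    # undo predict
    return x

data = np.array([4.0, 6.0, 10.0, 12.0])
original = data.copy()
haar_lifting_forward(data)
transformed = data.copy()
haar_lifting_inverse(data)
```

Inversion is exact by construction: each lifting step is undone by the same step with the sign flipped, regardless of what the predict and update operators are.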

Multiscale signal representation with filter banks

Multiscale analysis of signals

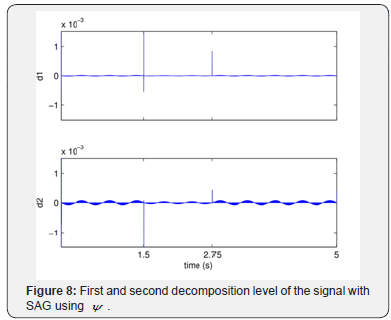

The cascaded filter bank produces a representation where each level captures a different frequency band with a different time resolution. This is the core advantage over a single-resolution analysis like the short-time Fourier transform.

- Approximation coefficients at level $j$ represent the low-frequency trend of the signal, smoothed over a window that doubles in width with each level.

- Detail coefficients at level $j$ capture high-frequency content in a specific octave band. Abrupt changes, edges, and singularities show up as large-magnitude detail coefficients at the scales where they're most prominent.

By examining how coefficient magnitudes change across scales, you can identify scale-dependent features. For instance, a sharp discontinuity produces large detail coefficients that persist across many scales, while noise coefficients tend to stay small and don't grow coherently.

Applications of multiscale signal representation

Denoising: Since noise energy is spread across all detail coefficients while signal features concentrate in a few large coefficients, you can suppress noise by thresholding. Set detail coefficients whose magnitude falls below a threshold to zero, then reconstruct. Common thresholding strategies include hard thresholding (zero out the small coefficients and keep the rest unchanged) and soft thresholding (zero out the small coefficients and shrink the remaining ones toward zero by the threshold amount).
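The two thresholding rules can be sketched in a few lines. The function names, the example coefficients, and the threshold value 0.5 are illustrative assumptions; choosing a threshold for real data is a separate question that this sketch does not address.

```python
import numpy as np

def hard_threshold(coeffs, threshold):
    """Zero out coefficients with magnitude at or below the threshold; keep the rest."""
    coeffs = np.asarray(coeffs, dtype=float)
    return np.where(np.abs(coeffs) > threshold, coeffs, 0.0)

def soft_threshold(coeffs, threshold):
    """Zero out small coefficients and shrink the others toward zero by the threshold."""
    coeffs = np.asarray(coeffs, dtype=float)
    return np.sign(coeffs) * np.maximum(np.abs(coeffs) - threshold, 0.0)

detail = np.array([0.1, -0.3, 2.0, -1.5, 0.05])
hard = hard_threshold(detail, 0.5)
soft = soft_threshold(detail, 0.5)
```

In a denoising pipeline these functions would be applied to the detail subbands only, leaving the coarsest approximation untouched before reconstruction.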

Compression: Most of the signal energy typically concentrates in a small number of large wavelet coefficients. You can discard or coarsely quantize the many near-zero coefficients with little perceptual loss. Algorithms like EZW (Embedded Zerotree Wavelet) and SPIHT (Set Partitioning in Hierarchical Trees) exploit the parent-child relationships between coefficients at adjacent scales to encode the significant coefficients efficiently. JPEG 2000, for example, uses wavelet-based compression for images.
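The energy-compaction argument can be illustrated with a much simpler scheme than EZW or SPIHT: keep only the largest-magnitude coefficients and measure how much energy survives. The sketch below is not either of those algorithms, and the coefficient values and function names are made up for the example.

```python
import numpy as np

def keep_largest(coeffs, keep):
    """Zero out all but the `keep` largest-magnitude coefficients."""
    coeffs = np.asarray(coeffs, dtype=float)
    order = np.argsort(np.abs(coeffs))[::-1]      # indices, largest magnitude first
    compressed = np.zeros_like(coeffs)
    compressed[order[:keep]] = coeffs[order[:keep]]
    return compressed

def energy_fraction(compressed, original):
    """Fraction of the original energy retained after discarding coefficients."""
    return float(np.sum(np.square(compressed)) / np.sum(np.square(original)))

# Hypothetical wavelet coefficients: a few large values, many near zero.
coeffs = np.array([9.0, -0.2, 0.1, 4.0, -0.05, 0.3, 0.0, -3.0])
compressed = keep_largest(coeffs, keep=3)
retained = energy_fraction(compressed, coeffs)
```

In this made-up example, three of the eight coefficients carry more than 99% of the energy. For an orthogonal transform, the energy discarded in the coefficient domain equals the squared reconstruction error in the signal domain.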

Feature characterization: The energy distribution across scales reveals signal structure. Singularities and edges concentrate energy at fine scales. Textured regions distribute energy more uniformly across scales. Smooth regions concentrate almost all energy in the coarsest approximation coefficients.
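A per-scale energy profile is one simple way to quantify this. The sketch below assumes the Haar filters and an input length divisible by $2^J$; `energy_by_scale` is a hypothetical helper, and the two test signals are deliberately extreme cases chosen so the outcome is easy to reason about.

```python
import numpy as np

def haar_step(a):
    """One orthonormal Haar analysis step on an even-length array."""
    even, odd = a[0::2], a[1::2]
    return (even + odd) / np.sqrt(2), (even - odd) / np.sqrt(2)

def energy_by_scale(x, levels):
    """Fraction of signal energy in each detail subband and the final approximation.

    Assumes the signal is not identically zero.
    """
    approx = np.asarray(x, dtype=float)
    total = np.sum(approx ** 2)
    fractions = {}
    for level in range(1, levels + 1):
        approx, detail = haar_step(approx)
        fractions[f"d{level}"] = float(np.sum(detail ** 2) / total)
    fractions[f"a{levels}"] = float(np.sum(approx ** 2) / total)
    return fractions

smooth = np.full(16, 3.0)                    # constant signal
oscillating = np.tile([1.0, -1.0], 8)        # fastest possible alternation

smooth_profile = energy_by_scale(smooth, levels=3)
oscillating_profile = energy_by_scale(oscillating, levels=3)
```

The constant signal places all of its energy in the coarsest approximation, while the alternating signal places all of it in the finest detail band. Real signals fall between these extremes, and the shape of the profile is what characterizes them.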