State Transition Matrix and Matrix Exponential

State equations describe how a system's internal variables change over time. Solving them is how you predict a circuit's behavior from any set of initial conditions and inputs. The two main pieces are the state transition matrix (which captures the system's natural dynamics) and the convolution integral (which captures the effect of external inputs).

Fundamental Concepts of State Transition

The state transition matrix $\Phi(t)$ describes how the state vector evolves over time when there's no input. For a linear time-invariant (LTI) system, it equals the matrix exponential $\Phi(t) = e^{At}$, where $A$ is the system matrix.

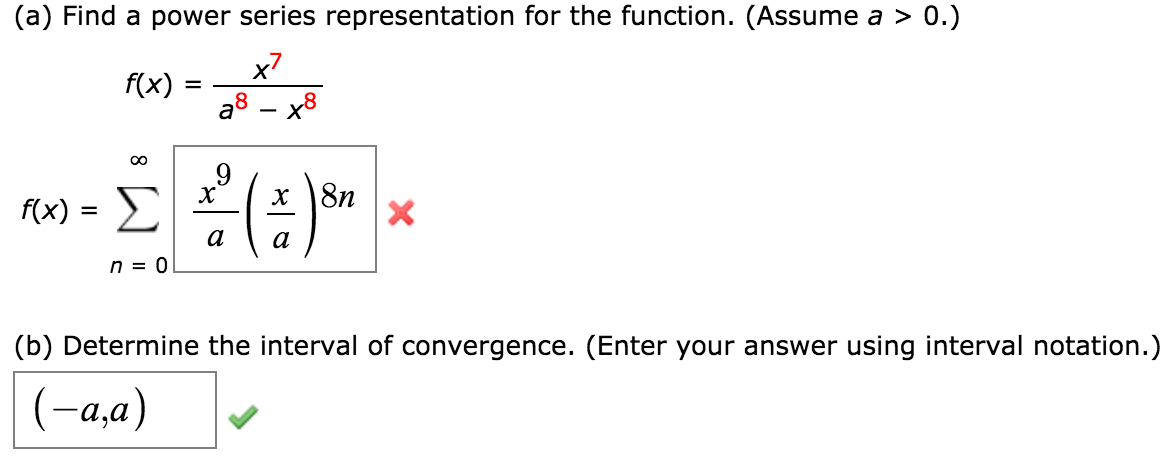

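You can compute $e^{At}$ using its power series expansion:

$$e^{At} = I + At + \frac{(At)^2}{2!} + \frac{(At)^3}{3!} + \cdots = \sum_{k=0}^{\infty} \frac{(At)^k}{k!}$$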

In practice, you rarely sum this series by hand. Instead, you'll use eigenvalue methods or Laplace transforms (covered below) to find a closed-form expression.
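To make the series concrete, here is a minimal sketch that sums a truncated version of it numerically and compares the result with a closed form. The matrix `A`, the time `t`, and the helper name `expm_series` are illustrative assumptions, not taken from a particular circuit in this text.

```python
import numpy as np

def expm_series(A, t, terms=30):
    """Approximate e^{At} by summing the first `terms` terms of the power series."""
    A = np.asarray(A, dtype=float)
    n = A.shape[0]
    result = np.eye(n)   # k = 0 term: the identity
    term = np.eye(n)     # running value of (At)^k / k!
    for k in range(1, terms):
        term = term @ (A * t) / k   # build (At)^k / k! from the previous term
        result = result + term
    return result

# Assumed example system matrix; its eigenvalues are -1 and -2.
A = np.array([[0.0, 1.0],
              [-2.0, -3.0]])
t = 0.5
Phi = expm_series(A, t)

# Closed form of e^{At} for this particular A, used only as a check.
e1, e2 = np.exp(-t), np.exp(-2.0 * t)
Phi_exact = np.array([[2 * e1 - e2, e1 - e2],
                      [-2 * e1 + 2 * e2, -e1 + 2 * e2]])
print(np.max(np.abs(Phi - Phi_exact)))  # truncation error of the partial sum
```

The truncated sum converges quickly here because the entries of $At$ are small. For large $\|At\|$ the raw series is numerically poor, which is one reason a library routine such as SciPy's `scipy.linalg.expm` is the usual choice in practice.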

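Key properties of $\Phi(t) = e^{At}$ that show up constantly:

- $\Phi(0) = I$ (at $t = 0$, the state hasn't moved yet)

- $\Phi(t_1 + t_2) = \Phi(t_1)\,\Phi(t_2)$ (semigroup property)

- $\Phi^{-1}(t) = \Phi(-t)$ (time reversal inverts the matrix)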
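These properties are easy to check numerically. The sketch below assumes an example matrix $A = \begin{bmatrix} 0 & 1 \\ -2 & -3 \end{bmatrix}$ and uses its closed-form $e^{At}$; the function name `phi` and the test times are arbitrary choices for illustration.

```python
import numpy as np

def phi(t):
    """Closed-form e^{At} for the assumed example A = [[0, 1], [-2, -3]]."""
    e1, e2 = np.exp(-t), np.exp(-2.0 * t)
    return np.array([[2 * e1 - e2, e1 - e2],
                     [-2 * e1 + 2 * e2, -e1 + 2 * e2]])

t1, t2 = 0.3, 0.9  # arbitrary test times

identity_ok = np.allclose(phi(0.0), np.eye(2))                # Phi(0) = I
semigroup_ok = np.allclose(phi(t1 + t2), phi(t1) @ phi(t2))   # Phi(t1 + t2) = Phi(t1) Phi(t2)
inverse_ok = np.allclose(np.linalg.inv(phi(t1)), phi(-t1))    # Phi(t)^{-1} = Phi(-t)
print(identity_ok, semigroup_ok, inverse_ok)
```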

Eigenvalue and Eigenvector Analysis

The most common way to compute $e^{At}$ for small systems is through eigenvalue decomposition.

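- Find the eigenvalues $\lambda_i$ by solving the characteristic equation $\det(\lambda I - A) = 0$.

- For each eigenvalue $\lambda_i$, find the corresponding eigenvector $v_i$ by solving $(A - \lambda_i I)\,v_i = 0$.

- Form the eigenvector matrix $P = [\,v_1 \;\; v_2 \;\; \cdots \;\; v_n\,]$ and the diagonal eigenvalue matrix $\Lambda = \mathrm{diag}(\lambda_1, \ldots, \lambda_n)$.

- The state transition matrix is then $e^{At} = P\,e^{\Lambda t}\,P^{-1}$, where $e^{\Lambda t} = \mathrm{diag}(e^{\lambda_1 t}, \ldots, e^{\lambda_n t})$.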
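The steps above can be sketched with NumPy's eigenvalue solver. This is a minimal illustration under the assumption that the matrix is diagonalizable; the example matrix and the helper name `expm_eig` are made up for the demonstration.

```python
import numpy as np

def expm_eig(A, t):
    """Compute e^{At} = P e^{Lambda t} P^{-1}, assuming A is diagonalizable."""
    eigvals, P = np.linalg.eig(A)                 # eigenvalues and eigenvector matrix
    exp_Lambda_t = np.diag(np.exp(eigvals * t))   # diag(e^{lambda_i t})
    Phi = P @ exp_Lambda_t @ np.linalg.inv(P)
    # For a real A, any imaginary parts cancel up to round-off.
    return Phi.real if np.isrealobj(A) else Phi

# Assumed example system matrix with distinct eigenvalues -1 and -2.
A = np.array([[0.0, 1.0],
              [-2.0, -3.0]])
eigvals = np.linalg.eigvals(A)
Phi = expm_eig(A, 0.5)
print(eigvals)
print(Phi)
```

If the eigenvector matrix is singular or nearly so, the inversion becomes unreliable, which is the numerical face of the repeated-eigenvalue case.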

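This works cleanly when all eigenvalues are distinct (more generally, whenever $A$ has a full set of linearly independent eigenvectors). If a repeated eigenvalue does not supply enough eigenvectors, you need the Jordan canonical form, which introduces polynomial-times-exponential terms like $t\,e^{\lambda t}$ in the off-diagonal entries of each Jordan block.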
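As a small worked case (a generic $2 \times 2$ Jordan block chosen for illustration), write the matrix as a scalar part plus a nilpotent part:

$$A = \begin{bmatrix} \lambda & 1 \\ 0 & \lambda \end{bmatrix} = \lambda I + N, \qquad N = \begin{bmatrix} 0 & 1 \\ 0 & 0 \end{bmatrix}, \qquad N^2 = 0$$

Because $\lambda I$ and $N$ commute, the exponential factors, and the series for $e^{Nt}$ stops after two terms:

$$e^{At} = e^{\lambda t}\,e^{Nt} = e^{\lambda t}\,(I + Nt) = \begin{bmatrix} e^{\lambda t} & t\,e^{\lambda t} \\ 0 & e^{\lambda t} \end{bmatrix}$$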

The eigenvalues themselves tell you the system's natural modes. In a circuit context, they correspond to the natural frequencies you'd find from the characteristic equation of the differential equation.

Homogeneous and Particular Solutions

The complete solution to $\dot{x}(t) = Ax(t) + Bu(t)$ has two parts: the homogeneous (zero-input) response and the particular (zero-state) response.

Homogeneous Solution

When the input $u(t) = 0$, the state equation reduces to $\dot{x}(t) = Ax(t)$, and the solution is:

$$x(t) = e^{At}\,x(0)$$

This is the system's natural response to initial conditions alone. Stability depends entirely on the eigenvalues of $A$:

- All eigenvalues have negative real parts → asymptotically stable (response decays to zero)

- Some eigenvalues have zero real parts (and those are not repeated), none positive → marginally stable (response neither grows without bound nor decays to zero)

- Any eigenvalue has a positive real part → unstable (response grows without bound)

For circuit systems, negative real parts correspond to energy dissipation through resistors. A purely imaginary eigenvalue pair means sustained oscillation (like an ideal LC circuit with no resistance).
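The eigenvalue test can be sketched as a small classifier. The function name `classify_stability`, the tolerance `tol`, and the three example matrices are assumptions for illustration. The sketch does not check for repeated eigenvalues on the imaginary axis, where the response can still grow.

```python
import numpy as np

def classify_stability(A, tol=1e-9):
    """Classify x' = Ax from the real parts of the eigenvalues of A.

    `tol` is an assumed threshold for treating a real part as zero.
    Repeated eigenvalues on the imaginary axis are not handled here.
    """
    real_parts = np.linalg.eigvals(A).real
    if np.all(real_parts < -tol):
        return "asymptotically stable"
    if np.any(real_parts > tol):
        return "unstable"
    return "marginally stable"

# Assumed normalized examples.
damped = np.array([[0.0, 1.0], [-2.0, -3.0]])    # eigenvalues -1, -2 (lossy circuit)
lossless = np.array([[0.0, 1.0], [-1.0, 0.0]])   # eigenvalues +j, -j (ideal LC)
growing = np.array([[0.0, 1.0], [2.0, 1.0]])     # eigenvalues 2, -1

for name, M in [("damped", damped), ("lossless", lossless), ("growing", growing)]:
    print(name, "->", classify_stability(M))
```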

Particular Solution

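When the input $u(t) \neq 0$, you need to account for how the input drives the system. The general particular solution uses the convolution integral:

$$x_p(t) = \int_0^t e^{A(t-\tau)}\,B\,u(\tau)\,d\tau$$

The full solution combining both parts is:

$$x(t) = e^{At}\,x(0) + \int_0^t e^{A(t-\tau)}\,B\,u(\tau)\,d\tau$$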

For simple inputs (step, ramp, sinusoidal), you can sometimes use the method of undetermined coefficients to guess the form of the particular solution and solve for its coefficients. For arbitrary inputs, the convolution integral or Laplace transform approach is the way to go.
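As a rough numerical illustration of the full solution, the sketch below evaluates the convolution integral with the trapezoidal rule for a unit-step input and adds the zero-input term. The matrices `A` and `B`, the initial state `x0`, and the number of quadrature points are assumed values. The state transition matrix is computed by eigendecomposition, which presumes `A` is diagonalizable.

```python
import numpy as np

# Assumed example system: eigenvalues of A are -1 and -2.
A = np.array([[0.0, 1.0],
              [-2.0, -3.0]])
B = np.array([0.0, 1.0])
x0 = np.array([1.0, 0.0])

lam, P = np.linalg.eig(A)
P_inv = np.linalg.inv(P)

def phi(t):
    """State transition matrix e^{At} via eigendecomposition."""
    return (P @ np.diag(np.exp(lam * t)) @ P_inv).real

def u(tau):
    """Unit-step input (an assumed test signal)."""
    return 1.0

def full_response(t, n=2001):
    """x(t) = e^{At} x0 + integral_0^t e^{A(t - tau)} B u(tau) dtau (trapezoidal rule)."""
    taus = np.linspace(0.0, t, n)
    integrand = np.array([phi(t - tau) @ B * u(tau) for tau in taus])
    dtau = taus[1] - taus[0]
    zero_state = dtau * (integrand[0] / 2 + integrand[1:-1].sum(axis=0) + integrand[-1] / 2)
    zero_input = phi(t) @ x0
    return zero_input + zero_state

x_t = full_response(1.0)
print(x_t)
```

For a constant input and invertible $A$, the integral has the closed form $A^{-1}(e^{At} - I)B$, which gives a convenient check on the quadrature.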

Alternative Solution Methods

Laplace Transform Approach

The Laplace transform converts the state equation from a matrix differential equation into a matrix algebra problem, which is often easier to handle.

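- Take the Laplace transform of $\dot{x}(t) = Ax(t) + Bu(t)$:

$$sX(s) - x(0) = AX(s) + BU(s)$$

- Rearrange to isolate $X(s)$:

$$(sI - A)\,X(s) = x(0) + BU(s)$$

- Solve for $X(s)$:

$$X(s) = (sI - A)^{-1}x(0) + (sI - A)^{-1}BU(s)$$

- Take the inverse Laplace transform to get $x(t)$.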

The matrix $(sI - A)^{-1}$ is called the resolvent matrix. Its inverse Laplace transform gives you the state transition matrix: $e^{At} = \mathcal{L}^{-1}\{(sI - A)^{-1}\}$. This is actually one of the most practical ways to compute $e^{At}$ for 2×2 and 3×3 systems.
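A short worked example, using an assumed system matrix $A = \begin{bmatrix} 0 & 1 \\ -2 & -3 \end{bmatrix}$ chosen for illustration, shows the mechanics. First form and invert $sI - A$:

$$sI - A = \begin{bmatrix} s & -1 \\ 2 & s + 3 \end{bmatrix}, \qquad (sI - A)^{-1} = \frac{1}{(s+1)(s+2)} \begin{bmatrix} s + 3 & 1 \\ -2 & s \end{bmatrix}$$

Expanding each entry in partial fractions, for example $\dfrac{s+3}{(s+1)(s+2)} = \dfrac{2}{s+1} - \dfrac{1}{s+2}$, and inverting term by term gives:

$$e^{At} = \begin{bmatrix} 2e^{-t} - e^{-2t} & e^{-t} - e^{-2t} \\ -2e^{-t} + 2e^{-2t} & -e^{-t} + 2e^{-2t} \end{bmatrix}$$

Setting $t = 0$ returns the identity matrix, as it should.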

This approach also connects state-space analysis to transfer function analysis. With the output equation $y(t) = Cx(t) + Du(t)$ and zero initial conditions, the transfer function matrix is $H(s) = C(sI - A)^{-1}B + D$, which bridges the gap between the state-space and frequency-domain representations you've used in earlier units.
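The transfer function can be evaluated at any single frequency by solving one linear system. The sketch below assumes the standard output equation $y = Cx + Du$ and uses made-up example matrices, for which the result reduces to $H(s) = 1/(s^2 + 3s + 2)$. The function name `transfer_function` is a hypothetical helper.

```python
import numpy as np

def transfer_function(A, B, C, D, s):
    """Evaluate H(s) = C (sI - A)^{-1} B + D at one complex frequency s."""
    n = A.shape[0]
    # Solve (sI - A) X = B rather than forming the inverse explicitly.
    X = np.linalg.solve(s * np.eye(n) - A, B)
    return C @ X + D

# Assumed single-input, single-output example.
A = np.array([[0.0, 1.0],
              [-2.0, -3.0]])
B = np.array([[0.0],
              [1.0]])
C = np.array([[1.0, 0.0]])
D = np.array([[0.0]])

H_dc = transfer_function(A, B, C, D, 0.0)   # DC gain, s = 0
H_j1 = transfer_function(A, B, C, D, 1j)    # response at omega = 1 rad/s
print(H_dc, H_j1)
```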

Numerical Solution Techniques

When systems are nonlinear, time-varying, or just large enough that closed-form solutions become impractical, numerical methods take over.

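Euler's method is the simplest approach. Given the current state, you step forward by a small time increment $\Delta t$:

$$x(t + \Delta t) \approx x(t) + \Delta t\,\dot{x}(t) = x(t) + \Delta t\,[Ax(t) + Bu(t)]$$

This is a first-order method, so errors accumulate quickly unless $\Delta t$ is very small.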
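A minimal forward-Euler sketch for an LTI system follows. The system matrices, the zero input, the initial state, and the step sizes are illustrative assumptions. The exact answer for this example is known in closed form, so the error can be measured directly.

```python
import numpy as np

def euler_simulate(A, B, u, x0, dt, t_end):
    """Integrate x' = Ax + Bu(t) with fixed-step forward Euler and return x(t_end)."""
    n_steps = int(round(t_end / dt))
    x = np.array(x0, dtype=float)
    for k in range(n_steps):
        t = k * dt
        x = x + dt * (A @ x + B * u(t))   # one Euler step
    return x

# Assumed example: zero input, so the exact answer is e^{At} x0.
A = np.array([[0.0, 1.0],
              [-2.0, -3.0]])
B = np.array([0.0, 1.0])
x0 = [1.0, 0.0]

def zero_input(t):
    return 0.0

e1, e2 = np.exp(-1.0), np.exp(-2.0)
x_exact = np.array([2 * e1 - e2, -2 * e1 + 2 * e2])   # first column of e^{A*1}

err_coarse = np.max(np.abs(euler_simulate(A, B, zero_input, x0, 0.1, 1.0) - x_exact))
err_fine = np.max(np.abs(euler_simulate(A, B, zero_input, x0, 0.01, 1.0) - x_exact))
print(err_coarse, err_fine)
```

Cutting the step by a factor of ten cuts the error by roughly the same factor, which is what first-order accuracy means in practice.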

Runge-Kutta methods (especially RK4) provide much better accuracy by evaluating the derivative at multiple points within each step, then taking a weighted average. RK4 is fourth-order accurate, meaning the local error per step scales as $O(\Delta t^5)$ and the accumulated (global) error scales as $O(\Delta t^4)$.
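The classical RK4 update can be sketched as follows. The test system, initial state, and step sizes are assumptions chosen so the exact answer is known. The function names `rk4_step` and `simulate` are hypothetical.

```python
import numpy as np

def rk4_step(f, t, x, dt):
    """Advance x' = f(t, x) by one classical fourth-order Runge-Kutta step."""
    k1 = f(t, x)
    k2 = f(t + dt / 2, x + dt / 2 * k1)
    k3 = f(t + dt / 2, x + dt / 2 * k2)
    k4 = f(t + dt, x + dt * k3)
    return x + dt / 6 * (k1 + 2 * k2 + 2 * k3 + k4)   # weighted average of the slopes

def simulate(f, x0, dt, t_end):
    """Apply rk4_step repeatedly with a fixed step and return x(t_end)."""
    x = np.array(x0, dtype=float)
    for k in range(int(round(t_end / dt))):
        x = rk4_step(f, k * dt, x, dt)
    return x

# Assumed example: zero-input LTI system x' = Ax with a known exact solution.
A = np.array([[0.0, 1.0],
              [-2.0, -3.0]])

def f(t, x):
    return A @ x

e1, e2 = np.exp(-1.0), np.exp(-2.0)
x_exact = np.array([2 * e1 - e2, -2 * e1 + 2 * e2])   # e^{A*1} applied to [1, 0]

err_coarse = np.max(np.abs(simulate(f, [1.0, 0.0], 0.1, 1.0) - x_exact))
err_fine = np.max(np.abs(simulate(f, [1.0, 0.0], 0.05, 1.0) - x_exact))
print(err_coarse, err_fine)
```

Halving the step should shrink the error by a factor of roughly sixteen, consistent with fourth-order global accuracy.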

In practice, you'll typically use tools like MATLAB's ode45, which implements an adaptive-step Runge-Kutta algorithm. It automatically adjusts the step size: smaller steps where the solution changes rapidly, larger steps where it's smooth. Simulink provides a block-diagram environment for simulating state-space models visually, which is especially useful for verifying your analytical solutions against numerical results.