Definition and terminology

A tree is a connected graph with no cycles. That single property gives trees their hierarchical shape and makes them one of the most useful structures in both mathematics and computer science. Trees show up everywhere: file systems, decision-making models, network design, and efficient search algorithms.

In graph theory terms, a tree on vertices has exactly edges. If you remove any edge, the tree becomes disconnected. If you add any edge, you create a cycle. These properties make trees the "minimal" connected graphs.

Basic tree structure

- A tree consists of nodes (vertices) connected by edges, forming a hierarchy with no cycles.

- Every pair of nodes is connected by exactly one path.

- You can visualize a tree as an inverted structure: the root sits at the top, and branches extend downward toward the leaves.

- Because trees are acyclic and connected, they're a special case of graphs that's much easier to reason about.

Root, nodes, and leaves

- Root: the topmost node that serves as the starting point of the hierarchy. In an unrooted tree, any node can be designated as the root.

- Node: any individual element in the tree.

- Internal node: a node with at least one child.

- Leaf node (also called an external node): a node with no children, sitting at the end of a branch.

- Path: the unique sequence of edges connecting one node to another. Since trees have no cycles, this path is always unique.

Parent-child relationships

- A parent node connects directly to one or more child nodes below it.

- Every node except the root has exactly one parent.

- Siblings are nodes that share the same parent.

- Ancestors of a node include its parent, its parent's parent, and so on up to the root.

- Descendants of a node include all nodes in the subtree rooted at that node.

Subtrees and branches

- A subtree is any node together with all of its descendants. Every node in a tree is the root of its own subtree.

- Depth of a node: the number of edges from the root to that node. The root has depth 0.

- Height of a tree: the maximum depth among all nodes, i.e., the longest path from root to any leaf.

- Degree of a node: the number of children it has. A leaf has degree 0.

Types of trees

Different tree types suit different problems. Choosing the right type often determines whether your algorithm runs efficiently or not.

Binary trees

A binary tree restricts each node to at most two children, called the left child and right child. This is the most commonly studied tree type.

- A full binary tree has every node with either 0 or 2 children (no node has just one child).

- A perfect binary tree has all internal nodes with exactly 2 children and all leaves at the same depth.

- A complete binary tree fills every level fully except possibly the last, which fills left to right.

- Applications include expression trees (representing arithmetic expressions) and Huffman coding (data compression).

N-ary trees

An N-ary tree (also called a k-ary or m-ary tree) generalizes binary trees by allowing each node up to children.

- A ternary tree allows up to 3 children per node; a quadtree allows up to 4.

- File systems are a natural example: a directory can contain any number of subdirectories and files.

- Traversal algorithms extend naturally from binary trees but must iterate over all children at each node.

Balanced vs unbalanced trees

- A balanced tree keeps the heights of all subtrees roughly equal, so no branch is much longer than another.

- An unbalanced tree can degenerate into a long chain (essentially a linked list), making operations slow.

- Self-balancing trees like AVL trees and Red-Black trees automatically restructure themselves after insertions and deletions to maintain balance.

- Balance guarantees that basic operations (search, insert, delete) run in time rather than .

Complete vs full trees

These two terms are easy to confuse:

- Complete tree: every level is fully filled except possibly the last, which is filled from left to right. This is the structure used in heap implementations.

- Full tree: every node has either 0 or children (no "partially filled" nodes).

- A useful fact for full binary trees: the number of leaves equals the number of internal nodes plus 1, or .

Tree traversal algorithms

Traversal means visiting every node in a tree exactly once, in some systematic order. The two main strategies differ in whether you go deep or wide first.

Depth-first search

Depth-first search (DFS) follows each branch as far as possible before backtracking.

- Typically implemented with recursion or an explicit stack.

- Has three variants for binary trees: pre-order, in-order, and post-order (detailed below).

- Time complexity: since every node is visited once.

- Space complexity: where is the height of the tree (due to the recursion stack or explicit stack).

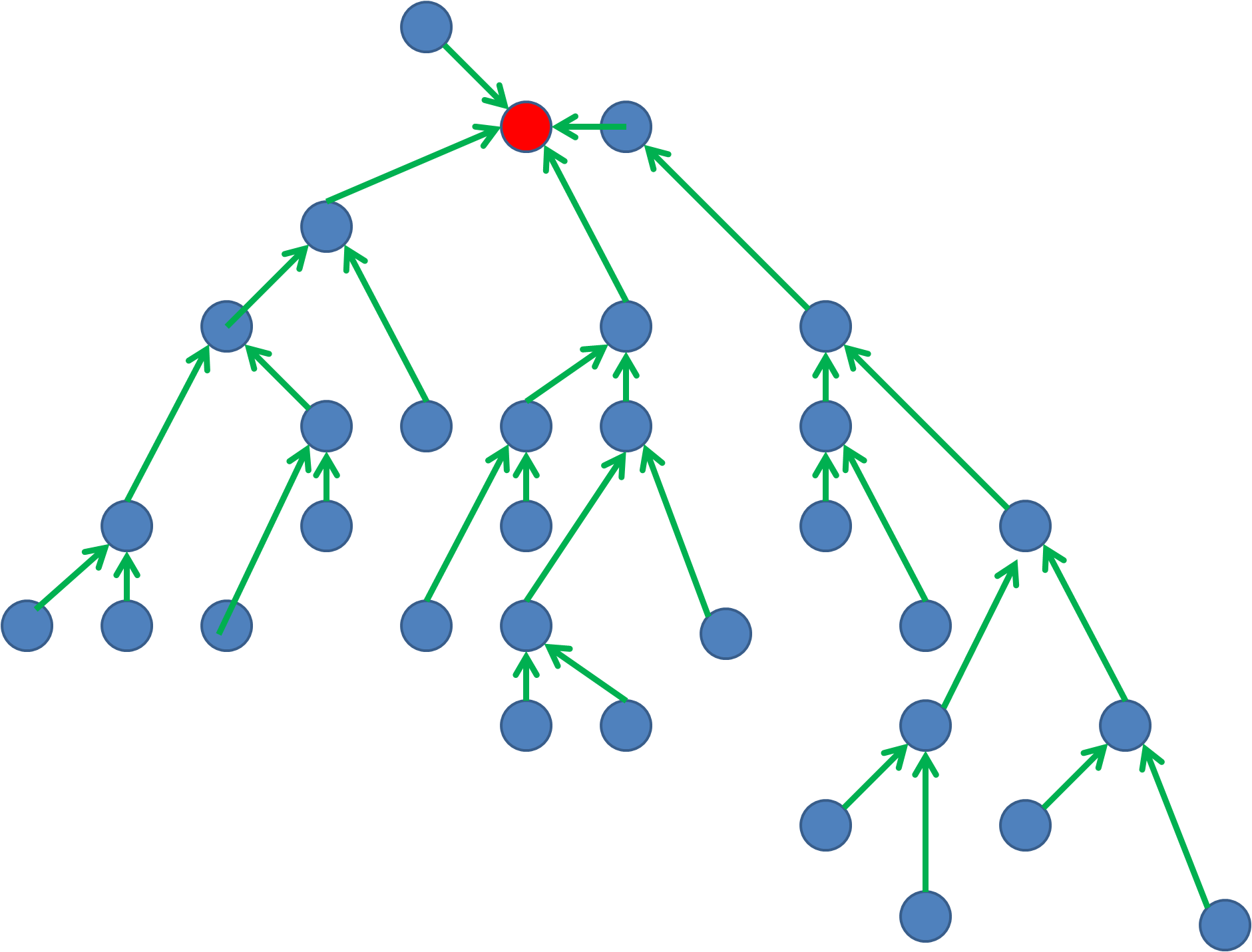

Breadth-first search

Breadth-first search (BFS) visits all nodes at the current depth before moving to the next level.

- Implemented using a queue: dequeue a node, process it, then enqueue its children.

- Particularly useful for finding the shortest path in unweighted trees or graphs.

- Time complexity: .

- Space complexity: where is the maximum width (number of nodes at any single level) of the tree.

Pre-order vs in-order vs post-order

These are the three DFS variants for binary trees. The names refer to when you process the root relative to its subtrees:

- Pre-order (root → left → right): Process the root first, then recurse into the left subtree, then the right. Useful for copying a tree or evaluating prefix expressions.

- In-order (left → root → right): Recurse left, then process the root, then recurse right. For a binary search tree, this visits nodes in sorted (non-decreasing) order.

- Post-order (left → right → root): Recurse into both subtrees before processing the root. Useful for deleting a tree (children first, then parent) or evaluating postfix expressions.

Each traversal produces a different ordering of the same nodes.

Binary search trees

A binary search tree (BST) is a binary tree that maintains a sorting invariant: for every node, all values in its left subtree are smaller and all values in its right subtree are larger.

Properties and characteristics

- The left-smaller, right-larger rule applies recursively at every node.

- Standard BSTs do not allow duplicate values (though some variants do).

- An in-order traversal of a BST produces the elements in sorted order.

- A balanced BST with nodes has height approximately .

Insertion and deletion operations

Insertion follows a simple process:

- Compare the new value to the current node, starting at the root.

- If the new value is smaller, move to the left child; if larger, move to the right child.

- Repeat until you reach a null position, then insert the new node there as a leaf.

Deletion has three cases:

- Leaf node: simply remove it.

- Node with one child: replace the node with its child.

- Node with two children: find the in-order successor (smallest node in the right subtree) or in-order predecessor (largest in the left subtree), copy its value into the node being deleted, then delete that successor/predecessor.

In self-balancing BSTs, rebalancing may follow insertion or deletion.

Time complexity analysis

- Average case for search, insertion, and deletion: .

- Worst case (when the tree degenerates into a straight chain): . This happens, for example, if you insert already-sorted data into a plain BST.

- Space complexity: for storing nodes.

- Self-balancing BSTs (AVL, Red-Black) guarantee worst-case performance by preventing degeneration.

Tree balancing techniques

When a BST becomes unbalanced, its performance degrades. Balancing techniques restructure the tree so that its height stays in , keeping operations fast.

AVL trees

- Named after inventors Adelson-Velsky and Landis (1962).

- Each node stores a balance factor: the height of its left subtree minus the height of its right subtree.

- The balance factor must be , , or at every node.

- When an insertion or deletion violates this, the tree restores balance through rotations (single or double).

- All basic operations are guaranteed .

Red-black trees

- Each node is colored either red or black, and the coloring must satisfy these rules:

- The root is black.

- All null leaves are considered black.

- A red node's children must both be black (no two consecutive red nodes on any path).

- Every path from the root to a null leaf passes through the same number of black nodes.

- Rebalancing uses color flips and rotations.

- Guarantees worst-case time for search, insert, and delete.

- Red-black trees are slightly less strictly balanced than AVL trees but tend to require fewer rotations on insertion/deletion.

B-trees and B+ trees

- Designed for systems where data lives on disk (databases, file systems) and minimizing disk reads matters.

- B-trees allow nodes to have many children (not just two), which reduces the tree's height and the number of disk accesses.

- B+ trees store all actual data in the leaf nodes; internal nodes serve only as an index. Leaf nodes are often linked together for efficient range queries.

- Both support efficient insertion, deletion, and search in time.

Applications of trees

File systems

- Operating systems organize files and directories as a tree. The root directory is the tree's root, directories are internal nodes, and files are leaves.

- This hierarchy lets you navigate paths like

/home/user/documents/by traversing from root to leaf. - Examples include Unix/Linux file systems and Windows NTFS.

Hierarchical data representation

- Trees naturally model any data with parent-child relationships: organizational charts, biological taxonomies, family trees.

- HTML and XML documents are parsed into a tree structure called the DOM (Document Object Model), where each tag is a node.

- JSON with nested objects also maps directly to a tree.

Decision trees in AI

- A decision tree models a sequence of choices leading to outcomes.

- Internal nodes represent tests on features (e.g., "Is age > 30?"), branches represent the possible answers, and leaf nodes represent final predictions or classifications.

- Algorithms like ID3, C4.5, and CART build decision trees from training data.

- Decision trees are popular because they produce interpretable, human-readable models.

Tree-based data structures

Heaps and priority queues

A heap is a complete binary tree that satisfies the heap property:

- Max-heap: every parent is greater than or equal to its children. The maximum element sits at the root.

- Min-heap: every parent is less than or equal to its children. The minimum element sits at the root.

Because a heap is a complete binary tree, it can be stored efficiently in an array. For a node at index :

- Left child is at index

- Right child is at index

- Parent is at index

Priority queues are often implemented with heaps, giving insertion and extraction of the min or max. Applications include Dijkstra's shortest-path algorithm and heap sort.

Trie for string operations

A trie (pronounced "try") is a tree where each node represents a single character, and paths from root to nodes spell out stored strings.

- Searching for a string of length takes time, regardless of how many strings are stored.

- Tries excel at prefix-based operations: autocomplete, spell checking, and IP routing tables.

- They're space-efficient when many stored strings share common prefixes.

Suffix trees in string matching

A suffix tree for a string of length is a compressed trie containing all suffixes of that string.

- Can be constructed in time (using Ukkonen's algorithm).

- Finding all occurrences of a pattern of length takes time, where is the number of occurrences.

- Widely used in bioinformatics for DNA sequence analysis and in text processing for fast substring searches.

Tree algorithms and problems

Lowest common ancestor

The lowest common ancestor (LCA) of two nodes is the deepest node that is an ancestor of both.

- A naive approach traces the path from the root to each node and finds where the paths diverge.

- More efficient methods use binary lifting or sparse tables to answer LCA queries in after preprocessing.

- Applications include phylogenetic analysis and network routing.

Diameter of a tree

The diameter is the length of the longest path between any two nodes.

To find it in time:

- Pick any node and run BFS/DFS to find the farthest node from it. Call that node .

- Run BFS/DFS from to find the farthest node from . Call that node .

- The distance from to is the diameter.

This works because the farthest node from any starting point must be an endpoint of a longest path.

Tree isomorphism

Two trees are isomorphic if one can be transformed into the other by rearranging children at each node (ignoring labels).

- Solved by computing a canonical form for each tree and comparing them.

- Polynomial-time algorithms exist, unlike general graph isomorphism which has no known polynomial-time solution.

- Applications include comparing chemical structures and querying graph databases.

Advanced tree concepts

Segment trees

A segment tree stores information about intervals of an array, enabling both range queries (e.g., sum, minimum, or maximum over a range) and point updates in time.

- Built on an array of elements, the tree has nodes.

- Each internal node stores the aggregate for its range; leaf nodes correspond to individual elements.

- Lazy propagation extends segment trees to support range updates efficiently.

- Used heavily in competitive programming and computational geometry.

Fenwick trees

A Fenwick tree (also called a Binary Indexed Tree, or BIT) provides an elegant way to compute prefix sums and perform point updates, both in time.

- More space-efficient and simpler to implement than a segment tree for problems that only need prefix sums and point updates.

- The key idea uses binary representations of indices to determine which ranges each node covers.

- Applications include counting inversions in an array and answering range sum queries.

Splay trees

A splay tree is a self-adjusting BST that moves recently accessed nodes to the root through a series of rotations called splaying.

- No explicit balance condition is maintained. Instead, the splaying operation gives amortized time per operation.

- Frequently accessed elements end up near the root, making future accesses faster.

- Particularly useful when access patterns are non-uniform (some elements are accessed much more often than others).

Trees in graph theory

In graph theory, trees are connected acyclic graphs. Several important problems involve finding or analyzing trees within larger graphs.

Spanning trees

A spanning tree of a connected graph is a subgraph that includes all vertices, is connected, and has no cycles.

- A spanning tree of a graph with vertices contains exactly edges.

- You can find a spanning tree using DFS or BFS on the original graph.

- The number of spanning trees of the complete graph is , a result known as Cayley's formula. For example, has spanning trees.

Minimum spanning trees

A minimum spanning tree (MST) is a spanning tree whose total edge weight is as small as possible.

- Kruskal's algorithm: Sort all edges by weight. Add edges in order from lightest to heaviest, skipping any edge that would create a cycle. Uses a disjoint-set (union-find) data structure.

- Prim's algorithm: Start from any vertex. Repeatedly add the cheapest edge connecting a vertex in the tree to a vertex outside it. Uses a priority queue.

- Both run in time for a graph with edges and vertices.

- Applications include network design, clustering, and image segmentation.

Steiner trees

The Steiner tree problem asks for the shortest tree connecting a specified subset of vertices, potentially using additional vertices not in the subset.

- This generalizes the MST problem and is NP-hard in general.

- Approximation algorithms can find solutions within a constant factor of optimal.

- Applications include VLSI circuit design and telecommunications network planning.

- Variants include the metric Steiner tree (where edge weights satisfy the triangle inequality) and the rectilinear Steiner tree (using only horizontal and vertical segments).