Definition of inductive reasoning

Inductive reasoning is the process of drawing general conclusions from specific observations or examples. Instead of starting with a rule and applying it (that's deductive reasoning), you start with particular instances and work toward a broader principle.

This distinction matters because inductive reasoning is how most new knowledge gets created. You notice something happening repeatedly, spot a pattern, and propose a general rule. It's the engine behind scientific discovery and a huge part of everyday problem-solving.

Characteristics of inductive reasoning

- Conclusions are probability-based, not absolutely certain. Even a strong inductive argument could turn out wrong.

- It relies on empirical evidence and observed patterns.

- The strength of your conclusion depends on both the quality and quantity of supporting evidence.

- It allows you to predict future events or unobserved cases based on what you've already seen.

- Conclusions are always open to revision as new information comes in.

Inductive vs deductive reasoning

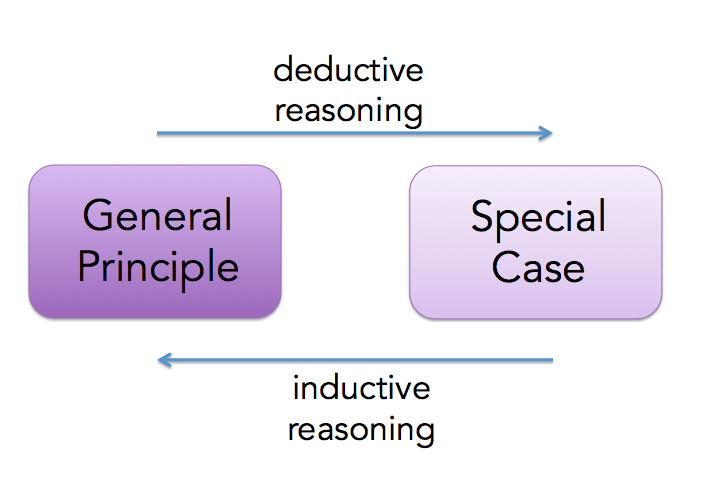

These two types of reasoning move in opposite directions:

- Inductive moves from specific observations to general conclusions. Deductive moves from general premises to specific conclusions.

- Inductive conclusions are probable. Deductive conclusions are certain, as long as the premises are true and the argument is logically valid.

- Inductive reasoning can introduce genuinely new ideas. Deductive reasoning rearranges what's already contained in the premises.

- Scientists rely heavily on induction when discovering new patterns. Mathematicians rely on deduction when constructing formal proofs.

In practice, both types work together. You might use induction to notice a pattern, then use deduction to work out what else must be true if the pattern holds, and check those predictions.

Types of inductive arguments

Generalization

A generalization draws a broad conclusion about a population based on a sample. For example, if you survey 1,000 voters and 62% support a policy, you might generalize that roughly 62% of all voters support it.

- Strength depends on sample size and representativeness. A survey of 50 people from one city won't tell you much about an entire country.

- This is the reasoning behind polls, clinical trials, and scientific studies.

- The main pitfall is hasty generalization, where you draw a sweeping conclusion from too few or biased observations.
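To put a number on "roughly 62%," here is a minimal Python sketch of the margin of error for a sample proportion. It assumes a simple random sample and the usual normal approximation at a 95% confidence level (z of about 1.96). The function name `margin_of_error` is made up for illustration.

```python
import math

def margin_of_error(p, n, z=1.96):
    """Approximate margin of error for a sample proportion p from n respondents.

    Assumes simple random sampling and the normal approximation;
    z=1.96 corresponds to a 95% confidence level.
    """
    return z * math.sqrt(p * (1 - p) / n)

# Compare the 1,000-voter survey with a 50-person survey at the same 62% result.
for n in (50, 1_000):
    moe = margin_of_error(0.62, n)
    print(f"n={n}: 62% plus or minus {moe * 100:.1f} points")
```

With 1,000 respondents the estimate is good to about 3 percentage points; with 50 it is closer to 13. Note that this formula only captures random sampling error. It says nothing about a biased sample, which no amount of extra respondents will fix.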

Analogy

Analogical reasoning compares two things that share certain features, then concludes that what's true of one is likely true of the other. For instance, if Drug A worked well on mice and mice share key biological traits with humans, you might argue Drug A could work on humans too.

- The argument gets stronger with more relevant similarities and fewer critical differences.

- Legal reasoning uses this constantly: courts apply past rulings (precedents) to new cases that share similar facts.

- A weak analogy ignores important differences between the two things being compared, which can lead to false conclusions.

Causal reasoning

Causal reasoning infers that one event or condition causes another. You notice that every time you eat shellfish you get a rash, so you conclude shellfish causes the rash.

- The biggest trap here is confusing correlation with causation. Two things happening together doesn't mean one causes the other. Ice cream sales and drowning rates both rise in summer, but ice cream doesn't cause drowning.

- Strong causal arguments consider temporal sequence (the cause comes before the effect), consistency across observations, and alternative explanations.

- Controlled experiments and statistical analysis help strengthen causal claims.
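The ice cream example can be sketched with made-up numbers. In the Python snippet below, temperature drives both ice cream sales and drownings, and the two never influence each other. The data, the `pearson` helper, and the variable names are all assumptions for illustration, not real statistics.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

# Made-up data: each day is (temperature, ice cream sales, drownings).
# Temperature pushes both numbers up; a and b are independent day-to-day wobble.
days = [
    (temp, 10 * temp + 5 * a, temp // 5 + b)
    for temp in (10, 20, 30)
    for a in (-1, 1)
    for b in (-1, 1)
]

overall = pearson([d[1] for d in days], [d[2] for d in days])
print(f"all days: r = {overall:.2f}")

# Hold temperature fixed and compare only days with the same weather.
for temp in (10, 20, 30):
    same_temp = [d for d in days if d[0] == temp]
    r = pearson([d[1] for d in same_temp], [d[2] for d in same_temp])
    print(f"temperature {temp}: r = {r:.2f}")
```

Across all days the correlation is strong (about 0.85), but once you compare only days with the same temperature it drops to zero. Checking whether an association survives when a suspected third factor is held constant is one simple way to take alternative explanations seriously.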

Statistical syllogism

A statistical syllogism applies a statistical generalization about a group to a specific individual. For example: "90% of students who complete all practice problems pass the exam. You completed all practice problems. So you'll probably pass."

- The strength depends on how reliable the statistic is and how well it applies to the specific case.

- This type of reasoning is used in medical diagnosis, risk assessment, and predictive analytics.

- It can break down when the individual has unique circumstances that make them an exception to the general trend.

Steps in inductive reasoning

Inductive reasoning follows a progression from raw data to broad explanations. Here are the four main stages:

1. Observation

You start by systematically collecting data or information. This could mean running an experiment, conducting a survey, or simply paying close attention to what's happening around you. The key is accuracy and objectivity: if your observations are biased or sloppy, everything built on them will be unreliable.

2. Pattern recognition

Next, you look for regularities, trends, or relationships in your data. Maybe you notice that a certain chemical reaction always produces heat, or that sales spike every December. Pattern recognition can involve visual inspection, numerical analysis, or data visualization tools like graphs and charts.

3. Hypothesis formation

Once you spot a pattern, you develop a tentative explanation or prediction. A good hypothesis is testable and falsifiable, meaning you can design an observation or experiment that could prove it wrong. Hypotheses are often framed as "if-then" statements: If temperature increases, then the reaction rate will increase.

4. Theory development

When multiple hypotheses survive repeated testing, they can be integrated into a broader theory. A theory is a comprehensive explanation that accounts for many observations and has strong predictive power. Theories aren't guesses; they're well-supported frameworks that guide future research. Sometimes a new theory overturns an old one entirely, as when the Sun-centered model of the solar system proposed by Copernicus eventually displaced the Earth-centered one.
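What makes the "if-then" hypothesis from step 3 falsifiable is that you can say in advance what data would contradict it. Here is a rough Python sketch of that check. The measurements and the function name `find_violations` are hypothetical.

```python
from itertools import combinations

def find_violations(observations):
    """Return pairs of (temperature, rate) observations that contradict
    'if temperature increases, then the reaction rate increases'."""
    return [
        (low, high)
        for low, high in combinations(sorted(observations), 2)
        if low[0] < high[0] and low[1] >= high[1]
    ]

# Hypothetical measurements: (temperature in degrees C, reaction rate in arbitrary units)
supporting = [(20, 1.0), (30, 1.9), (40, 4.1), (50, 7.8)]
contradicting = supporting + [(60, 5.0)]

print(find_violations(supporting))      # []
print(find_violations(contradicting))   # [((50, 7.8), (60, 5.0))]
```

An empty result doesn't prove the hypothesis. It only means the hypothesis has survived the observations collected so far, which is exactly the kind of support induction can offer.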

Strength of inductive arguments

Strong vs weak induction

Not all inductive arguments are equally convincing. A strong inductive argument makes its conclusion highly probable given the evidence. A weak one offers some support but leaves plenty of room for doubt.

For example, observing that the sun has risen every day for all of recorded history gives you a strong inductive argument that it will rise tomorrow. Observing that two of your friends liked a movie gives you a weak inductive argument that everyone will like it.

Strong arguments resist counterexamples and alternative explanations. Weak arguments crumble quickly when challenged.

Factors affecting argument strength

- Sample size and representativeness for generalizations

- Number and relevance of similarities for analogies

- Control of variables and replication for causal reasoning

- Quality and reliability of data sources

- Whether alternative explanations have been considered

- Overall logical consistency of the reasoning

Applications of inductive reasoning

Scientific method

Inductive reasoning is baked into the scientific method. Scientists observe phenomena, recognize patterns, form hypotheses, and develop theories. Each round of experimentation can refine or overturn earlier conclusions. This iterative cycle of observation, prediction, and testing is what makes science self-correcting over time.

Machine learning

Machine learning algorithms are essentially automated inductive reasoning. They analyze large datasets, detect patterns, and use those patterns to make predictions or classifications. Image recognition, language translation, and recommendation systems all work this way. As a rule, the more diverse and representative the training data, the better the algorithm generalizes to cases it hasn't seen.
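As a toy illustration of that loop (observe examples, extract a pattern, predict an unseen case), here is a least-squares line fit in plain Python. It stands in for far more complex models. The data points and the name `fit_line` are assumptions made up for this sketch.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Specific observations (hypothetical): inputs and measured outputs.
xs = [1, 2, 3, 4, 5]
ys = [3.1, 4.9, 7.2, 8.8, 11.0]

# The general rule induced from them.
slope, intercept = fit_line(xs, ys)

# A prediction for an input that was never observed.
prediction = slope * 6 + intercept
print(f"y is about {slope:.2f} * x + {intercept:.2f}; predicted y at x=6: {prediction:.2f}")
```

The prediction for x = 6 is only as good as the assumption that the pattern continues beyond the observed range. That is the same caveat that applies to any inductive conclusion.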

Everyday decision-making

You use inductive reasoning constantly without thinking about it. If a restaurant has been good every time you've visited, you predict it'll be good next time. If traffic is always bad on Friday afternoons, you leave early. These are informal inductive arguments based on past experience, and they guide most of your daily choices.

Limitations of inductive reasoning

Problem of induction

The philosopher David Hume raised a fundamental challenge in the 18th century: what rational justification do we have for assuming the future will resemble the past? Just because the sun has always risen doesn't logically guarantee it will rise tomorrow. And you can't justify induction by saying "it's worked before," because that's itself an inductive argument. This circularity is known as Hume's problem of induction, and it remains a live debate in philosophy of science.

Fallacies in inductive reasoning

Watch for these common errors:

- Hasty generalization: Drawing conclusions from too few or biased observations. ("Two people from that city were rude, so everyone there must be rude.")

- Post hoc ergo propter hoc: Assuming that because event B followed event A, A caused B. ("I wore my lucky socks and we won, so the socks caused the win.")

- Cherry-picking: Selecting only the data that supports your conclusion while ignoring contradictory evidence.

- Gambler's fallacy: Believing that past random events affect future probabilities. ("The coin landed heads five times, so tails is 'due.'")

- Confirmation bias: Seeking out only evidence that supports what you already believe.
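You can check the gambler's fallacy directly by listing every possible outcome. The Python sketch below assumes a fair coin with independent flips, enumerates all six-flip sequences, and looks only at those that begin with five heads.

```python
from fractions import Fraction
from itertools import product

# Every equally likely outcome of six fair coin flips.
sequences = list(product("HT", repeat=6))

# Keep only the sequences that start with five heads.
after_five_heads = [s for s in sequences if s[:5] == ("H",) * 5]

# Among those, how often is the sixth flip tails?
p_tails = Fraction(
    sum(s[5] == "T" for s in after_five_heads),
    len(after_five_heads),
)
print(p_tails)  # 1/2
```

Tails is no more "due" after five heads than on any other flip: the probability is still one half.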

Evaluating inductive arguments

Assessing sample size

Larger samples generally produce more reliable generalizations. A poll of 10,000 people is more trustworthy than a poll of 50. But size alone isn't enough; you also need to consider statistical significance and margin of error. A massive but poorly designed study can still produce misleading results.

Representativeness of samples

A sample needs to reflect the characteristics of the larger population. If you're studying national voting trends but only survey college students in one state, your sample isn't representative. Random sampling helps reduce systematic bias, and good studies ensure their samples capture the relevant variables (age, geography, income, etc.).
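A quick worked example shows how far off an unrepresentative sample can be. The population shares and support levels below are invented for illustration.

```python
# Hypothetical population: group -> (share of all voters, support for a policy)
groups = {
    "college students": (0.10, 0.80),
    "everyone else": (0.90, 0.50),
}

# True support weights each group by its share of the population.
true_support = sum(share * support for share, support in groups.values())

# A survey that reaches only college students sees just their support level.
students_only = groups["college students"][1]

print(f"whole population: {true_support:.0%}")      # 53%
print(f"students-only sample: {students_only:.0%}")  # 80%
```

Surveying more students would not close that gap. The error comes from who was sampled, not from how many.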

Consideration of counterexamples

Actively looking for cases that contradict your conclusion is one of the best ways to test an inductive argument. Not every counterexample destroys an argument; some are minor exceptions. But if counterexamples are frequent or strike at the core of your reasoning, the argument needs to be revised or abandoned.

Historical perspectives on induction

Hume's problem of induction

David Hume argued in the 18th century that inductive reasoning has no purely logical foundation. His core point: past experience can't logically guarantee future outcomes. If you try to justify induction by saying "it's always worked before," you're using induction to justify induction, which is circular. This challenge influenced centuries of debate about epistemology and the foundations of scientific knowledge.

Mill's methods

John Stuart Mill developed five systematic methods in the 19th century for identifying causal relationships:

- Method of Agreement: If two or more cases of a phenomenon share only one common factor, that factor is likely the cause.

- Method of Difference: If a case where the phenomenon occurs and one where it doesn't differ in only one factor, that factor is likely the cause.

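- Joint Method: Combines agreement and difference. The likely cause is the factor present in every case where the phenomenon occurs and absent in every case where it doesn't.

- Method of Residues: Subtract the part of a phenomenon already explained by known causes; whatever is left over is likely the effect of the remaining factors.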

- Method of Concomitant Variation: If a factor and a phenomenon vary together, they're likely causally related.

These methods laid groundwork for modern experimental design and data analysis, though Mill recognized that observational studies alone have limits in establishing causation.
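The first two methods translate naturally into set operations. Here is a simplified Python sketch that assumes each case can be described as a set of present factors. The function names and the food-poisoning data are hypothetical, and real cases are rarely this clean.

```python
def method_of_agreement(cases_with_effect):
    """Factors shared by every case in which the phenomenon occurred."""
    return set.intersection(*cases_with_effect)

def method_of_difference(case_with_effect, case_without_effect):
    """The single factor that separates the two cases, if there is exactly one
    and it is present where the phenomenon occurred."""
    differing = case_with_effect ^ case_without_effect
    if len(differing) == 1 and differing <= case_with_effect:
        return differing
    return set()

# Hypothetical example: what did diners who got sick have in common?
sick_diners = [
    {"salad", "fish", "water"},
    {"fish", "bread", "wine"},
    {"fish", "soup", "water"},
]
print(method_of_agreement(sick_diners))  # {'fish'}

# Two diners with otherwise identical meals; only one got sick.
print(method_of_difference({"fish", "bread", "water"}, {"bread", "water"}))  # {'fish'}
```

Both functions return a candidate cause, not a proven one. They only consider the factors you thought to record, which is one reason observational evidence alone has limits.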

Inductive reasoning in mathematics

Mathematical induction

Despite the name, mathematical induction is actually a deductive proof technique. It proves that a statement holds for all natural numbers by establishing two things:

- Base case: Show the statement is true for the first value (usually $n = 1$).

- Inductive step: Show that if the statement is true for some arbitrary $k$, then it must also be true for $k + 1$.

Once both steps are established, the statement is proven for every natural number, with certainty. This differs from empirical induction, which only gives probability. Mathematical induction is widely used in number theory, combinatorics, and computer science.
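As a worked example (a standard one, not taken from the text above), here is how the two steps prove that $1 + 2 + \dots + n = \frac{n(n+1)}{2}$ for every natural number $n \ge 1$. The symbol $k$ stands for the arbitrary value assumed in the inductive step.

```latex
\textbf{Base case } (n = 1):
\[
  \sum_{i=1}^{1} i = 1 = \frac{1 \cdot (1 + 1)}{2}.
\]

\textbf{Inductive step:} assume the formula holds for some $k \ge 1$, i.e.
\[
  \sum_{i=1}^{k} i = \frac{k(k+1)}{2}.
\]
Then for $k + 1$:
\[
  \sum_{i=1}^{k+1} i
  = \frac{k(k+1)}{2} + (k + 1)
  = \frac{k(k+1) + 2(k+1)}{2}
  = \frac{(k+1)(k+2)}{2},
\]
which is the formula with $n = k + 1$.
```

Nothing here depends on checking many cases. The conclusion follows with certainty from the two steps, which is why this counts as deduction.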

Proof by example

Showing that a statement holds for specific cases can provide insight but doesn't prove it holds universally. If someone claims "all prime numbers are odd," a single counterexample (2 is prime and even) disproves the claim entirely.

Proof by example is most useful for:

- Disproving universal statements (one counterexample is enough)

- Building intuition about a pattern before attempting a formal proof

- Illustrating how a general property works in concrete instances
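A classic illustration of why examples aren't proof is Euler's polynomial $n^2 + n + 41$, which produces a prime for every $n$ from 0 to 39 and then fails. The short Python sketch below searches for the first counterexample to a claim. The helper names `is_prime` and `first_counterexample` are made up for illustration.

```python
def is_prime(n):
    """Trial-division primality test (fine for small numbers)."""
    if n < 2:
        return False
    divisor = 2
    while divisor * divisor <= n:
        if n % divisor == 0:
            return False
        divisor += 1
    return True

def first_counterexample(claim, candidates):
    """Return the first candidate for which the claim is false, or None."""
    for n in candidates:
        if not claim(n):
            return n
    return None

# Claim 1: "all prime numbers are odd."
primes = [n for n in range(100) if is_prime(n)]
print(first_counterexample(lambda p: p % 2 == 1, primes))  # 2

# Claim 2: "n*n + n + 41 is always prime."
print(first_counterexample(lambda n: is_prime(n * n + n + 41), range(100)))  # 40
```

Forty confirming cases in a row would make a persuasive inductive argument, yet the claim is false: at $n = 40$ the value is $1681 = 41^2$.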

Inductive reasoning in other disciplines

Induction in natural sciences

Physics, chemistry, and biology all depend on inductive reasoning to move from experimental observations to general laws. A biologist observing that a particular gene mutation consistently produces a specific trait across hundreds of organisms uses induction to propose a general genetic mechanism. The strength of scientific induction comes from repeated testing, peer review, and the willingness to revise conclusions when new data contradicts them.

Induction in social sciences

Psychology, sociology, and economics use induction to study human behavior and social patterns. Qualitative methods like grounded theory build theories directly from interview and observational data rather than testing pre-existing hypotheses. Market researchers analyze consumer behavior patterns to predict trends.

A key challenge in the social sciences is that human behavior is complex and context-dependent, making it harder to generalize findings across different populations and cultures.