Network flows use directed graphs to model and optimize how resources move through interconnected systems. Whether you're routing data through a computer network, managing traffic, or distributing electricity, the same mathematical framework applies. This topic covers the core definitions, key algorithms, and the surprisingly deep connections between flows, cuts, and graph theory.

Fundamentals of network flows

Definition and terminology

A flow network is a directed graph with two special vertices: a source (where flow originates) and a sink (where flow is collected). Every edge has a capacity , which is the maximum amount of "stuff" that can pass through it.

A flow assigns a value to each edge, representing how much material actually moves through it. Two constraints govern every valid flow:

- Capacity constraint: for all edges. You can't exceed an edge's capacity.

- Skew symmetry: for all vertex pairs. This is a bookkeeping convention that makes the math cleaner: if 5 units flow from to , then units flow from to .

Components of flow networks

- Vertices represent junctions in the network (intersections, routers, warehouses)

- Edges represent connections with associated capacities (roads, cables, pipelines)

- Source generates flow; sink absorbs flow

- Internal nodes are intermediaries that neither create nor destroy flow

- The residual network shows how much additional flow each edge can still carry after some flow has been assigned

Flow conservation principle

At every internal vertex (everything except and ), the total flow in must equal the total flow out:

Think of it like water through pipes: nothing leaks and nothing accumulates at intermediate junctions. This conservation law is what makes the entire framework work, and it forms the basis for both maximum flow and minimum cost flow problems.

Maximum flow problem

The maximum flow problem asks: what is the greatest total flow you can push from source to sink while respecting all capacity constraints?

Ford-Fulkerson method

The Ford-Fulkerson method is the foundational approach for finding maximum flow. The core idea is to repeatedly find paths that can carry more flow and push flow along them.

- Initialize all edge flows to zero.

- Find an augmenting path from to in the residual network (any path with remaining capacity on every edge).

- Determine the bottleneck: the smallest residual capacity along that path.

- Augment flow along the entire path by the bottleneck amount.

- Update the residual network to reflect the new flow.

- Repeat steps 2-5 until no augmenting path exists.

When no more augmenting paths can be found, the current flow is maximum. The time complexity depends on how you choose augmenting paths, which is why specific implementations matter.

Edmonds-Karp algorithm

Edmonds-Karp is Ford-Fulkerson with one specific rule: always use breadth-first search (BFS) to find the augmenting path. This guarantees you always pick the shortest augmenting path (fewest edges).

This small change has a big payoff: the algorithm runs in time, which is polynomial regardless of the capacity values. Without BFS, Ford-Fulkerson can behave poorly on networks with large or irrational capacities.

Push-relabel algorithm

Push-relabel takes a fundamentally different approach. Instead of finding full paths from source to sink, it works locally:

- Start by pushing as much flow as possible out of the source (creating "excess" at neighboring vertices).

- Push excess flow from overflowing vertices toward the sink.

- Relabel vertices when no downhill push is possible, effectively raising them so flow can continue moving toward the sink.

This algorithm maintains a preflow (which can temporarily violate conservation at internal nodes) and gradually converts it into a valid maximum flow. With the right data structures, it achieves or even , and it tends to perform well on dense graphs.

Minimum cost flow problem

The minimum cost flow problem adds a twist: each edge has both a capacity and a per-unit cost. The goal is to send a specified amount of flow from source to sink at the lowest total cost. This combines flow maximization with cost minimization.

Network simplex method

This adapts the simplex method from linear programming to exploit network structure:

- Start with a spanning tree representing an initial feasible flow.

- Find an entering arc with negative reduced cost (meaning adding flow there would decrease total cost).

- Update the flow along the tree path affected by the new arc.

- Perform a pivot to swap arcs and maintain the spanning tree structure.

- Repeat until no negative reduced cost arcs remain.

Because it exploits the tree structure of networks, pivots are much faster than in general LP. It runs in polynomial time in practice, even though its theoretical worst case is exponential.

Cycle-canceling algorithm

This algorithm starts with any feasible flow (ignoring costs) and then improves it:

- Find a feasible flow that satisfies all capacity and demand constraints.

- Look for a negative-cost cycle in the residual network.

- Push as much flow as possible around that cycle, reducing total cost.

- Repeat until no negative-cost cycles remain.

When no negative-cost cycles exist, the flow is cost-optimal. The running time depends on how you detect cycles and the structure of the network.

Successive shortest path algorithm

This approach builds up the optimal flow incrementally:

- Initialize flow to zero and set node potentials.

- Find the shortest path (by cost) from to in the residual network.

- Push as much flow as possible along that path.

- Update node potentials (these keep edge costs non-negative, enabling efficient shortest-path computations).

- Repeat until the desired flow amount is reached.

This provides a clean primal-dual interpretation and works especially well when the total flow value is small relative to the network size.

Applications of network flows

Transportation networks

Road systems, rail networks, and air routes map naturally onto flow networks. Vertices are intersections, stations, or airports. Edges are routes, with capacities measured in vehicles per hour or passengers per trip.

- Traffic flow optimization: find maximum throughput or identify bottleneck roads

- Evacuation planning: route people from danger zones to safety as quickly as possible

- Supply chain management: move goods from factories to warehouses to customers at minimum cost

Communication systems

Data networks are flow networks where nodes are routers or servers and edges are communication links with bandwidth capacities.

- Network capacity planning: determine maximum data throughput between endpoints

- Load balancing: distribute traffic across servers to avoid overloading any single one

- Quality of service management: allocate bandwidth to meet priority requirements

Resource allocation

Any situation where limited resources must be distributed across competing demands can be modeled as a flow problem. Vertices represent supply points, demand points, and intermediaries; edges represent possible allocation paths.

- Energy grid management (routing power from generators to consumers)

- Water distribution systems

- Project scheduling and resource assignment

Duality in network flows

Max-flow min-cut theorem

This is one of the most important results in combinatorics. It states:

The value of the maximum flow from to equals the capacity of the minimum - cut.

An - cut is a partition of the vertices into two sets and where and . The capacity of the cut is the sum of capacities of all edges going from to . The minimum cut identifies the true bottleneck in the network.

This theorem has applications well beyond flow optimization:

- Network reliability analysis: the min cut tells you the weakest point in a network

- Image segmentation: separating foreground from background in computer vision

- Bipartite matching: connecting max-flow min-cut to matching problems

Linear programming formulation

Every network flow problem can be expressed as a linear program:

- The primal formulation maximizes flow subject to capacity and conservation constraints.

- The dual formulation minimizes cut capacity.

- Dual variables represent potentials or "prices" associated with vertices.

This connection means you can use general LP solvers for flow problems, and it reveals economic interpretations: dual variables act as shadow prices showing how much an extra unit of capacity at a bottleneck edge would be worth.

Complementary slackness

Complementary slackness connects optimal primal and dual solutions:

- For maximum flow: a saturated edge (flow = capacity) corresponds to a tight dual constraint; an unsaturated edge corresponds to a zero-valued dual variable.

- For minimum cost flow: positive flow on an edge means the reduced cost equation holds with equality; zero flow allows slack.

These conditions are useful for verifying that a solution is truly optimal and for performing sensitivity analysis (how does the solution change if parameters shift slightly?).

Advanced network flow concepts

Multi-commodity flows

In many real scenarios, multiple types of flow share the same network simultaneously. Each commodity has its own source(s) and sink(s), but all commodities share edge capacities.

For example, a telecommunications network might carry voice, video, and data traffic on the same links. The shared capacity constraints create interdependencies that make the problem significantly harder than single-commodity flow. Specialized techniques like column generation and path-based formulations are typically needed.

Generalized flows

Standard flows conserve quantity along edges, but generalized flows allow edges to multiply or reduce flow. Each edge has a gain factor , so if you send units in, units come out.

Flow conservation becomes:

This models currency exchange (converting dollars to euros at some rate), energy conversion (heat loss in transmission), or chemical reactions. A new complication arises: flow-generating cycles where flow can be amplified by circulating through a loop.

Dynamic flows

Standard flow models are static, but dynamic flows add a time dimension. Edges have both capacities and transit times (how long it takes flow to traverse them), and flow values can change over time.

Two main modeling approaches exist:

- Time-expanded networks: create copies of the network for each time step

- Continuous-time models: treat time as a continuous variable

Applications include evacuation planning with deadlines, traffic prediction, and scheduling time-sensitive deliveries.

Network flow algorithms

Complexity analysis

Choosing the right algorithm depends on the network's structure:

- Edmonds-Karp: , reliable general-purpose choice

- Push-relabel: or , better for dense graphs

- Sparse vs. dense matters: an algorithm that's fast on dense graphs may not be the best choice when is close to

Space complexity and average-case performance also factor into practical algorithm selection.

Approximation algorithms

For very large networks, exact solutions may be too expensive to compute. Approximation algorithms provide near-optimal solutions with guaranteed bounds on how far they can be from optimal.

- Rounding: solve a relaxed (fractional) version, then round to integers

- Primal-dual methods: simultaneously build primal and dual solutions

- Local search heuristics: iteratively improve a feasible solution

- FPTAS (Fully Polynomial-Time Approximation Schemes): for certain flow problems, you can get within of optimal in time polynomial in both the input size and

Parallel algorithms

Large-scale networks benefit from parallel computation. Classic algorithms have parallel variants:

- Distributed Ford-Fulkerson

- Parallel push-relabel (particularly well-suited since push operations are local)

- Parallel network simplex

The main challenges are maintaining global consistency across processors and minimizing communication overhead.

Graph theory connections

Matchings and network flows

Maximum bipartite matching reduces directly to maximum flow. Given a bipartite graph with left vertices and right vertices :

- Add a source connected to every vertex in .

- Add a sink connected from every vertex in .

- Set all edge capacities to 1.

- Solve for maximum integer flow.

Each unit of flow corresponds to a matched pair. This reduction means any max-flow algorithm immediately solves matching problems like job assignment, college admissions, and the stable marriage problem.

Connectivity and network flows

Flow techniques directly measure how well-connected a graph is:

- Edge connectivity (minimum number of edges whose removal disconnects two vertices) equals the max flow between those vertices with unit capacities.

- Vertex connectivity can be found by transforming the graph (splitting each vertex into two connected by a unit-capacity edge) and computing max flow.

- Menger's theorem states that the maximum number of edge-disjoint paths between two vertices equals the minimum edge cut between them. This is essentially the max-flow min-cut theorem applied to unit-capacity networks.

Circulation problem

A circulation generalizes max flow by removing the source and sink entirely. Flow conservation must hold at every vertex, and edges can have both lower and upper bounds on flow.

A feasible circulation exists if and only if no cut violates the bound constraints. You can solve circulation problems by transforming them into standard max flow: add a super-source and super-sink to handle the lower bounds.

Applications include periodic scheduling, inventory management, and balanced transportation problems.

Practical considerations

Data structures for networks

The choice of data structure significantly affects algorithm performance:

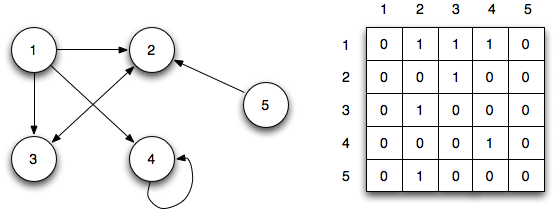

- Adjacency lists for sparse graphs (most real-world networks)

- Adjacency matrices for dense graphs (fast edge lookups but memory)

- Dynamic trees (link-cut trees) for the push-relabel algorithm, enabling path operations

- Fibonacci heaps for shortest path computations in minimum cost flow algorithms

For large networks, cache-friendly memory layouts can matter as much as asymptotic complexity.

Implementation techniques

Several practical strategies improve real-world performance beyond what complexity analysis predicts:

- Capacity scaling: start with large flow units and progressively refine, reducing the number of augmenting paths needed

- Path selection heuristics: choosing the "fattest" augmenting path (maximum bottleneck) often works better than arbitrary selection

- Global relabeling: periodically recompute all vertex labels in push-relabel using BFS, which dramatically speeds up convergence

- Warm-starting: when solving a sequence of related problems, reuse the previous solution as a starting point

- Numerical precision: careful handling of floating-point arithmetic prevents subtle bugs in cost computations

Scaling techniques

Networks with very large or very varied capacities/costs benefit from scaling approaches:

- Capacity scaling for max flow: process edges in phases, starting with the highest-capacity edges and halving the threshold each round. This reduces the number of augmenting paths from potentially huge to where is the maximum capacity.

- Cost scaling for min-cost flow: gradually increase cost precision, maintaining -optimal solutions at each phase. This avoids the sensitivity to cost magnitudes that plagues some algorithms.

Both techniques improve worst-case complexity and provide practical benefits when capacity or cost values span many orders of magnitude.

Network flow extensions

Flows with demands

Standard flow networks only have upper bounds on edges. Flows with demands add vertex-specific requirements: each vertex has a demand specifying how much net flow it must absorb (or supply, if ).

Flow conservation becomes:

This models production-distribution systems (factories supply, stores demand), power grid load balancing, and waste management. These problems are solved by transforming them into standard max flow with an added super-source and super-sink.

Flows over time

Flows over time extend dynamic flows with richer temporal modeling. Edges have both capacities and transit times, and flow can vary at each edge over time.

Key variants include:

- Earliest arrival flows: maximize flow reaching the sink at every point in time simultaneously

- Maximum flow over time: maximize total flow delivered within a time horizon

These are critical for emergency evacuation planning (where timing matters as much as throughput), traffic management, and packet routing in communication networks.

Robust network flows

Real networks have uncertain parameters: demand fluctuates, edges fail, capacities vary. Robust network flows seek solutions that perform well across a range of scenarios.

- Stochastic programming: model uncertainty with probability distributions

- Robust optimization: guarantee performance under worst-case scenarios within an uncertainty set

- Chance-constrained models: allow constraints to be violated with small probability

The tradeoff is always between optimality (best performance under expected conditions) and robustness (acceptable performance under adverse conditions).