Fundamentals of optical flow

Optical flow estimates the apparent motion of brightness patterns between consecutive video frames. It produces a 2D vector field where each vector describes how far (and in what direction) a pixel has moved from one frame to the next. This makes it essential for tasks like object tracking, motion segmentation, video compression, and action recognition.

Definition and concept

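Optical flow captures the apparent motion of brightness patterns in an image sequence. When objects move in a 3D scene, that motion is projected onto the 2D image plane (the motion field). Optical flow is an estimate of that projection recovered from brightness changes, so the two can differ where brightness changes without motion or where motion produces no brightness change.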

The result is a vector field: at each pixel (or at selected pixels), you get a displacement vector describing horizontal and vertical motion. These vectors are computed using temporal gradients (how intensity changes over time) and spatial gradients (how intensity changes across the image). Flow fields are typically visualized with color-coded maps (where hue represents direction and saturation represents magnitude) or with arrow overlays on the image.
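The color-coded visualization can be sketched in a few lines. This is a minimal illustration, assuming the flow is given as two arrays `u` and `v`; the function name `flow_to_rgb` and the particular mapping of angle to hue are choices made here, not a standard.

```python
import colorsys
import numpy as np

def flow_to_rgb(u, v):
    """Colour-code a flow field: hue = direction, saturation = magnitude."""
    u = np.asarray(u, dtype=float)
    v = np.asarray(v, dtype=float)
    mag = np.hypot(u, v)
    max_mag = mag.max() if mag.max() > 0 else 1.0      # avoid dividing by zero
    hue = (np.arctan2(v, u) + np.pi) / (2 * np.pi)     # angle mapped to [0, 1]
    sat = mag / max_mag                                 # strongest motion = full saturation
    rgb = np.empty(u.shape + (3,))
    for idx in np.ndindex(u.shape):
        # Value is fixed at 1, so zero motion renders as white
        rgb[idx] = colorsys.hsv_to_rgb(hue[idx] % 1.0, sat[idx], 1.0)
    return rgb
```

Static pixels come out white and the fastest pixels fully saturated, which makes systematic errors (for example, a tinted background that should be static) easy to spot.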

Applications in computer vision

- Object tracking follows moving objects across frames using their flow vectors

- Motion segmentation separates foreground objects from the background by grouping pixels with similar motion patterns

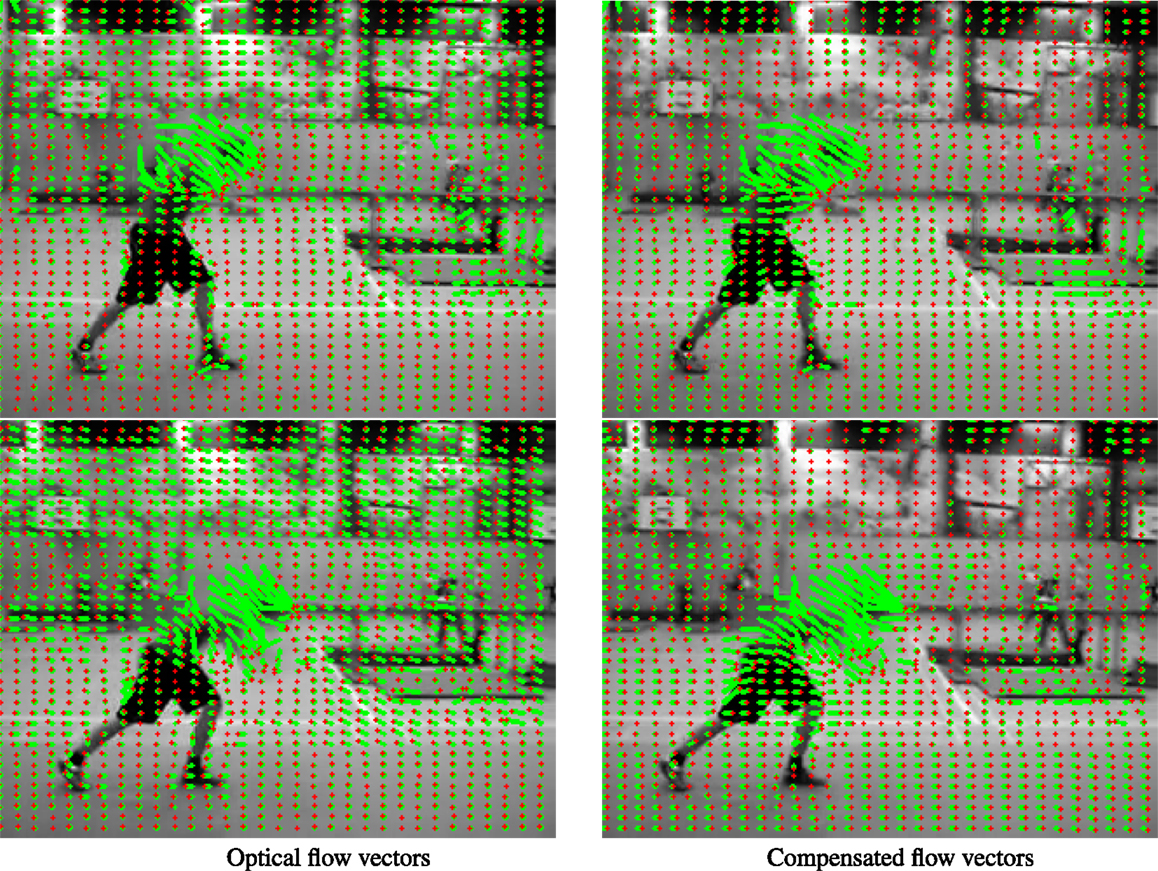

- Video stabilization compensates for camera shake by estimating the global motion of the scene and subtracting it

- Action recognition classifies human activities by analyzing the characteristic flow patterns they produce (e.g., walking vs. running)

- Depth estimation infers 3D structure from 2D motion cues in monocular video, since closer objects tend to move faster in the image

Assumptions and limitations

Most optical flow algorithms rely on three core assumptions:

- Brightness constancy: pixel intensities don't change between frames (the same surface point looks the same in both frames)

- Small motion: displacements between frames are small enough to allow linearization of the intensity function

- Spatial coherence: neighboring pixels tend to move together

These assumptions break down in practice. Occlusions (objects hiding behind other objects), illumination changes, specular reflections, and fast motion all violate one or more of them. Understanding where these assumptions fail helps you predict when an algorithm will struggle.

Motion estimation techniques

Motion estimation is the computational backbone of optical flow. There are three broad families of approaches, each balancing accuracy, speed, and robustness differently.

Block matching methods

Block matching divides each frame into small rectangular blocks and searches for the best-matching block in the next frame. "Best match" is determined by a similarity measure like Sum of Absolute Differences (SAD) or Sum of Squared Differences (SSD).

Different search strategies control how many candidate positions are checked:

- Exhaustive search checks every possible position within a search window (accurate but slow)

- Three-step search progressively narrows the search area (faster but may miss the global best match)

Block matching handles large motions well since it doesn't rely on linearization. However, it yields only one vector per block, is limited to integer-pixel accuracy unless sub-pixel interpolation is added, and becomes ambiguous in regions with uniform texture, where many candidate blocks look identical.
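Exhaustive search with SAD can be sketched as follows. This is a minimal illustration, not a codec-grade implementation; the function name `match_block` and the default block and search sizes are assumptions chosen for the example.

```python
import numpy as np

def match_block(prev, curr, top, left, block=8, search=4):
    """Exhaustive SAD search: find where the block at (top, left) in `prev` moved to in `curr`."""
    ref = prev[top:top + block, left:left + block].astype(float)
    best_sad, best_dy, best_dx = np.inf, 0, 0
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            y, x = top + dy, left + dx
            # Skip candidates that fall outside the frame
            if y < 0 or x < 0 or y + block > curr.shape[0] or x + block > curr.shape[1]:
                continue
            cand = curr[y:y + block, x:x + block].astype(float)
            sad = np.abs(ref - cand).sum()          # Sum of Absolute Differences
            if sad < best_sad:
                best_sad, best_dy, best_dx = sad, dy, dx
    return best_dy, best_dx, best_sad
```

The cost grows with the square of the search radius, which is why faster strategies such as three-step search check only a subset of candidates.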

Differential methods

Differential methods compute optical flow directly from the spatial and temporal derivatives of image intensity. They start from the brightness constancy assumption and use a Taylor expansion to derive the optical flow constraint equation:

$$I_x u + I_y v + I_t = 0$$

where $I_x$, $I_y$ are spatial intensity gradients, $I_t$ is the temporal gradient, and $(u, v)$ is the flow vector.

This single equation has two unknowns, so additional constraints are needed (this is the aperture problem at the mathematical level). Differential methods provide sub-pixel accuracy and dense flow fields, but they're sensitive to noise and require the small motion assumption to hold.
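To see why one equation is not enough, it helps to write the constraint as a dot product between the image gradient and the flow. The short derivation below is a sketch; the name $\mathbf{v}_n$ for the recoverable component (often called normal flow) is introduced here for illustration.

$$
\nabla I \cdot (u, v) = -I_t
\quad\Longrightarrow\quad
\mathbf{v}_n = -\frac{I_t}{\|\nabla I\|^2}\,\nabla I,
\qquad \nabla I = (I_x, I_y)
$$

Only the component of the flow along the gradient is pinned down. Any motion along an edge (perpendicular to $\nabla I$) leaves the equation unchanged, so it cannot be recovered from a single pixel.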

Feature-based approaches

Instead of computing flow everywhere, feature-based methods detect distinctive points (corners, blobs) and track them across frames. Feature descriptors like SIFT or SURF make matching robust to viewpoint and illumination changes.

These methods produce sparse flow (only at feature locations) but handle large displacements and partial occlusions well. To get a dense flow field from sparse matches, you need interpolation or regularization to fill in the gaps.

Lucas-Kanade algorithm

The Lucas-Kanade algorithm is one of the most widely used differential optical flow methods. Its key idea: assume that all pixels within a small local patch share the same flow vector $(u, v)$.

Mathematical formulation

Starting from the optical flow constraint equation for each pixel $p_i$ in a patch:

$$I_x(p_i)\,u + I_y(p_i)\,v = -I_t(p_i)$$

A patch of $n$ pixels gives $n$ equations but only two unknowns ($u, v$). This overdetermined system is solved via least squares:

$$\begin{bmatrix} u \\ v \end{bmatrix} = (A^T A)^{-1} A^T b$$

Here, $A$ is an $n \times 2$ matrix of spatial gradients $[I_x(p_i),\ I_y(p_i)]$ for each pixel in the patch, and $b$ is a vector of negative temporal gradients $-I_t(p_i)$.

For this to work, $A^T A$ must be invertible. This requires the patch to contain sufficient gradient variation in both directions. Regions with uniform texture or a single edge direction make $A^T A$ singular or near-singular, which is the aperture problem in Lucas-Kanade terms. Corners and textured regions work best.
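The least-squares solve for a single patch can be sketched as below. This is a simplified illustration: spatial gradients come from the first frame only, the temporal gradient is a plain frame difference, and the function name `lucas_kanade_patch` and the `min_eig` threshold are assumptions made for the example.

```python
import numpy as np

def lucas_kanade_patch(frame1, frame2, cy, cx, half=7, min_eig=1e-6):
    """Estimate one (u, v) for the square patch centred at row cy, column cx."""
    f1 = frame1.astype(float)
    f2 = frame2.astype(float)
    Iy, Ix = np.gradient(f1)          # spatial gradients (axis 0 = y, axis 1 = x)
    It = f2 - f1                      # temporal gradient
    win = (slice(cy - half, cy + half + 1), slice(cx - half, cx + half + 1))
    A = np.stack([Ix[win].ravel(), Iy[win].ravel()], axis=1)   # n x 2 gradient matrix
    b = -It[win].ravel()                                        # negative temporal gradients
    ATA = A.T @ A
    # Smallest eigenvalue near zero means flat texture or a single edge direction
    if np.linalg.eigvalsh(ATA)[0] < min_eig:
        return None
    return np.linalg.solve(ATA, A.T @ b)                        # array [u, v]
```

Returning `None` for near-singular patches makes the aperture problem explicit: the caller has to decide what to do where the flow is not determined.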

Pyramidal implementation

The basic Lucas-Kanade algorithm assumes small motion. To handle larger displacements, a coarse-to-fine pyramid strategy is used:

- Build an image pyramid by repeatedly downsampling both frames (each level is half the resolution of the one below)

- At the coarsest level, large motions appear as small motions (because the image is small)

- Estimate the flow at the coarsest level using standard Lucas-Kanade

- Upsample that flow estimate to the next finer level and use it as an initial guess

- Refine the estimate at each finer level until you reach full resolution

This approach captures large displacements while preserving fine detail. The number of pyramid levels and the interpolation method used during upsampling both affect the final result.
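Two bookkeeping steps of the coarse-to-fine scheme, building the pyramid and carrying a flow estimate up one level, can be sketched as follows. This is not a full pyramidal tracker. As simplifying assumptions, 2x2 box averaging stands in for Gaussian smoothing and nearest-neighbor repetition stands in for bilinear interpolation; both function names are hypothetical.

```python
import numpy as np

def build_pyramid(img, levels=3):
    """Return [full-res, half-res, ...]; each level averages 2x2 blocks of the previous one."""
    pyr = [np.asarray(img, dtype=float)]
    for _ in range(levels - 1):
        prev = pyr[-1]
        h, w = (prev.shape[0] // 2) * 2, (prev.shape[1] // 2) * 2   # crop to an even size
        prev = prev[:h, :w]
        pyr.append(prev.reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3)))
    return pyr

def upsample_flow(flow):
    """Upsample an (H, W, 2) flow field to (2H, 2W, 2).

    Displacements are doubled because one coarse pixel spans two fine pixels.
    """
    return 2.0 * np.repeat(np.repeat(flow, 2, axis=0), 2, axis=1)
```

The doubling in `upsample_flow` is easy to forget: a 1-pixel motion at a coarse level corresponds to a 2-pixel motion one level finer.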

Advantages and disadvantages

- Advantages:

  - Sub-pixel accuracy; it can be evaluated at every pixel for dense flow, though estimates are only reliable where the patch is well textured

  - Computationally efficient and easy to parallelize

  - Local averaging within patches provides some noise robustness

- Disadvantages:

  - Without the pyramidal extension, it fails on large displacements

  - Assumes brightness constancy (breaks under lighting changes)

  - The local constancy assumption means it can't represent motion discontinuities within a patch (e.g., at object boundaries)

Horn-Schunck method

While Lucas-Kanade is a local method, Horn-Schunck takes a global approach. It estimates flow for the entire image simultaneously by minimizing an energy functional that balances fidelity to the image data with a smoothness constraint on the flow field.

Global smoothness constraint

The Horn-Schunck energy functional has two terms:

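- Data term (how well the flow satisfies brightness constancy): $E_{\text{data}} = \iint (I_x u + I_y v + I_t)^2 \, dx \, dy$

- Smoothness term (how smooth the flow field is): $E_{\text{smooth}} = \iint \left( \|\nabla u\|^2 + \|\nabla v\|^2 \right) dx \, dy$

The total energy to minimize is:

$$E = E_{\text{data}} + \alpha^2 E_{\text{smooth}}$$

The parameter $\alpha$ controls the trade-off. A larger $\alpha$ produces smoother flow (good for noisy images, but blurs motion boundaries). A smaller $\alpha$ allows sharper flow discontinuities but is more sensitive to noise.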

Iterative solution

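Minimizing this energy leads to a pair of coupled partial differential equations that are solved iteratively. The update equations at iteration $k$ are:

$$u^{k+1} = \bar{u}^k - \frac{I_x \left( I_x \bar{u}^k + I_y \bar{v}^k + I_t \right)}{\alpha^2 + I_x^2 + I_y^2}$$

$$v^{k+1} = \bar{v}^k - \frac{I_y \left( I_x \bar{u}^k + I_y \bar{v}^k + I_t \right)}{\alpha^2 + I_x^2 + I_y^2}$$

Here, $\bar{u}^k$ and $\bar{v}^k$ are local averages of the flow from the previous iteration (typically computed over a 4-neighbor or 8-neighbor stencil). The algorithm iterates until the flow field converges or a maximum number of iterations is reached. Convergence speed depends heavily on the choice of the smoothness weight $\alpha$ and the initial flow estimate.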
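The iteration can be sketched in NumPy as below. This is a minimal version under stated assumptions: a 4-neighbor average with replicated borders, gradients taken from the first frame only, a fixed iteration count instead of a convergence test, and a smoothness weight called `alpha` whose default value is illustrative.

```python
import numpy as np

def local_average(f):
    """4-neighbour average with replicated borders."""
    p = np.pad(f, 1, mode="edge")
    return (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]) / 4.0

def horn_schunck(frame1, frame2, alpha=1.0, n_iter=200):
    """Return dense flow (u, v) after n_iter update sweeps."""
    f1 = frame1.astype(float)
    f2 = frame2.astype(float)
    Iy, Ix = np.gradient(f1)                  # spatial gradients
    It = f2 - f1                              # temporal gradient
    u = np.zeros_like(f1)
    v = np.zeros_like(f1)
    denom = alpha ** 2 + Ix ** 2 + Iy ** 2
    for _ in range(n_iter):
        u_bar, v_bar = local_average(u), local_average(v)
        # How badly the averaged flow violates the constraint equation
        resid = (Ix * u_bar + Iy * v_bar + It) / denom
        u = u_bar - Ix * resid
        v = v_bar - Iy * resid
    return u, v
```

On a pure horizontal intensity ramp shifted by one pixel, this converges to `u = 1` and `v = 0` everywhere: the gradient points along x, so only that component is constrained and the smoothness term leaves `v` at its zero initialization.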

Comparison with Lucas-Kanade

Horn-Schunck produces denser, smoother flow fields and handles the aperture problem better through its global smoothness constraint. However, it's more computationally expensive and more sensitive to noise and outliers because errors propagate globally.

Lucas-Kanade gives more reliable estimates in well-textured regions and is faster, but it only captures local motion and can't enforce long-range coherence.

In practice, many modern methods combine ideas from both: local data terms with global or semi-global regularization.

Dense vs. sparse optical flow

Dense and sparse optical flow are two fundamentally different strategies. Dense flow computes a motion vector at every pixel. Sparse flow computes motion only at selected feature points.

Computational considerations

- Dense flow requires significant computation and memory since every pixel gets a vector. GPU parallelization makes real-time dense flow feasible on modern hardware. Hierarchical (coarse-to-fine) strategies help reduce cost.

- Sparse flow is much cheaper because only a fixed number of feature points are tracked, so tracking cost scales with the number of points rather than the number of pixels (feature detection itself still scans the image). Fast feature detectors (like Shi-Tomasi corners) and efficient tracking (like pyramidal Lucas-Kanade) keep it lightweight.
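The Shi-Tomasi criterion mentioned above scores each pixel by the smaller eigenvalue of the local gradient matrix, the same matrix that Lucas-Kanade has to invert. The sketch below is a plain NumPy illustration rather than an optimized detector; the function names and the window size are assumptions.

```python
import numpy as np

def window_sum(a, half):
    """Sum of `a` over a (2*half+1) x (2*half+1) window, zero-padded at the borders."""
    p = np.pad(a, half, mode="constant")
    out = np.zeros_like(a)
    k = 2 * half + 1
    for dy in range(k):
        for dx in range(k):
            out += p[dy:dy + a.shape[0], dx:dx + a.shape[1]]
    return out

def shi_tomasi_score(img, half=2):
    """Smaller eigenvalue of the 2x2 structure tensor at every pixel."""
    Iy, Ix = np.gradient(np.asarray(img, dtype=float))
    Sxx = window_sum(Ix * Ix, half)
    Syy = window_sum(Iy * Iy, half)
    Sxy = window_sum(Ix * Iy, half)
    # Closed-form smaller eigenvalue of [[Sxx, Sxy], [Sxy, Syy]]
    return (Sxx + Syy) / 2.0 - np.sqrt(((Sxx - Syy) / 2.0) ** 2 + Sxy ** 2)
```

Flat regions and straight edges score near zero, while corners score high, so keeping only the highest-scoring pixels selects points where tracking is well conditioned.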

Accuracy trade-offs

Dense flow captures motion everywhere, including subtle gradients and small moving objects. But it's prone to errors in textureless regions and at motion boundaries where the smoothness assumption breaks down.

Sparse flow achieves high accuracy at the tracked points (since those points are chosen specifically because they're easy to track). The downside is that motion between feature points is unknown, so you may miss important information in non-feature regions.

Use cases for each approach

- Dense optical flow:

  - Motion segmentation and object detection in complex scenes

  - Video compression (motion-compensated prediction)

  - Monocular depth estimation

- Sparse optical flow:

  - Real-time object tracking on resource-constrained devices

  - Visual odometry for robot navigation and autonomous vehicles

  - Structure from Motion (SfM) for 3D reconstruction

Optical flow constraints

The three core constraints underpin nearly every optical flow algorithm. Understanding them clarifies why algorithms behave the way they do and when they'll fail.

Brightness constancy assumption

This assumes a pixel's intensity doesn't change between frames:

$$I(x, y, t) = I(x + u,\ y + v,\ t + 1)$$

A first-order Taylor expansion of the right side (assuming small motion) yields the optical flow constraint equation:

$$I_x u + I_y v + I_t = 0$$

This assumption is violated by illumination changes, specular highlights, transparency, and shadows. Robust methods address this by using gradient-based features (which are less sensitive to global brightness shifts) or by applying intensity normalization as a preprocessing step.
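The step from brightness constancy to the constraint equation can be spelled out as follows. This is a sketch assuming a unit time step between frames, with $I_x$, $I_y$, $I_t$ denoting the partial derivatives of $I$ and $(u, v)$ the flow:

$$
\begin{aligned}
I(x+u,\ y+v,\ t+1) &\approx I(x, y, t) + I_x u + I_y v + I_t \\
I(x+u,\ y+v,\ t+1) &= I(x, y, t) \quad \text{(brightness constancy)} \\
\Rightarrow\quad I_x u + I_y v + I_t &= 0
\end{aligned}
$$

The first line drops all second-order and higher terms, which is only justified when the displacement is small compared with the scale over which the image intensity varies.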

Small motion assumption

The Taylor expansion above only works if $u$ and $v$ are small relative to the spatial structure of the image. When motion is large (fast objects, low frame rates), the linearization becomes inaccurate.

Multi-scale pyramid approaches are the standard fix: by downsampling the image, large motions become small motions at coarser scales.

Spatial coherence assumption

Neighboring pixels are assumed to move similarly. This is what allows Lucas-Kanade to assume constant flow within a patch and Horn-Schunck to impose a smoothness penalty.

This assumption fails at motion boundaries (where a foreground object moves differently from the background) and in scenes with complex, non-rigid motion. Advanced methods use edge-preserving regularization or segment the image first so that smoothness is only enforced within each segment.

Challenges in optical flow

Occlusion handling

Occlusions occur when parts of the scene become hidden or newly visible between frames. At occluded pixels, there's no valid correspondence in the other frame, so brightness constancy doesn't apply.

Common strategies include:

- Bidirectional flow estimation: compute flow in both directions (frame 1→2 and frame 2→1) and flag pixels where the two flows are inconsistent

- Robust error functions: use loss functions (like the L1 norm or Lorentzian) that downweight large residuals, reducing the influence of occluded pixels

- Layered motion models: represent the scene as multiple layers, each with its own motion, so occlusion relationships can be modeled explicitly
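The bidirectional check from the first strategy can be sketched as below. This is a simplified illustration: the backward flow is sampled at the nearest pixel rather than interpolated, and the function name `occlusion_mask` and the tolerance `tol` are assumptions.

```python
import numpy as np

def occlusion_mask(flow_fw, flow_bw, tol=1.0):
    """Flag pixels whose forward flow is not undone by the backward flow.

    Both inputs have shape (H, W, 2) and store (u, v) = (dx, dy).
    """
    h, w = flow_fw.shape[:2]
    ys, xs = np.mgrid[0:h, 0:w]
    # Where each pixel lands in frame 2 (nearest pixel, clamped to the image)
    x2 = np.clip(np.rint(xs + flow_fw[..., 0]).astype(int), 0, w - 1)
    y2 = np.clip(np.rint(ys + flow_fw[..., 1]).astype(int), 0, h - 1)
    bw_at_target = flow_bw[y2, x2]            # backward flow at the landing point
    round_trip = flow_fw + bw_at_target       # close to zero for consistent pixels
    return np.linalg.norm(round_trip, axis=-1) > tol
```

Pixels flagged by the mask are usually excluded from the data term or filled in from their neighbors, since their flow cannot be trusted.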

Large displacements

When objects move many pixels between frames, standard differential methods fail because the small motion assumption is violated.

Approaches to handle this:

- Coarse-to-fine pyramids (described above)

- Feature matching to provide an initial estimate of large motions, which is then refined by a variational method

- Non-local regularization terms that allow the algorithm to propagate motion information over long distances

There's a trade-off: methods that capture large motions often smooth over fine details at motion boundaries.

Illumination changes

Changes in lighting, camera exposure, or surface reflectance violate brightness constancy. This is especially problematic in outdoor scenes with changing sunlight or with non-Lambertian (shiny, reflective) surfaces.

Techniques to handle this:

- Use image gradients instead of raw intensities as the data term (gradients are more robust to additive brightness changes)

- Apply local or global intensity normalization before computing flow

- Incorporate photometric invariance directly into the energy functional

Advanced optical flow techniques

Variational methods

Variational methods generalize the Horn-Schunck framework by allowing more sophisticated data terms and regularizers. The flow is found by minimizing an energy functional, but with more flexibility:

- TV-L1 optical flow replaces the quadratic smoothness penalty with total variation (which preserves sharp motion boundaries) and uses an L1 data term (which is robust to outliers)

- DeepFlow integrates dense correspondences from a deep matching algorithm into the variational framework, combining the strengths of feature matching and energy minimization

These methods can model complex motion patterns and handle outliers better than classical approaches, at the cost of higher computational complexity.

Learning-based approaches

Deep learning has transformed optical flow estimation. Networks are trained end-to-end to predict flow directly from pairs of input frames.

Notable architectures:

- FlowNet (2015) was the first CNN-based approach, using an encoder-decoder structure

- PWC-Net (Pyramid, Warping, Cost volume) incorporates classical optical flow principles (pyramids, warping) into the network design, achieving better accuracy with fewer parameters

- RAFT (2020) uses recurrent updates over a correlation volume and has become a widely used baseline

These methods can learn to handle scenarios (like occlusions and large displacements) that are hard to model with hand-crafted algorithms. The main challenges are the need for large training datasets with ground truth flow (often generated synthetically) and the risk of poor generalization to domains not seen during training.

Real-time implementations

Many applications (autonomous driving, robotics, augmented reality) require optical flow at video frame rates. Strategies for real-time performance include:

- GPU-parallel implementations of classical algorithms (e.g., Lucas-Kanade on CUDA)

- Simplified algorithmic models designed for speed over maximum accuracy

- Compact neural networks like LiteFlowNet that trade some accuracy for significantly faster inference

The core trade-off is always speed vs. accuracy. For safety-critical applications like autonomous driving, finding the right balance is an active area of research.

Evaluation metrics

End-point error

End-Point Error (EPE) is the most common quantitative metric. It measures the Euclidean distance between the estimated flow vector and the ground truth flow vector at each pixel:

$$\text{EPE} = \sqrt{(u_{\text{est}} - u_{\text{gt}})^2 + (v_{\text{est}} - v_{\text{gt}})^2}$$

EPE is typically averaged over all pixels in the image. One caveat: a few pixels with very large errors can dominate the average, so some benchmarks also report median EPE or the percentage of pixels exceeding a threshold error.
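A small sketch shows how the mean, median, and outlier percentage can disagree. The function names and the 3-pixel outlier threshold are assumptions chosen for illustration; flow fields are assumed to have shape (H, W, 2).

```python
import numpy as np

def endpoint_error(flow_est, flow_gt):
    """Per-pixel Euclidean distance between estimated and ground-truth flow."""
    diff = np.asarray(flow_est, dtype=float) - np.asarray(flow_gt, dtype=float)
    return np.linalg.norm(diff, axis=-1)

def summarize_epe(epe, outlier_thresh=3.0):
    """Mean and median EPE plus the fraction of pixels above a threshold."""
    return {
        "mean": float(epe.mean()),
        "median": float(np.median(epe)),
        "outlier_fraction": float((epe > outlier_thresh).mean()),
    }
```

With one badly wrong pixel out of four (error 5, the others 0), the mean is 1.25 while the median stays at 0, which is why benchmarks often report more than one summary.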

Angular error

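Angular Error (AE) measures the angle between the estimated and ground truth flow vectors in a 3D space (where the third component is set to 1):

$$\text{AE} = \arccos\left( \frac{u_{\text{est}} u_{\text{gt}} + v_{\text{est}} v_{\text{gt}} + 1}{\sqrt{u_{\text{est}}^2 + v_{\text{est}}^2 + 1}\ \sqrt{u_{\text{gt}}^2 + v_{\text{gt}}^2 + 1}} \right)$$

AE focuses on directional accuracy and is less sensitive to magnitude differences than EPE. This makes it useful when you care more about where things are moving than how fast. The added third component keeps the angle defined for zero flow, but AE is still uneven across magnitudes: the same absolute error produces a much larger angle at a small flow vector than at a large one.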
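The metric is short to compute. The sketch below assumes (H, W, 2) flow arrays and returns degrees; the function name is hypothetical.

```python
import numpy as np

def angular_error(flow_est, flow_gt):
    """Angle in degrees between (u, v, 1) vectors of estimated and true flow."""
    ue, ve = flow_est[..., 0], flow_est[..., 1]
    ug, vg = flow_gt[..., 0], flow_gt[..., 1]
    num = ue * ug + ve * vg + 1.0
    den = np.sqrt(ue ** 2 + ve ** 2 + 1.0) * np.sqrt(ug ** 2 + vg ** 2 + 1.0)
    # Clip guards against rounding pushing the cosine slightly above 1
    return np.degrees(np.arccos(np.clip(num / den, -1.0, 1.0)))
```

Comparing a 0.5-pixel error on a static pixel with the same 0.5-pixel error on a pixel moving 10 pixels shows the magnitude dependence: the first gives an angle of tens of degrees, the second well under one degree.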

Qualitative assessment methods

Numbers don't tell the whole story. Qualitative evaluation includes:

- Color-coded flow visualizations (using the Middlebury color wheel) to spot systematic errors

- Warped image comparison: warp one frame using the estimated flow and compare it to the other frame to check alignment

- Motion boundary analysis: check whether the flow preserves sharp edges between differently moving regions

- Temporal consistency: in video sequences, check whether flow estimates are stable over time or flicker between frames
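The warped image comparison can be sketched as follows. This is a minimal bilinear backward warp, assuming a single-channel image and an (H, W, 2) flow storing (dx, dy); coordinates that leave the image are clamped to the border, which is a simplification.

```python
import numpy as np

def warp_backward(frame2, flow):
    """Sample frame2 at (x + u, y + v); with correct flow the result resembles frame 1."""
    h, w = frame2.shape
    ys, xs = np.mgrid[0:h, 0:w].astype(float)
    x = np.clip(xs + flow[..., 0], 0, w - 1)
    y = np.clip(ys + flow[..., 1], 0, h - 1)
    x0 = np.floor(x).astype(int)
    y0 = np.floor(y).astype(int)
    x1 = np.minimum(x0 + 1, w - 1)
    y1 = np.minimum(y0 + 1, h - 1)
    wx, wy = x - x0, y - y0                    # fractional offsets
    top = (1 - wx) * frame2[y0, x0] + wx * frame2[y0, x1]
    bot = (1 - wx) * frame2[y1, x0] + wx * frame2[y1, x1]
    return (1 - wy) * top + wy * bot
```

The absolute difference between the warped image and the first frame highlights where the flow is wrong. Keep in mind that occluded regions show large differences even when the flow is correct.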

Applications of optical flow

Motion segmentation

Motion segmentation groups pixels by their motion patterns to separate independently moving objects from the background. The basic idea: compute the optical flow, then cluster the flow vectors.

- Flow clustering groups pixels with similar vectors into coherent regions

- Background subtraction fits a global motion model (e.g., an affine or homography model for camera motion) and labels pixels that don't fit the model as foreground
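The background subtraction idea can be sketched by fitting an affine motion model to the whole flow field and thresholding the residual. Note the assumption: plain least squares is used here for brevity, whereas a robust fit (for example RANSAC) is the safer choice when foreground objects cover a large part of the image. The function name and threshold are hypothetical.

```python
import numpy as np

def foreground_from_flow(flow, thresh=1.0):
    """Label pixels whose flow deviates from a global affine motion model."""
    h, w = flow.shape[:2]
    ys, xs = np.mgrid[0:h, 0:w]
    # Affine model: u = a0 + a1*x + a2*y, and likewise for v
    X = np.stack([np.ones(h * w), xs.ravel(), ys.ravel()], axis=1)   # (N, 3)
    F = flow.reshape(-1, 2)                                           # (N, 2)
    params, *_ = np.linalg.lstsq(X, F, rcond=None)                    # (3, 2)
    residual = np.linalg.norm(F - X @ params, axis=1).reshape(h, w)
    return residual > thresh
```

Because the camera-induced motion is absorbed by the model, what remains above the threshold is motion the model cannot explain, which is treated as foreground.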

This is used in autonomous driving (detecting other vehicles and pedestrians), video surveillance (spotting unusual activity), and video editing. The main difficulty is distinguishing object motion from camera motion, especially when the background itself contains non-rigid elements like trees or water.

Video compression

Video codecs like H.264 and H.265 exploit temporal redundancy using motion estimation. Instead of encoding each frame independently, the encoder predicts each frame from a reference frame using motion vectors, then only encodes the residual (the difference between prediction and reality).

Block-based motion estimation is standard in codecs because it's compatible with the block-based transform coding pipeline. Better motion estimation means smaller residuals, which means lower bit rates at the same quality. The trade-off is that more accurate motion estimation takes more computation during encoding.
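The link between motion vectors and residual size can be illustrated with a toy example. This is a deliberate simplification: one motion vector for the whole frame with wrap-around shifting, whereas real codecs use one vector per block. The function name is hypothetical.

```python
import numpy as np

def residual_energy(ref, cur, mv=(0, 0)):
    """Sum of squared prediction error when `cur` is predicted from `ref` shifted by mv = (dy, dx)."""
    pred = np.roll(ref, shift=mv, axis=(0, 1))     # motion-compensated prediction
    return float(((cur - pred) ** 2).sum())
```

When the motion vector matches the true shift, the residual vanishes and almost nothing needs to be encoded. With a zero vector, the encoder has to spend bits on the full frame difference.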

Object tracking

Optical flow provides frame-to-frame motion estimates that can drive object tracking:

- Sparse tracking uses pyramidal Lucas-Kanade to follow feature points on the object. This is fast and works well for short-term tracking.

- Dense tracking warps an object template using the dense flow field to follow the object's shape over time.

Applications include sports analytics (tracking players and the ball), augmented reality (anchoring virtual objects to the real world), and video editing. The main challenge for long-term tracking is error accumulation: small flow errors compound over many frames, causing drift. Most practical systems combine optical flow with periodic re-detection to correct for drift.