Augmented and virtual reality technologies are revolutionizing how we interact with digital content. By blending computer vision and image processing, AR overlays digital information onto the real world, while VR creates fully immersive virtual environments.

These technologies rely on advanced algorithms for spatial awareness, object recognition, and real-time rendering. From gaming and entertainment to education and healthcare, AR and VR are transforming various industries by offering new ways to visualize and interact with information.

Fundamentals of AR and VR

- Augmented Reality (AR) and Virtual Reality (VR) change how users perceive and interact with visual content, and both depend heavily on computer vision

- AR and VR leverage image processing techniques to create immersive digital experiences, enhancing or replacing real-world environments

- These technologies rely heavily on computer vision algorithms for spatial awareness, object recognition, and real-time rendering

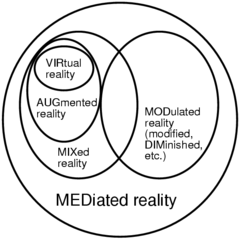

Definition and distinctions

- AR overlays digital content onto the real world, enhancing the user's perception of reality

- VR creates a fully immersive digital environment, replacing the user's entire visual field

- Mixed Reality (MR) combines elements of both AR and VR, allowing digital objects to interact with the real world

- AR maintains a connection to the physical environment, while VR transports users to entirely virtual spaces

Historical development

- Ivan Sutherland created the first head-mounted display (HMD) in 1968, laying the foundation for VR

- Tom Caudell coined the term "Augmented Reality" in 1990 while working at Boeing

- The 1990s saw the development of early VR systems (CAVE, Virtual Boy)

- Smartphone proliferation in the late 2000s and 2010s accelerated AR development

- The modern AR/VR era began with the Oculus Rift Kickstarter in 2012, followed by Google Glass and Microsoft HoloLens

Key components

- Display technology (HMDs, smartphones, projection systems)

- Tracking systems (optical, inertial, hybrid) for position and orientation

- Input devices (controllers, cameras, sensors) for user interaction

- Graphics processing units (GPUs) for real-time rendering

- Software development kits (SDKs) and engines (Unity, Unreal) for content creation

AR technologies

- AR integrates digital information with the user's environment in real-time

- Computer vision algorithms play a crucial role in AR by enabling accurate object recognition and tracking

- Image processing techniques enhance AR experiences by improving the quality and realism of overlaid content

Marker-based vs markerless AR

- Marker-based AR uses predefined visual markers (QR codes, fiducial markers) for content triggering

- Markerless AR relies on natural feature tracking, enabling more seamless integration with the environment

- Simultaneous Localization and Mapping (SLAM) algorithms power markerless AR by building a 3D map of the surroundings while tracking the device's position within it

- Marker-based systems typically offer more reliable tracking accuracy but less flexibility than markerless solutions

Mobile AR applications

- Smartphone-based AR utilizes built-in cameras and sensors for widespread accessibility

- ARKit (iOS) and ARCore (Android) provide robust frameworks for mobile AR development

- Location-based AR apps (Pokémon GO) use GPS and compass data to place virtual content in real-world locations

- Social media filters (Snapchat, Instagram) employ face detection and facial landmark tracking for real-time AR effects
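
The location-based approach above can be illustrated with a small sketch. It assumes a spherical Earth and uses the standard haversine and initial-bearing formulas; the function names and the 60-degree field of view are illustrative choices, not part of any particular AR SDK.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius (spherical approximation)


def haversine_distance_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def initial_bearing_deg(lat1, lon1, lat2, lon2):
    """Compass bearing (0 = north, 90 = east) from point 1 towards point 2."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlmb = math.radians(lon2 - lon1)
    y = math.sin(dlmb) * math.cos(phi2)
    x = (math.cos(phi1) * math.sin(phi2)
         - math.sin(phi1) * math.cos(phi2) * math.cos(dlmb))
    return math.degrees(math.atan2(y, x)) % 360.0


def relative_angle_deg(bearing_deg, heading_deg):
    """Signed angle from the device heading to the bearing, in [-180, 180)."""
    return (bearing_deg - heading_deg + 180.0) % 360.0 - 180.0


def is_in_view(user, heading_deg, poi, horizontal_fov_deg=60.0):
    """True if the point of interest lies inside the camera's horizontal FOV.

    `user` and `poi` are (latitude, longitude) tuples in degrees; the FOV
    default is an assumed value for illustration.
    """
    bearing = initial_bearing_deg(user[0], user[1], poi[0], poi[1])
    return abs(relative_angle_deg(bearing, heading_deg)) <= horizontal_fov_deg / 2
```

A real app would combine this with the distance to scale or cull content, and would smooth the noisy compass heading before using it.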

AR displays and hardware

- Optical see-through displays (Microsoft HoloLens) use transparent screens to overlay digital content

- Video see-through displays (smartphone AR) combine camera feed with digital elements

- Spatial AR projects digital content directly onto physical objects or surfaces

- Retinal projection displays (also called virtual retinal displays) scan images directly onto the user's retina

VR technologies

- Virtual Reality creates fully immersive digital environments, replacing the user's entire visual field

- Computer vision algorithms in VR focus on tracking user movements and mapping virtual spaces

- Image processing techniques in VR aim to reduce latency and enhance visual fidelity for improved immersion

Immersive environments

- 360-degree video captures real-world scenes for passive VR experiences

- Computer-generated environments offer interactive and dynamic virtual worlds

- Photogrammetry techniques create highly detailed 3D models of real-world locations for VR exploration

- Volumetric capture technology enables the creation of 3D video for more immersive experiences

VR headsets and controllers

- Tethered VR headsets (Oculus Rift, HTC Vive) offer high-quality visuals but require connection to a powerful PC

- Standalone VR headsets (Oculus Quest) provide wireless freedom with integrated processing

- Inside-out tracking systems use onboard cameras to track headset and controller positions

- Haptic controllers provide tactile feedback to enhance immersion and interactivity

Haptic feedback systems

- Force feedback devices simulate resistance and texture in virtual environments

- Vibrotactile actuators create localized sensations for more nuanced haptic experiences

- Exoskeletons and full-body suits enable whole-body haptic feedback for enhanced realism

- Ultrasonic haptics generate touchless tactile sensations using focused sound waves

Computer vision in AR/VR

- Computer vision algorithms form the backbone of AR/VR systems, enabling accurate perception and interaction

- These techniques process visual data from cameras and sensors to understand the user's environment

- Advanced computer vision methods allow AR/VR systems to recognize objects, track movements, and map spaces in real-time

Image recognition and tracking

- Feature detection algorithms (SIFT, SURF, ORB) identify distinctive points in images for tracking

- Convolutional Neural Networks (CNNs) enable robust object recognition and classification in AR applications

- Optical flow techniques track motion between consecutive frames for smooth AR overlays

- Template matching algorithms compare image regions to predefined patterns for marker-based AR
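
As a rough illustration of template matching, the sketch below slides a small template over a grayscale image and keeps the position with the lowest sum of squared differences (SSD). Images are plain nested lists here to keep the example dependency-free; production systems would normally use an optimized library routine, and the function names are illustrative.

```python
def ssd(patch, template):
    """Sum of squared differences between two equally sized 2D grids."""
    return sum(
        (p - t) ** 2
        for patch_row, template_row in zip(patch, template)
        for p, t in zip(patch_row, template_row)
    )


def match_template(image, template):
    """Return ((x, y), score) of the best match; lower score is better.

    `image` and `template` are lists of rows of grayscale intensities.
    """
    image_h, image_w = len(image), len(image[0])
    tmpl_h, tmpl_w = len(template), len(template[0])
    best_pos, best_score = None, None
    for y in range(image_h - tmpl_h + 1):
        for x in range(image_w - tmpl_w + 1):
            patch = [row[x:x + tmpl_w] for row in image[y:y + tmpl_h]]
            score = ssd(patch, template)
            if best_score is None or score < best_score:
                best_pos, best_score = (x, y), score
    return best_pos, best_score
```

Plain SSD is sensitive to lighting changes and does not handle rotation or scale, which is one reason marker systems use high-contrast fiducials and feature-based methods are preferred for natural scenes.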

Depth sensing and mapping

- Structured light systems project patterns onto surfaces to calculate depth information

- Time-of-Flight (ToF) cameras measure the round-trip time of emitted light reflecting off objects and convert it to distance

- Stereo vision uses two cameras to estimate depth through triangulation

- Visual-Inertial Odometry (VIO) combines camera data with inertial measurements for accurate device positioning
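
Two of the depth relationships above reduce to short formulas. The sketch below assumes an idealized rectified stereo pair (depth = focal length × baseline / disparity) and an ideal ToF sensor (distance = speed of light × round-trip time / 2); parameter names are illustrative.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def stereo_depth_m(focal_length_px, baseline_m, disparity_px):
    """Depth from a rectified stereo pair via triangulation: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_length_px * baseline_m / disparity_px


def tof_depth_m(round_trip_time_s):
    """Distance from a time-of-flight measurement: light travels out and back."""
    return SPEED_OF_LIGHT_M_S * round_trip_time_s / 2.0
```

For example, a focal length of 700 px, a 10 cm baseline, and a 35 px disparity give a depth of about 2 m. Because depth is inversely proportional to disparity, stereo error grows quickly for distant objects.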

Pose estimation

- 6 Degrees of Freedom (6DoF) tracking determines position (three translational axes) and orientation (three rotational axes) in 3D space

- Perspective-n-Point (PnP) algorithms estimate camera pose from 2D-3D point correspondences

- Sensor fusion combines data from multiple sources (cameras, IMUs, GPS) for robust pose estimation

- Kalman filters and particle filters predict and refine pose estimates over time
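
To make the predict-and-refine idea concrete, here is a minimal scalar Kalman filter that smooths one noisy pose component (for example, a single position axis). It assumes a constant-state model and hand-picked noise variances; real trackers use multi-dimensional state with velocity and orientation, so treat this as a sketch only.

```python
class ScalarKalmanFilter:
    """One-dimensional Kalman filter with a constant-state motion model."""

    def __init__(self, initial_estimate, initial_variance,
                 process_variance, measurement_variance):
        self.x = initial_estimate        # current state estimate
        self.p = initial_variance        # uncertainty of the estimate
        self.q = process_variance        # how much the state may drift per step
        self.r = measurement_variance    # sensor noise

    def update(self, measurement):
        # Predict: the state is assumed unchanged, so only uncertainty grows.
        self.p += self.q
        # Correct: blend prediction and measurement using the Kalman gain.
        gain = self.p / (self.p + self.r)
        self.x += gain * (measurement - self.x)
        self.p *= (1.0 - gain)
        return self.x
```

A larger `measurement_variance` makes the filter trust its prediction more (smoother but laggier output), while a larger `process_variance` makes it follow measurements more closely.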

Image processing for AR/VR

- Image processing techniques enhance the visual quality and performance of AR/VR systems

- These methods optimize rendering, improve display output, and ensure smooth integration of virtual content

- Advanced image processing algorithms contribute to reducing latency and increasing the realism of AR/VR experiences

Real-time rendering techniques

- Foveated rendering optimizes performance by reducing detail in peripheral vision areas

- Asynchronous Timewarp reduces perceived latency by reprojecting the most recently rendered frame to match the latest head pose just before display

- Adaptive resolution scaling adjusts render quality based on available processing power

- Occlusion culling improves performance by not rendering objects hidden from view
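
Adaptive resolution scaling can be sketched as a small feedback rule. The version below assumes GPU cost is roughly proportional to pixel count (the square of the per-axis render scale); the clamp limits and the `headroom` safety margin are illustrative defaults, not values from any specific engine.

```python
import math


def next_render_scale(current_scale, gpu_frame_time_ms, target_frame_time_ms,
                      min_scale=0.5, max_scale=1.0, headroom=0.9):
    """Suggest the per-axis render scale for the next frame.

    Pixel count grows with scale squared, so the scale is adjusted by the
    square root of the ratio between the time budget and the measured time.
    """
    if gpu_frame_time_ms <= 0:
        raise ValueError("frame time must be positive")
    budget_ms = target_frame_time_ms * headroom
    ideal = current_scale * math.sqrt(budget_ms / gpu_frame_time_ms)
    return max(min_scale, min(max_scale, ideal))
```

In practice the measured frame time is usually averaged over several frames so the resolution does not visibly oscillate.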

Image enhancement for displays

- Chromatic aberration correction compensates for color fringing in optical systems

- Barrel and pincushion distortion correction adjusts for lens-induced image warping

- High Dynamic Range (HDR) rendering increases the range of luminance levels for more realistic visuals

- Anti-aliasing techniques (MSAA, FXAA) reduce jagged edges in rendered images
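
Lens distortion correction is commonly modeled with a radial polynomial. The sketch below uses the first two radial terms of that model on normalized image coordinates and inverts it with fixed-point iteration; the coefficients `k1` and `k2` are assumed to come from a lens calibration step that is not shown.

```python
def radial_distort(x, y, k1, k2=0.0):
    """Apply radial distortion to normalized coordinates centred on the lens axis.

    With this convention k1 < 0 pulls points towards the centre (barrel)
    and k1 > 0 pushes them outwards (pincushion).
    """
    r2 = x * x + y * y
    factor = 1.0 + k1 * r2 + k2 * r2 * r2
    return x * factor, y * factor


def radial_undistort(xd, yd, k1, k2=0.0, iterations=20):
    """Invert radial_distort by fixed-point iteration (fine for mild distortion)."""
    x, y = xd, yd
    for _ in range(iterations):
        r2 = x * x + y * y
        factor = 1.0 + k1 * r2 + k2 * r2 * r2
        x, y = xd / factor, yd / factor
    return x, y
```

VR headset lenses typically introduce pincushion distortion, so the renderer pre-warps each eye's image with the opposite (barrel) distortion so that the two cancel when viewed through the lens.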

Stereoscopic image processing

- Parallax adjustment fine-tunes the perceived depth of stereoscopic images

- Interpupillary distance (IPD) calibration ensures proper alignment of stereo images for individual users

- Depth-aware image compositing blends virtual objects with real-world scenes at the correct depth

- Anaglyph image generation creates 3D effects using color-filtered images for each eye
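
Anaglyph generation is the simplest of these techniques to show in code. The sketch below builds a red-cyan anaglyph by taking the red channel from the left-eye image and the green and blue channels from the right-eye image; images are nested lists of `(r, g, b)` tuples purely for illustration.

```python
def make_anaglyph(left, right):
    """Combine two equally sized RGB images into a red-cyan anaglyph.

    `left` and `right` are lists of rows of (r, g, b) tuples.
    """
    if len(left) != len(right) or any(
        len(l_row) != len(r_row) for l_row, r_row in zip(left, right)
    ):
        raise ValueError("left and right images must have the same size")
    return [
        [(l_px[0], r_px[1], r_px[2]) for l_px, r_px in zip(l_row, r_row)]
        for l_row, r_row in zip(left, right)
    ]
```

Viewed through red-cyan glasses, each eye then sees mostly its own image. The trade-off is poor color reproduction, which is why headsets use separate per-eye displays instead.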

User interaction in AR/VR

- User interaction in AR/VR relies on computer vision and image processing to interpret user inputs

- These technologies enable natural and intuitive ways for users to engage with virtual content

- Advanced interaction methods enhance immersion and usability in AR/VR applications

Gesture recognition

- Hand tracking algorithms detect and interpret hand movements and poses

- Skeletal tracking enables full-body gesture recognition for more immersive interactions

- Machine learning models classify complex gestures for advanced control schemes

- Depth cameras improve gesture recognition accuracy by providing 3D spatial information
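
Once a hand tracker provides 3D landmark positions, simple gestures can be classified geometrically. The sketch below detects a pinch from the thumb-tip and index-tip positions; the landmark inputs, the `hand_scale` normalization, and the 0.25 threshold are all assumptions for illustration, and the tracker that produces the landmarks is not shown.

```python
import math


def is_pinching(thumb_tip, index_tip, hand_scale, threshold_ratio=0.25):
    """Return True when thumb and index fingertips are close together.

    `thumb_tip` and `index_tip` are (x, y, z) positions in metres.
    `hand_scale` is a reference length for the same hand (for example,
    wrist to middle-finger base) so the test works for different hand
    sizes and camera distances.
    """
    if hand_scale <= 0:
        raise ValueError("hand_scale must be positive")
    distance = math.dist(thumb_tip, index_tip)
    return distance / hand_scale < threshold_ratio
```

Real systems usually add hysteresis (separate thresholds for starting and ending a pinch) so that tracking jitter does not toggle the gesture.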

Eye tracking

- Pupil center corneal reflection (PCCR) technique tracks eye movements using infrared light

- Foveated rendering uses eye tracking data to optimize graphics performance

- Gaze-based interfaces allow users to interact with virtual objects using eye movements

- Eye tracking enables more natural depth-of-field effects in VR rendering
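
One common pattern for gaze-based interfaces is dwell selection: an object is activated after the user looks at it continuously for a set time. The sketch below is a minimal version; it assumes some other component already reports which object the gaze ray hits each frame, and the class name and 0.8 s default are illustrative.

```python
class DwellSelector:
    """Trigger a selection after the gaze rests on one target long enough."""

    def __init__(self, dwell_time_s=0.8):
        self.dwell_time_s = dwell_time_s
        self._target = None
        self._elapsed_s = 0.0

    def update(self, gazed_target, dt_s):
        """Call once per frame; returns the target when selected, else None.

        `gazed_target` is an identifier for the object under the gaze ray,
        or None when the user is looking at nothing selectable.
        """
        if gazed_target != self._target:
            # Gaze moved to a different target: restart the timer.
            self._target = gazed_target
            self._elapsed_s = 0.0
            return None
        if gazed_target is None:
            return None
        self._elapsed_s += dt_s
        if self._elapsed_s >= self.dwell_time_s:
            self._elapsed_s = 0.0  # require a full new dwell to select again
            return gazed_target
        return None
```

The dwell time trades speed against accidental activations, since users also look at objects they do not intend to select.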

Voice commands

- Natural Language Processing (NLP) interprets transcribed spoken commands for hands-free control

- Wake word detection activates voice recognition systems in AR/VR devices

- Speech-to-text conversion enables text input and search functionality in virtual environments

- Voice activity detection distinguishes speech from background noise for improved recognition accuracy
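
A very basic form of voice activity detection compares the energy of short audio frames against a threshold. The sketch below assumes samples normalized to the range -1 to 1 and a fixed, hand-picked threshold; production detectors generally adapt to the noise floor or use trained models.

```python
import math


def frame_rms(samples):
    """Root-mean-square amplitude of one audio frame."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def detect_voice_activity(samples, frame_size, threshold):
    """Return one boolean per full frame: True where the frame is likely speech.

    Trailing samples that do not fill a whole frame are ignored.
    """
    flags = []
    for start in range(0, len(samples) - frame_size + 1, frame_size):
        frame = samples[start:start + frame_size]
        flags.append(frame_rms(frame) > threshold)
    return flags
```

Energy alone cannot tell speech from other loud sounds, so it is best viewed as a cheap first stage that decides when to run the heavier recognition pipeline.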

Applications of AR/VR

- AR and VR technologies find applications across various industries, leveraging computer vision and image processing

- These applications demonstrate the versatility and potential impact of AR/VR in different domains

- Continuous advancements in AR/VR technologies expand the scope and effectiveness of these applications

Gaming and entertainment

- Immersive VR games create fully interactive virtual worlds for players to explore

- AR mobile games (Pokémon GO) blend virtual elements with real-world environments

- VR theme park attractions offer enhanced rides and experiences

- AR-enhanced live events (concerts, sports) provide additional information and interactive elements

Education and training

- Virtual field trips transport students to historical sites or inaccessible locations

- AR anatomy apps overlay 3D models onto the human body for medical education

- VR simulations provide safe environments for practicing dangerous or complex procedures

- AR maintenance guides offer step-by-step instructions for equipment repair and assembly

Healthcare and medicine

- VR exposure therapy treats phobias and PTSD by simulating triggering scenarios

- AR surgical navigation systems overlay patient data and guidance during procedures

- VR pain management techniques distract patients during painful treatments or recovery

- AR visualization tools assist in planning complex surgeries and medical interventions

Challenges in AR/VR

- AR and VR technologies face several challenges that impact user experience and adoption

- Addressing these challenges requires advancements in computer vision, image processing, and hardware design

- Overcoming these obstacles is crucial for the widespread adoption and long-term success of AR/VR technologies

Motion sickness and discomfort

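- Sensory conflict between the motion the eyes see and the motion the vestibular system senses causes VR sickness

- Latency in display updates contributes to motion sickness and disorientation

- Vergence-accommodation conflict strains the eyes because they converge on a virtual object's apparent depth while focusing at the display's fixed optical distance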

- Extended use of AR/VR devices can lead to eye fatigue and physical discomfort

Privacy and security concerns

- AR applications may inadvertently capture and process sensitive real-world information

- VR systems collect large amounts of user data, including movement patterns and physiological responses

- AR/VR devices can be hacked, leading to unauthorized access to personal information

- Ethical considerations arise from the use of AR/VR for surveillance or behavior manipulation

Hardware limitations

- Current display resolutions fall short of human visual acuity, reducing immersion

- Field of view limitations in AR headsets restrict the area where virtual content can be displayed

- Battery life constraints impact the portability and usability of standalone AR/VR devices

- Processing power requirements for high-quality AR/VR experiences limit mobile device capabilities
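
The resolution gap can be estimated with a back-of-the-envelope calculation in pixels per degree (PPD). The sketch below assumes pixels are spread evenly across the field of view and uses the common rule of thumb that 20/20 vision resolves about one arcminute, or roughly 60 PPD; the headset numbers in the usage note are hypothetical.

```python
ACUITY_PPD = 60.0  # rule of thumb: ~1 arcminute per pixel for 20/20 vision


def pixels_per_degree(horizontal_pixels_per_eye, horizontal_fov_deg):
    """Average angular resolution, assuming pixels are evenly distributed."""
    if horizontal_fov_deg <= 0:
        raise ValueError("field of view must be positive")
    return horizontal_pixels_per_eye / horizontal_fov_deg


def acuity_shortfall(horizontal_pixels_per_eye, horizontal_fov_deg):
    """How many times more pixels per axis would be needed to reach ACUITY_PPD."""
    return ACUITY_PPD / pixels_per_degree(horizontal_pixels_per_eye,
                                          horizontal_fov_deg)
```

A hypothetical headset with 2000 horizontal pixels per eye over a 100-degree field of view averages 20 PPD, about a third of the acuity target per axis. Real lenses do not distribute pixels evenly, so the center of the view is usually sharper than this average suggests.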

Future trends

- The future of AR/VR technologies is shaped by ongoing research and development in computer vision and image processing

- Emerging trends aim to address current limitations and expand the capabilities of AR/VR systems

- These advancements promise to enhance user experience and broaden the applications of AR/VR across industries

Mixed reality integration

- Seamless blending of AR and VR technologies enables more versatile experiences

- Advanced environment understanding enables better integration of virtual objects in real spaces

- Collaborative mixed reality spaces allow multiple users to interact in shared virtual environments

- Adaptive systems dynamically adjust the level of virtuality based on user needs and context

Advancements in display technology

- Micro-LED displays offer higher brightness, contrast, and energy efficiency for AR/VR devices

- Holographic displays create true 3D images without the need for special eyewear

- Varifocal displays address the vergence-accommodation conflict by dynamically adjusting focus

- Light field displays reproduce the direction of light rays as well as their intensity, providing more natural depth and focus cues

AI-enhanced AR/VR experiences

- Machine learning algorithms improve object recognition and tracking in real-time

- AI-powered content generation creates dynamic and personalized virtual environments

- Natural language processing enables more sophisticated voice interactions in AR/VR

- Emotion recognition systems adapt experiences based on the user's emotional state and engagement