Template matching is a fundamental technique in computer vision for finding specific patterns within images. It compares a small template image against regions of a larger image to identify similarities, enabling object detection, facial recognition, and medical image analysis.

This method forms the basis for more advanced image processing techniques. Various algorithms like cross-correlation and sum of squared differences quantify similarity between templates and image regions. Optimization techniques and machine learning approaches enhance template matching's performance and versatility.

Fundamentals of template matching

- Template matching serves as a foundational technique in computer vision for locating specific patterns within images

- Utilizes a predefined template to search for similar regions in a larger image, enabling object detection and recognition

- Forms the basis for more advanced image processing and analysis techniques in computer vision applications

Definition and purpose

- Pattern recognition method identifies specific image regions matching a predefined template

- Compares a small image (template) against overlapping areas of a larger image

- Quantifies similarity between template and image regions using correlation or difference measures

- Enables object detection, feature tracking, and image alignment in various computer vision tasks

Types of templates

- Binary templates consist of black and white pixels, useful for simple shape matching

- Grayscale templates contain intensity values, allowing for more detailed pattern matching

- Color templates utilize RGB or other color spaces for complex object recognition

- Edge templates focus on object contours, robust to illumination changes

- Texture templates capture surface patterns, effective for material recognition

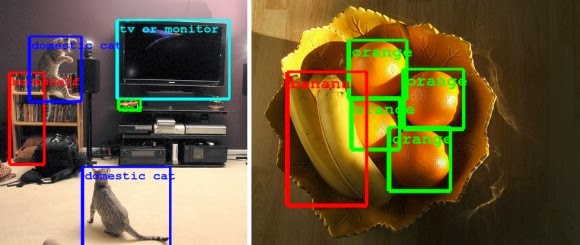

Applications in computer vision

- Object detection locates specific items within images or video frames

- Facial recognition identifies and verifies individuals based on facial features

- Medical image analysis detects abnormalities or specific structures in medical scans

- Industrial quality control inspects products for defects or inconsistencies

- Optical character recognition (OCR) converts printed or handwritten text into machine-encoded text

Template matching algorithms

Cross-correlation method

- Measures similarity between template and image regions by computing their dot product

- Slides template over image, calculating correlation at each position

- Higher correlation values indicate better matches between template and image region

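- Formula for cross-correlation: $R(x, y) = \sum_{i,j} T(i, j)\, I(x + i, y + j)$

- $T$ represents the template, $I$ represents the image, and $(x, y)$ is the position of the template's top-left corner in the image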

- Sensitive to intensity variations, may produce false positives in bright image areas
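
The steps above can be sketched in a few lines of NumPy. This is a minimal illustration rather than an optimized implementation; the function name `cross_correlate` and the toy image are assumptions made for the example.

```python
import numpy as np

def cross_correlate(image, template):
    """Raw cross-correlation response for every valid template position."""
    image = np.asarray(image, dtype=np.float64)
    template = np.asarray(template, dtype=np.float64)
    th, tw = template.shape
    out_h = image.shape[0] - th + 1
    out_w = image.shape[1] - tw + 1
    response = np.empty((out_h, out_w))
    for y in range(out_h):
        for x in range(out_w):
            # Dot product of the template with the window whose corner is (y, x)
            response[y, x] = np.sum(image[y:y + th, x:x + tw] * template)
    return response

template = np.array([[1, 2], [3, 4]])
image = np.zeros((5, 5))
image[0:2, 0:2] = template  # exact copy of the template
image[3:5, 3:5] = 9         # uniformly bright patch, not a real match

response = cross_correlate(image, template)
best = np.unravel_index(np.argmax(response), response.shape)
print(response[0, 0], response[3, 3], best)
```

In this toy case the bright patch scores 90 while the exact copy scores only 30, so the maximum lands on the wrong location. That is the false-positive behavior in bright areas noted in the list.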

Sum of squared differences

- Calculates dissimilarity between template and image regions

- Computes squared differences between corresponding pixel values

- Lower SSD values indicate better matches between template and image region

- Formula for sum of squared differences: $\mathrm{SSD}(x, y) = \sum_{i,j} \left[ T(i, j) - I(x + i, y + j) \right]^2$

- Does not favor bright regions the way raw cross-correlation does, but remains sensitive to brightness and contrast differences between template and image

- Computationally efficient, suitable for real-time applications
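
A short NumPy sketch of SSD matching follows. The name `ssd_map` and the toy data are assumptions for illustration; the second call shows how a uniform brightness offset affects the score.

```python
import numpy as np

def ssd_map(image, template):
    """Sum of squared differences for every valid template position."""
    image = np.asarray(image, dtype=np.float64)
    template = np.asarray(template, dtype=np.float64)
    th, tw = template.shape
    out_h = image.shape[0] - th + 1
    out_w = image.shape[1] - tw + 1
    response = np.empty((out_h, out_w))
    for y in range(out_h):
        for x in range(out_w):
            diff = image[y:y + th, x:x + tw] - template
            response[y, x] = np.sum(diff * diff)
    return response

template = np.array([[1, 2], [3, 4]])
image = np.zeros((5, 5))
image[0:2, 0:2] = template  # exact copy of the template
image[3:5, 3:5] = 9         # bright patch that fools raw cross-correlation

ssd = ssd_map(image, template)
best = np.unravel_index(np.argmin(ssd), ssd.shape)  # lowest SSD wins

# Adding a constant brightness offset raises the SSD at the true location
ssd_brighter = ssd_map(image + 10, template)
print(best, ssd[0, 0], ssd_brighter[0, 0])
```

Here the best match is found by `argmin` rather than `argmax`, because SSD measures dissimilarity.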

Normalized cross-correlation

- Addresses limitations of standard cross-correlation by normalizing pixel values

- Computes correlation coefficient between template and image region

- Ranges from -1 to 1, with 1 indicating perfect match

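- Formula for normalized cross-correlation: $\mathrm{NCC}(x, y) = \dfrac{\sum_{i,j} \left( T(i, j) - \bar{T} \right) \left( I(x + i, y + j) - \bar{I}_{x,y} \right)}{\sqrt{\sum_{i,j} \left( T(i, j) - \bar{T} \right)^2 \, \sum_{i,j} \left( I(x + i, y + j) - \bar{I}_{x,y} \right)^2}}$, where $\bar{T}$ is the template mean and $\bar{I}_{x,y}$ is the mean of the image window at $(x, y)$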

- Robust to linear intensity changes, suitable for varying lighting conditions

- Computationally more expensive than standard cross-correlation
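
The following sketch computes the zero-mean form of normalized cross-correlation with NumPy. The name `ncc_map` and the `eps` guard for flat windows (which have zero variance) are assumptions of this example, not part of any particular library.

```python
import numpy as np

def ncc_map(image, template, eps=1e-12):
    """Zero-mean normalized cross-correlation for every valid position."""
    image = np.asarray(image, dtype=np.float64)
    template = np.asarray(template, dtype=np.float64)
    th, tw = template.shape
    t_zero = template - template.mean()
    t_norm = np.sqrt(np.sum(t_zero ** 2))
    out_h = image.shape[0] - th + 1
    out_w = image.shape[1] - tw + 1
    response = np.zeros((out_h, out_w))
    for y in range(out_h):
        for x in range(out_w):
            window = image[y:y + th, x:x + tw]
            w_zero = window - window.mean()
            denom = t_norm * np.sqrt(np.sum(w_zero ** 2))
            if denom > eps:  # flat windows keep a score of 0
                response[y, x] = np.sum(w_zero * t_zero) / denom
    return response

template = np.array([[1, 2], [3, 4]])
image = np.zeros((5, 5))
image[0:2, 0:2] = 2 * template + 5  # same pattern with changed gain and offset
image[3:5, 3:5] = 9                 # uniformly bright patch

ncc = ncc_map(image, template)
best = np.unravel_index(np.argmax(ncc), ncc.shape)
print(best, ncc[0, 0], ncc[3, 3])
```

The copy with altered gain and offset still scores approximately 1, while the flat bright patch scores 0, which illustrates the robustness to linear intensity changes.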

Implementation techniques

Sliding window approach

- Moves template over image in a raster scan pattern, typically left to right and top to bottom

- Computes similarity measure at each position, creating a response map

- Identifies local maxima in response map as potential matches

- Allows for exhaustive search but can be computationally intensive for large images

- Optimized using techniques like step size adjustment or early termination criteria
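
As a rough sketch of the sliding window procedure, the code below builds a response map with a configurable step size and then picks local maxima above a threshold. The helper names (`sliding_window_scores`, `neg_ssd`, `local_maxima`) and the threshold value are assumptions chosen for the example.

```python
import numpy as np

def neg_ssd(window, template):
    """Similarity score: negated SSD, so higher means more similar."""
    return -np.sum((window - template) ** 2)

def sliding_window_scores(image, template, score_fn, step=1):
    """Raster-scan the template over the image and collect scores."""
    th, tw = template.shape
    ys = range(0, image.shape[0] - th + 1, step)
    xs = range(0, image.shape[1] - tw + 1, step)
    rows = [[score_fn(image[y:y + th, x:x + tw], template) for x in xs]
            for y in ys]
    return np.array(rows, dtype=np.float64)

def local_maxima(response, threshold):
    """Positions that reach the threshold and dominate their 3x3 neighbourhood."""
    padded = np.pad(response, 1, mode="constant", constant_values=-np.inf)
    peaks = []
    for y in range(response.shape[0]):
        for x in range(response.shape[1]):
            neighbourhood = padded[y:y + 3, x:x + 3]
            if response[y, x] >= threshold and response[y, x] == neighbourhood.max():
                peaks.append((y, x))
    return peaks

template = np.array([[1.0, 2.0], [3.0, 4.0]])
image = np.zeros((6, 6))
image[0:2, 0:2] = template
image[3:5, 4:6] = template

response = sliding_window_scores(image, template, neg_ssd)
peaks = local_maxima(response, threshold=-0.5)
coarse = sliding_window_scores(image, template, neg_ssd, step=2)
print(peaks, response.shape, coarse.shape)
```

With `step=2` the response map shrinks from 5x5 to 3x3 evaluations, but the second instance at row 3 is never sampled. This is the accuracy cost of step size adjustment.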

Multi-scale template matching

- Addresses size variations between template and target object in image

- Creates image pyramid by repeatedly downsampling original image

- Performs template matching at each scale level of the pyramid

- Combines results from different scales to identify best matches

- Enables detection of objects at various sizes without resizing the template

- Increases computational cost but improves robustness to scale variations
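
A minimal sketch of multi-scale matching, assuming a pyramid built by 2x2 block averaging and SSD as the similarity measure. The names `downsample2`, `best_ssd`, and `multiscale_match` are hypothetical helpers for this example.

```python
import numpy as np

def downsample2(a):
    """Halve resolution by averaging non-overlapping 2x2 blocks."""
    h, w = a.shape[0] // 2 * 2, a.shape[1] // 2 * 2
    return a[:h, :w].reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))

def best_ssd(image, template):
    """Lowest SSD and its position for one pyramid level."""
    th, tw = template.shape
    best_score, best_pos = np.inf, (0, 0)
    for y in range(image.shape[0] - th + 1):
        for x in range(image.shape[1] - tw + 1):
            score = np.sum((image[y:y + th, x:x + tw] - template) ** 2)
            if score < best_score:
                best_score, best_pos = score, (y, x)
    return best_score, best_pos

def multiscale_match(image, template, max_levels=4):
    """Match a fixed-size template at every pyramid level; keep the best."""
    image = np.asarray(image, dtype=np.float64)
    template = np.asarray(template, dtype=np.float64)
    results = []
    level = 0
    while (level < max_levels and image.shape[0] >= template.shape[0]
           and image.shape[1] >= template.shape[1]):
        score, (y, x) = best_ssd(image, template)
        scale = 2 ** level
        # Report the position in original-image coordinates
        results.append((float(score), level, (y * scale, x * scale)))
        image = downsample2(image)
        level += 1
    return min(results)

template = np.arange(1, 10, dtype=np.float64).reshape(3, 3)
image = np.zeros((12, 12))
# The object appears at twice the template's size
image[2:8, 4:10] = np.kron(template, np.ones((2, 2)))

score, level, position = multiscale_match(image, template)
print(score, level, position)
```

The template itself is never resized. The enlarged object is found at pyramid level 1, where downsampling has brought it back to the template's scale.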

Rotation-invariant matching

- Handles rotated instances of the template in the target image

- Generates rotated versions of the template at different angles

- Performs template matching with each rotated template

- Selects best match across all rotations for each image position

- Circular templates combined with rotation-invariant measures (such as ring projections) can achieve rotation invariance without explicit rotation

- Increases computational complexity but enables detection of rotated objects
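
The brute-force rotation search can be sketched as follows. To stay dependency-free, this example only tries quarter-turn rotations via `np.rot90`; arbitrary angles would need an interpolating rotation routine, which is outside this sketch. The function name `match_with_rotations` is an assumption.

```python
import numpy as np

def match_with_rotations(image, template):
    """Try 0, 90, 180, and 270 degree rotations; return the best SSD match."""
    image = np.asarray(image, dtype=np.float64)
    template = np.asarray(template, dtype=np.float64)
    best = None
    for k in range(4):
        rotated = np.rot90(template, k)  # k counter-clockwise quarter turns
        th, tw = rotated.shape
        for y in range(image.shape[0] - th + 1):
            for x in range(image.shape[1] - tw + 1):
                score = float(np.sum((image[y:y + th, x:x + tw] - rotated) ** 2))
                if best is None or score < best[0]:
                    best = (score, k * 90, (y, x))
    return best

template = np.array([[1, 2], [3, 4]])
image = np.zeros((5, 5))
image[2:4, 1:3] = np.rot90(template)  # object rotated by 90 degrees

score, angle, position = match_with_rotations(image, template)
print(score, angle, position)
```

The cost grows linearly with the number of angles tested, which is the added complexity mentioned in the list.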

Performance optimization

Pyramid representation

- Creates multi-resolution image pyramid by successively downsampling the input image

- Performs template matching at coarser levels first, refining results at finer levels

- Reduces computational cost by quickly eliminating unlikely match locations

- Allows for efficient multi-scale matching without resizing the template

- Improves performance for large images or when searching for objects at multiple scales
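
A coarse-to-fine search could look like the sketch below. It assumes a two-level pyramid, SSD scoring, and that both image and template are downsampled for the coarse pass; the names `downsample2`, `ssd_search`, and `coarse_to_fine` and the `radius` parameter are illustrative choices.

```python
import numpy as np

def downsample2(a):
    """Halve resolution by averaging non-overlapping 2x2 blocks."""
    h, w = a.shape[0] // 2 * 2, a.shape[1] // 2 * 2
    return a[:h, :w].reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))

def ssd_search(image, template, ys, xs):
    """Best (lowest) SSD over the given candidate rows and columns."""
    th, tw = template.shape
    best_score, best_pos = np.inf, None
    for y in ys:
        for x in xs:
            score = np.sum((image[y:y + th, x:x + tw] - template) ** 2)
            if score < best_score:
                best_score, best_pos = score, (y, x)
    return best_score, best_pos

def coarse_to_fine(image, template, radius=2):
    image = np.asarray(image, dtype=np.float64)
    template = np.asarray(template, dtype=np.float64)
    # Coarse pass: exhaustive search at half resolution
    small_img, small_tpl = downsample2(image), downsample2(template)
    sh, sw = small_tpl.shape
    _, (cy, cx) = ssd_search(small_img, small_tpl,
                             range(small_img.shape[0] - sh + 1),
                             range(small_img.shape[1] - sw + 1))
    # Fine pass: search only near the upscaled coarse estimate
    th, tw = template.shape
    max_y, max_x = image.shape[0] - th, image.shape[1] - tw
    ys = range(max(0, 2 * cy - radius), min(max_y, 2 * cy + radius) + 1)
    xs = range(max(0, 2 * cx - radius), min(max_x, 2 * cx + radius) + 1)
    return ssd_search(image, template, ys, xs)

template = np.arange(1, 17, dtype=np.float64).reshape(4, 4)
image = np.zeros((16, 16))
image[6:10, 8:12] = template

score, position = coarse_to_fine(image, template)
print(score, position)
```

The fine pass evaluates at most 25 windows here instead of all 169 full-resolution positions, at the price of possibly missing matches that the coarse level fails to flag.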

Fast Fourier Transform (FFT)

- Utilizes frequency domain representation to accelerate template matching

- Converts both template and image to frequency domain using FFT

- Performs element-wise multiplication of the image spectrum with the complex conjugate of the template spectrum

- Applies inverse FFT to obtain spatial domain correlation result

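- Reduces computational complexity from O(N^2 M^2) for direct matching of an MxM template against an NxN image (O(N^4) when the template is comparable in size to the image) to O(N^2 log N)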

- Particularly effective for large templates or when matching multiple templates
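
The frequency-domain route can be checked against direct cross-correlation with NumPy's FFT routines. This is a sketch under the assumption of real-valued, single-channel inputs; the function names are illustrative, and the random test data is only there to compare the two results.

```python
import numpy as np

def direct_cross_correlate(image, template):
    """Reference implementation: explicit sliding dot product."""
    th, tw = template.shape
    out_h = image.shape[0] - th + 1
    out_w = image.shape[1] - tw + 1
    out = np.empty((out_h, out_w))
    for y in range(out_h):
        for x in range(out_w):
            out[y, x] = np.sum(image[y:y + th, x:x + tw] * template)
    return out

def fft_cross_correlate(image, template):
    """Cross-correlation via FFT; returns only non-wrapping positions."""
    h, w = image.shape
    th, tw = template.shape
    f_img = np.fft.rfft2(image)
    f_tpl = np.fft.rfft2(template, s=image.shape)  # zero-pad to image size
    # Conjugating the template spectrum gives correlation, not convolution
    corr = np.fft.irfft2(f_img * np.conj(f_tpl), s=image.shape)
    return corr[:h - th + 1, :w - tw + 1]

rng = np.random.default_rng(0)
image = rng.random((16, 12))
template = rng.random((4, 3))

fast = fft_cross_correlate(image, template)
direct = direct_cross_correlate(image, template)
print(np.allclose(fast, direct))
```

The FFT product computes a circular correlation, so the sketch crops the result to positions where the template does not wrap around the image border.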

Integral images

- Precomputes cumulative sum of pixel values in the image

- Enables rapid calculation of sum or average of rectangular image regions

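- Accelerates the window-sum terms (window mean and window energy) used by normalized cross-correlation and sum of squared differences; the template-image cross term still requires direct or FFT-based computation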

- Reduces computational complexity for each window evaluation

- Especially useful for real-time applications or when using large templates
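
A minimal integral image sketch in NumPy follows. The names `integral_image` and `window_sum` are assumptions; the extra zero row and column are a common convention that avoids special cases at the border.

```python
import numpy as np

def integral_image(image):
    """Cumulative sums with a leading row and column of zeros."""
    image = np.asarray(image, dtype=np.float64)
    ii = np.zeros((image.shape[0] + 1, image.shape[1] + 1))
    ii[1:, 1:] = image.cumsum(axis=0).cumsum(axis=1)
    return ii

def window_sum(ii, y, x, h, w):
    """Sum of the h x w window with top-left corner (y, x), in four lookups."""
    return ii[y + h, x + w] - ii[y, x + w] - ii[y + h, x] + ii[y, x]

image = np.arange(16).reshape(4, 4)
ii = integral_image(image)
ii_sq = integral_image(image ** 2)  # integral of squared pixels

total = window_sum(ii, 1, 1, 2, 2)      # sum of image[1:3, 1:3]
energy = window_sum(ii_sq, 1, 1, 2, 2)  # sum of squares of the same window
print(total, energy)
```

Each window sum costs four array lookups regardless of window size. The squared-pixel table supplies the window energy needed by the SSD expansion and by the normalization term of normalized cross-correlation.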

Challenges and limitations

Occlusion and partial matching

- Objects partially hidden or obstructed in images pose challenges for template matching

- Traditional methods struggle with incomplete object appearances

- Partial matching techniques (matching subregions of template) can improve robustness

- Deformable templates or part-based models address occlusion issues

- Requires careful threshold selection to balance false positives and false negatives

Illumination and scale variations

- Changes in lighting conditions affect pixel intensities, impacting matching accuracy

- Scale differences between template and target object in image reduce matching performance

- Normalized cross-correlation helps mitigate illumination variations

- Multi-scale matching techniques address scale differences

- Preprocessing steps (histogram equalization, edge detection) can improve robustness

Computational complexity

- Template matching can be computationally expensive, especially for large images or templates

- Exhaustive search approaches may not be suitable for real-time applications

- Optimization techniques (FFT, integral images) reduce computational burden

- Trade-off between accuracy and speed must be considered for specific applications

- Parallel processing or GPU acceleration can improve performance for large-scale matching

Advanced template matching

Deformable templates

- Allows for non-rigid transformations of the template to match object variations

- Incorporates shape models to adapt template to different object instances

- Active contour models (snakes) deform template boundaries to fit image features

- Statistical shape models capture variations in object appearance across a population

- Improves matching accuracy for objects with flexible or articulated structures

Feature-based matching

- Extracts distinctive features (corners, edges, keypoints) from both template and image

- Matches features between template and image using descriptors (SIFT, SURF, ORB)

- Estimates transformation between matched features to locate template in image

- Robust to partial occlusion and geometric transformations

- Computationally efficient for large images or multiple template matching

Machine learning approaches

- Utilizes trained models to improve template matching performance

- Convolutional Neural Networks (CNNs) learn hierarchical features for object detection

- Template matching can serve as a region proposal mechanism for deep learning models

- Combines traditional template matching with learned features for improved accuracy

- Enables adaptation to complex object variations and background clutter

Evaluation metrics

Precision and recall

- Precision measures proportion of correct matches among all detected matches

- Recall measures proportion of correct matches among all actual instances in image

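- Formula for precision: $\text{Precision} = \dfrac{TP}{TP + FP}$, where $TP$ is the number of true positives and $FP$ the number of false positives

- Formula for recall: $\text{Recall} = \dfrac{TP}{TP + FN}$, where $FN$ is the number of false negatives (missed instances)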

- F1-score combines precision and recall into a single metric as their harmonic mean: $F_1 = \dfrac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}$
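
As a small worked example, the function below computes the three metrics from counts of true positives (correct matches), false positives (spurious matches), and false negatives (missed instances). The function name and the counts are made up for illustration.

```python
def precision_recall_f1(tp, fp, fn):
    """Precision, recall, and F1 from match counts (0.0 when undefined)."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# Hypothetical run: 8 correct detections, 2 spurious, 4 missed objects
precision, recall, f1 = precision_recall_f1(tp=8, fp=2, fn=4)
print(precision, recall, f1)
```

With these counts precision is 8/10, recall is 8/12, and F1 is 8/11.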

Intersection over Union (IoU)

- Measures overlap between predicted bounding box and ground truth bounding box

- Calculated as area of intersection divided by area of union of the two boxes

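- Formula for IoU: $\text{IoU} = \dfrac{|A \cap B|}{|A \cup B|}$, where $A$ is the predicted box and $B$ is the ground truth box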

- A match is typically counted as successful when IoU meets a chosen threshold (commonly 0.5 or 0.7)

- Higher IoU indicates more accurate localization of matched objects
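
The IoU calculation for axis-aligned boxes can be sketched as below, assuming boxes are given as `(x1, y1, x2, y2)` corner coordinates. That box format and the function name `iou` are choices made for this example.

```python
def iou(box_a, box_b):
    """Intersection over Union for boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    # Clamp at zero so non-overlapping boxes give an empty intersection
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union else 0.0

predicted = (0, 0, 10, 10)
ground_truth = (5, 5, 15, 15)
overlap = iou(predicted, ground_truth)
print(overlap, overlap >= 0.5)
```

Here the intersection is 25 and the union is 175, giving an IoU of 1/7, which would fail a 0.5 threshold.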

Receiver Operating Characteristic (ROC)

- Plots true positive rate against false positive rate at various threshold settings

- Visualizes trade-off between sensitivity and specificity of template matching

- Area Under the Curve (AUC) quantifies overall performance of matching algorithm

- Allows comparison of different template matching methods or parameter settings

- Helps in selecting optimal threshold for specific application requirements
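
One way to trace an ROC curve is to sweep the decision threshold over the observed match scores, as in the sketch below. The scores and labels are invented for illustration, labels use 1 for a true match and 0 for a non-match, and the function names are assumptions.

```python
def roc_points(scores, labels):
    """(false positive rate, true positive rate) pairs, one per threshold."""
    positives = sum(labels)
    negatives = len(labels) - positives
    points = [(0.0, 0.0)]
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for s, l in zip(scores, labels) if s >= threshold and l == 1)
        fp = sum(1 for s, l in zip(scores, labels) if s >= threshold and l == 0)
        points.append((fp / negatives, tp / positives))
    return points

def area_under_curve(points):
    """Trapezoidal area under a curve given as ordered (x, y) points."""
    return sum((x1 - x0) * (y0 + y1) / 2
               for (x0, y0), (x1, y1) in zip(points, points[1:]))

# Hypothetical match scores with ground-truth labels
scores = [0.9, 0.8, 0.7, 0.6, 0.55, 0.4]
labels = [1, 1, 0, 1, 0, 0]

points = roc_points(scores, labels)
auc = area_under_curve(points)
print(points[-1], auc)
```

For this data the AUC is 8/9: of the nine positive-negative pairs, eight are ranked correctly by the score.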

Template matching vs alternatives

Template matching vs feature detection

- Template matching searches for entire object pattern, feature detection identifies distinctive points

- Feature detection more robust to occlusion and geometric transformations

- Template matching provides precise localization, feature detection offers faster matching

- Feature detection requires less prior knowledge about object appearance

- Hybrid approaches combine strengths of both methods for improved performance

Template matching vs deep learning

- Template matching relies on predefined patterns, deep learning learns features from data

- Deep learning models (CNNs) can handle complex object variations and backgrounds

- Template matching is computationally efficient for simple, rigid objects; deep learning is better suited to complex scenes

- Deep learning requires large labeled datasets and significant computational resources for training

- Template matching serves as preprocessing step or fallback method in deep learning pipelines

Real-world applications

Object detection and tracking

- Locates and follows specific objects in images or video streams

- Used in surveillance systems to identify and track persons of interest

- Enables autonomous vehicle systems to detect and track other vehicles and pedestrians

- Facilitates augmented reality applications by anchoring virtual objects to real-world targets

- Supports robotics applications for object manipulation and navigation

Image registration

- Aligns multiple images of the same scene taken from different viewpoints or times

- Crucial for medical image analysis, aligning scans from different modalities (MRI, CT)

- Enables creation of panoramic images by stitching multiple overlapping photographs

- Supports remote sensing applications, aligning satellite or aerial imagery

- Facilitates motion correction in video stabilization and super-resolution techniques

Quality control in manufacturing

- Inspects products on assembly lines for defects or inconsistencies

- Verifies correct placement and orientation of components in electronic assemblies

- Checks packaging for proper labeling, sealing, and overall quality

- Measures dimensions and tolerances of manufactured parts for compliance

- Enables automated sorting and grading of products based on visual characteristics