Image sampling and quantization are fundamental concepts in digital image processing. These techniques convert continuous visual information into discrete digital data, enabling computational analysis and manipulation of images in various applications.

Sampling determines how finely an image is divided into pixels, while quantization assigns discrete values to pixel intensities. Together, they balance image quality with data efficiency, influencing resolution, color depth, and file size in digital imaging systems.

Fundamentals of image sampling

- Image sampling forms the foundation of digital image representation in computer vision and image processing

- Involves converting continuous visual information into discrete digital data for computational analysis

- Crucial for accurately capturing and preserving image details for further processing and interpretation

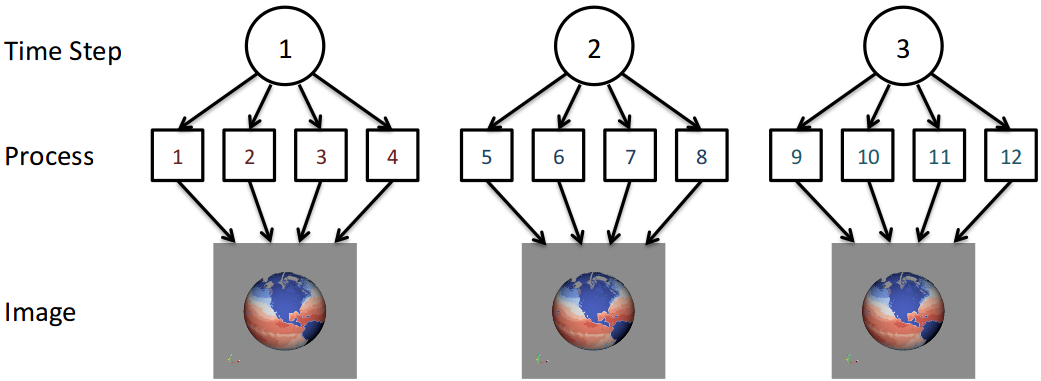

Spatial vs temporal sampling

- Spatial sampling captures image information across physical dimensions (width and height)

- Temporal sampling records image changes over time, essential for video processing

- Spatial resolution determines how much detail an image holds, while temporal resolution (frame rate) determines how smoothly motion is rendered

- Higher sampling rates in both domains generally lead to better image quality but increased data size

Nyquist-Shannon sampling theorem

- Fundamental principle stating the minimum sampling rate required to accurately represent a signal

- Sampling frequency must be greater than twice the highest frequency component in the original signal; this threshold is called the Nyquist rate

- Sampling above the Nyquist rate prevents aliasing and allows exact reconstruction of a band-limited signal from its discrete samples

- Applied in image processing to determine the minimum spatial sampling rate (pixel pitch) needed to capture the finest detail of interest
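
A minimal sketch of the sampling criterion in one dimension, assuming a pure sinusoid: `nyquist_rate` returns the rate that must be exceeded, and `aliased_frequency` folds a frequency into the band that a given sampling rate can represent. Both names are hypothetical; along each image axis the same folding applies, with frequency measured in cycles per unit length.

```python
def nyquist_rate(f_max):
    """Sampling rate that must be exceeded to represent content up to f_max."""
    return 2 * f_max

def aliased_frequency(f, fs):
    """Apparent frequency of a sinusoid of frequency f after sampling at rate fs.

    Frequencies above fs / 2 fold back into [0, fs / 2].
    """
    f_mod = f % fs                   # samples cannot distinguish f from f - k * fs
    return min(f_mod, fs - f_mod)    # reflect the upper half of the band

# 30 cycles/mm sampled at 100 samples/mm is below fs / 2 = 50, so it is unchanged.
print(aliased_frequency(30, 100))    # 30
# 70 cycles/mm sampled at 100 samples/mm masquerades as 30 cycles/mm.
print(aliased_frequency(70, 100))    # 30
```

The second case is aliasing in its simplest form: after sampling, the 70 and 30 cycles/mm patterns produce identical samples.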

Aliasing and Moiré patterns

- Aliasing occurs when the sampling rate is too low to capture high-frequency image components, which then appear as false lower frequencies

- Results in visual artifacts like jagged edges or false patterns in digital images

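- Moiré patterns are a form of aliasing: coarse interference patterns that appear when a fine periodic structure (fabric weaves, screens, halftone prints) is sampled at a rate close to or below its Nyquist rate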

- Anti-aliasing techniques (low-pass filtering, oversampling) mitigate these issues in image acquisition and rendering
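
The sketch below, a hypothetical illustration on a single pixel row, contrasts plain decimation with decimation after a box filter, the simplest low-pass filter. Practical anti-aliasing usually relies on better filters (Gaussian or windowed sinc), so treat the box average as an assumption chosen for brevity.

```python
def downsample_naive(row, factor):
    """Keep every factor-th sample with no filtering, so high frequencies alias."""
    return row[::factor]

def downsample_box(row, factor):
    """Average each group of `factor` samples (a box low-pass filter), then decimate."""
    return [sum(row[i:i + factor]) / factor
            for i in range(0, len(row) - factor + 1, factor)]

stripes = [0, 255] * 8                  # finest possible pattern: alternating black/white
print(downsample_naive(stripes, 2))     # [0, 0, 0, 0, 0, 0, 0, 0]: stripes turn solid black
print(downsample_box(stripes, 2))       # [127.5, 127.5, ...]: the correct average gray
```

Naive decimation replaces the stripes with a false uniform black, while filtering first keeps the average brightness and discards only detail the smaller grid cannot represent.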

Quantization process

- Quantization converts continuous-valued image data into discrete levels for digital representation

- Critical step in digitizing analog image signals and reducing data storage requirements

- Balances image quality with computational efficiency in various computer vision applications

Bit depth and color depth

- Bit depth is the number of bits used per sample; b bits give 2^b discrete intensity levels (8 bits give 256)

- Higher bit depths allow more precise color or grayscale representation (common choices are 8, 16, or 32 bits per channel)

- Color depth refers to the total number of bits used to represent color information per pixel (24-bit RGB uses 8 bits for each of three channels)

- Impacts color accuracy, dynamic range, and file size of digital images
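
The arithmetic behind bit depth and file size is short enough to sketch directly. The helper names are hypothetical, and `raw_size_bytes` assumes tightly packed samples with no file header, row padding, or compression.

```python
def levels(bits):
    """Number of distinct values a sample with `bits` bits can take."""
    return 2 ** bits

def raw_size_bytes(width, height, channels, bits_per_channel):
    """Uncompressed storage, assuming packed samples and a total bit count divisible by 8."""
    return width * height * channels * bits_per_channel // 8

print(levels(8))                          # 256 gray levels
print(levels(8 * 3))                      # 16777216 colors for 24-bit RGB
print(raw_size_bytes(1920, 1080, 3, 8))   # 6220800 bytes (about 6.2 MB)
print(raw_size_bytes(1920, 1080, 3, 16))  # 12441600 bytes
```

Doubling the bits per channel doubles the raw size, while the number of representable levels grows from 256 to 65536 per channel.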

Uniform vs non-uniform quantization

- Uniform quantization divides the signal range into equal intervals

- Simple to implement but may not optimize perceptual quality across all intensity levels

- Non-uniform quantization adapts interval sizes based on signal characteristics or human perception

- Logarithmic or perceptually-weighted quantization can improve visual quality in low-light areas
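
As a minimal sketch of the difference, assuming intensities normalized to [0, 1], the quantizers below compare uniform levels with a non-uniform scheme built by power-law companding: compress with x^(1/γ), quantize uniformly, then expand. The value γ = 2.2 is an assumption that mirrors common display encoding; sRGB, for instance, uses a piecewise curve rather than a pure power law. Both function names are hypothetical.

```python
def quantize_uniform(x, bits):
    """Round x in [0, 1] to the nearest of 2**bits equally spaced levels (0 and 1 included)."""
    n = 2 ** bits - 1
    return round(x * n) / n

def quantize_gamma(x, bits, gamma=2.2):
    """Non-uniform quantizer via companding: compress, quantize uniformly, expand.

    Equal steps in the compressed domain map to small steps near black
    and large steps near white.
    """
    compressed = x ** (1 / gamma)
    return quantize_uniform(compressed, bits) ** gamma

dark = 0.01
print(abs(quantize_uniform(dark, 4) - dark))  # uniform 4-bit: 0.01 rounds down to black
print(abs(quantize_gamma(dark, 4) - dark))    # companded 4-bit: much smaller error
```

With the same 16 levels, the companded quantizer spends more of them on dark tones, at the cost of coarser steps near white.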

Quantization error and noise

- Quantization error results from rounding continuous values to discrete levels

- Manifests as loss of detail or introduction of false contours in images

- Quantization noise appears as granularity or "graininess" in digital images

- Dithering techniques can distribute quantization errors to improve perceived image quality
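
Under the usual modeling assumption that the error is spread uniformly across one quantization step, its size can be worked out directly. For a uniform quantizer with b bits (2^b cells) over the range [x_min, x_max], the step Δ, the error bound, and the mean squared error are:

$$
\Delta = \frac{x_{\max} - x_{\min}}{2^{b}}, \qquad |e| = |x - Q(x)| \le \frac{\Delta}{2}
$$

$$
\mathbb{E}[e^2] = \frac{1}{\Delta} \int_{-\Delta/2}^{\Delta/2} e^2 \, de = \frac{\Delta^2}{12}
$$

Each additional bit halves Δ and therefore divides the noise power by 4, an improvement of 10 log10(4) ≈ 6.02 dB per bit. The uniform-error model is an approximation that holds best for busy, detailed signals; in smooth gradients the error becomes correlated with the image and shows up as false contours instead.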

Spatial resolution concepts

- Spatial resolution defines the level of detail an imaging system can capture or display

- Crucial for determining the clarity and sharpness of digital images in computer vision applications

- Impacts the ability to distinguish fine structures and textures in image analysis tasks

Pixels and pixel density

- Pixels (picture elements) are the smallest addressable elements in a digital image

- Pixel count determines the total number of samples in horizontal and vertical dimensions

- Pixel density (pixels per inch, or PPI) measures how many pixels fit along one inch of a display or print; it is a linear measure, not an area measure

- Higher pixel density generally results in sharper images but requires more storage and processing power
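
For a display, pixel density follows from the pixel dimensions and the diagonal size. A minimal sketch, assuming square pixels; `display_ppi` is a hypothetical helper:

```python
import math

def display_ppi(width_px, height_px, diagonal_in):
    """Pixels per inch of a display with square pixels, from its diagonal size in inches."""
    diagonal_px = math.hypot(width_px, height_px)  # number of pixels along the diagonal
    return diagonal_px / diagonal_in

print(round(display_ppi(1920, 1080, 24), 1))    # 91.8
print(round(display_ppi(1920, 1080, 13.3), 1))  # 165.6
```

The same 1920x1080 pixel count is almost twice as dense on a 13.3-inch laptop panel as on a 24-inch monitor.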

Resolution vs image size

- Resolution refers to the amount of detail in an image, often expressed in pixels (1920x1080)

- Image size denotes the physical dimensions of an image when printed or displayed

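- The same pixel dimensions yield different physical sizes at different pixel densities: 1920 pixels printed at 300 PPI span 6.4 inches, but at 96 PPI they span 20 inches; viewing distance then determines how sharp that size appears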

- Critical to consider both factors for optimal image quality in different applications (screen display, printing)
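
The relation between pixel dimensions, pixel density, and physical size is a single division per axis. The hypothetical helper below shows, for example, how a 1920x1080 image prints at 300 PPI versus 96 PPI.

```python
def print_size_inches(width_px, height_px, ppi):
    """Physical dimensions (inches) of an image reproduced at a given pixel density."""
    return width_px / ppi, height_px / ppi

print(print_size_inches(1920, 1080, 300))  # (6.4, 3.6)
print(print_size_inches(1920, 1080, 96))   # (20.0, 11.25)
```

Printing larger at the same pixel count simply lowers the PPI, which is why a file that looks sharp on screen can look soft on a large print.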

![Spatial vs temporal sampling, The effects of spatial and temporal replicate sampling on eDNA metabarcoding [PeerJ]](https://storage.googleapis.com/static.prod.fiveable.me/search-images%2F%22Spatial_vs_temporal_sampling_in_computer_vision%3A_image_resolution_video_processing_and_data_quality%22-fig-4-full.png)

Interpolation methods

- Used to estimate new pixel values when resizing or transforming digital images

- Nearest neighbor interpolation assigns the value of the closest pixel, preserving hard edges

- Bilinear interpolation calculates a distance-weighted average of the four nearest pixels, producing smoother results

- Bicubic interpolation considers a larger 4x4 pixel neighborhood, generally giving sharper results than bilinear

Color quantization techniques

- Color quantization reduces the number of distinct colors in an image while maintaining visual quality

- Essential for optimizing storage, transmission, and display of images in resource-constrained environments

- Balances color fidelity with computational efficiency in various image processing applications

Color spaces for quantization

- RGB (Red, Green, Blue) commonly used for display devices and digital image representation

- YCbCr separates luminance and chrominance, often used in video compression

- CIELAB (LAB) color space is designed so that equal numeric distances roughly match equal perceived color differences, which makes it useful for perceptual color quantization

- HSV (Hue, Saturation, Value) intuitive for color selection and manipulation tasks
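
A sketch of the luminance/chrominance split, assuming 8-bit full-range values and the BT.601-derived coefficients used by JPEG (JFIF). Video pipelines often use limited range (16 to 235 for luma) and other matrices such as BT.709, so these constants are one common choice rather than the only one; the function names are hypothetical.

```python
def rgb_to_ycbcr(r, g, b):
    """Full-range BT.601 conversion as used by JFIF; inputs and outputs span 0..255."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return y, cb, cr

def ycbcr_to_rgb(y, cb, cr):
    """Inverse of rgb_to_ycbcr (exact up to the rounding of the published constants)."""
    r = y + 1.402 * (cr - 128)
    g = y - 0.344136 * (cb - 128) - 0.714136 * (cr - 128)
    b = y + 1.772 * (cb - 128)
    return r, g, b

print(rgb_to_ycbcr(255, 255, 255))  # white: Y near 255, both chroma channels near 128
print(ycbcr_to_rgb(*rgb_to_ycbcr(200, 50, 10)))  # close to (200, 50, 10)
```

Because most perceived detail lives in Y, codecs can subsample or quantize Cb and Cr more coarsely than Y.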

Color palette selection

- Uniform color quantization divides the color space into equal partitions

- Popularity algorithm selects the K most frequent colors in the image as the palette

- Median cut recursively splits the color space at the median of its widest channel, so each resulting box holds roughly the same number of pixels

- Octree quantization uses a tree structure to efficiently represent and reduce the color space
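
A compact sketch of median cut on a list of (r, g, b) tuples. Choosing the box with the widest channel range to split next and using each box's integer mean color as its palette entry are assumptions for this illustration; practical implementations usually work on a color histogram for speed. The hypothetical `map_to_palette` then assigns each pixel its nearest palette color.

```python
def median_cut(pixels, n_colors):
    """Build a palette of at most n_colors by splitting color boxes at the median."""
    def channel_range(box, c):
        return max(p[c] for p in box) - min(p[c] for p in box)

    def widest(box):
        return max(range(3), key=lambda c: channel_range(box, c))

    boxes = [list(pixels)]
    while len(boxes) < n_colors:
        # Pick the box whose widest channel spans the largest range.
        i = max(range(len(boxes)), key=lambda k: channel_range(boxes[k], widest(boxes[k])))
        box = boxes[i]
        c = widest(box)
        if len(box) < 2 or channel_range(box, c) == 0:
            break  # no box can be split any further
        boxes.pop(i)
        box.sort(key=lambda p: p[c])  # order colors along the widest channel
        mid = len(box) // 2
        boxes += [box[:mid], box[mid:]]
    # Each palette entry is the integer mean color of one box.
    return [tuple(sum(p[c] for p in box) // len(box) for c in range(3)) for box in boxes]

def map_to_palette(pixel, palette):
    """Replace a pixel by the palette color with the smallest squared RGB distance."""
    return min(palette, key=lambda q: sum((a - b) ** 2 for a, b in zip(pixel, q)))

palette = median_cut([(0, 0, 0), (10, 0, 0), (200, 200, 200), (210, 200, 200)], 2)
print(palette)                                  # [(5, 0, 0), (205, 200, 200)]
print(map_to_palette((190, 180, 190), palette))  # (205, 200, 200)
```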

Dithering algorithms

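- Floyd-Steinberg dithering diffuses each pixel's quantization error to its unprocessed neighbors with weights 7/16 (right), 3/16 (below left), 5/16 (below), and 1/16 (below right)

- Ordered dithering compares each pixel against a repeating threshold matrix (such as a Bayer matrix) to create patterns of available colors

- Floyd-Steinberg is one member of the broader error-diffusion family; other kernels (Jarvis-Judice-Ninke, Stucki, Atkinson) spread the error over larger or differently shaped neighborhoods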

- Halftoning simulates continuous tone images using patterns of dots
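
A minimal Floyd-Steinberg sketch for a grayscale image stored as a list of rows with values in 0..255. The function name and the `levels` parameter are hypothetical; pixels are processed in plain left-to-right raster order, whereas some implementations alternate direction on each row (serpentine scanning).

```python
def floyd_steinberg(img, levels=2):
    """Quantize a grayscale image to `levels` evenly spaced grays, diffusing the
    rounding error of each pixel onto its unprocessed neighbors."""
    h, w = len(img), len(img[0])
    buf = [[float(v) for v in row] for row in img]  # working copy that accumulates error
    out = [[0] * w for _ in range(h)]
    step = 255 / (levels - 1)
    for y in range(h):
        for x in range(w):
            old = buf[y][x]
            new = min(255.0, max(0.0, round(old / step) * step))  # nearest available level
            out[y][x] = int(round(new))
            err = old - new
            # Weights: 7/16 right, 3/16 below-left, 5/16 below, 1/16 below-right.
            if x + 1 < w:
                buf[y][x + 1] += err * 7 / 16
            if y + 1 < h:
                if x > 0:
                    buf[y + 1][x - 1] += err * 3 / 16
                buf[y + 1][x] += err * 5 / 16
                if x + 1 < w:
                    buf[y + 1][x + 1] += err * 1 / 16
    return out

gray = [[128] * 16 for _ in range(16)]   # flat mid-gray test image
result = floyd_steinberg(gray)           # pure black/white output
mean = sum(map(sum, result)) / 256
print(mean)                              # close to the original 128
```

Quantizing a flat mid-gray to pure black and white yields a mixed pattern whose average stays close to the original gray, which is the perceptual effect dithering aims for.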

Sampling in frequency domain

- Analyzes image content in terms of spatial frequencies rather than spatial coordinates

- Provides insights into image structure, texture, and periodic patterns

- Crucial for various image processing tasks (filtering, compression, feature extraction)

Fourier transform and sampling

- Fourier transform decomposes an image into its constituent frequency components

- Discrete Fourier Transform (DFT) applies to sampled digital images

- Fast Fourier Transform (FFT) efficiently computes DFT, essential for real-time processing

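- Sampling an image at spacing T replicates its spectrum at multiples of 1/T in the frequency domain; if the replicas overlap, aliasing results. Conversely, sampling the spectrum (as the DFT does) makes the spatial signal implicitly periodic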
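
A naive DFT makes the frequency view concrete. This O(N^2) sketch (the name `dft` is illustrative) computes the same values an FFT would, just more slowly. It also shows the link to sampling: a cosine with 13 cycles across 16 samples produces the same samples as one with 3 cycles, so the two cannot be told apart once sampled.

```python
import cmath
import math

def dft(x):
    """Naive O(N^2) discrete Fourier transform of a list of numbers."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]

n = 16
signal = [math.cos(2 * math.pi * 3 * t / n) for t in range(n)]   # 3 cycles per 16 samples
mags = [abs(c) for c in dft(signal)]
peaks = [k for k, m in enumerate(mags) if m > 1e-6]
print(peaks)  # [3, 13]: bin 3 and its mirror N - 3

alias = [math.cos(2 * math.pi * 13 * t / n) for t in range(n)]   # 13 cycles per 16 samples
print(max(abs(a - b) for a, b in zip(signal, alias)) < 1e-9)     # True: identical samples
```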

Low-pass and band-pass filtering

- Low-pass filtering attenuates high-frequency components, smoothing images and reducing noise

- Band-pass filtering selectively preserves a specific range of frequencies

- Ideal filters have sharp cutoffs in the frequency domain but produce ringing artifacts (the Gibbs phenomenon) near edges in the spatial domain

- Gaussian filters provide smooth transitions, balancing frequency selectivity and spatial localization
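
A sketch of Gaussian low-pass filtering along one image row. The default kernel radius of 3σ and edge replication at the borders are assumptions, and the helper names are hypothetical. Because the 2D Gaussian is separable, filtering rows and then columns with the same 1D kernel gives the 2D result.

```python
import math

def gaussian_kernel(sigma, radius=None):
    """Sampled 1D Gaussian, normalized to sum to 1; radius defaults to ceil(3 * sigma)."""
    if radius is None:
        radius = math.ceil(3 * sigma)
    weights = [math.exp(-(i * i) / (2 * sigma * sigma)) for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]  # unit sum leaves flat regions unchanged

def convolve_1d(row, kernel):
    """Filter a row with a symmetric kernel, replicating edge pixels beyond the borders."""
    r = len(kernel) // 2
    n = len(row)
    return [sum(kernel[j] * row[min(max(i + j - r, 0), n - 1)] for j in range(len(kernel)))
            for i in range(n)]

edge_row = [0, 0, 0, 0, 255, 255, 255, 255]   # a hard step edge
print([round(v) for v in convolve_1d(edge_row, gaussian_kernel(1.0))])  # step becomes a ramp
```

Larger σ attenuates more high frequencies and widens the ramp; unlike an ideal cutoff, the Gaussian never overshoots, so it does not ring.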

Reconstruction from samples

- Inverse Fourier transform reconstructs spatial domain image from frequency domain samples

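- The Nyquist-Shannon theorem guarantees perfect reconstruction of a band-limited signal, via ideal sinc interpolation, when it is sampled above the Nyquist rate

- Windowing functions (Hamming, Hann) reduce spectral leakage and edge artifacts when working with finite-length signals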

- Interpolation in frequency domain can achieve high-quality image resizing and rotation
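
A sketch of ideal reconstruction in one dimension (Whittaker-Shannon interpolation), where each sample contributes a shifted sinc. Positions are in units of the sample spacing, and the helper names are hypothetical. Because only finitely many samples are available the sum is truncated, so off-grid values are close but not exact; this is the same finite-length problem that windowing addresses.

```python
import math

def sinc(x):
    """Normalized sinc: sin(pi x) / (pi x), with sinc(0) = 1."""
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)

def reconstruct(samples, t):
    """Whittaker-Shannon interpolation of unit-spaced samples at position t.

    Exact only for an infinitely long band-limited signal; truncating to the
    available samples introduces a small error, largest near the ends.
    """
    return sum(s * sinc(t - n) for n, s in enumerate(samples))

# 0.1 cycles per sample is well below the Nyquist limit of 0.5.
samples = [math.sin(2 * math.pi * 0.1 * n) for n in range(64)]
print(reconstruct(samples, 10), samples[10])                           # equal on the grid
print(reconstruct(samples, 31.5), math.sin(2 * math.pi * 0.1 * 31.5))  # close between samples
```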

Image resampling methods

- Resampling alters the pixel grid of an image, changing its resolution or geometric properties

- Essential for resizing, rotating, or warping images in computer vision and graphics applications

- Different methods balance computational efficiency with output image quality

Nearest neighbor vs bilinear

- Nearest neighbor assigns the value of the closest pixel in the original image

- Preserves hard edges but can result in blocky appearance when upscaling

- Bilinear interpolation calculates a distance-weighted average of the four nearest pixels (a 2x2 neighborhood)

- Produces smoother results but may slightly blur sharp edges
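
The two methods can be sketched for a grayscale image stored as a list of rows. Mapping output pixel centers back to input coordinates with (i + 0.5) * scale - 0.5 and clamping at the borders are common conventions assumed here; all function names are hypothetical.

```python
import math

def sample_nearest(img, x, y):
    """Value of the pixel whose center is closest to (x, y), in pixel coordinates."""
    h, w = len(img), len(img[0])
    xi = min(max(math.floor(x + 0.5), 0), w - 1)
    yi = min(max(math.floor(y + 0.5), 0), h - 1)
    return img[yi][xi]

def sample_bilinear(img, x, y):
    """Distance-weighted average of the 2x2 pixels surrounding (x, y)."""
    h, w = len(img), len(img[0])
    x = min(max(x, 0.0), w - 1.0)  # clamp so all four neighbors exist
    y = min(max(y, 0.0), h - 1.0)
    x0, y0 = math.floor(x), math.floor(y)
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    fx, fy = x - x0, y - y0
    top = img[y0][x0] * (1 - fx) + img[y0][x1] * fx
    bottom = img[y1][x0] * (1 - fx) + img[y1][x1] * fx
    return top * (1 - fy) + bottom * fy

def resize(img, new_w, new_h, sampler):
    """Resample onto a new_w x new_h grid by mapping output pixel centers into the input."""
    h, w = len(img), len(img[0])
    sx, sy = w / new_w, h / new_h
    return [[sampler(img, (i + 0.5) * sx - 0.5, (j + 0.5) * sy - 0.5) for i in range(new_w)]
            for j in range(new_h)]

edge = [[0, 255], [0, 255]]
print(resize(edge, 4, 1, sample_nearest))   # [[0, 0, 255, 255]]
print(resize(edge, 4, 1, sample_bilinear))  # [[0.0, 63.75, 191.25, 255.0]]
```

Upscaling a two-pixel black/white edge shows the trade-off: nearest neighbor keeps the edge hard (and would look blocky at larger scales), while bilinear introduces intermediate grays that soften it.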

Bicubic and Lanczos resampling

- Bicubic interpolation considers a 4x4 pixel neighborhood for each output pixel

- Produces sharper results than bilinear and preserves more image detail, though it can add slight overshoot (halos) at strong edges

- Lanczos resampling uses a windowed sinc function as the interpolation kernel

- Offers high-quality results, particularly for downscaling, but more computationally intensive
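
A sketch of the Lanczos kernel, sinc(x)·sinc(x/a) for |x| < a, and of 1D interpolation with it, assuming a = 3 (a common choice) and edge replication. For downscaling, a full resampler would also stretch the kernel by the scale factor so it acts as an anti-aliasing filter; this sketch omits that step, and the helper names are hypothetical.

```python
import math

def lanczos_kernel(x, a=3):
    """Lanczos window: sinc(x) * sinc(x / a) for |x| < a, else 0 (normalized sinc)."""
    if x == 0:
        return 1.0
    if abs(x) >= a:
        return 0.0
    px = math.pi * x
    return a * math.sin(px) * math.sin(px / a) / (px * px)

def lanczos_sample(row, x, a=3):
    """Interpolate a 1D row at fractional position x from the 2a nearest samples."""
    n = len(row)
    left = math.floor(x) - a + 1
    total = weight_sum = 0.0
    for i in range(left, left + 2 * a):
        w = lanczos_kernel(x - i, a)
        total += w * row[min(max(i, 0), n - 1)]  # replicate edge samples
        weight_sum += w
    return total / weight_sum  # renormalize so the weights sum to 1

row = [0, 10, 20, 40, 80, 160, 200, 220, 230, 240]
print(lanczos_sample(row, 5))    # on the grid: returns row[5]
print(lanczos_sample(row, 4.5))  # between samples: weighted by six kernel taps
```

The six taps per output sample (versus two for linear and four for cubic interpolation in 1D) are where the extra computational cost comes from.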

Super-resolution techniques

- Aim to increase image resolution beyond simple interpolation methods

- Machine learning approaches (convolutional neural networks) learn to infer high-resolution details

- Example-based methods use databases of image patches to guide upscaling

- Multi-frame techniques, including frequency-domain methods, exploit aliasing across several sub-pixel-shifted low-resolution images to recover high-frequency information

Quantization in compression

- Quantization reduces the amount of information in an image to achieve data compression

- Crucial for efficient storage and transmission of digital images and video

- Balances compression ratio with perceived image quality in various applications

Lossy vs lossless compression

- Lossless compression preserves all original image data (PNG, TIFF)

- Achieves moderate compression ratios while allowing perfect reconstruction

- Lossy compression discards some image information to achieve higher compression (JPEG)

- Exploits human visual system limitations to reduce file size while maintaining perceptual quality

Vector quantization

- Represents groups of pixel values (vectors) using a codebook of representative vectors

- Effective for compressing images with recurring patterns or textures

- Codebook design crucial for balancing compression ratio and image quality

- Used in some video codecs (Cinepak, for example), whereas JPEG and most mainstream image codecs rely on scalar quantization of transform coefficients
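
A sketch of the encode and decode steps, assuming a codebook has already been designed (for instance with a k-means-style procedure such as the LBG algorithm). The 2x2 grayscale blocks and the four-entry codebook are hypothetical values chosen for illustration.

```python
def vq_encode(vectors, codebook):
    """Replace each vector by the index of its nearest codeword (squared Euclidean distance)."""
    def dist2(u, v):
        return sum((a - b) ** 2 for a, b in zip(u, v))
    return [min(range(len(codebook)), key=lambda k: dist2(v, codebook[k])) for v in vectors]

def vq_decode(indices, codebook):
    """Look each index up in the codebook (the lossy reconstruction)."""
    return [codebook[k] for k in indices]

# Hypothetical codebook for 2x2 grayscale blocks flattened to 4-vectors.
codebook = [(0, 0, 0, 0), (255, 255, 255, 255), (0, 255, 0, 255), (255, 0, 255, 0)]
blocks = [(10, 5, 0, 3), (240, 250, 255, 251), (5, 240, 12, 250)]
idx = vq_encode(blocks, codebook)
print(idx)                       # [0, 1, 2]
print(vq_decode(idx, codebook))  # codeword approximations of the original blocks
```

With four codewords, each block is stored as a 2-bit index instead of 32 bits of pixel data (ignoring the cost of the codebook itself), which is where the compression comes from.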

Transform coding quantization

- Applies quantization in a transformed domain (DCT for JPEG, wavelet for JPEG2000)

- Exploits energy compaction properties of transforms to concentrate information

- Quantization step sizes often vary based on frequency content and human visual sensitivity

- Zig-zag scanning orders the quantized coefficients from low to high frequency so that zeros cluster at the end, where run-length and entropy coding compress them efficiently
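
A sketch of the JPEG-style path for a single 8x8 luminance block: level shift, 2D DCT, division by a quantization table, zig-zag scan, and trimming of the trailing zero run. The table is the example luminance table published with the JPEG standard (ITU-T T.81, Annex K); the naive DCT loop and helper names are illustrative, and real encoders follow this with entropy coding.

```python
import math

# Example luminance quantization table from ITU-T T.81 (row = vertical frequency).
Q_LUMA = [
    [16, 11, 10, 16, 24, 40, 51, 61],
    [12, 12, 14, 19, 26, 58, 60, 55],
    [14, 13, 16, 24, 40, 57, 69, 56],
    [14, 17, 22, 29, 51, 87, 80, 62],
    [18, 22, 37, 56, 68, 109, 103, 77],
    [24, 35, 55, 64, 81, 104, 113, 92],
    [49, 64, 78, 87, 103, 121, 120, 101],
    [72, 92, 95, 98, 112, 100, 103, 99],
]

def dct_8x8(block):
    """Naive 2D DCT-II with JPEG scaling; block[x][y] is row x, column y."""
    def c(k):
        return 1 / math.sqrt(2) if k == 0 else 1.0
    out = [[0.0] * 8 for _ in range(8)]
    for u in range(8):
        for v in range(8):
            s = 0.0
            for x in range(8):
                for y in range(8):
                    s += (block[x][y] * math.cos((2 * x + 1) * u * math.pi / 16)
                          * math.cos((2 * y + 1) * v * math.pi / 16))
            out[u][v] = 0.25 * c(u) * c(v) * s
    return out

def zigzag_indices(n=8):
    """(row, col) pairs in zig-zag order: walk anti-diagonals, alternating direction."""
    order = []
    for d in range(2 * n - 1):
        cells = [(i, d - i) for i in range(n) if 0 <= d - i < n]
        order += cells if d % 2 else cells[::-1]
    return order

def encode_block(block, q=Q_LUMA):
    shifted = [[p - 128 for p in row] for row in block]  # center 8-bit samples on zero
    coeffs = dct_8x8(shifted)
    quant = [[round(coeffs[u][v] / q[u][v]) for v in range(8)] for u in range(8)]
    seq = [quant[r][col] for r, col in zigzag_indices()]
    while seq and seq[-1] == 0:  # trailing zeros become a single end-of-block code in JPEG
        seq.pop()
    return seq

flat = [[100] * 8 for _ in range(8)]
print(encode_block(flat))  # [-14]: only the DC coefficient survives
```

A perfectly flat block compresses to a single DC value, since every AC coefficient quantizes to zero; textured blocks keep more low-frequency coefficients near the start of the zig-zag sequence.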

Applications and considerations

- Image sampling and quantization techniques find diverse applications across various fields

- Understanding the specific requirements and constraints of each application domain is crucial

- Balancing image quality, computational resources, and domain-specific needs is essential

Medical imaging requirements

- High bit depth crucial for preserving subtle intensity variations in diagnostic images

- Lossless compression often preferred to avoid artifacts that could impact diagnosis

- Standardized display calibration (the DICOM Grayscale Standard Display Function) keeps grayscale rendering consistent across devices

- Super-resolution techniques applied to enhance image detail in specific modalities (MRI, CT)

Digital photography challenges

- Wide dynamic range scenes require careful exposure and quantization strategies

- Demosaicing algorithms reconstruct full-color images from color filter array samples

- Noise reduction techniques address issues arising from high ISO settings and small sensors

- Raw image formats preserve maximum information for post-processing flexibility

Computer vision preprocessing

- Image resampling often necessary to normalize input sizes for machine learning models

- Color quantization can reduce computational complexity in object detection tasks

- Frequency domain analysis useful for texture classification and feature extraction

- Quantization effects (such as banding and lost low-contrast detail) deserve careful consideration in edge detection and feature matching algorithms