Newton's Method

Newton's Method is an iterative algorithm for approximating roots (zeros) of a function, and it is most useful when the equation can't be solved algebraically. It starts with an initial guess and uses the derivative to refine that guess repeatedly, usually producing increasingly accurate approximations of the root.

This method is especially useful for nonlinear equations like $\cos x = x$, where no algebraic technique will give you an exact answer. It shows up constantly in applied math, physics, and engineering.

Concept and Purpose

A root of a function $f$ is a value of $x$ where $f(x) = 0$. For example, $f(x) = x^2 - 4$ has roots at $x = \pm 2$.

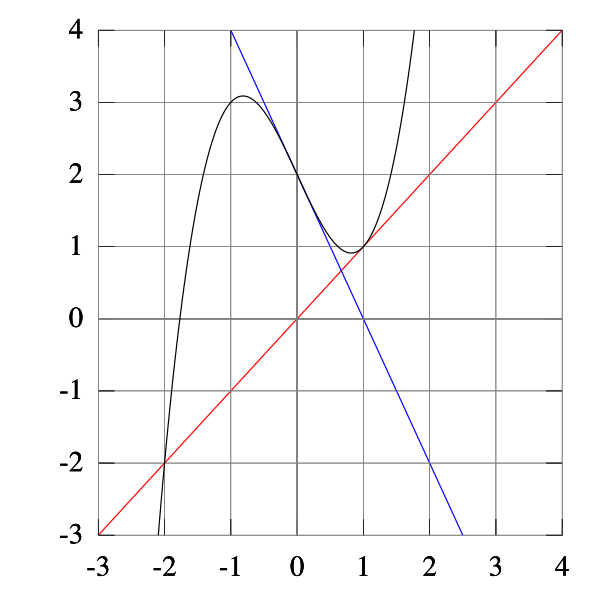

Newton's Method finds roots by using linear approximation. The core idea: at your current guess $x_n$, draw the tangent line to $y = f(x)$. Where that tangent line crosses the x-axis becomes your next guess, $x_{n+1}$. Each tangent line gets you closer to the actual root, as long as the starting guess is close enough to the root and the slope isn't near zero.

This works because near a root, the tangent line is a good local approximation of the curve. So the tangent line's zero is close to the function's zero.
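The tangent-line idea can be written out algebraically. This is a short derivation sketch, writing $x_n$ for the current guess and $x_{n+1}$ for the next one, and assuming $f$ is differentiable at $x_n$ with $f'(x_n) \neq 0$:

```latex
\begin{aligned}
y &= f(x_n) + f'(x_n)\,(x - x_n) && \text{tangent line at } x_n \\
0 &= f(x_n) + f'(x_n)\,(x_{n+1} - x_n) && \text{set } y = 0 \text{ at } x = x_{n+1} \\
x_{n+1} &= x_n - \frac{f(x_n)}{f'(x_n)} && \text{solve for } x_{n+1}
\end{aligned}
```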

The Iterative Formula

The formula that drives Newton's Method:

$$x_{n+1} = x_n - \frac{f(x_n)}{f'(x_n)}$$

- $x_n$ is your current approximation

- $f(x_n)$ is the function's value at that point

- $f'(x_n)$ is the slope of the tangent line at that point

The fraction $\frac{f(x_n)}{f'(x_n)}$ tells you how far to shift from your current guess. If $|f(x_n)|$ is large (you're far from zero), you take a bigger step. If the slope is steep, you take a smaller step.
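A minimal sketch of a single update makes the step-size behavior concrete. The helper name `newton_step` and the sample function $f(x) = x^2 - 2$ are illustrative assumptions, not part of the original text.

```python
def newton_step(f, df, x):
    """Return the next Newton iterate and the step that was taken."""
    step = f(x) / df(x)  # the fraction f(x_n) / f'(x_n)
    return x - step, step


# Illustrative function (an assumption): f(x) = x^2 - 2, so f'(x) = 2x
f = lambda x: x**2 - 2
df = lambda x: 2 * x

x_far, step_far = newton_step(f, df, 3.0)    # f(3) = 7, far from zero
x_near, step_near = newton_step(f, df, 1.5)  # f(1.5) = 0.25, close to zero

print(step_far)   # the larger step, 7 / 6
print(step_near)  # the smaller step, 0.25 / 3
```

The guess at 3.0 is much farther from the root than the guess at 1.5, and the size of the step reflects that.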

Applying Newton's Method Step by Step

- Choose an initial guess $x_0$ reasonably close to the expected root (a graph helps here).

- Compute $f(x_0)$ and $f'(x_0)$.

- Apply the formula to get $x_1$.

- Repeat using $x_1$ to find $x_2$, then $x_2$ to find $x_3$, and so on.

- Stop when the difference $|x_{n+1} - x_n|$ is within your desired tolerance, or after a set number of iterations.
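The steps above can be sketched as a short loop. This is one possible arrangement, not a definitive implementation: the function name `newton`, the default tolerance, and the iteration cap are assumptions, and the test equation $\cos x = x$ is chosen only for illustration.

```python
import math


def newton(f, df, x0, tol=1e-10, max_iter=50):
    """Approximate a root of f starting from x0.

    Returns (x, iterations). Raises if the derivative is exactly zero or
    if the tolerance is not met within max_iter iterations.
    """
    x = x0
    for n in range(1, max_iter + 1):
        slope = df(x)
        if slope == 0:
            raise ZeroDivisionError(f"f'(x) is zero at x = {x}")
        x_next = x - f(x) / slope       # Newton update
        if abs(x_next - x) < tol:       # successive guesses agree
            return x_next, n
        x = x_next
    raise RuntimeError(f"no convergence within {max_iter} iterations")


# Illustrative use: solve cos(x) = x by finding a root of f(x) = cos(x) - x
root, steps = newton(lambda x: math.cos(x) - x,
                     lambda x: -math.sin(x) - 1,
                     x0=1.0)
print(root, steps)
```

Stopping on the difference between successive guesses mirrors the last step of the list, and the iteration cap guards against the cases where the method never settles.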

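Quick example: Find a root of $f(x) = x^2 - 2$ (so you're approximating $\sqrt{2}$).

- $f'(x) = 2x$. Start with $x_0 = 1.5$.

- $x_1 = 1.5 - \frac{0.25}{3} \approx 1.41667$

- $x_2 = 1.41667 - \frac{0.00694}{2.83333} \approx 1.41422$

- The actual value of $\sqrt{2}$ is about $1.41421$. After just two iterations, you're accurate to four decimal places.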
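The arithmetic for an example of this kind can be checked in a few lines. This sketch assumes $f(x) = x^2 - 2$ (whose positive root is $\sqrt{2}$) and a starting guess of $x_0 = 1.5$. Both are illustrative choices.

```python
import math

f = lambda x: x**2 - 2   # assumed example function
df = lambda x: 2 * x     # its derivative

x = 1.5                  # assumed starting guess x_0
iterates = [x]
for _ in range(2):       # two Newton iterations
    x = x - f(x) / df(x)
    iterates.append(x)

# Print each iterate with its distance from sqrt(2)
for n, xn in enumerate(iterates):
    print(f"x_{n} = {xn:.6f}, error = {abs(xn - math.sqrt(2)):.2e}")
```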

Convergence and Limitations

When it works well:

- Newton's Method converges quadratically when your initial guess is close enough to a simple root, one where $f'(x) \neq 0$. Quadratic convergence means the number of correct digits roughly doubles with each iteration. That's fast.
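The digit-doubling claim can be checked numerically. This sketch assumes $f(x) = x^2 - 2$ with $x_0 = 1$ as an illustrative test case and tracks the error $e_n = |x_n - \sqrt{2}|$. Under quadratic convergence, the ratio $e_{n+1} / e_n^2$ should settle near a constant.

```python
import math

f = lambda x: x**2 - 2   # assumed test function
df = lambda x: 2 * x
root = math.sqrt(2)

x = 1.0                  # assumed starting guess
errors = [abs(x - root)]
for _ in range(4):
    x = x - f(x) / df(x)
    errors.append(abs(x - root))

# Ratio of each error to the square of the previous one
ratios = [errors[i + 1] / errors[i] ** 2 for i in range(len(errors) - 1)]

for e in errors:
    print(f"error = {e:.2e}")
for r in ratios:
    print(f"ratio = {r:.4f}")
```

For this particular function the ratio approaches $1/(2\sqrt{2}) \approx 0.354$. Only four iterations are used because the error reaches floating-point precision soon after.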

When it can fail:

- Initial guess too far from the root. The tangent line may point you away from the root you want, or toward a different root entirely.

- Derivative near zero at some iterate. If $f'(x_n) \approx 0$, the formula sends $x_{n+1}$ flying off to a huge value. Geometrically, a nearly flat tangent line crosses the x-axis very far away.

- Repeated roots. At a repeated root like $x = 0$ for $f(x) = x^2$, convergence slows down significantly (it drops from quadratic to linear).

- Oscillation. For some functions and starting points, the method can bounce back and forth without settling down.

- Non-differentiable functions. The method requires $f'(x)$ to exist. You can't directly apply it to functions like $f(x) = |x|$, which has no derivative at $x = 0$.
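Three of these failure modes are easy to reproduce. The functions and starting points below are illustrative assumptions chosen because they misbehave in a predictable way, and `newton_iterates` is a hypothetical helper that deliberately has no safeguards.

```python
def newton_iterates(f, df, x0, n):
    """Return [x_0, x_1, ..., x_n] from n Newton updates (no safeguards)."""
    xs = [x0]
    for _ in range(n):
        x = xs[-1]
        xs.append(x - f(x) / df(x))
    return xs


# Oscillation: f(x) = x^3 - 2x + 2 from x_0 = 0 bounces between 0 and 1
cycle = newton_iterates(lambda x: x**3 - 2 * x + 2,
                        lambda x: 3 * x**2 - 2, 0.0, 6)

# Repeated root: f(x) = x^2 has a double root at 0, and each step only halves x
slow = newton_iterates(lambda x: x**2, lambda x: 2 * x, 1.0, 6)

# Nearly flat tangent: f(x) = x^2 - 2 near x = 0 throws the next guess far away
flung = newton_iterates(lambda x: x**2 - 2, lambda x: 2 * x, 0.001, 1)

print(cycle)
print(slow)
print(flung)
```

In the repeated-root case the update simplifies to $x_{n+1} = x_n / 2$, so the error shrinks by a fixed factor per step instead of being squared.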

Interpreting Results

- Each iteration produces a new approximation $x_n$. The sequence $x_0, x_1, x_2, \ldots$ should converge toward the actual root.

- Check accuracy by evaluating $f(x_n)$. The closer it is to zero, the better your approximation.

- The difference $|x_{n+1} - x_n|$ gives a rough estimate of the error, though it's not a guarantee.

- If the method oscillates or the approximations diverge, your results aren't reliable. Try a different initial guess or verify the function meets the method's requirements.
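One way to apply these checks is to record the residual $|f(x_n)|$ and the step size $|x_n - x_{n-1}|$ at every iteration and see whether both shrink. The helper name `newton_report` and the test equation $\cos x = x$ are assumptions made for this sketch.

```python
import math


def newton_report(f, df, x0, n_iter=5):
    """Run n_iter Newton updates and return (n, x_n, residual, step) rows."""
    rows = []
    x = x0
    for n in range(1, n_iter + 1):
        x_new = x - f(x) / df(x)
        residual = abs(f(x_new))   # how close f(x_n) is to zero
        step = abs(x_new - x)      # rough error estimate
        rows.append((n, x_new, residual, step))
        x = x_new
    return rows


# Illustrative use on f(x) = cos(x) - x
rows = newton_report(lambda x: math.cos(x) - x,
                     lambda x: -math.sin(x) - 1,
                     x0=1.0)
for n, x_n, residual, step in rows:
    print(f"n={n}  x={x_n:.10f}  |f(x)|={residual:.2e}  step={step:.2e}")
```

If the residual and step columns stop shrinking or start alternating between values, that is the signal to try another starting guess.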

Related Methods

- The secant method is a variation that replaces the derivative with a difference quotient built from the two most recent points. Useful when computing $f'(x)$ is difficult.

- Fixed-point iteration is another root-finding approach that can work when Newton's Method isn't suitable, though it typically converges more slowly.
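Both alternatives can be sketched briefly for comparison. The function names, tolerances, and the test equation $\cos x = x$ (rewritten as $x = g(x)$ with $g = \cos$ for the fixed-point version) are illustrative assumptions.

```python
import math


def secant(f, x0, x1, tol=1e-10, max_iter=50):
    """Secant method: Newton's update with f' replaced by a difference quotient."""
    for n in range(1, max_iter + 1):
        f0, f1 = f(x0), f(x1)
        if f1 == f0:
            raise ZeroDivisionError("secant line is flat")
        x2 = x1 - f1 * (x1 - x0) / (f1 - f0)
        if abs(x2 - x1) < tol:
            return x2, n
        x0, x1 = x1, x2
    raise RuntimeError("secant method did not converge")


def fixed_point(g, x0, tol=1e-10, max_iter=200):
    """Iterate x_{n+1} = g(x_n) until successive values agree."""
    x = x0
    for n in range(1, max_iter + 1):
        x_next = g(x)
        if abs(x_next - x) < tol:
            return x_next, n
        x = x_next
    raise RuntimeError("fixed-point iteration did not converge")


root_s, n_s = secant(lambda x: math.cos(x) - x, 0.0, 1.0)
root_fp, n_fp = fixed_point(math.cos, 1.0)
print(root_s, n_s)
print(root_fp, n_fp)
```

On this example the fixed-point version needs noticeably more iterations than the secant version to reach the same tolerance, which matches the slower convergence described above.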