Overview of Parametric Spectral Estimation

Parametric spectral estimation models signals as outputs of linear systems driven by white noise. Instead of directly transforming data into the frequency domain (as non-parametric methods do), these methods assume a specific signal model, estimate its parameters from data, and then compute the power spectral density (PSD) from that model.

The payoff: higher spectral resolution and better noise rejection, particularly with short data records. When the assumed model fits the underlying signal well, parametric methods can substantially outperform periodogram-based approaches.

Principles of Parametric Modeling

The core assumption is that your signal can be represented by a linear system with a rational transfer function, characterized by a finite set of parameters. The workflow follows three steps:

- Choose a model structure (AR, MA, or ARMA) and a model order.

- Estimate the model parameters from observed data, typically via least-squares or maximum-likelihood methods.

- Compute the PSD by evaluating the model's frequency response, or equivalently, by computing the model's autocorrelation and applying the Wiener-Khinchin theorem.

The three model types differ in how they relate the white noise input to the observed signal.

Autoregressive (AR) Models

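An AR model expresses the current sample as a weighted sum of its $p$ most recent past values, plus a white noise driving term. The difference equation is:

$$x[n] = -\sum_{k=1}^{p} a_k\, x[n-k] + e[n]$$

where $a_k$ are the AR coefficients and $e[n]$ is white noise with variance $\sigma^2$. The model order $p$ controls how many past samples contribute to the prediction.

The corresponding PSD is an all-pole spectrum, with $f$ the normalized frequency in cycles per sample:

$$P_{\mathrm{AR}}(f) = \frac{\sigma^2}{\left|1 + \sum_{k=1}^{p} a_k e^{-j 2\pi f k}\right|^2}$$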

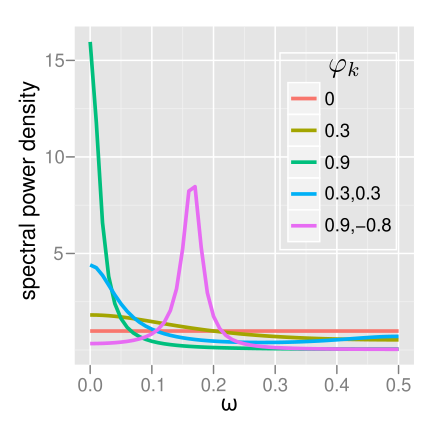

Because the PSD has only poles (no zeros), AR models are naturally suited to signals with sharp spectral peaks, like narrowband sinusoids or resonances. They're also the most widely used parametric model because their parameters can be estimated by solving linear equations.
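As a minimal sketch of how an all-pole spectrum produces a sharp peak, the snippet below evaluates the AR PSD for a hypothetical AR(2) resonator. The sign convention $x[n] = -\sum_k a_k x[n-k] + e[n]$, the pole radius 0.95, and the pole frequency 0.1 cycles/sample are all assumptions chosen for illustration; plain Python is used only to keep the example self-contained.

```python
import cmath
import math

def ar_psd(a, sigma2, freqs):
    """All-pole PSD: sigma2 / |1 + sum_k a_k exp(-j 2 pi f k)|^2.

    a      -- AR coefficients [a_1, ..., a_p], assuming the convention
              x[n] = -sum_k a_k x[n-k] + e[n]
    sigma2 -- variance of the white driving noise
    freqs  -- normalized frequencies in cycles per sample (0 .. 0.5)
    """
    psd = []
    for f in freqs:
        denom = 1.0 + sum(
            ak * cmath.exp(-2j * math.pi * f * (k + 1)) for k, ak in enumerate(a)
        )
        psd.append(sigma2 / abs(denom) ** 2)
    return psd

# Hypothetical AR(2) resonator: conjugate pole pair at radius r, frequency f0.
r, f0 = 0.95, 0.1
a = [-2.0 * r * math.cos(2.0 * math.pi * f0), r * r]

freqs = [i / 1000 for i in range(501)]  # grid from 0 to 0.5 cycles/sample
psd = ar_psd(a, 1.0, freqs)
peak_freq = freqs[psd.index(max(psd))]  # lands very close to f0
```

Moving the poles closer to the unit circle (larger `r`) narrows and raises the peak, which is why AR models of low order can represent narrowband components.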

Moving Average (MA) Models

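An MA model expresses the signal as a weighted sum of the current and past white noise samples:

$$x[n] = \sum_{k=0}^{q} b_k\, e[n-k]$$

where $b_k$ are the MA coefficients (conventionally $b_0 = 1$), $q$ is the model order, and $e[n]$ is white noise with variance $\sigma^2$. The PSD is an all-zero spectrum:

$$P_{\mathrm{MA}}(f) = \sigma^2 \left|\sum_{k=0}^{q} b_k e^{-j 2\pi f k}\right|^2$$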

MA models are well suited to signals with spectral nulls (deep valleys in the spectrum). However, estimating MA parameters requires solving nonlinear equations, which makes them less convenient than AR models in practice.
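For an MA model, the two routes to the PSD mentioned in the workflow (evaluating the frequency response, or transforming the model's autocorrelation via the Wiener-Khinchin theorem) can be checked against each other in a few lines. This is a sketch only; the coefficients `b` are hypothetical values picked for illustration.

```python
import cmath
import math

def ma_psd_freq_response(b, sigma2, f):
    """PSD as sigma2 * |B(f)|^2, with B(f) = sum_k b_k exp(-j 2 pi f k)."""
    B = sum(bk * cmath.exp(-2j * math.pi * f * k) for k, bk in enumerate(b))
    return sigma2 * abs(B) ** 2

def ma_autocorr(b, sigma2):
    """Exact autocorrelation of an MA(q) process for lags 0..q (zero beyond q)."""
    q = len(b) - 1
    return [
        sigma2 * sum(b[k] * b[k + m] for k in range(q - m + 1))
        for m in range(q + 1)
    ]

def ma_psd_wiener_khinchin(b, sigma2, f):
    """PSD as the Fourier transform of the (real, even) autocorrelation."""
    r = ma_autocorr(b, sigma2)
    return r[0] + 2.0 * sum(
        r[m] * math.cos(2.0 * math.pi * f * m) for m in range(1, len(r))
    )

b = [1.0, -0.9, 0.5]  # hypothetical MA(2) coefficients
check_freqs = [0.0, 0.13, 0.25, 0.5]
max_diff = max(
    abs(ma_psd_freq_response(b, 1.0, f) - ma_psd_wiener_khinchin(b, 1.0, f))
    for f in check_freqs
)  # the two routes agree to rounding error
```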

Autoregressive Moving Average (ARMA) Models

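ARMA models combine both AR and MA components, giving the most flexible representation:

$$x[n] = -\sum_{k=1}^{p} a_k\, x[n-k] + \sum_{k=0}^{q} b_k\, e[n-k]$$

where $a_k$ are the AR coefficients, $b_k$ are the MA coefficients (with $b_0 = 1$), and $e[n]$ is white noise with variance $\sigma^2$. The PSD has both poles and zeros:

$$P_{\mathrm{ARMA}}(f) = \sigma^2\, \frac{\left|\sum_{k=0}^{q} b_k e^{-j 2\pi f k}\right|^2}{\left|1 + \sum_{k=1}^{p} a_k e^{-j 2\pi f k}\right|^2}$$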

ARMA models can represent spectra with both peaks and nulls using fewer total parameters than a pure AR or MA model would need. The trade-off is that parameter estimation is more complex, since the joint estimation of AR and MA coefficients is a nonlinear problem.
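A short sketch of a pole-zero spectrum: the hypothetical ARMA(2,2) model below places a pole pair near 0.1 cycles/sample and a zero pair near 0.3 cycles/sample, so four coefficients give one peak and one null. The radii, frequencies, and the sign convention for `a` (denominator $1 + \sum_k a_k e^{-j2\pi fk}$) are assumptions made for this illustration.

```python
import cmath
import math

def arma_psd(a, b, sigma2, f):
    """Rational PSD sigma2 * |B(f)|^2 / |A(f)|^2 at normalized frequency f.

    a -- AR coefficients [a_1..a_p], with A(f) = 1 + sum_k a_k exp(-j 2 pi f k)
    b -- MA coefficients [b_0..b_q], with B(f) = sum_k b_k exp(-j 2 pi f k)
    """
    z_inv = cmath.exp(-2j * math.pi * f)
    A = 1.0 + sum(ak * z_inv ** (k + 1) for k, ak in enumerate(a))
    B = sum(bk * z_inv ** k for k, bk in enumerate(b))
    return sigma2 * abs(B) ** 2 / abs(A) ** 2

def conjugate_pair(radius, freq):
    """Coefficients [c1, c2] of 1 + c1 z^-1 + c2 z^-2 with roots radius*exp(+-j 2 pi freq)."""
    return [-2.0 * radius * math.cos(2.0 * math.pi * freq), radius * radius]

a = conjugate_pair(0.95, 0.1)          # pole pair -> spectral peak near 0.1
b = [1.0] + conjugate_pair(0.98, 0.3)  # zero pair -> spectral null near 0.3

peak = arma_psd(a, b, 1.0, 0.1)
null = arma_psd(a, b, 1.0, 0.3)
```

A pure AR model would need many poles to approximate the null, and a pure MA model many zeros to approximate the peak, which is the parameter saving the paragraph above describes.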

Parametric Spectral Estimation Techniques

Several methods exist for estimating AR model parameters. They differ in what they optimize, whether they guarantee model stability, and how they perform on short records.

Yule-Walker Method

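The Yule-Walker method estimates AR coefficients by solving linear equations derived from the sample autocorrelation function:

$$\begin{bmatrix} \hat r[0] & \hat r[1] & \cdots & \hat r[p-1] \\ \hat r[1] & \hat r[0] & \cdots & \hat r[p-2] \\ \vdots & \vdots & \ddots & \vdots \\ \hat r[p-1] & \hat r[p-2] & \cdots & \hat r[0] \end{bmatrix} \begin{bmatrix} a_1 \\ a_2 \\ \vdots \\ a_p \end{bmatrix} = -\begin{bmatrix} \hat r[1] \\ \hat r[2] \\ \vdots \\ \hat r[p] \end{bmatrix}$$

where $\hat r[m]$ is the sample autocorrelation at lag $m$ and the sign follows the convention $x[n] = -\sum_k a_k x[n-k] + e[n]$. This system has Toeplitz structure, so it can be solved efficiently using the Levinson-Durbin recursion in $O(p^2)$ operations.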

Strengths: Always produces a stable model (all poles inside the unit circle). Computationally efficient.

Weakness: Uses a biased autocorrelation estimate, which can limit spectral resolution, particularly for short data records or high model orders.
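The Yule-Walker procedure can be sketched as a biased autocorrelation estimate followed by the Levinson-Durbin recursion. This is a minimal illustration in plain Python, not a reference implementation; the function names, the AR(1) test signal, and the sign convention $x[n] = -\sum_k a_k x[n-k] + e[n]$ are assumptions.

```python
import random

def autocorr_biased(x, max_lag):
    """Biased sample autocorrelation (divide by N at every lag)."""
    n = len(x)
    return [sum(x[i] * x[i + m] for i in range(n - m)) / n for m in range(max_lag + 1)]

def levinson_durbin(r, p):
    """Solve the order-p Yule-Walker equations for autocorrelation r[0..p].

    Returns (a, err, refl): AR coefficients [a_1..a_p], the prediction error
    variance, and the reflection coefficient found at each stage.
    """
    a, err, refl = [], r[0], []
    for m in range(1, p + 1):
        acc = r[m] + sum(a[k] * r[m - 1 - k] for k in range(m - 1))
        k_m = -acc / err
        # Order update: a_i <- a_i + k_m * a_(m-i), then append a_m = k_m.
        a = [a[i] + k_m * a[m - 2 - i] for i in range(m - 1)] + [k_m]
        err *= 1.0 - k_m * k_m
        refl.append(k_m)
    return a, err, refl

def yule_walker(x, p):
    mean = sum(x) / len(x)
    centered = [v - mean for v in x]
    return levinson_durbin(autocorr_biased(centered, p), p)

# Sanity check on the exact autocorrelation of x[n] = 0.5 x[n-1] + e[n]
# (scaled so r[0] = 1): expect a = [-0.5, 0] and err = 0.75.
a_exact, err_exact, _ = levinson_durbin([1.0, 0.5, 0.25], 2)

# Estimate from a short simulated record of the same process.
random.seed(0)
x = [0.0]
for _ in range(199):
    x.append(0.5 * x[-1] + random.gauss(0.0, 1.0))
a_hat, err_hat, refl = yule_walker(x, 2)
```

Because the biased autocorrelation estimate is positive definite, every reflection coefficient in `refl` has magnitude below one, which is where the stability guarantee comes from.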

Burg Method

The Burg method takes a different approach: it directly minimizes the sum of forward and backward linear prediction errors in a least-squares sense, without first computing the autocorrelation.

The algorithm estimates reflection coefficients (also called partial correlation coefficients) at each stage of a lattice filter, then converts them to AR coefficients. Because the reflection coefficients are constrained to have magnitude less than one, the resulting model is always stable.

Strengths: Better spectral resolution than Yule-Walker, especially for short records. Guaranteed stable. Computationally efficient via its recursive lattice structure.

Weakness: Can exhibit line-splitting artifacts, where a single spectral peak splits into two spurious peaks. This tends to occur when the data record is very short and the true frequency is near 0 or the Nyquist frequency.
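A minimal sketch of the Burg recursion for real-valued data is shown below, assuming the convention $x[n] = -\sum_k a_k x[n-k] + e[n]$. The test signal is a noiseless sinusoid whose length was deliberately chosen to span a whole number of periods, an idealized case in which the estimate is essentially exact; real data will not behave this cleanly.

```python
import math

def burg(x, p):
    """Burg AR estimate. Returns (a, err, refl) with a = [a_1..a_p]."""
    n = len(x)
    f = list(x)  # forward prediction errors
    b = list(x)  # backward prediction errors
    a, refl = [], []
    err = sum(v * v for v in x) / n
    for m in range(1, p + 1):
        # Reflection coefficient minimizing forward + backward error energy.
        num = sum(f[i] * b[i - 1] for i in range(m, n))
        den = sum(f[i] ** 2 + b[i - 1] ** 2 for i in range(m, n))
        k = -2.0 * num / den
        # Lattice update, high index first so b[i-1] is still the old value.
        for i in range(n - 1, m - 1, -1):
            f_old, b_old = f[i], b[i - 1]
            f[i] = f_old + k * b_old
            b[i] = b_old + k * f_old
        # Levinson-style conversion from reflection to AR coefficients.
        a = [a[i] + k * a[m - 2 - i] for i in range(m - 1)] + [k]
        err *= 1.0 - k * k
        refl.append(k)
    return a, err, refl

# Hypothetical test signal: 51 samples of a sinusoid at 0.1 cycles/sample.
f0 = 0.1
x = [math.cos(2.0 * math.pi * f0 * t + 0.3) for t in range(51)]
a, err, refl = burg(x, 2)

# Frequency implied by the angle of the estimated conjugate pole pair.
f_est = math.acos(-a[0] / (2.0 * math.sqrt(a[1]))) / (2.0 * math.pi)
```

By the Cauchy-Schwarz inequality the ratio defining `k` never exceeds one in magnitude, so no explicit clipping is needed; in this noiseless case the second reflection coefficient sits at the boundary and the poles land on the unit circle.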

Covariance Method

The covariance method minimizes only the forward prediction error in a least-squares sense. It solves a set of normal equations based on the sample covariance matrix of the data (which is not Toeplitz in general).

Strengths: Can provide better resolution than Yule-Walker because it avoids the biased autocorrelation estimate.

Weakness: Does not guarantee a stable AR model. More computationally intensive since the covariance matrix lacks Toeplitz structure, so Levinson-Durbin cannot be used.

Modified Covariance Method

This method minimizes the average of forward and backward prediction errors, combining the strengths of the Burg and covariance approaches.

Strengths: Generally provides the best spectral resolution among the four methods. Reduces bias compared to the standard covariance method. Less susceptible to line-splitting than Burg.

Weakness: Still does not guarantee model stability. Highest computational cost of the four methods.
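The covariance and modified covariance methods differ only in which prediction errors enter the least-squares fit, so both can be sketched with one routine. This is an illustrative plain-Python version for real-valued data under the convention $x[n] = -\sum_k a_k x[n-k] + e[n]$; the small Gaussian-elimination helper and the sinusoidal test signal are assumptions added to keep the example self-contained.

```python
import math

def solve_linear(A, y):
    """Gaussian elimination with partial pivoting for a small dense system."""
    n = len(y)
    M = [row[:] + [y[i]] for i, row in enumerate(A)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    sol = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = M[i][n] - sum(M[i][j] * sol[j] for j in range(i + 1, n))
        sol[i] = s / M[i][i]
    return sol

def covariance_ar(x, p, modified=False):
    """Least-squares AR fit: forward errors only, or forward + backward if modified."""
    n = len(x)

    def c(j, k):
        # Forward terms: products of samples lagged by j and k behind time t.
        total = sum(x[t - j] * x[t - k] for t in range(p, n))
        if modified:
            # Backward terms: the same products taken ahead of time t.
            total += sum(x[t + j] * x[t + k] for t in range(n - p))
        return total

    A = [[c(j, k) for k in range(1, p + 1)] for j in range(1, p + 1)]
    y = [-c(j, 0) for j in range(1, p + 1)]
    a = solve_linear(A, y)  # normal equations; the matrix is not Toeplitz
    err = c(0, 0) + sum(a[k - 1] * c(0, k) for k in range(1, p + 1))
    count = (n - p) * (2 if modified else 1)
    return a, err / count

# Hypothetical test: 20 samples of a noiseless sinusoid at 0.1 cycles/sample.
x = [math.cos(2.0 * math.pi * 0.1 * t + 0.3) for t in range(20)]
a_cov, err_cov = covariance_ar(x, 2)
a_mod, err_mod = covariance_ar(x, 2, modified=True)
expected = [-2.0 * math.cos(2.0 * math.pi * 0.1), 1.0]
```

Nothing in the normal equations constrains the pole locations, which is why neither variant guarantees a stable model.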

Model Order Selection

Choosing the right model order is one of the most critical decisions in parametric spectral estimation.

- Order too low: The PSD estimate is overly smooth and may fail to resolve closely spaced spectral components.

- Order too high: The PSD estimate overfits the data, introducing spurious peaks that don't correspond to real signal features.

Three widely used criteria balance goodness of fit against model complexity. In each case, you compute the criterion for a range of candidate orders and pick the order that minimizes it.

Akaike Information Criterion (AIC)

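$$\mathrm{AIC}(p) = -2\ln \hat{L} + 2p$$

where $p$ is the model order and $\hat{L}$ is the maximum likelihood of the data given the model. For Gaussian AR models, this simplifies to a function of $N$, $p$, and the estimated prediction error variance: up to an additive constant, $\mathrm{AIC}(p) = N \ln \hat\sigma_p^2 + 2p$, where $N$ is the number of samples.

The AIC tends to overestimate the true model order, especially for small sample sizes $N$, because its penalty term grows linearly with $p$ but doesn't scale with $N$.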

Minimum Description Length (MDL)

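$$\mathrm{MDL}(p) = -2\ln \hat{L} + p \ln N$$

where $p$ is the model order, $\hat{L}$ is the maximized likelihood, and $N$ is the number of samples. The MDL penalizes model complexity more heavily than the AIC (the per-parameter penalty grows as $\ln N$ rather than being constant). This makes it more conservative, favoring parsimonious models and reducing the risk of overfitting. For large $N$, MDL is consistent, meaning it converges to the true order.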

Final Prediction Error (FPE)

$$\mathrm{FPE}(p) = \hat\sigma_p^2\, \frac{N + p + 1}{N - p - 1}$$

where $\hat\sigma_p^2$ is the estimated prediction error variance for order $p$ and $N$ is the number of samples. The ratio $(N + p + 1)/(N - p - 1)$ acts as a penalty that increases with model order. FPE is asymptotically equivalent to AIC, so it shares the tendency to slightly overestimate the order for small $N$, but it provides a useful alternative when you want to think in terms of prediction performance.
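The three criteria can be compared side by side with a few lines of code. The sketch below assumes the Gaussian AR forms $N \ln \hat\sigma_p^2 + 2p$ (AIC), $N \ln \hat\sigma_p^2 + p \ln N$ (MDL), and $\hat\sigma_p^2 (N+p+1)/(N-p-1)$ (FPE); the error variances are made-up numbers chosen only to show how the penalties interact, not measurements.

```python
import math

def order_criteria(sigma2, n):
    """Return (p, AIC, MDL, FPE) for each candidate order.

    sigma2 -- sigma2[p] is the prediction error variance at order p (p = 0..P)
    n      -- number of data samples
    """
    rows = []
    for p, s2 in enumerate(sigma2):
        aic = n * math.log(s2) + 2 * p
        mdl = n * math.log(s2) + p * math.log(n)
        fpe = s2 * (n + p + 1) / (n - p - 1)
        rows.append((p, aic, mdl, fpe))
    return rows

def best_order(rows, column):
    """Order that minimizes the chosen criterion (1 = AIC, 2 = MDL, 3 = FPE)."""
    return min(rows, key=lambda row: row[column])[0]

# Hypothetical error variances for orders 0..4: a large drop at order 1,
# then only marginal improvements.
sigma2 = [4.0, 1.0, 0.97, 0.96, 0.955]
rows = order_criteria(sigma2, n=100)

aic_order = best_order(rows, 1)
mdl_order = best_order(rows, 2)
fpe_order = best_order(rows, 3)
```

With these illustrative numbers AIC and FPE both accept the small improvement at order 2, while the heavier MDL penalty stops at order 1, mirroring the behavior described above.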

Advantages vs Disadvantages of Parametric Methods

High Resolution vs Model Order Sensitivity

Parametric methods excel at resolving closely spaced spectral components, particularly from short data records where non-parametric methods suffer from wide mainlobes. However, this resolution advantage depends heavily on selecting the correct model order. Too low and you miss components; too high and you hallucinate peaks that aren't there.

Computational Efficiency vs Model Assumptions

For a given resolution target, parametric methods are typically cheaper computationally than non-parametric alternatives, especially with long records. The catch is that they assume the signal actually follows the chosen model (AR, MA, or ARMA). When the real signal violates this assumption, the PSD estimate can be significantly biased. Non-parametric methods make no such structural assumptions and degrade more gracefully under model mismatch.

Applications of Parametric Spectral Estimation

Speech Analysis and Synthesis

The vocal tract acts approximately as an all-pole filter, making AR models a natural fit. Linear predictive coding (LPC) uses AR parameter estimation to extract vocal tract resonances (formants) from speech frames. This is the foundation of many speech compression, synthesis, and recognition systems.

Radar and Sonar Signal Processing

AR models are used to estimate Doppler spectra from radar returns, providing high-resolution velocity estimates of moving targets. ARMA models can represent range profiles, helping to resolve closely spaced targets and suppress clutter. The ability to work with short coherent processing intervals makes parametric methods especially valuable here.

Biomedical Signal Analysis

AR spectral estimation is widely used for EEG analysis, where short stationary segments and the need to track spectral changes over time favor parametric approaches. Frequency-band power ratios estimated from AR spectra help identify sleep stages, detect seizures, and characterize cognitive states. ARMA models are also applied to ECG signals for heart rate variability analysis and arrhythmia detection.

Comparison of Parametric vs Non-Parametric Methods

Spectral Resolution and Sidelobe Levels

Parametric methods achieve higher resolution because they don't suffer from the windowing effects that limit non-parametric methods. There are no sidelobes in the traditional sense, since the PSD is computed from a model rather than a windowed FFT. The downside is that model mismatch or incorrect order selection can produce artifacts that are harder to identify than the predictable sidelobe patterns of windowed periodograms.

Robustness to Noise and Model Mismatch

Non-parametric methods (periodogram, Welch) are more robust when you don't know the signal's underlying structure. Their performance degrades gradually with decreasing SNR. Parametric methods can break down more abruptly: if the SNR is low or the model is wrong, the estimated PSD may show large biases or spurious features with no obvious warning signs.

Computational Complexity and Data Requirements

Parametric methods scale as roughly $O(Np)$ or $O(Np^2)$ depending on the algorithm, where $N$ is the data length and $p$ is the model order. Non-parametric FFT-based methods scale as $O(N \log N)$. For small model orders ($p \ll N$), the parametric cost is comparable and can be lower.

More importantly, parametric methods need far fewer data samples to achieve a given resolution. A Welch estimate might need thousands of samples to resolve two closely spaced tones, while an AR model of modest order can do it with a few hundred. This data efficiency is the primary practical advantage in applications where observation time is limited.