Basics of M/M/1 queues

An M/M/1 queue models a system with a single server where customers arrive randomly and service takes a random amount of time. The name itself encodes the assumptions: the first "M" means Markovian (memoryless) arrivals, the second "M" means Markovian service times, and the "1" means one server.

Arrival process

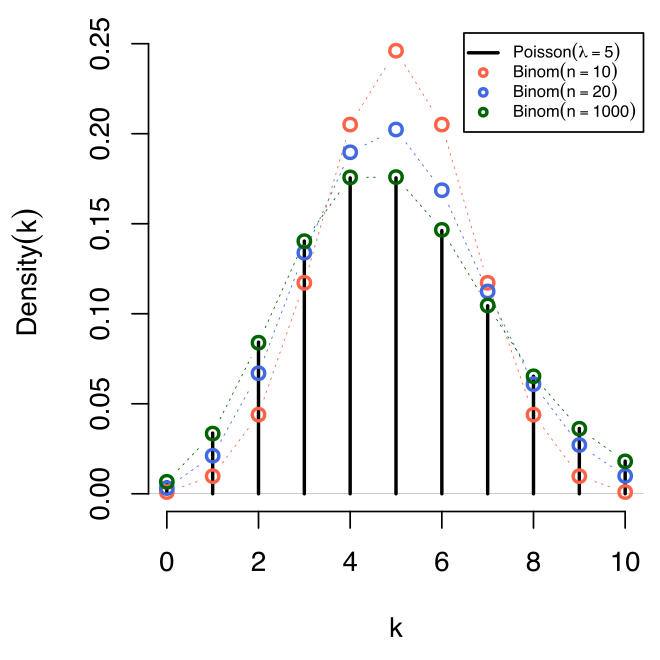

Customers arrive according to a Poisson process with rate (customers per unit time). This means:

- Inter-arrival times are independent and exponentially distributed with mean .

- Arrivals are independent of each other and of the current state of the system.

- In any small interval , the probability of exactly one arrival is approximately .

Service time distribution

Each customer's service time is exponentially distributed with rate , so the mean service time is . The memoryless property of the exponential distribution is what makes the analysis tractable: no matter how long a service has been in progress, the remaining time has the same distribution. This is precisely why the system's future behavior depends only on the current number of customers, making it a continuous-time Markov chain.

Traffic intensity

Traffic intensity is defined as:

This ratio captures how fast work arrives relative to how fast the server can handle it. For the queue to reach a steady state, you need . If , the server can't keep up with arrivals and the queue grows without bound.

Think of as the long-run fraction of time the server is busy. At , the server is idle only 10% of the time, and congestion becomes severe.

Steady-state probabilities

Once the system reaches steady state, the probability of finding exactly customers in the system is:

This is a geometric distribution. A few things to note:

- is the probability the system is empty (and the server is idle).

- The probabilities decay exponentially in , so very long queues are possible but increasingly unlikely when is well below 1.

- All performance measures can be derived from this distribution.

Performance measures of M/M/1 queues

These formulas let you quantify how congested a system is and how long customers wait. They all follow from the steady-state distribution above.

Server utilization

This is the fraction of time the server is busy. For example, if customers/hour and customers/hour, then , meaning the server is busy 80% of the time.

Expected number in system

This counts everyone present: the customer in service plus those waiting. With , you get customers on average. Notice how grows rapidly as .

Expected number in queue

This counts only the customers waiting (not the one being served). The relationship is worth remembering.

Expected waiting time in system

This is the total time from arrival to departure (waiting plus service). With and , hour = 30 minutes.

Expected waiting time in queue

This is the time spent waiting before service begins. Note that , which makes sense: total time in system equals time waiting plus time being served.

Little's Law connects these measures: and . These relationships hold for any stable queue, not just M/M/1. They're extremely useful for converting between "number" measures and "time" measures.

Variations of M/M/1 queues

The basic M/M/1 model assumes infinite capacity and an infinite customer population. Several variations relax these assumptions.

M/M/1/K queues with limited capacity

The system can hold at most customers (including the one in service). When the system is full, new arrivals are turned away and lost. Because arrivals are blocked at capacity, this system can be stable even when . The steady-state probabilities become:

(When , each state is equally likely: .)

M/M/1/K/K queues with limited population

Here the total customer population is finite ( customers). A customer who is already in the system can't generate another arrival. This is useful for modeling machine repair problems: you have machines, and only machines that are running can break down. The effective arrival rate depends on how many customers are already in the system, which changes the balance equations.

M/M/1 queues with balking

Customers who arrive and see a long queue may decide not to join at all. Typically, the probability of joining decreases as the number of customers already present increases. This reduces the effective arrival rate and lowers congestion compared to the standard model.

M/M/1 queues with reneging

Customers join the queue but leave if they wait too long. Each customer's patience is usually modeled as an exponential random variable. Reneging also reduces the effective load on the system but requires modified balance equations to compute steady-state probabilities.

Basics of M/M/c queues

An M/M/c queue extends the M/M/1 model to identical servers working in parallel, all fed by a single queue. Customers are served first-come, first-served by whichever server becomes free next.

Arrival and service time distributions

The assumptions are the same as M/M/1:

- Arrivals follow a Poisson process with rate .

- Each server has independent, exponentially distributed service times with rate .

The combined maximum service rate of the system is .

Traffic intensity for M/M/c

This is the utilization per server. Stability again requires , meaning . It's common to also define (the offered load in Erlangs), so .

Steady-state probabilities for M/M/c

The probability of an empty system is:

where . The state probabilities are then:

- For :

- For :

The key difference from M/M/1: when , some servers are idle and the effective service rate is (not ). When , all servers are busy and the effective service rate is .

Performance measures of M/M/c queues

Probability of waiting (Erlang C formula)

The probability that an arriving customer finds all servers busy and must wait is:

This is the Erlang C formula, one of the most widely used results in queueing theory. Call centers, for instance, use it daily to staff agents.

Expected number in queue

Expected number in system

The term accounts for the average number of customers currently in service.

Expected waiting time in queue

By Little's Law:

Expected waiting time in system

Numerical example: Suppose customers/hour, customers/hour, and servers. Then and . You'd compute , then , and from there all the performance measures follow. Compare this to an M/M/1 queue with (same total service capacity): the M/M/c system will generally have shorter queues because pooling servers is more efficient than having one fast server.

Designing M/M/c queueing systems

Determining the number of servers needed

The typical approach:

- Estimate and from data.

- Pick a performance target (e.g., "average wait in queue under 2 minutes" or "at most 20% of customers wait").

- Compute , , or for until the target is met.

- The smallest satisfying the target is your answer.

Since the formulas involve factorials and sums, this is typically done computationally or with Erlang C tables.

Cost analysis and optimization

A common formulation balances two costs:

- Server cost: per unit time (wages, equipment).

- Waiting cost: per unit time (customer dissatisfaction, lost productivity).

The total cost is evaluated for different values of , and you pick the that minimizes it. Because decreases with but server cost increases, there's usually a clear optimum.

Quality of service requirements

In practice, systems are designed around service level agreements (SLAs). Common targets include:

- A specified percentage of customers served within a target time (e.g., 80% of calls answered within 20 seconds).

- Maximum acceptable probability of waiting.

- Maximum acceptable average wait.

The M/M/c model provides the formulas to check whether a given configuration meets these targets.

Capacity planning considerations

Arrival rates fluctuate over time (think of a call center with morning peaks). Capacity planning involves:

- Forecasting demand at different times of day or seasons.

- Sizing the system for peak loads or using time-varying staffing.

- Building in headroom so that small demand increases don't push the system past .

M/M/1 vs M/M/c queues

Differences in system behavior

The fundamental difference is the number of servers. In M/M/1, a single server handles everything. In M/M/c, servers share the load from one queue. This pooling effect is powerful: combining resources into a shared pool is more efficient than running separate single-server queues.

For example, two separate M/M/1 queues each with and ( each) will have per queue, so . A single M/M/2 queue with and has but will be significantly less than 8 due to the pooling effect.

Performance comparison

For the same total service capacity ( equal in both cases):

- M/M/c queues have shorter expected queue lengths ().

- M/M/c queues have shorter expected waiting times ().

- The probability of waiting () is lower in M/M/c.

The advantage of pooling grows as utilization increases. At low , the difference is small. At high , pooling makes a dramatic difference.

Suitability for different applications

- M/M/1 fits systems with a single resource: a single checkout lane, a single-processor system, a single communication link.

- M/M/c fits systems with parallel resources: a bank with multiple tellers, a hospital emergency department, a multi-core server.

Transitioning from M/M/1 to M/M/c

When demand grows beyond what one server can handle ( approaching 1), adding servers is the natural response. The decision should be based on:

- Current performance metrics and how close is to 1.

- Projected demand growth.

- The cost of additional servers versus the cost of degraded service.

Adding even one server (going from M/M/1 to M/M/2) can produce a large improvement in waiting times when the system is heavily loaded.