Discrete probability distributions describe the likelihood of outcomes for random variables that take on countable values. They're the building blocks for modeling random events in stochastic processes, and nearly every topic in this course builds on them.

This section covers the key discrete distributions (Bernoulli, binomial, geometric, Poisson, and others), along with the core machinery: probability mass functions, cumulative distribution functions, expected value, variance, moment generating functions, and joint distributions.

Types of discrete distributions

Discrete distributions model scenarios where a random variable can only take on isolated, countable values (integers, for instance). In stochastic processes, they show up constantly: modeling arrivals to a queue, counting defects in manufacturing, tracking failures in a reliability system.

The distributions you need to know for this unit:

- Bernoulli and Binomial (success/failure trials)

- Geometric and Negative Binomial (waiting for successes)

- Poisson (counting events over an interval)

- Hypergeometric (sampling without replacement)

Each has a specific PMF, expected value, and variance that you should be comfortable deriving and applying.

Probability mass functions

The probability mass function (PMF) is the primary way to specify a discrete distribution. It tells you the probability that a random variable equals each of its possible values.

Definition of PMF

A PMF assigns a probability to every possible value of a discrete random variable . It's written as , and it answers the question: "What's the probability that equals exactly ?"

Properties of valid PMFs

For a PMF to be valid, two conditions must hold:

- Non-negativity: for all

- Normalization:

If either condition fails, you don't have a legitimate probability distribution.

Cumulative distribution functions

The cumulative distribution function (CDF) gives a running total of probability. It's especially useful when you need to compute probabilities involving inequalities, like .

Definition of CDF

The CDF of a discrete random variable is defined as:

It's a non-decreasing, right-continuous step function. As , , and as , .

Relationship between PMF and CDF

You can go back and forth between the two:

- CDF from PMF: Sum up PMF values:

- PMF from CDF: Take differences at jump points:

For integer-valued random variables, this simplifies to .

Expected value

The expected value summarizes where a distribution is "centered." Think of it as the long-run average if you could repeat the random experiment infinitely many times.

Definition of expected value

For a discrete random variable :

Each possible value gets weighted by its probability. Values that are more likely pull the expected value toward them.

Linearity of expectation

This is one of the most useful properties in probability:

This holds regardless of whether and are independent. That's what makes it so powerful. You can also pull out constants: .

Variance and standard deviation

Variance measures how spread out a distribution is around its mean. Two distributions can have the same expected value but very different variances.

Definition of variance

A computationally easier form that's often faster to use:

Properties of variance

- Scaling: (the square matters; variance is not linear)

- Shifting: (adding a constant doesn't change spread)

- Sum of independents: If and are independent,

Note the independence requirement for the sum rule. Unlike linearity of expectation, this does not hold for dependent variables. For dependent variables, you need the covariance term: .

Standard deviation vs variance

The standard deviation is . Its main advantage is that it's in the same units as itself, making it more interpretable. Variance is in squared units, which is useful for calculations but harder to reason about directly.

Moment generating functions

Moment generating functions (MGFs) encode the entire distribution of a random variable into a single function. They're especially handy for finding moments and for working with sums of independent variables.

Definition of MGF

The MGF exists if this sum converges in some neighborhood of . When it exists, it uniquely determines the distribution.

Properties and applications of MGFs

- Extracting moments: The -th moment is , the -th derivative evaluated at . So and .

- Sums of independents: If and are independent, . This makes MGFs a clean way to derive the distribution of a sum.

- Identifying distributions: If you compute an MGF and recognize it as the MGF of a known distribution, you've identified the distribution of your random variable.

Common discrete distributions

Bernoulli and binomial distributions

A Bernoulli random variable models a single trial with two outcomes: success () with probability , or failure () with probability .

- PMF: for

- ,

The Binomial distribution counts the number of successes in independent Bernoulli trials.

- PMF: for

- ,

Applications include quality control (number of defective items in a batch) and survey sampling (number of respondents choosing a particular option).

Geometric and negative binomial distributions

The Geometric distribution models the number of trials until the first success.

- PMF: for

- ,

Be careful: some textbooks define the geometric as the number of failures before the first success, which shifts the support to and changes the PMF to . Check which convention your course uses.

The Negative Binomial generalizes this to the number of trials until the -th success.

- PMF: for

- ,

These distributions are natural models for waiting times in stochastic processes.

Poisson distribution

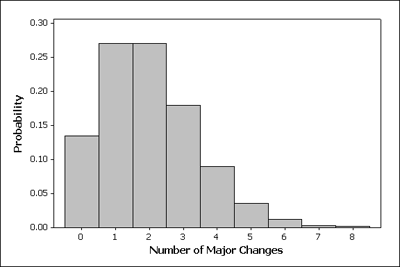

The Poisson distribution models the count of events in a fixed interval, given that events occur independently at a constant average rate .

- PMF: for

- ,

The fact that the mean equals the variance is a distinctive feature. Typical applications: customer arrivals per hour, number of typos per page, radioactive decay events per second.

The Poisson also arises as a limit of the binomial when is large, is small, and stays moderate.

Hypergeometric distribution

The Hypergeometric distribution models successes in draws from a finite population of size containing successes, without replacement.

- PMF:

- ,

The key difference from the binomial: draws are dependent because there's no replacement. As with , the hypergeometric converges to the binomial.

Joint distributions

When you're working with two or more random variables simultaneously, you need joint distributions to capture how they behave together.

Joint probability mass functions

The joint PMF of and is , giving the probability that and at the same time.

Validity requirements are the same as for single-variable PMFs: non-negativity, and the double sum over all pairs must equal 1.

Marginal and conditional distributions

Marginal PMFs recover the distribution of a single variable by summing out the other:

Conditional PMFs describe one variable given a specific value of the other:

This is only defined when .

Independent vs dependent random variables

and are independent if and only if their joint PMF factors into the product of marginals for every pair of values:

Equivalently, independence means conditioning on one variable doesn't change the distribution of the other: .

If this factorization fails for even a single pair , the variables are dependent, and you'll need the full joint PMF (or covariance information) to analyze their combined behavior.

Sums of discrete random variables

Adding random variables together comes up constantly in stochastic processes (total service time, aggregate demand, cumulative arrivals, etc.).

Convolution formula for PMFs

For independent random variables and , the PMF of is:

This is called the convolution of the two PMFs. You iterate over all values that can take and accumulate the products.

For sums of three or more independent variables, apply convolution iteratively (or use MGFs, which is usually cleaner).

Distribution of sum of independent variables

Certain families of distributions are "closed" under summation of independent variables:

- Sum of i.i.d. Bernoulli() variables Binomial()

- Sum of independent Poisson() and Poisson() Poisson()

- Sum of independent Negative Binomial() and Negative Binomial() Negative Binomial()

Recognizing these closure properties saves you from doing convolution by hand.

Transformations of discrete random variables

Transformations create new random variables as functions of existing ones. This is how you go from a model of raw data to a model of some derived quantity.

PMF and CDF under transformations

Given where has a known PMF, the PMF of is:

You collect all values of that map to the same and add their probabilities. If is one-to-one, each sum has only one term.

Functions of multiple discrete variables

For a transformation :

Once you have the joint PMF of , you can extract marginals and conditionals using the standard formulas from the joint distributions section.

Applications and examples

Modeling real-world scenarios

- Queueing systems: Customer arrivals are often modeled as Poisson; the number of customers served in a time window can be binomial or geometric depending on the service mechanism.

- Inventory management: Daily demand for a product might follow a Poisson distribution with rate units/day, which lets you set reorder points and safety stock levels.

- Reliability engineering: The number of component failures in a system over a fixed period can be modeled as Poisson or binomial, depending on whether components are independent and identical.

Solving problems using discrete distributions

- Quality control: Use the hypergeometric distribution to find the probability that a sample of 10 items from a lot of 200 contains 2 or more defectives.

- Risk assessment: Model the number of insurance claims per month as Poisson() to estimate the probability of exceeding a threshold.

- Network analysis: Node degree distributions in random graphs often follow Poisson (Erdős–Rényi model) or power-law distributions, which determine connectivity and resilience properties.

The common thread: pick the distribution whose assumptions match your scenario, then use PMFs, CDFs, expected values, and variances to answer quantitative questions about the system.