Definition of Stationary Distributions

A stationary distribution for a continuous-time Markov chain (CTMC) is a probability distribution over the state space that remains unchanged as the process evolves. If the chain is "in" its stationary distribution at some moment, it stays in that distribution for all future times.

Formally, a probability vector $\pi$ is stationary for a CTMC with generator matrix $Q$ if it satisfies:

$$\pi Q = 0$$

The equation $\pi Q = 0$ is the continuous-time analogue of $\pi P = \pi$ in discrete time. Because the generator encodes transition rates rather than transition probabilities, the stationarity condition takes this slightly different form.
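As a small worked case, consider a two-state chain that leaves state 1 at rate $a > 0$ and state 2 at rate $b > 0$ (these rates are illustrative and not taken from the text above). Writing $\pi$ for the candidate distribution and $Q$ for the generator, the stationarity condition can be checked by hand:

$$
Q = \begin{pmatrix} -a & a \\ b & -b \end{pmatrix}, \qquad
\pi = \left( \frac{b}{a+b},\ \frac{a}{a+b} \right), \qquad
\pi Q = \left( \frac{-ab + ab}{a+b},\ \frac{ab - ab}{a+b} \right) = (0,\ 0).
$$

The state that is left more slowly receives the larger share of probability.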

Existence and Uniqueness

Whether a CTMC has a stationary distribution depends on structural properties of the chain:

- Irreducibility: Every state can be reached from every other state. There's a path of positive-rate transitions connecting any pair of states.

- Positive recurrence: Starting from any state, the expected time to return to that state is finite. For finite-state irreducible chains, positive recurrence is automatic.

If a CTMC is irreducible and positive recurrent, it has a unique stationary distribution. For finite irreducible chains, this is always guaranteed.

A reducible chain can have multiple stationary distributions (one for each closed communicating class, and convex combinations of these). A chain that is irreducible but not positive recurrent (null recurrent or transient) has no stationary distribution.

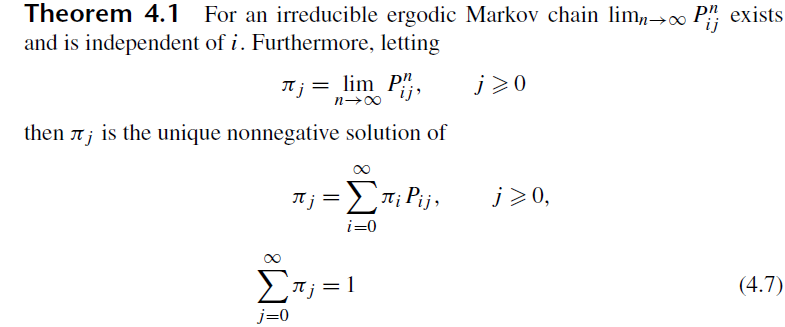

Relationship to Limiting Distributions

The limiting distribution describes where the chain ends up as $t \to \infty$, regardless of where it started. Specifically, writing $P_{ij}(t) = P(X(t) = j \mid X(0) = i)$, the limit $\lim_{t \to \infty} P_{ij}(t)$ gives the limiting probability of being in state $j$.

For CTMCs, the relationship is cleaner than in discrete time because there's no periodicity issue (continuous-time chains are inherently aperiodic). If a CTMC is irreducible and positive recurrent, the limiting distribution exists and equals the unique stationary distribution $\pi$:

$$\lim_{t \to \infty} P_{ij}(t) = \pi_j \quad \text{for all states } i, j.$$

So for well-behaved CTMCs, the stationary distribution is the limiting distribution. The distinction matters more in discrete time, where periodicity can prevent convergence to a limit even when a stationary distribution exists.

Computation Methods

Global Balance Equations

The global balance equations state that, for each state $j$, the total rate of probability flow out of $j$ equals the total rate of flow into $j$:

$$\pi_j \sum_{k \neq j} q_{jk} = \sum_{k \neq j} \pi_k q_{kj}$$

Here $q_{jk}$ is the rate of transitioning from state $j$ to state $k$ (the off-diagonal entries of the generator $Q$).

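To find the stationary distribution $\pi$:

- Write out the global balance equation for each state.

- Note that these equations are linearly dependent (they all encode $\pi Q = 0$, and adding them up gives $0 = 0$ because each row of $Q$ sums to zero), so drop one equation.

- Replace the dropped equation with the normalization condition $\sum_j \pi_j = 1$.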

- Solve the resulting system of linear equations.
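The steps above can be sketched in a few lines of Python. This is a minimal illustration for a finite irreducible chain, not a production solver: the function name `stationary_distribution` and the 3-state generator are hypothetical, and exact rational arithmetic is used only so the result is easy to read.

```python
from fractions import Fraction

def stationary_distribution(Q):
    """Solve pi Q = 0 with sum(pi) = 1 for a finite irreducible generator Q."""
    n = len(Q)
    # Row j of A is the balance equation for state j: sum_i pi_i * Q[i][j] = 0.
    A = [[Fraction(Q[i][j]) for i in range(n)] for j in range(n)]
    b = [Fraction(0)] * n
    # Replace the last (redundant) equation with the normalization condition.
    A[-1] = [Fraction(1)] * n
    b[-1] = Fraction(1)
    # Gauss-Jordan elimination on the augmented matrix [A | b].
    M = [row + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = next(r for r in range(col, n) if M[r][col] != 0)
        M[col], M[pivot] = M[pivot], M[col]
        M[col] = [x / M[col][col] for x in M[col]]
        for r in range(n):
            if r != col and M[r][col] != 0:
                factor = M[r][col]
                M[r] = [x - factor * y for x, y in zip(M[r], M[col])]
    return [M[i][n] for i in range(n)]

# Hypothetical 3-state generator; each row sums to zero.
Q = [[-3, 2, 1],
     [1, -1, 0],
     [2, 2, -4]]
pi = stationary_distribution(Q)
print(pi)  # [Fraction(4, 15), Fraction(2, 3), Fraction(1, 15)]
```

For larger chains a numerical linear-algebra library would be the usual choice; the structure (transpose the generator, overwrite one row with ones, solve) stays the same.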

Detailed Balance (Reversibility)

For many chains encountered in practice, a stronger condition called detailed balance holds:

$$\pi_i q_{ij} = \pi_j q_{ji} \quad \text{for all } i \neq j$$

Here $q_{ij}$ is the transition rate from $i$ to $j$. This says the rate of probability flow from $i$ to $j$ equals the rate from $j$ to $i$, pairwise. Detailed balance implies global balance (sum both sides over $j \neq i$), but not vice versa.

When detailed balance holds, the chain is called reversible, and finding $\pi$ is often much easier because you can solve the pairwise equations directly rather than dealing with the full system.
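A short sketch may help separate the two conditions. Both generators below are hypothetical examples: the first is a chain on a line, where the pairwise equations can be solved one neighbor at a time; the second is a one-way cycle whose uniform distribution satisfies global balance but fails the pairwise check.

```python
def satisfies_detailed_balance(pi, Q, tol=1e-12):
    """Return True if pi[i] * Q[i][j] equals pi[j] * Q[j][i] for every pair i != j."""
    n = len(pi)
    return all(
        abs(pi[i] * Q[i][j] - pi[j] * Q[j][i]) <= tol
        for i in range(n)
        for j in range(i + 1, n)
    )

# Hypothetical 3-state chain on a line (0 <-> 1 <-> 2): reversible.
Q_line = [[-2.0, 2.0, 0.0],
          [1.0, -4.0, 3.0],
          [0.0, 6.0, -6.0]]
# Solve the pairwise equations directly, starting from an unnormalized weight of 1.
w = [1.0]
w.append(w[0] * Q_line[0][1] / Q_line[1][0])  # pi_0 q_01 = pi_1 q_10
w.append(w[1] * Q_line[1][2] / Q_line[2][1])  # pi_1 q_12 = pi_2 q_21
pi_line = [x / sum(w) for x in w]

# Hypothetical 3-state cycle with one-way rates (0 -> 1 -> 2 -> 0): not reversible.
Q_cycle = [[-1.0, 1.0, 0.0],
           [0.0, -1.0, 1.0],
           [1.0, 0.0, -1.0]]
pi_cycle = [1 / 3, 1 / 3, 1 / 3]  # satisfies global balance only

print(pi_line)                                         # [0.25, 0.5, 0.25]
print(satisfies_detailed_balance(pi_line, Q_line))     # True
print(satisfies_detailed_balance(pi_cycle, Q_cycle))   # False
```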

Using the Embedded Chain

Another approach connects the CTMC to its embedded discrete-time chain. If $\nu$ is the stationary distribution of the embedded chain (with transition probabilities $p_{ij} = q_{ij} / q_i$ where $q_i = \sum_{j \neq i} q_{ij}$), then the CTMC stationary distribution is:

$$\pi_j = \frac{\nu_j / q_j}{\sum_k \nu_k / q_k}$$

This formula weights each state by the reciprocal of its total departure rate $q_j$, reflecting the fact that the chain spends more time in states it leaves slowly.
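The conversion from the embedded chain can be sketched as follows. The helper name `ctmc_from_embedded` and the two-state example are illustrative assumptions; the sketch also assumes every departure rate is strictly positive.

```python
def ctmc_from_embedded(nu, rates):
    """Convert an embedded-chain stationary distribution nu into the CTMC one.

    rates[j] is the total departure rate q_j of state j (assumed positive).
    """
    weights = [nu_j / q_j for nu_j, q_j in zip(nu, rates)]
    total = sum(weights)
    return [w / total for w in weights]

# Hypothetical two-state chain: the embedded chain alternates 0, 1, 0, 1, ...
# so its stationary distribution is (1/2, 1/2).
nu = [0.5, 0.5]
rates = [1.0, 4.0]  # leaves state 0 slowly, state 1 quickly
pi = ctmc_from_embedded(nu, rates)  # approximately [0.8, 0.2]
```

Although the embedded chain visits both states equally often, the CTMC spends about four times as long in state 0 because each visit there lasts four times longer on average.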

Properties

Invariance

If you initialize a CTMC according to a stationary distribution $\pi$, then at every future time $t$ the distribution over states is still $\pi$. This is the defining property, and it follows directly from $\pi Q = 0$ (equivalently, $\pi P(t) = \pi$ for all $t \geq 0$, where $P(t)$ is the transition semigroup).

Long-Run Time Averages

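The entries of $\pi$ have a concrete interpretation: $\pi_j$ equals the long-run fraction of time the chain spends in state $j$. More precisely, for an irreducible positive-recurrent CTMC with state $X(t)$ at time $t$:

$$\lim_{T \to \infty} \frac{1}{T} \int_0^T \mathbf{1}\{X(t) = j\} \, dt = \pi_j \quad \text{with probability 1.}$$

This holds regardless of the initial state. It also means $\pi_j = \dfrac{1}{q_j m_j}$, where $q_j$ is the total rate out of state $j$ and $m_j$ is the expected return time to state $j$, measured from one entry into $j$ to the next (so it includes the holding time in $j$ as well as the time spent away).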
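The time-average interpretation can be checked empirically with a small simulation. This is a rough sketch under stated assumptions: the generator is a hypothetical two-state example whose stationary distribution is $(0.8, 0.2)$, the chain is finite with no absorbing states, and the horizon and seed are arbitrary choices.

```python
import random

def simulate_time_fractions(Q, start, horizon, seed=0):
    """Simulate a finite CTMC up to time `horizon`; return the fraction of time per state."""
    rng = random.Random(seed)
    n = len(Q)
    time_in_state = [0.0] * n
    state, t = start, 0.0
    while t < horizon:
        rate_out = -Q[state][state]
        hold = rng.expovariate(rate_out)   # exponential holding time
        hold = min(hold, horizon - t)      # truncate at the horizon
        time_in_state[state] += hold
        t += hold
        # Jump according to the embedded chain: probability q_ij / q_i.
        others = [j for j in range(n) if j != state]
        weights = [Q[state][j] for j in others]
        state = rng.choices(others, weights=weights)[0]
    return [x / horizon for x in time_in_state]

Q = [[-1.0, 1.0],
     [4.0, -4.0]]
time_fractions = simulate_time_fractions(Q, start=1, horizon=20_000.0)
print(time_fractions)  # should be close to [0.8, 0.2]
```

Starting the run in state 1 rather than state 0 should not change the long-run fractions noticeably, which is the point of the statement that the limit holds regardless of the initial state.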

Relationship to Mean Holding Times

Because a CTMC holds in state $j$ for an exponential time with rate $q_j$, states with smaller $q_j$ (slower departure rates) tend to have larger stationary probabilities. The embedded-chain formula above makes this precise.

Examples

Birth-Death Processes

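Birth-death CTMCs have states $\{0, 1, 2, \ldots\}$ with only nearest-neighbor transitions: birth rate $\lambda_n$ (from $n$ to $n+1$) and death rate $\mu_n$ (from $n$ to $n-1$).

Detailed balance holds automatically for birth-death chains, giving:

$$\pi_n \lambda_n = \pi_{n+1} \mu_{n+1}$$

Solving recursively:

$$\pi_n = \pi_0 \prod_{k=0}^{n-1} \frac{\lambda_k}{\mu_{k+1}}$$

Then $\pi_0$ is determined by normalization: $\pi_0 = \left( \sum_{n=0}^{\infty} \prod_{k=0}^{n-1} \frac{\lambda_k}{\mu_{k+1}} \right)^{-1}$, where the empty product for $n = 0$ equals 1. A stationary distribution exists if and only if this sum converges.

For example, with constant rates $\lambda_n = \lambda$ and $\mu_n = \mu$, the ratio is $\lambda / \mu$, and convergence requires $\lambda < \mu$.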
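The product-form recursion translates almost directly into code. The sketch below truncates the state space at a hypothetical cutoff `n_max`, which is an approximation whenever the true chain is infinite; the function name and the rate values are illustrative.

```python
def birth_death_stationary(birth, death, n_max):
    """Product-form stationary distribution of a birth-death chain on {0, ..., n_max}.

    birth(n) is the rate n -> n+1 and death(n) is the rate n -> n-1.
    Truncating at n_max is an approximation when the true state space is infinite.
    """
    weights = [1.0]  # unnormalized pi_0
    for n in range(n_max):
        # Detailed balance: pi_n * birth(n) = pi_{n+1} * death(n+1).
        weights.append(weights[-1] * birth(n) / death(n + 1))
    total = sum(weights)
    return [w / total for w in weights]

# Constant rates (hypothetical values): birth rate 1, death rate 2, so the ratio is 1/2.
pi = birth_death_stationary(lambda n: 1.0, lambda n: 2.0, n_max=60)
print(pi[0], pi[1])  # close to 0.5 and 0.25
```

If the ratio of birth to death rate were 1 or larger, the weights would not decay and the normalized values would depend entirely on the cutoff, which is the numerical symptom of the sum failing to converge.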

Random Walks

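A continuous-time random walk on a finite graph assigns a jump rate $q_{ij}$ to each edge $\{i, j\}$. On a finite connected graph with symmetric rates ($q_{ij} = q_{ji}$), detailed balance is satisfied by the uniform distribution, because $\pi_i q_{ij} = \pi_j q_{ji}$ then reduces to $\pi_i = \pi_j$ across every edge. The stationary distribution is therefore uniform whatever the individual edge rates are. By contrast, the embedded jump chain has stationary distribution proportional to $q_i$ (where $q_i = \sum_{j \neq i} q_{ij}$ is the total rate out of state $i$); dividing by $q_i$ to account for holding times recovers the uniform distribution.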

Queueing Models

The M/M/1 queue has Poisson arrivals at rate $\lambda$ and exponential service at rate $\mu$. This is a birth-death process with constant rates, so the stationary distribution (when $\rho = \lambda / \mu < 1$) is geometric:

$$\pi_n = (1 - \rho)\, \rho^n, \quad n = 0, 1, 2, \ldots$$

From this you can derive performance measures like the expected number of customers ($L = \rho / (1 - \rho)$) and the expected time in the system ($W = L / \lambda = 1 / (\mu - \lambda)$ by Little's law).

The M/M/c queue ($c$ servers) has a similar structure but with state-dependent service rates: $\mu_n = \min(n, c)\, \mu$. The stationary distribution involves an Erlang-C formula, and stability requires $\lambda < c\mu$.
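For the single-server case, the standard M/M/1 formulas are easy to evaluate. The sketch below uses hypothetical helper names and example rates (arrival rate 3, service rate 4), and it covers M/M/1 only; the M/M/c case is not implemented here.

```python
def mm1_metrics(lam, mu):
    """Steady-state quantities for an M/M/1 queue with arrival rate lam and service rate mu."""
    if lam >= mu:
        raise ValueError("no stationary distribution: need lam < mu")
    rho = lam / mu
    return {
        "rho": rho,
        "p_empty": 1 - rho,                     # pi_0
        "mean_in_system": rho / (1 - rho),      # L
        "mean_time_in_system": 1 / (mu - lam),  # W = L / lam by Little's law
    }

def mm1_pi(n, lam, mu):
    """Stationary probability of n customers: (1 - rho) * rho**n."""
    rho = lam / mu
    return (1 - rho) * rho ** n

m = mm1_metrics(lam=3.0, mu=4.0)
print(m["mean_in_system"], m["mean_time_in_system"])  # 3.0 and 1.0
```

With these rates the server is busy 75% of the time, an average of three customers are in the system, and each spends one time unit there on average.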

Applications

Steady-State Performance Analysis

In practice, you often care about long-run averages: the mean queue length, the fraction of time a server is idle, the throughput of a network. The stationary distribution gives you all of these directly. Once you have the stationary distribution $\pi$, any steady-state quantity is just a weighted sum $\sum_j \pi_j f(j)$ for the appropriate function $f$.

Ergodicity and Convergence

A CTMC is ergodic if it's irreducible and positive recurrent. Ergodicity guarantees:

- A unique stationary distribution exists.

- The chain converges to it from any starting state.

- Time averages equal ensemble averages (the ergodic theorem).

This is what justifies using stationary distributions in practice. You don't need to know the initial state to predict long-run behavior.

Simulation and MCMC

Markov chain Monte Carlo (MCMC) methods construct a Markov chain whose stationary distribution is a target distribution you want to sample from. The Metropolis-Hastings algorithm and Gibbs sampler are the most common examples. After running the chain long enough to approximately reach stationarity (the "burn-in" period), subsequent samples approximate draws from the target distribution.

Stationary Distributions vs. Limiting Distributions

Stationary distribution: A distribution $\pi$ satisfying $\pi Q = 0$, where $Q$ is the generator matrix. If you start there, you stay there.

Limiting distribution: The distribution the chain converges to as $t \to \infty$, regardless of starting state.

For CTMCs, these two concepts coincide whenever the chain is irreducible and positive recurrent. The lack of periodicity in continuous time simplifies things compared to the discrete-time case, where a periodic chain can have a stationary distribution but no limiting distribution.

A chain that is not positive recurrent (e.g., a symmetric random walk on $\mathbb{Z}$) has neither a stationary nor a limiting distribution.

Extensions

Quasi-Stationary Distributions

When a CTMC has absorbing states (states it can enter but never leave), the chain will eventually be absorbed with probability 1. A quasi-stationary distribution describes the conditional distribution over transient states, given that absorption hasn't happened yet:

$$\lim_{t \to \infty} P\big(X(t) = j \mid X(t) \notin A\big), \qquad j \notin A,$$

where $A$ is the set of absorbing states. This is useful in population models (where extinction is the absorbing state) and reliability theory (where system failure is absorbing).

Stationary Distributions for General CTMCs

For CTMCs on countably infinite state spaces, existence of a stationary distribution is not guaranteed even for irreducible chains. The chain must be positive recurrent. Standard tools for verifying positive recurrence include:

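- Foster-Lyapunov criteria: Find a function $V \geq 0$ on the state space such that $(QV)(i) = \sum_j q_{ij} V(j) \leq -\varepsilon$ for some $\varepsilon > 0$ and all $i$ outside a finite set, which implies positive recurrence.

- Direct computation: For birth-death chains with birth rates $\lambda_k$ and death rates $\mu_k$, check whether $\sum_{n=0}^{\infty} \prod_{k=0}^{n-1} \frac{\lambda_k}{\mu_{k+1}} < \infty$ using the product-form solution.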
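As an illustration of the Foster-Lyapunov approach, take the M/M/1 queue with arrival rate $\lambda$ and service rate $\mu$, and try the test function $V(n) = n$ (this choice of $V$ is an assumption made for the example; other functions can work too). For every state $n \geq 1$:

$$
(QV)(n) = \lambda \big( V(n+1) - V(n) \big) + \mu \big( V(n-1) - V(n) \big) = \lambda - \mu .
$$

When $\lambda < \mu$ this drift is at most $-\varepsilon$ with $\varepsilon = \mu - \lambda > 0$ outside the finite set $\{0\}$, which matches the condition $\lambda < \mu$ obtained from the product-form sum.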