Definition of moment-generating functions

The moment-generating function (MGF) of a random variable $X$ is defined as:

$$M_X(t) = E\left[e^{tX}\right]$$

where $t$ is a real number. The name comes from what this function does: it generates the moments of a distribution (mean, variance, skewness, kurtosis) through differentiation. MGFs also uniquely characterize distributions, making them one of the most versatile tools in probability theory.
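For a discrete random variable the expectation $E[e^{tX}]$ is just a probability-weighted sum, which makes the definition easy to evaluate directly. The sketch below assumes a fair six-sided die as the distribution; the helper name `mgf_discrete` is made up for illustration.

```python
import math

def mgf_discrete(pmf, t):
    """Return E[e^{tX}] for a finite discrete distribution given as {value: probability}."""
    return sum(p * math.exp(t * x) for x, p in pmf.items())

# Hypothetical example distribution: a fair six-sided die.
die = {k: 1 / 6 for k in range(1, 7)}

print(mgf_discrete(die, 0.0))  # any MGF equals 1 at t = 0, up to rounding
print(mgf_discrete(die, 0.1))
```

The value at $t = 0$ is always 1 because $e^{0 \cdot X} = 1$, which is a quick sanity check on any MGF formula.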

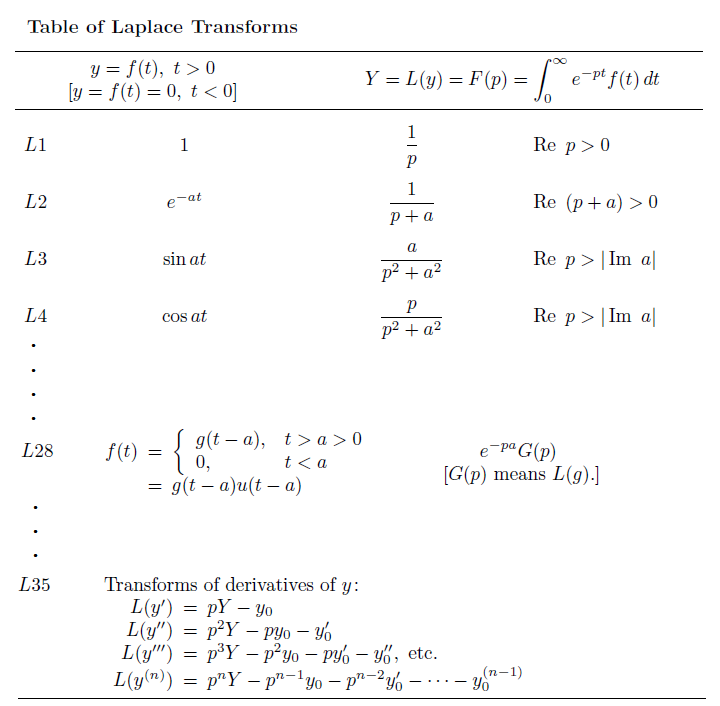

Laplace transforms vs moment-generating functions

MGFs are closely related to Laplace transforms, which show up heavily in engineering and physics for solving differential equations. The two-sided Laplace transform of a probability density $f$ is $\mathcal{L}\{f\}(s) = \int_{-\infty}^{\infty} e^{-sx} f(x)\,dx$, so the MGF is simply the Laplace transform evaluated at $s = -t$. In practice, Laplace transforms typically deal with functions defined on $[0, \infty)$, while MGFs apply to random variables on the entire real line.

Existence of moment-generating functions

Not every distribution has a valid MGF. For $M_X(t)$ to exist, the expectation $E[e^{tX}]$ must be finite for all $t$ in some open interval around zero (i.e., for $|t| < h$ with $h > 0$).

Distributions with heavy tails can violate this condition. The Cauchy distribution is the classic example: its tails decay so slowly that $E[e^{tX}]$ diverges for every $t \neq 0$. When an MGF doesn't exist, the characteristic function (which uses $e^{itX}$ with $i = \sqrt{-1}$) serves as an alternative that always exists.
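The divergence for the Cauchy distribution can be seen numerically. The sketch below integrates $e^{tx}$ against the standard Cauchy density $1 / (\pi (1 + x^2))$ over a truncated range $[-c, c]$ and widens the cutoff. The function name, the choice $t = 0.5$, and the cutoffs are illustrative assumptions; a midpoint rule is used only because it needs no external libraries.

```python
import math

def truncated_cauchy_mgf(t, cutoff, steps=200_000):
    """Midpoint-rule estimate of the integral of e^{tx} f(x) over [-cutoff, cutoff],
    where f is the standard Cauchy density 1 / (pi * (1 + x^2))."""
    width = 2 * cutoff / steps
    total = 0.0
    for k in range(steps):
        x = -cutoff + (k + 0.5) * width
        total += math.exp(t * x) / (math.pi * (1 + x * x))
    return total * width

t = 0.5  # hypothetical choice; any nonzero t shows the same behaviour
cutoffs = [10, 20, 40, 80]
values = [truncated_cauchy_mgf(t, c) for c in cutoffs]
for c, v in zip(cutoffs, values):
    print(c, v)
```

The truncated integrals keep growing as the cutoff widens instead of settling toward a limit, which is what "the expectation diverges" means in practice.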

Uniqueness of distributions and moment-generating functions

If two random variables have MGFs that are equal on some open interval containing zero, then they have the same distribution. This uniqueness theorem is what makes MGFs so useful for identifying distributions and proving distributional results.

Note that the converse concern sometimes raised about "two different MGFs corresponding to the same distribution" is not actually an issue: if two random variables share the same distribution, their MGFs are necessarily identical wherever they exist. The uniqueness runs in both directions.

Properties of moment-generating functions

Derivatives and moment extraction

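This is the core property that gives MGFs their name. The $n$-th moment of $X$ equals the $n$-th derivative of $M_X(t)$ evaluated at $t = 0$:

$$E[X^n] = M_X^{(n)}(0) = \left.\frac{d^n}{dt^n} M_X(t)\right|_{t=0}$$

Why does this work? Expand $e^{tX}$ as a Taylor series:

$$M_X(t) = E\left[e^{tX}\right] = E\left[\sum_{n=0}^{\infty} \frac{(tX)^n}{n!}\right] = \sum_{n=0}^{\infty} \frac{t^n}{n!}\,E[X^n]$$

Each coefficient encodes a moment. Differentiating $n$ times and setting $t = 0$ isolates $E[X^n]$.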

In practice, the most common extractions are:

- Mean: $E[X] = M_X'(0)$

- Variance: $\operatorname{Var}(X) = M_X''(0) - \left[M_X'(0)\right]^2$

- Higher moments (skewness, kurtosis) follow from higher-order derivatives.
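The extraction rules $E[X] = M_X'(0)$ and $\operatorname{Var}(X) = M_X''(0) - [M_X'(0)]^2$ can be checked numerically by replacing the derivatives with central finite differences. The sketch below assumes a Poisson distribution with a hypothetical rate of 3, whose mean and variance both equal the rate; the helper names are made up for illustration.

```python
import math

def poisson_mgf(t, lam):
    """Closed-form Poisson MGF: exp(lam * (e^t - 1))."""
    return math.exp(lam * (math.exp(t) - 1))

def mean_and_variance_from_mgf(mgf, h=1e-4):
    """Approximate M'(0) and M''(0) with central differences, then form the variance."""
    first = (mgf(h) - mgf(-h)) / (2 * h)               # approximates E[X]
    second = (mgf(h) - 2 * mgf(0.0) + mgf(-h)) / h**2  # approximates E[X^2]
    return first, second - first**2

lam = 3.0  # hypothetical rate
mean, var = mean_and_variance_from_mgf(lambda t: poisson_mgf(t, lam))
print(mean, var)  # both should be close to lam
```

Finite differences are only an approximation; for exact moments, differentiate the closed form by hand or with a symbolic algebra system.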

Linear transformations

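For a random variable $Y = aX + b$, the MGF transforms as:

$$M_Y(t) = e^{bt}\,M_X(at)$$

This follows directly from the definition: $E\left[e^{t(aX + b)}\right] = e^{bt}\,E\left[e^{(at)X}\right] = e^{bt}\,M_X(at)$.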
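As a quick check of the rule $M_{aX+b}(t) = e^{bt} M_X(at)$, the sketch below builds the distribution of $Y = aX + b$ explicitly for a small discrete $X$ and compares the two sides. The distribution and the constants `a`, `b`, and `t` are hypothetical choices.

```python
import math

def mgf_discrete(pmf, t):
    """Return E[e^{tX}] for a finite discrete distribution given as {value: probability}."""
    return sum(p * math.exp(t * x) for x, p in pmf.items())

# Hypothetical distribution for X and hypothetical constants a, b.
pmf_x = {0: 0.2, 1: 0.5, 3: 0.3}
a, b = 2.0, -1.0

# Distribution of Y = aX + b, obtained by relabelling the support of X.
pmf_y = {a * x + b: p for x, p in pmf_x.items()}

t = 0.4
direct = mgf_discrete(pmf_y, t)                          # E[e^{tY}] from the definition
via_rule = math.exp(b * t) * mgf_discrete(pmf_x, a * t)  # e^{bt} * M_X(at)
print(direct, via_rule)
```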

Sums of independent random variables

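For independent random variables $X$ and $Y$, the MGF of their sum factors into a product:

$$M_{X+Y}(t) = M_X(t)\,M_Y(t)$$

This extends to any finite collection. If $X_1, X_2, \ldots, X_n$ are independent:

$$M_{X_1 + \cdots + X_n}(t) = \prod_{i=1}^{n} M_{X_i}(t)$$

The factoring happens because independence lets you split the joint expectation: $E\left[e^{t(X+Y)}\right] = E\left[e^{tX} e^{tY}\right] = E\left[e^{tX}\right] E\left[e^{tY}\right]$. This property is what makes MGFs so effective for working with sums.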
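The product rule can be verified on a small example by computing the distribution of the sum through explicit convolution and comparing its MGF with the product of the individual MGFs. The sketch below assumes a fair die and a fair coin as the two independent variables; both are illustrative choices.

```python
import math
from collections import defaultdict

def mgf_discrete(pmf, t):
    """Return E[e^{tX}] for a finite discrete distribution given as {value: probability}."""
    return sum(p * math.exp(t * x) for x, p in pmf.items())

def pmf_of_sum(pmf_x, pmf_y):
    """PMF of X + Y for independent X and Y (discrete convolution)."""
    out = defaultdict(float)
    for x, px in pmf_x.items():
        for y, py in pmf_y.items():
            out[x + y] += px * py  # independence: P(X=x, Y=y) = P(X=x) P(Y=y)
    return dict(out)

die = {k: 1 / 6 for k in range(1, 7)}  # hypothetical X
coin = {0: 0.5, 1: 0.5}                # hypothetical Y
total = pmf_of_sum(die, coin)

t = 0.3
lhs = mgf_discrete(total, t)                       # MGF of X + Y
rhs = mgf_discrete(die, t) * mgf_discrete(coin, t)  # product of the MGFs
print(lhs, rhs)
```

The convolution loop costs one multiplication per pair of outcomes, while the MGF route needs only a single product, which hints at why MGFs simplify work with sums.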

Moment-generating functions of common distributions

Discrete distributions

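- Bernoulli (parameter $p$): $M_X(t) = 1 - p + pe^t$

- Binomial (parameters $n, p$): $M_X(t) = \left(1 - p + pe^t\right)^n$

- Poisson (parameter $\lambda$): $M_X(t) = \exp\left(\lambda(e^t - 1)\right)$

- Geometric (parameter $p$, counting from 1): $M_X(t) = \frac{pe^t}{1 - (1-p)e^t}$ for $t < -\ln(1 - p)$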
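Closed forms like these can be sanity-checked against the definition. The sketch below compares the binomial closed form $(1 - p + pe^t)^n$ with a direct sum of $e^{tk}$ over the binomial PMF; the parameter values are hypothetical.

```python
import math

def binomial_mgf_direct(t, n, p):
    """E[e^{tX}] computed term by term from the binomial PMF."""
    return sum(
        math.comb(n, k) * p**k * (1 - p) ** (n - k) * math.exp(t * k)
        for k in range(n + 1)
    )

def binomial_mgf_closed(t, n, p):
    """Closed form (1 - p + p e^t)^n."""
    return (1 - p + p * math.exp(t)) ** n

n, p, t = 10, 0.3, 0.5  # hypothetical parameters
print(binomial_mgf_direct(t, n, p), binomial_mgf_closed(t, n, p))
```

The agreement is the binomial theorem at work, and it also reflects that a binomial variable is a sum of $n$ independent Bernoulli variables.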

Continuous distributions

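- Exponential (rate $\lambda$): $M_X(t) = \frac{\lambda}{\lambda - t}$ for $t < \lambda$

- Normal (mean $\mu$, variance $\sigma^2$): $M_X(t) = \exp\left(\mu t + \frac{\sigma^2 t^2}{2}\right)$

- Gamma (shape $\alpha$, rate $\beta$): $M_X(t) = \left(\frac{\beta}{\beta - t}\right)^{\alpha}$ for $t < \beta$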

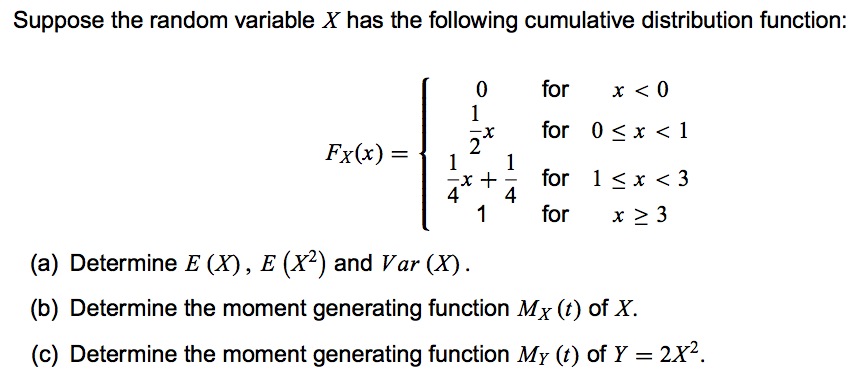

Worked examples

Example 1: Deriving the standard normal MGF

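For $Z \sim N(0, 1)$:

$$M_Z(t) = \int_{-\infty}^{\infty} e^{tz}\,\frac{1}{\sqrt{2\pi}}\,e^{-z^2/2}\,dz$$

Complete the square in the exponent: $tz - \frac{z^2}{2} = -\frac{(z - t)^2}{2} + \frac{t^2}{2}$. This gives:

$$M_Z(t) = e^{t^2/2} \int_{-\infty}^{\infty} \frac{1}{\sqrt{2\pi}}\,e^{-(z - t)^2/2}\,dz = e^{t^2/2}$$

The integral equals 1 because the integrand is the density of a $N(t, 1)$ distribution.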
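The result $M_Z(t) = e^{t^2/2}$ for the standard normal can be confirmed by numerical integration. The sketch below uses a plain midpoint rule centered at $z = t$, where the integrand peaks; the integration window and step count are assumptions chosen to make the truncation error negligible.

```python
import math

def standard_normal_mgf_numeric(t, half_width=12.0, steps=100_000):
    """Midpoint-rule estimate of the integral of e^{tz} * phi(z)
    over [t - half_width, t + half_width], where phi is the N(0, 1) density."""
    lo = t - half_width
    dz = 2 * half_width / steps
    total = 0.0
    for k in range(steps):
        z = lo + (k + 0.5) * dz
        total += math.exp(t * z - z * z / 2) / math.sqrt(2 * math.pi)
    return total * dz

for t in (0.0, 0.5, 1.5):
    print(t, standard_normal_mgf_numeric(t), math.exp(t * t / 2))
```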

Example 2: Moments of the exponential distribution

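For $X \sim \text{Exponential}(\lambda)$ with $M_X(t) = \frac{\lambda}{\lambda - t}$ for $t < \lambda$:

- First derivative: $M_X'(t) = \frac{\lambda}{(\lambda - t)^2}$, so $E[X] = M_X'(0) = \frac{1}{\lambda}$

- Second derivative: $M_X''(t) = \frac{2\lambda}{(\lambda - t)^3}$, so $E[X^2] = M_X''(0) = \frac{2}{\lambda^2}$

- Variance: $\operatorname{Var}(X) = E[X^2] - (E[X])^2 = \frac{2}{\lambda^2} - \frac{1}{\lambda^2} = \frac{1}{\lambda^2}$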
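For the exponential distribution, all moments can also be read off at once from the series expansion of the MGF rather than by repeated differentiation. The derivation below is a sketch that uses the geometric series, valid for $|t| < \lambda$, and matches coefficients with the general expansion $M_X(t) = \sum_n \frac{t^n}{n!} E[X^n]$.

```latex
M_X(t) = \frac{\lambda}{\lambda - t}
       = \frac{1}{1 - t/\lambda}
       = \sum_{n=0}^{\infty} \frac{t^n}{\lambda^n}
       = \sum_{n=0}^{\infty} \frac{t^n}{n!} \cdot \frac{n!}{\lambda^n},
       \qquad |t| < \lambda
\quad\Longrightarrow\quad
E[X^n] = \frac{n!}{\lambda^n}
```

Setting $n = 1$ and $n = 2$ gives $1/\lambda$ and $2/\lambda^2$, in agreement with the mean and second moment of the exponential distribution.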

Applications of moment-generating functions

Determining distributions of sums

The product property for independent sums, combined with uniqueness, gives you a clean strategy for identifying the distribution of a sum:

- Compute the MGF of each independent summand.

- Multiply them together.

- Recognize the resulting MGF as belonging to a known distribution.

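Example: Let $X \sim \text{Poisson}(\lambda_1)$ and $Y \sim \text{Poisson}(\lambda_2)$ be independent. Then:

$$M_{X+Y}(t) = e^{\lambda_1(e^t - 1)} \cdot e^{\lambda_2(e^t - 1)} = e^{(\lambda_1 + \lambda_2)(e^t - 1)}$$

This is the MGF of a $\text{Poisson}(\lambda_1 + \lambda_2)$ distribution. By uniqueness, $X + Y \sim \text{Poisson}(\lambda_1 + \lambda_2)$.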

The same technique works for showing that sums of independent normals are normal, sums of independent gammas (with the same rate) are gamma, and so on.
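A conclusion reached through MGFs can be cross-checked the slow way, by convolving the PMFs directly. The sketch below takes two independent Poisson variables with hypothetical rates 1.5 and 2.5 as an illustration and compares the convolved PMF with the PMF of a single Poisson variable whose rate is the sum.

```python
import math

def poisson_pmf(k, lam):
    """P(X = k) for X ~ Poisson(lam)."""
    return math.exp(-lam) * lam**k / math.factorial(k)

def pmf_of_sum_at(k, lam1, lam2):
    """P(X + Y = k) for independent Poisson X and Y, by direct convolution."""
    return sum(poisson_pmf(j, lam1) * poisson_pmf(k - j, lam2) for j in range(k + 1))

lam1, lam2 = 1.5, 2.5  # hypothetical rates
for k in range(6):
    print(k, pmf_of_sum_at(k, lam1, lam2), poisson_pmf(k, lam1 + lam2))
```

The two columns agree, but the convolution needs a sum for every value of $k$, whereas the MGF argument settles the whole distribution in one line.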

Role in limit theorems

MGFs provide one of the cleanest routes to proving the Central Limit Theorem (CLT). The argument proceeds roughly as follows:

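- Let $X_1, X_2, \ldots, X_n$ be i.i.d. with mean $\mu$, variance $\sigma^2$, and a valid MGF.

- Form the standardized sum $Z_n = \frac{\sum_{i=1}^{n} X_i - n\mu}{\sigma\sqrt{n}}$.

- Show that $M_{Z_n}(t) \to e^{t^2/2}$ as $n \to \infty$.

- Since $e^{t^2/2}$ is the MGF of the standard normal, a continuity theorem guarantees $Z_n \xrightarrow{d} N(0, 1)$.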

The key step uses a Taylor expansion of the MGF of the standardized summand $(X_i - \mu)/\sigma$ around $t = 0$. This MGF-based proof requires the MGF to exist in a neighborhood of zero, which is a stronger condition than the CLT actually needs, but it makes the argument especially transparent.
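The convergence $M_{Z_n}(t) \to e^{t^2/2}$ can be watched numerically in a case where the MGF of the standardized sum has a closed form. The sketch below assumes i.i.d. Exponential(1) summands (so $\mu = \sigma = 1$), for which the scaling and product rules give $M_{Z_n}(t) = e^{-t\sqrt{n}}\,(1 - t/\sqrt{n})^{-n}$ for $t < \sqrt{n}$. The function name and the choice of distribution are illustrative.

```python
import math

def standardized_sum_mgf(t, n):
    """MGF of Z_n = (X_1 + ... + X_n - n) / sqrt(n) for i.i.d. Exponential(1) summands.
    Valid for t < sqrt(n). Evaluated on the log scale for numerical stability."""
    root_n = math.sqrt(n)
    log_mgf = -t * root_n - n * math.log1p(-t / root_n)
    return math.exp(log_mgf)

t = 1.0
target = math.exp(t * t / 2)  # standard normal MGF at t
sizes = (10, 100, 10_000, 1_000_000)
errors = [abs(standardized_sum_mgf(t, n) - target) for n in sizes]
for n, err in zip(sizes, errors):
    print(n, standardized_sum_mgf(t, n), target, err)
```

Expanding the logarithm shows the leading error term in the exponent is $t^3 / (3\sqrt{n})$, so the gap closes at a rate of roughly $1/\sqrt{n}$ for this skewed distribution.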

Characterizing distributions

Because MGFs uniquely determine distributions, you can use them as a fingerprint. If you derive the MGF of some random variable and it matches a known form, you've identified the distribution without needing to work out the full density or PMF. This is often far easier than computing convolutions or transformations directly.