Diagonalization and spectral decomposition are powerful tools for understanding matrix transformations. They break down complex matrices into simpler components, revealing their fundamental structure and behavior.

These techniques are crucial for solving various problems in linear algebra and beyond. By expressing matrices in terms of eigenvalues and eigenvectors, we gain insights into their properties and can simplify calculations in many applications.

Diagonalizing Matrices with Eigenvectors

The Diagonalization Process

- Diagonalization finds a diagonal matrix $D$ similar to a given square matrix $A$, such that $A = PDP^{-1}$, where $P$ is an invertible matrix

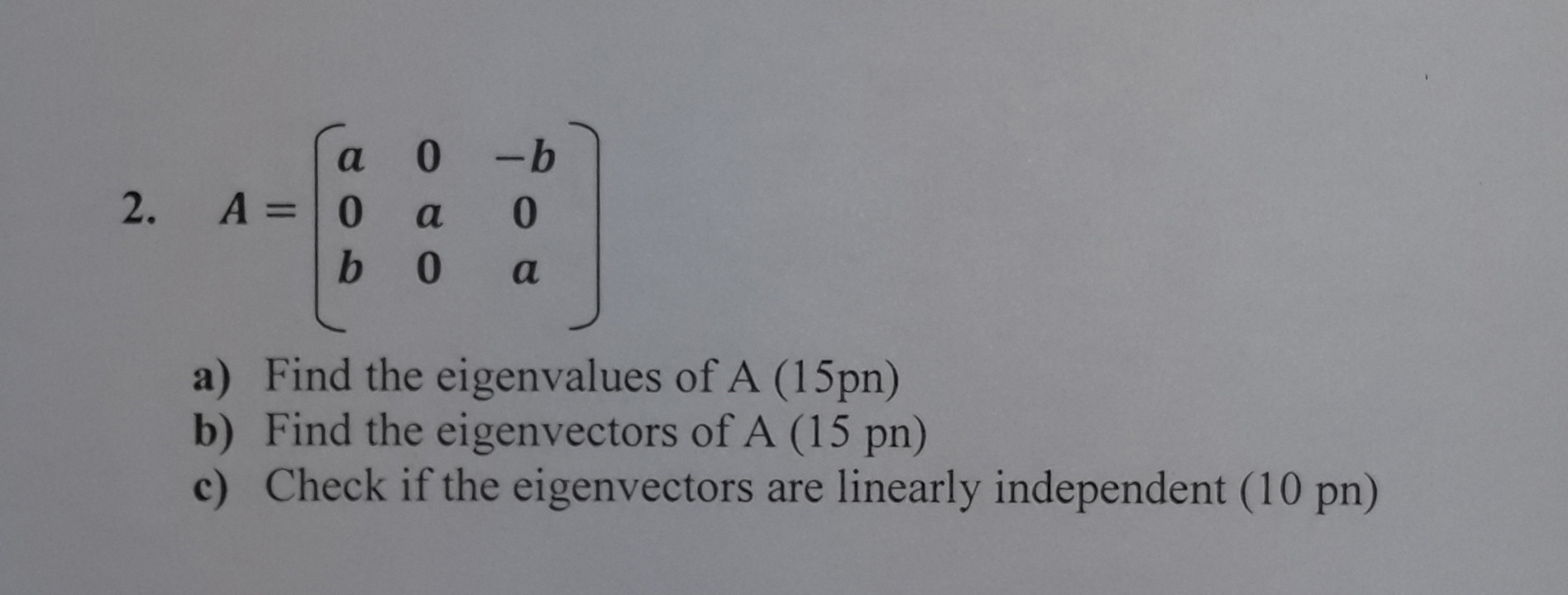

- An $n \times n$ matrix is diagonalizable if and only if it has $n$ linearly independent eigenvectors

- Example: A 3x3 matrix is diagonalizable if it has 3 linearly independent eigenvectors

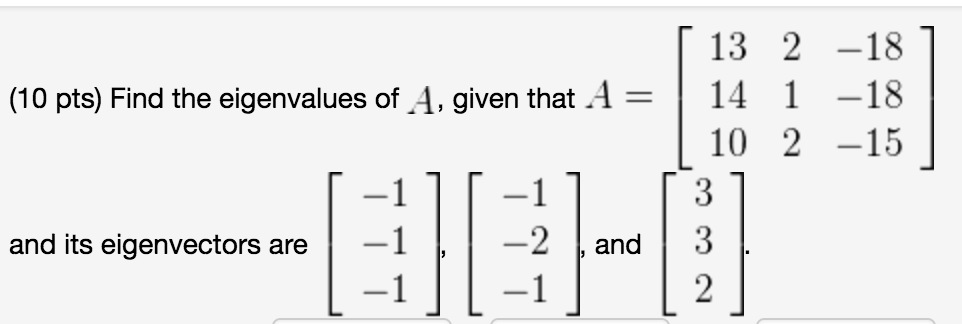

- To diagonalize a matrix $A$, first find its eigenvalues by solving the characteristic equation $\det(A - \lambda I) = 0$, where $\lambda$ represents the eigenvalues and $I$ is the identity matrix

- Example: For a 2x2 matrix $A = \begin{pmatrix} a & b \\ c & d \end{pmatrix}$, the characteristic equation is $\lambda^2 - (a + d)\lambda + (ad - bc) = 0$
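
For a 2x2 matrix, the characteristic equation is a quadratic in $\lambda$, so the eigenvalues follow from the quadratic formula applied to the trace and determinant. A minimal sketch, assuming a hypothetical helper `eigenvalues_2x2` and an illustrative matrix that does not come from the examples above:

```python
import cmath

def eigenvalues_2x2(a, b, c, d):
    """Roots of lambda^2 - (a + d)*lambda + (a*d - b*c) = 0 for [[a, b], [c, d]]."""
    trace = a + d
    det = a * d - b * c
    # cmath.sqrt also handles a negative discriminant (complex eigenvalues).
    root = cmath.sqrt(trace * trace - 4 * det)
    return (trace + root) / 2, (trace - root) / 2

# Illustrative matrix [[4, 1], [2, 3]]: trace 7, determinant 10.
lam1, lam2 = eigenvalues_2x2(4, 1, 2, 3)
print(lam1, lam2)
```

The values are returned as complex numbers so that the same function covers matrices such as rotations, whose eigenvalues are not real.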

Finding Eigenvectors and Eigenspaces

- For each distinct eigenvalue $\lambda_i$, find the corresponding eigenvectors by solving the equation $(A - \lambda_i I)v = \mathbf{0}$, where $v$ represents the eigenvectors

- Example: If $\lambda_1$ is an eigenvalue of matrix $A$, solve $(A - \lambda_1 I)v = \mathbf{0}$ to find the eigenvectors associated with $\lambda_1$

- For each distinct eigenvalue, a maximal set of linearly independent eigenvectors forms a basis for the eigenspace associated with that eigenvalue

- The eigenspace is the set of all vectors $v$ that satisfy $(A - \lambda I)v = \mathbf{0}$ for a given eigenvalue $\lambda$ (the eigenvectors together with the zero vector)

- The dimension of the eigenspace is equal to the geometric multiplicity of the corresponding eigenvalue
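
As a worked illustration of these steps, take $A = \begin{pmatrix} 4 & 1 \\ 2 & 3 \end{pmatrix}$, a matrix chosen here purely for illustration, whose characteristic equation $\lambda^2 - 7\lambda + 10 = 0$ gives the eigenvalues 5 and 2:

$$
A - 5I = \begin{pmatrix} -1 & 1 \\ 2 & -2 \end{pmatrix}, \qquad (A - 5I)v = \mathbf{0} \;\Rightarrow\; v = t \begin{pmatrix} 1 \\ 1 \end{pmatrix}
$$

$$
A - 2I = \begin{pmatrix} 2 & 1 \\ 2 & 1 \end{pmatrix}, \qquad (A - 2I)v = \mathbf{0} \;\Rightarrow\; v = t \begin{pmatrix} 1 \\ -2 \end{pmatrix}
$$

Each eigenspace is a line through the origin, so both eigenvalues have geometric multiplicity 1, and the two basis vectors together give the 2 linearly independent eigenvectors needed for diagonalizability.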

Diagonal and Invertible Matrices for Diagonalization

Constructing the Diagonal Matrix

- The diagonal matrix $D$ is constructed by placing the eigenvalues of $A$ along the main diagonal in any order, with each eigenvalue appearing as many times as its algebraic multiplicity

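- Example: If the eigenvalues of $A$ are $\lambda_1$ with multiplicity 2 and $\lambda_2$ with multiplicity 1, then $D = \begin{pmatrix} \lambda_1 & 0 & 0 \\ 0 & \lambda_1 & 0 \\ 0 & 0 & \lambda_2 \end{pmatrix}$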

- The size of the diagonal matrix $D$ is the same as the size of the original matrix $A$

Constructing the Invertible Matrix

- The invertible matrix $P$, also known as the modal matrix, is constructed by arranging the linearly independent eigenvectors of $A$ as its columns, in the same order as their corresponding eigenvalues in $D$

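- Example: If the eigenvectors of $A$ are $v_1$ and $v_2$, then $P = \begin{pmatrix} v_1 & v_2 \end{pmatrix}$, the matrix with $v_1$ and $v_2$ as its columns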

- The columns of $P$ must be linearly independent for $P$ to be invertible. If $A$ has repeated eigenvalues, ensure that the corresponding eigenvectors are linearly independent

- The matrix $P^{-1}$ is the inverse of $P$, and it can be found using various methods such as Gaussian elimination or the adjugate matrix divided by the determinant of $P$

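- Example: If $P = \begin{pmatrix} a & b \\ c & d \end{pmatrix}$ with $ad - bc \neq 0$, then $P^{-1} = \frac{1}{ad - bc} \begin{pmatrix} d & -b \\ -c & a \end{pmatrix}$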
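
The construction of $D$ and $P$ can be checked numerically. The sketch below assumes NumPy is available and uses an illustrative matrix that is not taken from the examples above; `np.linalg.eig` returns the eigenvalues and a matrix whose columns are the matching eigenvectors, so the ordering requirement is satisfied automatically.

```python
import numpy as np

# Illustrative matrix (an assumption for this sketch), eigenvalues 5 and 2.
A = np.array([[4.0, 1.0],
              [2.0, 3.0]])

eigenvalues, P = np.linalg.eig(A)   # columns of P are eigenvectors of A
D = np.diag(eigenvalues)            # eigenvalues in the same order as P's columns

# P is invertible only if its columns are linearly independent (full rank).
has_full_rank = np.linalg.matrix_rank(P) == A.shape[0]

P_inv = np.linalg.inv(P)
reconstructed = P @ D @ P_inv       # should reproduce A up to rounding error

print(has_full_rank, np.allclose(A, reconstructed))
```

If the rank check fails, the matrix does not have enough independent eigenvectors and is not diagonalizable, so the inverse should not be trusted.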

Spectral Decomposition of Matrices

The Spectral Decomposition Theorem

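- The spectral decomposition theorem states that if $A$ is an $n \times n$ real symmetric matrix with eigenvalues $\lambda_1, \dots, \lambda_n$ (listed with multiplicity; they need not be distinct) and corresponding orthonormal eigenvectors $u_1, \dots, u_n$, then $A$ can be expressed as $A = \sum_{i=1}^{n} \lambda_i u_i u_i^T$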

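- Example: If $A$ is a $2 \times 2$ symmetric matrix with eigenvalues $\lambda_1$ and $\lambda_2$, and orthonormal eigenvectors $u_1$ and $u_2$, then $A = \lambda_1 u_1 u_1^T + \lambda_2 u_2 u_2^T$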

- Each term in the spectral decomposition, $\lambda_i u_i u_i^T$, is a rank-one matrix whenever $\lambda_i \neq 0$, as it is a scalar multiple of the outer product of a column vector ($u_i$) with its transpose ($u_i^T$)
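
The sum of rank-one terms can be assembled directly. This is a minimal sketch assuming NumPy and an illustrative symmetric matrix; `np.linalg.eigh` is the routine intended for symmetric input and returns orthonormal eigenvectors as columns.

```python
import numpy as np

# Illustrative symmetric matrix (an assumption for this sketch).
A = np.array([[2.0, 1.0],
              [1.0, 2.0]])

# eigh returns eigenvalues in ascending order and orthonormal eigenvectors.
eigenvalues, U = np.linalg.eigh(A)

# One rank-one term lambda_i * u_i u_i^T per eigenpair.
terms = [lam * np.outer(U[:, i], U[:, i]) for i, lam in enumerate(eigenvalues)]
reconstructed = sum(terms)

ranks = [int(np.linalg.matrix_rank(t)) for t in terms]
print(np.allclose(A, reconstructed), ranks)
```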

Matrix Form of the Spectral Decomposition

- The spectral decomposition can be written in matrix form as $A = Q \Lambda Q^T$, where $Q$ is an orthogonal matrix whose columns are the orthonormal eigenvectors of $A$, and $\Lambda$ is a diagonal matrix with the eigenvalues of $A$ on its main diagonal

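- Example: If $A$ is a $2 \times 2$ symmetric matrix, $Q = \begin{pmatrix} u_1 & u_2 \end{pmatrix}$, and $\Lambda = \begin{pmatrix} \lambda_1 & 0 \\ 0 & \lambda_2 \end{pmatrix}$, then $A = Q \Lambda Q^T$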

- If $A$ is not symmetric, the spectral decomposition theorem does not apply directly. However, a similar decomposition can be obtained using the singular value decomposition (SVD)
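
As a worked illustration of the matrix form, take the symmetric matrix $A = \begin{pmatrix} 2 & 1 \\ 1 & 2 \end{pmatrix}$, chosen here for illustration. Its eigenvalues are 3 and 1, with orthonormal eigenvectors $\tfrac{1}{\sqrt{2}}(1, 1)^T$ and $\tfrac{1}{\sqrt{2}}(1, -1)^T$, so

$$
Q = \frac{1}{\sqrt{2}} \begin{pmatrix} 1 & 1 \\ 1 & -1 \end{pmatrix}, \qquad \Lambda = \begin{pmatrix} 3 & 0 \\ 0 & 1 \end{pmatrix}
$$

$$
Q \Lambda Q^T = 3 \cdot \frac{1}{2} \begin{pmatrix} 1 & 1 \\ 1 & 1 \end{pmatrix} + 1 \cdot \frac{1}{2} \begin{pmatrix} 1 & -1 \\ -1 & 1 \end{pmatrix} = \begin{pmatrix} 2 & 1 \\ 1 & 2 \end{pmatrix} = A
$$

The middle expression is the same sum of rank-one terms $\lambda_i u_i u_i^T$ that the theorem describes; the matrix form simply packages it into three factors.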

Geometric and Algebraic Interpretation of Spectral Decomposition

Geometric Interpretation

- Geometrically, the spectral decomposition $A = Q \Lambda Q^T$ acts on a vector in three steps: $Q^T$ expresses the vector in coordinates along the eigenvectors, $\Lambda$ scales each coordinate by its corresponding eigenvalue, and $Q$ maps the result back to the standard basis

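- Example: If $\Lambda = \begin{pmatrix} 2 & 0 \\ 0 & 3 \end{pmatrix}$, the standard basis vectors $e_1$ and $e_2$ are mapped by $Q$ to the eigenvectors $u_1$ and $u_2$, which $A$ then scales by the eigenvalues 2 and 3, respectively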

- The eigenvectors represent the principal directions or axes of the transformation, while the eigenvalues represent the scaling factors along these axes

- Example: In a 2D transformation, the eigenvectors may represent the directions of stretching or compression, while the eigenvalues indicate the amount of stretching or compression along those directions
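
The geometric picture can be traced step by step for a single vector. The sketch below assumes NumPy, an illustrative symmetric matrix, and an arbitrary test vector; it changes to eigenvector coordinates, scales along each axis, and changes back, then compares the result with applying the matrix directly.

```python
import numpy as np

# Illustrative symmetric matrix and test vector (assumptions for this sketch).
A = np.array([[2.0, 1.0],
              [1.0, 2.0]])
x = np.array([3.0, -1.0])

eigenvalues, Q = np.linalg.eigh(A)  # columns of Q are the principal axes

coords = Q.T @ x               # coordinates of x along the eigenvector axes
scaled = eigenvalues * coords  # stretch each coordinate by its eigenvalue
result = Q @ scaled            # express the result in standard coordinates

print(np.allclose(result, A @ x))
```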

Algebraic Interpretation

- Algebraically, the spectral decomposition expresses a matrix as a linear combination of rank-one matrices, each of which represents a specific contribution to the overall transformation

- Example: In the spectral decomposition $A = \lambda_1 u_1 u_1^T + \lambda_2 u_2 u_2^T$, each term $\lambda_i u_i u_i^T$ represents a rank-one matrix contributing to the transformation described by $A$

- The magnitude of each eigenvalue indicates the significance of its corresponding eigenvector in the transformation. Eigenvalues of larger magnitude have a more significant impact on the transformation than those of smaller magnitude

- Example: If $|\lambda_1|$ is much larger than $|\lambda_2|$, the transformation described by $A$ is primarily determined by the eigenvector corresponding to $\lambda_1$, as it has a much larger scaling factor

- The spectral decomposition provides insight into the underlying structure of a matrix and can be used to analyze properties such as matrix powers, exponentials, and functions of matrices

- Example: The matrix exponential $e^A$ can be easily computed using the spectral decomposition as $e^A = Q e^{\Lambda} Q^T$, where $e^{\Lambda}$ is a diagonal matrix with the exponentials of the eigenvalues on its main diagonal