Systematic Ethical Problem-Solving

Importance of Structured Approaches

Ethical problem-solving is a structured process for analyzing and resolving moral dilemmas in a consistent, justifiable way. Without a clear method, it's easy to rush to judgment, overlook key details, or let personal bias drive the outcome.

A systematic approach helps in several ways:

- Promotes thorough analysis of the ethical issue, so you don't skip over important considerations

- Supports transparency and accountability, because you can clearly communicate why you reached a particular decision

- Maintains trust among the people affected, since they can see the reasoning behind your conclusions

- Provides a reusable framework for addressing new or unfamiliar dilemmas, so you're not starting from scratch every time

Structured approaches also help you apply ethical principles consistently across different situations rather than making ad hoc judgments that shift depending on your mood or the pressure you're under.

Organizational Benefits

When organizations adopt ethical problem-solving frameworks consistently, the effects go beyond individual decisions:

- Culture: Employees begin to prioritize ethical considerations as a normal part of their work, not an afterthought. A shared framework creates a shared language around the organization's values.

- Reputation: Demonstrating a commitment to ethical conduct builds stakeholder trust and reduces the risk of scandals or ethical lapses that can cause lasting damage.

- Skill development: Incorporating these strategies into training programs equips employees with concrete tools for navigating ethical challenges, which strengthens critical thinking and moral awareness across the organization.

Ethical Frameworks: Comparison and Contrast

Models and Frameworks

Several models have been developed to guide ethical decision-making. While they differ in structure and emphasis, most share a common logic: identify the problem, gather information, evaluate options, and act.

Two widely referenced models:

The IDEA Model walks through four stages:

- Identify the ethical issue

- Determine the relevant facts and stakeholders

- Evaluate your options using ethical principles

- Act on the course of action you've chosen

The Potter Box Model approaches decisions through four dimensions:

- Define the situation (what are the facts?)

- Identify the values at play

- Apply ethical principles

- Determine where your loyalties lie

Most models also encourage you to draw on multiple ethical theories when evaluating your options:

- Utilitarianism asks which action produces the greatest overall well-being or happiness

- Deontology focuses on whether the action fulfills moral duties and follows moral rules, regardless of the outcome

- Virtue ethics shifts the focus to the character of the decision-maker: What would a person of good moral character do here?

Specific Aspects and Contexts

Beyond these general models, some frameworks zero in on particular dimensions of ethical decision-making:

- Stakeholder theory examines how a decision affects different groups (employees, customers, shareholders, communities) and weighs their competing interests

- Organizational values frameworks align decisions with an organization's stated mission and principles

- Professional codes of ethics offer field-specific guidance, such as the Hippocratic tradition in medicine or codes of conduct in law and engineering

No single model fits every situation perfectly. Comparing their strengths and limitations helps you pick the right tool for the context. A healthcare dilemma involving patient autonomy, for example, may call for a different emphasis than a business decision about environmental sustainability.

Applying Ethical Problem-Solving

Real-World Scenarios and Case Studies

Practicing with real-world scenarios is where ethical reasoning moves from abstract to practical. Case studies let you apply theoretical frameworks to concrete situations and build the skill of spotting ethical issues in messy, real-life contexts.

Case studies come from many fields, and each brings its own complexities:

- Business: Corporate social responsibility, employee rights, environmental sustainability

- Healthcare: Patient autonomy, resource allocation (e.g., who gets a scarce organ transplant?), end-of-life care decisions

- Technology: Data privacy, algorithmic bias, the societal impact of emerging tools like AI

- Public policy: Balancing individual freedoms against collective welfare, distributing limited public resources

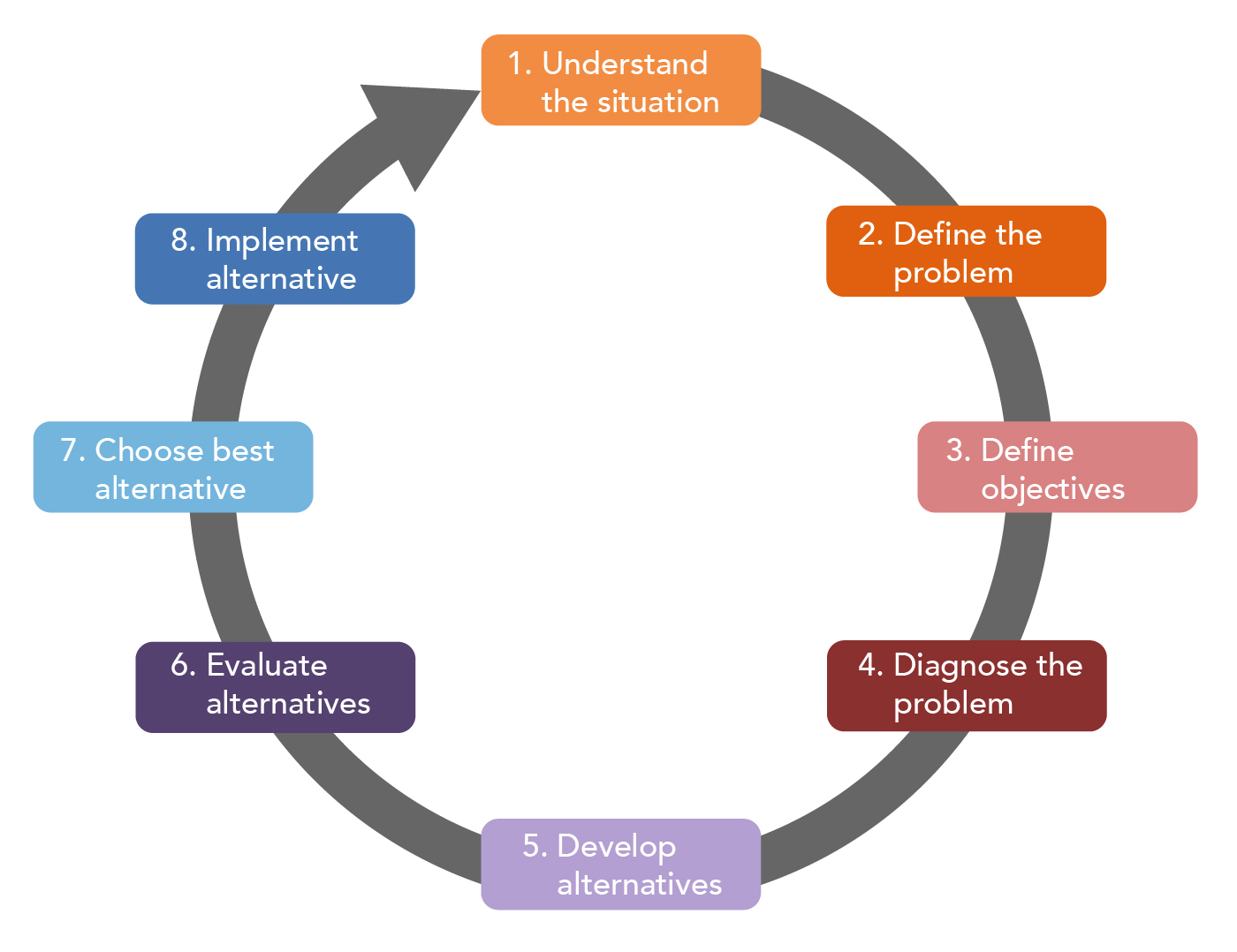

When you work through a case study, follow a clear process:

- Define the ethical problem precisely. Vague problem statements lead to vague analysis.

- Identify all relevant stakeholders and consider their perspectives and interests.

- Gather the facts, including any applicable laws, regulations, or professional standards. Ethical decisions don't happen in a vacuum.

- Generate possible courses of action and evaluate each one using ethical principles.

- Anticipate consequences, both short-term and long-term, for each option.

- Choose and justify your decision, explaining which principles guided you and why.
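The steps above can be treated as a checklist. The following is a minimal Python sketch, not an established tool: the `CaseAnalysis` class, its field names, and the completeness rules (for example, requiring at least two options) are assumptions made for illustration. It only flags steps that have been skipped; it does not judge the quality of the reasoning.

```python
from dataclasses import dataclass, field


@dataclass
class CaseAnalysis:
    """Hypothetical record of one case study; all field names are illustrative."""
    problem: str = ""
    stakeholders: list[str] = field(default_factory=list)
    facts: list[str] = field(default_factory=list)
    options: list[str] = field(default_factory=list)
    # Maps each option to its anticipated short- and long-term consequences.
    consequences: dict[str, str] = field(default_factory=dict)
    decision: str = ""
    justification: str = ""

    def missing_steps(self) -> list[str]:
        """Return the steps that are still incomplete, in process order."""
        checks = [
            ("define the problem", bool(self.problem.strip())),
            ("identify stakeholders", bool(self.stakeholders)),
            ("gather the facts", bool(self.facts)),
            # Assumption: a real choice needs at least two options.
            ("generate options", len(self.options) >= 2),
            ("anticipate consequences",
             bool(self.options)
             and all(o in self.consequences for o in self.options)),
            ("choose and justify",
             self.decision in self.options
             and bool(self.justification.strip())),
        ]
        return [name for name, done in checks if not done]


# Hypothetical, partially completed analysis.
analysis = CaseAnalysis(
    problem="Should the team ship a feature that collects location data by default?",
    stakeholders=["users", "employees", "shareholders"],
    options=["ship with opt-out", "ship with opt-in"],
)
print(analysis.missing_steps())
# ['gather the facts', 'anticipate consequences', 'choose and justify']
```

Keeping the steps in a fixed order makes it harder to jump to a decision before the facts and consequences have been written down.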

Evaluation and Critical Thinking

Working through case studies does more than test your knowledge of frameworks. It reveals the practical trade-offs that different ethical theories produce. A utilitarian analysis might point you toward one action, while a deontological analysis points somewhere else entirely. Sitting with that tension is part of developing stronger moral reasoning.
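A toy Python sketch can make this divergence concrete. Everything in it is an assumption for illustration: the two options, the well-being numbers, and the `breaks_duty` flag are invented, and real utilitarian or deontological analysis cannot be reduced to a sum or a yes/no flag.

```python
# Hypothetical options; all numbers are invented for illustration only.
# "effects" gives an assumed change in well-being for each stakeholder group.
options = {
    "disclose the defect now": {
        "effects": {"customers": 3, "employees": -1, "shareholders": -2},
        "breaks_duty": False,
    },
    "delay disclosure until a fix ships": {
        "effects": {"customers": 1, "employees": 1, "shareholders": 2},
        "breaks_duty": True,  # assumed to violate a duty of honesty
    },
}


def utilitarian_choice(options):
    """Pick the option with the highest total well-being across stakeholders."""
    return max(options, key=lambda name: sum(options[name]["effects"].values()))


def deontological_choices(options):
    """Keep only options that break no moral duty, regardless of outcome."""
    return [name for name, o in options.items() if not o["breaks_duty"]]


print(utilitarian_choice(options))     # delay disclosure until a fix ships
print(deontological_choices(options))  # ['disclose the defect now']
```

Under these made-up numbers the two lenses recommend different actions, which is the kind of tension a case study asks you to reason through rather than resolve mechanically.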

Discussing cases with others is especially valuable. Collaborative debate exposes you to perspectives you might not have considered, challenges assumptions you didn't realize you held, and sharpens the clarity of your arguments. You don't have to agree with every viewpoint you encounter, but engaging seriously with alternative reasoning will make your own thinking more rigorous.

Critical Thinking for Ethical Dilemmas

Key Skills and Abilities

Three interrelated skills form the core of ethical reasoning:

Critical thinking means carefully analyzing arguments, evidence, and assumptions before reaching conclusions. In practice, this looks like:

- Questioning the logic behind ethical claims and justifications

- Assessing the strength of evidence supporting different positions

- Recognizing biases (your own and others') that may be shaping the analysis

Moral reasoning is the ability to identify which ethical principles apply to a situation and use them coherently. This requires understanding the key tenets of frameworks like utilitarianism, deontology, and virtue ethics well enough to determine which principles are most relevant in a given context.

Decision-making under uncertainty is where things get hardest. Real ethical dilemmas involve competing values, incomplete information, and stakeholders with legitimate but conflicting interests. Effective decision-making means:

- Balancing the rights and concerns of different parties

- Prioritizing the most important ethical values at stake

- Forecasting likely outcomes while acknowledging what you can't predict

Development and Practice

These skills aren't fixed traits that you either have or lack. They develop through deliberate, ongoing practice:

- Analyze regularly. Work through case studies, thought experiments, and current ethical controversies. The more situations you reason through, the sharper your instincts become.

- Reflect on your own thinking. Examine your moral beliefs, notice where your biases show up, and ask yourself whether your reasoning would hold up if someone challenged it.

- Embrace ambiguity. Many ethical dilemmas don't have clean answers. Resisting the urge to oversimplify is itself a sign of mature moral reasoning.

- Engage in dialogue. Discuss ethical questions with peers, colleagues, or mentors. Actively listen to opposing views, and be willing to revise your position when the reasoning warrants it.

The goal isn't to become certain about every moral question. It's to become more thoughtful, more consistent, and more capable of explaining why you believe what you believe.