The digital age raises ethical challenges that don't fit neatly into traditional moral frameworks. As technologies like AI, mass surveillance, and big data reshape daily life, they create new tensions between innovation and individual rights. Understanding these tensions is central to applied ethics today, because the decisions made now about technology governance will shape civil liberties, equality, and democracy for decades.

Ethical Implications of Emerging Technologies

Privacy and Security Concerns

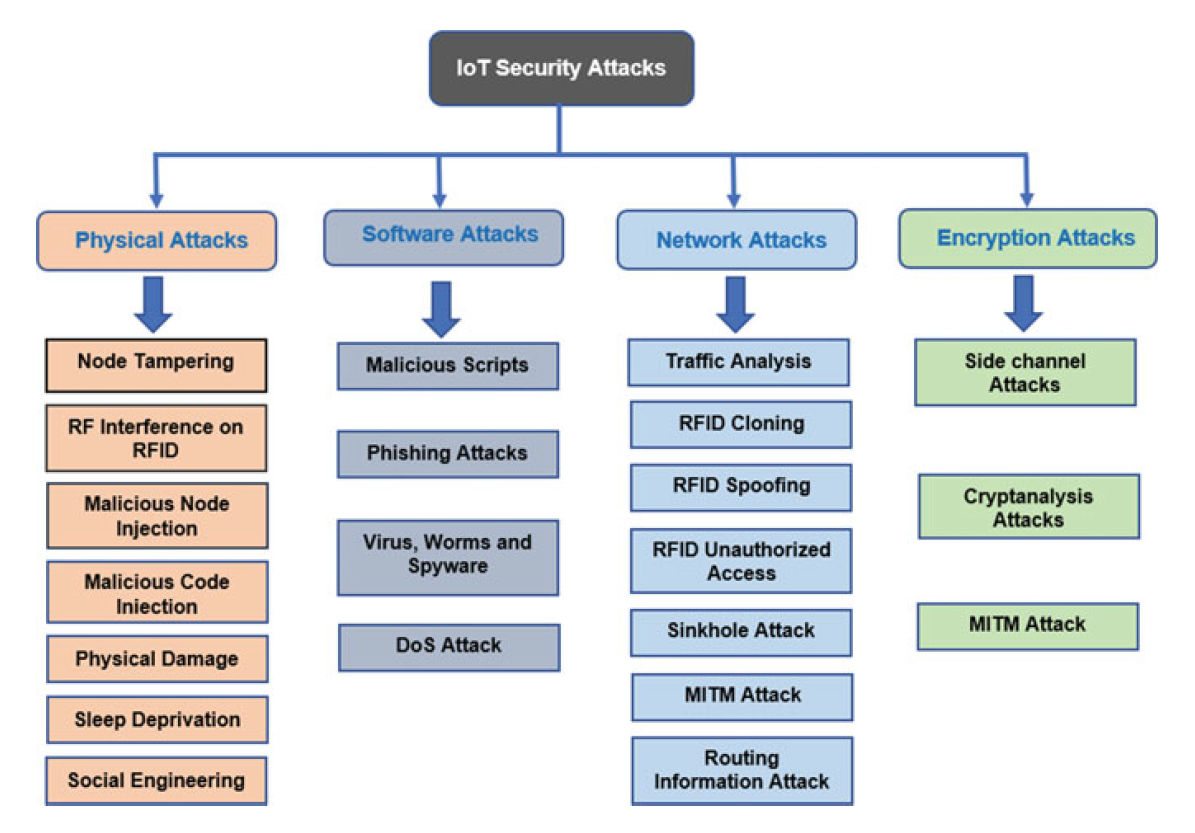

Technologies like artificial intelligence, big data analytics, and the Internet of Things (IoT) can collect, process, and share enormous amounts of personal data. This creates real risks:

- Unauthorized access and data breaches expose sensitive information. The 2017 Equifax breach, for example, compromised the personal data of roughly 147 million people.

- Sensitive categories of data raise the stakes further. Genetic information and biometric data (fingerprints, facial scans) are permanent; you can change a password, but you can't change your fingerprint. Misuse of this data could enable discrimination based on genetic predispositions or physical characteristics.

- The sheer volume of data collected often exceeds what users understand or consent to, creating an asymmetry of knowledge between individuals and the organizations holding their data.

Impact on Civil Liberties and Individual Rights

- Facial recognition used by governments and corporations can enable mass surveillance and tracking. This potentially infringes on rights to privacy and freedom of movement, and it could be used to suppress political dissent or social movements.

- Platform concentration is another concern. A handful of technology companies now mediate communication, commerce, and social interaction for billions of people. That concentration of power gives these companies significant influence over public discourse and access to information, which can undermine individual autonomy and democratic processes.

- Regulatory lag is a persistent problem. The rapid pace of technological change regularly outpaces the development of legal and ethical frameworks. This leaves individuals vulnerable to harms that existing laws weren't designed to address, so regulations need continual updating.

Bias and Discrimination in Algorithmic Decision-Making

Algorithmic systems are now used to make or inform high-stakes decisions in hiring, lending, criminal sentencing, and more. The core problem: these systems learn from historical data, and historical data often reflects existing social inequalities.

- A well-known example is the COMPAS recidivism algorithm. Studies found it was more likely to falsely flag Black defendants as high-risk than white defendants. The algorithm didn't use race as an input, but it picked up on proxy variables correlated with race.

- In hiring, Amazon abandoned an experimental AI recruiting tool, as reported in 2018, after discovering it systematically downgraded résumés from women. The tool had been trained on a decade of résumés submitted to the company, most of which came from men.

These cases illustrate how algorithms can reinforce and amplify existing power imbalances rather than correct them. The ethical question isn't just whether AI can make these decisions, but whether it should, and what level of human oversight is required.
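To make the COMPAS finding more concrete: "falsely flag" refers to the false positive rate, the share of people who did not go on to reoffend but were still labeled high-risk. The minimal Python sketch below computes that rate separately for each group. The `Record` type, the `toy_group` helper, the group labels, and all counts are hypothetical toy values chosen for illustration; they are not COMPAS data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    group: str                 # hypothetical group label
    predicted_high_risk: bool  # the algorithm's label
    reoffended: bool           # the observed outcome


def false_positive_rate(records, group):
    """Share of people in `group` who did NOT reoffend but were flagged high-risk."""
    negatives = [r for r in records if r.group == group and not r.reoffended]
    if not negatives:
        return float("nan")  # undefined when the group has no non-reoffenders
    flagged = sum(r.predicted_high_risk for r in negatives)
    return flagged / len(negatives)


def toy_group(group, flagged_neg, total_neg, flagged_pos, total_pos):
    """Build hypothetical records with the given counts (illustration only)."""
    records = []
    records += [Record(group, True, False)] * flagged_neg
    records += [Record(group, False, False)] * (total_neg - flagged_neg)
    records += [Record(group, True, True)] * flagged_pos
    records += [Record(group, False, True)] * (total_pos - flagged_pos)
    return records


# Hypothetical counts: the model catches 7 of 10 reoffenders in both groups,
# but wrongly flags twice as many non-reoffenders in group "A".
records = toy_group("A", 4, 10, 7, 10) + toy_group("B", 2, 10, 7, 10)

for g in ("A", "B"):
    print(g, false_positive_rate(records, g))  # prints "A 0.4", then "B 0.2"
```

In this toy setup the model looks equally good at catching reoffenders in both groups, yet its errors fall unevenly. That is one reason bias audits often break error rates down by group instead of relying on a single overall accuracy figure.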

Responsibilities of Tech Companies

Ensuring Ethical Design and Deployment

Technology companies bear responsibility for the products they release into the world. "Ethical design" means considering potential impacts on individuals and society before deployment, not just after problems surface.

- Companies should prioritize fairness, transparency, and accountability throughout the product development cycle.

- Investing in ethical AI research matters. This includes engaging diverse stakeholders early in the design process to identify potential biases and harms that a homogeneous team might miss.

- "Move fast and break things" is a poor motto when the things being broken are people's rights. Responsible innovation requires slowing down enough to assess consequences.

Transparency and Accountability in Data Practices

Users deserve to know what's happening with their data. In practice, this means:

- Clear communication about how data is collected, used, and shared. Privacy policies written in dense legalese don't count as genuine transparency.

- Robust security measures to protect user data from breaches and unauthorized access.

- Meaningful user control over personal data, including the ability to access, correct, and delete information, and to opt out of data collection or sharing where appropriate. The EU's General Data Protection Regulation (GDPR), which took effect in 2018, codified many of these rights into law.

- Transparent handling of government data requests. Companies should have clear policies for responding to government requests for user data and should publish transparency reports detailing the scope and frequency of such requests.

Stakeholder Engagement and Collaboration

No single actor can solve these problems alone. Effective technology governance requires multistakeholder collaboration involving industry, government, academia, and civil society.

- Companies should conduct regular audits of their algorithms and decision-making systems to identify biases and unintended harms.

- Ongoing dialogue with users, policymakers, and civil society organizations helps companies understand ethical concerns they might otherwise overlook.

- Regulatory frameworks developed through broad collaboration are more likely to balance innovation with public interest effectively.
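One common starting point for an algorithmic audit in hiring is to compare selection rates across groups. Under the "four-fifths rule" in the U.S. Uniform Guidelines on Employee Selection Procedures, a group whose selection rate is below 80% of the highest group's rate is generally treated as evidence of possible adverse impact. The Python sketch below applies that check. The function names and applicant counts are hypothetical, and a real audit would examine more than this one metric.

```python
def selection_rates(outcomes):
    """Map each group to selected / applicants."""
    return {g: selected / applicants for g, (selected, applicants) in outcomes.items()}


def impact_ratios(outcomes):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group has any selections; ratios are undefined")
    return {g: rate / highest for g, rate in rates.items()}


def flagged_groups(outcomes, threshold=0.8):
    """Groups whose impact ratio falls below the four-fifths threshold."""
    return sorted(g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold)


# Hypothetical hiring outcomes: group -> (selected, applicants)
outcomes = {"group_x": (50, 100), "group_y": (30, 100)}

print(impact_ratios(outcomes))   # group_y's ratio is about 0.6
print(flagged_groups(outcomes))  # ['group_y']
```

The four-fifths rule is a screening heuristic, not a finding of discrimination; with small applicant pools, random variation alone can push a group above or below the threshold.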

Regulating Technology and Ethical Frameworks

Challenges of Regulating Rapidly Evolving Technologies

Regulation struggles to keep pace with technology for several reasons:

- Speed of innovation. By the time regulators understand a technology well enough to write rules, the technology may have already evolved or been replaced.

- Jurisdictional complexity. Technology platforms operate globally, but legal and cultural norms around privacy, security, and individual rights vary widely between countries. The EU's GDPR, for instance, takes a much stricter approach to data protection than U.S. federal law. International cooperation and harmonization of regulatory approaches remain difficult but necessary.

- Technical complexity. Modern AI and data systems are often opaque even to their creators (the "black box" problem). Regulators need technical expertise and new tools, such as algorithmic auditing and data access rights, to effectively oversee these systems.

Role of Ethical Frameworks in Guiding Technological Development

Traditional ethical frameworks offer different lenses for evaluating technology:

- Utilitarianism asks whether a technology produces the greatest good for the greatest number, weighing benefits against harms.

- Deontology focuses on whether the technology respects fundamental rights and duties, regardless of outcomes. Mass surveillance might reduce crime (a utilitarian benefit) but still violate the duty to respect individual privacy (a deontological concern).

- Virtue ethics asks what kind of character and society a technology cultivates. Does constant social media use promote or undermine human flourishing?

Professional codes of ethics also play a role. The ACM Code of Ethics and Professional Conduct and IEEE's Ethically Aligned Design establish shared standards for responsible technology development. These codes promote accountability and integrity, though enforcement remains limited.

A persistent tension exists between the economic and social benefits of innovation and the need for regulation. Policymakers must navigate this carefully, and doing so requires ongoing dialogue between regulators, industry, and the public.

Digital Surveillance and Personal Autonomy

Erosion of Privacy and Civil Liberties

Widespread digital surveillance, including facial recognition, location tracking, and social media monitoring, can produce a chilling effect on personal autonomy. A chilling effect occurs when people self-censor or change their behavior because they believe they're being watched, even if no direct punishment follows. This can deter legitimate political activism and free expression.

- The 2013 revelations by Edward Snowden about the scope and secrecy of NSA surveillance programs brought these concerns into sharp public focus. The programs operated with minimal transparency and limited oversight, raising serious questions about abuse of power.

- Surveillance is also becoming normalized through everyday devices. Smart home assistants, public cameras, and IoT devices continuously collect data on people's activities, gradually eroding expectations of privacy in both public and private spaces.

Disproportionate Impact on Marginalized Communities

Surveillance technologies don't affect everyone equally. Marginalized communities often bear the heaviest burden.

- Authoritarian regimes use surveillance to monitor and suppress dissent. China's deployment of facial recognition to track Uighur Muslims and Iran's monitoring of social media to identify protesters are prominent examples. These technologies can entrench oppressive power structures and stifle democratic movements.

- Predictive policing algorithms in democracies raise similar concerns. These tools typically rely on historical crime data that reflects systemic biases in policing. The result is often over-policing of communities of color and low-income neighborhoods, reinforcing the very patterns the data was drawn from.

- Corporate data collection for targeted advertising creates its own form of surveillance. Micro-targeting based on behavioral data can manipulate consumer choices and exploit psychological vulnerabilities, raising questions about consent and autonomy.

Need for Transparency and Accountability

Both government and corporate surveillance often operate with little public oversight. Individuals frequently have no way to know what data is being collected about them, how it's being used, or how to challenge misuse.

Addressing this requires:

- Robust legal and regulatory frameworks with mechanisms for independent oversight, redress for abuses, and protection of individual rights.

- Greater public awareness about the implications of digital surveillance. Informed civic participation depends on people understanding how their data is collected and used.

- Active roles for media and civil society in investigating and publicizing surveillance practices, holding both governments and corporations accountable.

These aren't problems that will resolve themselves. They require sustained public engagement and a willingness to update ethical and legal frameworks as technology continues to evolve.