Uncertainty in Climate Models

Types of Uncertainty in Climate Projections

Climate model projections face three main types of uncertainty, and each one dominates at a different timescale:

- Scenario uncertainty stems from unpredictable future human activities like greenhouse gas emissions and land-use changes. This is the largest source of uncertainty for projections beyond about 50 years because we simply can't know what policy and technology choices societies will make.

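- Model uncertainty results from limited understanding of climate processes and the simplifications needed to represent them numerically. Different modeling groups make different choices about how to represent the same physics, which leads to a spread in projections even when they use the same emissions scenario. It tends to be a leading contributor at intermediate lead times of a few decades, between the near-term dominance of internal variability and the long-term dominance of scenario uncertainty.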

- Internal variability encompasses natural fluctuations within the climate system that occur independently of external forcings. Think of year-to-year swings in temperature or precipitation. This dominates uncertainty for near-term projections (the next decade or two) but becomes less important over longer timescales as the forced signal grows.
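
The three-way split described above can be made concrete by partitioning the spread of an ensemble into its components. The following is a minimal sketch on synthetic data, not a published method: the scenario signals, model offsets, and noise level are all assumed values chosen to resemble a late-century horizon, and the names `warming` and `partition_variance` are hypothetical.

```python
import random
from statistics import mean, pvariance

random.seed(0)

# Hypothetical toy ensemble of late-century warming (deg C). All values are assumed.
SCENARIO_SIGNAL = {"low": 1.5, "medium": 2.5, "high": 4.0}
MODEL_OFFSET = {"model_a": -0.4, "model_b": 0.0, "model_c": 0.5}
N_MEMBERS = 20
NOISE_SD = 0.15  # assumed standard deviation of internal variability

# warming[scenario][model] is a list of ensemble members.
warming = {
    scenario: {
        model: [signal + offset + random.gauss(0.0, NOISE_SD) for _ in range(N_MEMBERS)]
        for model, offset in MODEL_OFFSET.items()
    }
    for scenario, signal in SCENARIO_SIGNAL.items()
}


def partition_variance(data):
    """Split the ensemble spread into internal, model, and scenario components."""
    # Internal variability: spread across members, averaged over every scenario/model pair.
    internal = mean(
        pvariance(members) for models in data.values() for members in models.values()
    )
    # Model uncertainty: spread across model means within a scenario, averaged over scenarios.
    model = mean(
        pvariance([mean(members) for members in models.values()])
        for models in data.values()
    )
    # Scenario uncertainty: spread across scenarios of the multi-model mean.
    scenario = pvariance(
        [mean(mean(members) for members in models.values()) for models in data.values()]
    )
    return {"internal": internal, "model": model, "scenario": scenario}


components = partition_variance(warming)
total = sum(components.values())
fractions = {name: value / total for name, value in components.items()}
for name, fraction in fractions.items():
    print(f"{name:9s} {fraction:6.1%}")
```

With these assumed inputs the scenario term is the largest share, as expected for a long horizon. Shrinking the gap between the scenario signals (as you would for a near-term horizon) shifts the balance toward the model and internal terms.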

Beyond these three categories, several specific mechanisms drive uncertainty:

- Parameterization of sub-grid scale processes is a major contributor. Processes like cloud formation, convection, and turbulent mixing happen at scales smaller than a model's grid cells, so they must be approximated with simplified equations. These approximations introduce error.

- Feedback mechanisms contribute substantial uncertainty to long-term projections. Cloud feedbacks, aerosol interactions, and carbon cycle responses can either amplify or dampen warming, and models disagree on their strength. Cloud feedbacks alone account for much of the spread in equilibrium climate sensitivity across models.

- Initial condition uncertainty matters most for short-term predictions. Because the climate system is chaotic, small differences in starting conditions can lead to divergent outcomes over weeks to years. For multi-decadal projections, though, the forced response overtakes this source of uncertainty.
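
The sensitivity to starting conditions mentioned above can be illustrated with the Lorenz-63 system, a classic three-variable toy model of chaos. This is only a sketch: Lorenz-63 is not a climate model, and the perturbation size, time step, and run length below are assumptions chosen for illustration.

```python
import math


def lorenz_rhs(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Time derivatives of the Lorenz-63 system with its standard parameters."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """Advance the state by one fourth-order Runge-Kutta step."""
    def shifted(base, slope, scale):
        return tuple(b + scale * s for b, s in zip(base, slope))

    k1 = lorenz_rhs(state)
    k2 = lorenz_rhs(shifted(state, k1, dt / 2))
    k3 = lorenz_rhs(shifted(state, k2, dt / 2))
    k4 = lorenz_rhs(shifted(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def separation_over_time(perturbation=1e-8, dt=0.01, n_steps=4000):
    """Distance between two runs whose initial x values differ by `perturbation`."""
    run_a = (1.0, 1.0, 1.0)
    run_b = (1.0 + perturbation, 1.0, 1.0)
    distances = []
    for _ in range(n_steps):
        run_a, run_b = rk4_step(run_a, dt), rk4_step(run_b, dt)
        distances.append(math.dist(run_a, run_b))
    return distances


distances = separation_over_time()
print(f"separation after first step: {distances[0]:.2e}")
print(f"largest separation reached:  {max(distances):.2e}")
```

The two runs start almost identically, yet their separation grows until it is comparable to the size of the attractor itself. Averaging over many such runs is what lets longer-term statistics remain predictable even when individual trajectories are not.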

Sources of Model Uncertainty

Several practical limitations prevent models from perfectly representing the climate system:

- Incomplete process understanding. Some interactions, like cloud-aerosol feedbacks and ocean-atmosphere coupling, are not fully understood even at a theoretical level.

- Finite resolution. Grid cells in global models are typically 50–100 km across. Fine-scale features like individual thunderstorms, coastal sea breezes, or mountain-valley circulations can't be explicitly resolved and must be parameterized.

- Computational constraints. Higher resolution and more complex process representation require more computing power. Modelers face trade-offs between spatial/temporal resolution, the number of processes included, and how long a simulation can run.

- Data limitations. Observational networks are sparse over oceans, polar regions, and parts of the developing world. Instrumental climate records only extend back about 150 years, which limits how thoroughly models can be validated against long-term trends.

Importance of Model Intercomparisons

Model Intercomparison Projects (MIPs)

Model Intercomparison Projects provide a structured way to compare climate models developed by different research groups around the world. The most prominent is the Coupled Model Intercomparison Project (CMIP), now in its sixth phase (CMIP6), which coordinates dozens of modeling centers running standardized experiments.

MIPs serve several purposes:

- Identifying robust signals. Where multiple independent models agree on a projection (e.g., Arctic amplification of warming), confidence in that result increases. Where they disagree, it flags processes that need more research.

- Evaluating model performance. Standardized experiments allow direct comparison of how well each model reproduces observed climate features like global mean temperature, precipitation patterns, and sea ice extent.

- Supporting policy. CMIP results form the backbone of IPCC assessment reports, which in turn inform international climate negotiations. The multi-model spread from CMIP provides the uncertainty ranges you see in IPCC projections.

Ensemble Simulations and Their Benefits

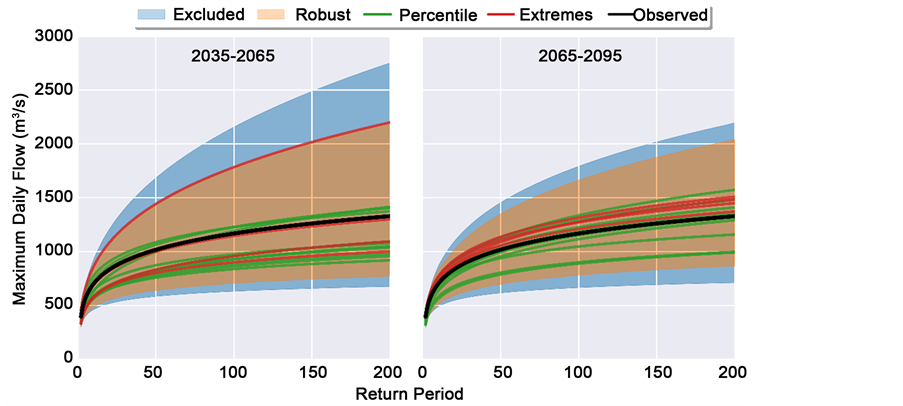

Ensemble simulations involve running a model (or multiple models) many times with varied conditions. There are several types, each targeting a different source of uncertainty:

- Multi-model ensembles combine output from different models to capture structural uncertainty. The ensemble mean tends to outperform any single model at reproducing observed climate patterns because individual model biases partially cancel out.

- Perturbed physics ensembles take a single model and systematically vary its internal parameters (e.g., cloud droplet size thresholds, mixing lengths). This reveals how sensitive the model's projections are to specific parameter choices.

- Initial condition ensembles (also called large ensembles) run the same model many times with slightly different starting states. By averaging across runs, you can separate the forced climate change signal from internal variability. This is critical for attribution studies that ask whether an observed trend is due to human influence or natural fluctuation.
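
To see why averaging across an initial condition ensemble isolates the forced signal, consider the following sketch. It uses synthetic data only: each hypothetical member is an assumed linear forced trend plus independent random noise standing in for internal variability, which is far simpler than real model output.

```python
import math
import random
from statistics import mean, pstdev

random.seed(1)

N_MEMBERS, N_YEARS = 40, 50
TREND = 0.03    # assumed forced warming, deg C per year
NOISE_SD = 0.2  # assumed internal variability, deg C

# Every member shares the forced trend but has its own noise realization.
members = [
    [TREND * year + random.gauss(0.0, NOISE_SD) for year in range(N_YEARS)]
    for _ in range(N_MEMBERS)
]

# Ensemble mean per year estimates the forced response.
forced_estimate = [mean(m[year] for m in members) for year in range(N_YEARS)]
# Ensemble spread per year estimates internal variability.
internal_sd = [pstdev([m[year] for m in members]) for year in range(N_YEARS)]


def rms(values):
    """Root mean square of a sequence."""
    return math.sqrt(mean(v * v for v in values))


true_signal = [TREND * year for year in range(N_YEARS)]
single_error = rms([members[0][y] - true_signal[y] for y in range(N_YEARS)])
mean_error = rms([forced_estimate[y] - true_signal[y] for y in range(N_YEARS)])

print(f"RMS error of one member vs forced signal:  {single_error:.3f}")
print(f"RMS error of ensemble mean vs forced signal: {mean_error:.3f}")
print(f"average ensemble spread (internal variability): {mean(internal_sd):.3f}")
```

Because the noise is independent across members in this toy setup, the error of the ensemble mean shrinks roughly with the square root of the number of members, which is the motivation for running large ensembles.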

Evaluating Climate Model Performance

Comparison with Observational Data

Model evaluation centers on comparing simulated output against historical observations. The key variables assessed include global and regional temperature patterns, precipitation distributions, and large-scale circulation features like jet streams and monsoons.

Several metrics and tools are used:

- Root mean square error (RMSE) is the square root of the mean squared difference between modeled and observed values, so large errors are weighted more heavily than small ones. Lower RMSE indicates better agreement.

- Correlation coefficients assess how well the spatial or temporal patterns in model output match observations. A correlation near 1.0 means the model captures the observed pattern well, even if the absolute values are off.

- Taylor diagrams combine correlation, standard deviation ratio, and RMSE into a single visual summary. They allow quick comparison of how multiple models perform relative to observations for a given variable.

- Pattern correlation specifically evaluates spatial agreement between modeled and observed climate fields, which is useful for assessing whether a model gets regional patterns right.
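
The metrics listed above are straightforward to compute. The sketch below is illustrative only: the `observed` values and the two hypothetical model outputs are made up, and the statistics are unweighted, whereas an evaluation on a real latitude-longitude grid would normally weight each cell by its area.

```python
import math
from statistics import mean


def rmse(model, obs):
    """Root mean square error: square root of the mean squared difference."""
    return math.sqrt(mean((m - o) ** 2 for m, o in zip(model, obs)))


def taylor_statistics(model, obs):
    """Statistics summarized by a Taylor diagram (unweighted)."""
    m_bar, o_bar = mean(model), mean(obs)
    m_anom = [m - m_bar for m in model]
    o_anom = [o - o_bar for o in obs]
    sd_model = math.sqrt(mean(a * a for a in m_anom))
    sd_obs = math.sqrt(mean(a * a for a in o_anom))
    correlation = mean(a * b for a, b in zip(m_anom, o_anom)) / (sd_model * sd_obs)
    # Centered RMSE removes the mean bias before comparing patterns.
    centered_rmse = math.sqrt(mean((a - b) ** 2 for a, b in zip(m_anom, o_anom)))
    return {
        "correlation": correlation,
        "sd_model": sd_model,
        "sd_obs": sd_obs,
        "sd_ratio": sd_model / sd_obs,
        "centered_rmse": centered_rmse,
    }


# Hypothetical regional mean temperatures (deg C) at six locations.
observed = [14.1, 15.3, 13.2, 16.8, 12.5, 15.9]
warm_biased = [value + 2.0 for value in observed]   # right pattern, wrong mean
weak_pattern = [14.5, 14.9, 14.2, 15.4, 14.0, 15.1]  # right shape, damped amplitude

for name, simulated in [("warm_biased", warm_biased), ("weak_pattern", weak_pattern)]:
    stats = taylor_statistics(simulated, observed)
    print(
        f"{name:12s} RMSE={rmse(simulated, observed):.2f} "
        f"r={stats['correlation']:.2f} sd_ratio={stats['sd_ratio']:.2f}"
    )
```

The `warm_biased` case shows why correlation alone is not enough: the pattern matches the observations perfectly, but every value is offset by the same bias, which only RMSE picks up.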

Models are also evaluated on their ability to simulate climate variability modes like the El Niño-Southern Oscillation (ENSO) and the North Atlantic Oscillation (NAO). Getting these right matters because they drive much of the year-to-year climate variability that affects societies.

Advanced Evaluation Techniques

Beyond standard comparisons with the modern observational record, several more sophisticated approaches strengthen model evaluation:

- Paleoclimate simulations test models under very different boundary conditions, such as ice age climates or warm periods like the mid-Pliocene. If a model can reproduce past climates with known forcings, that builds confidence in its physics.

- Process-based evaluation focuses on whether models get the right answer for the right reasons. Rather than just checking if global temperature matches observations, it examines whether specific mechanisms (cloud feedbacks, ocean heat uptake, land-atmosphere coupling) are represented realistically.

- Emergent constraints are relationships found across a multi-model ensemble between an observable present-day quantity and a future projection. For example, if models with a stronger simulated seasonal temperature cycle also tend to have higher climate sensitivity, then the observed seasonal cycle indicates which part of the model range is most consistent with reality, and that relationship can be used to narrow the likely range of future warming.

- Detection and attribution studies test whether models can reproduce observed trends only when human forcings (greenhouse gases, aerosols) are included, and not with natural forcings alone. This is a core method for establishing that observed warming is human-caused.
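
The emergent-constraint approach from the list above boils down to a regression across models followed by a lookup at the observed value. Here is a minimal sketch with entirely hypothetical numbers: the `(observable, projection)` pairs, the `observed_value`, and the helper names are assumptions for illustration, and a real study would also propagate observational uncertainty and test whether the relationship is physically justified.

```python
import math
from statistics import mean, pstdev


def fit_line(points):
    """Ordinary least squares fit; returns (slope, intercept)."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in points)
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


def residual_sd(points, slope, intercept):
    """Scatter of the models around the fitted line (n - 2 degrees of freedom)."""
    squared = sum((y - (intercept + slope * x)) ** 2 for x, y in points)
    return math.sqrt(squared / (len(points) - 2))


# Hypothetical ensemble: (present-day observable, projected warming in deg C) per model.
model_points = [(0.8, 2.4), (1.0, 2.9), (1.2, 3.1), (1.5, 3.8), (1.7, 4.1), (2.0, 4.6)]
observed_value = 1.3  # assumed measurement of the same observable

slope, intercept = fit_line(model_points)
constrained = intercept + slope * observed_value
constrained_sd = residual_sd(model_points, slope, intercept)

projections = [y for _, y in model_points]
unconstrained, unconstrained_sd = mean(projections), pstdev(projections)

print(f"unconstrained: {unconstrained:.2f} +/- {unconstrained_sd:.2f} deg C")
print(f"constrained:   {constrained:.2f} +/- {constrained_sd:.2f} deg C")
```

The constrained spread is smaller than the raw model spread only because these made-up points lie close to a line. If the across-model relationship is weak, the observation adds little information.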

Sensitivity Analysis of Model Uncertainties

Techniques for Sensitivity Analysis

Sensitivity analysis systematically varies model parameters or inputs to determine which ones most strongly affect the output. Two broad approaches exist:

- One-at-a-time (OAT) analysis holds all parameters constant except one, which is varied across its plausible range. This is straightforward and identifies the most influential individual parameters, but it misses interactions between parameters.

- Global sensitivity analysis varies all parameters simultaneously and uses statistical methods (such as Sobol indices) to decompose the total output variance into contributions from individual parameters and their interactions. This captures non-linear effects that OAT analysis misses, but it's computationally expensive.
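
The difference between the two approaches shows up clearly on a toy function with an interaction term. The sketch below is an assumption-laden illustration rather than a production tool: `toy_model` is a made-up response, the inputs are assumed uniform on [-1, 1], and the Sobol indices are estimated with a plain Monte Carlo pick-and-freeze scheme (Saltelli-style first-order and Jansen-style total-order estimators). Dedicated sensitivity analysis libraries offer more efficient sampling.

```python
import random
from statistics import pvariance


def toy_model(x):
    """Hypothetical response: x[0] acts alone, x[1] and x[2] only act together."""
    return x[0] + x[1] * x[2]


def oat_ranges(func, baseline, low, high, n_points=11):
    """One-at-a-time: output range when each input is swept with the others fixed."""
    ranges = []
    for i in range(len(baseline)):
        outputs = []
        for k in range(n_points):
            x = list(baseline)
            x[i] = low + (high - low) * k / (n_points - 1)
            outputs.append(func(x))
        ranges.append(max(outputs) - min(outputs))
    return ranges


def sobol_indices(func, n_params, low, high, n_samples=20000, seed=0):
    """Monte Carlo estimates of first-order and total-order Sobol indices."""
    rng = random.Random(seed)

    def draw():
        return [rng.uniform(low, high) for _ in range(n_params)]

    a = [draw() for _ in range(n_samples)]
    b = [draw() for _ in range(n_samples)]
    f_a = [func(x) for x in a]
    f_b = [func(x) for x in b]
    total_var = pvariance(f_a + f_b)
    first, total = [], []
    for i in range(n_params):
        # Rows of A with column i replaced by the matching entry from B.
        f_ab = [
            func(row_a[:i] + [row_b[i]] + row_a[i + 1:])
            for row_a, row_b in zip(a, b)
        ]
        v_first = sum(fb * (fab - fa) for fa, fb, fab in zip(f_a, f_b, f_ab)) / n_samples
        v_total = sum((fa - fab) ** 2 for fa, fab in zip(f_a, f_ab)) / (2 * n_samples)
        first.append(v_first / total_var)
        total.append(v_total / total_var)
    return first, total


oat = oat_ranges(toy_model, baseline=[0.0, 0.0, 0.0], low=-1.0, high=1.0)
first_order, total_order = sobol_indices(toy_model, n_params=3, low=-1.0, high=1.0)

print("OAT output ranges:  ", [round(r, 2) for r in oat])
print("first-order indices:", [round(s, 2) for s in first_order])
print("total-order indices:", [round(s, 2) for s in total_order])
```

With the baseline at zero, the OAT sweep reports no effect at all from the second and third inputs, because each one matters only when the other is nonzero. Their first-order Sobol indices are also near zero, but the total-order indices reveal that the interaction accounts for about a quarter of the output variance in this toy case.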

A key metric that emerges from sensitivity analysis is equilibrium climate sensitivity (ECS), defined as the global mean surface temperature increase resulting from a sustained doubling of atmospheric CO₂. Current estimates place the likely range of ECS between roughly 2.5°C and 4.0°C (per IPCC AR6), and much of that range reflects uncertainty in cloud feedbacks.

Perturbed parameter ensembles are a practical application of sensitivity analysis. By generating many model versions with different parameter combinations, they produce probabilistic projections rather than single best-guess estimates.
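
As a rough illustration of how a perturbed parameter ensemble turns parameter uncertainty into a probabilistic projection, the sketch below uses a zero-dimensional energy balance relation in which equilibrium warming equals the forcing divided by the net feedback strength. Everything here is assumed for illustration: the forcing value `F_2X`, the sampled `LAMBDA_RANGE`, the uniform sampling, and the function name `run_toy_model`. A real perturbed parameter ensemble varies many parameters inside a full climate model.

```python
import random
from statistics import median, quantiles

F_2X = 3.7                 # assumed forcing from doubled CO2, W m^-2 (illustrative)
LAMBDA_RANGE = (0.9, 1.5)  # assumed range of net feedback strength, W m^-2 K^-1
N_VERSIONS = 5000          # number of perturbed "model versions"


def run_toy_model(feedback_strength):
    """Equilibrium warming (deg C) of a zero-dimensional energy balance model."""
    return F_2X / feedback_strength


rng = random.Random(42)
# Each ensemble member is the toy model run with a different parameter value.
ensemble = [run_toy_model(rng.uniform(*LAMBDA_RANGE)) for _ in range(N_VERSIONS)]

# quantiles(..., n=20) returns 19 cut points at 5%, 10%, ..., 95%.
cuts = quantiles(ensemble, n=20)
p05, p95 = cuts[0], cuts[-1]

print(f"median warming: {median(ensemble):.2f} deg C")
print(f"5th to 95th percentile: {p05:.2f} to {p95:.2f} deg C")
```

The output is a distribution rather than a single number. Note that the result depends directly on the assumed parameter range and sampling distribution, which is why the choice of priors is itself a source of uncertainty in this kind of ensemble.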

Applications and Insights from Sensitivity Analysis

- Prioritizing model development. Sensitivity analysis reveals which parameters and processes contribute most to projection uncertainty, directing research effort where it will have the greatest impact.

- Identifying non-linear behavior and tipping points. Some parameter combinations push the model into qualitatively different states, such as abrupt shifts in ocean circulation or rapid ice sheet collapse. These high-impact, low-probability outcomes are especially important for risk assessment.

- Quantifying projection uncertainty. Sensitivity analysis produces ranges and probability distributions for future climate variables (temperature, precipitation, sea level), which feed directly into adaptation planning.

- Guiding model tuning. Results help modelers decide which parameters to adjust during calibration and how to balance model complexity against computational cost.

Implications of Model Uncertainties

Impact on Climate Change Adaptation Strategies

Uncertainty doesn't mean ignorance. It means planners need to work with ranges of possible outcomes rather than single predictions.

- Risk-based planning considers multiple plausible future scenarios rather than relying on a single "best estimate." For example, a water management plan might prepare for both increased drought frequency and more intense flooding, since models project both are possible depending on the region and scenario.

- Robust decision-making frameworks seek strategies that perform reasonably well across a wide range of climate outcomes, rather than optimizing for one specific projection.

- The uncertainty cascade describes how uncertainties compound as you move from global emissions scenarios to global climate response to regional impacts to local decisions. A modest spread in global temperature projections can translate into a much larger spread in local rainfall projections, which in turn creates wide uncertainty in crop yield estimates.

- Effective adaptation strategies build in flexibility: monitoring systems, decision triggers, and adjustment mechanisms that allow plans to be updated as new observations and improved projections become available.
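
One simple way to formalize the robust decision-making idea above is a minimax regret comparison: for each strategy, find its worst shortfall relative to the best possible choice in each scenario, then pick the strategy whose worst shortfall is smallest. The sketch below uses an entirely hypothetical cost table (strategy names, scenarios, and costs are made up), and minimax regret is only one of several criteria used in practice.

```python
# Hypothetical total costs (arbitrary units) of each strategy under each climate outcome.
costs = {
    "optimize_for_dry": {"dry": 10, "moderate": 30, "wet": 90},
    "optimize_for_wet": {"dry": 85, "moderate": 35, "wet": 12},
    "flexible_mixed": {"dry": 25, "moderate": 28, "wet": 30},
}


def max_regret_by_strategy(cost_table):
    """Worst-case regret of each strategy across all scenarios."""
    scenarios = next(iter(cost_table.values())).keys()
    # Lowest achievable cost in each scenario, if the outcome were known in advance.
    best_cost = {s: min(row[s] for row in cost_table.values()) for s in scenarios}
    return {
        strategy: max(row[s] - best_cost[s] for s in scenarios)
        for strategy, row in cost_table.items()
    }


def minimax_regret_choice(cost_table):
    """Strategy with the smallest worst-case regret."""
    regrets = max_regret_by_strategy(cost_table)
    return min(regrets, key=regrets.get)


regrets = max_regret_by_strategy(costs)
for strategy, regret in regrets.items():
    print(f"{strategy:17s} worst-case regret = {regret}")
print("robust choice:", minimax_regret_choice(costs))
```

In this made-up table the flexible strategy is never the cheapest option in the dry or wet scenario, yet it is the robust choice because it avoids the large losses the specialized strategies suffer when the climate outcome differs from the one they were optimized for.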

Communication and Policy Implications

- Ensemble projections provide probabilistic information for risk assessments, giving policymakers likelihood ranges rather than false precision. For instance, stating that there is a 66–100% probability of exceeding 1.5°C warming by 2040 is more useful than a single number.

- The precautionary principle supports taking mitigation action even in the face of uncertainty, particularly because some climate changes (ice sheet loss, species extinction) are effectively irreversible.

- Communicating uncertainty effectively is genuinely difficult. Framing matters: saying "scientists are uncertain about how much warming will occur" can be misread as "scientists don't know if warming is happening." Clear communication should emphasize what is well-established alongside what remains uncertain.

- Uncertainty is not a reason for inaction. Many climate signals are robust across models and scenarios, including continued warming, sea level rise, and intensification of heavy precipitation events. Low-regret strategies that provide benefits regardless of which scenario unfolds (energy efficiency, ecosystem restoration, improved early warning systems) are sensible responses even under deep uncertainty.

- Ongoing improvements in observations, computing power, and process understanding continue to narrow uncertainties over time, supporting an adaptive approach to climate policy.