Cholesky factorization is a powerful tool for decomposing symmetric positive definite matrices. It's faster than LU factorization and has wide-ranging applications in linear algebra, optimization, and statistics.

This method breaks down a matrix into the product of a lower triangular matrix and its transpose. It's computationally efficient, numerically stable, and useful for solving linear systems and least-squares problems.

Cholesky Factorization Properties

Decomposition and Characteristics

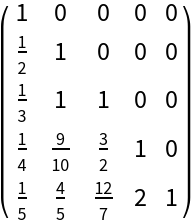

- Cholesky factorization decomposes a symmetric positive definite matrix A into the product of a lower triangular matrix L and its transpose

- Symmetric positive definite matrices have positive eigenvalues and satisfy the property for all non-zero vectors x

- Cholesky factor L remains unique with positive diagonal entries ensuring numerical stability in computations

- Factorization only exists for positive definite matrices making it a useful test for this property

Applications and Efficiency

- Applicable in various fields (linear algebra, optimization, statistical computations)

- Useful for solving systems of linear equations, computing determinants, and generating random samples from multivariate normal distributions

- Computationally efficient requiring approximately floating-point operations for an n × n matrix

- Twice as fast as LU factorization for symmetric positive definite matrices

Cholesky Factorization Implementation

Standard and Blocked Algorithms

- Standard Cholesky algorithm computes lower triangular matrix L column by column using inner products and square roots

- Blocked versions improve cache efficiency on modern computer architectures

- Outer product form updates the entire matrix at each step as an alternative implementation

Variants and Specialized Implementations

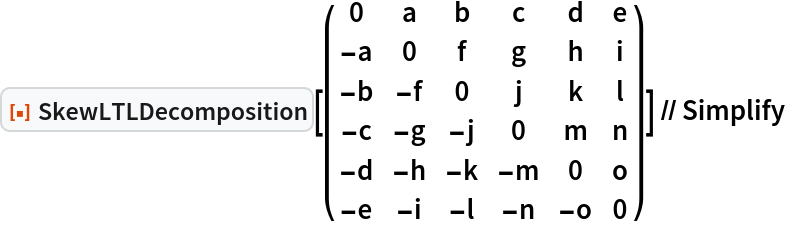

- Modified Cholesky factorization handles symmetric matrices not necessarily positive definite

- Produces factorization of the form , where D represents a diagonal matrix

- Ensures factorization exists even for indefinite matrices useful in optimization algorithms

- Rank-one updates and downdates allow efficient dynamic updates to factorization as underlying matrix changes

- Parallel implementations take advantage of multi-core processors or distributed computing environments

- Sparse Cholesky factorization algorithms exploit sparsity patterns to reduce computational complexity and storage requirements

Applications of Cholesky Factorization

Solving Linear Systems and Least-Squares Problems

- Solves system Ax = b by first solving Ly = b for y, then solving for x

- Solution process involves forward substitution followed by backward substitution, each with complexity

- Applies to least-squares problems of the form using normal equations

- Numerically stable for well-conditioned A in least-squares problems

- Combines with iterative refinement to improve solution accuracy in presence of rounding errors

Optimization and Large-Scale Problems

- Used in optimization problems to solve linear systems arising in Newton's method or interior point methods

- Sparse Cholesky factorization with appropriate ordering techniques reduces computational cost for large, sparse systems

- Efficient for dynamic updates in optimization algorithms through rank-one updates and downdates

Efficiency vs Stability of Cholesky Factorization

Computational Complexity and Stability

- Time complexity for dense matrices more efficient than LU factorization for symmetric positive definite matrices

- Space complexity for storing lower triangular factor L

- Numerically stable without pivoting due to positive definiteness of the matrix

- Relative error in computed Cholesky factor L bounded by , where ε represents machine epsilon and κ(A) condition number of A

- Backward error analysis shows computed Cholesky factor as exact Cholesky factor of slightly perturbed matrix

Performance Considerations

- Accuracy degrades for ill-conditioned matrices but remains more stable than methods like Gaussian elimination

- Block algorithms improve cache efficiency and overall performance on modern computer architectures

- Parallel implementations achieve near-linear speedup on shared-memory systems for sufficiently large matrices