Standardization and Codification

Grammar rules didn't appear out of nowhere. They developed over centuries as societies moved from informal speaking customs to written, codified standards. This history sits at the heart of the prescriptive vs. descriptive debate, because many rules we treat as timeless were actually invented at a specific moment, by specific people, for specific reasons.

Development of Language Standards

Standardization is the process of establishing uniform language practices across regions and social groups. Before standardization, English varied wildly from town to town. What counted as "correct" depended entirely on where you lived.

Codification takes standardization a step further: it means writing the rules down in authoritative reference works. This happened through several key channels:

- Grammar books compiled and explained accepted conventions. Early examples include Robert Lowth's A Short Introduction to English Grammar (1762), which helped entrench many rules still taught today, such as the ban on double negatives.

- Dictionaries fixed spelling and meaning. Samuel Johnson's A Dictionary of the English Language (1755) was one of the first major efforts to standardize English vocabulary.

- Style guides provided specific recommendations for writing in particular contexts. Modern examples like The Chicago Manual of Style carry on this tradition.

A key point: most of this codification happened in the 1700s and 1800s, a period when many grammarians treated Latin as the model for English. Rules like "don't split infinitives" and "don't end a sentence with a preposition" are often traced to that habit. A Latin infinitive is a single word, so it can't be split, and Latin prepositions normally come before their objects. Prescriptivists applied the same logic to English, even though English works very differently.

Impact of Language Standardization

Standardization had real consequences, both positive and problematic:

- It promoted clearer communication across regions by reducing variation in writing.

- It made widespread education and literacy possible by giving teachers a consistent model to work from.

- It supported professional and academic writing with shared norms that readers could rely on.

- It also created a link between language and social status. People who used the "standard" variety were seen as educated and competent, while those who didn't were often judged negatively, regardless of how effectively they communicated.

That last point matters. Standardization didn't just organize language; it created hierarchies of "correct" and "incorrect" that often mapped onto existing class, race, and regional divisions.

Evolution of Language Standards

Standards aren't frozen in place. They shift as the language itself changes:

- Words and constructions once considered errors become accepted over time. Singular "they," for instance, has appeared in English writing since the 14th century, was criticized by prescriptive grammarians from the 1700s onward, and is now widely endorsed by style guides.

- Digital communication (texting, social media, email) has introduced new conventions and pressures on formal standards.

- Descriptive approaches have gained influence in linguistics and education, increasingly balancing the older prescriptive traditions.

- Regional and global varieties of English continue to challenge the idea that any single standard is universal.

Linguistic Purism

Principles of Linguistic Purism

Linguistic purism is the belief that a language should be preserved in its "pure" or "original" form, and that changes to it represent corruption. This impulse has driven language policy in many countries.

Some nations have created official institutions to guard their language. The Académie Française, founded in 1635, still issues rulings on French vocabulary and usage, although its recommendations are advisory rather than legally binding. English has never had an equivalent body, though writers such as Jonathan Swift proposed one in the early 1700s.

One concept to watch for on exams: the etymological fallacy. This is the mistaken assumption that a word's original meaning is its "true" or "correct" meaning. For example, "decimate" originally meant "to kill one in ten" (from the Latin decimare), but today it means "to destroy a large portion of something." Insisting on the Latin meaning ignores how the word is actually used now.

Manifestations of Linguistic Purism

Purism shows up in several recognizable patterns:

- Resistance to loanwords and neologisms. Purists often push back against borrowed words (like English borrowing "café" from French) or newly coined terms, preferring existing native vocabulary.

- Insistence on historical grammar rules. Rules inherited from earlier periods get treated as permanent, even when usage has moved on.

- Framing language change as decay. You'll often hear complaints that "people don't know how to speak properly anymore." This attitude treats any change as a decline rather than a natural process.

- Preference for formal registers. Purists tend to elevate formal, written language over colloquial or spoken forms, treating everyday speech as inherently lesser.

Critiques and Challenges to Purism

Linguists have pushed back against purism on several fronts:

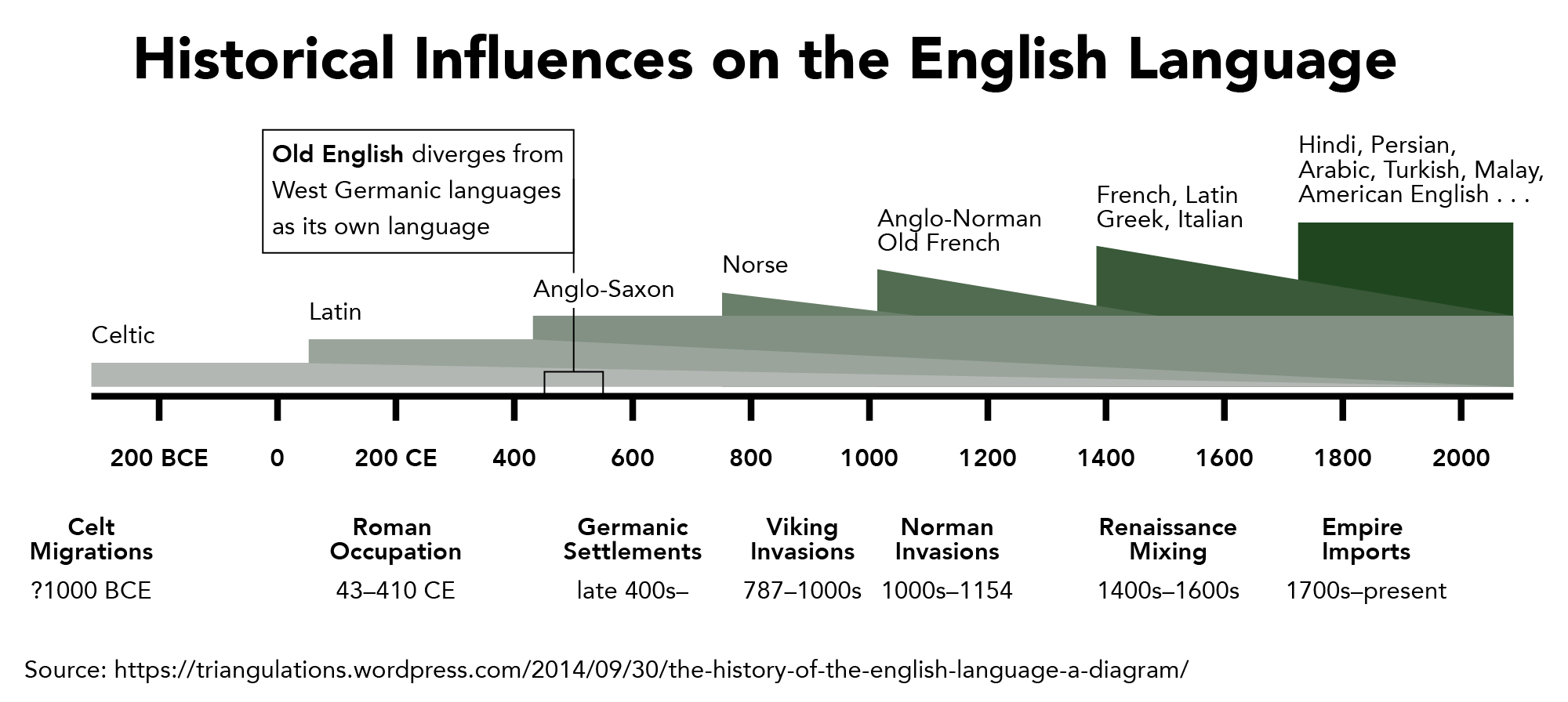

- Descriptive linguists point out that all living languages naturally evolve. Change isn't corruption; it's how language works. Old English is largely unintelligible to modern speakers without study, and no one considers that a tragedy.

- Sociolinguists emphasize that linguistic diversity and variation are normal and valuable. Dialects and vernaculars follow their own consistent rules.

- Pragmatic perspectives recognize that language needs to adapt to new contexts. Scientific discoveries, technological inventions, and cultural shifts all require new vocabulary and sometimes new grammatical structures.

- Globalization and technology continue to reshape language despite purist resistance. English absorbs words from dozens of languages, and digital communication creates new forms faster than any academy could regulate.

The takeaway: understanding linguistic purism helps you tell when a grammar "rule" serves genuine communicative clarity and when it rests on tradition, social prestige, or resistance to change. That distinction is central to the prescriptive vs. descriptive framework.