Dropout and noise-based regularization are widely used techniques for reducing overfitting in deep learning. They work by randomly deactivating neurons or adding noise during training, which approximates training an ensemble of sub-networks and improves generalization.

These methods include dropout, Gaussian noise injection, DropConnect, zoneout, and cutout, and between them they cover fully connected, convolutional, and recurrent architectures. Each technique has its own strengths and trade-offs in implementation effort, computational cost, and the kinds of data it suits.

Understanding Dropout and Noise-Based Regularization

Concept of dropout regularization

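- Dropout randomly deactivates neurons during training and is typically applied to hidden layers

- It prevents overfitting by reducing co-adaptation, where neurons come to depend on the presence of specific other neurons

- Each neuron is dropped (its output set to zero) with probability p, and the outputs of the remaining neurons are scaled by 1/(1-p) so the expected activation is unchanged; at inference, dropout is disabled and all neurons are used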

- Each training step samples a different sub-network with shared weights, which creates an ensemble effect, improves generalization, and reduces reliance on any specific feature

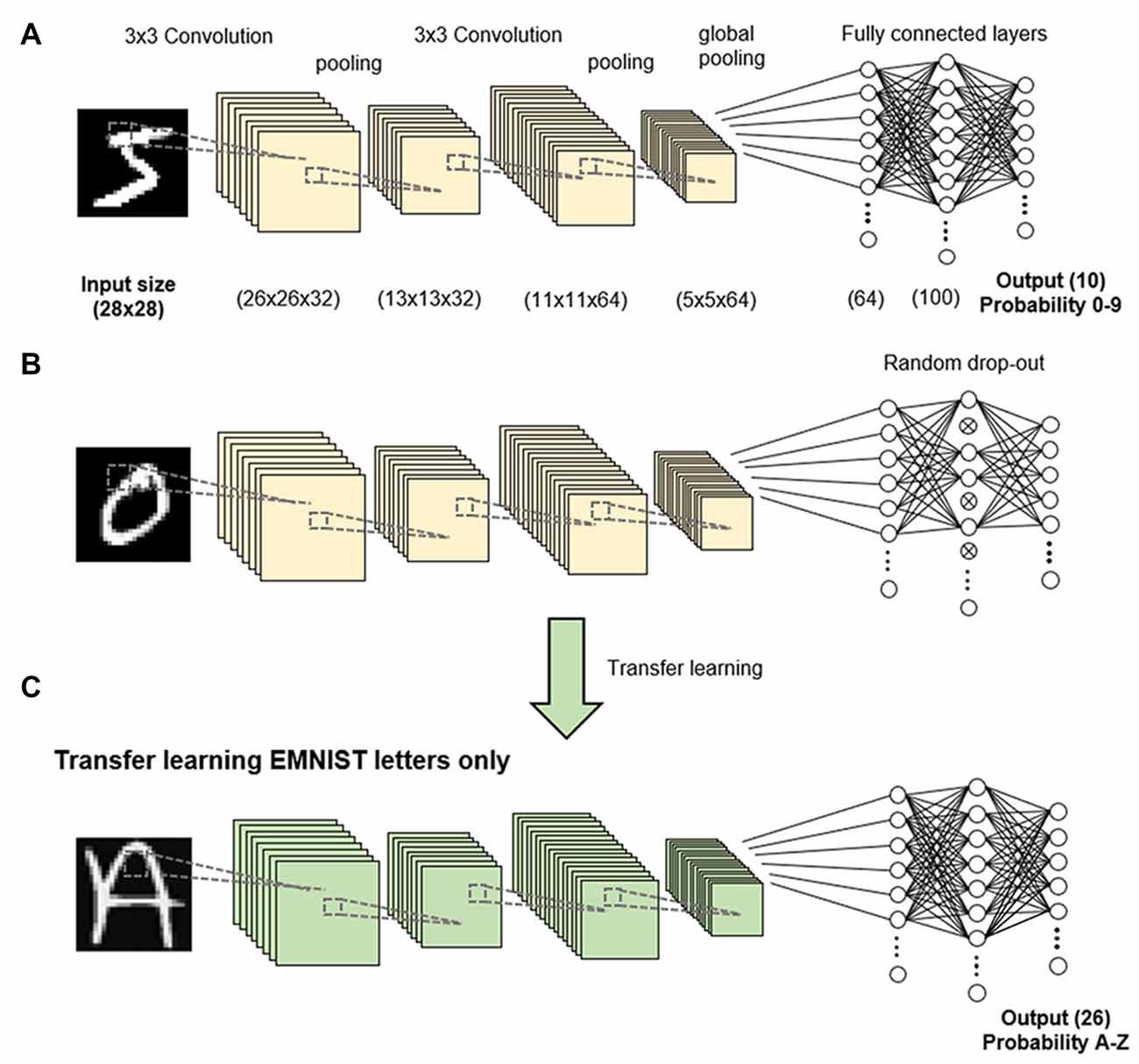
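The drop-and-rescale rule described above can be sketched in a few lines of plain Python. This is a minimal illustration rather than a framework implementation: the function name `dropout`, its `training` flag, and the use of the standard-library `random` module are assumptions made for this example.

```python
import random


def dropout(activations, p, training=True, rng=random):
    """Inverted dropout on a list of activations; p is the drop probability."""
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    if not training or p == 0.0:
        # Inference: dropout is disabled and no rescaling is needed.
        return list(activations)
    keep_scale = 1.0 / (1.0 - p)
    # Each unit is zeroed with probability p; survivors are scaled by 1/(1-p).
    return [0.0 if rng.random() < p else a * keep_scale for a in activations]


rng = random.Random(0)  # fixed seed so the example is reproducible
x = [1.0] * 10_000
y = dropout(x, p=0.5, rng=rng)
mean = sum(y) / len(y)  # stays close to the mean of x
```

Because the surviving activations are scaled up during training, the mean of `y` stays close to the mean of `x`, which is why the same layer can simply be switched off at inference time.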

Application of dropout layers

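- Dropout layers are inserted between dense or convolutional layers, usually where the parameter count (and so the risk of overfitting) is highest

- Common drop probabilities are 0.5 for hidden layers and 0.2 for the input layer; these are starting points to tune, not fixed rules

- Fully connected networks benefit from dropout in their dense layers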

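- Convolutional neural networks most often apply dropout in the fully connected layers near the output (as in AlexNet and VGGNet); when it is used in the convolutional part, it is typically placed after pooling layers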

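- Recurrent neural networks (LSTM, GRU) use dropout on the non-recurrent connections, so the state carried across time steps is not repeatedly corrupted

- Dropout reduces overfitting and improves generalization, but it can increase training time because the noisy updates converge more slowly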
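The placement guidelines above can be illustrated with a tiny fully connected network in plain Python. This is a sketch under stated assumptions: the helper names (`dense`, `relu`, `forward`), the layer sizes, and the random weights are all hypothetical, and the rates 0.2 and 0.5 are simply the common defaults listed above.

```python
import random


def dropout(values, p, rng):
    # Inverted dropout: zero with probability p, scale survivors by 1/(1-p).
    scale = 1.0 / (1.0 - p)
    return [0.0 if rng.random() < p else v * scale for v in values]


def dense(values, weights, biases):
    # weights[j][i] connects input i to output j.
    return [sum(w * v for w, v in zip(row, values)) + b
            for row, b in zip(weights, biases)]


def relu(values):
    return [max(0.0, v) for v in values]


def forward(x, params, training, rng, p_input=0.2, p_hidden=0.5):
    (w1, b1), (w2, b2) = params
    if training:
        x = dropout(x, p_input, rng)   # light dropout on the input layer
    h = relu(dense(x, w1, b1))
    if training:
        h = dropout(h, p_hidden, rng)  # heavier dropout on the hidden layer
    return dense(h, w2, b2)            # no dropout on the output layer


rng = random.Random(0)
params = (
    ([[rng.uniform(-1, 1) for _ in range(4)] for _ in range(8)], [0.0] * 8),
    ([[rng.uniform(-1, 1) for _ in range(8)] for _ in range(2)], [0.0] * 2),
)
x = [0.5, -1.0, 2.0, 0.1]
eval_a = forward(x, params, training=False, rng=rng)
eval_b = forward(x, params, training=False, rng=rng)
train_out = forward(x, params, training=True, rng=rng)
```

With `training=False` the two forward passes give identical outputs, while each training pass samples fresh masks. Deep learning frameworks expose the same switch through a train/eval mode rather than an explicit flag.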

Other Noise-Based Regularization Methods

Noise-based regularization methods

- Gaussian noise injection adds zero-mean Gaussian noise to inputs or activations, with the strength of the regularization controlled by the standard deviation

- DropConnect generalizes dropout by randomly dropping individual connections (weights) instead of whole neurons

- Zoneout is specific to recurrent neural networks: at each time step, randomly chosen hidden units keep their previous value instead of being updated

- Cutout applies to image data: it randomly masks out a square region of each input image during training, and is commonly used on benchmarks such as CIFAR-10 and ImageNet
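The four methods listed above differ mainly in what they perturb: values, weights, recurrent state, or image regions. The plain-Python sketch below shows one possible form of each. All function names and signatures are hypothetical, and the 1/(1-p) rescaling in `dropconnect_dense` simply mirrors the dropout convention used earlier; it is an assumption of this sketch, not a claim about how any particular library or paper implements DropConnect.

```python
import random


def gaussian_noise(values, std, rng):
    # Additive zero-mean noise; std sets the regularization strength.
    return [v + rng.gauss(0.0, std) for v in values]


def dropconnect_dense(values, weights, p, rng):
    # Drop individual weights (connections) rather than whole units.
    scale = 1.0 / (1.0 - p)  # assumption: rescale as in inverted dropout
    return [sum(0.0 if rng.random() < p else w * v * scale
                for w, v in zip(row, values))
            for row in weights]


def zoneout(h_prev, h_new, z, rng):
    # Each hidden unit keeps its previous value with probability z.
    return [hp if rng.random() < z else hn for hp, hn in zip(h_prev, h_new)]


def cutout(image, size, rng):
    # image is a list of rows; zero a square of side `size` around a
    # uniformly sampled centre, clipped at the image border.
    height, width = len(image), len(image[0])
    top0 = rng.randrange(height) - size // 2
    left0 = rng.randrange(width) - size // 2
    top, bottom = max(0, top0), min(height, top0 + size)
    left, right = max(0, left0), min(width, left0 + size)
    return [[0.0 if top <= r < bottom and left <= c < right else px
             for c, px in enumerate(row)]
            for r, row in enumerate(image)]


rng = random.Random(0)
noisy = gaussian_noise([1.0, 2.0, 3.0], std=0.1, rng=rng)
dc_out = dropconnect_dense([1.0, 1.0], [[1.0, 2.0], [3.0, 4.0]], p=0.5, rng=rng)
h_next = zoneout([0.0, 0.0, 0.0], [1.0, 1.0, 1.0], z=0.3, rng=rng)
masked = cutout([[1.0] * 8 for _ in range(8)], size=4, rng=rng)
zeros = sum(px == 0.0 for row in masked for px in row)
```

As with dropout, all four perturbations are applied only during training; at inference the inputs, weights, and hidden states are used unmodified.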

Comparison of regularization techniques

- Dropout is effective, easy to implement, and cheap to compute per step, but it may require longer training and discards part of the signal on every update

- Gaussian noise injection is simple to implement and can be applied to various layers, but it is sensitive to the noise level, and too much noise can destabilize training

- DropConnect is more fine-grained than dropout and potentially more powerful, but it is computationally expensive and harder to implement

- Zoneout is effective for RNNs and can ease vanishing gradients, since preserved units pass their state through unchanged, but it is limited to recurrent architectures

- Cutout improves robustness to occlusions in images, but it only applies to image data and requires careful tuning of the mask size