Simple linear regression is a powerful tool for understanding relationships between variables. It helps us predict one variable based on another, like guessing someone's blood pressure from their age. The method uses a straight line to represent this relationship.

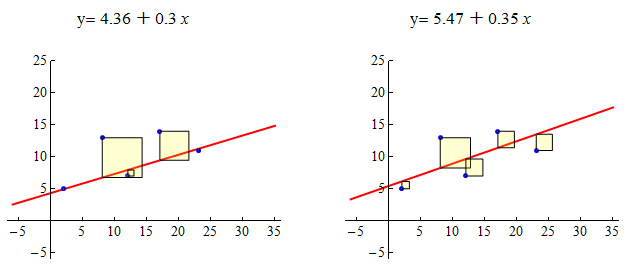

The least squares method finds the best-fitting line by minimizing differences between observed and predicted values. We can use this line to make predictions, but it's important to remember that these predictions aren't perfect and come with some uncertainty.

Simple Linear Regression

Concept of simple linear regression

- Statistical method models relationship between two variables

- Independent (explanatory) variable $x$ (age, income)

- Dependent (response) variable $y$ (blood pressure, spending)

- Determines strength and direction of linear relationship between variables

- Predicts value of dependent variable based on independent variable

- Relationship represented by straight line called regression line

- Equation: $y = \beta_0 + \beta_1 x + \varepsilon$

- $\beta_0$: y-intercept, value of $y$ when $x = 0$

- $\beta_1$: slope, change in $y$ for one-unit change in $x$

- $\varepsilon$: random error term, deviation of observed values from regression line
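To make the role of each term concrete, the sketch below simulates a few observations from the model $y = \beta_0 + \beta_1 x + \varepsilon$. The function name `simulate`, the parameter values (intercept 100, slope 0.5, error standard deviation 5), and the age range are all hypothetical choices for illustration, not values from any real dataset.

```python
import random

def simulate(n, beta0, beta1, sigma, seed=0):
    """Draw n points from y = beta0 + beta1 * x + eps, with eps ~ Normal(0, sigma)."""
    rng = random.Random(seed)  # fixed seed so repeated runs give the same sample
    xs = [rng.uniform(20, 70) for _ in range(n)]  # hypothetical ages in years
    ys = [beta0 + beta1 * x + rng.gauss(0, sigma) for x in xs]
    return xs, ys

# Hypothetical parameters: intercept 100, slope 0.5, error standard deviation 5
xs, ys = simulate(n=5, beta0=100.0, beta1=0.5, sigma=5.0)

for x, y in zip(xs, ys):
    on_line = 100.0 + 0.5 * x  # value on the true regression line
    print(f"x={x:5.1f}  line={on_line:6.1f}  observed={y:6.1f}  error={y - on_line:+5.1f}")
```

Each printed error is the vertical distance between an observed value and the line, which is exactly what the random error term describes.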

Least squares method application

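- Estimates parameters $\beta_0$ and $\beta_1$ of regression line

- Minimizes sum of squared differences between observed and predicted values

- Estimate slope using formula: $\hat{\beta}_1 = \dfrac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{n} (x_i - \bar{x})^2}$

- $\hat{\beta}_1$: estimated slope

- $x_i$, $y_i$: observed values of independent and dependent variables

- $\bar{x}$, $\bar{y}$: sample means of independent and dependent variables

- $n$: number of observations

- Estimate y-intercept using formula: $\hat{\beta}_0 = \bar{y} - \hat{\beta}_1 \bar{x}$

- $\hat{\beta}_0$: estimated y-intercept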
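The two least squares formulas (slope as the sum of cross-deviations divided by the sum of squared deviations of $x$, intercept as $\bar{y}$ minus slope times $\bar{x}$) can be sketched in plain Python as follows. The function name `fit_line` and the five data points are made up for illustration.

```python
def fit_line(xs, ys):
    """Return (intercept, slope) of the least squares line through the points."""
    n = len(xs)
    x_bar = sum(xs) / n  # sample mean of the independent variable
    y_bar = sum(ys) / n  # sample mean of the dependent variable

    # Numerator and denominator of the slope formula
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)

    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope

# Hypothetical toy data
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]

b0, b1 = fit_line(xs, ys)
print(f"intercept = {b0:.2f}, slope = {b1:.2f}")  # intercept = 2.20, slope = 0.60
```

Note that the slope is undefined when every $x_i$ is identical, because the denominator is then zero; this sketch does not guard against that case.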

Derivation of regression equation

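- Substitute estimated values $\hat{\beta}_0$ and $\hat{\beta}_1$ into general equation: $y = \beta_0 + \beta_1 x + \varepsilon$

- Resulting regression equation: $\hat{y} = \hat{\beta}_0 + \hat{\beta}_1 x$ (error term omitted, since fitted line gives predicted rather than observed value)

- $\hat{y}$: predicted value of dependent variable for given value of independent variable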
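As a worked illustration of the derivation, take a small made-up dataset with $x = (1, 2, 3, 4, 5)$ and $y = (2, 4, 5, 4, 5)$. The numbers are hypothetical and chosen only so the arithmetic is easy to follow.

$$
\begin{aligned}
\bar{x} &= 3, \qquad \bar{y} = 4 \\
\sum_{i=1}^{5} (x_i - \bar{x})(y_i - \bar{y}) &= (-2)(-2) + (-1)(0) + (0)(1) + (1)(0) + (2)(1) = 6 \\
\sum_{i=1}^{5} (x_i - \bar{x})^2 &= 4 + 1 + 0 + 1 + 4 = 10 \\
\hat{\beta}_1 &= \frac{6}{10} = 0.6 \\
\hat{\beta}_0 &= \bar{y} - \hat{\beta}_1 \bar{x} = 4 - 0.6 \cdot 3 = 2.2 \\
\hat{y} &= 2.2 + 0.6\,x
\end{aligned}
$$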

Interpretation of regression components

- Slope represents change in dependent variable for one-unit change in independent variable

- Positive slope indicates positive linear relationship (weight and height)

- Negative slope indicates negative linear relationship (age and reaction speed)

- Y-intercept represents value of dependent variable when independent variable equals zero

- May not have meaningful interpretation if range of observed values of independent variable does not include zero (blood pressure at age zero when all subjects are adults)

Predictions using regression equation

- Substitute given value of independent variable into equation: $\hat{y} = \hat{\beta}_0 + \hat{\beta}_1 x$

- Resulting value is predicted value of dependent variable for given value of independent variable

- Predictions subject to uncertainty

- Accuracy depends on strength of linear relationship and quality of model fit (correlation coefficient, residual analysis)
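The sketch below ties prediction to the two checks mentioned above: it fits a line, predicts at a new value, and then computes the residuals and the correlation coefficient. The helper names (`fit_line`, `predict`, `correlation`) and the toy data are assumptions made for illustration.

```python
import math

def fit_line(xs, ys):
    """Return (intercept, slope) of the least squares line."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope

def predict(intercept, slope, x):
    """Predicted value of the dependent variable at x."""
    return intercept + slope * x

def correlation(xs, ys):
    """Pearson correlation coefficient r between xs and ys."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    syy = sum((y - y_bar) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical toy data
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]

b0, b1 = fit_line(xs, ys)

# Predict at a value inside the observed range of x (1 to 5)
y_new = predict(b0, b1, 3.5)
print(f"predicted y at x=3.5: {y_new:.2f}")  # 4.30

# Residuals: observed minus predicted; large or patterned residuals suggest a poor fit
residuals = [y - predict(b0, b1, x) for x, y in zip(xs, ys)]
print("residuals:", [round(e, 2) for e in residuals])

# r near +1 or -1 means a strong linear relationship; near 0 means a weak one
r = correlation(xs, ys)
print(f"r = {r:.3f}")  # about 0.775
```

Predicting at values far outside the observed range of the independent variable (extrapolation) is riskier, since the data give no evidence that the linear pattern continues there.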