Introduction to Self-Balancing BSTs

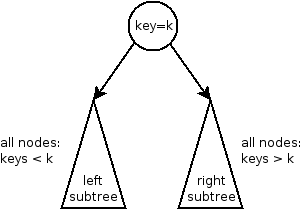

A standard BST can become lopsided depending on the order you insert elements, which kills its performance. Self-balancing BSTs fix this by automatically restructuring themselves after insertions and deletions, guaranteeing time complexity for core operations.

The Problem with Unbalanced BSTs

To understand why self-balancing trees matter, consider what happens when you insert already-sorted data (say, 1, 2, 3, 4, 5) into a plain BST. Each new node becomes the right child of the previous one, and you end up with a structure that looks and behaves exactly like a linked list. The tree's height becomes instead of the ideal .

This means:

- Search degrades from to

- Insertion degrades from to

- Deletion degrades from to

At that point, you've lost every advantage a BST is supposed to give you.

Unbalanced trees typically result from:

- Inserting elements in sorted or nearly sorted order (ascending or descending)

- Repeated deletions from one side of the tree, causing lopsided structure

What Self-Balancing Means

A self-balancing BST automatically adjusts its structure after every insertion or deletion so that the height stays proportional to . "Balance" here means minimizing the height difference between the left and right subtrees of every node. The result is consistent performance regardless of what order you insert or delete elements.

Types and Principles of Self-Balancing BSTs

AVL Trees

AVL trees (named after Adelson-Velsky and Landis) track a balance factor at each node, defined as:

The rule is simple: every node's balance factor must be , , or . If an insertion or deletion causes any node's balance factor to fall outside that range, the tree performs rotations to fix it.

AVL trees use four rotation types:

- Right rotation — fixes a left-heavy imbalance (left child's left subtree is too tall)

- Left rotation — fixes a right-heavy imbalance (right child's right subtree is too tall)

- Left-right rotation — a left rotation on the left child, then a right rotation on the node (fixes a left-heavy node where the left child is right-heavy)

- Right-left rotation — a right rotation on the right child, then a left rotation on the node (fixes a right-heavy node where the right child is left-heavy)

AVL trees are strictly balanced, so lookups are very fast. The tradeoff is that insertions and deletions may require more rotations compared to other self-balancing trees.

Red-Black Trees

Red-Black trees take a more relaxed approach to balance. Each node is colored either red or black, and the tree enforces these properties:

- The root is always black.

- No red node can have a red child (no two consecutive red nodes on any path).

- Every path from a given node down to any of its NULL leaves contains the same number of black nodes (this count is called the black-height).

After an insertion or deletion violates these rules, the tree restores them using a combination of rotations and color flips (swapping a node's color between red and black).

Because Red-Black trees allow slightly more imbalance than AVL trees, they do fewer restructuring operations on average during insertions and deletions. That's why many standard library implementations (like Java's TreeMap and C++'s std::map) use Red-Black trees under the hood.

Balancing Mechanisms: Core Principles

All self-balancing BSTs share a few key ideas, even though the specific rules differ:

- Height control — The goal is always to keep tree height close to . AVL trees enforce this strictly; Red-Black trees enforce it loosely (the height is at most ).

- Rotations — The fundamental tool for restructuring. A left rotation moves a node's right child up to take its place; a right rotation moves the left child up. These preserve BST ordering while changing the tree's shape.

- Local restructuring — Rebalancing happens locally, near the point of insertion or deletion, and propagates upward only as needed. You don't rebuild the whole tree.

AVL vs. Red-Black at a glance: AVL trees give faster lookups (stricter balance, shorter height). Red-Black trees give faster insertions/deletions (fewer rotations on average). Choose based on whether your workload is read-heavy or write-heavy.