Binaural Cues and Sound Localization

Your brain figures out where sounds come from by comparing what each ear receives. Since your ears are on opposite sides of your head, a sound coming from the left will reach your left ear slightly sooner and slightly louder than your right ear. These tiny differences are the foundation of spatial hearing.

Binaural cues for localization

Interaural Time Difference (ITD) is the difference in arrival time of a sound between your two ears. If a sound comes from directly to your left, it reaches your left ear a fraction of a millisecond before your right ear. Your brain detects this delay and uses it to estimate direction.

ITD works best for low-frequency sounds (below ~1500 Hz). At these frequencies, the wavelengths are long enough relative to your head that the brain can reliably track phase differences between the two ears. For a distant source, a common spherical-head approximation (Woodworth's formula) estimates ITD as:

$$\text{ITD} = \frac{r}{c}\,(\theta + \sin\theta)$$

where $r$ is the radius of the head, $\theta$ is the azimuth angle of the source (in radians), and $c$ is the speed of sound.
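As a rough numerical check, the sketch below evaluates Woodworth's spherical-head approximation, ITD = (r/c)(θ + sin θ), which is one common formula built from these three quantities. The head radius of 8.75 cm and the speed of sound of 343 m/s are assumed typical values, and the function name is only illustrative.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, air at roughly 20 degrees C (assumed)
HEAD_RADIUS = 0.0875    # m, a commonly used average head radius (assumed)


def woodworth_itd(azimuth_deg, head_radius=HEAD_RADIUS, c=SPEED_OF_SOUND):
    """Approximate ITD in seconds for a distant source.

    azimuth_deg: 0 is straight ahead, 90 is directly to one side.
    The approximation is intended for azimuths between 0 and 90 degrees.
    """
    theta = math.radians(azimuth_deg)
    return (head_radius / c) * (theta + math.sin(theta))


for azimuth in (0, 30, 60, 90):
    itd_us = woodworth_itd(azimuth) * 1e6
    print(f"{azimuth:>2} deg -> {itd_us:6.1f} microseconds")
```

With these assumed values, a source directly to one side gives an ITD of roughly 0.66 ms, which matches the "fraction of a millisecond" scale described earlier.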

Interaural Level Difference (ILD) is the difference in sound intensity between your ears. Your head physically blocks high-frequency sound waves, creating an acoustic "shadow" on the far side. This head shadow effect makes ILD most useful for high-frequency sounds (above ~1500 Hz), where the wavelengths are short enough for the head to obstruct them effectively. ILD generally grows with frequency and with the angle of the source away from center.

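The duplex theory ties these two cues together: your brain relies mainly on ITD for low frequencies and mainly on ILD for high frequencies. Together, they cover most of the audible spectrum for left-right localization, with the weakest performance near the ~1500 Hz crossover, where neither cue is strong.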
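To make the wavelength argument concrete, the following sketch compares the wavelength of a tone with an assumed head diameter of 17.5 cm and labels the cue that duplex theory would emphasize. The hard switch at 1500 Hz is a simplification of the text's approximate boundary, and `dominant_cue` is a hypothetical helper, not a model of actual neural processing.

```python
SPEED_OF_SOUND = 343.0  # m/s (assumed)
HEAD_DIAMETER = 0.175   # m, assumed typical ear-to-ear distance
CROSSOVER_HZ = 1500.0   # approximate boundary quoted in the text


def wavelength_m(frequency_hz):
    """Wavelength in metres of a tone in air."""
    return SPEED_OF_SOUND / frequency_hz


def dominant_cue(frequency_hz):
    """Cue emphasized by duplex theory, using a hard 1500 Hz switch."""
    return "ITD" if frequency_hz < CROSSOVER_HZ else "ILD"


for f in (200, 500, 1500, 4000, 10000):
    ratio = wavelength_m(f) / HEAD_DIAMETER
    print(f"{f:>5} Hz: wavelength is {ratio:5.2f} head diameters -> {dominant_cue(f)}")
```

Low frequencies have wavelengths several times larger than the head, so they bend around it with little shadowing; high frequencies have wavelengths smaller than the head and are blocked more effectively.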

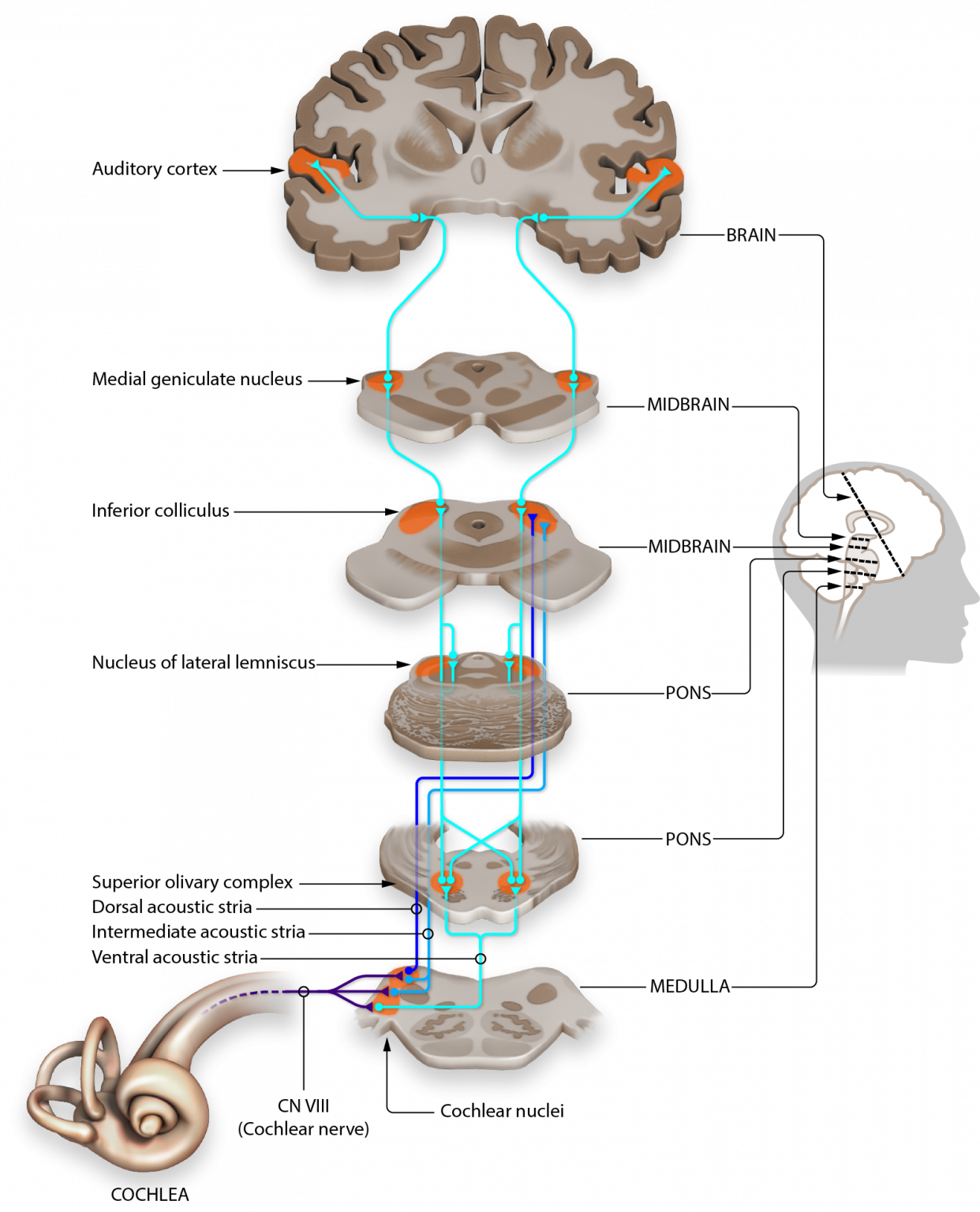

Neural processing of these cues begins early in the auditory pathway. The superior olivary complex in the brainstem contains specialized neurons that compare input from both ears, detecting interaural time and level differences. These signals are then integrated further in the auditory cortex to build a spatial map of your surroundings.

Cone of confusion implications

Even with ITD and ILD, there are positions in space that produce nearly identical binaural cues. The cone of confusion is the set of all points that lie on a conical surface extending outward from each ear, symmetrical around the interaural axis (the imaginary line connecting your ears). Every source on a given cone produces approximately the same ITD and ILD, so your brain can't distinguish between those positions using binaural cues alone.

This leads to specific ambiguities:

- Front-back confusion: A source directly in front of you and one directly behind you can produce nearly identical ITD and ILD values.

- Up-down confusion: Sources at different elevations on the same cone also share similar binaural cues.
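The geometry behind these ambiguities can be sketched with a deliberately simplified model: two point "ears" on the interaural axis with no head between them, and straight-line sound paths. Under those assumptions, every point on the same cone yields the same arrival-time difference. The ear offset, source distance, and function names below are illustrative assumptions.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s (assumed)
EAR_OFFSET = 0.0875     # m, half the assumed ear-to-ear distance

# Ears sit on the x axis (the interaural axis); y is front-back, z is up-down.
LEFT_EAR = (-EAR_OFFSET, 0.0, 0.0)
RIGHT_EAR = (EAR_OFFSET, 0.0, 0.0)


def point_on_cone(cone_angle_deg, roll_deg, distance=2.0):
    """Point at `distance` from the head centre on a cone around the x axis.

    cone_angle_deg: angle between the source direction and the interaural axis.
    roll_deg: position around the cone (0 front, 90 above, 180 behind, 270 below).
    """
    a = math.radians(cone_angle_deg)
    r = math.radians(roll_deg)
    return (
        distance * math.cos(a),
        distance * math.sin(a) * math.cos(r),
        distance * math.sin(a) * math.sin(r),
    )


def path_itd(source):
    """Arrival-time difference in seconds; positive if the right ear hears it first."""
    return (math.dist(source, LEFT_EAR) - math.dist(source, RIGHT_EAR)) / SPEED_OF_SOUND


for label, roll in (("front", 0), ("above", 90), ("behind", 180), ("below", 270)):
    itd_us = path_itd(point_on_cone(60, roll)) * 1e6
    print(f"{label:>6}: {itd_us:.3f} microseconds")
```

All four positions print the same value, which is the front-back and up-down ambiguity in miniature. A real head adds shadowing and diffraction, so the match is only approximate in practice.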

Your auditory system resolves these ambiguities in two main ways:

- Head movements break the symmetry. Even a small turn of the head changes the ITD and ILD pattern, giving your brain new data to distinguish front from back or up from down.

- Spectral cues from the pinna (outer ear) provide direction-dependent filtering that differs for sources in front versus behind, or above versus below.
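The head-movement strategy can be illustrated with a simplified sine-law ITD model (an assumption chosen for brevity, with an assumed maximum ITD of 650 microseconds). A source in front and its mirror image behind start with the same ITD, but a small head turn changes the two in opposite directions.

```python
import math

MAX_ITD_US = 650.0  # assumed maximum ITD in microseconds


def itd_us(source_azimuth_deg, head_yaw_deg=0.0):
    """Simplified sine-law ITD; positive means the source is to the listener's right.

    Angles are measured clockwise from the original straight-ahead direction:
    0 is front, 90 is right, 180 is directly behind.
    """
    relative = math.radians(source_azimuth_deg - head_yaw_deg)
    return MAX_ITD_US * math.sin(relative)


front, back = 20.0, 160.0  # mirror-image positions about the interaural axis

print("head still:   front", round(itd_us(front), 1), " back", round(itd_us(back), 1))
print("turned 10 deg: front", round(itd_us(front, 10), 1), " back", round(itd_us(back, 10), 1))
```

After turning 10 degrees toward the right, the ITD for the front source shrinks while the ITD for the rear source grows, so the direction of change reveals which hemisphere the source is in.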

These ambiguities matter for technology too. Virtual audio systems that model only ITD and ILD cannot resolve the cone of confusion, so they are prone to front-back reversals and unconvincing spatial audio. Accurate modeling of the listener's head-related transfer function (HRTF, described in the next section) and head tracking are needed to overcome this.

Spectral Cues and Localization Limitations

Spectral cues from the outer ear

Your pinna (the visible, folded part of your outer ear) acts as a direction-dependent filter. Sound waves bounce off its ridges and folds before entering the ear canal, and these reflections alter the frequency content of the sound in ways that depend on where the sound is coming from. Your brain learns these spectral patterns over a lifetime and uses them to infer source direction.

The Head-Related Transfer Function (HRTF) captures this entire filtering process mathematically. It describes how sound is transformed as it travels from a source to your ear canal, accounting for the effects of your pinna, head, and torso. Because everyone's anatomy is slightly different, HRTFs are unique to each person.
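In practice, the time-domain counterpart of an HRTF (a head-related impulse response, or HRIR) is applied by convolving a mono signal with one impulse response per ear. The sketch below uses made-up toy impulse responses purely for illustration; real HRIRs are measured, contain hundreds of samples, and differ per direction and per listener.

```python
def convolve(signal, impulse_response):
    """Direct-form discrete convolution of two lists of samples."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += s * h
    return out


# Hypothetical toy HRIRs for a source on the listener's left (not measured data).
HRIR_LEFT = [1.0, 0.3]                  # near ear: immediate and strong
HRIR_RIGHT = [0.0, 0.0, 0.0, 0.5, 0.1]  # far ear: delayed by 3 samples and quieter


def render_binaural(mono):
    """Return (left, right) ear signals for a mono input."""
    return convolve(mono, HRIR_LEFT), convolve(mono, HRIR_RIGHT)


click = [1.0, 0.0, 0.0, 0.0]
left, right = render_binaural(click)
print("left: ", left)
print("right:", right)
```

Even in this toy version, the far-ear signal arrives later and weaker, so the ITD and ILD fall out of the filtering rather than being modeled separately.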

Spectral cues are especially important for elevation perception. Notches in the frequency spectrum (typically in the 5–10 kHz range) created by pinna reflections shift depending on whether a sound comes from above or below. Resonances in the ear canal and the concha (the bowl-shaped part of the outer ear) create spectral peaks that further help with vertical localization.
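One simplified way to see where such notches come from is to treat the pinna as adding a single delayed reflection to the direct sound. The two paths cancel at frequencies where the reflection arrives half a cycle late, giving notches at odd multiples of 1/(2 × delay). This single-reflection model, the delays, and the reflection gain of 0.8 are illustrative assumptions; real pinna filtering is considerably more complex.

```python
import cmath
import math


def notch_frequencies_hz(delay_s, max_hz=20000.0):
    """Notch frequencies of direct sound plus one reflection delayed by delay_s."""
    freqs = []
    k = 0
    while (2 * k + 1) / (2 * delay_s) <= max_hz:
        freqs.append((2 * k + 1) / (2 * delay_s))
        k += 1
    return freqs


def gain_db(freq_hz, delay_s, reflection_gain=0.8):
    """Magnitude response in dB of the direct-plus-reflection filter."""
    h = 1 + reflection_gain * cmath.exp(-2j * math.pi * freq_hz * delay_s)
    return 20 * math.log10(abs(h))


# Assumed reflection delays; a change in source elevation would change the path length.
for delay_us in (100.0, 62.5):
    delay = delay_us * 1e-6
    first_notch = notch_frequencies_hz(delay)[0]
    print(f"delay {delay_us:5.1f} us -> first notch near {first_notch:7.1f} Hz, "
          f"gain there {gain_db(first_notch, delay):6.1f} dB")
```

A reflection delayed by 100 microseconds puts the first notch near 5 kHz, and shortening the delay to 62.5 microseconds moves it to about 8 kHz, which is the kind of shift the brain can associate with a change in elevation.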

Unlike ITD and ILD, spectral cues are primarily monaural, meaning a single ear can use them. This makes them valuable for resolving cone-of-confusion ambiguities and for people with hearing loss in one ear. Combined with binaural cues, spectral cues enable full three-dimensional sound localization.

Limitations of sound localization

Minimum Audible Angle (MAA) is the smallest angular separation between two source positions that you can reliably detect. For sounds directly in front of you, this is typically 1–2°. Accuracy drops significantly for sources off to the side (lateral positions), where the MAA can be several times larger.
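One way to build intuition for why resolution is better straight ahead is to ask how much the ITD changes for a 1° shift at different azimuths. The sketch below uses Woodworth's spherical-head approximation with assumed values for head radius and speed of sound; it illustrates the geometry only and is not a model of measured MAA data.

```python
import math


def woodworth_itd(azimuth_deg, head_radius=0.0875, c=343.0):
    """Spherical-head ITD approximation in seconds (assumed r and c)."""
    theta = math.radians(azimuth_deg)
    return (head_radius / c) * (theta + math.sin(theta))


def itd_change_us(azimuth_deg, step_deg=1.0):
    """Change in ITD, in microseconds, when the source moves by step_deg."""
    return (woodworth_itd(azimuth_deg + step_deg) - woodworth_itd(azimuth_deg)) * 1e6


for azimuth in (0, 40, 80):
    print(f"{azimuth:>2} deg: 1 degree shift changes ITD by {itd_change_us(azimuth):.1f} microseconds")
```

Near the front, a 1° shift changes the ITD by roughly 9 microseconds under these assumptions; toward the side, the same shift produces a smaller change, so a larger angle is needed to produce a detectable difference.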

Distance perception is notably imprecise compared to directional hearing. Beyond a few meters, your brain relies on indirect cues:

- Intensity: Louder sounds seem closer, but this only works if you already know how loud the source should be.

- Direct-to-reverberant ratio: In a room, nearby sounds have more direct energy relative to reflections. Farther sources have proportionally more reverberation.
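Both distance cues can be sketched with a simple free-field model. The code assumes that direct sound follows the inverse-square law (about 6 dB quieter per doubling of distance) and that the reverberant level in a room is roughly constant with distance. The critical distance of 2 m, where direct and reverberant energy are equal, is an assumed room property, not a universal value.

```python
import math


def level_change_db(reference_distance_m, distance_m):
    """Change in direct-sound level relative to the reference distance."""
    return -20 * math.log10(distance_m / reference_distance_m)


def direct_to_reverberant_db(distance_m, critical_distance_m=2.0):
    """Direct-to-reverberant ratio, assuming a constant reverberant level.

    The ratio is 0 dB at the (assumed) critical distance.
    """
    return 20 * math.log10(critical_distance_m / distance_m)


for d in (1, 2, 4, 8):
    print(f"{d} m: direct level {level_change_db(1, d):6.1f} dB re 1 m, "
          f"DRR {direct_to_reverberant_db(d):6.1f} dB")
```

The intensity cue is ambiguous on its own because a quiet nearby source and a loud distant one can arrive at the same level, while the direct-to-reverberant ratio depends on distance and the room rather than on how loud the source is.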

Several factors affect localization accuracy:

- Source characteristics: Broadband sounds (containing many frequencies) are easier to localize than pure tones. Longer sounds and sharp onsets also help.

- Environmental factors: Reverberation, background noise, and competing sources all degrade accuracy.

- Individual differences: Ear shape, hearing sensitivity, and experience all play a role.

Two phenomena help in complex listening environments:

- The cocktail party effect describes your ability to focus on one voice in a noisy room by using spatial cues (along with attention and familiarity) to separate sound sources.

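- The precedence effect (also called the law of the first wavefront) causes your brain to fuse early reflections with the direct sound: reflections arriving within a short window (from about 1 ms up to roughly 5 ms for clicks and about 40 ms for speech or music) are not heard as separate echoes, so you perceive the source at its true location rather than at the reflecting surfaces.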
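The timing logic of the precedence effect can be written as a small classifier. The 40 ms echo threshold is the upper figure quoted above; the 1 ms lower limit (below which the two sounds blend into a single shifted image) is a commonly quoted value and is an assumption here. Real thresholds vary with the type of sound, and the function name is hypothetical.

```python
def classify_reflection(delay_ms, echo_threshold_ms=40.0, summing_limit_ms=1.0):
    """Rough perceptual category for a reflection arriving delay_ms after the direct sound."""
    if delay_ms < 0:
        raise ValueError("delay_ms must be non-negative")
    if delay_ms < summing_limit_ms:
        return "summing localization"      # both arrivals shape one blended image
    if delay_ms <= echo_threshold_ms:
        return "fused with direct sound"   # precedence: located at the first arrival
    return "separate echo"                 # heard as a distinct second event


for delay in (0.5, 5, 20, 80):
    print(f"{delay:>4} ms -> {classify_reflection(delay)}")
```

Passing a smaller `echo_threshold_ms` (for example 5.0) approximates the behaviour for short clicks, which are heard as separate echoes at much shorter delays than speech.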

Reproducing accurate spatial hearing in technology remains challenging. Virtual and augmented reality systems need precise, individualized HRTF data and real-time head tracking. Hearing aid design must balance amplification with preserving the natural spatial cues that listeners depend on for localization.