Bayesian inference is a powerful statistical approach that updates beliefs based on new evidence. It combines prior knowledge with observed data to form posterior probabilities, allowing for more nuanced and flexible analysis than traditional frequentist methods.

This topic explores the foundations of Bayesian inference, including Bayes' theorem, prior and posterior distributions, and likelihood functions. It also compares Bayesian and frequentist approaches, discussing their strengths and limitations in statistical analysis and decision-making.

Foundations of Bayesian inference

- Bayesian inference forms a cornerstone of probabilistic reasoning in Theoretical Statistics

- Provides a framework for updating beliefs based on observed data and prior knowledge

- Allows for incorporation of uncertainty in statistical models and decision-making processes

Bayes' theorem

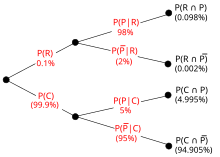

- Fundamental equation of Bayesian statistics; expresses the posterior probability in terms of the prior, the likelihood, and the evidence

- Mathematical formulation: $P(A \mid B) = \dfrac{P(B \mid A)\,P(A)}{P(B)}$

- Enables updating of probabilities as new information becomes available

- Applied in various fields (medical diagnosis, machine learning, forensic science)
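
As a worked illustration of the formula in a diagnostic setting, the sketch below computes the probability of disease given a positive test. The prevalence, sensitivity, and false-positive rate are made-up numbers, and `posterior_probability` is a hypothetical helper name.

```python
def posterior_probability(prior: float, sensitivity: float, false_positive_rate: float) -> float:
    """Return P(disease | positive test) using Bayes' theorem."""
    # Evidence P(positive) via the law of total probability
    evidence = sensitivity * prior + false_positive_rate * (1.0 - prior)
    # Posterior = likelihood * prior / evidence
    return sensitivity * prior / evidence


# Hypothetical inputs: 1% prevalence, 95% sensitivity, 5% false-positive rate
p = posterior_probability(prior=0.01, sensitivity=0.95, false_positive_rate=0.05)
print(round(p, 3))  # 0.161
```

Even with an accurate test, the low prior (1% prevalence) keeps the posterior probability of disease near 16%.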

Prior vs posterior distributions

- Prior distribution represents initial beliefs or knowledge about parameters before observing data

- Posterior distribution incorporates both prior knowledge and observed data to update beliefs

- Relationship expressed as: $p(\theta \mid x) \propto p(x \mid \theta)\,p(\theta)$ (posterior ∝ likelihood × prior)

- Posterior distribution serves as the basis for Bayesian inference and decision-making
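
A minimal grid-approximation sketch of "posterior ∝ likelihood × prior", assuming a binomial model with 7 successes in 10 trials and a flat prior evaluated on a 101-point grid (both are illustrative assumptions). Dividing by the sum of the unnormalized values stands in for the evidence; `grid_posterior` is a hypothetical helper.

```python
from math import comb


def grid_posterior(k: int, n: int, grid_size: int = 101):
    """Approximate the posterior of a binomial success probability on a grid."""
    thetas = [i / (grid_size - 1) for i in range(grid_size)]
    prior = [1.0] * grid_size  # flat prior (assumption)
    likelihood = [comb(n, k) * t**k * (1 - t) ** (n - k) for t in thetas]
    unnormalized = [lik * pr for lik, pr in zip(likelihood, prior)]
    total = sum(unnormalized)  # discrete stand-in for the evidence p(x)
    posterior = [u / total for u in unnormalized]
    return thetas, posterior


thetas, post = grid_posterior(k=7, n=10)
mode = thetas[max(range(len(post)), key=post.__getitem__)]
print(mode)  # 0.7
```

With a flat prior the posterior mode coincides with the maximum likelihood estimate k/n; swapping in a non-flat `prior` list shows how prior beliefs shift the result.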

Likelihood function

- Probability (or probability density) of the observed data, viewed as a function of the parameter values

- Represents how well the model explains the observed data

- Denoted $L(\theta \mid x) = p(x \mid \theta)$, where θ represents the parameters and x represents the data

- Plays a crucial role in connecting the prior and posterior distributions

Marginal likelihood

- Also known as evidence or model evidence

- Represents the probability of the observed data averaged over all possible parameter values, weighted by the prior

- Calculated by integrating the product of likelihood and prior over all parameter values

- Used in model comparison and Bayes factor calculations

- Mathematically expressed as: $p(x) = \int p(x \mid \theta)\,p(\theta)\,d\theta$
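
The integral can be checked numerically in a simple case. This sketch assumes a binomial likelihood (7 successes in 10 trials) and a uniform Beta(1, 1) prior, for which the marginal likelihood has the closed form 1/(n + 1); the midpoint rule and the helper name `marginal_likelihood` are illustrative choices.

```python
from math import comb


def marginal_likelihood(k: int, n: int, num_points: int = 10_000) -> float:
    """Midpoint-rule approximation of p(x) = ∫ p(x | θ) p(θ) dθ for a binomial model."""
    h = 1.0 / num_points
    total = 0.0
    for i in range(num_points):
        theta = (i + 0.5) * h
        likelihood = comb(n, k) * theta**k * (1 - theta) ** (n - k)
        prior_density = 1.0  # uniform Beta(1, 1) prior on [0, 1] (assumption)
        total += likelihood * prior_density
    return total * h


approx = marginal_likelihood(k=7, n=10)
exact = 1 / (10 + 1)  # closed form under the uniform prior: 1 / (n + 1)
print(round(approx, 6), round(exact, 6))  # 0.090909 0.090909
```

In one dimension a quadrature rule is enough; in higher dimensions this integral is usually the hard part, which is why the evidence is often approximated (for example by BIC) rather than computed directly.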

Bayesian vs frequentist approaches

- Bayesian and frequentist approaches represent two major paradigms in statistical inference

- Both aim to draw conclusions from data but differ in their philosophical foundations

- Understanding these differences enhances the ability to choose appropriate methods for statistical analysis

Philosophical differences

- Bayesian approach treats parameters as random variables with probability distributions

- Frequentist approach considers parameters as fixed, unknown constants

- Bayesians incorporate prior knowledge, while frequentists rely solely on observed data

- Bayesian inference focuses on updating beliefs, while frequentist inference focuses on the long-run properties of estimators and tests

Practical implications

- Bayesian methods provide direct probability statements about parameters

- Frequentist methods rely on p-values and confidence intervals for inference

- Bayesian approach allows for sequential updating of beliefs as new data arrives

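- Many frequentist procedures rely on large-sample (asymptotic) approximations, which can be unreliable when samples are small

- Bayesian analysis can handle small samples more effectively when a reasonable prior is available, although the prior then has more influence on the results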

Strengths and limitations

- Bayesian strengths include intuitive interpretation and incorporation of prior knowledge

- Bayesian limitations involve computational complexity and sensitivity to prior choices

- Frequentist strengths include objectivity and well-established theoretical properties

- Frequentist limitations include difficulty handling some complex models and no formal mechanism for incorporating prior information

Prior distributions

- Prior distributions play a crucial role in Bayesian inference by incorporating existing knowledge

- Represent beliefs about parameters before observing data

- Choice of prior can significantly impact posterior inference, especially with limited data

- Balancing informativeness and objectivity presents a key challenge in prior selection

Types of priors

- Discrete priors for parameters that take a finite (or countable) set of values

- Continuous priors for parameters that take values in a continuum, such as an interval of the real line

- Parametric priors with specific distributional forms (normal, gamma, beta)

- Non-parametric priors allowing for more flexible representations of uncertainty

Informative vs non-informative priors

- Informative priors incorporate strong prior beliefs or expert knowledge

- Non-informative priors aim to have minimal impact on posterior inference

- Jeffreys priors, $p(\theta) \propto \sqrt{\det I(\theta)}$ with $I(\theta)$ the Fisher information, are designed to be invariant under reparameterization

- Reference priors maximize the expected Kullback-Leibler divergence between prior and posterior

Conjugate priors

- Priors that, for a given likelihood, yield a posterior distribution in the same family as the prior

- Simplify posterior calculations and enable closed-form solutions

- Examples include beta-binomial, normal-normal (known variance), and gamma-Poisson conjugate pairs

- Provide computational advantages in Bayesian inference
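
The beta-binomial pair shows why conjugacy is convenient: the update is just addition of counts. This sketch assumes a hypothetical Beta(2, 2) prior and made-up data; the class name `BetaBelief` is illustrative. It also shows that updating in two batches gives the same posterior as updating once with all the data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BetaBelief:
    """Beta(alpha, beta) belief about a binomial success probability."""
    alpha: float
    beta: float

    def update(self, successes: int, failures: int) -> "BetaBelief":
        # Conjugacy: Beta prior + binomial likelihood -> Beta posterior
        return BetaBelief(self.alpha + successes, self.beta + failures)

    def mean(self) -> float:
        return self.alpha / (self.alpha + self.beta)


prior = BetaBelief(2, 2)  # hypothetical prior
batch = prior.update(successes=7, failures=3)
sequential = prior.update(4, 1).update(3, 2)  # same data, split into two batches
print(batch, batch.mean())  # BetaBelief(alpha=9, beta=5), mean 9/14 ≈ 0.643
```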

Improper priors

- Priors that do not integrate to a finite value

- Used to represent vague or minimal prior information

- Can still yield a proper posterior distribution if the likelihood is sufficiently informative

- Require careful consideration to ensure posterior propriety and valid inference

Posterior distribution

- Posterior distribution represents updated beliefs about parameters after observing data

- Combines prior knowledge with information from observed data through Bayes' theorem

- Serves as the basis for Bayesian inference, prediction, and decision-making

- Provides a complete probabilistic description of uncertainty about parameters

Derivation and interpretation

- Derived using Bayes' theorem: $p(\theta \mid x) = \dfrac{p(x \mid \theta)\,p(\theta)}{p(x)}$

- Represents the probability distribution of parameters given observed data

- Allows for direct probability statements about parameters of interest

- Interpretation depends on the choice of prior and the observed data

Credible intervals

- Bayesian alternative to frequentist confidence intervals

- Provide a range of values that contains the parameter with a specified posterior probability (for example, 95%), given the data and the prior

- Equal-tailed credible interval uses quantiles of the posterior distribution

- Highest Posterior Density (HPD) interval is the narrowest interval with the specified posterior probability (for a unimodal posterior)

Highest posterior density

- Region of the parameter space in which every point has higher posterior density than any point outside it

- Represents the most probable values of the parameter given the data and prior

- Used to construct HPD credible intervals

- Provides a concise summary of the posterior distribution's shape and location
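
When the posterior is represented by samples (for example, MCMC output), both interval types can be read off the sorted draws. This is a sketch under two assumptions: the stand-in posterior is a right-skewed Beta(2, 12) distribution, and the HPD interval is approximated as the narrowest window of sorted draws containing the required fraction, which suits unimodal posteriors. The function names are hypothetical.

```python
import random


def equal_tailed_interval(samples, prob=0.95):
    """Central interval: cut (1 - prob) / 2 of the draws from each tail."""
    s = sorted(samples)
    n = len(s)
    lo = round((1 - prob) / 2 * n)
    hi = round((1 + prob) / 2 * n) - 1
    return s[lo], s[hi]


def hpd_interval(samples, prob=0.95):
    """Narrowest window of sorted draws that contains a fraction `prob` of them."""
    s = sorted(samples)
    n = len(s)
    m = round(prob * n)  # number of draws each candidate window must contain
    start = min(range(n - m + 1), key=lambda i: s[i + m - 1] - s[i])
    return s[start], s[start + m - 1]


rng = random.Random(0)
# Stand-in posterior draws from a right-skewed Beta(2, 12) distribution (assumption)
draws = [rng.betavariate(2, 12) for _ in range(20_000)]
print(equal_tailed_interval(draws))
print(hpd_interval(draws))
```

For a skewed posterior the HPD interval shifts toward the mode and is never wider than the equal-tailed interval built from the same draws.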

Bayesian computation methods

- Bayesian computation methods enable inference for complex models and large datasets

- Address challenges in calculating posterior distributions analytically

- Provide numerical approximations to posterior distributions and related quantities

- Essential for practical implementation of Bayesian inference in real-world problems

Markov Chain Monte Carlo

- Family of algorithms for sampling from probability distributions

- Constructs a Markov chain with the desired distribution as its equilibrium distribution

- Enables sampling from high-dimensional and complex posterior distributions

- Widely used in Bayesian inference, statistical physics, and machine learning

Gibbs sampling

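- MCMC method for multivariate distributions; a special case of Metropolis-Hastings in which every proposal is accepted

- Samples each variable (or block of variables) from its full conditional distribution given the current values of all other variables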

- Particularly useful for hierarchical models and models with conjugate priors

- Converges to the target distribution under mild conditions
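
A minimal Gibbs sampler for a case where the full conditionals are known exactly: a standard bivariate normal with correlation ρ, where $x \mid y \sim N(\rho y, 1 - \rho^2)$ and symmetrically for $y \mid x$. The target, seed, chain length, and burn-in are illustrative assumptions; the function names are hypothetical.

```python
import random
import statistics


def gibbs_bivariate_normal(rho, num_samples=20_000, burn_in=1_000, seed=1):
    """Gibbs sampler for a standard bivariate normal with correlation rho."""
    rng = random.Random(seed)
    cond_sd = (1 - rho**2) ** 0.5  # sd of x | y (and of y | x)
    x = y = 0.0
    draws = []
    for t in range(burn_in + num_samples):
        x = rng.gauss(rho * y, cond_sd)  # draw x from p(x | y)
        y = rng.gauss(rho * x, cond_sd)  # draw y from p(y | x), using the new x
        if t >= burn_in:
            draws.append((x, y))
    return draws


def sample_correlation(pairs):
    xs, ys = zip(*pairs)
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((a - mx) * (b - my) for a, b in pairs)
    var_x = sum((a - mx) ** 2 for a in xs)
    var_y = sum((b - my) ** 2 for b in ys)
    return cov / (var_x * var_y) ** 0.5


draws = gibbs_bivariate_normal(rho=0.8)
print(round(sample_correlation(draws), 2))  # should be close to 0.8
```

Each update uses the most recent value of the other coordinate; that sequential dependence is what makes the chain's stationary distribution the joint target.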

Metropolis-Hastings algorithm

- General-purpose MCMC algorithm for sampling from arbitrary target distributions

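- Proposes a new state $\theta'$ from a proposal distribution $q(\theta' \mid \theta)$ and accepts it with probability $\min\left(1, \dfrac{\pi(\theta')\,q(\theta \mid \theta')}{\pi(\theta)\,q(\theta' \mid \theta)}\right)$, where π is the target density; otherwise the chain stays at θ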

- Allows for sampling from distributions known only up to a normalizing constant

- Forms the basis for many advanced MCMC methods (Hamiltonian Monte Carlo, Reversible Jump MCMC)
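
A random-walk Metropolis-Hastings sketch. The target is assumed, for illustration, to be a Beta(9, 5) posterior given only as the unnormalized log density $8 \log\theta + 4 \log(1-\theta)$, so the normalizing constant never appears. Step size, seed, and burn-in are arbitrary choices, and the function names are hypothetical.

```python
import math
import random


def log_target(theta):
    """Unnormalized log density of a Beta(9, 5) posterior (example target, assumed)."""
    if not 0.0 < theta < 1.0:
        return -math.inf
    return 8 * math.log(theta) + 4 * math.log(1 - theta)


def metropolis_hastings(log_density, init, num_samples=50_000, step=0.1, seed=2):
    """Random-walk Metropolis-Hastings; needs the target only up to a constant."""
    rng = random.Random(seed)
    current, current_lp = init, log_density(init)
    samples, accepted = [], 0
    for _ in range(num_samples):
        proposal = current + rng.gauss(0.0, step)  # symmetric Gaussian proposal
        proposal_lp = log_density(proposal)
        # Symmetric proposal: the q-ratio cancels, leaving min(1, pi(proposal) / pi(current))
        accept_prob = math.exp(min(0.0, proposal_lp - current_lp))
        if rng.random() < accept_prob:
            current, current_lp = proposal, proposal_lp
            accepted += 1
        samples.append(current)
    return samples, accepted / num_samples


samples, acceptance_rate = metropolis_hastings(log_target, init=0.5)
kept = samples[1_000:]  # discard burn-in
print(sum(kept) / len(kept), acceptance_rate)  # mean should be near 9 / 14 ≈ 0.643
```

Working on the log scale avoids underflow, and proposals outside (0, 1) get log density −∞, so they are always rejected.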

Bayesian model selection

- Bayesian model selection provides a framework for comparing and choosing between competing models

- Incorporates model complexity and fit to data in a principled manner

- Allows for uncertainty quantification in model selection process

- Can compare non-nested models and quantify evidence in favor of simpler models, which frequentist significance tests do not directly provide

Bayes factors

- Ratio of marginal likelihoods of two competing models

- Quantify the relative evidence in favor of one model over another

- Interpretation based on scales proposed by Harold Jeffreys or Robert Kass and Adrian Raftery

- Calculated as: $BF_{12} = \dfrac{p(x \mid M_1)}{p(x \mid M_2)}$
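
A Bayes factor worked out in closed form, assuming hypothetical coin-flip data (7 heads in 10 flips). M0 fixes θ = 0.5; M1 places a Beta(a, b) prior on θ (uniform by default), whose evidence is $\binom{n}{k} B(k+a, n-k+b) / B(a, b)$. The value computed is $BF_{01} = p(x \mid M_0) / p(x \mid M_1)$; the helper names are illustrative.

```python
from math import comb, exp, lgamma, log


def log_beta_fn(a, b):
    return lgamma(a) + lgamma(b) - lgamma(a + b)


def log_evidence_point(k, n, theta0):
    """log p(x | M0) for a binomial model with theta fixed at theta0."""
    return log(comb(n, k)) + k * log(theta0) + (n - k) * log(1 - theta0)


def log_evidence_beta(k, n, a=1.0, b=1.0):
    """log p(x | M1) for a binomial model with a Beta(a, b) prior on theta."""
    return log(comb(n, k)) + log_beta_fn(k + a, n - k + b) - log_beta_fn(a, b)


k, n = 7, 10  # hypothetical data: 7 heads in 10 flips
bf01 = exp(log_evidence_point(k, n, 0.5) - log_evidence_beta(k, n))
print(round(bf01, 3))  # 1.289: weak evidence for the fair-coin model M0
```

Although 7 of 10 looks lopsided, the diffuse prior in M1 spreads its predictions over many outcomes, so the simpler model is slightly favored.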

Posterior model probabilities

- Probabilities assigned to each model after observing the data

- Incorporate prior model probabilities and Bayes factors

- Allow for direct statements about model plausibility

- Calculated using Bayes' theorem: $P(M_k \mid x) = \dfrac{p(x \mid M_k)\,P(M_k)}{\sum_j p(x \mid M_j)\,P(M_j)}$
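
A small sketch of the normalization, working with log evidences to avoid underflow. The inputs assume a hypothetical coin example (fair coin vs. uniform prior on θ, 7 heads in 10 flips) and equal prior model probabilities; `posterior_model_probs` is a hypothetical helper.

```python
import math


def posterior_model_probs(log_evidences, prior_probs):
    """P(M_k | x) from log p(x | M_k) and P(M_k), normalized over the candidate models."""
    log_weights = [le + math.log(p) for le, p in zip(log_evidences, prior_probs)]
    shift = max(log_weights)  # subtract the max before exponentiating (log-sum-exp trick)
    weights = [math.exp(lw - shift) for lw in log_weights]
    total = sum(weights)
    return [w / total for w in weights]


# Hypothetical evidences: fair coin vs. uniform prior on theta, 7 heads in 10 flips
log_ev = [math.log(120 / 1024), math.log(1 / 11)]
probs = posterior_model_probs(log_ev, prior_probs=[0.5, 0.5])
print([round(p, 3) for p in probs])  # [0.563, 0.437]
```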

Bayesian Information Criterion

- Large-sample approximation to $-2$ times the log marginal likelihood (ignoring terms that do not grow with the sample size)

- Balances model fit and complexity through a penalty term

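- Defined as: $\mathrm{BIC} = k \ln n - 2 \ln \hat{L}$, where k is the number of parameters, n the sample size, and $\hat{L}$ the maximized likelihood; lower values are preferred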

- Used for model selection when computing Bayes factors directly becomes infeasible
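
A sketch comparing two binomial models by BIC on hypothetical data (60 successes in 100 trials): one with θ fixed at 0.5 (no free parameters) and one with θ at its maximum likelihood estimate (one parameter). The quantity $\exp(\Delta\mathrm{BIC}/2)$ is used only as a rough Bayes factor, since BIC drops terms that do not grow with n.

```python
import math


def bic(log_likelihood: float, num_params: int, n: int) -> float:
    """BIC = k ln n - 2 ln L_hat; lower is better."""
    return num_params * math.log(n) - 2.0 * log_likelihood


def binomial_loglik(theta: float, k: int, n: int) -> float:
    return math.log(math.comb(n, k)) + k * math.log(theta) + (n - k) * math.log(1 - theta)


k, n = 60, 100  # hypothetical data: 60 successes in 100 trials
bic_fair = bic(binomial_loglik(0.5, k, n), num_params=0, n=n)    # theta fixed at 0.5
bic_free = bic(binomial_loglik(k / n, k, n), num_params=1, n=n)  # theta at its MLE
# exp(delta BIC / 2) gives a rough Bayes factor, here in favor of the fair-coin model
approx_bf_fair = math.exp((bic_free - bic_fair) / 2)
print(round(bic_free - bic_fair, 3), round(approx_bf_fair, 2))
```

The free model fits better, but the $\ln n$ penalty for its extra parameter outweighs the improvement in fit, so BIC slightly prefers the fixed model.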

Hierarchical Bayesian models

- Hierarchical models represent complex systems with multiple levels of uncertainty

- Allow for sharing of information across groups or subpopulations

- Provide a flexible framework for modeling structured data

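- Enable more robust inference: partial pooling shrinks noisy group-level estimates toward the population level, which often improves estimation for groups with little data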

Structure and components

- Multiple levels of parameters with dependencies between levels

- Lower-level parameters modeled as draws from distributions governed by higher-level parameters

- Typically represented as directed acyclic graphs (DAGs)

- Example structure (arrows point from parent to child): population-level parameters → group-level parameters → data

Hyperparameters

- Parameters that govern the distribution of other parameters in the model

- Often represent population-level characteristics or variability

- Allow for pooling of information across groups or individuals

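- Either assigned their own priors (hyperpriors) and estimated jointly with the other model parameters, or estimated directly from the data (empirical Bayes)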
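
The effect of hyperparameters on information pooling can be seen in a two-level normal model. This sketch assumes $\bar{y}_j \sim N(\theta_j, se_j^2)$ and $\theta_j \sim N(\mu, \tau^2)$, and treats μ and τ as known for simplicity (in a full analysis they would get hyperpriors or be estimated). The data and the helper name `partial_pooling` are hypothetical.

```python
def partial_pooling(group_means, group_ses, mu, tau):
    """Posterior means of group effects theta_j in a normal-normal hierarchical model.

    Assumes ybar_j ~ N(theta_j, se_j^2) and theta_j ~ N(mu, tau^2) with the
    hyperparameters mu and tau treated as known (a simplification).
    """
    estimates = []
    for ybar, se in zip(group_means, group_ses):
        w_data = 1.0 / se**2  # precision of the group's own data
        w_pop = 1.0 / tau**2  # precision of the population-level distribution
        estimates.append((w_data * ybar + w_pop * mu) / (w_data + w_pop))
    return estimates


# Hypothetical groups: the third has the noisiest estimate and is pulled hardest toward mu
est = partial_pooling(group_means=[10.0, 2.0, -4.0], group_ses=[5.0, 5.0, 10.0], mu=3.0, tau=5.0)
print(est)  # roughly [6.5, 2.5, 1.6]
```

Each estimate is a precision-weighted average of the group's own mean and the population mean; a smaller τ pulls all groups closer together.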

Applications in complex systems

- Multilevel regression models in social sciences and education research

- Random effects models in longitudinal studies and meta-analysis

- Spatial and spatiotemporal models in environmental sciences and epidemiology

- Topic models in natural language processing and text analysis

Bayesian decision theory

- Bayesian decision theory provides a framework for making optimal decisions under uncertainty

- Incorporates probability theory and utility theory to evaluate decision alternatives

- Allows for consideration of both prior knowledge and observed data in decision-making

- Widely applied in fields such as economics, finance, and artificial intelligence

Loss functions

- Quantify the cost or penalty associated with different decisions and outcomes

- Common loss functions include squared error, absolute error, and 0-1 loss

- Choice of loss function depends on the specific problem and decision-maker's preferences

- Mathematically represented as $L(\theta, a)$, where θ is the true state and a is the action taken

Utility functions

- Represent the decision-maker's preferences over different outcomes

- Measure the desirability of outcomes, so they play the opposite role to loss functions (for example, $U(\theta, a) = -L(\theta, a)$)

- Often assumed to be monotonic and continuous

- Examples include linear utility, logarithmic utility, and exponential utility functions

Optimal decision making

- Involves choosing actions that maximize expected utility or minimize expected loss

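- Posterior expected loss $\rho(a \mid x) = E[L(\theta, a) \mid x]$ averages the loss over the posterior distribution

- Bayes risk averages the loss over both the prior and the sampling distribution of the data

- Optimal (Bayes) decision rule minimizes the Bayes risk; choosing, for each observed x, the action that minimizes the posterior expected loss achieves this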

- Incorporates both prior knowledge and observed data through the posterior distribution
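
The choice of loss function changes the optimal action. This sketch minimizes a Monte Carlo estimate of posterior expected loss over a grid of candidate actions, assuming stand-in posterior draws from a skewed Gamma(2, 1) distribution. Under squared-error loss the minimizer lands near the posterior mean; under absolute-error loss, near the posterior median. The grid and function names are illustrative.

```python
import random
import statistics


def squared_loss(theta, a):
    return (theta - a) ** 2


def absolute_loss(theta, a):
    return abs(theta - a)


def bayes_action(samples, loss, actions):
    """Action minimizing the Monte Carlo estimate of posterior expected loss."""
    return min(actions, key=lambda a: statistics.fmean(loss(t, a) for t in samples))


rng = random.Random(3)
# Stand-in posterior draws from a right-skewed Gamma(2, 1) distribution (assumption)
draws = [rng.gammavariate(2.0, 1.0) for _ in range(2_000)]
actions = [i / 50 for i in range(251)]  # candidate actions 0.00, 0.02, ..., 5.00

best_sq = bayes_action(draws, squared_loss, actions)
best_abs = bayes_action(draws, absolute_loss, actions)
print(best_sq, statistics.fmean(draws))    # squared loss -> near the posterior mean
print(best_abs, statistics.median(draws))  # absolute loss -> near the posterior median
```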

Bayesian hypothesis testing

- Bayesian hypothesis testing provides an alternative to frequentist null hypothesis significance testing

- Allows for direct comparison of competing hypotheses or models

- Incorporates prior probabilities of hypotheses and observed data

- Provides more intuitive interpretation of evidence strength

Posterior odds

- Ratio of posterior probabilities of two competing hypotheses

- Combines prior odds with Bayes factor to update beliefs about hypotheses

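- Calculated as: $\dfrac{P(H_1 \mid x)}{P(H_0 \mid x)} = BF_{10} \times \dfrac{P(H_1)}{P(H_0)}$, where $BF_{10} = p(x \mid H_1) / p(x \mid H_0)$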

- Allows for direct statements about the relative plausibility of hypotheses
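
A short arithmetic sketch of "posterior odds = Bayes factor × prior odds", with hypothetical inputs: the data favor H1 by a factor of 3, but H1 starts with prior probability 0.2. The function names are illustrative.

```python
def posterior_odds(bf_10: float, prior_prob_h1: float) -> float:
    """Posterior odds of H1 vs H0 = BF_10 * prior odds."""
    prior_odds = prior_prob_h1 / (1.0 - prior_prob_h1)
    return bf_10 * prior_odds


def odds_to_probability(odds: float) -> float:
    return odds / (1.0 + odds)


# Hypothetical inputs: BF_10 = 3 (data favor H1), but H1 is a priori unlikely, P(H1) = 0.2
odds = posterior_odds(bf_10=3.0, prior_prob_h1=0.2)
print(round(odds, 4), round(odds_to_probability(odds), 4))  # 0.75 0.4286
```

Evidence favoring H1 is not enough on its own to make H1 more probable than H0 when the prior odds are low.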

Bayesian vs frequentist hypothesis tests

- Bayesian tests provide probabilities of hypotheses given data

- Frequentist tests calculate p-values: the probability, assuming the null hypothesis is true, of data at least as extreme as those observed

- Bayesian approach can quantify evidence in favor of the null hypothesis, not only against it

- Frequentist approach focuses on rejecting or failing to reject null hypothesis

- Bayesian tests can incorporate prior information and update beliefs sequentially

Multiple hypothesis testing

- Bayesian approach can address multiple comparisons within the model itself rather than through post hoc corrections

- Avoids issues of p-value adjustment in frequentist multiple testing

- Hierarchical models can be used to share information across tests

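- False discovery rate control through posterior probabilities of null hypotheses: the average posterior null probability of the selected hypotheses estimates the proportion of false discoveries among them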
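
One simple way to turn posterior null probabilities into a selection is to rank hypotheses by posterior null probability and keep adding them while the average over the selected set stays at or below a target α. This greedy rule is a sketch of one Bayesian FDR recipe, not the only one. The probabilities are made up and `bayesian_fdr_select` is a hypothetical name.

```python
def bayesian_fdr_select(post_null_probs, alpha=0.05):
    """Select hypotheses so the average posterior null probability of the selection stays <= alpha."""
    order = sorted(range(len(post_null_probs)), key=lambda i: post_null_probs[i])
    selected, running_sum = [], 0.0
    for count, i in enumerate(order, start=1):
        running_sum += post_null_probs[i]
        # Sorted ascending, so the running average can only grow; stop at the first violation
        if running_sum / count > alpha:
            break
        selected.append(i)
    return sorted(selected)


# Hypothetical posterior probabilities that each null hypothesis is true
p_null = [0.01, 0.30, 0.02, 0.08, 0.90, 0.04]
print(bayesian_fdr_select(p_null, alpha=0.05))  # [0, 2, 3, 5]
```

Hypothesis 3 (posterior null probability 0.08) is included even though it exceeds α on its own, because the average over the selected set is still 0.0375.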

Bayesian regression

- Bayesian regression extends classical regression models to incorporate prior information

- Provides full posterior distributions for regression coefficients and other parameters

- Allows for uncertainty quantification in parameter estimates and predictions

- Enables more flexible modeling approaches and improved inference in small sample settings

Linear regression models

- Bayesian formulation of standard linear regression model

- Prior distributions specified for regression coefficients and error variance

- Posterior distributions obtained through Bayes' theorem

- Enables probabilistic statements about regression parameters and predictions
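
A minimal conjugate sketch of Bayesian linear regression, simplified to a single slope with no intercept. It assumes $y = \beta x + \varepsilon$ with $\varepsilon \sim N(0, \sigma^2)$, σ known, and prior $\beta \sim N(0, \tau^2)$. The posterior precision is $\sum x_i^2 / \sigma^2 + 1/\tau^2$. The data and function names are hypothetical.

```python
def posterior_slope(xs, ys, sigma, tau):
    """Conjugate posterior for beta in y = beta * x + noise.

    Assumes noise ~ N(0, sigma^2) with sigma known and prior beta ~ N(0, tau^2).
    Returns (posterior mean, posterior variance).
    """
    precision = sum(x * x for x in xs) / sigma**2 + 1.0 / tau**2
    mean = (sum(x * y for x, y in zip(xs, ys)) / sigma**2) / precision
    return mean, 1.0 / precision


def predictive(x_new, mean, var, sigma):
    """Posterior predictive mean and variance of y at x_new."""
    return mean * x_new, x_new**2 * var + sigma**2


xs, ys = [1.0, 2.0, 3.0, 4.0], [2.1, 3.9, 6.2, 7.8]  # hypothetical data
m, v = posterior_slope(xs, ys, sigma=0.5, tau=10.0)
print(m, v)
print(predictive(5.0, m, v, sigma=0.5))
```

With a wide prior (τ = 10) the posterior mean sits just below the least-squares slope; the predictive variance adds parameter uncertainty to the noise variance σ².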

Logistic regression models

- Bayesian approach to modeling binary or categorical outcomes

- Prior distributions specified for logistic regression coefficients

- Posterior inference often requires MCMC methods due to non-conjugacy

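- Allows for more reliable inference under complete or quasi-complete separation, where the maximum likelihood estimate does not exist but a proper prior keeps the posterior concentrated on finite coefficient values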
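
A one-parameter illustration of separation. This sketch assumes perfectly separated, made-up data, a logistic model without intercept, and a N(0, 2.5²) prior on the slope (the prior scale is an arbitrary choice). The log-likelihood keeps increasing with the slope, so no MLE exists, while a grid approximation of the posterior mean stays finite. The function names are hypothetical.

```python
import math


def log_sigmoid(z):
    """Numerically stable log(1 / (1 + exp(-z)))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))


def log_likelihood(beta, xs, ys):
    # Logistic model without intercept: P(y = 1 | x) = sigmoid(beta * x)
    return sum(log_sigmoid(beta * x) if y == 1 else log_sigmoid(-beta * x) for x, y in zip(xs, ys))


def posterior_mean_on_grid(xs, ys, prior_sd=2.5, lo=-20.0, hi=20.0, num=4001):
    grid = [lo + (hi - lo) * i / (num - 1) for i in range(num)]
    log_post = [log_likelihood(b, xs, ys) - 0.5 * (b / prior_sd) ** 2 for b in grid]
    shift = max(log_post)  # stabilize before exponentiating
    weights = [math.exp(lp - shift) for lp in log_post]
    return sum(b * w for b, w in zip(grid, weights)) / sum(weights)


# Hypothetical, perfectly separated data: every x < 0 has y = 0 and every x > 0 has y = 1
xs = [-2.0, -1.0, -0.5, 0.5, 1.0, 2.0]
ys = [0, 0, 0, 1, 1, 1]
print(log_likelihood(5.0, xs, ys) < log_likelihood(10.0, xs, ys))  # True: the MLE diverges
print(posterior_mean_on_grid(xs, ys))  # finite under the N(0, 2.5^2) prior
```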

Model comparison and averaging

- Bayesian model comparison using Bayes factors or posterior model probabilities

- Model averaging combines predictions from multiple models weighted by their posterior probabilities

- Accounts for model uncertainty in inference and prediction

- Often improves predictive performance by incorporating information from multiple plausible models
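
A model-averaging sketch under a hypothetical coin example (7 heads in 10 flips, equal prior model probabilities). M0, a fair coin, predicts the next flip is heads with probability 0.5. M1, a uniform prior on θ, has posterior Beta(8, 4) and predicts 8/12. Each prediction is weighted by its posterior model probability; `model_average` is a hypothetical helper.

```python
def model_average(predictions, model_probs):
    """Bayesian model averaging: weight each model's prediction by P(M_k | x)."""
    if abs(sum(model_probs) - 1.0) > 1e-9:
        raise ValueError("model probabilities must sum to 1")
    return sum(p * w for p, w in zip(predictions, model_probs))


# Hypothetical coin example (7 heads in 10 flips):
#   M0: fair coin, predicts P(next head) = 0.5
#   M1: uniform prior on theta, posterior Beta(8, 4), predicts 8 / 12
bf01 = (120 / 1024) / (1 / 11)  # evidence ratio p(x | M0) / p(x | M1)
w0 = bf01 / (1 + bf01)          # P(M0 | x) with equal prior model probabilities
prediction = model_average([0.5, 8 / 12], [w0, 1 - w0])
print(round(prediction, 3))  # 0.573, between the two models' predictions
```

Rather than committing to the slightly favored fair-coin model, the averaged prediction hedges between the two models in proportion to their posterior support.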