Big Data in Hydrologic Analysis

Big data is reshaping how hydrologists study water systems. By dramatically increasing both the spatial and temporal resolution of observations, large-scale datasets let us capture variability that older, sparser monitoring networks simply missed. But working with these datasets brings real challenges in storage, quality control, and computing power.

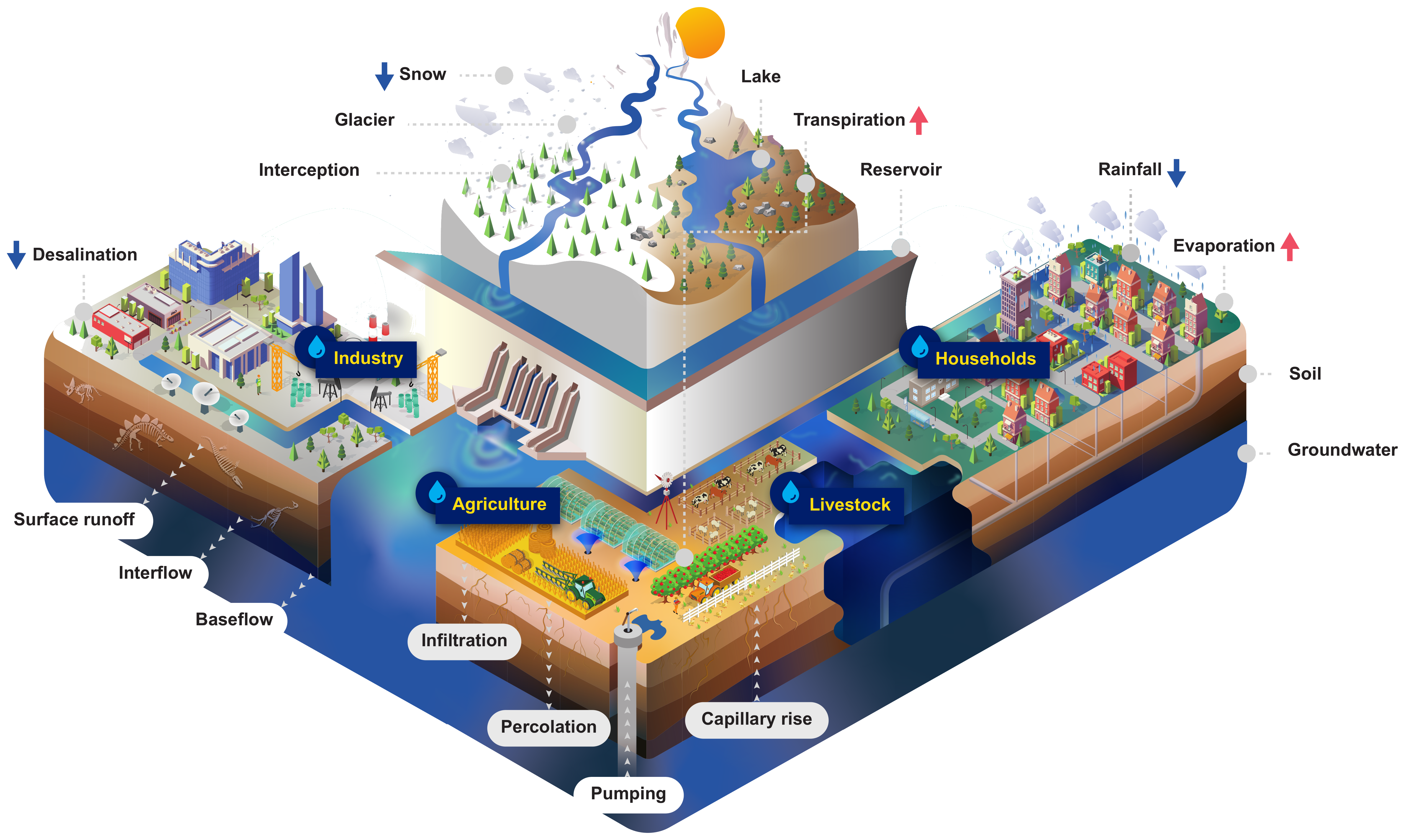

Sources of Big Data in Hydrology

High-resolution remote sensing provides spatially continuous coverage of hydrologic variables. Satellite imagery can track snow cover, soil moisture, and land use change across entire watersheds, while LiDAR generates detailed elevation models used for floodplain mapping and terrain analysis. The key advantage is capturing spatial heterogeneity: real landscapes aren't uniform, and high-resolution data captures that variability.

Crowdsourced observations fill gaps that traditional monitoring networks can't cover. Citizen science projects (like CoCoRaHS for precipitation) and even social media reports during flood events increase spatial data density. This type of data also opens the door to real-time monitoring and early warning systems, since reports can arrive faster than official gauge readings.

Sensor networks provide continuous, automated monitoring of variables like precipitation, streamflow, and groundwater levels. Weather stations and stream gauges are classic examples, but newer deployments include soil moisture probes, water quality sondes, and low-cost IoT sensors. These networks improve our understanding of hydrologic processes across scales, from hillslope to continental.

Data Management Challenges

Collecting massive volumes of data is only useful if you can actually work with it. Three core challenges stand out:

- Storage and infrastructure. Hydrologic big data can reach terabytes or more. Cloud computing platforms and data warehouses have become standard solutions, but they introduce costs and require careful data architecture.

- Quality control and consistency. Data from different sensors, platforms, and citizen observers will have different accuracies, formats, and reporting intervals. Ensuring consistency requires robust QA/QC pipelines before any analysis begins.

- Data integration and harmonization. Combining satellite imagery with ground-based gauge data, for instance, means reconciling different spatial resolutions and temporal frequencies. Techniques like data fusion and spatial interpolation help bridge these mismatches, but each introduces its own assumptions.
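
To make the quality control and integration steps concrete, here is a minimal sketch in plain Python. It applies a simple range check to gauge readings and then estimates a value at an ungauged location with inverse distance weighting (IDW), one common spatial interpolation technique. The gauge coordinates, values, the -999 missing-data code, and the acceptable range are all made up for illustration, and the function names are hypothetical.

```python
import math

def range_check(readings, low, high):
    """Keep only readings whose value lies within [low, high]."""
    return [r for r in readings if low <= r["value"] <= high]

def idw(x, y, gauges, power=2.0):
    """Inverse-distance-weighted estimate at (x, y) from gauge readings."""
    if not gauges:
        raise ValueError("no gauges left after quality control")
    weighted_sum = 0.0
    weight_total = 0.0
    for g in gauges:
        d = math.hypot(x - g["x"], y - g["y"])
        if d == 0.0:
            return g["value"]  # target sits exactly on a gauge
        w = 1.0 / d ** power   # closer gauges get larger weights
        weighted_sum += w * g["value"]
        weight_total += w
    return weighted_sum / weight_total

# Illustrative (made-up) daily precipitation readings in mm.
gauges = [
    {"x": 0.0, "y": 0.0, "value": 10.0},
    {"x": 10.0, "y": 0.0, "value": 20.0},
    {"x": 5.0, "y": 5.0, "value": -999.0},  # assumed missing-data code
]

clean = range_check(gauges, low=0.0, high=500.0)  # assumed plausible range
estimate = idw(5.0, 0.0, clean)
print(len(clean), estimate)
```

Note the assumption baked into IDW: influence decays smoothly with distance and ignores terrain. That is often a poor fit for mountain precipitation, which is exactly the kind of assumption the last bullet warns about.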

Computational requirements scale with data volume. Processing large remote sensing datasets or running machine learning algorithms on high-dimensional inputs often demands high-performance computing, parallel processing, or GPU acceleration. Efficient algorithms matter just as much as raw computing power: a method that makes a single pass over the data can be practical where one that needs everything in memory is not.
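
One small illustration of algorithmic efficiency: the sketch below computes a mean and variance in a single pass using Welford's algorithm, so the full record never has to sit in memory. The `flow_stream` generator is a hypothetical stand-in for a large file or live sensor feed, and its values are made up.

```python
def running_stats(values):
    """Single-pass count, mean, and sample variance (Welford's algorithm)."""
    n = 0
    mean = 0.0
    m2 = 0.0  # running sum of squared deviations from the current mean
    for v in values:
        n += 1
        delta = v - mean
        mean += delta / n
        m2 += delta * (v - mean)
    variance = m2 / (n - 1) if n > 1 else 0.0
    return n, mean, variance

def flow_stream():
    """Hypothetical stand-in for a data source too large to load at once."""
    for v in (2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0):
        yield v

n, mean, variance = running_stats(flow_stream())
print(n, mean, variance)
```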

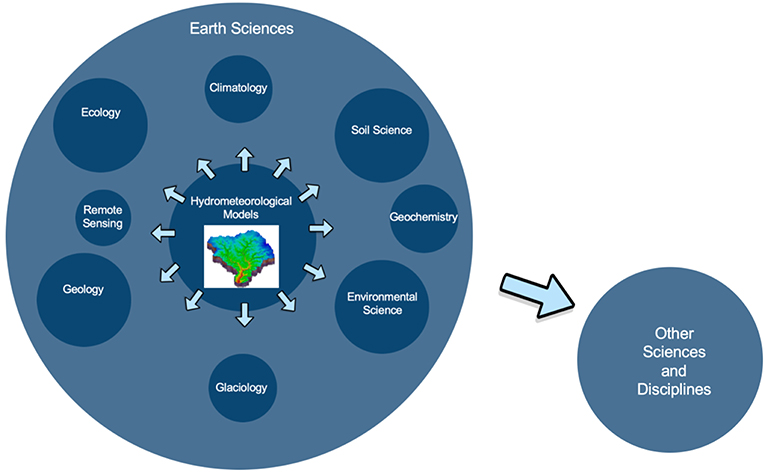

Machine Learning in Hydrologic Modeling

Machine learning (ML) gives hydrologists a set of powerful, data-driven tools for prediction. These algorithms learn patterns directly from data rather than relying on explicit physical equations. Three of the most widely used approaches in hydrology are artificial neural networks, support vector machines, and random forests.

Core Machine Learning Methods

Artificial Neural Networks (ANNs) are loosely inspired by biological neurons. They consist of layers of interconnected nodes that learn to map inputs to outputs by adjusting connection weights during training. ANNs excel at capturing complex, nonlinear relationships, which makes them well-suited for:

- Rainfall-runoff modeling (predicting streamflow from precipitation and other inputs)

- Groundwater level prediction

- Water quality forecasting
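
As a rough sketch of what "adjusting connection weights during training" means, the example below trains a tiny network (one input, four hidden nodes, one output) with stochastic gradient descent in plain Python. The rainfall and runoff values are synthetic, the network size and learning rate are arbitrary choices, and real applications would normally use an established ML library with many more inputs.

```python
import math
import random

random.seed(0)

# Synthetic, made-up data: runoff rises nonlinearly with rainfall (both scaled 0..1).
rain = [i / 20 for i in range(21)]
runoff = [r ** 2 for r in rain]

H = 4  # number of hidden nodes (arbitrary)
w1 = [random.uniform(-1, 1) for _ in range(H)]  # input -> hidden weights
b1 = [0.0] * H                                  # hidden biases
w2 = [random.uniform(-1, 1) for _ in range(H)]  # hidden -> output weights
b2 = 0.0                                        # output bias

def forward(x):
    """Return hidden activations and the network output for one input."""
    hidden = [math.tanh(w1[j] * x + b1[j]) for j in range(H)]
    out = sum(w2[j] * hidden[j] for j in range(H)) + b2
    return hidden, out

def mse():
    """Mean squared error over the whole training set."""
    return sum((forward(x)[1] - y) ** 2 for x, y in zip(rain, runoff)) / len(rain)

loss_before = mse()
lr = 0.05  # learning rate (arbitrary)
for epoch in range(2000):
    for x, y in zip(rain, runoff):
        hidden, out = forward(x)
        err = out - y  # derivative of 0.5 * err^2 with respect to the output
        for j in range(H):
            # Backpropagate through tanh before touching w2[j].
            grad_hidden = err * w2[j] * (1 - hidden[j] ** 2)
            w2[j] -= lr * err * hidden[j]
            w1[j] -= lr * grad_hidden * x
            b1[j] -= lr * grad_hidden
        b2 -= lr * err
loss_after = mse()

print(loss_before, loss_after)
```

The error shrinks as the weights are nudged against the gradient, which is all that "learning" means here. Nothing in the network encodes any hydrologic process.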

Support Vector Machines (SVMs) are supervised learning algorithms used for both classification and regression. For classification, an SVM finds the hyperplane that separates the classes with the largest margin; for regression, it fits a function that keeps most observations within a set tolerance of the prediction. In hydrology, SVMs have been applied to:

- Flood forecasting

- Drought monitoring and classification

- Soil moisture estimation from remote sensing inputs
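
The classification case can be sketched with a linear SVM trained by sub-gradient descent on the regularized hinge loss. This is a simplified teaching version, not how SVM libraries are implemented internally. The two features (scaled rainfall and soil moisture), the flood labels, and the hyperparameters are all made up for illustration.

```python
def train_linear_svm(X, y, lam=0.01, lr=0.1, epochs=500):
    """Sub-gradient descent on the regularized hinge loss (labels must be +1/-1)."""
    w = [0.0] * len(X[0])
    b = 0.0
    for _ in range(epochs):
        for xi, yi in zip(X, y):
            margin = yi * (sum(wj * xj for wj, xj in zip(w, xi)) + b)
            if margin < 1:
                # Inside the margin or misclassified: the hinge term is active.
                w = [wj + lr * (yi * xj - lam * wj) for wj, xj in zip(w, xi)]
                b += lr * yi
            else:
                # Correct with room to spare: only regularization shrinks w.
                w = [wj - lr * lam * wj for wj in w]
    return w, b

def predict(w, b, x):
    """Classify by which side of the hyperplane x falls on."""
    return 1 if sum(wj * xj for wj, xj in zip(w, x)) + b >= 0 else -1

# Made-up samples: (scaled rainfall, scaled soil moisture) -> flood (+1) or no flood (-1).
X = [(0.1, 0.2), (0.2, 0.1), (0.3, 0.3), (0.2, 0.4),
     (0.8, 0.7), (0.9, 0.9), (0.7, 0.8), (0.6, 0.9)]
y = [-1, -1, -1, -1, 1, 1, 1, 1]

w, b = train_linear_svm(X, y)
print(predict(w, b, (0.15, 0.15)), predict(w, b, (0.85, 0.85)))
```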

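Random Forests (RFs) are ensemble methods built from many individual decision trees. Each tree is trained on a bootstrap sample of the data (drawn at random with replacement), typically considering only a random subset of the input variables at each split, and the final prediction aggregates results across all trees: an average for regression, a majority vote for classification. This aggregation reduces overfitting compared to a single decision tree. RF applications include: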

- Streamflow prediction

- Evapotranspiration estimation

- Landslide susceptibility mapping
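
The "many trees, then aggregate" idea can be sketched with bagged depth-1 regression trees (stumps). This is a heavily simplified stand-in for a random forest: a real one grows deeper trees and also randomizes which input variables each split may use. The precipitation index and streamflow values are made up, and all function names are hypothetical.

```python
import random

def fit_stump(xs, ys):
    """Depth-1 regression tree: choose the threshold with the lowest squared error."""
    best = None
    for t in sorted(set(xs))[:-1]:  # skip the largest value so both sides are non-empty
        left = [y for x, y in zip(xs, ys) if x <= t]
        right = [y for x, y in zip(xs, ys) if x > t]
        lm, rm = sum(left) / len(left), sum(right) / len(right)
        sse = sum((y - lm) ** 2 for y in left) + sum((y - rm) ** 2 for y in right)
        if best is None or sse < best[0]:
            best = (sse, t, lm, rm)
    if best is None:  # every x in this sample was identical
        m = sum(ys) / len(ys)
        return (xs[0], m, m)
    return best[1:]

def predict_stump(stump, x):
    t, left_mean, right_mean = stump
    return left_mean if x <= t else right_mean

def fit_forest(xs, ys, n_trees=50, seed=0):
    """Train each stump on its own bootstrap sample (drawn with replacement)."""
    rng = random.Random(seed)
    n = len(xs)
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]
        forest.append(fit_stump([xs[i] for i in idx], [ys[i] for i in idx]))
    return forest

def predict_forest(forest, x):
    """Aggregate by averaging the individual tree predictions."""
    return sum(predict_stump(s, x) for s in forest) / len(forest)

# Made-up data: precipitation index -> streamflow, with a step between 5 and 6.
xs = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
ys = [1.0, 1.2, 0.9, 1.1, 1.0, 5.0, 5.2, 4.9, 5.1, 5.0]

forest = fit_forest(xs, ys)
low = predict_forest(forest, 2)
high = predict_forest(forest, 9)
print(low, high)
```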

Machine Learning vs. Process-Based Models

This is one of the most important comparisons in modern hydrology. Both approaches have clear strengths and weaknesses.

ML models learn patterns directly from data. They often achieve higher prediction accuracy than process-based models, especially for complex, nonlinear systems with plenty of training data. But they're frequently called "black box" models because it's difficult to inspect why they produce a given output. Deep learning models and large ensembles are particularly hard to interpret.

Process-based models are built on physical laws: mass balance, energy balance, flow routing equations. They may not match ML models in raw predictive accuracy for every task, but they offer something ML models typically can't: interpretability. You can trace a result back to specific physical mechanisms, which matters for understanding system behavior under novel conditions (like climate change scenarios the model was never trained on).
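
For contrast with the ML methods, here is a minimal process-based sketch: a single linear reservoir, where storage is updated by an explicit mass balance and outflow is proportional to storage. The recession coefficient `k`, the daily time step, and the precipitation series are assumptions chosen for illustration, not values from any real catchment.

```python
def linear_reservoir(precip, k=0.2, storage0=0.0):
    """Daily mass balance for one bucket: S_new = S + P - Q, with Q = k * S."""
    storage = storage0
    flows = []
    for p in precip:
        storage += p       # precipitation enters storage (mm)
        q = k * storage    # outflow proportional to current storage (mm/day)
        storage -= q       # outflow leaves storage
        flows.append(q)
    return flows, storage

precip = [10.0, 0.0, 0.0, 5.0, 0.0]  # made-up daily precipitation, mm
flows, final_storage = linear_reservoir(precip)

# Mass balance check: everything that came in either left or is still stored.
residual = sum(precip) - sum(flows) - final_storage
print(flows, final_storage, residual)
```

Every number here traces back to a physical statement (water in, water out, water stored). That is the interpretability advantage in miniature, and it is why the model still behaves sensibly for a storm larger than any in the record.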

A practical takeaway: ML models tend to perform best when you have abundant training data and your goal is prediction within the range of observed conditions. Process-based models are more trustworthy for extrapolation and for building physical understanding. Hybrid approaches that combine both are an active area of research.

Ethics of Data-Driven Hydrology

Using big data and ML in hydrology raises ethical questions that go beyond model performance.

Privacy and data use. Crowdsourced data may contain location information or personal details. Responsible use requires clear data governance policies.

Bias in data and algorithms. ML models learn from the data they're trained on. If monitoring stations are concentrated in wealthy regions, the model may perform poorly in underserved areas. Three types of bias to watch for:

- Sampling bias from uneven spatial coverage in data collection

- Algorithmic bias from model architectures or training procedures that favor certain patterns

- Historical bias when past data reflects inequitable water management practices

Strategies for responsible practice:

- Clearly communicate model assumptions, limitations, and uncertainties in any results

- Use diverse, representative datasets for training and validation

- Regularly audit models for bias and fairness across different regions or populations

- Engage affected stakeholders and communities in the modeling process

- Develop and follow guidelines for transparent, accountable use of ML in hydrology
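
A basic version of the audit step might look like the sketch below, which computes root-mean-square error separately for each group instead of reporting one overall score. The "urban" and "rural" labels and all observed and predicted values are made up, and `rmse_by_group` is a hypothetical helper.

```python
import math
from collections import defaultdict

def rmse_by_group(records):
    """records: iterable of (group, observed, predicted). Returns {group: RMSE}."""
    squared_errors = defaultdict(list)
    for group, observed, predicted in records:
        squared_errors[group].append((observed - predicted) ** 2)
    return {g: math.sqrt(sum(v) / len(v)) for g, v in squared_errors.items()}

# Made-up validation results: (region type, observed stage, predicted stage).
records = [
    ("urban", 10.0, 11.0), ("urban", 20.0, 19.0), ("urban", 15.0, 15.0),
    ("rural", 10.0, 14.0), ("rural", 20.0, 16.0),
]

scores = rmse_by_group(records)
print(scores)
```

A single pooled RMSE would hide the gap between the two groups, and the group with fewer stations would also carry less weight in that pooled number.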

These aren't just abstract principles. A flood forecasting model that works well in data-rich urban areas but fails in rural communities can have life-or-death consequences. The ethical dimension of data-driven hydrology is inseparable from the technical one.