Unification for Resolution

Understanding Unification in First-Order Logic

Unification is the process of finding a substitution that makes two logical expressions syntactically identical. In the context of resolution, this is what lets you combine clauses that contain complementary literals and derive new clauses from them.

Two literals are complementary when one is the negation of the other and they can be unified. For instance, and are complementary because the substitution makes identical to , which directly contradicts . Without unification, resolution would only work on ground (variable-free) literals, which severely limits its power.

Most General Unifier (MGU)

When two expressions can be unified, there are often many substitutions that work. The most general unifier (MGU) is the one that commits to as little as possible while still making the expressions identical. Any other valid unifier is just a more specific instance of the MGU.

- Given and , the MGU is . Notice that remains a free variable; the MGU doesn't bind it to anything because it doesn't need to.

- A substitution like also unifies these expressions, but it's more specific than necessary. The MGU keeps maximum generality, which matters for the efficiency of the resolution process.

If a set of clauses is unsatisfiable, the resolution algorithm can derive the empty clause () using unification. Deriving constitutes a proof of unsatisfiability.

Applying the Unification Algorithm

Recursive Matching of Terms

The unification algorithm takes two expressions as input and attempts to build a substitution that makes them identical. Here's how it proceeds:

- Compare outermost symbols. If both expressions are the same constant or the same function symbol with the same arity, proceed to their arguments. If they're different function symbols (or different arities), unification fails immediately.

- Handle variables. If one expression is a variable, bind it to the other expression (subject to the occurs check, described below). Apply this binding throughout both expressions before continuing.

- Recurse on arguments. For function terms, unify corresponding arguments left to right, accumulating bindings as you go. Each new binding is applied to all remaining arguments before the next comparison.

For example, to unify and :

- The outermost symbol matches in both, so recurse on the argument pairs.

- First argument pair: and . Bind .

- Second argument pair: and . The symbol matches, so recurse. Bind .

- Result: MGU is .

Occurs Check and Failure Conditions

Unification can fail in two ways:

- Symbol clash: Two non-variable terms have different outermost function symbols or constants (e.g., and ).

- Occurs check failure: A variable would need to be bound to a term that contains that same variable, producing an infinite term.

The occurs check is the subtler failure. Consider unifying with . The first argument pair gives . Applying this, the second pair becomes: unify with . This would require , but appears inside . Allowing this would create the infinite structure , so unification correctly fails.

If no failure occurs, the algorithm returns the MGU.

Implementing Resolution with Unification

Applying the Resolution Inference Rule

The resolution rule combines two clauses that contain complementary literals. The full procedure for a single resolution step:

- Select two clauses from your clause set, say and .

- Identify a complementary pair: a literal in and a literal in (or vice versa) such that and are unifiable.

- Compute the MGU of and .

- Form the resolvent: Apply to all literals in both clauses, then remove the complementary pair. The resolvent is .

Example: Given clauses and :

- Complementary pair: and .

- MGU: .

- Apply MGU to both clauses: and .

- Remove the complementary literals and .

- Resolvent: .

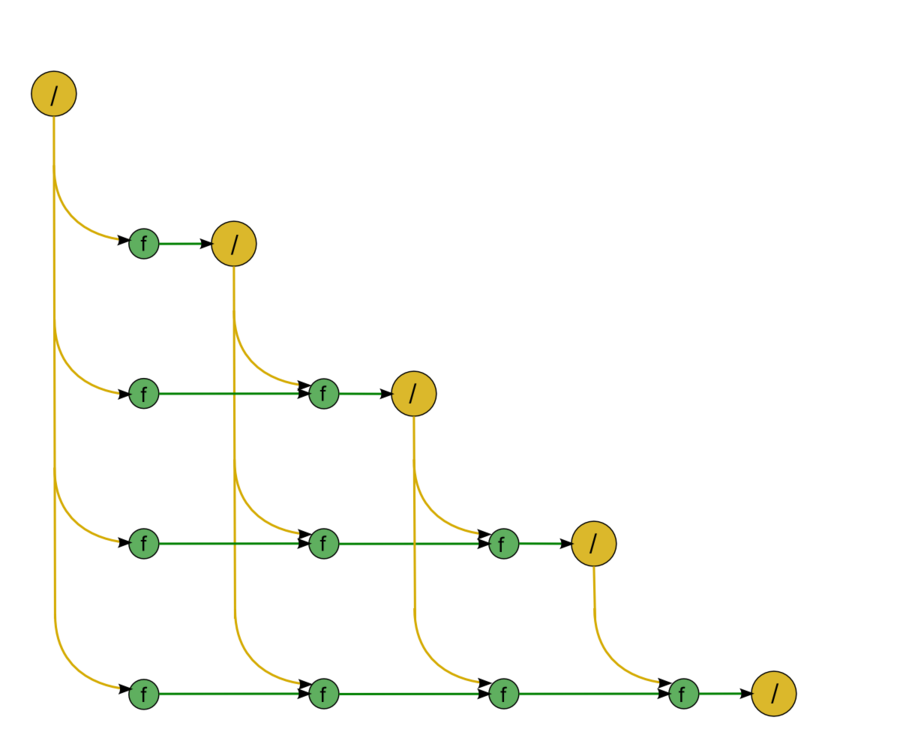

Systematic Exploration and Unsatisfiability Proof

The full resolution algorithm works as follows:

- Start with the initial set of clauses .

- Select two clauses from that contain a complementary pair.

- Compute the resolvent and add it to .

- If the resolvent is the empty clause , stop: the original clause set is unsatisfiable.

- If no new resolvents can be generated (every possible resolution has been tried), stop: the clause set is satisfiable, and resolution cannot derive a contradiction.

- Otherwise, return to step 2.

Deriving means you resolved two unit clauses like and where and unify. Once both literals are removed, nothing remains, giving you the empty clause.

Unification's Role in Resolution Efficiency

Efficiency Benefits of Unification

The MGU keeps derived clauses as general as possible, which directly reduces redundant work. Consider clauses and . Unification yields MGU (or equivalently ), producing the resolvent . This single resolvent covers every ground instance at once. If you had instead picked a specific constant and resolved with , you'd get , which only covers one case. You'd need to repeat the resolution for every constant in the domain.

Unification also prunes the search space: when two literals fail to unify, you know immediately that no resolution is possible between those clauses on those literals, so you skip them entirely.

Optimizations and Challenges

Even with MGUs, the resolution search space can grow rapidly. Several factors affect performance:

- Term depth: Deeply nested function terms increase the cost of each unification call and can produce large, complex resolvents.

- Variable count: Many variables in a clause increase the number of potential unification targets.

Practical resolution systems use additional techniques to manage this:

- Indexing organizes clauses by their predicate symbols and argument structure, so you can quickly find candidate clauses for resolution instead of checking every pair.

- Subsumption removes clauses that are strictly less general than another clause already in the set. If is in your clause set, there's no reason to keep since it's a special case.

- Linear-time unification algorithms (such as those based on union-find structures) keep the per-step cost of unification low even for large terms.