Propositional Logic Fundamentals

Propositional logic is the foundation of formal reasoning. It deals with declarative statements that are either true or false, and it uses logical connectives to build complex propositions from simpler ones. Since this is a Formal Logic II course, you should already be familiar with most of this material. This review sharpens those fundamentals and highlights the details that matter most going forward, especially around well-formed formulas, equivalence laws, and inference rules.

Basic Components and Truth Values

A proposition (or statement) is a declarative sentence that has exactly one truth value: true or false. "The sky is blue" is a proposition. "Close the door" is not, because commands don't carry truth values. Questions and exclamations are also excluded.

The five basic logical connectives:

- Negation ($\neg$): "not"

- Conjunction ($\land$): "and"

- Disjunction ($\lor$): "or" (inclusive or)

- Implication ($\to$): "if...then"

- Biconditional ($\leftrightarrow$): "if and only if"

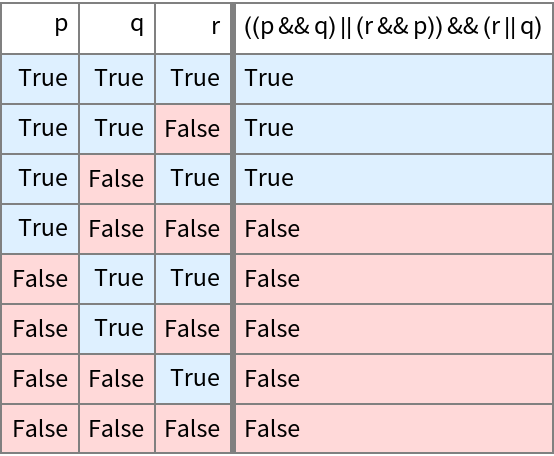

Truth tables systematically determine the truth value of a compound proposition for every possible combination of truth values of its components. For $n$ propositional variables, the table has $2^n$ rows.
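As a minimal sketch of how such a table can be enumerated, the snippet below lists every assignment for a formula given as a Python function. The helper name `truth_table` and the choice to encode implication as `(not p) or q` are assumptions made for illustration, not part of the course notation.

```python
from itertools import product

def truth_table(variables, formula):
    """Return one (assignment, value) pair per row of the truth table.

    `variables` is a list of names; `formula` takes one bool per variable.
    """
    rows = []
    # product(...) yields all 2**n combinations of True/False.
    for values in product([True, False], repeat=len(variables)):
        assignment = dict(zip(variables, values))
        rows.append((assignment, formula(*values)))
    return rows

# Illustrative formula: "if p then q", encoded as (not p) or q.
table = truth_table(["p", "q"], lambda p, q: (not p) or q)
for assignment, value in table:
    print(assignment, value)
```

With two variables the loop produces four rows, and with three it would produce eight.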

Two special categories of compound propositions worth remembering:

- A tautology is true under every possible assignment of truth values (e.g., $p \lor \neg p$).

- A contradiction is false under every possible assignment (e.g., $p \land \neg p$).

A proposition that is neither a tautology nor a contradiction is called a contingency.
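The three categories can be told apart mechanically by looking at the final column of the truth table. The following is a small sketch under that idea; the function name `classify` is hypothetical.

```python
from itertools import product

def classify(num_vars, formula):
    """Label a formula by inspecting its value on every assignment."""
    values = [formula(*row) for row in product([True, False], repeat=num_vars)]
    if all(values):
        return "tautology"       # true in every row
    if not any(values):
        return "contradiction"   # false in every row
    return "contingency"         # true in some rows, false in others

print(classify(1, lambda p: p or not p))    # tautology
print(classify(1, lambda p: p and not p))   # contradiction
print(classify(2, lambda p, q: p and q))    # contingency
```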

Logical Equivalence

Two propositions are logically equivalent when they share the same truth value under every possible truth-value assignment to their components. This is denoted $p \equiv q$ (some texts use $p \Leftrightarrow q$). Note that $\equiv$ is a metalogical symbol, not a connective within the object language.

A classic example: $p \to q \equiv \neg p \lor q$. You can verify this by constructing truth tables for both sides and confirming every row matches.

The key practical consequence: if two formulas are logically equivalent, you can substitute one for the other anywhere in a larger formula without changing that formula's truth value. This substitutability is what makes equivalence laws so powerful in proofs and simplifications.
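The row-by-row check and the substitution property can both be illustrated with a short brute-force comparison. This is a sketch only: the table `IMPLIES` spells out the truth table of implication directly, and the pair of formulas compared ("if p then q" versus "not p, or q") is chosen here as an example.

```python
from itertools import product

# Truth table of implication, written out explicitly.
IMPLIES = {
    (True, True): True,
    (True, False): False,
    (False, True): True,
    (False, False): True,
}

def equivalent(num_vars, f, g):
    """True when f and g agree on every assignment."""
    return all(f(*row) == g(*row)
               for row in product([True, False], repeat=num_vars))

# "if p then q" versus "(not p) or q".
same = equivalent(2, lambda p, q: IMPLIES[(p, q)],
                     lambda p, q: (not p) or q)

# Substitution: swapping one for the other inside a larger formula
# leaves the larger formula's truth table unchanged.
same_in_context = equivalent(
    3,
    lambda p, q, r: IMPLIES[(p, q)] and r,
    lambda p, q, r: ((not p) or q) and r,
)
print(same, same_in_context)
```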

Constructing Well-Formed Formulas

Propositional Variables and Atomic Formulas

Propositional variables, typically lowercase letters like $p$, $q$, $r$, stand in for simple propositions. For instance, let $p$ represent "It is raining" and $q$ represent "I have an umbrella."

An atomic formula is just a single propositional variable on its own (e.g., $p$, $q$). These are the most basic well-formed formulas and serve as the building blocks for everything more complex.

Formation Rules and Compound Formulas

A well-formed formula (wff) is any expression built from propositional variables and logical connectives according to the following recursive formation rules:

- Every propositional variable is a wff.

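- If $\phi$ is a wff, then $\neg\phi$ is a wff.

- If $\phi$ and $\psi$ are wffs, then $(\phi \land \psi)$, $(\phi \lor \psi)$, $(\phi \to \psi)$, and $(\phi \leftrightarrow \psi)$ are wffs.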

- Nothing else is a wff.

These rules guarantee that every wff is syntactically unambiguous. Compound formulas like $\neg p$, $(p \land q)$, and $((p \lor q) \to \neg r)$ are all built by applying these rules.

The main connective (or dominant operator) of a wff is the connective that was applied last in its construction. It governs the truth value of the entire formula. In $(p \land q) \to r$, the main connective is $\to$.
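The recursive formation rules map naturally onto a recursive data type, where the outermost node of the tree is the main connective. The sketch below is one possible encoding; the class names `Var`, `Not`, `BinOp` and the operator labels are hypothetical choices, not standard notation.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Var:            # rule 1: every propositional variable is a wff
    name: str

@dataclass(frozen=True)
class Not:            # rule 2: negation of a wff is a wff
    operand: object

@dataclass(frozen=True)
class BinOp:          # rule 3: two wffs joined by a binary connective
    op: str           # one of "and", "or", "implies", "iff"
    left: object
    right: object

def evaluate(wff, assignment):
    """Compute the truth value of a wff under a dict of variable values."""
    if isinstance(wff, Var):
        return assignment[wff.name]
    if isinstance(wff, Not):
        return not evaluate(wff.operand, assignment)
    if isinstance(wff, BinOp):
        a = evaluate(wff.left, assignment)
        b = evaluate(wff.right, assignment)
        if wff.op == "and":
            return a and b
        if wff.op == "or":
            return a or b
        if wff.op == "implies":
            return (not a) or b
        if wff.op == "iff":
            return a == b
        raise ValueError(f"unknown connective: {wff.op}")
    raise TypeError("not a well-formed formula")  # rule 4: nothing else

def main_connective(wff):
    """The connective applied last, i.e. the root of the tree."""
    if isinstance(wff, Var):
        return None
    return "not" if isinstance(wff, Not) else wff.op

# (p and q) implies r
formula = BinOp("implies", BinOp("and", Var("p"), Var("q")), Var("r"))
print(main_connective(formula))
print(evaluate(formula, {"p": True, "q": True, "r": False}))
```

Because the root node is evaluated last, its connective determines how the values of the two subformulas combine into the value of the whole formula.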

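Parentheses disambiguate the order of operations. $(p \land q) \lor r$ and $p \land (q \lor r)$ have different truth tables. When parentheses are dropped, a standard precedence convention applies (from highest to lowest binding strength): $\neg$, $\land$, $\lor$, $\to$, $\leftrightarrow$. So $\neg p \land q \to r$ is read as $((\neg p) \land q) \to r$.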
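To see how a precedence convention restores the dropped parentheses, here is a small precedence-climbing sketch. It assumes the common ordering (negation binds tightest, then conjunction, disjunction, implication, biconditional) and an ASCII spelling of the connectives (`~`, `&`, `|`, `->`, `<->`). Both the spelling and the function names are assumptions. Grouping of repeated connectives such as `p -> q -> r` is not fixed by the convention above; this sketch groups them to the left purely for simplicity.

```python
import re

# Binding strength of binary connectives: higher binds tighter.
PRECEDENCE = {"&": 4, "|": 3, "->": 2, "<->": 1}
TOKEN = re.compile(r"\s*(<->|->|[~&|()]|[a-z]\w*)")

def tokenize(text):
    tokens, pos = [], 0
    text = text.rstrip()
    while pos < len(text):
        match = TOKEN.match(text, pos)
        if not match:
            raise ValueError(f"unexpected input at position {pos}")
        tokens.append(match.group(1))
        pos = match.end()
    return tokens

def parenthesize(text):
    """Return the formula with every implicit grouping made explicit."""
    tokens = tokenize(text)
    pos = 0

    def parse_unary():
        nonlocal pos
        if pos >= len(tokens):
            raise ValueError("unexpected end of formula")
        token = tokens[pos]
        pos += 1
        if token == "~":                      # negation binds tightest
            return f"(~{parse_unary()})"
        if token == "(":
            inner = parse_binary(1)
            if pos >= len(tokens) or tokens[pos] != ")":
                raise ValueError("missing ')'")
            pos += 1
            return inner
        if token in PRECEDENCE or token == ")":
            raise ValueError(f"unexpected token {token!r}")
        return token                          # a propositional variable

    def parse_binary(min_prec):
        nonlocal pos
        left = parse_unary()
        while pos < len(tokens) and PRECEDENCE.get(tokens[pos], 0) >= min_prec:
            op = tokens[pos]
            pos += 1
            # Assumption: equal-precedence chains group to the left.
            right = parse_binary(PRECEDENCE[op] + 1)
            left = f"({left} {op} {right})"
        return left

    result = parse_binary(1)
    if pos != len(tokens):
        raise ValueError(f"unexpected token {tokens[pos]!r}")
    return result

print(parenthesize("~p & q -> r"))   # (((~p) & q) -> r)
print(parenthesize("p & q | r"))     # ((p & q) | r)
```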

Evaluating Truth Values

Key Equivalence Laws

The truth value of a complex proposition is fully determined by the truth values of its atomic components and the connectives joining them. Several equivalence laws let you transform formulas into simpler or more useful forms.

De Morgan's Laws describe how negation distributes over conjunction and disjunction:

$$\neg(p \land q) \equiv \neg p \lor \neg q$$

$$\neg(p \lor q) \equiv \neg p \land \neg q$$

In short: negating an "and" flips it to "or" (and vice versa), while negating each component.

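Distributive Laws let you factor or expand, much like distribution in algebra:

$$p \land (q \lor r) \equiv (p \land q) \lor (p \land r)$$

$$p \lor (q \land r) \equiv (p \lor q) \land (p \lor r)$$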

Absorption Laws simplify formulas where a variable appears redundantly:

$$p \lor (p \land q) \equiv p$$

$$p \land (p \lor q) \equiv p$$

These are worth internalizing. In proofs, recognizing when an absorption or De Morgan's step applies can save you several lines of work.
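Each of these laws can be confirmed by exhaustively comparing both sides on every assignment. The sketch below does this for the standard statements of De Morgan's, the distributive, and the absorption laws; the dictionary `LAWS` and its labels are illustrative names only.

```python
from itertools import product

def equivalent(num_vars, lhs, rhs):
    """True when both sides agree on every truth-value assignment."""
    return all(lhs(*row) == rhs(*row)
               for row in product([True, False], repeat=num_vars))

# Each entry: (number of variables, left-hand side, right-hand side).
LAWS = {
    "De Morgan (and)": (2, lambda p, q: not (p and q),
                           lambda p, q: (not p) or (not q)),
    "De Morgan (or)": (2, lambda p, q: not (p or q),
                          lambda p, q: (not p) and (not q)),
    "Distributive (and over or)": (3, lambda p, q, r: p and (q or r),
                                      lambda p, q, r: (p and q) or (p and r)),
    "Distributive (or over and)": (3, lambda p, q, r: p or (q and r),
                                      lambda p, q, r: (p or q) and (p or r)),
    "Absorption (or)": (2, lambda p, q: p or (p and q), lambda p, q: p),
    "Absorption (and)": (2, lambda p, q: p and (p or q), lambda p, q: p),
}

results = {name: equivalent(n, lhs, rhs) for name, (n, lhs, rhs) in LAWS.items()}
for name, holds in results.items():
    print(f"{name}: {holds}")
```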

Negation and Implication

The negation of $p$, written $\neg p$, simply flips the truth value: true becomes false, false becomes true.

Negating an implication is a common source of mistakes. The negation of $p \to q$ is not $p \to \neg q$. Instead:

$$\neg(p \to q) \equiv p \land \neg q$$

Think of it this way: an implication is false in exactly one case, when $p$ is true and $q$ is false. So its negation asserts precisely that case.

Example: The negation of "If it is raining, then I have an umbrella" is "It is raining and I do not have an umbrella."

The contrapositive of $p \to q$ is $\neg q \to \neg p$, and it is always logically equivalent to the original implication. Don't confuse the contrapositive with the converse ($q \to p$) or the inverse ($\neg p \to \neg q$), neither of which is equivalent to the original.
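These claims about implication are easy to check by brute force over the four assignments to two variables. A minimal sketch, with `implies` and `same_table` as hypothetical helper names:

```python
from itertools import product

def implies(p, q):
    # An implication is false only when p is true and q is false.
    return not (p and not q)

ROWS = list(product([True, False], repeat=2))

def same_table(f, g):
    """True when f and g agree on all four assignments to (p, q)."""
    return all(f(p, q) == g(p, q) for p, q in ROWS)

# Negation of "if p then q" is "p and not q" ...
negation_ok = same_table(lambda p, q: not implies(p, q),
                         lambda p, q: p and not q)
# ... and it is NOT "if p then not q".
tempting_but_wrong = same_table(lambda p, q: not implies(p, q),
                                lambda p, q: implies(p, not q))

contrapositive_ok = same_table(implies, lambda p, q: implies(not q, not p))
converse_ok = same_table(implies, lambda p, q: implies(q, p))
inverse_ok = same_table(implies, lambda p, q: implies(not p, not q))

print(negation_ok, tempting_but_wrong)              # True False
print(contrapositive_ok, converse_ok, inverse_ok)   # True False False
```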

Rules of Inference for Derivation

Arguments and Valid Inference Rules

An argument in propositional logic consists of a set of premises and a conclusion. The argument is valid if, whenever all the premises are true, the conclusion must also be true. Validity is about the logical structure, not whether the premises are actually true in the real world.

Three inference rules you'll use constantly:

- Modus Ponens (Law of Detachment): From $p \to q$ and $p$, derive $q$.

- Modus Tollens: From $p \to q$ and $\neg q$, derive $\neg p$. (This works because modus tollens is essentially modus ponens applied to the contrapositive.)

- Hypothetical Syllogism: From $p \to q$ and $q \to r$, derive $p \to r$. This lets you chain implications together.
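Validity of these rules can be checked semantically: an argument form is valid exactly when no assignment makes every premise true and the conclusion false. The sketch below tests the three rules this way. The helper `is_valid` is a hypothetical name, and the fallacy of affirming the consequent is included only as a contrasting invalid form.

```python
from itertools import product

def implies(a, b):
    return (not a) or b

def is_valid(num_vars, premises, conclusion):
    """Valid iff no row has all premises true and the conclusion false."""
    for row in product([True, False], repeat=num_vars):
        if all(premise(*row) for premise in premises) and not conclusion(*row):
            return False  # found a counterexample row
    return True

modus_ponens = is_valid(
    2, [lambda p, q: implies(p, q), lambda p, q: p], lambda p, q: q)

modus_tollens = is_valid(
    2, [lambda p, q: implies(p, q), lambda p, q: not q], lambda p, q: not p)

hypothetical_syllogism = is_valid(
    3,
    [lambda p, q, r: implies(p, q), lambda p, q, r: implies(q, r)],
    lambda p, q, r: implies(p, r))

# Contrast: from "if p then q" and q, concluding p is NOT valid.
affirming_consequent = is_valid(
    2, [lambda p, q: implies(p, q), lambda p, q: q], lambda p, q: p)

print(modus_ponens, modus_tollens, hypothetical_syllogism, affirming_consequent)
```

The invalid form fails on the row where p is false and q is true: both premises hold there, yet the conclusion does not.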

Resolution and Conjunction Introduction

Resolution is a powerful rule used heavily in automated theorem proving. It derives a new clause from two clauses that contain complementary literals (a literal is a propositional variable or its negation).

How resolution works, step by step:

- Take two clauses, each written as a disjunction of literals.

- Identify a variable that appears positive in one clause and negated in the other.

- Remove that complementary pair and combine the remaining literals into a single disjunction.

Example: From $p \lor q$ and $\neg p \lor r$, the variable $p$ appears positive in the first clause and negated in the second. Removing the complementary pair and combining the rest gives $q \lor r$.
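The resolution steps above can be sketched in a few lines by representing each clause as a set of literal strings, with a leading `~` marking negation. This representation and the function names are assumptions for illustration; real theorem provers use more elaborate data structures.

```python
def negate(literal):
    """Return the complementary literal: p <-> ~p."""
    return literal[1:] if literal.startswith("~") else "~" + literal

def resolve(clause_a, clause_b):
    """Return every resolvent of two clauses (frozensets of literals)."""
    resolvents = []
    for literal in sorted(clause_a):
        if negate(literal) in clause_b:
            # Drop the complementary pair, keep everything else.
            rest = (clause_a - {literal}) | (clause_b - {negate(literal)})
            resolvents.append(frozenset(rest))
    return resolvents

# Clauses "p or q" and "not p, or r" resolve on p.
result = resolve(frozenset({"p", "q"}), frozenset({"~p", "r"}))
print([sorted(clause) for clause in result])   # [['q', 'r']]

# Resolving "p" against "not p" leaves the empty clause (a contradiction).
empty = resolve(frozenset({"p"}), frozenset({"~p"}))
print(empty == [frozenset()])                  # True
```

Deriving the empty clause is how resolution-based provers signal that a set of clauses is unsatisfiable.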

Conjunction Introduction (also called Adjunction) is straightforward: if you've derived $p$ and you've derived $q$ as separate results, you can combine them into $p \land q$. Simple, but essential for assembling conclusions in formal proofs.