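Point estimation efficiency is all about finding the estimator that makes the best use of the data when guessing a population parameter's true value. Think of throwing darts at a bullseye: among methods that aim at the center, the more tightly a method's throws cluster, the more efficient it is.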

Efficiency compares different estimation methods by how spread out their guesses are. Among unbiased estimators, the method with less spread (lower variance) is more efficient. Mean squared error extends the comparison to biased estimators by balancing accuracy (low bias) against precision (low variance).

Efficiency in Point Estimation

Concept of efficiency in estimation

- Efficiency in point estimation measures an estimator's precision, typically through its variance (lower variance means higher efficiency)

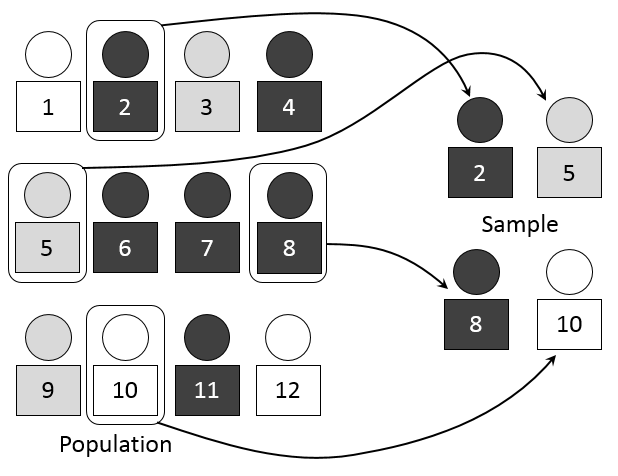

- Relative efficiency compares two estimators based on variances

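- Fisher information $I(\theta) = E\left[\left(\frac{\partial}{\partial\theta} \log f(X;\theta)\right)^2\right]$ quantifies how much information one observation carries about the parameter; the variance of an efficient estimator is inversely related to it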

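- Cramér-Rao lower bound sets the theoretical minimum variance of any unbiased estimator, $\operatorname{Var}(\hat\theta) \ge \frac{1}{n I(\theta)}$ for $n$ i.i.d. observations under regularity conditions, where $I(\theta)$ is the per-observation Fisher information; an unbiased estimator attaining the bound is called efficient, so the bound serves as the efficiency benchmark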
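As a concrete check of the Cramér-Rao bound, the sketch below assumes Bernoulli($p$) data, a distribution chosen here for illustration rather than taken from the text. For Bernoulli data the per-observation Fisher information is $1/(p(1-p))$, so the bound is $p(1-p)/n$. The code compares that bound with a Monte Carlo estimate of the sample proportion's variance; the function names are hypothetical helpers.

```python
import random
import statistics


def fisher_information_bernoulli(p: float) -> float:
    """Per-observation Fisher information for the Bernoulli parameter p."""
    return 1.0 / (p * (1.0 - p))


def cramer_rao_bound(p: float, n: int) -> float:
    """Minimum variance of any unbiased estimator of p from n i.i.d. draws."""
    return 1.0 / (n * fisher_information_bernoulli(p))


def simulated_variance_of_proportion(p: float, n: int, reps: int, seed: int = 0) -> float:
    """Monte Carlo variance of the sample proportion, an unbiased estimator of p."""
    rng = random.Random(seed)
    estimates = [
        sum(rng.random() < p for _ in range(n)) / n  # fraction of successes
        for _ in range(reps)
    ]
    return statistics.pvariance(estimates)


if __name__ == "__main__":
    p, n = 0.3, 50
    bound = cramer_rao_bound(p, n)
    simulated = simulated_variance_of_proportion(p, n, reps=20_000)
    print(f"CRLB = {bound:.5f}, simulated Var(p_hat) = {simulated:.5f}")
    print(f"efficiency = CRLB / Var = {bound / simulated:.3f}")
```

The sample proportion's variance is exactly $p(1-p)/n$, so the printed efficiency should land close to 1, up to simulation noise; that is what it means for an unbiased estimator to attain the bound.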

Efficiency comparison of unbiased estimators

- Lower variance indicates higher efficiency when comparing estimators

- Calculate and directly compare variances of different estimators

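- Relative efficiency of $\hat\theta_1$ with respect to $\hat\theta_2$ computed as $\operatorname{eff}(\hat\theta_1, \hat\theta_2) = \frac{\operatorname{Var}(\hat\theta_2)}{\operatorname{Var}(\hat\theta_1)}$; a value above 1 means $\hat\theta_1$ is more efficient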

- Asymptotic efficiency compares an estimator's variance with the Cramér-Rao bound in the limit as sample size approaches infinity

- Sufficient statistics contain all the information the sample carries about the parameter and often yield efficient estimators (e.g., the sample mean for the mean of a normal distribution)
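A classic comparison of two unbiased estimators is the sample mean versus the sample median for normally distributed data, where the median's efficiency relative to the mean tends to $2/\pi \approx 0.637$ as $n$ grows. The sketch below is a minimal Monte Carlo version of that comparison, assuming standard normal samples; the helper names are hypothetical.

```python
import random
import statistics


def relative_efficiency(var_1: float, var_2: float) -> float:
    """Efficiency of estimator 1 relative to estimator 2: Var(2) / Var(1).

    Values above 1 mean estimator 1 has the smaller variance.
    """
    return var_2 / var_1


def simulate_mean_and_median(n: int, reps: int, seed: int = 0):
    """Monte Carlo variances of the sample mean and sample median for N(0, 1)
    data; by symmetry both are unbiased for the center, 0."""
    rng = random.Random(seed)
    means, medians = [], []
    for _ in range(reps):
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
        means.append(statistics.fmean(sample))
        medians.append(statistics.median(sample))
    return statistics.pvariance(means), statistics.pvariance(medians)


if __name__ == "__main__":
    var_mean, var_median = simulate_mean_and_median(n=101, reps=5_000)
    eff = relative_efficiency(var_median, var_mean)  # median relative to mean
    print(f"Var(mean)   = {var_mean:.5f}")
    print(f"Var(median) = {var_median:.5f}")
    print(f"eff(median, mean) = {eff:.3f}  (asymptotic value 2/pi ~ 0.637)")
```

The mean wins for normal data, but the ranking depends on the distribution: for heavy-tailed data the median can be the more efficient of the two.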

Mean Squared Error (MSE)

Components of mean squared error

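- MSE defined as the expected squared difference between estimator and parameter: $\operatorname{MSE}(\hat\theta) = E\left[(\hat\theta - \theta)^2\right]$

- Bias component measures systematic deviation from the true value: $\operatorname{Bias}(\hat\theta) = E[\hat\theta] - \theta$

- Variance component quantifies the estimator's spread around its expected value: $\operatorname{Var}(\hat\theta) = E\left[(\hat\theta - E[\hat\theta])^2\right]$

- Bias-variance decomposition expresses MSE as $\operatorname{MSE}(\hat\theta) = \operatorname{Bias}(\hat\theta)^2 + \operatorname{Var}(\hat\theta)$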

- The trade-off between bias and variance means a biased estimator can have lower overall MSE than an unbiased one (e.g., ridge regression)
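To see the trade-off in numbers, the sketch below swaps in a simpler example than ridge regression: estimating $\sigma^2$ from $n$ i.i.d. normal observations by dividing the sum of squared deviations $SS$ by some divisor. Assuming normality, $SS/\sigma^2$ follows a chi-square distribution with $n-1$ degrees of freedom, so $E[SS] = (n-1)\sigma^2$ and $\operatorname{Var}(SS) = 2(n-1)\sigma^4$, and bias, variance and MSE can be computed exactly. `variance_estimator_mse` is a hypothetical helper name.

```python
def variance_estimator_mse(n: int, sigma2: float, divisor: float):
    """Exact bias, variance and MSE of SS / divisor as an estimator of sigma^2,
    where SS = sum((x_i - x_bar)^2) over n i.i.d. normal observations.

    Assumes normal data, so E[SS] = (n - 1) sigma^2 and
    Var[SS] = 2 (n - 1) sigma^4.
    """
    expected = (n - 1) * sigma2 / divisor
    bias = expected - sigma2
    variance = 2 * (n - 1) * sigma2 ** 2 / divisor ** 2
    mse = bias ** 2 + variance  # bias-variance decomposition
    return bias, variance, mse


if __name__ == "__main__":
    n, sigma2 = 10, 1.0
    for label, c in [("n - 1 (unbiased)", n - 1), ("n", n), ("n + 1", n + 1)]:
        bias, var, mse = variance_estimator_mse(n, sigma2, c)
        print(f"divisor {label:17s} bias={bias:+.4f} var={var:.4f} mse={mse:.4f}")
```

With $n = 10$ and $\sigma^2 = 1$, the unbiased divisor $n - 1$ gives MSE $2/9 \approx 0.2222$, the biased divisor $n$ gives $0.19$, and $n + 1$ gives $2/11 \approx 0.1818$: accepting some downward bias buys a larger drop in variance.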

Calculation and interpretation of MSE

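- Calculate MSE by:

  - Determining the estimator's expected value $E[\hat\theta]$
  - Calculating the bias $E[\hat\theta] - \theta$
  - Computing the variance $\operatorname{Var}(\hat\theta)$
  - Summing the squared bias and the variance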

- Lower MSE indicates better overall estimator performance (comparing OLS vs ridge regression)

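- MSE for unbiased estimators equals the variance: when $\operatorname{Bias}(\hat\theta) = 0$, $\operatorname{MSE}(\hat\theta) = \operatorname{Var}(\hat\theta)$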

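- Estimators whose MSE approaches zero as sample size increases are consistent (MSE tending to zero is sufficient, but not necessary, for consistency); maximum likelihood estimators are consistent under regularity conditions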

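- Related measures include Root Mean Squared Error, $\operatorname{RMSE} = \sqrt{\operatorname{MSE}}$ (in the same units as the parameter), and Mean Absolute Error, $E\left[|\hat\theta - \theta|\right]$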
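The four calculation steps above can be sketched in Python by replacing each expectation with an average over simulated replications of the estimator. This Monte Carlo approach and the helper names (`mse_decomposition`, `sample_mean_estimates`) are assumptions for illustration. The sample mean of normal data stands in as the estimator, so the output also shows MSE shrinking as $n$ grows and reports RMSE.

```python
import math
import random
import statistics


def mse_decomposition(estimates, theta):
    """Follow the four steps on replicated estimator values:
    expected value, bias, variance, then MSE = bias^2 + variance."""
    expected = statistics.fmean(estimates)      # step 1: approximates E[theta_hat]
    bias = expected - theta                     # step 2: bias
    variance = statistics.pvariance(estimates)  # step 3: spread around E[theta_hat]
    mse = bias ** 2 + variance                  # step 4: sum of the two components
    return {"bias": bias, "variance": variance, "mse": mse, "rmse": math.sqrt(mse)}


def sample_mean_estimates(mu, sigma, n, reps, seed=0):
    """Replicated sample means, each computed from n draws of N(mu, sigma^2)."""
    rng = random.Random(seed)
    return [
        statistics.fmean([rng.gauss(mu, sigma) for _ in range(n)])
        for _ in range(reps)
    ]


if __name__ == "__main__":
    for n in (10, 100, 1000):
        stats = mse_decomposition(sample_mean_estimates(0.0, 1.0, n, reps=1_000), theta=0.0)
        print(f"n={n:5d}  bias={stats['bias']:+.4f}  var={stats['variance']:.5f}  "
              f"mse={stats['mse']:.5f}  rmse={stats['rmse']:.4f}")
```

Because the sample mean is unbiased, the bias column stays near zero and the MSE tracks the variance $\sigma^2/n$, shrinking roughly tenfold each time $n$ grows tenfold.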