The Rao-Blackwell theorem and UMVUE are key concepts in statistical estimation. They provide methods for improving estimators and finding the best unbiased estimator for a parameter. These tools help statisticians create more precise and efficient estimates from sample data.

Understanding these concepts is crucial for developing optimal estimators in various fields. The Rao-Blackwell theorem shows how to reduce estimator variance, while UMVUEs represent the pinnacle of unbiased estimation. Together, they form a powerful framework for statistical inference and decision-making.

Rao-Blackwell theorem

- The Rao-Blackwell theorem provides a method for improving an estimator by conditioning on a sufficient statistic

- It constructs a new estimator whose variance is no larger than that of the original estimator, and strictly smaller unless the original estimator is already a function of the sufficient statistic

- The theorem is named after C. R. Rao and David Blackwell, who independently proved the result in the 1940s

Sufficient statistics in Rao-Blackwell theorem

- A statistic $T = T(X)$ is sufficient for a parameter $\theta$ if the conditional distribution of the sample $X$ given $T$ does not depend on $\theta$

- Sufficient statistics capture all the relevant information about the parameter contained in the sample

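- Examples of sufficient statistics include $\sum_{i=1}^n X_i$ (equivalently the sample mean $\bar X$) for the mean $\mu$ of a normal sample with known variance, $\sum_{i=1}^n X_i$ for the success probability $p$ of a Bernoulli sample, and the pair $(\bar X, S^2)$ for $(\mu, \sigma^2)$ when both normal parameters are unknown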

- The Rao-Blackwell theorem relies on the existence of a sufficient statistic to improve an estimator
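
The definition can be checked exactly in a small case. This is a minimal sketch, assuming an i.i.d. Bernoulli$(p)$ sample of size $n$ and the statistic $T = \sum_i X_i$; the helper names `conditional_prob_given_sum` and `looks_sufficient` are hypothetical. It enumerates every 0/1 sample and confirms with exact fractions that $P(X = x \mid T = t) = 1/\binom{n}{t}$ for several values of $p$. Checking finitely many values of $p$ is an illustration, not a proof.

```python
from fractions import Fraction
from itertools import product
from math import comb


def conditional_prob_given_sum(x, p):
    """P(X = x | T = sum(x)) for an i.i.d. Bernoulli(p) sample x of 0/1 values."""
    n, t = len(x), sum(x)
    p_x = p**t * (1 - p) ** (n - t)               # probability of this exact sequence
    p_t = comb(n, t) * p**t * (1 - p) ** (n - t)  # T ~ Binomial(n, p)
    return p_x / p_t


def looks_sufficient(n, ps):
    """True if P(X = x | T) is identical across every p in ps, for all 0/1 samples x."""
    return all(
        len({conditional_prob_given_sum(x, p) for p in ps}) == 1
        for x in product((0, 1), repeat=n)
    )


ps = [Fraction(1, 5), Fraction(1, 2), Fraction(9, 10)]
print(looks_sufficient(4, ps))                                   # True
print(conditional_prob_given_sum((1, 0, 1, 0), Fraction(1, 5)))  # 1/6
```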

Conditions for Rao-Blackwell theorem

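- The original estimator $\hat\theta$ must have finite variance; it does not have to be unbiased, but if it is unbiased for $\theta$ (that is, $E_\theta[\hat\theta] = \theta$), the improved estimator is unbiased as well

- A sufficient statistic $T$ for the parameter must exist

- The improved estimator is obtained by conditioning the original estimator on the sufficient statistic: $\tilde\theta = E[\hat\theta \mid T]$. Sufficiency guarantees that this conditional expectation does not depend on $\theta$, so $\tilde\theta$ is a genuine estimator

- The Rao-Blackwell estimator has a variance less than or equal to the variance of the original estimator, $\mathrm{Var}_\theta(\tilde\theta) \le \mathrm{Var}_\theta(\hat\theta)$ for all $\theta$, with equality only when $\hat\theta$ is already a function of $T$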
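
The conditioning step can be checked by brute force in a small case. This sketch assumes an i.i.d. Bernoulli$(p)$ sample of size $n$ with $T = \sum_i X_i$. The hypothetical helper `rao_blackwellize` computes $E[\hat\theta \mid T = t]$ exactly by enumerating all samples with sum $t$. Starting from the unbiased but wasteful estimator $\hat\theta = X_1$, it recovers $t/n$, the sample mean, and the answer does not change with $p$.

```python
from fractions import Fraction
from itertools import product


def rao_blackwellize(estimator, n, t, p=Fraction(1, 3)):
    """Exact E[estimator(X) | T = t] for an i.i.d. Bernoulli(p) sample of size n,
    where T = sum(X). Sufficiency of T means the answer does not depend on p."""
    num, den = Fraction(0), Fraction(0)
    for x in product((0, 1), repeat=n):
        if sum(x) != t:
            continue  # only samples consistent with T = t contribute
        weight = p**t * (1 - p) ** (n - t)  # P(X = x)
        num += weight * estimator(x)
        den += weight
    return num / den


def naive(x):
    """Unbiased for p, but uses only the first observation."""
    return x[0]


n = 5
print([str(rao_blackwellize(naive, n, t)) for t in range(n + 1)])
# ['0', '1/5', '2/5', '3/5', '4/5', '1']
print(rao_blackwellize(naive, n, 2, p=Fraction(9, 10)))  # 2/5, same as for p = 1/3
```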

Proof of Rao-Blackwell theorem

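- The proof relies on the law of total variance, which states that $\mathrm{Var}(\hat\theta) = E[\mathrm{Var}(\hat\theta \mid T)] + \mathrm{Var}(E[\hat\theta \mid T])$

- Since the original estimator is unbiased, the law of iterated expectations gives $E[\tilde\theta] = E[E[\hat\theta \mid T]] = E[\hat\theta] = \theta$, so $\tilde\theta$ is also unbiased

- The term $E[\mathrm{Var}(\hat\theta \mid T)]$ is non-negative, so $\mathrm{Var}(\hat\theta) \ge \mathrm{Var}(E[\hat\theta \mid T]) = \mathrm{Var}(\tilde\theta)$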

- Therefore, the Rao-Blackwell estimator has a variance less than or equal to the variance of the original estimator
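
The decomposition used in the proof can be verified exactly in a small case. The sketch assumes $n$ i.i.d. Bernoulli$(p)$ observations, $\hat\theta = X_1$ and $T = \sum_i X_i$. It relies on the fact that, given $T = t$, $X_1$ is Bernoulli$(t/n)$. The function name `variance_decomposition` and the values $n = 4$, $p = 3/10$ are illustrative.

```python
from fractions import Fraction
from math import comb


def variance_decomposition(n, p):
    """Exact terms of Var(X1) = E[Var(X1 | T)] + Var(E[X1 | T]) for an
    i.i.d. Bernoulli(p) sample with T = X1 + ... + Xn."""
    pmf = {t: comb(n, t) * p**t * (1 - p) ** (n - t) for t in range(n + 1)}
    # Given T = t, X1 is Bernoulli(t / n): E[X1 | T] = t/n, Var(X1 | T) = (t/n)(1 - t/n).
    cond_mean = {t: Fraction(t, n) for t in pmf}
    cond_var = {t: cond_mean[t] * (1 - cond_mean[t]) for t in pmf}

    expected_cond_var = sum(pmf[t] * cond_var[t] for t in pmf)
    mean_of_rb = sum(pmf[t] * cond_mean[t] for t in pmf)  # equals p (unbiasedness)
    var_of_rb = sum(pmf[t] * (cond_mean[t] - mean_of_rb) ** 2 for t in pmf)
    var_naive = p * (1 - p)  # Var(X1) for a single Bernoulli(p) draw
    return var_naive, expected_cond_var, var_of_rb


var_naive, e_cond_var, var_rb = variance_decomposition(4, Fraction(3, 10))
print(var_naive, e_cond_var, var_rb)     # 21/100 63/400 21/400
print(var_naive == e_cond_var + var_rb)  # True
```

The Rao-Blackwell estimator here is $T/n = \bar X$, whose variance $p(1-p)/n$ is $n$ times smaller than that of $X_1$.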

Applications of Rao-Blackwell theorem

- The Rao-Blackwell theorem is used to improve the efficiency of estimators in various statistical problems

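- It is particularly useful when the original estimator is unbiased but is not a function of a sufficient statistic, since only then does conditioning strictly reduce the variance

- Classic examples include conditioning the single-observation estimator $X_1$ on $T = \sum_{i=1}^n X_i$ for a Bernoulli or Poisson sample, which yields the sample mean $\bar X = T/n$, and conditioning the indicator $\mathbf{1}\{X_1 = 0\}$ on $T$ for a Poisson sample, which yields $(1 - 1/n)^{T}$ as an estimator of $P(X = 0) = e^{-\lambda}$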

- The theorem is also used in the construction of uniformly minimum variance unbiased estimators (UMVUEs)
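
A Monte Carlo sketch of the Poisson case, assuming an i.i.d. Poisson$(\lambda)$ sample with $\lambda = 1$ and $n = 10$ (both arbitrary choices). It compares the naive unbiased estimator $\mathbf{1}\{X_1 = 0\}$ of $e^{-\lambda}$ with its Rao-Blackwellization $(1 - 1/n)^{T}$, where $T = \sum_i X_i$. Python's `random` module has no Poisson sampler, so the hypothetical helper `poisson` uses Knuth's multiplication method.

```python
import math
import random
import statistics


def poisson(lam, rng):
    """One Poisson(lam) draw via Knuth's multiplication method (fine for small lam)."""
    limit, k, prod = math.exp(-lam), 0, 1.0
    while True:
        prod *= rng.random()
        if prod <= limit:
            return k
        k += 1


def simulate(lam=1.0, n=10, reps=20_000, seed=0):
    """Return draws of the naive estimator and of its Rao-Blackwellized version."""
    rng = random.Random(seed)
    naive, rb = [], []
    for _ in range(reps):
        sample = [poisson(lam, rng) for _ in range(n)]
        t = sum(sample)                               # sufficient statistic T
        naive.append(1.0 if sample[0] == 0 else 0.0)  # 1{X_1 = 0}, unbiased for exp(-lam)
        rb.append((1 - 1 / n) ** t)                   # E[1{X_1 = 0} | T = t]
    return naive, rb


naive, rb = simulate()
print("means:", statistics.fmean(naive), statistics.fmean(rb), "target:", math.exp(-1.0))
print("variances:", statistics.pvariance(naive), statistics.pvariance(rb))
```

Both sample means should land near $e^{-1} \approx 0.368$. The variance of the Rao-Blackwell estimator should be far smaller: its exact value is $e^{-2\lambda}(e^{\lambda/n} - 1)$, about $0.014$ here, compared with $e^{-\lambda}(1 - e^{-\lambda}) \approx 0.23$ for the naive estimator.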

Uniformly minimum variance unbiased estimator (UMVUE)

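- A UMVUE is an unbiased estimator that has the lowest variance among all unbiased estimators of a given parameter, simultaneously for every value of the parameter (hence "uniformly")

- Because the mean squared error of an unbiased estimator equals its variance, the UMVUE also minimizes the mean squared error among unbiased estimators; it is the "best" unbiased estimator in this sense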

- The concept of UMVUE is closely related to the Rao-Blackwell theorem and the Cramér-Rao lower bound

Definition of UMVUE

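- An estimator $\hat\theta^*$ is a UMVUE for a parameter $\theta$ if it satisfies two conditions:

- Unbiasedness: $E_\theta[\hat\theta^*] = \theta$ for all values of $\theta$

- Minimum variance: $\mathrm{Var}_\theta(\hat\theta^*) \le \mathrm{Var}_\theta(\hat\theta)$ for all $\theta$ and for any other unbiased estimator $\hat\theta$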

- The UMVUE is unique when it exists: any two UMVUEs for the same parameter are equal with probability 1

Relationship between UMVUE and Rao-Blackwell theorem

- The Rao-Blackwell theorem is a key tool in finding UMVUEs

- If an unbiased estimator is not a function of a sufficient statistic, the Rao-Blackwell theorem can be used to improve it

- The improved estimator obtained through the Rao-Blackwell theorem is a candidate for the UMVUE

- However, a Rao-Blackwell estimator need not be the UMVUE: when the sufficient statistic is not complete, other unbiased estimators may have smaller variance

Conditions for existence of UMVUE

- The existence of a UMVUE depends on the statistical model and the parameter of interest

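- A sufficient (but not necessary) condition is given by the Lehmann-Scheffé theorem: if $T$ is a complete sufficient statistic and some unbiased estimator of $\theta$ exists, then a UMVUE exists and is the unique unbiased function of $T$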

- A statistic $T$ is complete if, for every function $g$, $E_\theta[g(T)] = 0$ for all $\theta$ implies that $P_\theta(g(T) = 0) = 1$ for all $\theta$

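- In some cases a UMVUE does not exist, for example when no unbiased estimator of the parameter exists at all (there is no unbiased estimator of $1/p$ based on a Binomial$(n, p)$ count), or when the unbiased estimator with the smallest variance differs from one value of $\theta$ to another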
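
As a worked instance of the completeness definition, assume an i.i.d. Bernoulli$(p)$ sample with $0 < p < 1$ and $T = \sum_{i=1}^n X_i \sim \mathrm{Binomial}(n, p)$. Writing $r = p/(1-p)$, the expectation of any function $g(T)$ is a polynomial in $r$:

$$
E_p[g(T)] = \sum_{t=0}^{n} g(t)\binom{n}{t}p^{t}(1-p)^{n-t} = (1-p)^{n}\sum_{t=0}^{n} g(t)\binom{n}{t} r^{t}, \qquad r = \frac{p}{1-p} \in (0, \infty).
$$

If this is zero for every $p \in (0,1)$, the polynomial vanishes for every $r > 0$, so each coefficient $g(t)\binom{n}{t}$ is zero. Hence $g(t) = 0$ for $t = 0, \dots, n$, and $T$ is complete.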

Finding UMVUE using Rao-Blackwell theorem

- To find the UMVUE using the Rao-Blackwell theorem, follow these steps:

- Find a sufficient statistic $T$ for the parameter $\theta$

- Check whether $T$ is complete

- If $T$ is complete, find any unbiased estimator $\hat\theta$ of $\theta$

- Apply the Rao-Blackwell theorem to obtain the improved estimator $\tilde\theta = E[\hat\theta \mid T]$

- By the Lehmann-Scheffé theorem, the resulting estimator $\tilde\theta$ is the UMVUE for $\theta$

- If the sufficient statistic is not complete, the Rao-Blackwell estimator may not be the UMVUE, and other methods may be needed to find the UMVUE
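
A worked instance of these steps, assuming an i.i.d. Poisson$(\lambda)$ sample and the target $e^{-\lambda} = P(X = 0)$. The statistic $T = \sum_{i=1}^n X_i$ is sufficient and complete (the Poisson family is a full-rank exponential family), and $\hat\theta = \mathbf{1}\{X_1 = 0\}$ is unbiased. Given $T = t$, the count $X_1$ is Binomial$(t, 1/n)$, so

$$
\tilde\theta = E\left[\mathbf{1}\{X_1 = 0\} \mid T\right] = P(X_1 = 0 \mid T) = \left(1 - \frac{1}{n}\right)^{T}, \qquad T = \sum_{i=1}^{n} X_i .
$$

Its unbiasedness can be confirmed with the Poisson probability generating function $E[s^{T}] = e^{n\lambda(s-1)}$: taking $s = 1 - 1/n$ gives $e^{-\lambda}$.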

Examples of UMVUE

- For the normal distribution with unknown mean $\mu$ and known variance $\sigma^2$, the sample mean $\bar X$ is the UMVUE for $\mu$

- For the Bernoulli distribution with unknown success probability $p$, the sample proportion $\hat p = \bar X$ is the UMVUE for $p$

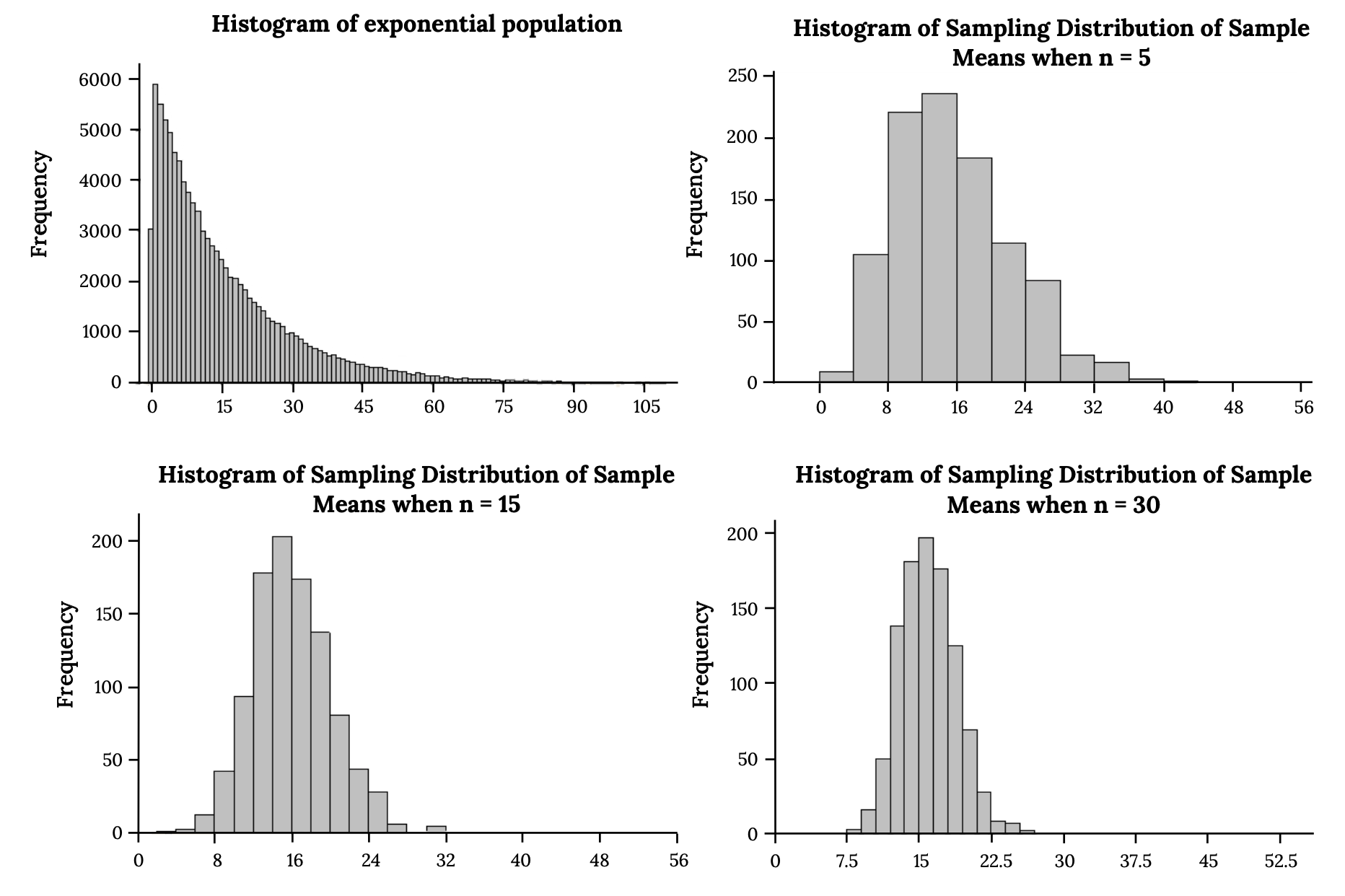

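- For the exponential distribution with unknown rate parameter $\lambda$ and $n \ge 2$, the reciprocal of the sample mean, $1/\bar X = n / \sum_{i=1}^n X_i$, is biased (its expectation is $n\lambda/(n-1)$); the UMVUE for $\lambda$ is $(n-1)/\sum_{i=1}^n X_i$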

- In some cases, the UMVUE may be a complicated function of the sufficient statistic, and its expression may not have a closed form
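
A Monte Carlo sketch of the exponential case, assuming rate $\lambda = 2$ and $n = 5$ (arbitrary choices). The function name `exponential_rate_estimators` is hypothetical. Python's `random.expovariate` takes the rate as its argument.

```python
import random
import statistics


def exponential_rate_estimators(lam=2.0, n=5, reps=50_000, seed=1):
    """Monte Carlo means of 1/xbar and (n - 1)/sum(x) for Exponential(rate=lam) samples."""
    rng = random.Random(seed)
    recip_mean, umvue = [], []
    for _ in range(reps):
        s = sum(rng.expovariate(lam) for _ in range(n))  # expovariate takes the rate
        recip_mean.append(n / s)   # 1 / xbar: biased upward
        umvue.append((n - 1) / s)  # UMVUE of the rate (needs n >= 2)
    return statistics.fmean(recip_mean), statistics.fmean(umvue)


mean_recip, mean_umvue = exponential_rate_estimators()
print(f"1/xbar: {mean_recip:.3f}, (n-1)/sum: {mean_umvue:.3f}, true rate: 2.0")
```

Theory predicts a mean of $n\lambda/(n-1) = 2.5$ for $1/\bar X$ and exactly $\lambda = 2$ for $(n-1)/\sum_i X_i$, so the simulated means should sit close to those values.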

Comparison of estimators

- When multiple estimators are available for a parameter, it is important to compare their properties to select the most appropriate one

- Estimators can be compared based on various criteria, such as unbiasedness, efficiency, consistency, and robustness

- The Rao-Blackwell theorem and the concept of UMVUE provide a framework for comparing and improving estimators

Efficiency of estimators

- Efficiency is a measure of how close an estimator's variance is to the minimum possible variance among all unbiased estimators

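- The efficiency of an estimator $\hat\theta$ is defined as the ratio of the minimum variance to the estimator's variance: $\mathrm{eff}(\hat\theta) = \mathrm{Var}_{\min} / \mathrm{Var}(\hat\theta)$, where $\mathrm{Var}_{\min}$ is commonly taken to be the Cramér-Rao lower bound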

- An estimator with an efficiency of 1 is considered fully efficient, while an estimator with an efficiency less than 1 is suboptimal

- The Rao-Blackwell theorem can be used to improve the efficiency of an estimator by reducing its variance
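
The efficiency ratio can be estimated by simulation. This sketch assumes an i.i.d. $N(\mu, 1)$ sample, for which the Cramér-Rao lower bound for estimating $\mu$ is $1/n$. It compares the sample mean with the sample median (both unbiased for $\mu$ here). The function name `efficiency_vs_crlb`, $n = 101$ and the number of replications are illustrative choices.

```python
import random
import statistics


def efficiency_vs_crlb(estimator, n=101, reps=4_000, seed=2):
    """Monte Carlo estimate of CRLB / Var(estimator) for the mean of N(mu, 1) data.
    With sigma = 1 known, the Cramer-Rao lower bound for mu is 1 / n."""
    rng = random.Random(seed)
    estimates = [estimator([rng.gauss(0.0, 1.0) for _ in range(n)]) for _ in range(reps)]
    return (1 / n) / statistics.pvariance(estimates)


eff_mean = efficiency_vs_crlb(statistics.fmean)
eff_median = efficiency_vs_crlb(statistics.median)
print(f"mean: {eff_mean:.3f}, median: {eff_median:.3f}")
```

The sample mean attains the bound, so its estimated efficiency should be close to 1. The median's should be near its known large-sample value $2/\pi \approx 0.64$, which makes it suboptimal in this model.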

Variance of estimators

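- The variance of an estimator measures its expected squared deviation from its own expected value, $\mathrm{Var}(\hat\theta) = E[(\hat\theta - E[\hat\theta])^2]$; for an unbiased estimator this equals the expected squared deviation from the true parameter value

- A smaller variance indicates that the estimator is more precise, that is, less dispersed around its expected value

- Under regularity conditions, the Cramér-Rao lower bound provides a lower limit for the variance of any unbiased estimator: for an i.i.d. sample of size $n$, $\mathrm{Var}_\theta(\hat\theta) \ge 1/(n I(\theta))$, where $I(\theta)$ is the Fisher information of a single observation

- The UMVUE, when it exists, has the minimum variance among all unbiased estimators, but it does not necessarily attain the Cramér-Rao lower bound; conversely, an unbiased estimator that attains the bound is the UMVUE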
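
A worked example of a UMVUE whose variance stays above the bound, assuming an i.i.d. exponential sample with rate $\lambda$ and $n \ge 3$. The UMVUE of $\lambda$ is $(n-1)/S$ with $S = \sum_{i=1}^n X_i \sim \mathrm{Gamma}(n, \lambda)$, and the Fisher information of one observation is $I(\lambda) = 1/\lambda^2$:

$$
E\left[\frac{1}{S}\right] = \frac{\lambda}{n-1}, \qquad E\left[\frac{1}{S^{2}}\right] = \frac{\lambda^{2}}{(n-1)(n-2)},
$$

$$
\mathrm{Var}\left(\frac{n-1}{S}\right) = \frac{(n-1)^{2}\lambda^{2}}{(n-1)(n-2)} - \lambda^{2} = \frac{\lambda^{2}}{n-2} > \frac{\lambda^{2}}{n} = \frac{1}{n I(\lambda)} .
$$

The variance exceeds the bound for every finite $n$, so the Cramér-Rao bound is not attained even though no unbiased estimator does better.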

Bias vs variance trade-off

- Bias and variance are two sources of error in estimators

- Bias refers to the difference between the expected value of the estimator and the true parameter value

- Variance refers to the variability of the estimator around its expected value

- There is often a trade-off between bias and variance: reducing one may increase the other

- Unbiased estimators, such as the UMVUE, prioritize the elimination of bias at the potential cost of higher variance

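- In some cases, allowing a small amount of bias can lead to a significant reduction in variance and therefore a lower mean squared error overall, since $\mathrm{MSE}(\hat\theta) = E[(\hat\theta - \theta)^2] = \mathrm{Bias}(\hat\theta)^2 + \mathrm{Var}(\hat\theta)$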
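
The trade-off can be computed exactly for estimators of a normal variance. Assume an i.i.d. $N(\mu, \sigma^2)$ sample with $\mu$ unknown. Then $SS = \sum_i (X_i - \bar X)^2$ satisfies $SS/\sigma^2 \sim \chi^2_{n-1}$, so the MSE of $c \cdot SS$ has a closed form. The sketch below uses a hypothetical function name and the illustrative values $\sigma^2 = 1$ and $n = 10$. It compares the unbiased choice $c = 1/(n-1)$, which gives the UMVUE $S^2$, with two biased alternatives.

```python
from fractions import Fraction


def mse_scaled_ss(c, n, sigma2=Fraction(1)):
    """Exact MSE of c * SS as an estimator of sigma^2 for an i.i.d. normal sample,
    where SS = sum((x_i - xbar)^2) and SS / sigma^2 ~ chi-square(n - 1)."""
    mean = c * (n - 1) * sigma2           # E[c * SS]
    var = c**2 * 2 * (n - 1) * sigma2**2  # Var(c * SS), since Var(chi2_k) = 2k
    bias = mean - sigma2
    return bias**2 + var                  # MSE = Bias^2 + Var


n = 10
for label, c in [("1/(n-1), unbiased", Fraction(1, n - 1)),
                 ("1/n", Fraction(1, n)),
                 ("1/(n+1)", Fraction(1, n + 1))]:
    print(label, mse_scaled_ss(c, n))
# 1/(n-1), unbiased 2/9
# 1/n 19/100
# 1/(n+1) 2/11
```

The biased divisor $n + 1$ gives the smallest MSE of the three. In general, $c = 1/(n+1)$ minimizes the MSE within this family, with minimum value $2\sigma^4/(n+1)$.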

Advantages of UMVUE over other estimators

- UMVUEs have the smallest variance among all unbiased estimators, making them the most precise unbiased estimators

- They are often easy to interpret and communicate, as they are unbiased and have a clear optimality property

- UMVUEs are unique when they exist, providing a definitive choice for the best unbiased estimator

- They are often derived by applying the Rao-Blackwell theorem, a powerful and general tool for improving estimators, to an unbiased estimator and a complete sufficient statistic

- UMVUEs are widely used in statistical inference and form the basis for many standard estimators in various fields

Applications in real-world scenarios

- The Rao-Blackwell theorem and the concept of UMVUE have numerous applications in real-world data analysis and decision-making

- They provide a rigorous framework for constructing and evaluating estimators in various domains, such as science, engineering, economics, and social sciences

- The use of these tools can lead to more accurate and reliable estimates, which in turn can inform better decisions and policies

Use of Rao-Blackwell theorem in data analysis

- The Rao-Blackwell theorem is used to improve the efficiency of estimators in data analysis

- It is most valuable when the sample size is limited, because that is when reducing the variance of an estimator makes the largest practical difference

- By conditioning on sufficient statistics, the Rao-Blackwell theorem can help reduce the variance of estimators and provide more precise estimates

- This can lead to more accurate conclusions and better-informed decisions based on the analyzed data

UMVUE in parameter estimation

- UMVUEs are widely used in parameter estimation problems, where the goal is to estimate unknown population parameters from sample data

- They provide the best unbiased estimates for parameters of interest, such as means, proportions, and variances

- UMVUEs are particularly valuable when the sample size is small, and the efficiency of the estimator is crucial

- In many standard statistical models, UMVUEs have simple and intuitive forms, making them easy to compute and interpret

Rao-Blackwell theorem and UMVUE in decision making

- The Rao-Blackwell theorem and UMVUEs can inform decision-making by providing accurate and reliable estimates of key parameters

- In fields such as business, healthcare, and public policy, decisions often rely on estimated values of population characteristics

- Using UMVUEs ensures that decisions rest on unbiased estimates with the smallest possible variance among unbiased estimators, which reduces, but does not eliminate, estimation error

- The Rao-Blackwell theorem can be used to improve existing decision-making processes by refining the estimators used

Limitations of Rao-Blackwell theorem and UMVUE

- The Rao-Blackwell theorem and the concept of UMVUE have some limitations that should be considered in real-world applications

- A UMVUE is guaranteed to exist (by the Lehmann-Scheffé theorem) when a complete sufficient statistic and an unbiased estimator are available, but complete sufficient statistics do not exist in every model

- In some cases, the UMVUE may have a complicated form or be difficult to compute, limiting its practical usefulness

- The optimality of UMVUEs is based on the unbiasedness and minimum variance criteria, which may not be the most appropriate in all situations

- In some cases, biased estimators with lower mean squared error may be preferred over UMVUEs

- The Rao-Blackwell theorem and UMVUEs are based on the assumption of a correct statistical model, and their performance may be affected by model misspecification or violations of assumptions