Nonlinear differential equations are tricky beasts. They can have multiple solutions, or none at all. This section dives into the nitty-gritty of when and why solutions exist, and how to find them.

We'll also look at systems of equations and stability. Understanding these concepts helps us predict how complex systems behave over time. It's like having a crystal ball for math!

Solutions of Nonlinear Differential Equations

Existence and Uniqueness of Solutions

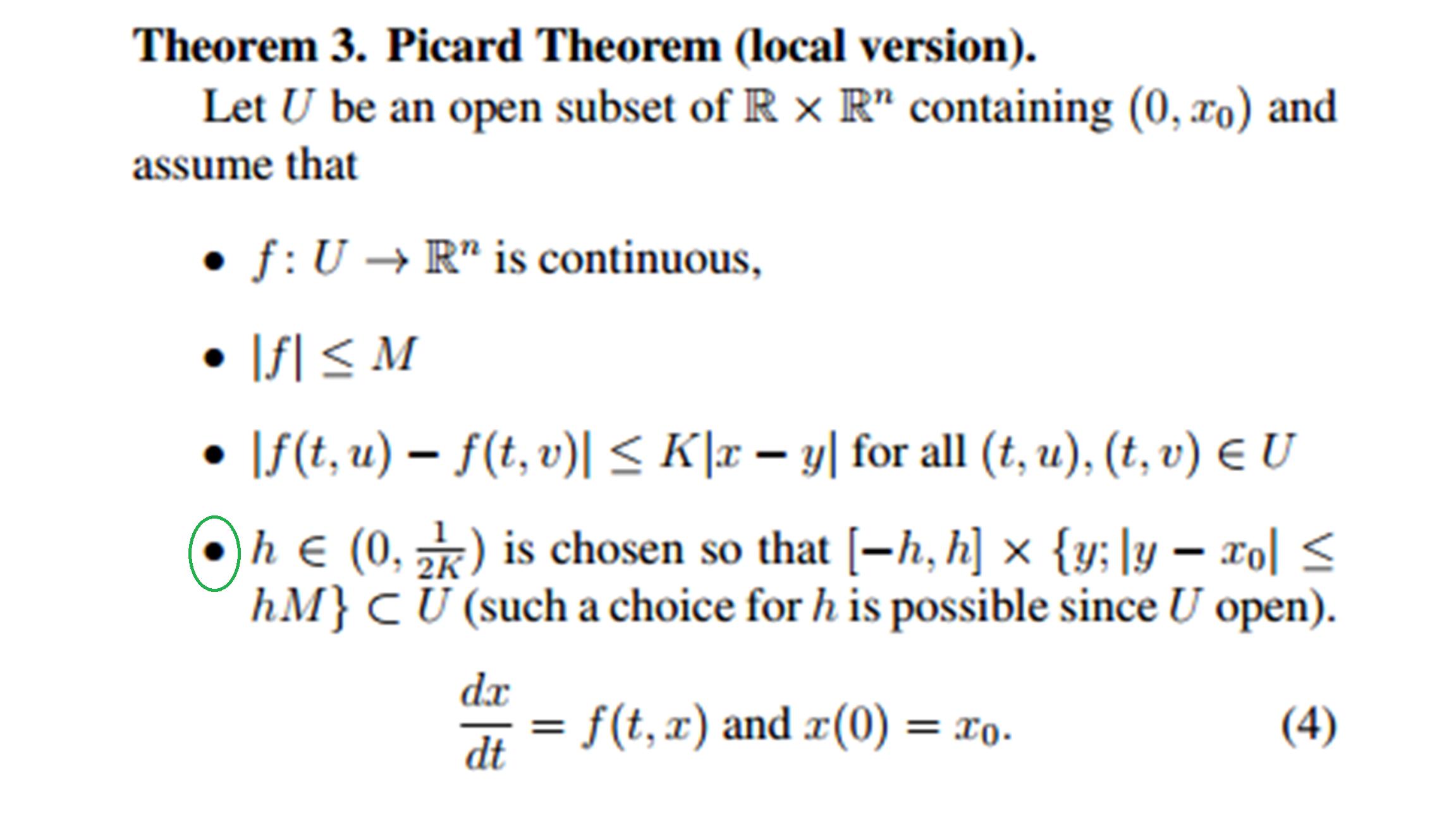

- The Picard-Lindelöf theorem, also known as the Cauchy-Lipschitz theorem, guarantees that the first-order initial value problem $y' = f(t, y)$, $y(t_0) = y_0$ (where $f$ may be nonlinear) has exactly one solution on some interval $|t - t_0| \le h$, provided $f$ satisfies certain conditions

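- The conditions for the Picard-Lindelöf theorem, imposed on a rectangle $R = \{(t, y) : |t - t_0| \le a,\ |y - y_0| \le b\}$ around the initial point, include:

- The continuity of the function $f(t, y)$ in the differential equation on $R$

- The Lipschitz continuity of $f$ with respect to $y$: there is a constant $L$ with $|f(t, y_1) - f(t, y_2)| \le L\,|y_1 - y_2|$ for all $(t, y_1)$ and $(t, y_2)$ in $R$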

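- The Lipschitz condition limits how fast two solutions can separate: by Grönwall's inequality, $|y_1(t) - y_2(t)| \le |y_1(t_0) - y_2(t_0)|\,e^{L|t - t_0|}$, so two solutions that start from the same initial value must coincide. This is why the condition is essential for proving uniqueness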

- Example: The differential equation with the initial condition satisfies the conditions of the Picard-Lindelöf theorem and has a unique solution for
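To see why the Lipschitz condition matters, consider a standard counterexample (our choice of illustration, not the example above): $y' = 3y^{2/3}$, $y(0) = 0$. The right-hand side is continuous but not Lipschitz near $y = 0$, and both $y(t) = 0$ and $y(t) = t^3$ solve the problem. The Python sketch below checks both candidates at sample times and shows the Lipschitz quotient growing without bound as $y \to 0$; it is a numerical illustration, not a proof.

```python
def f(t: float, y: float) -> float:
    """Right-hand side f(t, y) = 3 * |y|^(2/3): continuous, but not Lipschitz at y = 0."""
    return 3.0 * abs(y) ** (2.0 / 3.0)


def max_residual(y, dy, ts):
    """Largest |y'(t) - f(t, y(t))| over the sample times ts."""
    return max(abs(dy(t) - f(t, y(t))) for t in ts)


ts = [i / 10 for i in range(21)]  # sample times in [0, 2]

# Two different solutions through the same initial point y(0) = 0.
zero_residual = max_residual(lambda t: 0.0, lambda t: 0.0, ts)
cubic_residual = max_residual(lambda t: t**3, lambda t: 3 * t**2, ts)

# Lipschitz quotient |f(0, y) - f(0, 0)| / |y| = 3 |y|^(-1/3), unbounded near y = 0.
quotients = [abs(f(0.0, y) - f(0.0, 0.0)) / abs(y) for y in (1e-3, 1e-6, 1e-9)]

print(zero_residual, cubic_residual)
print(quotients)  # grows roughly like 30, 300, 3000
```

Both residuals are zero up to rounding, so uniqueness genuinely fails once the Lipschitz hypothesis is dropped, even though existence still holds.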

Solution Methods for Nonlinear Differential Equations

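- The contraction mapping principle (the Banach fixed-point theorem) is a key tool in proving the Picard-Lindelöf theorem and establishing the existence and uniqueness of solutions: on a short enough interval, the Lipschitz condition makes the integral operator behind Picard iteration a contraction, whose unique fixed point is the solution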

- The Picard iteration method is a constructive approach to finding the solution of a nonlinear differential equation, which involves iteratively approximating the solution using a sequence of functions

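- The Picard iteration starts with an initial approximation, usually the constant function $\phi_0(t) = y_0$, and generates a sequence of functions $\phi_1, \phi_2, \ldots$ that converges (on the interval given by the theorem) to the actual solution

- Each iteration is defined by $\phi_{n+1}(t) = y_0 + \int_{t_0}^{t} f\big(s, \phi_n(s)\big)\,ds$, where $y_0$ is the initial condition and $t_0$ is the initial time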

- Example: Applying the Picard iteration method to the differential equation with the initial condition yields the sequence of approximations , , , and so on, which converge to the exact solution
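A minimal numerical sketch of Picard iteration, assuming each iterate $\phi_n$ is represented by its values on a uniform grid and the integral is approximated with the cumulative trapezoidal rule. The test problem $y' = y$, $y(0) = 1$ (exact solution $e^t$) is a hypothetical choice for illustration, and the function name `picard_iterate` is ours.

```python
import math
from typing import Callable, List, Tuple


def picard_iterate(
    f: Callable[[float, float], float],
    t0: float,
    y0: float,
    t_end: float,
    n_steps: int = 1000,
    n_iter: int = 20,
) -> Tuple[List[float], List[float]]:
    """Approximate y' = f(t, y), y(t0) = y0 on [t0, t_end] with n_iter Picard steps
    phi_{n+1}(t) = y0 + integral from t0 to t of f(s, phi_n(s)) ds."""
    h = (t_end - t0) / n_steps
    ts = [t0 + i * h for i in range(n_steps + 1)]
    phi = [y0] * (n_steps + 1)  # phi_0(t) = y0, the constant initial guess
    for _ in range(n_iter):
        g = [f(t, y) for t, y in zip(ts, phi)]  # integrand f(s, phi_n(s)) on the grid
        new_phi = [y0]
        integral = 0.0
        for i in range(1, n_steps + 1):
            integral += 0.5 * h * (g[i - 1] + g[i])  # cumulative trapezoidal rule
            new_phi.append(y0 + integral)
        phi = new_phi
    return ts, phi


ts, ys = picard_iterate(lambda t, y: y, t0=0.0, y0=1.0, t_end=1.0)
print(ys[-1], math.e)  # the two values agree to several decimal places
```

With `n_iter=1` and `n_iter=2` the grid values reproduce the first iterates $1 + t$ and $1 + t + t^2/2$ (up to rounding), because the trapezoidal rule is exact for constant and linear integrands.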

Systems of Linear Differential Equations

Matrix Representation and General Solution

- A system of linear differential equations can be represented in matrix form as $\mathbf{x}'(t) = A\,\mathbf{x}(t)$, where $\mathbf{x}(t)$ is a vector of functions and $A$ is a constant $n \times n$ matrix

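- When $A$ has $n$ linearly independent eigenvectors, the general solution of the system can be expressed as a linear combination of exponential functions $e^{\lambda_i t}$, where the $\lambda_i$ are the eigenvalues of $A$

- The general solution of the system can then be written as $\mathbf{x}(t) = c_1 e^{\lambda_1 t}\mathbf{v}_1 + c_2 e^{\lambda_2 t}\mathbf{v}_2 + \cdots + c_n e^{\lambda_n t}\mathbf{v}_n$, where:

- $c_1, \ldots, c_n$ are arbitrary constants (fixed by the initial condition $\mathbf{x}(0)$)

- $\mathbf{v}_1, \ldots, \mathbf{v}_n$ are the eigenvectors corresponding to each eigenvalue

- $\lambda_1, \ldots, \lambda_n$ are the corresponding eigenvalues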

- Example: Consider the system of linear differential equations , . The matrix representation is . The eigenvalues are and , and the corresponding eigenvectors are and . The general solution is
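The eigenvector formula for the general solution can be checked numerically. In this Python sketch the matrix $A = \begin{pmatrix} 1 & 1 \\ 4 & 1 \end{pmatrix}$ is a hypothetical example (its eigenpairs are $\lambda_1 = 3$, $\mathbf{v}_1 = (1, 2)$ and $\lambda_2 = -1$, $\mathbf{v}_2 = (1, -2)$); the code fixes $c_1, c_2$ from an initial condition and lets us confirm that $\mathbf{x}' = A\mathbf{x}$.

```python
import math
from typing import List, Tuple

Vec = Tuple[float, float]

# Hypothetical example: A = [[1, 1], [4, 1]] with its eigenpairs (lambda_i, v_i).
A = [[1.0, 1.0], [4.0, 1.0]]
EIGENPAIRS: List[Tuple[float, Vec]] = [(3.0, (1.0, 2.0)), (-1.0, (1.0, -2.0))]


def coefficients(x0: Vec) -> Tuple[float, float]:
    """Solve c1*v1 + c2*v2 = x0 for (c1, c2) by Cramer's rule."""
    (_, v1), (_, v2) = EIGENPAIRS
    det = v1[0] * v2[1] - v2[0] * v1[1]
    c1 = (x0[0] * v2[1] - v2[0] * x0[1]) / det
    c2 = (v1[0] * x0[1] - x0[0] * v1[1]) / det
    return c1, c2


def x(t: float, c: Tuple[float, float]) -> Vec:
    """General solution x(t) = sum_i c_i * exp(lambda_i t) * v_i."""
    return tuple(
        sum(ci * math.exp(lam * t) * v[k] for ci, (lam, v) in zip(c, EIGENPAIRS))
        for k in range(2)
    )


def dxdt(t: float, c: Tuple[float, float]) -> Vec:
    """Term-by-term derivative: sum_i c_i * lambda_i * exp(lambda_i t) * v_i."""
    return tuple(
        sum(ci * lam * math.exp(lam * t) * v[k] for ci, (lam, v) in zip(c, EIGENPAIRS))
        for k in range(2)
    )


def apply_A(u: Vec) -> Vec:
    """Matrix-vector product A u."""
    return (A[0][0] * u[0] + A[0][1] * u[1], A[1][0] * u[0] + A[1][1] * u[1])


c = coefficients((2.0, 0.0))  # initial condition x(0) = (2, 0)
print(c)  # (1.0, 1.0)
print(x(0.0, c))  # (2.0, 0.0)
```

Comparing `dxdt(t, c)` with `apply_A(x(t, c))` at any `t` confirms that each term $c_i e^{\lambda_i t}\mathbf{v}_i$ solves the system on its own, which is why their sum does too.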

Eigenvalues and Eigenvectors

- Eigenvalues and eigenvectors of the matrix play a crucial role in determining the behavior and stability of the solutions to the system of linear differential equations

- The eigenvalues of the matrix can be found by solving the characteristic equation $\det(A - \lambda I) = 0$, where $\lambda$ represents the eigenvalues and $I$ is the identity matrix

- The eigenvectors corresponding to each eigenvalue can be found by solving the equation $(A - \lambda I)\mathbf{v} = \mathbf{0}$, where $\mathbf{v}$ (nonzero) represents the eigenvectors

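- Through the factors $e^{\lambda t}$, the real parts of the eigenvalues determine whether solutions grow (positive real part) or decay (negative real part), and nonzero imaginary parts produce oscillation; for real eigenvalues, the eigenvectors give the straight-line directions in the phase space along which the corresponding solutions move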

- Example: For the matrix , the characteristic equation is . The eigenvalues are and . The corresponding eigenvectors are and
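For a $2 \times 2$ matrix $A = \begin{pmatrix} a & b \\ c & d \end{pmatrix}$ the characteristic equation reduces to $\lambda^2 - (a + d)\lambda + (ad - bc) = 0$, so the eigenvalues follow from the quadratic formula, and an eigenvector can be read off one row of $(A - \lambda I)\mathbf{v} = \mathbf{0}$. A stdlib-only Python sketch (the function name `eig2x2` is ours; complex arithmetic covers complex eigenvalues):

```python
import cmath
from typing import List, Tuple

Pair = Tuple[complex, Tuple[complex, complex]]


def eig2x2(A: List[List[float]]) -> List[Pair]:
    """Eigenpairs of a real 2x2 matrix from its characteristic equation."""
    (a, b), (c, d) = A
    tr, det = a + d, a * d - b * c
    root = cmath.sqrt(tr * tr - 4 * det)  # complex sqrt handles negative discriminants
    lambdas = [(tr + root) / 2, (tr - root) / 2]
    if b == 0 and c == 0:
        # Diagonal matrix: the standard basis vectors are eigenvectors.
        return [(complex(a), (1, 0)), (complex(d), (0, 1))]
    pairs = []
    for lam in lambdas:
        if b != 0:
            v = (b, lam - a)  # first row: (a - lam) * b + b * (lam - a) = 0
        else:
            v = (lam - d, c)  # second row: c * (lam - d) + (d - lam) * c = 0
        pairs.append((lam, v))
    return pairs


for lam, v in eig2x2([[1.0, 1.0], [4.0, 1.0]]):
    print(lam, v)  # eigenvalues 3 and -1, eigenvectors proportional to (1, 2) and (1, -2)
```

Eigenvectors are only determined up to a nonzero scale factor, so any multiple of the returned `v` is equally valid.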

Laplace Transform for Initial Value Problems

Definition and Properties of the Laplace Transform

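- The Laplace transform is an integral transform that converts a function of time, $f(t)$, into a function $F(s)$ of a complex variable $s$, in the frequency domain (the $s$-domain)

- The Laplace transform of a function $f(t)$ is defined as $F(s) = \mathcal{L}\{f(t)\} = \int_0^{\infty} e^{-st} f(t)\,dt$, where $s$ is a complex variable, for those $s$ at which the integral converges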

- The Laplace transform has several important properties, such as linearity, scaling, shifting, differentiation, and integration, which facilitate the manipulation of transformed functions

- Example: The Laplace transform of the exponential function $e^{at}$ is $\mathcal{L}\{e^{at}\} = \dfrac{1}{s - a}$ for $\operatorname{Re}(s) > a$
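The defining integral can be checked numerically. This Python sketch (the function name `laplace_numeric` is ours) truncates $\int_0^\infty e^{-st} f(t)\,dt$ at a finite upper limit `t_max` and applies composite Simpson's rule; the truncation point and the sample values $a = 1$, $s = 3$ are illustrative assumptions, and the approach only works for real $s$ large enough that the integrand has decayed by `t_max`.

```python
import math
from typing import Callable


def laplace_numeric(f: Callable[[float], float], s: float,
                    t_max: float = 40.0, n: int = 20000) -> float:
    """Approximate F(s) = integral from 0 to infinity of e^{-st} f(t) dt
    by Simpson's rule on [0, t_max]. n must be even; the tail beyond t_max is ignored."""
    h = t_max / n
    total = f(0.0) + math.exp(-s * t_max) * f(t_max)
    for i in range(1, n):
        t = i * h
        weight = 4 if i % 2 == 1 else 2  # Simpson weights 1, 4, 2, 4, ..., 4, 1
        total += weight * math.exp(-s * t) * f(t)
    return total * h / 3


a, s = 1.0, 3.0
numeric = laplace_numeric(lambda t: math.exp(a * t), s)
exact = 1.0 / (s - a)
print(numeric, exact)  # both close to 0.5
```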

Solving Initial Value Problems using the Laplace Transform

- The Laplace transform is particularly useful for solving initial value problems involving higher-order linear differential equations with constant coefficients

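- The Laplace transform of the nth derivative of a function is given by $\mathcal{L}\{f^{(n)}(t)\} = s^n F(s) - s^{n-1} f(0) - s^{n-2} f'(0) - \cdots - f^{(n-1)}(0)$, where $f(0)$, $f'(0)$, ..., $f^{(n-1)}(0)$ are the initial conditions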

- By taking the Laplace transform of a linear differential equation, the equation is transformed into an algebraic equation in terms of $Y(s) = \mathcal{L}\{y(t)\}$, which can be solved for $Y(s)$ using algebraic manipulation

- The solution in the time domain, $y(t)$, can be obtained by applying the inverse Laplace transform to $Y(s)$ using techniques such as partial fraction decomposition, the convolution theorem, or tables of Laplace transforms

- Example: Consider the initial value problem , , . Taking the Laplace transform yields , where . Substituting the initial conditions and solving for gives . Using partial fraction decomposition and the inverse Laplace transform, the solution in the time domain is
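As a minimal sketch of the whole pipeline, take a homogeneous second-order equation $y'' + p\,y' + q\,y = 0$ with $y(0) = y_0$, $y'(0) = y_1$ (a hypothetical family chosen for illustration). Transforming with the derivative rule gives $(s^2 + ps + q)\,Y(s) = y_0 s + y_1 + p\,y_0$, so $Y(s) = N(s)/D(s)$. When $D$ has two distinct roots $r_1, r_2$, partial fractions give $Y(s) = \sum_i A_i/(s - r_i)$ with $A_i = N(r_i)/D'(r_i)$, and the table entry $\mathcal{L}^{-1}\{1/(s - r)\} = e^{rt}$ inverts each term. Repeated roots need a different formula and are not handled; the function name `solve_ivp_laplace` is ours.

```python
import cmath
import math
from typing import Callable


def solve_ivp_laplace(p: float, q: float, y0: float, y1: float) -> Callable[[float], float]:
    """Solve y'' + p y' + q y = 0, y(0) = y0, y'(0) = y1 via the Laplace transform.

    Y(s) = N(s) / D(s) with N(s) = y0*s + (y1 + p*y0) and D(s) = s^2 + p*s + q.
    Assumes D has two distinct (possibly complex) roots.
    """
    disc = cmath.sqrt(p * p - 4 * q)
    if abs(disc) < 1e-12:
        raise ValueError("repeated root: this sketch handles distinct roots only")
    roots = [(-p + disc) / 2, (-p - disc) / 2]

    def N(s: complex) -> complex:
        return y0 * s + (y1 + p * y0)

    def D_prime(s: complex) -> complex:
        return 2 * s + p

    # Partial-fraction coefficients (residues of N/D at each simple root).
    residues = [N(r) / D_prime(r) for r in roots]

    def y(t: float) -> float:
        # Inverse transform term by term: A / (s - r) -> A * exp(r t).
        value = sum(A * cmath.exp(r * t) for A, r in zip(residues, roots))
        return value.real  # imaginary parts cancel for real coefficients

    return y


# Hypothetical example: y'' + 3y' + 2y = 0, y(0) = 1, y'(0) = 0.
y = solve_ivp_laplace(p=3.0, q=2.0, y0=1.0, y1=0.0)
print(y(0.0), y(1.0))
```

For these values $Y(s) = \dfrac{s + 3}{(s + 1)(s + 2)} = \dfrac{2}{s + 1} - \dfrac{1}{s + 2}$, so $y(t) = 2e^{-t} - e^{-2t}$, which the function reproduces.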

Stability of Equilibrium Points

Concept of Stability

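- Stability refers to the behavior of solutions to a nonlinear system $\mathbf{x}' = \mathbf{f}(\mathbf{x})$ near equilibrium points, which are the points $\mathbf{x}^*$ where the rate of change of every system variable is zero, i.e. $\mathbf{f}(\mathbf{x}^*) = \mathbf{0}$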

- An equilibrium point is stable if nearby solutions remain close to the equilibrium point as time progresses, while an unstable equilibrium point is one where nearby solutions diverge from the equilibrium point over time

- The stability of an equilibrium point can be classified as:

- Asymptotically stable: The equilibrium point is stable and, in addition, nearby solutions converge to it as time approaches infinity

- Stable (Lyapunov stable): Solutions that start close enough to the equilibrium point remain close to it for all future time but may not converge to it

- Unstable: Nearby solutions diverge from the equilibrium point over time

- Example: Consider the nonlinear system , . The equilibrium points are and . The point is unstable, while the point is asymptotically stable
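The three cases can be seen numerically by perturbing an equilibrium at the origin and tracking the distance from it over time. The three linear systems below are hypothetical illustrations (not the example above): $x' = -x,\ y' = -y$ (asymptotically stable), $x' = y,\ y' = -x$ (stable; solutions circle the origin), and $x' = x,\ y' = y$ (unstable). A classical fourth-order Runge-Kutta step is used because plain Euler steps would artificially spiral outward in the middle case.

```python
import math
from typing import Callable, Tuple

State = Tuple[float, float]
Field = Callable[[State], State]


def rk4_step(f: Field, u: State, dt: float) -> State:
    """One classical Runge-Kutta (RK4) step for the autonomous system u' = f(u)."""
    def add(a: State, b: State, k: float) -> State:
        return (a[0] + k * b[0], a[1] + k * b[1])

    k1 = f(u)
    k2 = f(add(u, k1, dt / 2))
    k3 = f(add(u, k2, dt / 2))
    k4 = f(add(u, k3, dt))
    return tuple(u[i] + dt / 6 * (k1[i] + 2 * k2[i] + 2 * k3[i] + k4[i]) for i in range(2))


def distance_after(f: Field, u0: State, t_end: float = 10.0, dt: float = 0.01) -> float:
    """Distance from the equilibrium (0, 0) after integrating from u0 to t_end."""
    u = u0
    for _ in range(round(t_end / dt)):
        u = rk4_step(f, u, dt)
    return math.hypot(*u)


perturbation = (0.01, 0.0)  # small initial displacement from the equilibrium (0, 0)
systems = {
    "asymptotically stable": lambda u: (-u[0], -u[1]),
    "stable": lambda u: (u[1], -u[0]),
    "unstable": lambda u: (u[0], u[1]),
}
distances = {name: distance_after(f, perturbation) for name, f in systems.items()}
for name, d in distances.items():
    print(f"{name}: {d:.3e}")
```

The first distance shrinks toward zero, the second stays at the initial $0.01$, and the third grows like $0.01\,e^{t}$.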

Methods for Analyzing Stability

- Lyapunov stability theory provides a framework for analyzing the stability of equilibrium points without explicitly solving the nonlinear system

- Lyapunov functions are scalar-valued functions $V(\mathbf{x})$, positive definite about the equilibrium ($V(\mathbf{x}^*) = 0$ and $V > 0$ nearby), that can be used to determine the stability of an equilibrium point based on the sign of the function's time derivative along the system trajectories, $\dot V = \nabla V \cdot \mathbf{f}$

- If such a Lyapunov function exists and its time derivative is negative semidefinite, the equilibrium point is stable; if its time derivative is negative definite, the equilibrium point is asymptotically stable

- The linearization method involves approximating the nonlinear system near an equilibrium point using a linear system and analyzing the stability of the linearized system using eigenvalues of the Jacobian matrix

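- The Jacobian matrix $J = \left[\partial f_i / \partial x_j\right]$ is the matrix of partial derivatives of the system functions evaluated at the equilibrium point

- If the real parts of all eigenvalues of the Jacobian matrix are negative, the equilibrium point is asymptotically stable; if at least one eigenvalue has a positive real part, the equilibrium point is unstable. If no eigenvalue has a positive real part but some have zero real part, the linearization is inconclusive and another method (such as a Lyapunov function) is needed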

- Example: For the nonlinear system , , the equilibrium points are and . Using the linearization method, the Jacobian matrix at is , which has negative eigenvalues, indicating that is asymptotically stable. The Jacobian matrix at is , which has one positive and one negative eigenvalue, indicating that is unstable
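Both methods can be sketched in a few lines of Python. The linearization part estimates the Jacobian by central differences and applies the eigenvalue test above to the hypothetical system $x' = x - x^2,\ y' = -y$, whose equilibria are $(0, 0)$ and $(1, 0)$. The Lyapunov part uses $V = x^2 + y^2$ for the hypothetical system $x' = -x^3,\ y' = -y^3$, where $\dot V = -2x^4 - 2y^4$ is negative definite even though the Jacobian at the origin is the zero matrix, so linearization alone says nothing there. Sampling $\dot V$ on a grid is only a sanity check; the closed-form expression is what proves the claim. All function names are ours.

```python
import cmath
from typing import Callable, List, Tuple

State = Tuple[float, float]
Field = Callable[[float, float], State]


def jacobian(f: Field, x: float, y: float, h: float = 1e-6) -> List[List[float]]:
    """Central-difference estimate of the 2x2 Jacobian of f at (x, y)."""
    fxp, fxm = f(x + h, y), f(x - h, y)
    fyp, fym = f(x, y + h), f(x, y - h)
    return [
        [(fxp[0] - fxm[0]) / (2 * h), (fyp[0] - fym[0]) / (2 * h)],
        [(fxp[1] - fxm[1]) / (2 * h), (fyp[1] - fym[1]) / (2 * h)],
    ]


def linear_stability(J: List[List[float]], tol: float = 1e-6) -> str:
    """Eigenvalue test: all Re < 0 -> asymptotically stable, any Re > 0 -> unstable."""
    tr = J[0][0] + J[1][1]
    det = J[0][0] * J[1][1] - J[0][1] * J[1][0]
    root = cmath.sqrt(tr * tr - 4 * det)
    real_parts = [((tr + root) / 2).real, ((tr - root) / 2).real]
    if all(r < -tol for r in real_parts):
        return "asymptotically stable"
    if any(r > tol for r in real_parts):
        return "unstable"
    return "inconclusive"


def logistic(x: float, y: float) -> State:
    """Hypothetical system x' = x - x^2, y' = -y."""
    return (x - x * x, -y)


def cubic(x: float, y: float) -> State:
    """Hypothetical system x' = -x^3, y' = -y^3."""
    return (-x ** 3, -y ** 3)


def V_dot(x: float, y: float) -> float:
    """Derivative of V = x^2 + y^2 along cubic: grad V . f = -2x^4 - 2y^4."""
    fx, fy = cubic(x, y)
    return 2 * x * fx + 2 * y * fy


for eq in [(0.0, 0.0), (1.0, 0.0)]:
    print(eq, linear_stability(jacobian(logistic, *eq)))

grid = [(i / 10, j / 10) for i in range(-10, 11) for j in range(-10, 11) if (i, j) != (0, 0)]
print(all(V_dot(x, y) < 0 for x, y in grid))  # V_dot < 0 at every sampled point
print(linear_stability(jacobian(cubic, 0.0, 0.0)))  # linearization gives no verdict here
```

The two systems illustrate the complementary roles of the methods: linearization classifies $(0, 0)$ of the first system as unstable and $(1, 0)$ as asymptotically stable, while only the Lyapunov function settles the origin of the second.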

Solutions Near Singular Points

Classification of Singular Points

- Singular points, also known as critical points or equilibrium points, are points in the phase space where the rate of change of the system variables is zero

- The behavior of solutions near singular points can be classified into different types based on the eigenvalues of the Jacobian matrix evaluated at the singular point:

- Node: Both eigenvalues are real and have the same sign (stable node if negative, unstable node if positive)

- Saddle: Both eigenvalues are real, but one is positive and the other is negative

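- Center: Both eigenvalues are purely imaginary (stable, but not asymptotically stable, for the linear system; for a nonlinear system, a center of the linearization does not by itself determine stability)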

- Spiral: Both eigenvalues are complex with nonzero real and imaginary parts (stable spiral if the real part is negative, unstable spiral if the real part is positive)

- Example: Consider the system , . The singular point is . The Jacobian matrix at is , which has eigenvalues and . Since the eigenvalues are complex with a positive real part, the singular point is an unstable spiral
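For a planar system the classification needs only the trace $\tau$ and determinant $\Delta$ of the Jacobian, because its eigenvalues are $\lambda = \big(\tau \pm \sqrt{\tau^2 - 4\Delta}\big)/2$. A minimal Python sketch (the function name `classify` and the sample matrices are hypothetical; cases with a zero eigenvalue are simply flagged as degenerate):

```python
from typing import List


def classify(J: List[List[float]], tol: float = 1e-12) -> str:
    """Classify the singular point of a planar system from its 2x2 Jacobian J."""
    tau = J[0][0] + J[1][1]  # trace = sum of the eigenvalues
    delta = J[0][0] * J[1][1] - J[0][1] * J[1][0]  # determinant = product of the eigenvalues
    disc = tau * tau - 4 * delta
    if abs(delta) < tol:
        return "degenerate (zero eigenvalue)"
    if delta < 0:
        return "saddle"  # real eigenvalues of opposite sign
    if disc >= 0:
        # Real eigenvalues sharing the sign of the trace.
        return "stable node" if tau < 0 else "unstable node"
    if abs(tau) < tol:
        return "center"  # purely imaginary eigenvalues
    return "stable spiral" if tau < 0 else "unstable spiral"


print(classify([[1.0, -1.0], [1.0, 1.0]]))   # eigenvalues 1 +/- i
print(classify([[0.0, 1.0], [-1.0, 0.0]]))   # eigenvalues +/- i
print(classify([[-2.0, 0.0], [0.0, -1.0]]))  # eigenvalues -2, -1
print(classify([[1.0, 1.0], [4.0, 1.0]]))    # eigenvalues 3, -1
```

Working with $\tau$ and $\Delta$ avoids computing complex square roots: the sign of $\Delta$ separates saddles, and the sign of $\tau^2 - 4\Delta$ separates nodes from spirals and centers.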

Phase Portraits and Bifurcations

- The phase portrait is a graphical representation of the trajectories of a dynamical system in the phase space, which provides a qualitative understanding of the system's behavior

- Constructing a phase portrait involves:

- Identifying the singular points

- Determining their stability

- Sketching the trajectories in the phase space based on the eigenvalues and eigenvectors of the Jacobian matrix

- Nullclines, which are curves in the phase space where one of the system variables has a zero rate of change (for example $x' = 0$), can be used to locate singular points, which lie where an $x$-nullcline meets a $y$-nullcline, and to understand the flow of trajectories in the phase portrait

- Bifurcations, which are qualitative changes in the phase portrait as a parameter of the system varies, can be studied to understand how the behavior of the system changes with respect to the parameter

- Examples of bifurcations include saddle-node bifurcation, pitchfork bifurcation, and Hopf bifurcation

- Example: The system , , where is a parameter, exhibits a pitchfork bifurcation at . For , there is one stable equilibrium point at . For , the point becomes unstable, and two new stable equilibrium points appear at
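The standard one-dimensional model of a pitchfork bifurcation is the normal form $x' = \mu x - x^3$, used here as a hypothetical stand-in since it is not necessarily the system in the example above. Its equilibria are $x = 0$ and, for $\mu > 0$, $x = \pm\sqrt{\mu}$; the sign of $f'(x) = \mu - 3x^2$ at each one decides stability. The Python sketch below tabulates this for a few values of $\mu$ and, as a rough phase-portrait check, integrates one trajectory with forward Euler steps. Function names are ours.

```python
import math
from typing import List, Tuple


def equilibria(mu: float) -> List[Tuple[float, str]]:
    """Equilibria of x' = mu*x - x**3, each labelled by the sign of f'(x) = mu - 3x^2."""
    points = [0.0]
    if mu > 0:
        points += [math.sqrt(mu), -math.sqrt(mu)]
    result = []
    for x in points:
        slope = mu - 3 * x * x
        if slope < 0:
            label = "stable"
        elif slope > 0:
            label = "unstable"
        else:
            label = "non-hyperbolic"  # mu = 0: linearization is inconclusive
        result.append((x, label))
    return result


def simulate(mu: float, x0: float, t_end: float = 20.0, dt: float = 0.001) -> float:
    """Forward-Euler trajectory of x' = mu*x - x**3 from x0; returns x(t_end)."""
    x = x0
    for _ in range(round(t_end / dt)):
        x += dt * (mu * x - x ** 3)
    return x


for mu in (-1.0, 0.0, 1.0):
    print(mu, equilibria(mu))

# A trajectory starting just right of x = 0 drifts to the new stable branch sqrt(mu).
print(simulate(1.0, 0.1))
```

For $\mu < 0$ the origin is the only equilibrium and it is stable; once $\mu$ crosses zero it loses stability to the symmetric pair $\pm\sqrt{\mu}$, which is the pitchfork shape.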