Moment-generating functions and characteristic functions are powerful tools for analyzing random variables. They help us understand a variable's distribution, calculate moments, and work with sums of independent variables. These functions are especially useful when dealing with complex probability distributions.

These concepts build on earlier topics in the chapter about functions of random variables. They provide alternative ways to describe and analyze random variables, offering insights that may not be immediately apparent from the probability distribution alone.

Moment-generating functions

Definition and computation

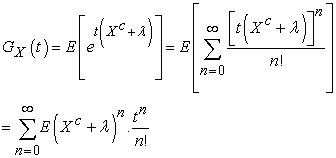

- The moment-generating function (MGF) of a random variable X is defined as $M_X(t) = E[e^{tX}]$, where $E[\cdot]$ denotes the expected value and $t$ is a real number

- For a discrete random variable X with probability mass function $p(x)$, the MGF is given by $M_X(t) = \sum_x e^{tx} p(x)$, where the sum is taken over all possible values of X (e.g., the outcome of a coin flip or a die roll)

- For a continuous random variable X with probability density function $f(x)$, the MGF is given by $M_X(t) = \int_{-\infty}^{\infty} e^{tx} f(x)\,dx$, where the integral is taken over the entire range of X (e.g., heights or weights)

- The MGF of a random variable uniquely determines its probability distribution, provided that the MGF exists (is finite) in a neighborhood of $t = 0$
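As a minimal sketch of the two formulas above, the Python snippet below evaluates the discrete sum for a fair die and approximates the continuous integral for an exponential density with rate $\lambda = 2$, whose MGF is $\lambda/(\lambda - t)$ for $t < \lambda$. The helper names (`mgf_discrete`, `mgf_continuous`, `simpson`) are illustrative, and truncating the integral to a finite interval is an assumption that works here only because the integrand decays quickly.

```python
import math


def mgf_discrete(pmf, t):
    """M_X(t) = sum over x of e^{t x} p(x), for a pmf given as {value: probability}."""
    return sum(math.exp(t * x) * p for x, p in pmf.items())


def simpson(g, a, b, n=20_000):
    """Composite Simpson's rule for the integral of g over [a, b]; n must be even."""
    h = (b - a) / n
    total = g(a) + g(b)
    for k in range(1, n):
        total += (4 if k % 2 else 2) * g(a + k * h)
    return total * h / 3


def mgf_continuous(pdf, t, lower, upper):
    """M_X(t) = integral of e^{t x} f(x) dx, truncated to [lower, upper] (an assumption)."""
    return simpson(lambda x: math.exp(t * x) * pdf(x), lower, upper)


die = {k: 1 / 6 for k in range(1, 7)}      # fair six-sided die


def exp_pdf(x, lam=2.0):
    # Exponential density with rate lam on [0, inf); its MGF is lam / (lam - t) for t < lam
    return lam * math.exp(-lam * x)


print(mgf_discrete(die, 0.0))               # M_X(0) = 1 for every distribution (up to rounding)
print(mgf_continuous(exp_pdf, 0.5, 0.0, 50.0), 2.0 / (2.0 - 0.5))
```

Choosing $t = 0.5 < \lambda$ matters: for $t \ge \lambda$ the integral diverges, and the truncated numerical version would silently return a meaningless finite number.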

Moments and cumulants

- The n-th moment of a random variable X is defined as $E[X^n]$ and can be obtained by differentiating the MGF n times and evaluating at $t = 0$: $E[X^n] = M_X^{(n)}(0)$, where $M_X^{(n)}$ denotes the n-th derivative of $M_X$ with respect to $t$

- The first moment, $E[X] = M_X'(0)$, is the mean $\mu$ of the random variable

- The second moment, $E[X^2] = M_X''(0)$, is related to the variance by $\operatorname{Var}(X) = E[X^2] - (E[X])^2$

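- The n-th cumulant of a random variable X, denoted by $\kappa_n$, is defined as the n-th derivative of the logarithm of the MGF (the cumulant-generating function $K_X(t) = \ln M_X(t)$) evaluated at $t = 0$: $\kappa_n = \left.\frac{d^n}{dt^n} \ln M_X(t)\right|_{t=0}$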

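- Cumulants are determined by the moments (and vice versa): the first cumulant is the mean, $\kappa_1 = E[X]$, and the second cumulant is the variance, $\kappa_2 = \operatorname{Var}(X)$

- Higher-order cumulants describe the shape of the distribution, such as skewness ($\gamma_1 = \kappa_3 / \kappa_2^{3/2}$) and excess kurtosis ($\gamma_2 = \kappa_4 / \kappa_2^2$)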
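The sketch below illustrates both routes for a fair six-sided die (an assumed example). Central finite differences of $M_X$ at $t = 0$ approximate the first two moments, and exact raw moments are converted to cumulants with the recursion $\kappa_n = E[X^n] - \sum_{m=1}^{n-1}\binom{n-1}{m-1}\kappa_m E[X^{n-m}]$, which follows from differentiating $M_X = e^{K_X}$ with the Leibniz rule. The function names are hypothetical, and the step size `h` is an arbitrary choice.

```python
import math
from fractions import Fraction
from math import comb


def mgf_die(t):
    # MGF of a fair six-sided die: (1/6) * sum_{k=1}^{6} e^{k t}
    return sum(math.exp(k * t) for k in range(1, 7)) / 6


# Moments as derivatives of the MGF at t = 0, approximated by central differences
h = 1e-4
mean_fd = (mgf_die(h) - mgf_die(-h)) / (2 * h)
second_fd = (mgf_die(h) - 2 * mgf_die(0.0) + mgf_die(-h)) / h**2


def raw_moments(max_order):
    # Exact E[X^n] = M^{(n)}(0) = (1/6) * sum_k k^n for n = 0..max_order, as fractions
    return [Fraction(sum(k**n for k in range(1, 7)), 6) for n in range(max_order + 1)]


def cumulants_from_moments(mu):
    """kappa_n = mu_n - sum_{m=1}^{n-1} C(n-1, m-1) kappa_m mu_{n-m}, with mu[0] = 1."""
    kappa = [Fraction(0)] * len(mu)
    for n in range(1, len(mu)):
        kappa[n] = mu[n] - sum(comb(n - 1, m - 1) * kappa[m] * mu[n - m] for m in range(1, n))
    return kappa


mu = raw_moments(4)
kappa = cumulants_from_moments(mu)
variance = kappa[2]                              # same as mu[2] - mu[1]**2
skewness = kappa[3] / float(variance) ** 1.5
excess_kurtosis = kappa[4] / variance**2

print(mean_fd, second_fd)                        # close to 7/2 and 91/6
print(kappa[1:], skewness, excess_kurtosis)
```

For the die this yields $\kappa_1 = 7/2$, $\kappa_2 = 35/12$, $\kappa_3 = 0$ (a symmetric distribution has zero skewness), and $\kappa_4 = -259/24$, so the excess kurtosis is $-222/175$. Exact fractions avoid the cancellation error that finite differences suffer at higher orders.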

Applications of moment-generating functions

Uniqueness and distribution identification


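- If two random variables have MGFs that exist and agree on a neighborhood of $t = 0$, they have the same probability distribution

- In some cases, the MGF is immediately recognizable as that of a known distribution, which identifies the distribution without explicit derivation: for example, $\lambda/(\lambda - t)$ (for $t < \lambda$) is the MGF of an exponential distribution with rate $\lambda$, and $\exp(\mu t + \sigma^2 t^2/2)$ is the MGF of a normal distribution with mean $\mu$ and variance $\sigma^2$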
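A standard use of recognition: for independent X and Y, $M_{X+Y}(t) = M_X(t)\,M_Y(t)$. With normal MGFs the exponents add, so $\exp(\mu_1 t + \sigma_1^2 t^2/2)\exp(\mu_2 t + \sigma_2^2 t^2/2)$ is the MGF of a normal distribution with mean $\mu_1 + \mu_2$ and variance $\sigma_1^2 + \sigma_2^2$, and uniqueness identifies the distribution of the sum. The snippet below is only a numerical spot check of that algebra at a few arbitrary points, with parameter values chosen for illustration.

```python
import math


def normal_mgf(t, mu, sigma2):
    # MGF of a normal distribution with mean mu and variance sigma2; finite for every real t
    return math.exp(mu * t + 0.5 * sigma2 * t * t)


# Illustrative parameters: X ~ N(1, 4) and Y ~ N(-2, 9), assumed independent
mu_x, var_x = 1.0, 4.0
mu_y, var_y = -2.0, 9.0

ts = [-1.0, -0.3, 0.0, 0.4, 1.2]
product = [normal_mgf(t, mu_x, var_x) * normal_mgf(t, mu_y, var_y) for t in ts]
candidate = [normal_mgf(t, mu_x + mu_y, var_x + var_y) for t in ts]

for t, p, c in zip(ts, product, candidate):
    print(t, p, c)   # the two columns agree up to floating-point rounding
```

Agreement at finitely many points does not prove equality by itself; the proof is the one-line exponent calculation, and the code only guards against algebra slips.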

Deriving probability distributions

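- To derive the probability distribution from the MGF, one can use techniques such as partial fraction decomposition (splitting a rational MGF into a sum of recognizable MGFs) or power series expansion

- For discrete random variables, the probability mass function can be obtained by writing the MGF as a sum of exponentials, $M_X(t) = \sum_x c_x e^{xt}$, and reading off each coefficient $c_x$ as $P(X = x)$; for integer-valued X this is a power series in $e^t$. (A power series in $t$ gives the moments instead, since the coefficient of $t^n/n!$ is $E[X^n]$.)

- For continuous random variables, the probability density function can be obtained by inverting the MGF: $M_X(-s)$ is the two-sided Laplace transform of $f$, so $f$ can be recovered with the inverse Laplace transform, which is given by the Bromwich integral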
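As an illustrative (hypothetical) partial-fraction example, take $M_X(t) = \frac{2}{(1-t)(2-t)}$ for $t < 1$. It splits as $\frac{2}{1-t} - \frac{2}{2-t} = 2\cdot\frac{1}{1-t} - 1\cdot\frac{2}{2-t}$, a combination of exponential MGFs $\lambda/(\lambda - t)$ with rates 1 and 2. Because the transform is linear and invertible, the density is the same combination of exponential densities, $f(x) = 2e^{-x} - 2e^{-2x}$ for $x \ge 0$ (the density of the sum of independent exponentials with rates 1 and 2). The sketch below checks numerically that this density integrates to 1 and reproduces the MGF at $t = 0.5$; the truncation point is an assumption.

```python
import math


def target_mgf(t):
    # Hypothetical example MGF, finite only for t < 1
    return 2.0 / ((1.0 - t) * (2.0 - t))


def recovered_pdf(x):
    # From 2/((1-t)(2-t)) = 2 * [1/(1-t)] - 1 * [2/(2-t)] and lam/(lam - t) <-> lam e^{-lam x}
    return 2.0 * math.exp(-x) - 2.0 * math.exp(-2.0 * x)


def simpson(g, a, b, n=20_000):
    """Composite Simpson's rule for the integral of g over [a, b]; n must be even."""
    h = (b - a) / n
    total = g(a) + g(b)
    for k in range(1, n):
        total += (4 if k % 2 else 2) * g(a + k * h)
    return total * h / 3


# Truncating at x = 60 is an assumption; both integrands are negligible beyond it
total_mass = simpson(recovered_pdf, 0.0, 60.0)
mgf_check = simpson(lambda x: math.exp(0.5 * x) * recovered_pdf(x), 0.0, 60.0)
print(total_mass, mgf_check, target_mgf(0.5))
```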

Properties of characteristic functions

Definition and existence

- The characteristic function (CF) of a random variable X is defined as $\varphi_X(t) = E[e^{itX}]$, where $i$ is the imaginary unit and $t$ is a real number

- The CF exists for every random variable, because $|e^{itX}| = 1$ keeps the expectation finite; the MGF, by contrast, may not exist for any $t \neq 0$ (e.g., the Cauchy distribution)
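Because $|e^{itx}| = 1$ for every real $t$ and $x$, the CF is always finite. The sketch below draws standard Cauchy samples by inverse-CDF sampling (a choice made for illustration) and averages $e^{itX}$; every term has modulus 1, so the average stays bounded and approaches the standard Cauchy CF $e^{-|t|}$. The sample size and seed are arbitrary assumptions.

```python
import cmath
import math
import random


def sample_standard_cauchy(rng):
    # Inverse-CDF sampling: the standard Cauchy quantile function is tan(pi * (u - 1/2))
    return math.tan(math.pi * (rng.random() - 0.5))


def empirical_cf(samples, t):
    # Sample average of e^{i t x}; every term has modulus 1, so the average is always finite
    return sum(cmath.exp(1j * t * x) for x in samples) / len(samples)


rng = random.Random(12345)                  # arbitrary seed
samples = [sample_standard_cauchy(rng) for _ in range(200_000)]

for t in (0.5, 1.0, 2.0):
    # The exact CF of the standard Cauchy distribution is e^{-|t|}
    print(t, empirical_cf(samples, t), math.exp(-abs(t)))

# Averaging e^{t x} instead would not settle down: E[e^{tX}] is infinite for every t != 0,
# and for the most extreme samples math.exp(t * x) could even overflow.
```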

Properties and applications

- The CF uniquely determines the probability distribution of a random variable, and the probability density function (if it exists) can be obtained by taking the inverse Fourier transform of the CF

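- CFs have several basic properties:

  - $\varphi_X(0) = 1$ and $|\varphi_X(t)| \le 1$ for all real $t$

  - $\varphi_X(-t) = \overline{\varphi_X(t)}$, where the bar denotes the complex conjugate

- CFs are particularly useful for studying sums of independent random variables, because the CF of the sum is the product of the individual CFs: if X and Y are independent, $\varphi_{X+Y}(t) = \varphi_X(t)\,\varphi_Y(t)$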
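A small check of these properties, assuming a fair die as the example: the CF of the sum of two independent dice, computed from the convolved pmf, should match the square of a single die's CF, and $\varphi_X(-t)$ should equal the complex conjugate of $\varphi_X(t)$. `cf_discrete` is a hypothetical helper, and the $t$ values are arbitrary.

```python
import cmath
from collections import defaultdict


def cf_discrete(pmf, t):
    """phi_X(t) = sum over x of e^{i t x} p(x), for a pmf given as {value: probability}."""
    return sum(cmath.exp(1j * t * x) * p for x, p in pmf.items())


die = {k: 1 / 6 for k in range(1, 7)}

# pmf of the sum of two independent dice, by direct convolution
two_dice = defaultdict(float)
for a, pa in die.items():
    for b, pb in die.items():
        two_dice[a + b] += pa * pb

for t in (0.3, 1.0, 2.5):
    direct = cf_discrete(two_dice, t)          # CF computed from the pmf of the sum
    via_product = cf_discrete(die, t) ** 2     # product of the two individual CFs
    conj_gap = abs(cf_discrete(die, -t) - cf_discrete(die, t).conjugate())
    print(t, abs(direct - via_product), conj_gap)   # both gaps are at rounding level
```

Multiplying CFs replaces the convolution loop, which is why CFs (and MGFs, when they exist) are convenient for sums of many independent terms.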

Deriving distributions from MGFs and CFs

Moment-generating functions


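- For discrete random variables, the probability mass function can be obtained by expanding the MGF in powers of $e^t$ and reading off the coefficients as probabilities. For a Poisson distribution with mean $\lambda$, $M_X(t) = e^{\lambda(e^t - 1)} = e^{-\lambda}\sum_{k=0}^{\infty} \frac{\lambda^k}{k!} e^{kt}$, so $P(X = k) = e^{-\lambda}\lambda^k / k!$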

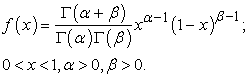

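- For continuous random variables, the probability density function can be obtained by inverting the MGF with the inverse Laplace transform (computed, for example, via the Bromwich integral). For instance, inverting $M_X(t) = (1 - \theta t)^{-k}$ for $t < 1/\theta$ gives the gamma density $f(x) = \frac{x^{k-1} e^{-x/\theta}}{\Gamma(k)\,\theta^k}$ for $x > 0$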
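The Poisson expansion can also be carried out mechanically. Substituting $z = e^t$ turns $M_X(t) = e^{\lambda(e^t - 1)}$ into $G(z) = \exp(H(z))$ with $H(z) = -\lambda + \lambda z$, and the power-series coefficients of $G$ follow from $G' = H'G$, i.e. $n g_n = \sum_{k=1}^{n} k h_k g_{n-k}$. The sketch below (with the illustrative value $\lambda = 3$ and a hypothetical helper name) recovers $P(X = k)$ as those coefficients.

```python
import math


def exp_series_coefficients(h, n_terms):
    """First n_terms coefficients g_0, g_1, ... of G(z) = exp(H(z)).

    h lists the coefficients of the polynomial H(z) = h[0] + h[1] z + ...
    Uses G' = H' G, which gives n g_n = sum_{k=1}^{n} k h_k g_{n-k}.
    """
    g = [math.exp(h[0])] + [0.0] * (n_terms - 1)
    for n in range(1, n_terms):
        g[n] = sum(k * h[k] * g[n - k] for k in range(1, min(n, len(h) - 1) + 1)) / n
    return g


lam = 3.0
# Poisson MGF exp(lam (e^t - 1)) with z = e^t is exp(H(z)), where H(z) = -lam + lam z
probs = exp_series_coefficients([-lam, lam], 10)

for k, p in enumerate(probs):
    # probs[k] is the coefficient of e^{k t}, i.e. P(X = k)
    print(k, p, math.exp(-lam) * lam**k / math.factorial(k))
```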

Characteristic functions

- To derive the probability distribution from the CF, one can use the inverse Fourier transform

- For continuous random variables whose CF is absolutely integrable, the probability density function is the inverse Fourier transform of the CF: $f(x) = \frac{1}{2\pi}\int_{-\infty}^{\infty} e^{-itx}\,\varphi_X(t)\,dt$

- This technique is particularly useful for distributions whose CF has a closed form but whose probability density function does not, such as most stable distributions
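A minimal numerical version of the inversion formula: since $\varphi_X(-t) = \overline{\varphi_X(t)}$, the integral folds to $f(x) = \frac{1}{\pi}\int_0^{\infty} \operatorname{Re}\!\left[e^{-itx}\varphi_X(t)\right]dt$, which the sketch truncates at `t_max` and evaluates with Simpson's rule. The truncation point and step count are assumptions that suit quickly decaying CFs. The standard Cauchy CF $e^{-|t|}$ and standard normal CF $e^{-t^2/2}$ have known densities to compare against; the symmetric stable CF $e^{-|t|^{\alpha}}$ with $\alpha = 1.5$ has no closed-form density, although its value at $x = 0$ is $\Gamma(1 + 1/\alpha)/\pi$.

```python
import cmath
import math


def invert_cf(phi, x, t_max=40.0, n=40_000):
    """Approximate f(x) = (1/pi) * integral over [0, t_max] of Re[e^{-i t x} phi(t)] dt.

    Uses phi(-t) = conj(phi(t)) to fold the integral onto [0, inf), truncates it at
    t_max (assumes phi decays quickly), and applies composite Simpson's rule (n even).
    """
    h = t_max / n

    def g(t):
        return (cmath.exp(-1j * t * x) * phi(t)).real

    total = g(0.0) + g(t_max)
    for k in range(1, n):
        total += (4 if k % 2 else 2) * g(k * h)
    return total * h / (3 * math.pi)


def cauchy_cf(t):
    return math.exp(-abs(t))            # standard Cauchy; density 1 / (pi (1 + x^2))


def normal_cf(t):
    return math.exp(-t * t / 2)         # standard normal


def symmetric_stable_cf(t, alpha=1.5):
    return math.exp(-abs(t) ** alpha)   # symmetric alpha-stable; no closed-form density here


for x in (0.0, 1.0, 3.0):
    print(x, invert_cf(cauchy_cf, x), 1 / (math.pi * (1 + x * x)))

print(invert_cf(symmetric_stable_cf, 0.0), math.gamma(1 + 1 / 1.5) / math.pi)
print(invert_cf(symmetric_stable_cf, 2.0))   # no closed form to compare against
```

For heavy-tailed or slowly decaying CFs, a fixed truncation like this can be inaccurate; dedicated stable-distribution software typically uses more careful integral representations.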