Variance and Standard Deviation

Definition and Basic Properties

Variance measures how spread out a random variable's values are from its mean. It's defined as the expected value of the squared deviation from the mean:

$$\text{Var}(X) = E\left[(X - \mu)^2\right], \quad \text{where } \mu = E[X]$$

You'll also see it written as $\sigma^2$. Standard deviation is just the square root of variance, denoted $\sigma$ or $\text{SD}(X)$. The reason standard deviation gets used so often is that it's in the same units as the original data, while variance is in squared units.

A few core properties to know:

- Variance is always non-negative. It equals zero only when the random variable is constant (no spread at all).

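- Adding a constant shifts the distribution but doesn't change the spread: $\text{Var}(X + c) = \text{Var}(X)$

- Scaling by a constant squares the effect on variance: $\text{Var}(aX) = a^2\,\text{Var}(X)$

- For standard deviation, the scaling rule uses absolute value: $\text{SD}(aX) = |a|\,\text{SD}(X)$

- For independent random variables $X$ and $Y$: $\text{Var}(X + Y) = \text{Var}(X) + \text{Var}(Y)$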

That squaring in the scaling rule is what makes variance "non-linear." If you double $X$, the variance quadruples, not doubles. This trips people up on exams.
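As a quick sanity check, the shift and scaling rules can be tried on a small data set. This is only a sketch: the list `values` and the constants `c` and `a` are made up for illustration, and the data is treated as a random variable whose outcomes are all equally likely, so the population variance from Python's `statistics` module plays the role of $\text{Var}(X)$.

```python
import statistics

# Hypothetical data, treated as equally likely outcomes of X.
values = [2, 4, 4, 4, 5, 5, 7, 9]

var_x = statistics.pvariance(values)  # population variance of X
sd_x = statistics.pstdev(values)      # population standard deviation of X

c, a = 10, -3  # arbitrary shift and scale constants (assumptions)

var_shifted = statistics.pvariance([x + c for x in values])
var_scaled = statistics.pvariance([a * x for x in values])
sd_scaled = statistics.pstdev([a * x for x in values])

print(var_x, var_shifted)        # shifting leaves the variance unchanged
print(var_scaled, a**2 * var_x)  # scaling multiplies the variance by a^2
print(sd_scaled, abs(a) * sd_x)  # scaling multiplies the SD by |a|
```

Note that the negative scale factor still produces a positive standard deviation, which is why the rule uses $|a|$ rather than $a$.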

Interpretation and Significance

- Larger variance means data points are more spread out from the mean (think volatile stock prices).

- Smaller variance means values cluster tightly around the mean (think consistent manufacturing output).

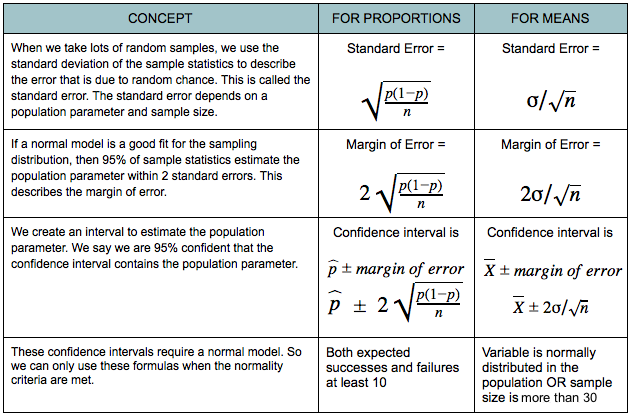

- Variance and standard deviation show up everywhere: risk assessment in finance, quality control in engineering, confidence intervals and hypothesis testing in statistics.

- Standard deviation is often preferred for interpretation because its units match the data.

Calculating Variance and Standard Deviation

Discrete Random Variables

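For a discrete random variable $X$ with probability mass function $p(x) = P(X = x)$, here's the process:

- Find the mean: $\mu = E[X] = \sum_x x\,p(x)$

- Compute variance using the definition: $\text{Var}(X) = \sum_x (x - \mu)^2\,p(x)$

- Take the square root for standard deviation: $\sigma = \sqrt{\text{Var}(X)}$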

There's also a shortcut formula that's often easier to compute:

$$\text{Var}(X) = E[X^2] - (E[X])^2$$

where $E[X^2] = \sum_x x^2\,p(x)$. This avoids subtracting the mean from every value. Both formulas give the same result, so use whichever feels cleaner for the problem.
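The two routes to the variance can be compared side by side in a few lines of Python. This is a minimal sketch, assuming the PMF is stored as a dictionary mapping each value to its probability; the function names and the example `pmf` are hypothetical and chosen only for illustration.

```python
import math

def mean(pmf):
    """E[X] for a PMF given as {value: probability}."""
    return sum(x * p for x, p in pmf.items())

def variance_definition(pmf):
    """Var(X) as the expected squared deviation from the mean."""
    mu = mean(pmf)
    return sum((x - mu) ** 2 * p for x, p in pmf.items())

def variance_shortcut(pmf):
    """Var(X) as E[X^2] - (E[X])^2."""
    second_moment = sum(x ** 2 * p for x, p in pmf.items())
    return second_moment - mean(pmf) ** 2

# Hypothetical PMF, not taken from the text.
pmf = {0: 0.2, 1: 0.5, 2: 0.3}

var_def = variance_definition(pmf)
var_short = variance_shortcut(pmf)
sd = math.sqrt(var_def)

print(var_def, var_short, sd)  # both variances agree up to rounding
```

For this PMF the mean is 1.1 and both formulas give a variance of 0.49 (up to floating-point rounding), so the standard deviation is 0.7.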

Calculation Examples

Fair six-sided die:

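- Each outcome has probability $p(x) = \frac{1}{6}$ for $x = 1, 2, \ldots, 6$

- Mean: $E[X] = \frac{1 + 2 + 3 + 4 + 5 + 6}{6} = 3.5$

- Variance: $\text{Var}(X) = E[X^2] - (E[X])^2 = \frac{91}{6} - 3.5^2 = \frac{35}{12} \approx 2.92$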

Biased coin with P(Heads) = 0.6:

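- Let $X = 1$ for Heads, $X = 0$ for Tails

- Mean: $E[X] = 1(0.6) + 0(0.4) = 0.6$

- Variance: $\text{Var}(X) = E[X^2] - (E[X])^2 = 0.6 - 0.6^2 = 0.24$

This is a Bernoulli random variable, and you can verify with the general Bernoulli variance formula: $\text{Var}(X) = p(1 - p) = 0.6 \times 0.4 = 0.24$.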
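Both examples can be checked with exact arithmetic. The sketch below uses Python's `fractions.Fraction` so there is no rounding; the helper `variance` and the dictionary layout are assumptions made for this illustration.

```python
from fractions import Fraction

def variance(pmf):
    """Var(X) = E[X^2] - (E[X])^2 for a PMF given as {value: probability}."""
    mean = sum(x * p for x, p in pmf.items())
    second_moment = sum(x * x * p for x, p in pmf.items())
    return second_moment - mean ** 2

# Fair six-sided die: each face has probability 1/6.
die = {face: Fraction(1, 6) for face in range(1, 7)}

# Biased coin: X = 1 for Heads (probability 0.6), X = 0 for Tails.
p = Fraction(6, 10)
coin = {1: p, 0: 1 - p}

die_var = variance(die)      # 35/12
coin_var = variance(coin)    # 6/25, which is 0.24
bernoulli_var = p * (1 - p)  # general Bernoulli formula p(1 - p)

print(die_var, coin_var, bernoulli_var)
```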

Variance and Standard Deviation for Continuous Variables

Computation Methods

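For a continuous random variable $X$ with probability density function $f(x)$, the formulas mirror the discrete case but with integrals replacing sums:

- Find the mean: $\mu = E[X] = \int_{-\infty}^{\infty} x\,f(x)\,dx$

- Compute variance: $\text{Var}(X) = \int_{-\infty}^{\infty} (x - \mu)^2\,f(x)\,dx$

- Take the square root: $\sigma = \sqrt{\text{Var}(X)}$

The shortcut formula works here too: $\text{Var}(X) = E[X^2] - (E[X])^2$ where $E[X^2] = \int_{-\infty}^{\infty} x^2\,f(x)\,dx$. All integrals are taken over the entire support of $X$.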
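When the integrals are awkward to do by hand, they can be approximated numerically. The sketch below uses a simple midpoint rule and a made-up density, $f(x) = 2x$ on $[0, 1]$, chosen because its exact answers are easy to confirm (mean $\frac{2}{3}$, variance $\frac{1}{18}$). The helper `integrate` and the density are assumptions for illustration, not a recommended production method.

```python
def integrate(g, lo, hi, n=10_000):
    """Midpoint-rule approximation of the integral of g over [lo, hi]."""
    width = (hi - lo) / n
    return width * sum(g(lo + (i + 0.5) * width) for i in range(n))

# Hypothetical density for illustration: f(x) = 2x on the support [0, 1].
def f(x):
    return 2 * x

lo, hi = 0.0, 1.0

total = integrate(f, lo, hi)                    # should be close to 1
mu = integrate(lambda x: x * f(x), lo, hi)      # E[X]
var_def = integrate(lambda x: (x - mu) ** 2 * f(x), lo, hi)
var_short = integrate(lambda x: x ** 2 * f(x), lo, hi) - mu ** 2
sd = var_def ** 0.5

print(total, mu, var_def, var_short, sd)
```

Checking that `total` is close to 1 is a cheap way to catch a density that was typed in wrong or integrated over the wrong support.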

Application to Specific Distributions

For common continuous distributions, the variance formulas are already worked out:

- Uniform distribution $X \sim \text{Uniform}(a, b)$: $\text{Var}(X) = \frac{(b - a)^2}{12}$

- Example: $X \sim \text{Uniform}(2, 8)$ has variance $\frac{(8 - 2)^2}{12} = 3$

- Exponential distribution with rate $\lambda$: $\text{Var}(X) = \frac{1}{\lambda^2}$

- Example: $X \sim \text{Exponential}(\lambda = 2)$ has variance $\frac{1}{2^2} = 0.25$

- Normal distribution $N(\mu, \sigma^2)$: Variance is $\sigma^2$ by definition.

- Example: The standard normal $N(0, 1)$ has variance 1.

Knowing these formulas saves you from doing the integral every time. On exams, you'll typically be expected to recognize which distribution you're working with and apply the right formula.
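If you want to convince yourself that the closed-form formulas are right, a simulation is one way to do it. The sketch below draws samples with Python's `random` module and compares the sample variance to each formula. The parameter values, sample size, and seed are arbitrary choices for illustration, and the simulated values will only be close to the formulas, not equal to them.

```python
import random
import statistics

random.seed(0)  # fixed seed so the run is repeatable
n = 50_000      # sample size (arbitrary)

a, b = 2.0, 8.0  # illustrative Uniform(a, b) parameters
rate = 2.0       # illustrative exponential rate (lambda)

uniform_sample = [random.uniform(a, b) for _ in range(n)]
expo_sample = [random.expovariate(rate) for _ in range(n)]
normal_sample = [random.gauss(0.0, 1.0) for _ in range(n)]

uniform_var = statistics.pvariance(uniform_sample)
expo_var = statistics.pvariance(expo_sample)
normal_var = statistics.pvariance(normal_sample)

# Each line prints the simulated variance next to the formula value.
print(uniform_var, (b - a) ** 2 / 12)
print(expo_var, 1 / rate ** 2)
print(normal_var, 1.0)
```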

Variance Properties for Independent Variables

Additive Properties

These properties hold when the random variables are independent (more generally, whenever they are uncorrelated):

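- Sum: $\text{Var}(X + Y) = \text{Var}(X) + \text{Var}(Y)$

- Difference: $\text{Var}(X - Y) = \text{Var}(X) + \text{Var}(Y)$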

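That second one catches many students off guard. Variance measures spread, and subtracting one random variable from another still introduces variability from both. Formally, $X - Y = X + (-1)Y$, and the scaling rule gives $\text{Var}(-Y) = (-1)^2\,\text{Var}(Y) = \text{Var}(Y)$. The variances add either way.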

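More generally, for a linear combination $aX + bY$ with constants $a$ and $b$:

$$\text{Var}(aX + bY) = a^2\,\text{Var}(X) + b^2\,\text{Var}(Y)$$

And for standard deviation of a sum or difference:

$$\text{SD}(X \pm Y) = \sqrt{\text{Var}(X) + \text{Var}(Y)}$$

These extend naturally to more variables. For independent $X_1, X_2, \ldots, X_n$:

$$\text{Var}(X_1 + X_2 + \cdots + X_n) = \text{Var}(X_1) + \text{Var}(X_2) + \cdots + \text{Var}(X_n)$$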
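The sum, difference, and linear-combination rules can be verified exactly for two independent fair dice by building the distribution of $aX + bY$ directly. This is a sketch under the assumption that independence lets us multiply the marginal probabilities; the helper names `variance` and `combine` are hypothetical.

```python
from fractions import Fraction
from itertools import product

def variance(pmf):
    """Var(X) = E[X^2] - (E[X])^2 for a PMF given as {value: probability}."""
    mean = sum(x * p for x, p in pmf.items())
    return sum(x * x * p for x, p in pmf.items()) - mean ** 2

def combine(pmf_x, pmf_y, a, b):
    """PMF of a*X + b*Y, assuming X and Y are independent."""
    out = {}
    for (x, px), (y, py) in product(pmf_x.items(), pmf_y.items()):
        value = a * x + b * y
        # Independence: P(X = x, Y = y) = P(X = x) * P(Y = y).
        out[value] = out.get(value, 0) + px * py
    return out

die = {face: Fraction(1, 6) for face in range(1, 7)}
var_die = variance(die)  # 35/12

var_sum = variance(combine(die, die, 1, 1))    # Var(X + Y)
var_diff = variance(combine(die, die, 1, -1))  # Var(X - Y)
var_lin = variance(combine(die, die, 2, -3))   # Var(2X - 3Y)

print(var_sum, var_diff, 2 * var_die)   # all three are 35/6
print(var_lin, (2**2 + 3**2) * var_die) # both are 455/12
```

The sum and the difference have different distributions (one is centered at 7, the other at 0), yet their variances come out identical.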

Applications and Limitations

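- Portfolio analysis: If two investments have independent returns $X$ and $Y$, the variance of a portfolio $aX + bY$ is $a^2\,\text{Var}(X) + b^2\,\text{Var}(Y)$. With an even split ($a = b = \frac{1}{2}$) and equal variances, the portfolio variance is half that of either investment alone. This is the math behind diversification.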

- Error propagation: When combining multiple independent measurement sources, total error variance is the sum of individual error variances.

- Experimental design: In multi-factor experiments, overall variability comes from adding the variance contributions of each independent factor.

The big limitation: if $X$ and $Y$ are not independent, you need a covariance term:

$$\text{Var}(X + Y) = \text{Var}(X) + \text{Var}(Y) + 2\,\text{Cov}(X, Y)$$

Forgetting this when variables are dependent is a common mistake. Always check whether independence is stated in the problem before using the simpler additive rule. Also note that these linear combination rules don't generalize to non-linear functions of random variables; for something like $\text{Var}(XY)$, you'd need a different approach entirely.
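To see how much the covariance term can matter, here is a small exact calculation with two dependent 0/1 variables. The joint PMF is invented for illustration (the two variables tend to agree), and the helpers `expect`, `var_of`, and `cov` are hypothetical names for this sketch.

```python
from fractions import Fraction

def expect(joint, g):
    """E[g(X, Y)] for a joint PMF given as {(x, y): probability}."""
    return sum(g(x, y) * p for (x, y), p in joint.items())

def var_of(joint, g):
    """Var(g(X, Y)) via E[g^2] - (E[g])^2."""
    return expect(joint, lambda x, y: g(x, y) ** 2) - expect(joint, g) ** 2

def cov(joint):
    """Cov(X, Y) = E[XY] - E[X]E[Y]."""
    e_xy = expect(joint, lambda x, y: x * y)
    return e_xy - expect(joint, lambda x, y: x) * expect(joint, lambda x, y: y)

# Hypothetical joint PMF: X and Y agree 80% of the time, so they are dependent.
joint = {
    (0, 0): Fraction(2, 5),
    (0, 1): Fraction(1, 10),
    (1, 0): Fraction(1, 10),
    (1, 1): Fraction(2, 5),
}

var_x = var_of(joint, lambda x, y: x)        # 1/4
var_y = var_of(joint, lambda x, y: y)        # 1/4
var_sum = var_of(joint, lambda x, y: x + y)  # 4/5, computed directly
cov_xy = cov(joint)                          # 3/20

print(var_sum)                       # true variance of X + Y
print(var_x + var_y)                 # naive additive rule: too small here
print(var_x + var_y + 2 * cov_xy)    # with the covariance term: matches
```

Here the naive rule gives $\frac{1}{2}$ while the true variance of the sum is $\frac{4}{5}$; the gap is exactly $2\,\text{Cov}(X, Y) = \frac{3}{10}$.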